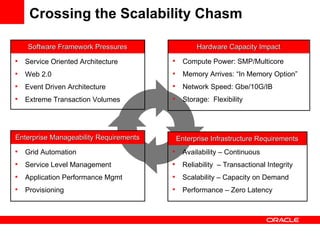

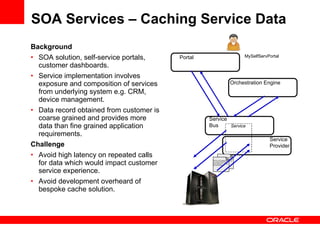

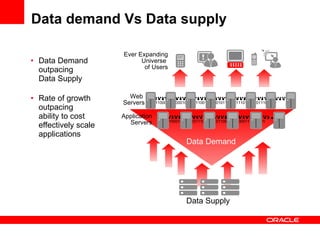

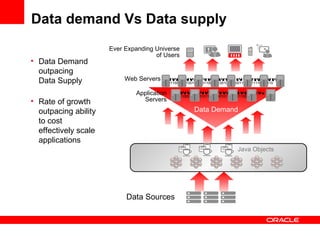

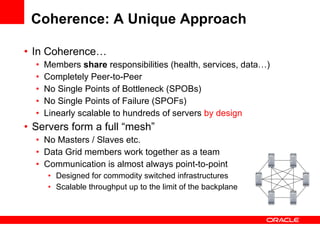

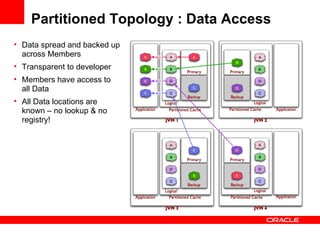

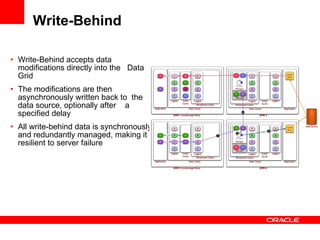

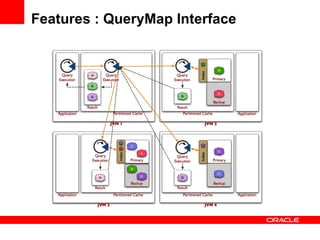

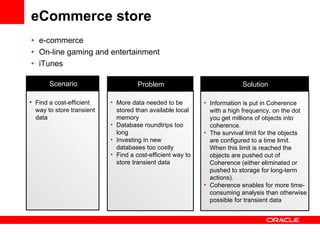

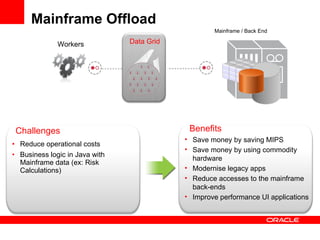

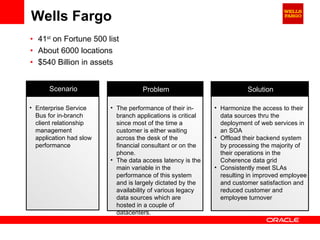

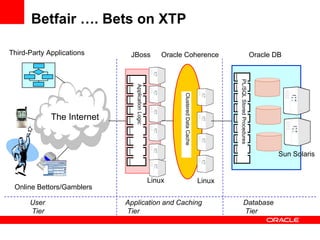

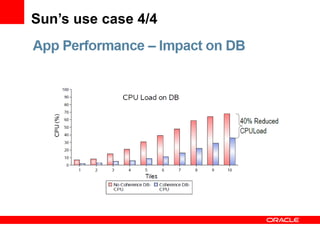

This document introduces Oracle Coherence, an in-memory data grid that improves application scalability, reliability, and performance by caching data and managing data access efficiently. It explains why e-commerce and enterprise applications need such a solution, and presents use cases and benefits such as improved customer experience and reduced operational costs. The overview covers the features, benefits, and architecture of Oracle Coherence, emphasizing continuous data availability and support for large-scale data processing.