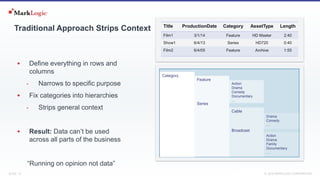

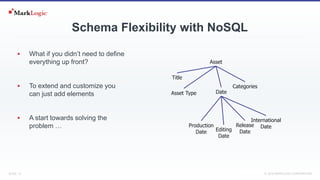

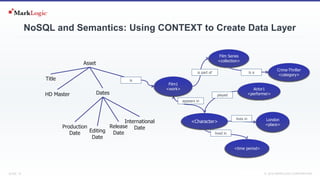

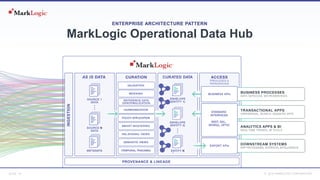

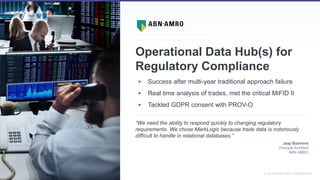

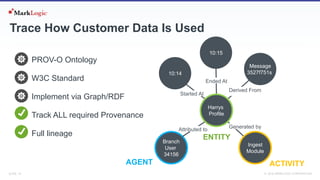

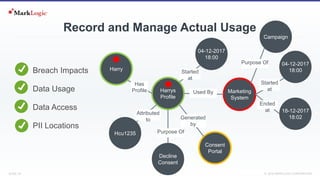

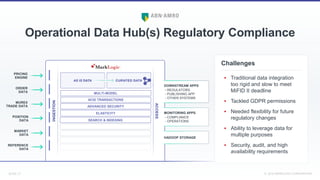

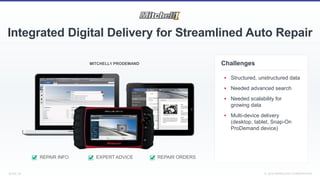

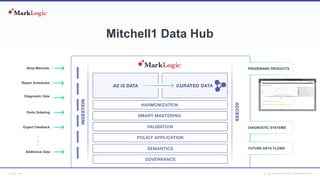

This document summarizes a presentation on operationalizing linked data to transform industries through a multi-model approach. It discusses why data matters and the challenges of traditional data approaches. It argues for using linked data and semantic techniques to build flexible, contextual data layers that can be shared across business units. It also gives examples of companies applying these approaches to regulatory compliance, integrated digital delivery for auto repair, and open data sharing without data silos.