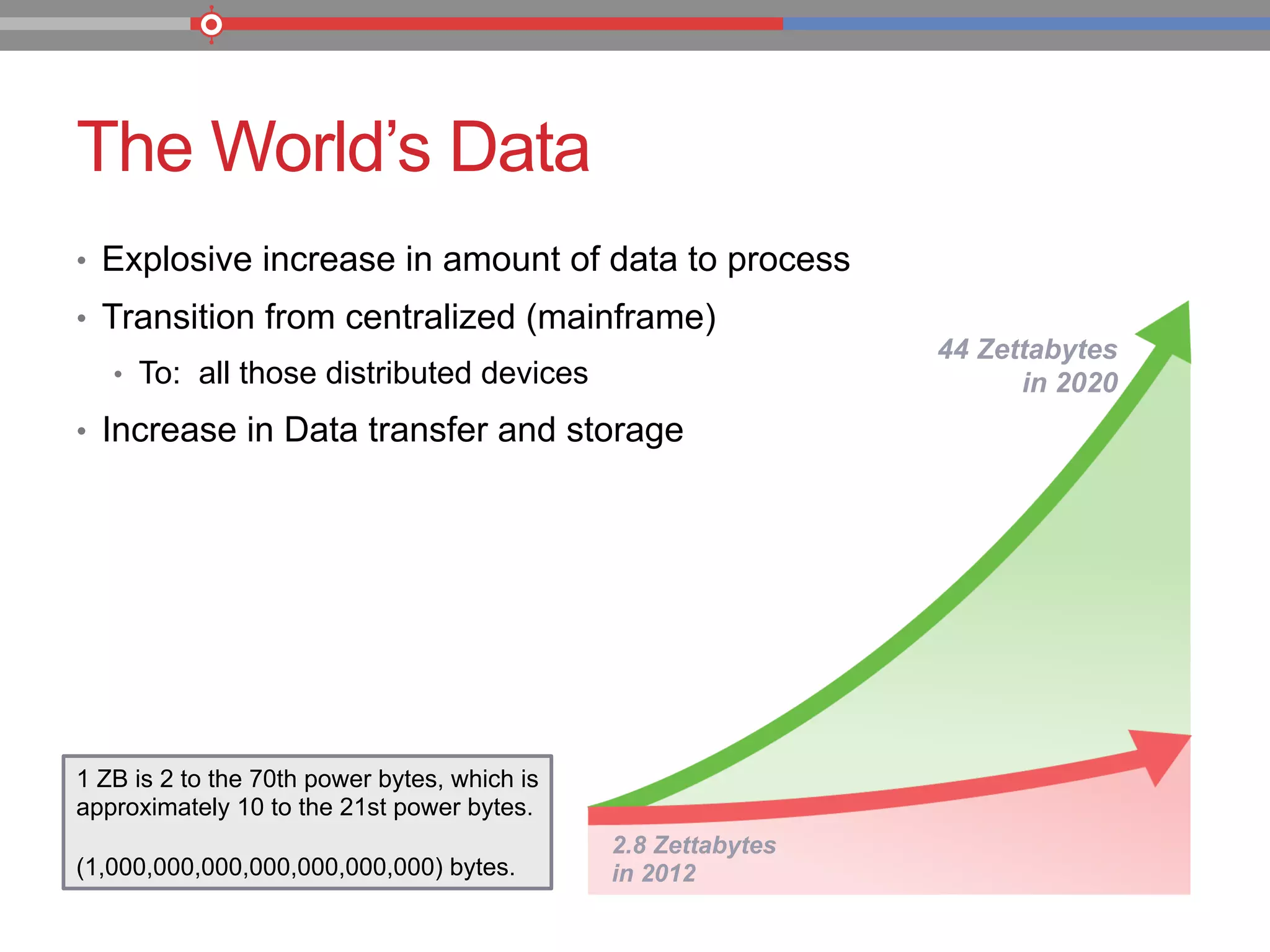

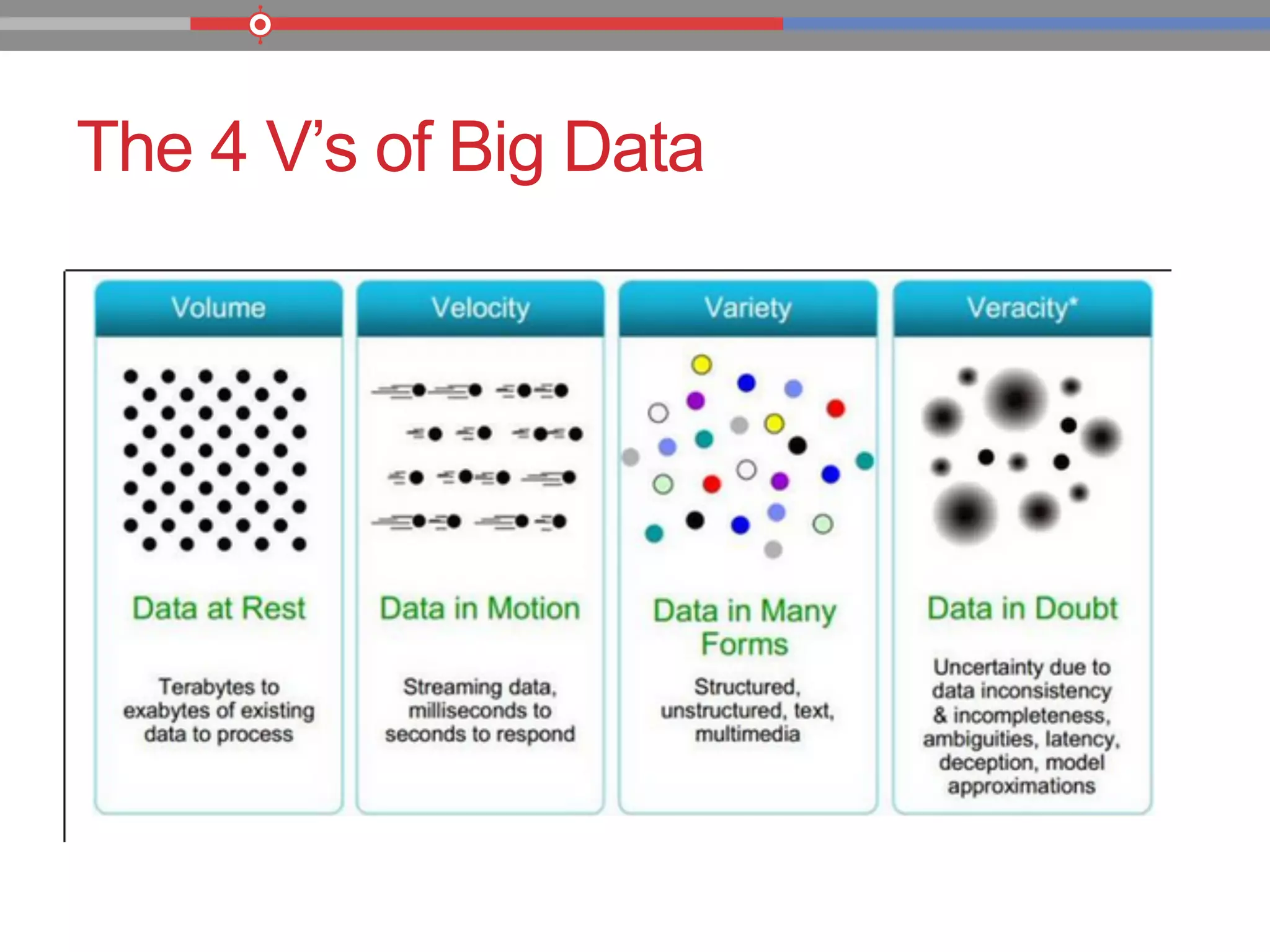

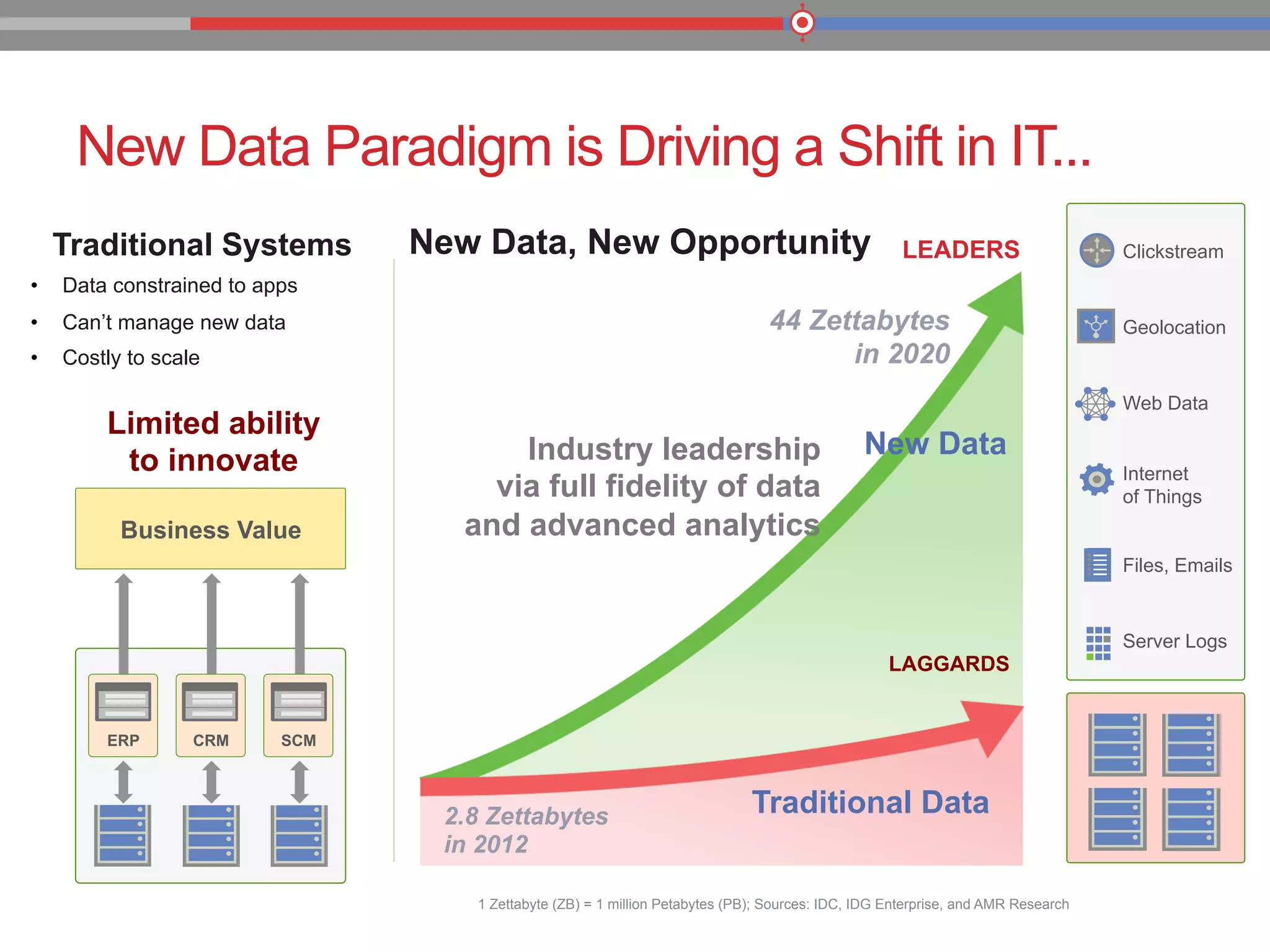

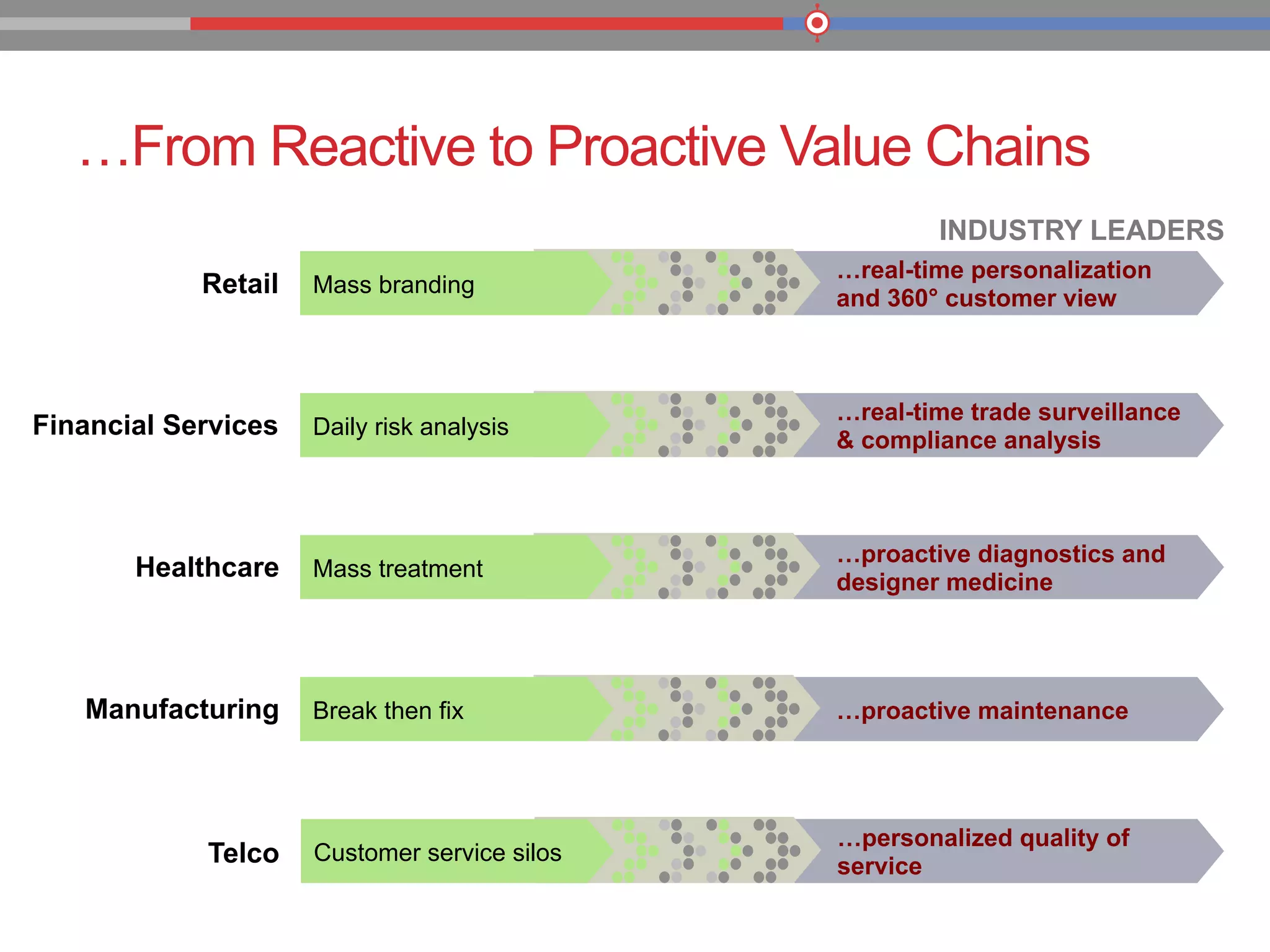

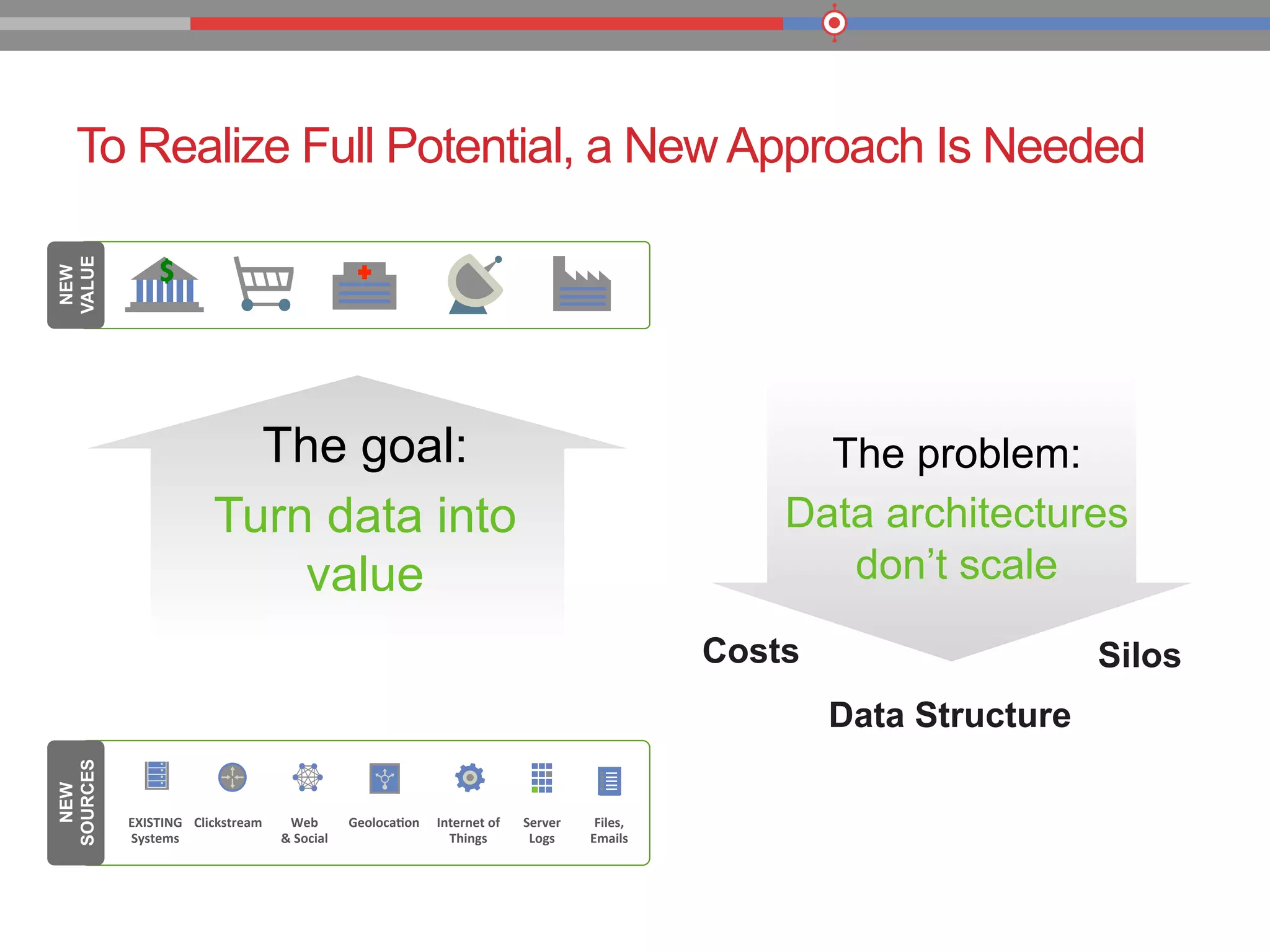

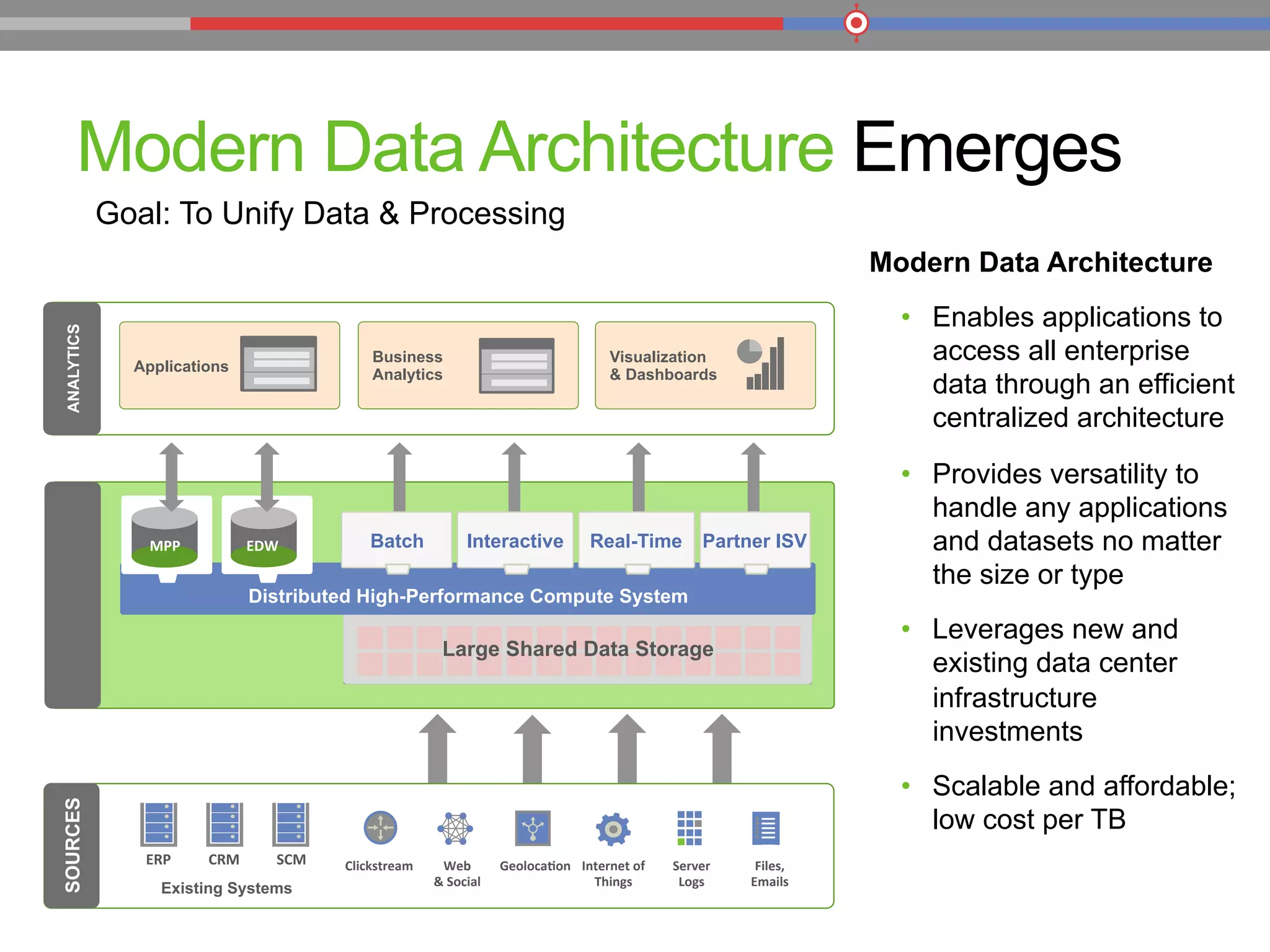

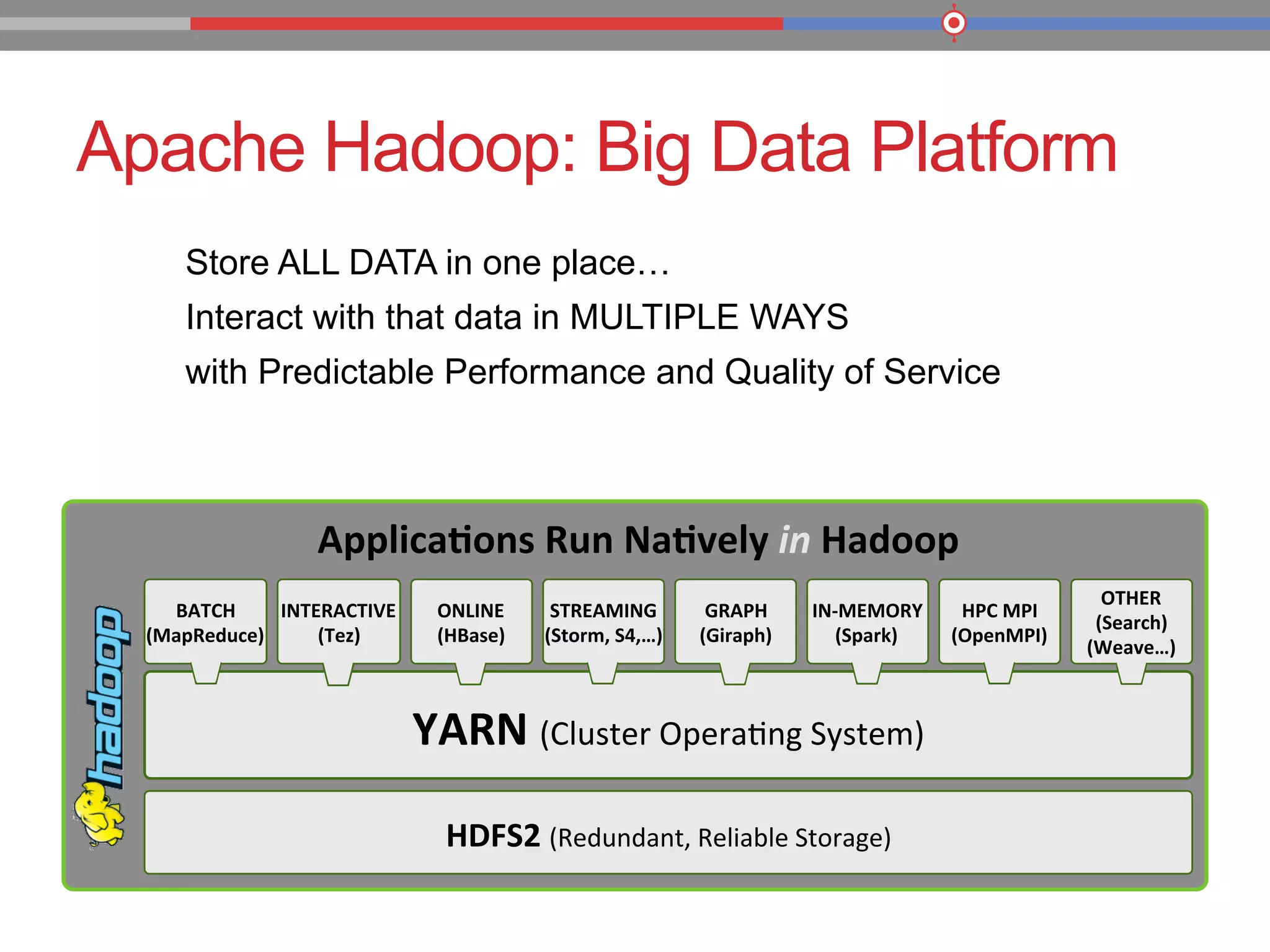

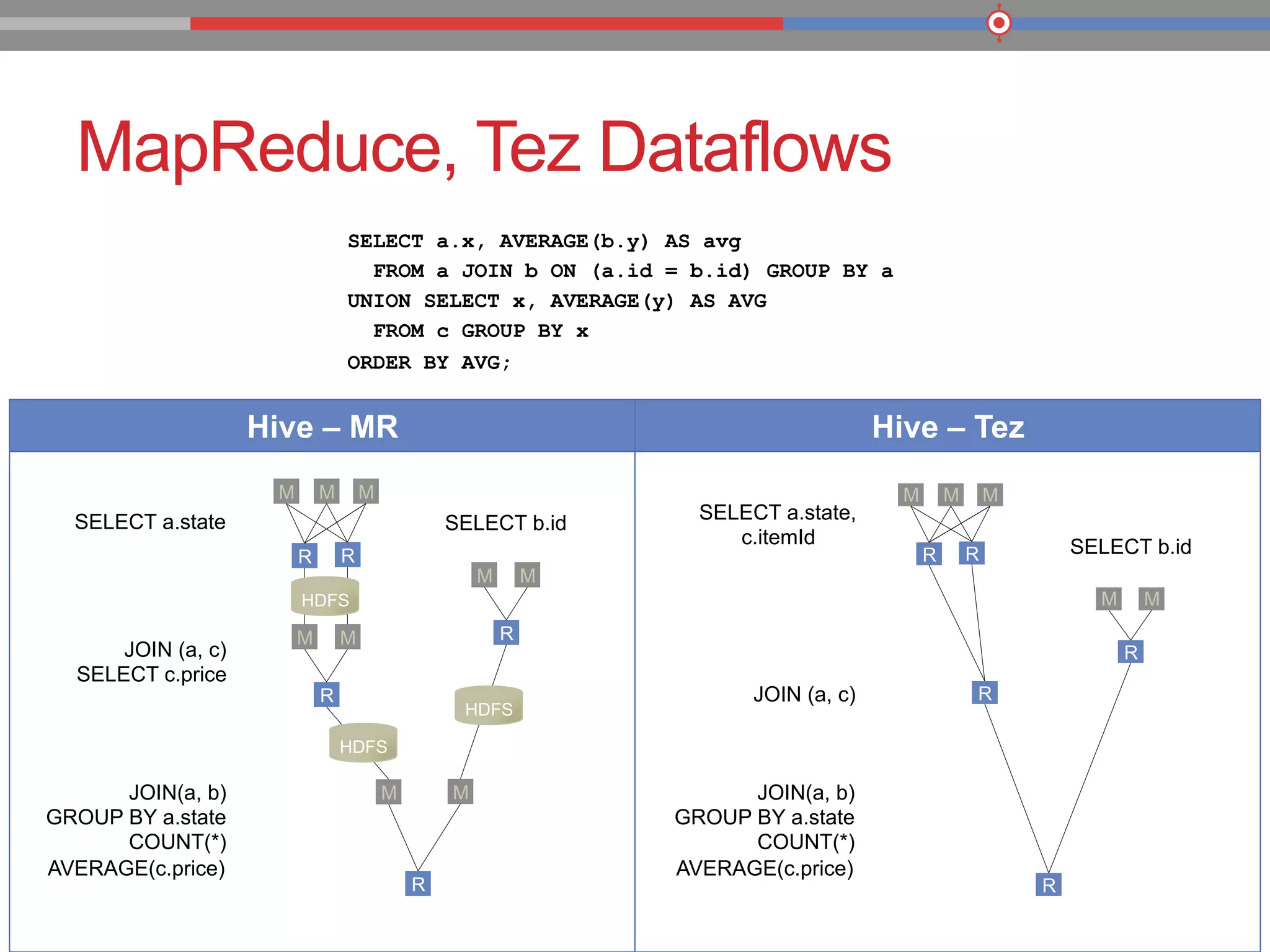

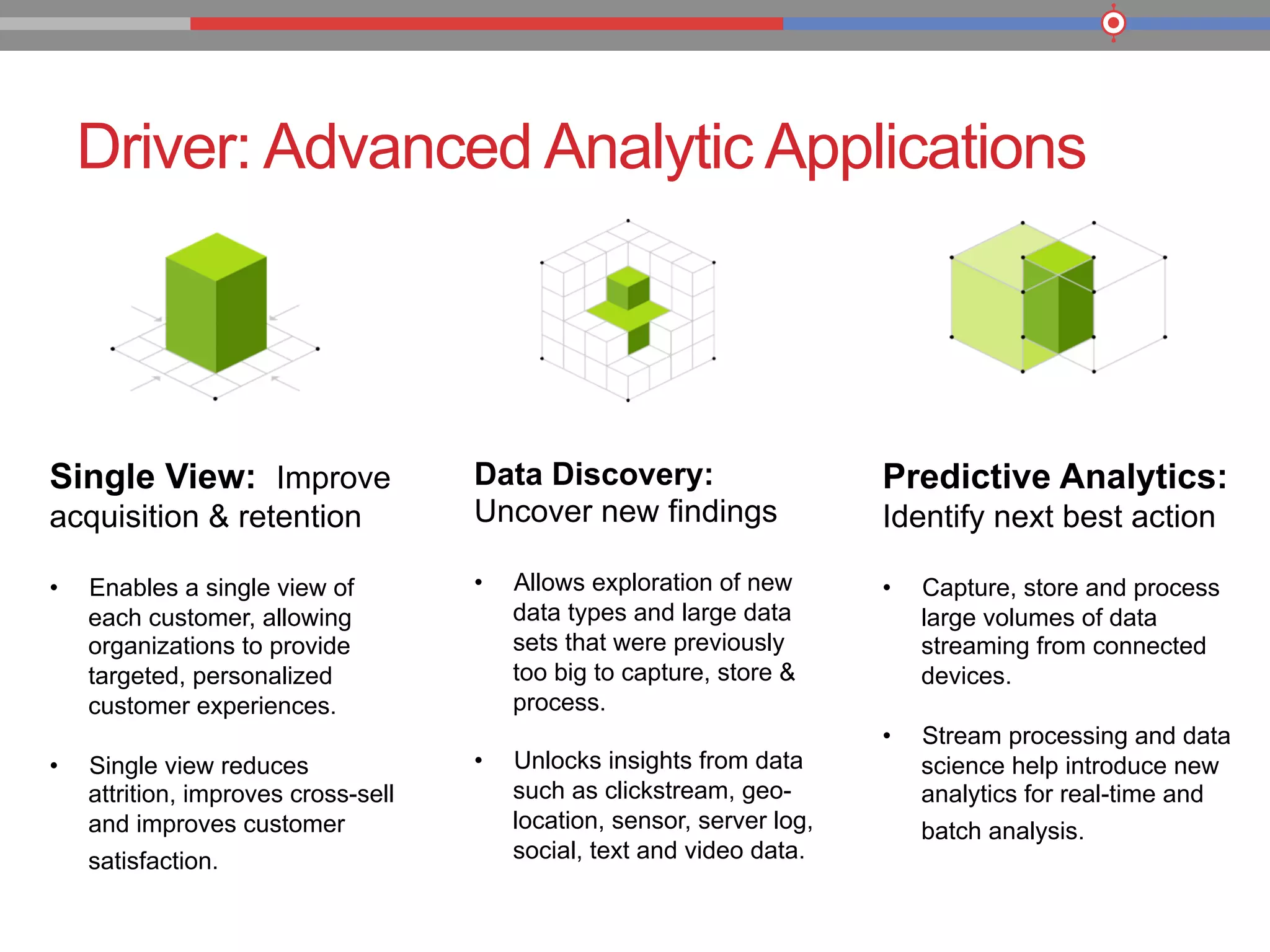

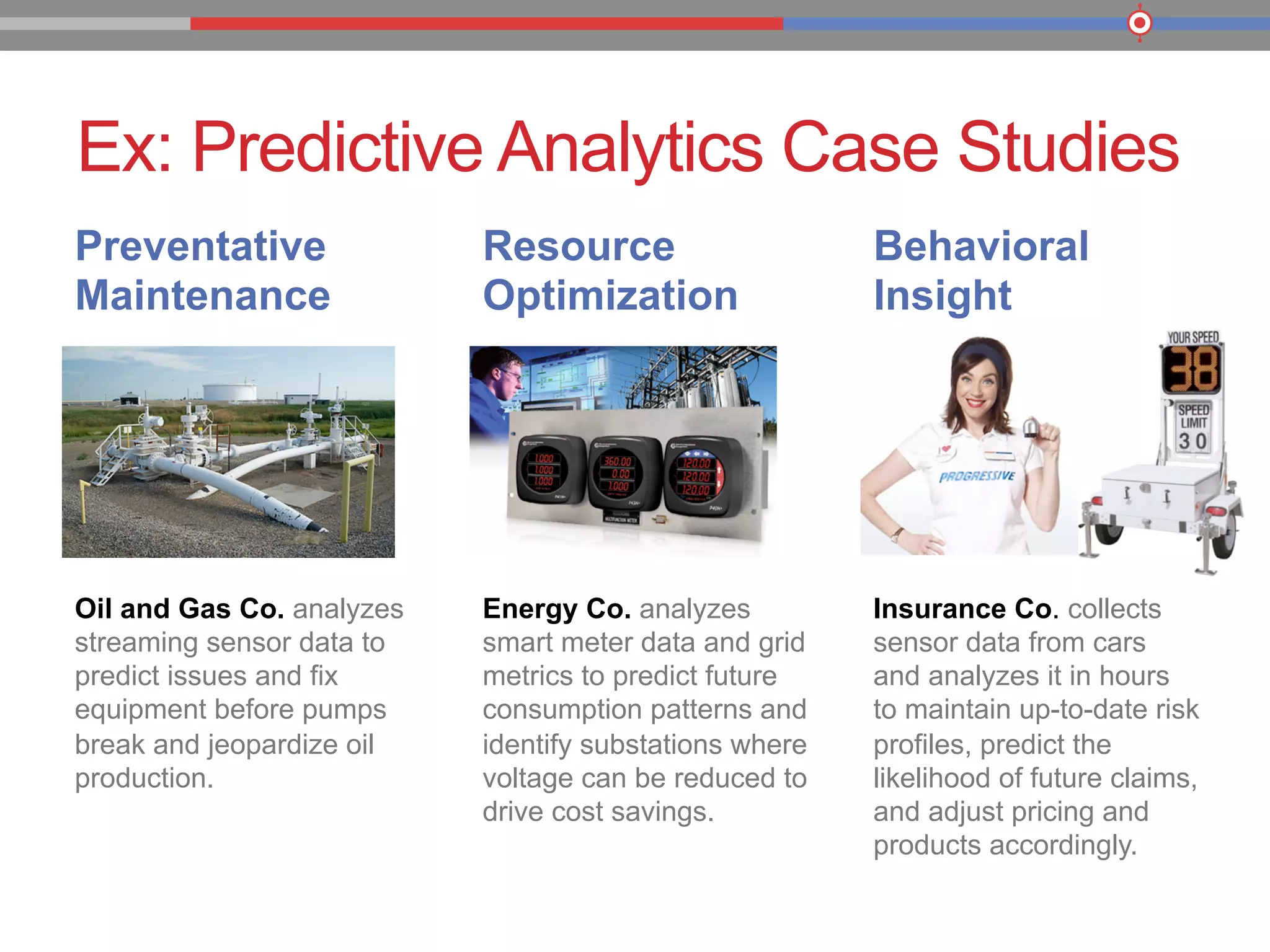

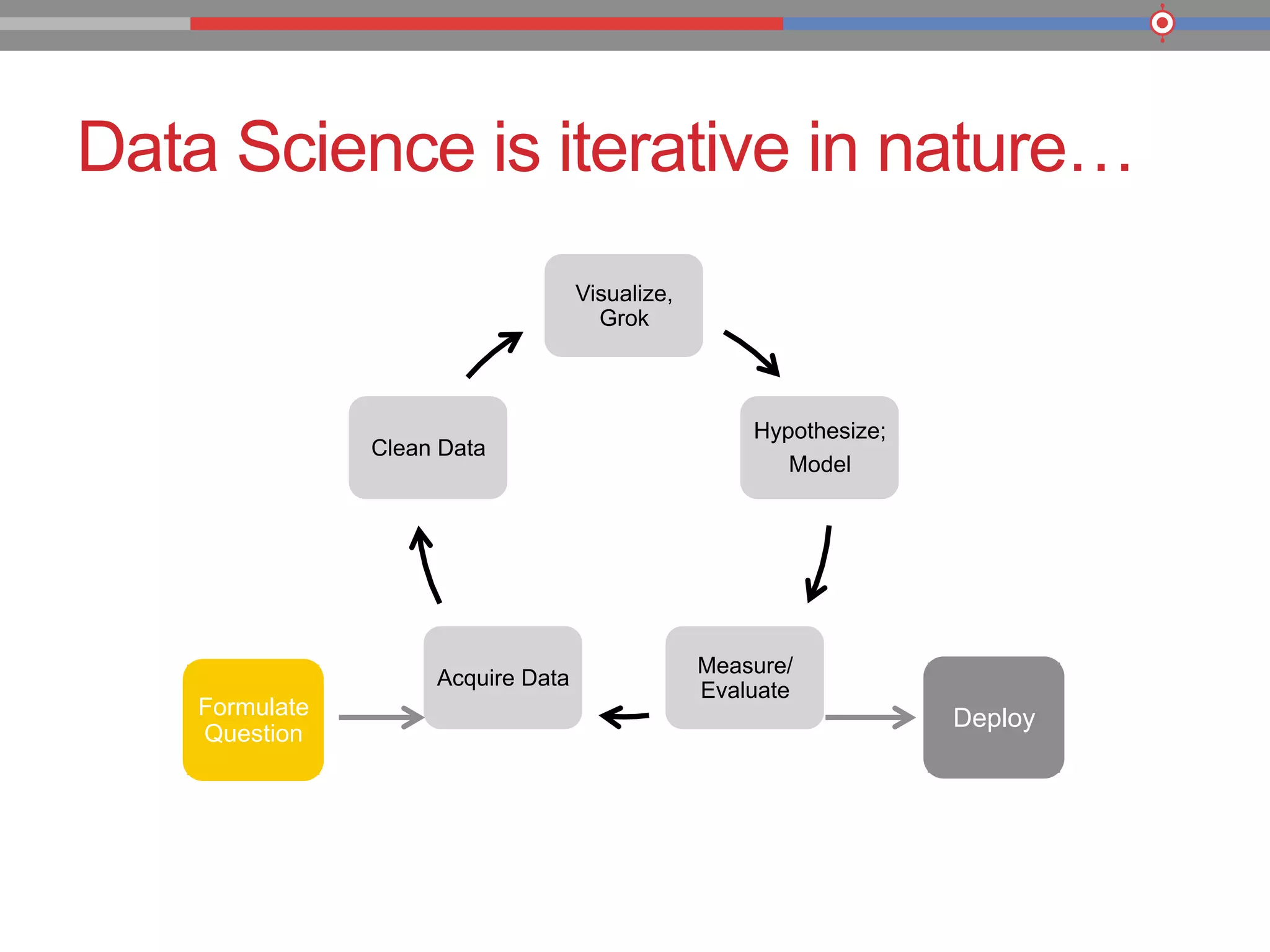

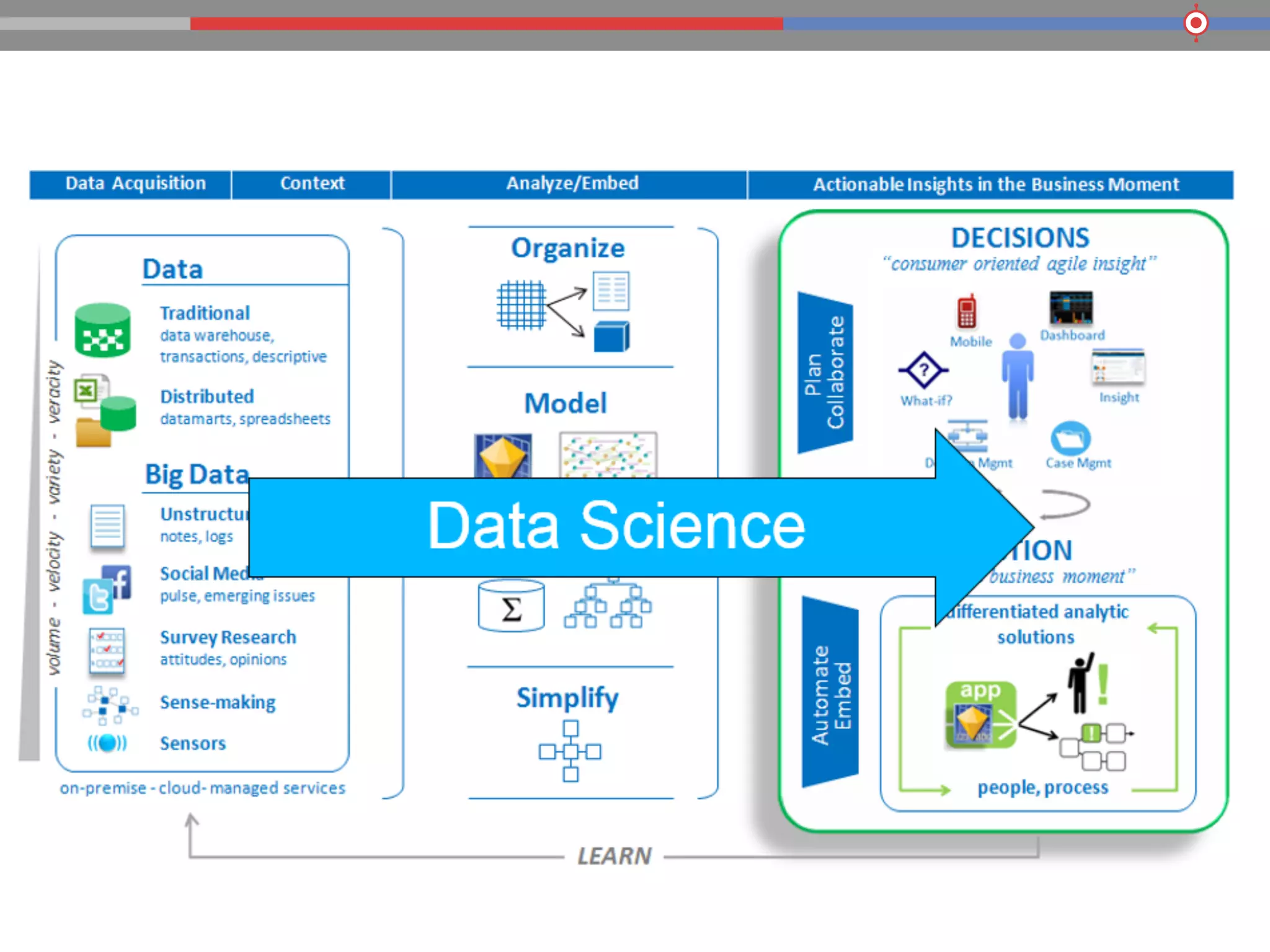

The document traces the evolution of data processing technologies, emphasizing what Apache Hadoop and a modern data architecture offer enterprises. It outlines the transformative power of big data, highlighting its impact across industries and the need for scalable, cost-effective solutions. Use cases from sectors such as utilities, insurance, and retail show how data science can improve decision-making, customer insight, and operational efficiency.