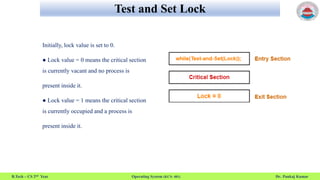

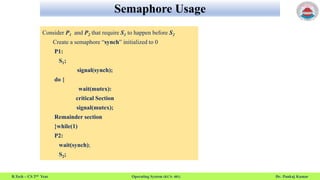

This document discusses operating system concepts related to process synchronization including semaphores, monitors, and classic synchronization problems. It provides details on semaphore usage including wait() and signal() functions, types of semaphores, and problems that can occur. Example problems discussed include the dining philosophers problem, bounded buffer problem, and sleeping barber problem. Solutions using semaphores and monitors are presented.

![Dining-Philosophers Problem

B.Tech – CS 2nd Year Operating System (KCS- 401) Dr. Pankaj Kumar

Philosophers spend their lives alternating thinking and eating

Don’t interact with their neighbors, occasionally try to pick up 2 chopsticks (one at a time) to eat from bowl

Need both to eat, then release both when done

In the case of 5 philosophers

Shared data

Bowl of rice (data set)

Semaphore chopstick [5] initialized to 1](https://image.slidesharecdn.com/semaphor-220910042644-5bf4b345/85/Operating-System-Semaphor-11-320.jpg)
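
The shared data on this slide can be sketched in Python as a list of five semaphores, each starting at 1 (chopstick free). This is only an illustrative sketch: the helper names `try_pick_up` and `put_down` are hypothetical and not from the slides, and `try_pick_up` uses a non-blocking acquire so that the effect of the initial value 1 is easy to observe.

```python
import threading

N = 5  # number of philosophers and chopsticks, as on the slide

# One semaphore per chopstick, each initialized to 1 (the chopstick is free).
chopstick = [threading.Semaphore(1) for _ in range(N)]


def try_pick_up(i):
    """Non-blocking variant of wait(): True if chopstick i was free and is now held."""
    return chopstick[i].acquire(blocking=False)


def put_down(i):
    """signal(): release chopstick i so a neighbor can take it."""
    chopstick[i].release()
```

Because each semaphore starts at 1, a second `try_pick_up(0)` fails until `put_down(0)` is called, which is what lets only one philosopher hold a given chopstick at a time.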

![Dining-Philosophers Problem

B.Tech – CS 2nd Year Operating System (KCS- 401) Dr. Pankaj Kumar

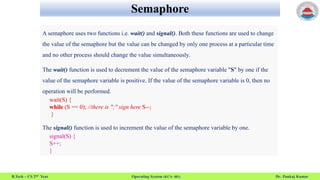

The structure of Philosopher i:

do {

wait (chopstick[i] );

wait (chopstick[ (i + 1) % 5] );

// eat

signal (chopstick[i] );

signal (chopstick[ (i + 1) % 5] );

// think

} while (TRUE);](https://image.slidesharecdn.com/semaphor-220910042644-5bf4b345/85/Operating-System-Semaphor-12-320.jpg)
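
The structure above can deadlock: if all five philosophers pick up chopstick[i] at the same moment, each waits forever for chopstick[(i + 1) % 5]. The following is a minimal runnable sketch in Python, not the slide's exact algorithm. It assumes one common fix, which is to always take the lower-numbered chopstick first so that no circular wait can form. The names `philosopher`, `run`, and `rounds` are hypothetical, and the infinite loop is replaced by a fixed number of rounds so the program terminates.

```python
import threading

N = 5  # number of philosophers and chopsticks


def philosopher(i, rounds, chopstick, meals):
    left, right = i, (i + 1) % N
    # Assumption (not on the slide): acquire the lower-numbered chopstick
    # first. This ordering breaks the circular wait that causes deadlock.
    first, second = min(left, right), max(left, right)
    for _ in range(rounds):
        chopstick[first].acquire()    # wait(...)
        chopstick[second].acquire()   # wait(...)
        meals[i] += 1                 # eat (only thread i writes meals[i])
        chopstick[second].release()   # signal(...)
        chopstick[first].release()    # signal(...)
        # think


def run(rounds=100):
    """Run all philosophers for a fixed number of rounds; return meals eaten."""
    chopstick = [threading.Semaphore(1) for _ in range(N)]
    meals = [0] * N
    threads = [
        threading.Thread(target=philosopher, args=(i, rounds, chopstick, meals))
        for i in range(N)
    ]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return meals
```

Calling `run(rounds=50)` should return a list in which every philosopher has eaten 50 times, since the ordered acquisition lets every thread finish.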