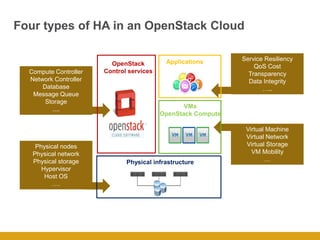

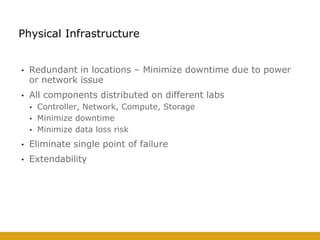

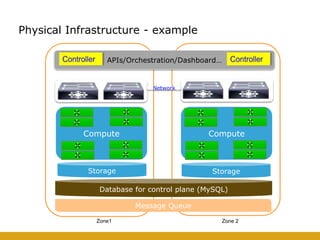

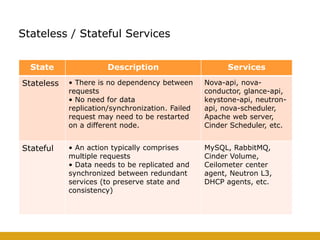

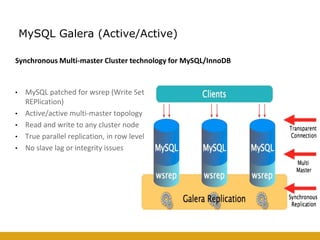

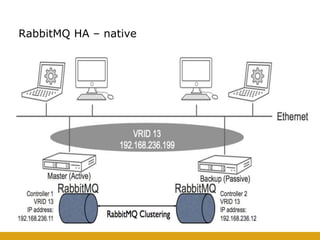

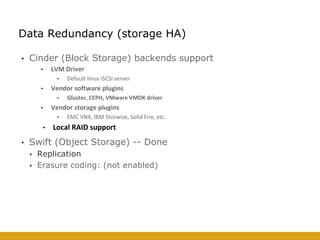

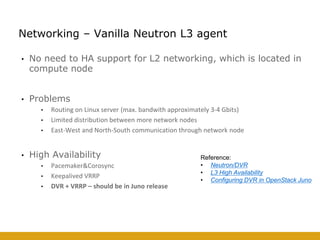

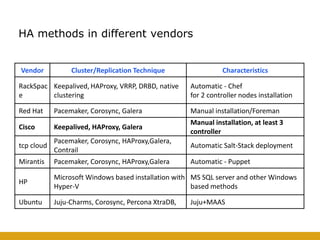

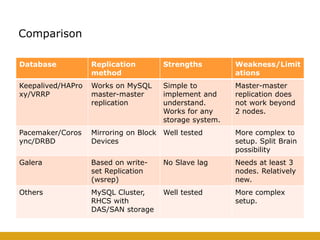

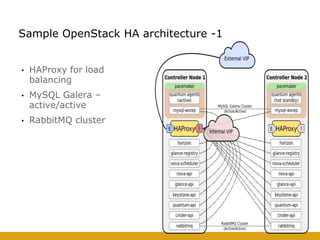

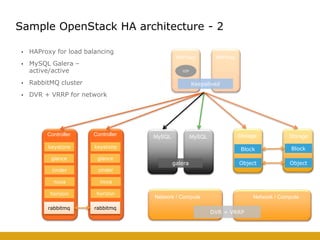

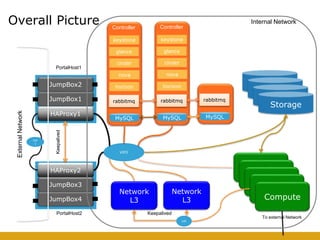

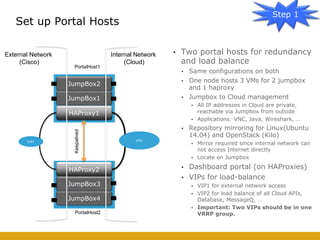

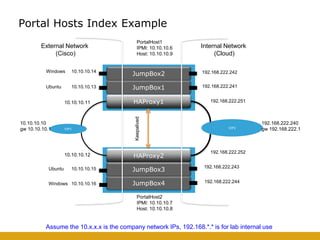

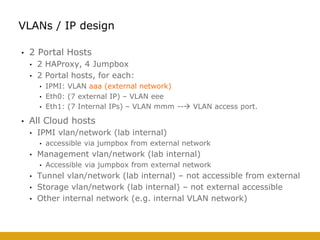

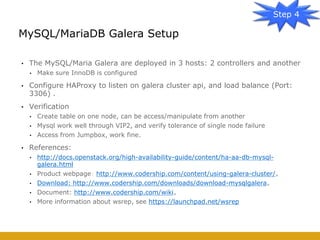

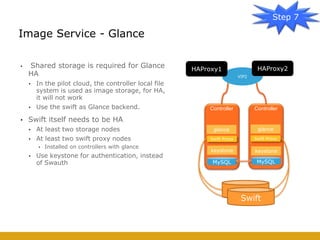

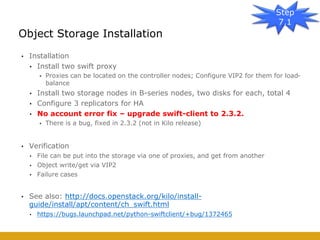

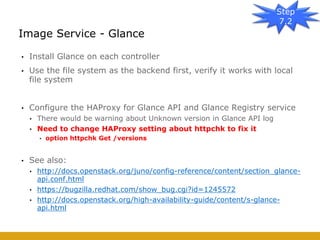

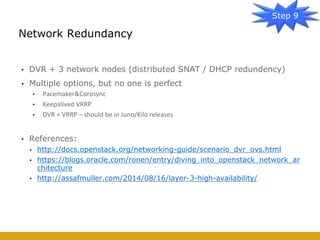

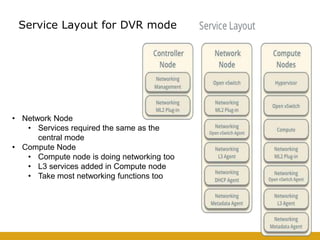

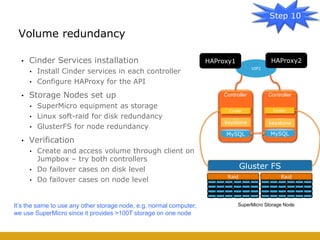

This document details the design and deployment of high availability (HA) for OpenStack cloud control services. It distinguishes HA approaches for stateless and stateful services and covers the use of keepalived, HAProxy, and MySQL Galera for load balancing and redundancy. It presents an architecture built on multiple physical nodes and gives detailed deployment steps for a resilient OpenStack environment, including controllers, databases, and storage systems. It also addresses networking challenges and configurations, including the use of VRRP to manage virtual IPs and several vendor-specific implementation techniques.