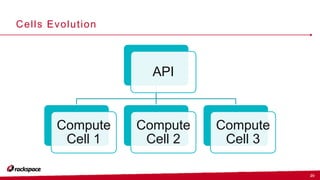

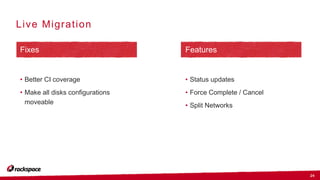

This document outlines updates and priorities for OpenStack Nova, as presented by John Garbutt at the OpenStack Ops Midcycle event in February 2016. Key focuses include a robust API, improvements to the upgrade process, and the evolution of the API through microversions. It also addresses live migration issues and architectural improvements aimed at maintaining system reliability and scalability.