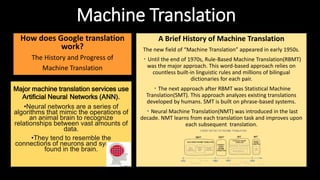

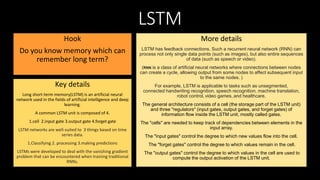

Artificial neural networks (ANNs), including long short-term memory (LSTM) networks, are commonly used in machine translation systems. ANNs are loosely modeled on the human brain: they learn relationships from large amounts of data through connected units, analogous to neural connections in the brain. The field of machine translation began in the 1950s with rule-based machine translation (RBMT), which relies on linguistic rules and dictionaries. Statistical machine translation (SMT), developed in the late 1980s, builds statistical models from existing human translations. More recently, neural machine translation (NMT), introduced in the last decade, learns from example translations and improves as it is trained on more data. LSTM is a type of recurrent neural network (RNN) with feedback connections that can process entire sequences of data over time and is well-suited for classifying