The paper presents a novel framework called AutoRE that can automatically generate regular expression signatures to detect spam emails. AutoRE analyzes URLs contained in emails to group similar domains and merge signatures, allowing it to detect future botnets not seen in training data. While AutoRE showed promising results on a Hotmail dataset, it has weaknesses such as not handling proxy URLs and being unable to detect image spam. The paper is technically sound but could improve its organization by separating the discussion of AutoRE and of botnet characteristics more clearly.

Group Details:

Dhara Shah z3299353

Imad Hashmi z3193866

Zuo Cui z3261136

Our paper: Y. Xie, F. Yu, K. Achan, R. Panigrahy, G. Hulten and I. Osipkov, "Spamming Botnets: Signatures and Characteristics", in Proceedings of ACM SIGCOMM 2008, pp. 171-182, Seattle, USA, August 2008.

Is this paper technically sound?

The paper is based on experiments conducted on three months of data collected from Hotmail's servers. To reproduce its results, we needed both the algorithm or rules AutoRE uses to generate regular expressions and a dataset on which to run experiments.

To obtain the details of the software we contacted the authors, but unfortunately received no reply (proof attached in the appendix). We suspect this is because it is Microsoft research behind a commercial product, so the details are confidential. We therefore studied open-source spam detection software to understand how AutoRE might work. However, we could not compare the techniques used by open-source spam detection software with those of AutoRE, because we did not have all the details of AutoRE.

Many spam detection tools are available, both commercial and open source, but none of those we examined generates signatures automatically. The idea in this paper is genuine and novel, because other content-based filters do not generate signatures and instead rely on a complete scan of the email. The following are some of the rules Apache SpamAssassin [3] uses to identify a spam URL. We discuss URLs only, because AutoRE works only with URLs:

Uses a numeric IP address in URL

Uses %-escapes inside a URL's hostname

Completely unnecessary %-escapes inside a URL

Dotted-decimal IP address followed by CGI

Uses non-standard port number for HTTP

Has Yahoo Redirect URI

Contains an URL-encoded hostname (HTTP77)

URI contains ".com" in middle

URI contains ".com" in middle and end

URI contains ".net" or ".org", then ".com"

URI hostname has long hexadecimal sequence

URI hostname has long non-vowel sequence

CGI in .info TLD other than third-level "www"

CGI in .biz TLD other than third-level "www"
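
To make a few of these rule descriptions concrete, the Python sketch below checks a URL against simplified versions of them. The function `url_heuristics`, the rule names it returns and the thresholds (8 hexadecimal characters, 7 consonants) are our own illustrative assumptions, not SpamAssassin's actual rule definitions.

```python
import re
from urllib.parse import urlsplit

# Illustrative patterns and thresholds chosen for this sketch;
# they are NOT SpamAssassin's actual rule definitions.
DOTTED_IP = re.compile(r"^\d{1,3}(?:\.\d{1,3}){3}$")
LONG_HEX = re.compile(r"[0-9a-f]{8,}", re.IGNORECASE)                 # assumed: 8+ hex chars
LONG_NON_VOWEL = re.compile(r"[b-df-hj-np-tv-z]{7,}", re.IGNORECASE)  # assumed: 7+ consonants


def url_heuristics(url: str) -> set[str]:
    """Return the names of the simplified URL rules that `url` triggers."""
    hits: set[str] = set()
    parts = urlsplit(url)
    host = parts.hostname or ""  # lowercased, without port or user info

    if DOTTED_IP.match(host):
        hits.add("numeric_ip")
        # Rough stand-in for "dotted-decimal IP address followed by CGI".
        if "/cgi-bin/" in parts.path or parts.path.endswith(".cgi"):
            hits.add("ip_followed_by_cgi")

    if "%" in parts.netloc:  # rough check: any %-escape in the network location
        hits.add("escaped_hostname")

    try:
        port = parts.port
    except ValueError:  # malformed port; treat it as unknown
        port = None
    if parts.scheme == "http" and port not in (None, 80):
        hits.add("nonstandard_port")

    if ".com." in host:  # ".com" in the middle of the hostname
        hits.add("com_in_middle")
    if LONG_HEX.search(host):
        hits.add("long_hex_sequence")
    if LONG_NON_VOWEL.search(host):
        hits.add("long_non_vowel_sequence")
    return hits
```

Each check is a cheap string or regex test on a single URL, which is the kind of hand-written rule that AutoRE aims to replace with automatically generated signatures.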

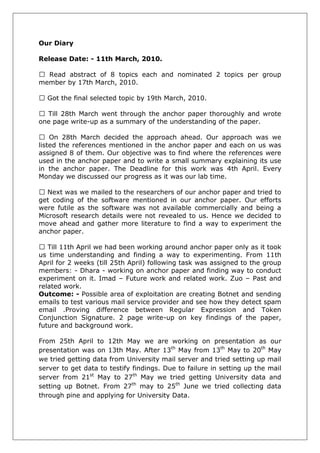

There is also a long list of email-header criteria that can be applied to identify spam, but that is beyond our scope here.

Next, we tried to collect data from the University's mail server to verify the characteristics of spam emails described in the paper (proof attached in the appendix), but because of the University's security concerns we could not obtain the data. Instead, we redirected our Yahoo, Gmail and Hotmail accounts to our CSE account and accessed that account with the "pine" utility, a text-based email reader that shows detailed email headers. We tried to distinguish the headers of spam emails from those of legitimate emails. However, CSE does not apply any anti-spam technology of its own; it relies on the University's server. We verified this by observing that every email arriving at CSE is forwarded by the University's server. We also inferred that even when a user marks an email as spam, the system does not classify it as spam unless it satisfies the basic property of burstiness: we marked a few legitimate email addresses as spam, but the server never classified their messages as spam, because those senders never sent in bulk.

The result from Pine is as follows:

INFPACM003.services.comms.unsw.edu.au ([149.171.193.26]) (IP doesn't match sender domain) (for <dsha472@cse.unsw.edu.au>) By note With Smtp ; Fri, 18 Jun 2010 20:23:12 +1000

Received: from mta156.mail.in.yahoo.com ([203.84.221.168]) by INFPACM003.services.comms.unsw.edu.au with SMTP; 18 Jun 2010 20:02:46 +1000

Received: from 68.142.207.198 (HELO web32405.mail.mud.yahoo.com) (68.142.207.198) by mta156.mail.in.yahoo.com with SMTP; Fri, 18 Jun 2010 15:53:07 +0530

Received: (qmail 20395 invoked by uid 60001); 18 Jun 2010 10:23:04 -0000

Received: from [117.193.43.248] by web32405.mail.mud.yahoo.com via HTTP; Fri, 18 Jun 2010 03:23:03 PDT

Received: From INFPACM001.services.comms.unsw.edu.au ([149.171.193.18]) (for <dsha472@cse.unsw.edu.au>) By note With Smtp ; Fri, 18 Jun 2010 20:04:32 +1000

Received: from mta177.mail.in.yahoo.com ([202.86.5.206]) by INFPACM001.services.comms.unsw.edu.au with SMTP; 18 Jun 2010 19:52:33 +1000

Received: from 65.54.190.16 (EHLO bay0-omc1-s5.bay0.hotmail.com) (65.54.190.16) by mta177.mail.in.yahoo.com with SMTP; Fri, 18 Jun 2010 15:34:22 +0530

Received: from BL2PRD0102HT003.prod.exchangelabs.com ([65.54.190.61]) by bay0-omc1-s5.bay0.hotmail.com with Microsoft SMTPSVC(6.0.3790.4675); Fri, 18 Jun 2010 03:04:00 -0700

Received: from BL2PRD0102MB009.prod.exchangelabs.com ([169.254.34.168]) by BL2PRD0102HT003.prod.exchangelabs.com ([169.254.220.82]) with mapi; Fri, 18 Jun 2010 10:03:59 +0000
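
Each mail server that relays a message prepends its own Received header, so within each message's headers above, the most recent hop is at the top and the origin is at the bottom. The hypothetical helper `relay_chain` below, built only on Python's standard `email` module, extracts the first bracketed IPv4 address from each Received header. It is a simplification: Received headers have no single fixed format, and headers without a bracketed address (such as the Yahoo line naming 68.142.207.198 above) are simply skipped.

```python
import re
from email import message_from_string

# First IPv4 address written inside square brackets, e.g. "([203.84.221.168])".
BRACKETED_IP = re.compile(r"\[(\d{1,3}(?:\.\d{1,3}){3})\]")


def relay_chain(raw_message: str) -> list[str]:
    """Return one IP per Received header, most recent hop first.

    Simplification: headers without a bracketed IPv4 address are skipped,
    and only the first such address in each header is kept.
    """
    msg = message_from_string(raw_message)
    chain = []
    for header in msg.get_all("Received", []):
        match = BRACKETED_IP.search(str(header))
        if match:
            chain.append(match.group(1))
    return chain
```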

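The burstiness property mentioned above, which the paper relies on to separate botnet campaigns from ordinary mail, can be expressed as a simple test over a group of emails that share a URL signature. The sketch below is our own hedged illustration: the function `is_bursty_group` and both thresholds (`min_senders=20`, a `max_span` of 24 hours) are hypothetical values, not figures taken from the paper.

```python
from datetime import datetime, timedelta


def is_bursty_group(
    events: list[tuple[str, datetime]],
    min_senders: int = 20,                     # hypothetical threshold
    max_span: timedelta = timedelta(hours=24),  # hypothetical threshold
) -> bool:
    """events: (sender_ip, sent_at) pairs for emails sharing one URL group.

    Flag the group only if it was sent from many distinct IPs AND
    all of it arrived within a short time window.
    """
    if not events:
        return False
    senders = {ip for ip, _ in events}
    times = [sent_at for _, sent_at in events]
    return len(senders) >= min_senders and max(times) - min(times) <= max_span
```

A campaign throttled to a few messages a day fails this test even if every message is spam, which is the weakness discussed later in this review.
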
Are the ideas and results presented in this paper novel?

In our opinion, the AutoRE framework is significantly novel. Although some previous work used regular expressions over the URLs in email content to detect spam, AutoRE is quite different from that work, for the reasons below.

First, AutoRE can automatically generate regular expressions from the URLs it discovers. Most current detection frameworks require hand-written regular expressions, and with the rapid growth in the volume of spam it becomes increasingly difficult, even impossible, to write them manually. By borrowing methods from worm detection systems (Singh et al. [2]), AutoRE generates spam signatures automatically. This reduces human workload and improves the accuracy of the regular expressions.

Second, AutoRE can detect future botnet activity through domain-agnostic signatures. Most previous research and current detection frameworks target individual botnets. Botnets with similar behaviour cannot be detected automatically by these approaches; they can only act against the domains of botnets that have already been captured, and they are helpless against domains that may appear in the future. AutoRE, in contrast, analyses and groups domains with similar behaviour and then merges domain-specific regular expressions into domain-agnostic ones. As a result, it can detect both current and future domains that exhibit the same behaviour.

For these reasons, AutoRE can be considered an innovative framework in the field of spam detection.

Are there any weaknesses of this paper that you have not mentioned in your answers to the above questions?

One weakness is that AutoRE does not deal with proxy URLs. Proxy URLs usually bear no relation to their redirect destinations, so AutoRE has difficulty grouping them. They could be traced to their redirect destinations, and AutoRE could then check those destination addresses for spam, but following the redirect is exactly what the spammer wants. The paper offers no remedy for this. Another weakness is that AutoRE cannot detect image spam, which is increasingly common. The authors could borrow ideas from image spam detection frameworks (such as that of Uemura and Tabata [1]) and use information about the image, such as its URL, file name or size, to improve the framework.
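
As a starting point for the image-spam suggestion above, the sketch below builds a simple signature from an attached image's raw bytes: its sniffed type (PNG or GIF only), byte size, pixel dimensions and a SHA-256 hash. This is our own illustration, not part of AutoRE or of Uemura and Tabata's Bayesian-filter method, and `image_signature` is a hypothetical name. An exact hash matches only byte-identical images, so spammers who randomise a few pixels would evade it; a perceptual hash would be more robust at a higher computational cost.

```python
import hashlib
import struct
from typing import NamedTuple, Optional


class ImageSignature(NamedTuple):
    kind: str              # sniffed file type: "png", "gif" or "unknown"
    size: int              # size in bytes
    width: Optional[int]   # pixel dimensions, when the header is recognised
    height: Optional[int]
    sha256: str            # exact-match hash of the raw bytes


def image_signature(data: bytes) -> ImageSignature:
    """Build a simple signature from an attached image's raw bytes."""
    kind, width, height = "unknown", None, None
    if data[:8] == b"\x89PNG\r\n\x1a\n" and len(data) >= 24:
        # PNG: the IHDR chunk follows the 8-byte magic; width and height are
        # big-endian 32-bit integers at offsets 16..24.
        kind = "png"
        width, height = struct.unpack(">II", data[16:24])
    elif data[:6] in (b"GIF87a", b"GIF89a") and len(data) >= 10:
        # GIF: logical screen width and height are little-endian 16-bit
        # integers at offsets 6..10.
        kind = "gif"
        width, height = struct.unpack("<HH", data[6:10])
    return ImageSignature(kind, len(data), width, height, hashlib.sha256(data).hexdigest())
```

Two emails whose attachments produce equal signatures carry the same image, so such signatures could be grouped the same way AutoRE groups URLs.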

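The two ideas credited to AutoRE in the novelty discussion, generating a regular expression from a group of similar URLs and merging domain-specific expressions into domain-agnostic ones, can be illustrated with the simplified sketch below. It is not AutoRE's actual algorithm: the functions `generalise_paths`, `domain_specific_regex` and `merge_domains`, the rule of splitting paths on "/", and the choice of character classes with length ranges are all our own assumptions.

```python
import re
import string
from collections import defaultdict
from typing import Optional
from urllib.parse import urlsplit

ALNUM = set(string.ascii_letters + string.digits)


def char_class(chars: set[str]) -> str:
    """Character class built from the categories seen (digits, lower, upper, extras)."""
    parts = []
    if chars & set(string.digits):
        parts.append("0-9")
    if chars & set(string.ascii_lowercase):
        parts.append("a-z")
    if chars & set(string.ascii_uppercase):
        parts.append("A-Z")
    # Any other character is listed literally, escaped for safety.
    parts.extend(re.escape(c) for c in sorted(chars - ALNUM))
    return "[" + "".join(parts) + "]"


def generalise_segment(values: list[str]) -> str:
    """Keep a path segment literal if it never varies; otherwise generalise it."""
    if len(set(values)) == 1:
        return re.escape(values[0])
    lengths = [len(v) for v in values]
    lo, hi = min(lengths), max(lengths)
    quantifier = f"{{{lo}}}" if lo == hi else f"{{{lo},{hi}}}"
    return char_class(set("".join(values))) + quantifier


def generalise_paths(urls: list[str]) -> Optional[str]:
    """Path regex for URLs whose paths have the same number of '/' segments."""
    split = [urlsplit(u).path.split("/") for u in urls]
    if len({len(s) for s in split}) != 1:
        return None  # this sketch does not try to align differently shaped paths
    return "/".join(generalise_segment(list(column)) for column in zip(*split))


def domain_specific_regex(urls: list[str]) -> Optional[str]:
    """Signature for one domain's URL group (all URLs assumed to share a host)."""
    host = urlsplit(urls[0]).hostname or ""
    path = generalise_paths(urls)
    return None if path is None else "^https?://" + re.escape(host) + path + "$"


def merge_domains(path_patterns: dict[str, str], min_domains: int = 2) -> list[str]:
    """Turn path patterns shared by >= min_domains domains into domain-agnostic regexes."""
    domains_by_pattern = defaultdict(set)
    for domain, pattern in path_patterns.items():
        domains_by_pattern[pattern].add(domain)
    return sorted(
        "^https?://[^/]+" + pattern + "$"
        for pattern, domains in domains_by_pattern.items()
        if len(domains) >= min_domains
    )
```

Replacing the literal domain with `[^/]+` is what lets the merged signature match a domain that was never seen, which is the property the review highlights as AutoRE's second novelty.
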
Keeping signatures up to date

AutoRE works on historical data. Because it generates spam signatures and identifies spam emails from historical data, keeping those signatures correct and up to date is a major challenge in itself. If a signature becomes stale, the low false positive rate may change significantly and the system may lose its strength. The paper does not discuss this. A mechanism for updating signatures would greatly improve the system's performance.

Detecting image spam

Many spam emails today are sent as images, whose purpose is to hide the email content from content-based filters. Content-based filtering systems like AutoRE should address this. One way to do so is to generate signatures for images as well: basic characteristics such as image size, type and dimensions could be recorded in the signature to identify similar images in other emails. Advanced image signature algorithms such as colour histograms may be impractical at such a massive scale, but computing an image hash could prove useful.

Dependence on botnet burstiness

AutoRE relies heavily on the burstiness of spamming botnets, assuming that botnets are rented only for short periods. If botnets start throttling their sending rate, this could result in completely incorrect spam signatures. The problem remains open, because waiting longer for the right spam emails to use as signature data is not an option.

References:

[1] M. Uemura and T. Tabata. Design and evaluation of a Bayesian-filter-based image spam filtering method. In 2008 International Conference on Information Security and Assurance, IEEE, 2008.

[2] S. Singh, C. Estan, G. Varghese, and S. Savage. Automated worm fingerprinting. In OSDI, 2004.

[3] Apache SpamAssassin.