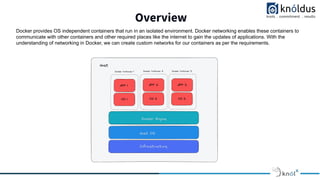

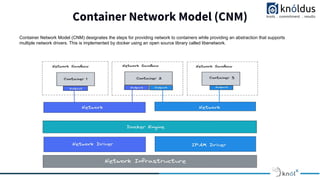

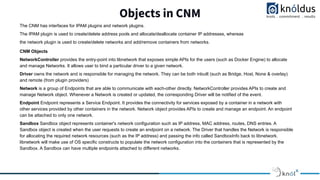

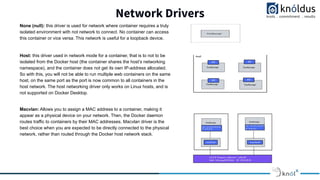

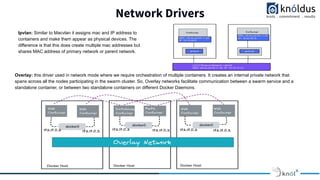

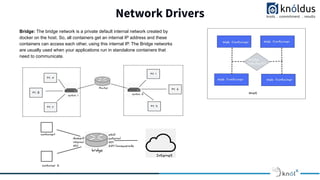

This document covers networking in Docker. It also sets out etiquette for sessions, including punctuality and minimizing disturbances. The technical core is the Container Network Model (CNM), which defines how Docker containers communicate over networks created by drivers such as bridge, overlay, and macvlan. The document details the roles of the CNM objects, namely the NetworkController, endpoints, and sandboxes, in managing container networks.
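
To make the relationship between these objects concrete, here is a minimal sketch of the CNM as a toy in-memory model in Python. It is not libnetwork's API and does not talk to a Docker daemon. The class and method names (`NetworkController.new_network`, `Network.create_endpoint`, `Endpoint.join`, `can_communicate`) are hypothetical and chosen only for illustration. The sketch assumes the usual CNM rules: a sandbox holds a container's network stack, an endpoint belongs to exactly one network and joins at most one sandbox, and two containers can reach each other directly only if they share a network.

```python
class Sandbox:
    """Toy stand-in for a container's isolated network stack."""

    def __init__(self, container_id):
        self.container_id = container_id
        self.endpoints = []  # endpoints that have joined this sandbox


class Endpoint:
    """Connects one sandbox to one network."""

    def __init__(self, name, network):
        self.name = name
        self.network = network
        self.sandbox = None

    def join(self, sandbox):
        # An endpoint can be attached to at most one sandbox.
        if self.sandbox is not None:
            raise ValueError(f"endpoint {self.name!r} already joined a sandbox")
        self.sandbox = sandbox
        sandbox.endpoints.append(self)


class Network:
    """A group of endpoints that can communicate with each other directly."""

    def __init__(self, name, driver):
        self.name = name
        self.driver = driver
        self.endpoints = {}

    def create_endpoint(self, name):
        if name in self.endpoints:
            raise ValueError(f"endpoint {name!r} already exists on {self.name!r}")
        endpoint = Endpoint(name, self)
        self.endpoints[name] = endpoint
        return endpoint


class NetworkController:
    """Entry point: knows the available drivers and creates networks."""

    def __init__(self, drivers=("bridge", "overlay", "macvlan")):
        self.drivers = set(drivers)
        self.networks = {}

    def new_network(self, driver, name):
        if driver not in self.drivers:
            raise ValueError(f"unknown driver {driver!r}")
        if name in self.networks:
            raise ValueError(f"network {name!r} already exists")
        network = Network(name, driver)
        self.networks[name] = network
        return network


def can_communicate(sandbox_a, sandbox_b):
    """True if the two sandboxes have endpoints on at least one common network."""
    networks_a = {ep.network.name for ep in sandbox_a.endpoints}
    networks_b = {ep.network.name for ep in sandbox_b.endpoints}
    return bool(networks_a & networks_b)


# Example wiring: web and db share "backend"; cache sits alone on "isolated".
controller = NetworkController()
backend = controller.new_network("bridge", "backend")
isolated = controller.new_network("bridge", "isolated")

web, db, cache = Sandbox("web"), Sandbox("db"), Sandbox("cache")
backend.create_endpoint("web-ep").join(web)
backend.create_endpoint("db-ep").join(db)
isolated.create_endpoint("cache-ep").join(cache)
```

In this wiring, `can_communicate(web, db)` is true because both sandboxes have an endpoint on `backend`, while `can_communicate(web, cache)` is false. In real Docker the driver determines how that connectivity is implemented; the toy model only records the driver name.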