ML for blind people.pptx

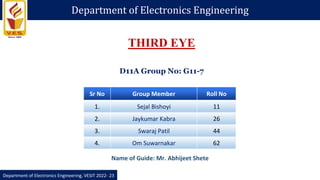

- 1. THIRD EYE. Department of Electronics Engineering, VESIT, 2022-23. Class: D11A. Group No: G11-7. Name of Guide: Mr. Abhijeet Shete. Group members (Sr No, Name, Roll No): 1. Sejal Bishoyi, 11; 2. Jaykumar Kabra, 26; 3. Swaraj Patil, 44; 4. Om Suwarnakar, 62.

- 2. Why This Project? 1. Today, machine learning is used in many industries, from medical image processing to autonomous cars. Object detection in images has become an important research area within computer vision: computers can now not only recognize objects but also draw bounding boxes around them. 2. We therefore propose implementing computer vision machine learning algorithms to detect objects.

- 3. Why This Project? 1. The World Health Organization (WHO) reports that at least 2.2 billion people worldwide have a visual impairment or blindness. 2. Among people over the age of 35, 1.09 billion have a visual impairment. 3. Assistive devices help blind and visually impaired people overcome physical, social, infrastructural, and accessibility barriers so that they can live active, productive, and independent lives as equal members of society.

- 4. Introduction 1. Vision is one of the most essential human senses and plays the most important role in how people perceive their surroundings. 2. Object detection in images has become an important research area within computer vision: computers can now not only recognize objects but also draw bounding boxes around them. 3. We propose implementing computer vision machine learning algorithms for object detection.

- 5. Literature Review 1. "Object Detection and Narrator for Visually Impaired People," 2019 6th IEEE International Conference on Engineering Technologies and Applied Sciences (ICETAS). This paper explains how convolutional neural networks trained on the ImageNet dataset can detect objects and narrate information about the detected objects to a visually impaired person. The implementation can be used on any device with a camera, including computers, tablets, and mobile phones.

- 6. Literature Review 2. "Assistive Object Recognition System for Visually Impaired," International Journal of Engineering Research & Technology (IJERT), Vol. 9, Issue 09, September 2020. This paper aims to aid the visually impaired with a system that is feasible, compact, and cost-effective. It presents a system built on a Raspberry Pi that applies the You Only Look Once (YOLOv3) machine learning algorithm trained on the COCO dataset.

- 7. Literature Review 3. "Assistive Technology for Visually Impaired using Tensorflow Object in Raspberry Pi and Coral USB Accelerator," 2020 IEEE Region 10 Symposium (TENSYMP), 5-7 June 2020, Dhaka, Bangladesh. This paper aims to develop an assistive technology based on computer vision, machine learning, and TensorFlow to support visually impaired people. The proposed system lets users navigate independently using real-time object detection and identification.

- 8. Literature Review 4. "Real Time Object Detector for Visually Impaired using OPEN," European Journal of Molecular & Clinical Medicine, ISSN 2515-8260, Volume 7, Issue 4, 2020. The goal of this project is to build an object detector for visually impaired people, and for other commercial purposes, that recognizes objects at a particular distance. The paper proposes a computer vision approach that converts objects to text by importing a pre-trained Caffe model (caffemodel); the text is then converted into speech.

- 9. Hardware/Software Requirements. Hardware: integrated camera, integrated speaker. Software: MATLAB & Simulink. Libraries: 1. Computer Vision Toolbox 2. Computer Vision Toolbox Model for Mask R-CNN Instance Segmentation 3. Deep Learning Toolbox 4. Deep Learning Toolbox Model for ResNet-50 Network 5. Image Processing Toolbox 6. MATLAB Support Package for USB Webcams

- 10. Flowchart

- 11. Block Diagram

- 12. Working 1. First, images are captured by the camera and sent to the pretrained model. 2. The machine learning model (resnet50-coco) analyzes each image and detects the objects in it. 3. In the backend, image processing steps such as feature detection and feature extraction take place. 4. Once an object is detected, the output is delivered to the user as audio.
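
The last step above, turning detections into something that can be spoken, can be sketched as follows. This is a minimal illustration and not the project's MATLAB code: it is written in Python, and the function name `narrate`, the `(label, score)` input format, and the `min_score` threshold of 0.5 are all assumptions made for the example. Camera capture, the detector itself, and the text-to-speech call are left out.

```python
from collections import Counter


def narrate(detections, min_score=0.5):
    """Build a sentence to be spoken from (label, score) detections.

    `detections` is a hypothetical detector output: a list of
    (class label, confidence score) pairs. Detections scoring below
    `min_score` (an assumed threshold) are ignored.
    """
    labels = [label for label, score in detections if score >= min_score]
    if not labels:
        return "No objects detected."

    # Count repeated classes so each one is announced once,
    # most frequent class first.
    parts = []
    for label, count in Counter(labels).most_common():
        if count == 1:
            parts.append(label)
        else:
            parts.append(f"{count} instances of {label}")
    return "Detected: " + ", ".join(parts) + "."


# Example with made-up detections; the low-confidence "dog" is dropped.
sample = [("person", 0.92), ("chair", 0.81), ("person", 0.77), ("dog", 0.30)]
print(narrate(sample))
```

The resulting string would then be handed to whatever speech output the platform provides. Grouping repeated labels keeps the spoken message short when a scene contains many objects of the same class.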

- 13. Courses (course: modules, status). MATLAB Onramp: 14 modules, completed. MATLAB Machine Learning: 6 modules, completed. MATLAB Deep Learning: 13 modules, completed. MATLAB Image Processing: 11 modules, completed.

- 14. Plan of Implementation (month: plan). August 2022: deciding the topic and researching. September 2022: completing the courses required for the project. October 2022: adding the image data and image processing. November 2022: completion of the project (software).

- 15. Applications 1. Tracking objects 2. People counting 3. Automated CCTV

- 16. Applications 4. Person detection 5. Vehicle detection

- 17. Result

- 18. Result (figures: Accuracy Graph, Confusion Matrix)

- 19. Conclusion. We proposed a system that can detect objects in fields such as medical science and the automobile industry, and that can assist visually impaired people in understanding their environment by narrating the objects around them. The system is built in MATLAB: on startup it captures an image from the camera and passes it to the server. On the server side, a trained machine learning model detects the objects in the image. The detection result is returned to the client, where a voice library narrates it to the visually impaired user.

- 20. References 1. World Health Organization. Visual Impairment and Blindness. Available online: http://www.who.int/mediacentre/factsheets/fs282/en/ (accessed on 24 January 2016). 2. American Foundation for the Blind. Available online: http://www.afb.org/ (accessed on 24 January 2016). 3. National Federation of the Blind. Available online: http://www.nfb.org/ (accessed on 24 January 2016). 4. Velázquez R. Wearable assistive devices for the blind. In: Wearable and Autonomous Biomedical Devices and Systems for Smart Environment. Springer; Berlin/Heidelberg, Germany: 2010; pp. 331–349. 5. Baldwin D. Wayfinding technology: A road map to the future. J. Vis. Impair. Blind. 2003;97:612–620. 6. Blasch B.B., Wiener W.R., Welsh R.L. Foundations of Orientation and Mobility. 2nd ed. AFB Press; New York, NY, USA: 1997. 7. Shah C., Bouzit M., Youssef M., Vasquez L. Evaluation of RUNetra tactile feedback navigation system for the visually-impaired. Proceedings of the International Workshop on Virtual Rehabilitation; New York, NY, USA. 29–30 August 2006; pp. 72–77. 8. Hersh M.A. The Design and Evaluation of Assistive Technology Products and Devices Part 1: Design. In: International Encyclopedia of Rehabilitation. CIRRIE; Buffalo, NY, USA: 2010. 9. who.int …

- 21. Thank you