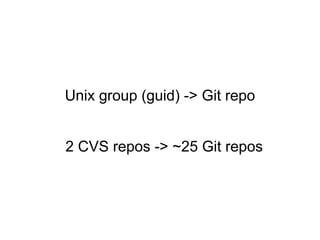

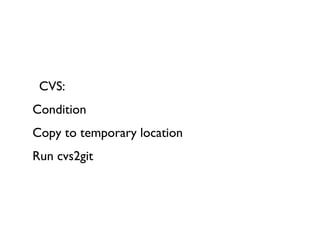

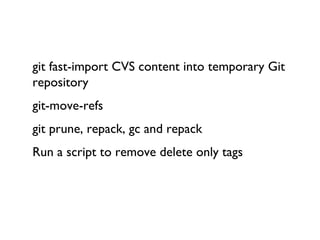

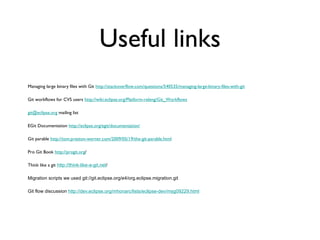

The document summarizes IBM's experience migrating a large codebase from CVS to Git. The migration covered more than 40 active committers and around 600 bundles built daily across 4 active development streams. It proceeded in several steps, including converting the CVS repositories to Git, adding .gitignore files, and optimizing the repositories. Quotes from IBM employees describe advantages of Git, such as thinking in terms of branches instead of patches, and challenges, such as the learning curve for developers.
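
The summary does not say how the .gitignore files were produced. As a minimal sketch of that step, assuming they were derived from existing .cvsignore files (an assumption, not something the source confirms), the conversion could look like the following. The function names `cvsignore_to_gitignore` and `add_gitignore_files` are hypothetical and chosen only for illustration.

```python
from pathlib import Path


def cvsignore_to_gitignore(text: str) -> str:
    """Translate the contents of one .cvsignore file into .gitignore lines.

    Assumption: .cvsignore holds whitespace-separated patterns that apply
    only to the directory containing the file.
    """
    lines = []
    for pattern in text.split():
        if pattern == "!":
            # In CVS a lone "!" clears the ignore list; it has no direct
            # .gitignore equivalent, so this sketch simply skips it.
            continue
        # A leading "/" anchors the pattern to the directory that holds
        # the .gitignore, so it does not leak into subdirectories.
        lines.append("/" + pattern)
    return "\n".join(lines) + "\n" if lines else ""


def add_gitignore_files(root: Path) -> list[Path]:
    """Write a .gitignore next to every .cvsignore found under root."""
    written = []
    for cvsignore in sorted(root.rglob(".cvsignore")):
        target = cvsignore.with_name(".gitignore")
        if target.exists():
            continue  # never overwrite a hand-written .gitignore
        target.write_text(cvsignore_to_gitignore(cvsignore.read_text()))
        written.append(target)
    return written
```

Calling `add_gitignore_files(Path("path/to/checkout"))` on a converted working tree would return the list of files it created, which can then be reviewed and committed. Anchoring each pattern with a leading slash is a design choice that keeps the per-directory scope CVS uses; dropping the slash would make each pattern apply to subdirectories as well.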