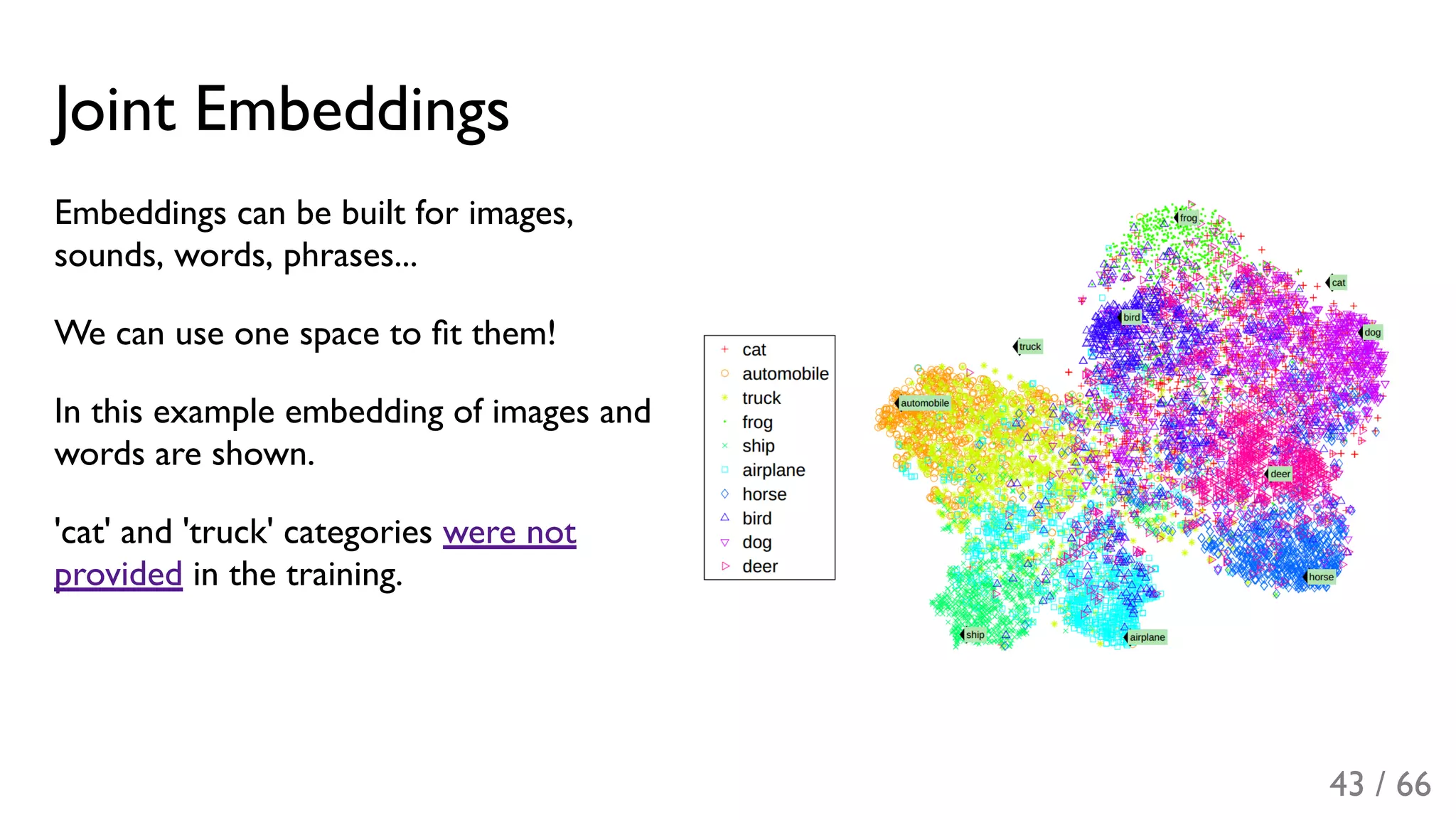

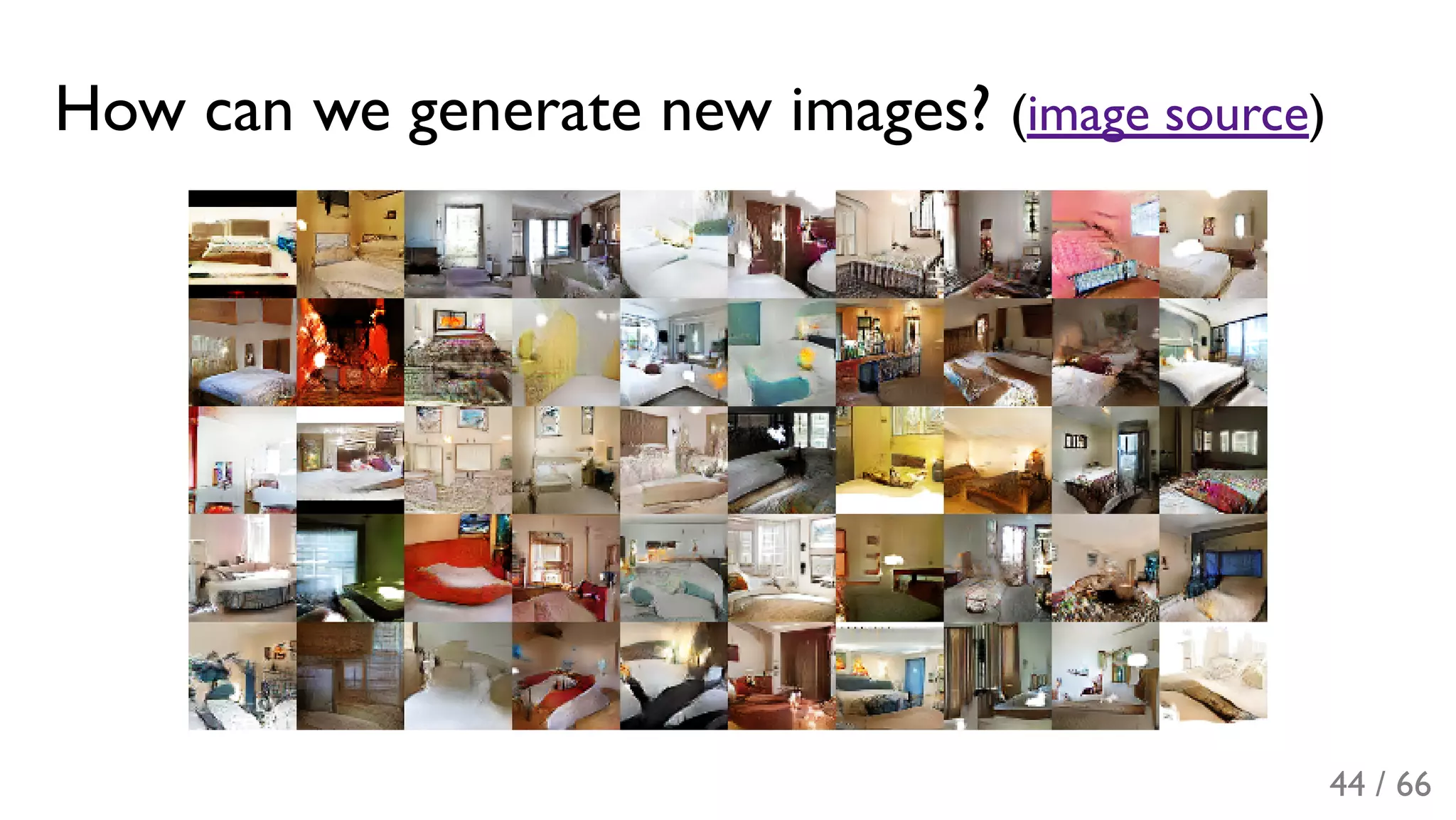

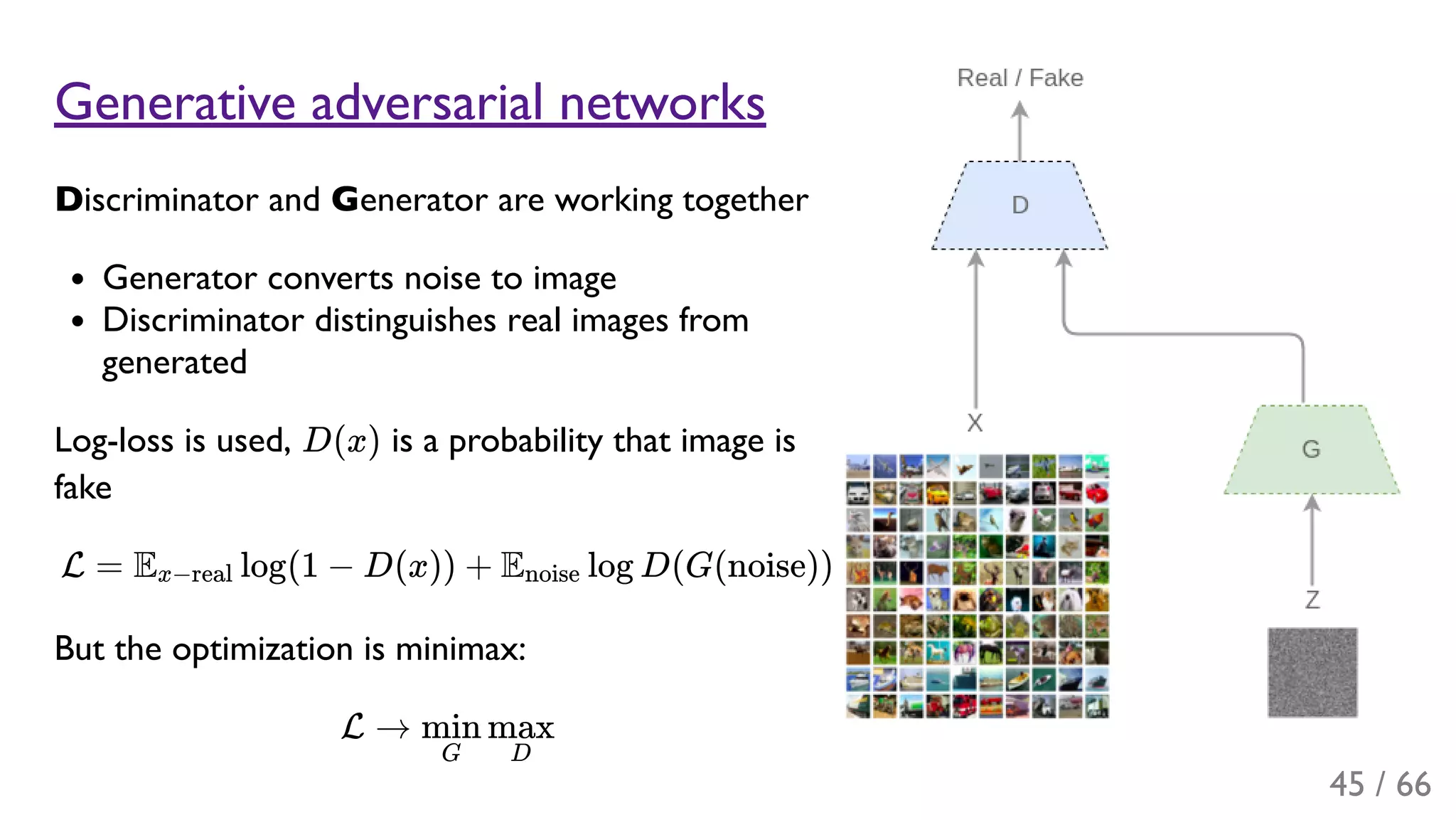

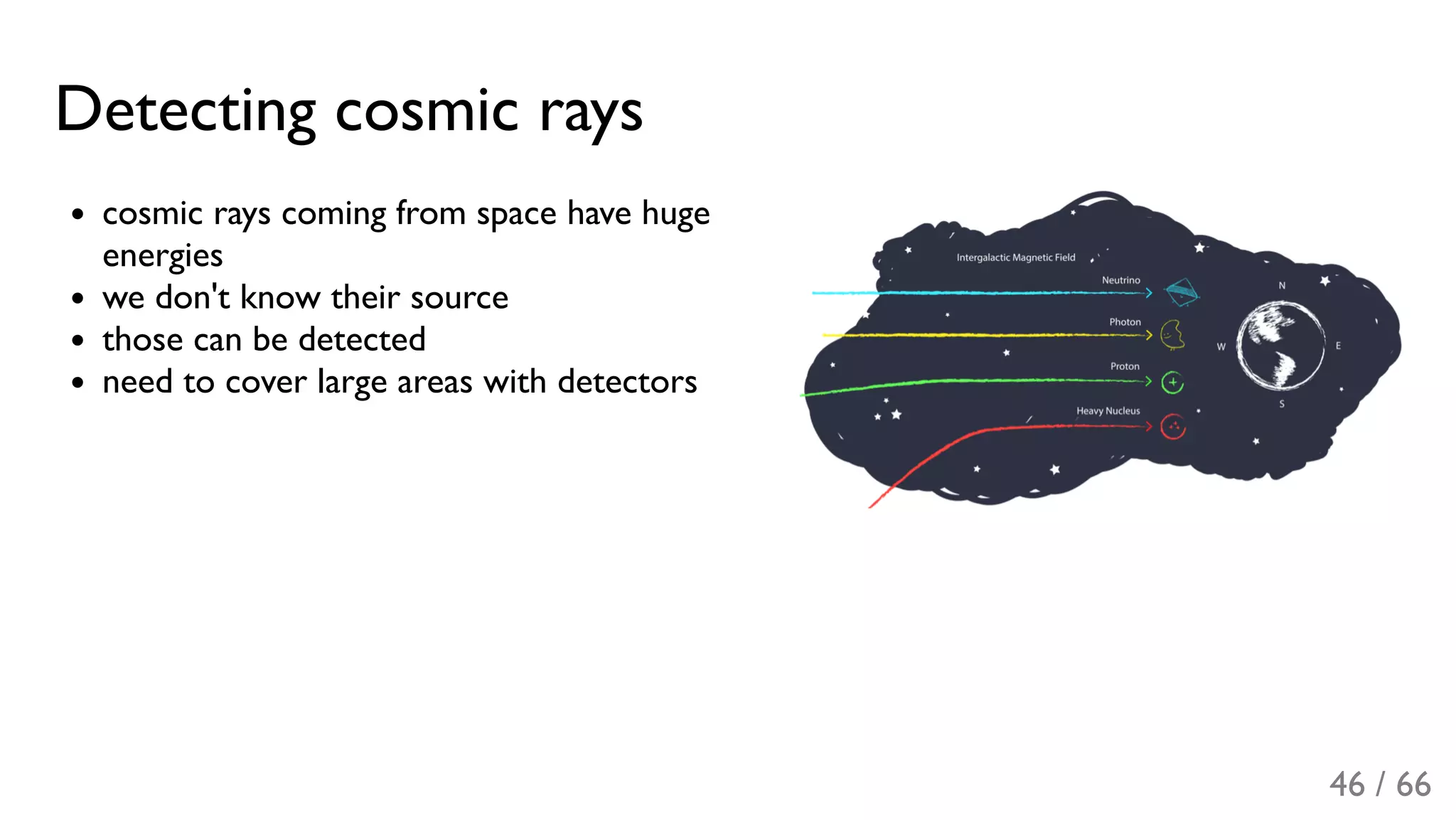

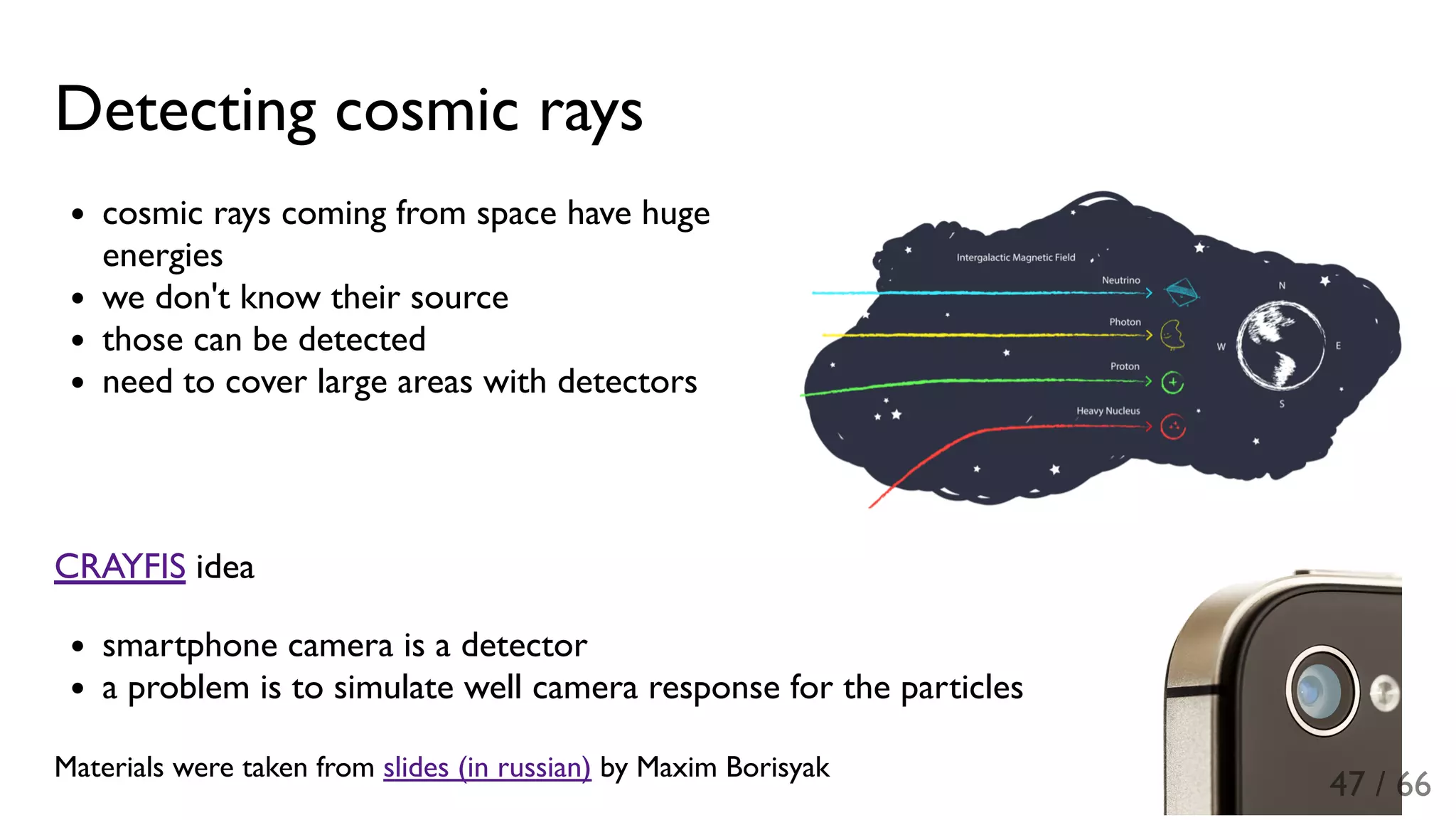

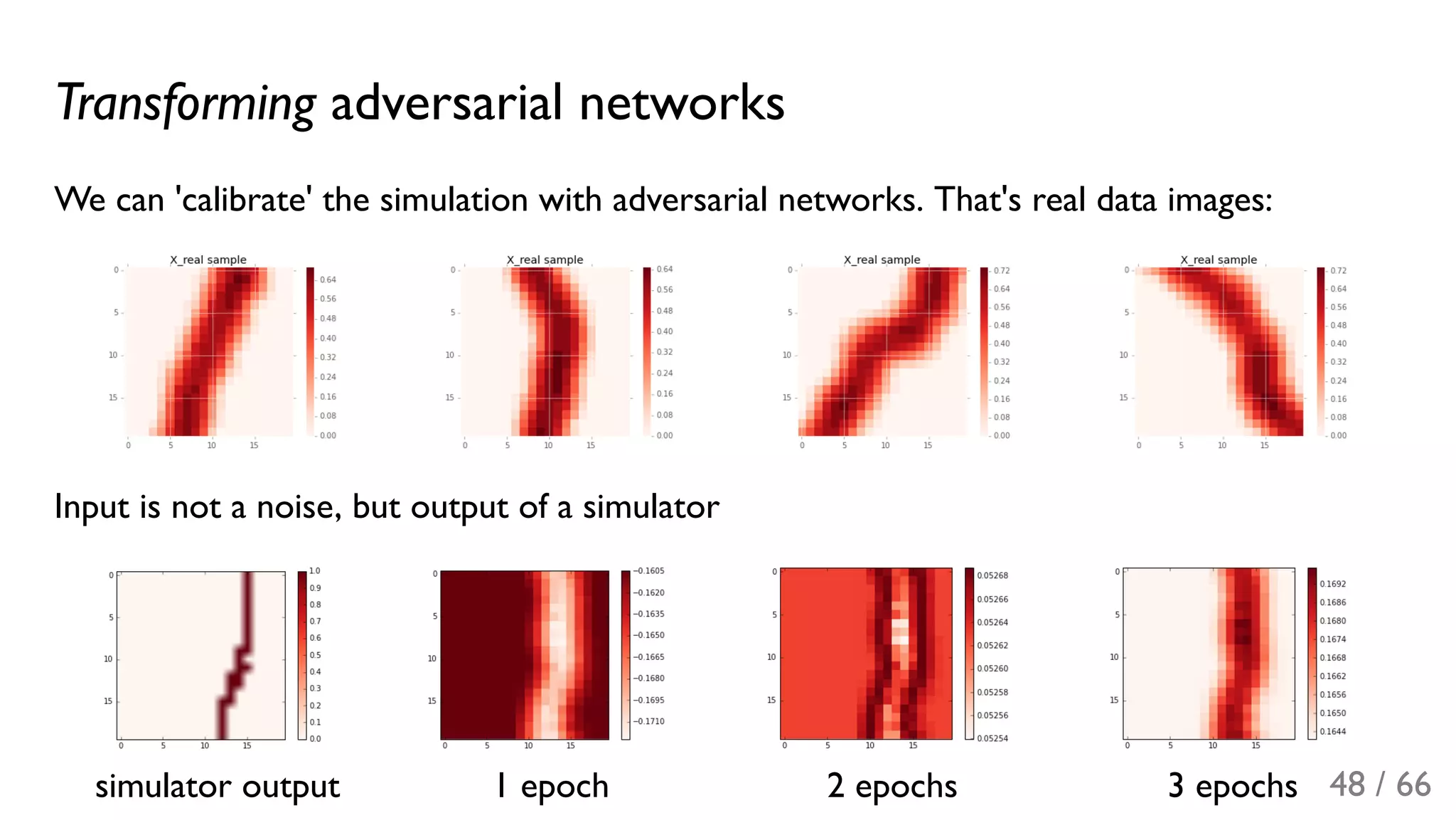

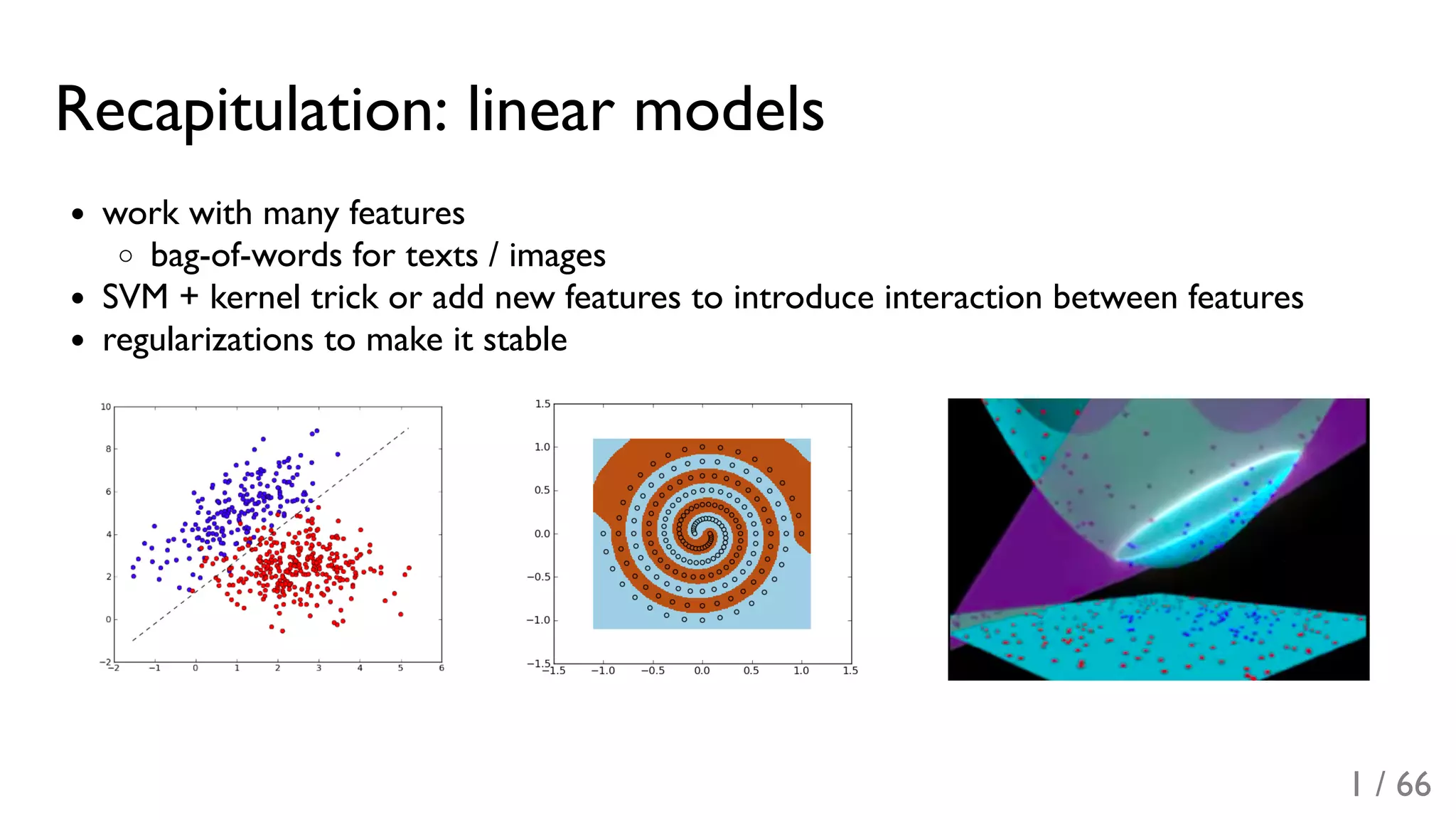

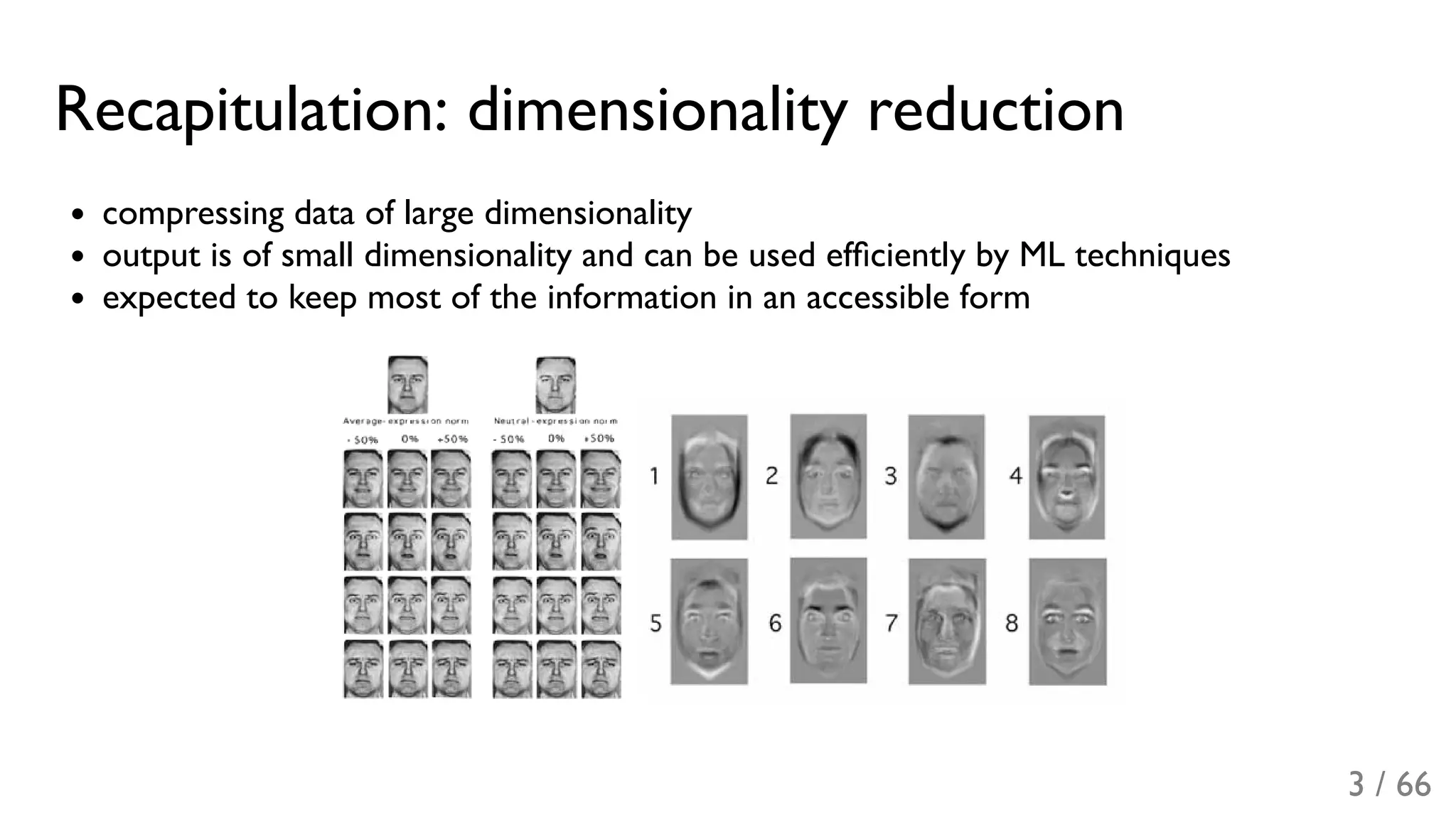

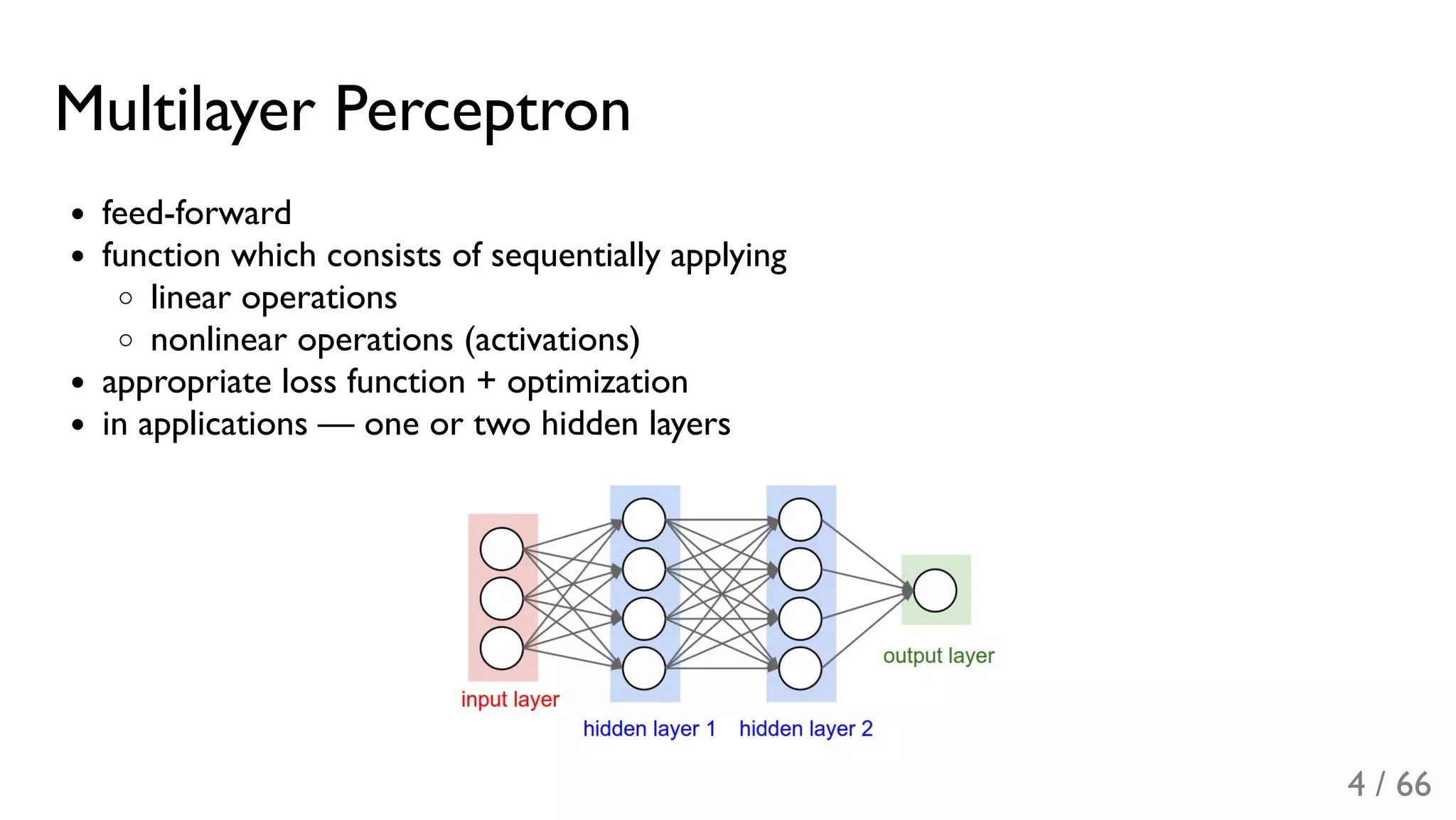

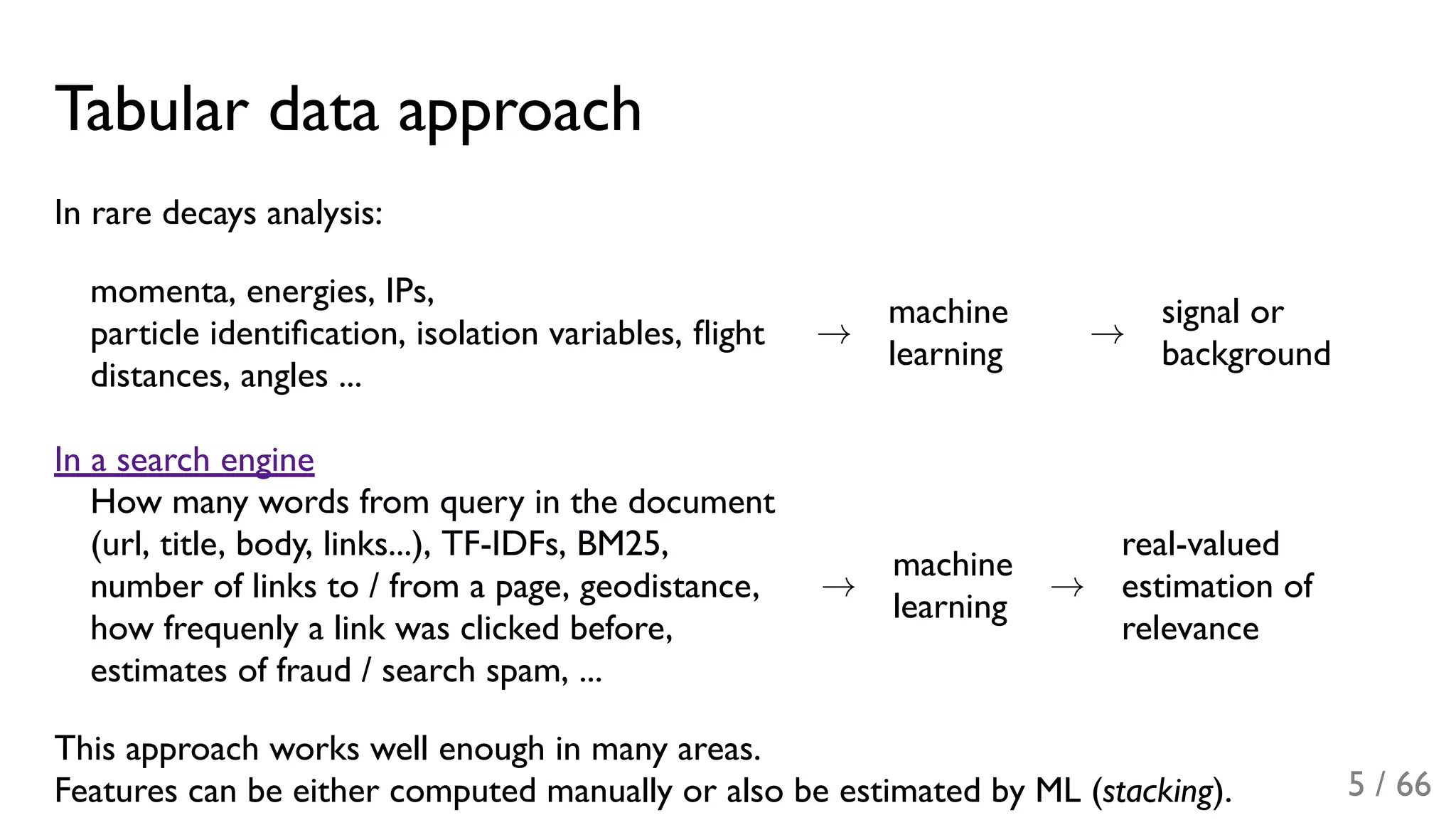

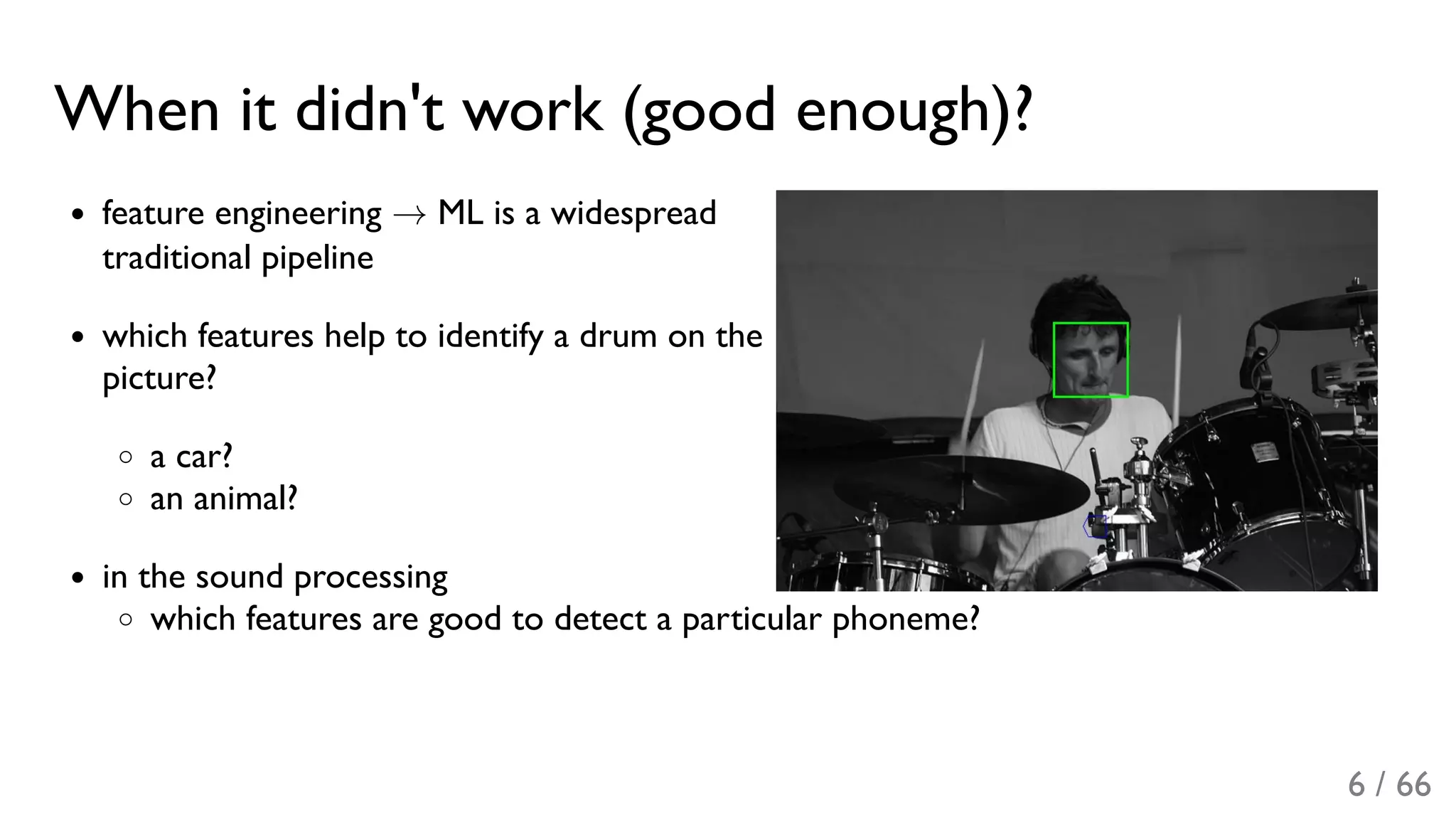

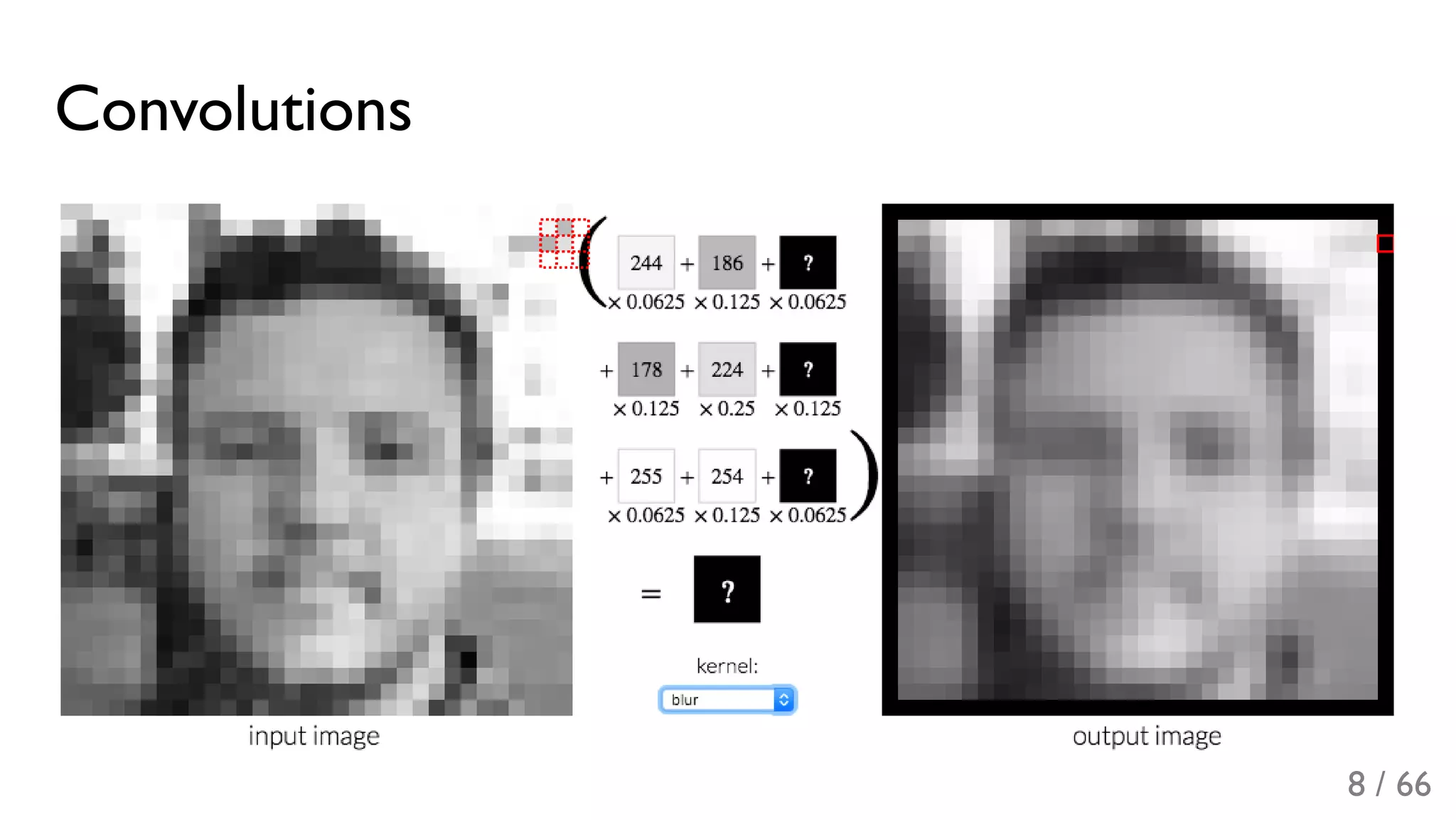

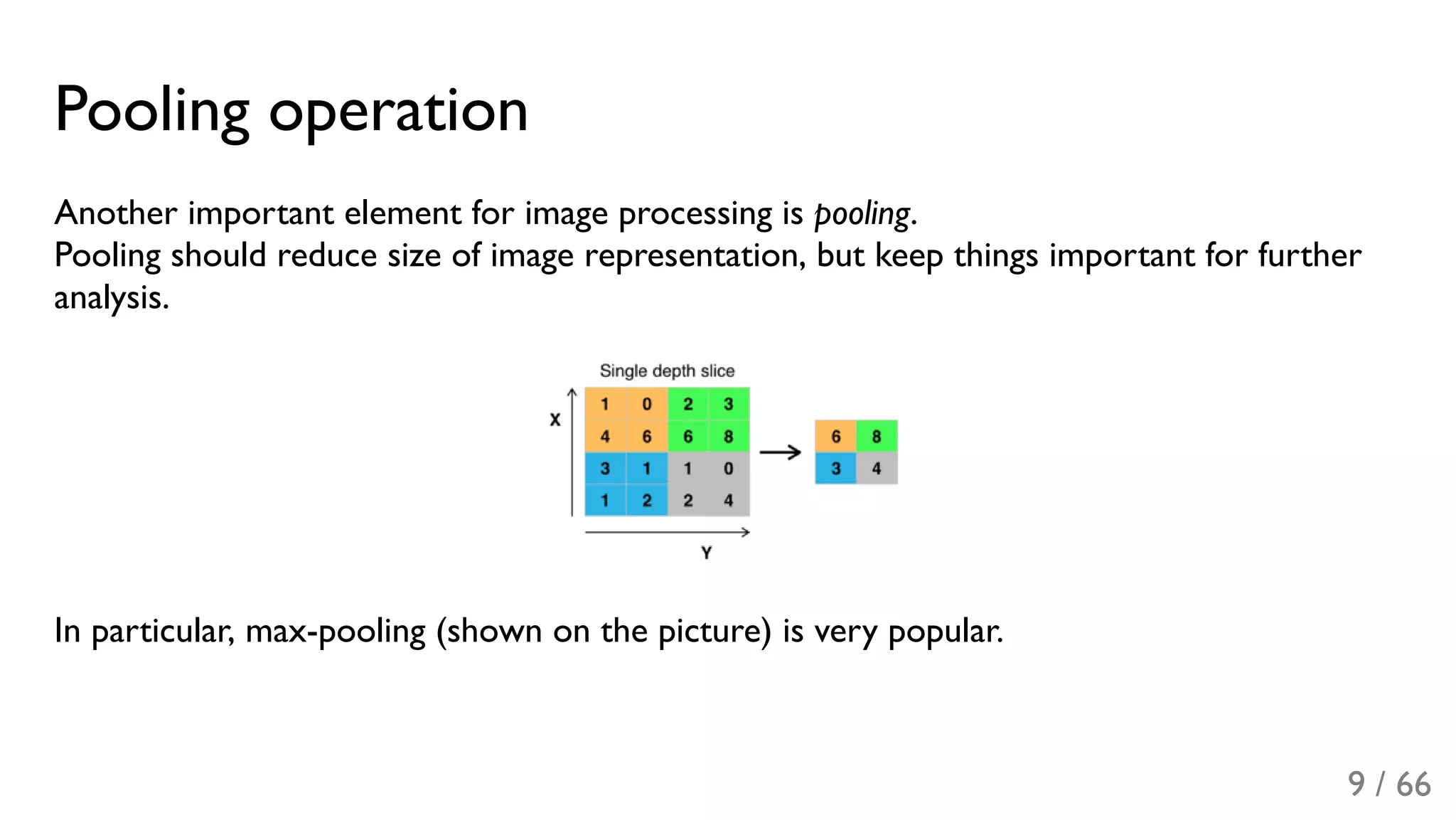

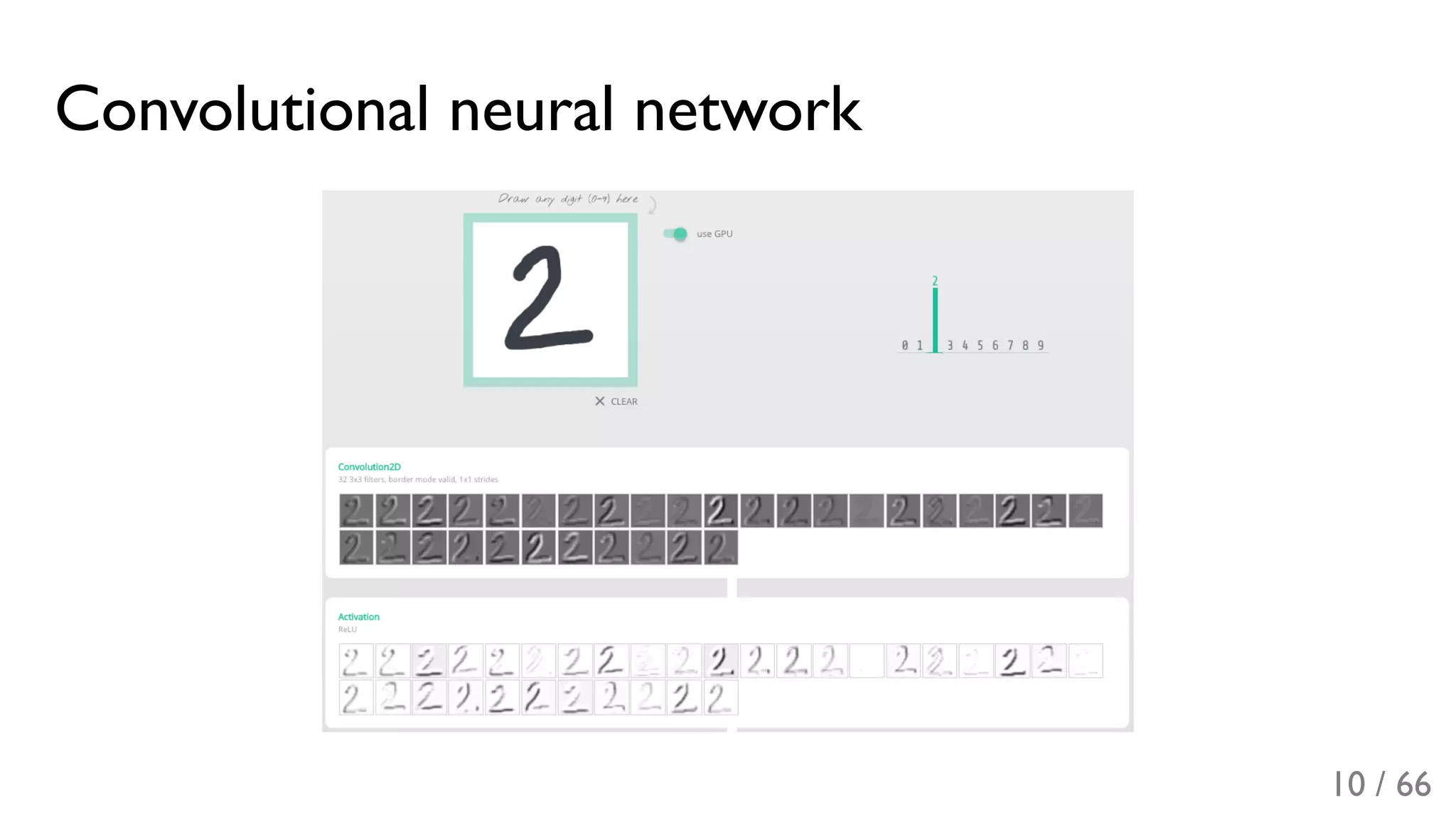

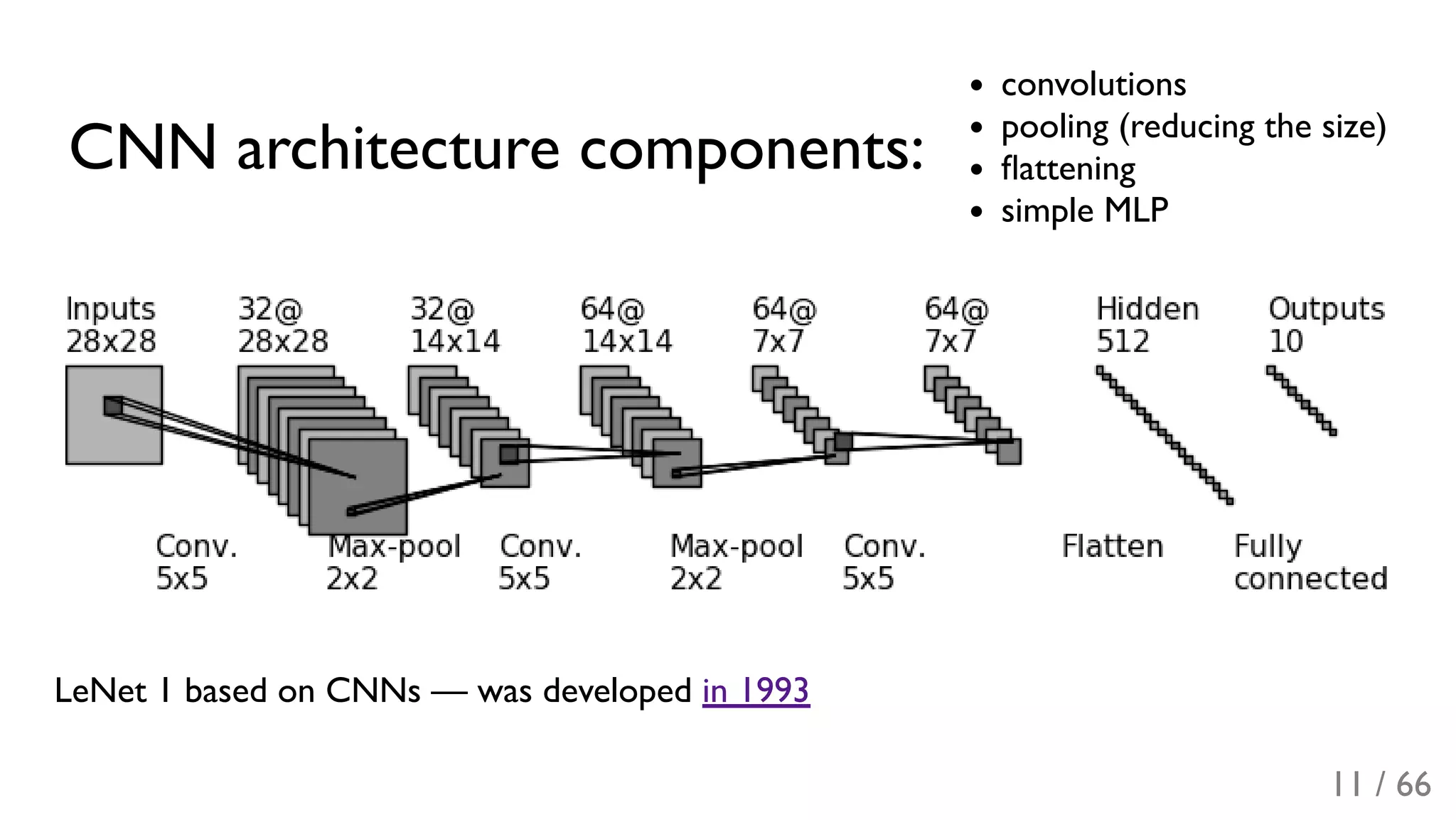

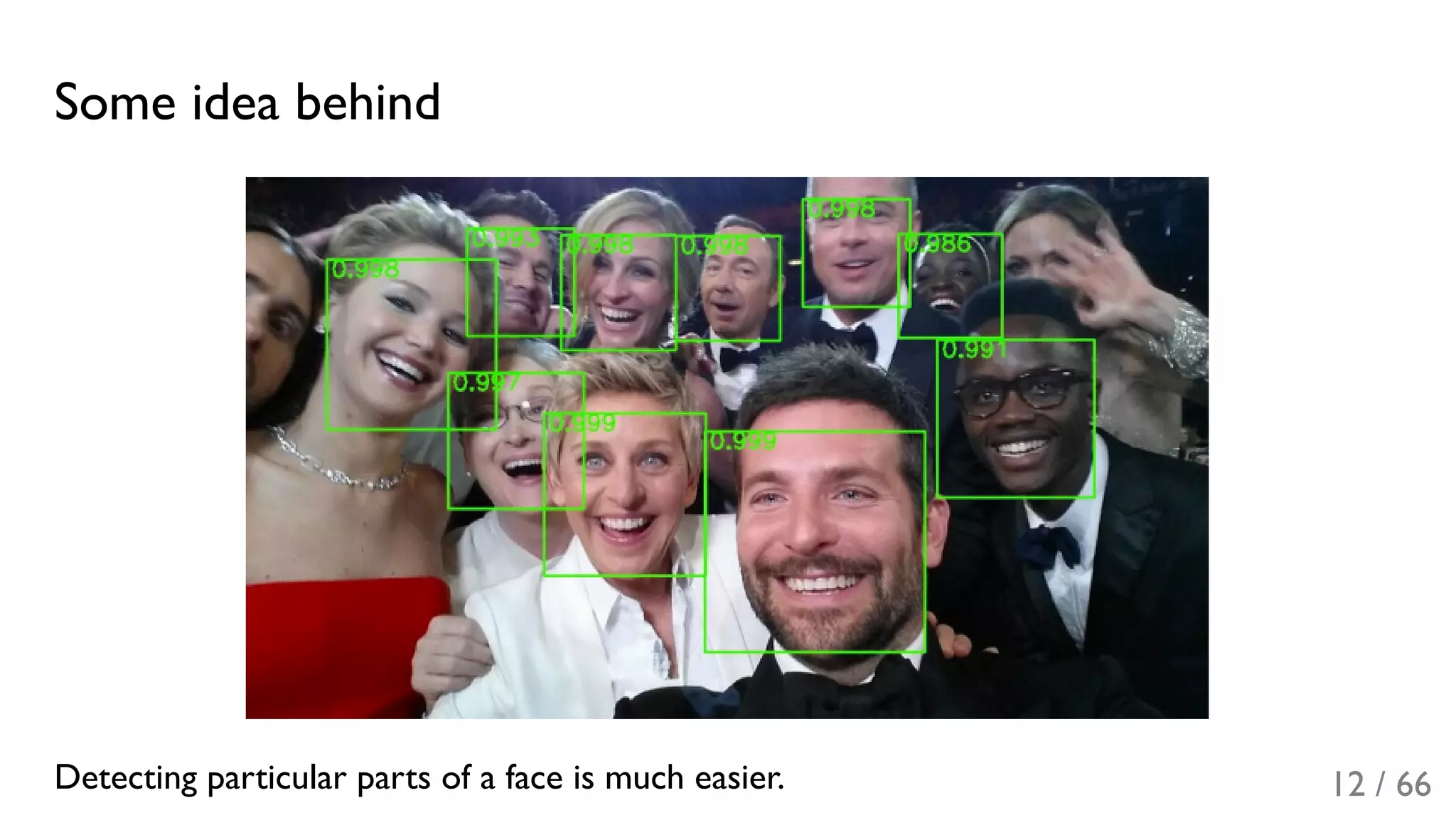

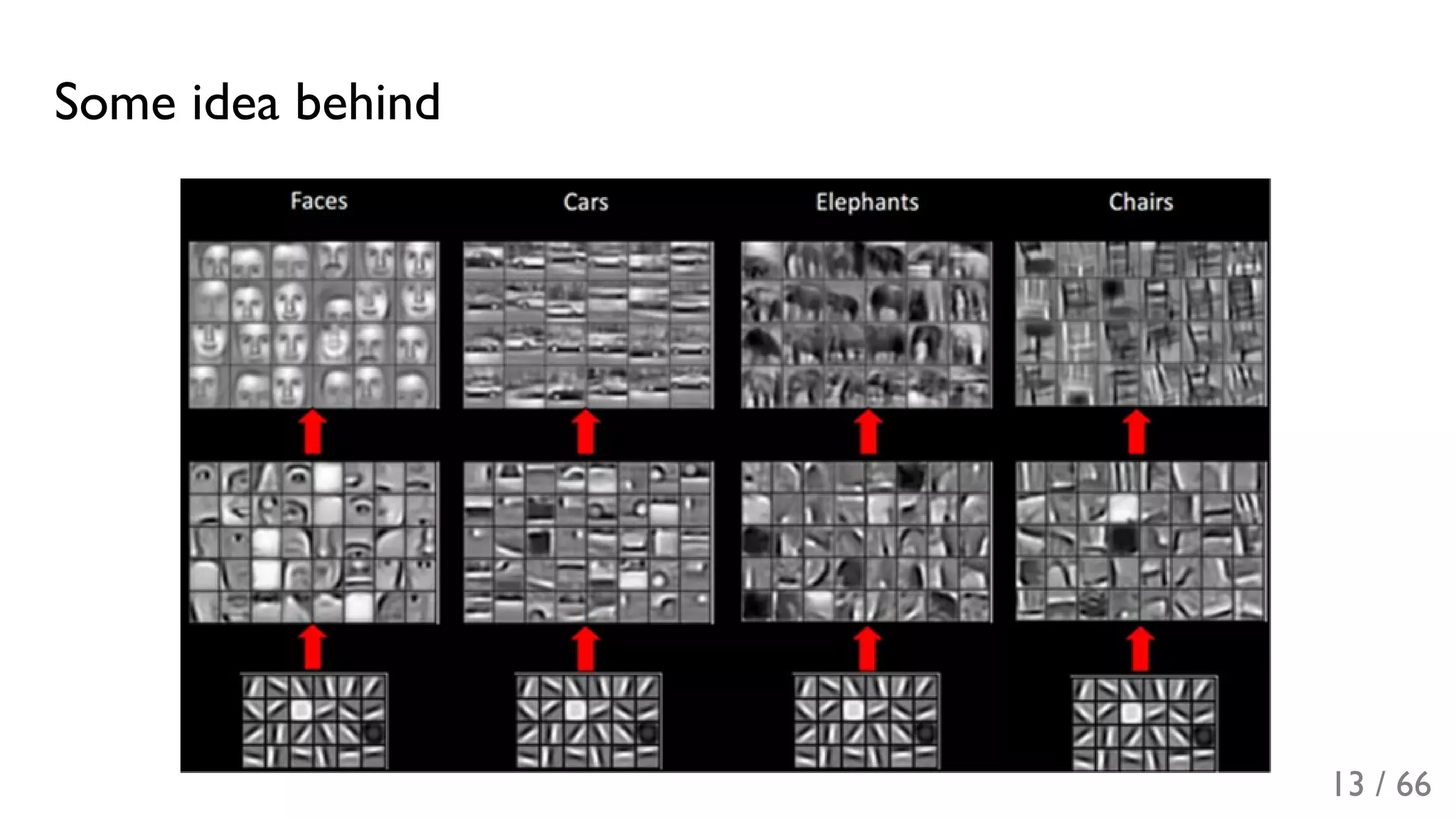

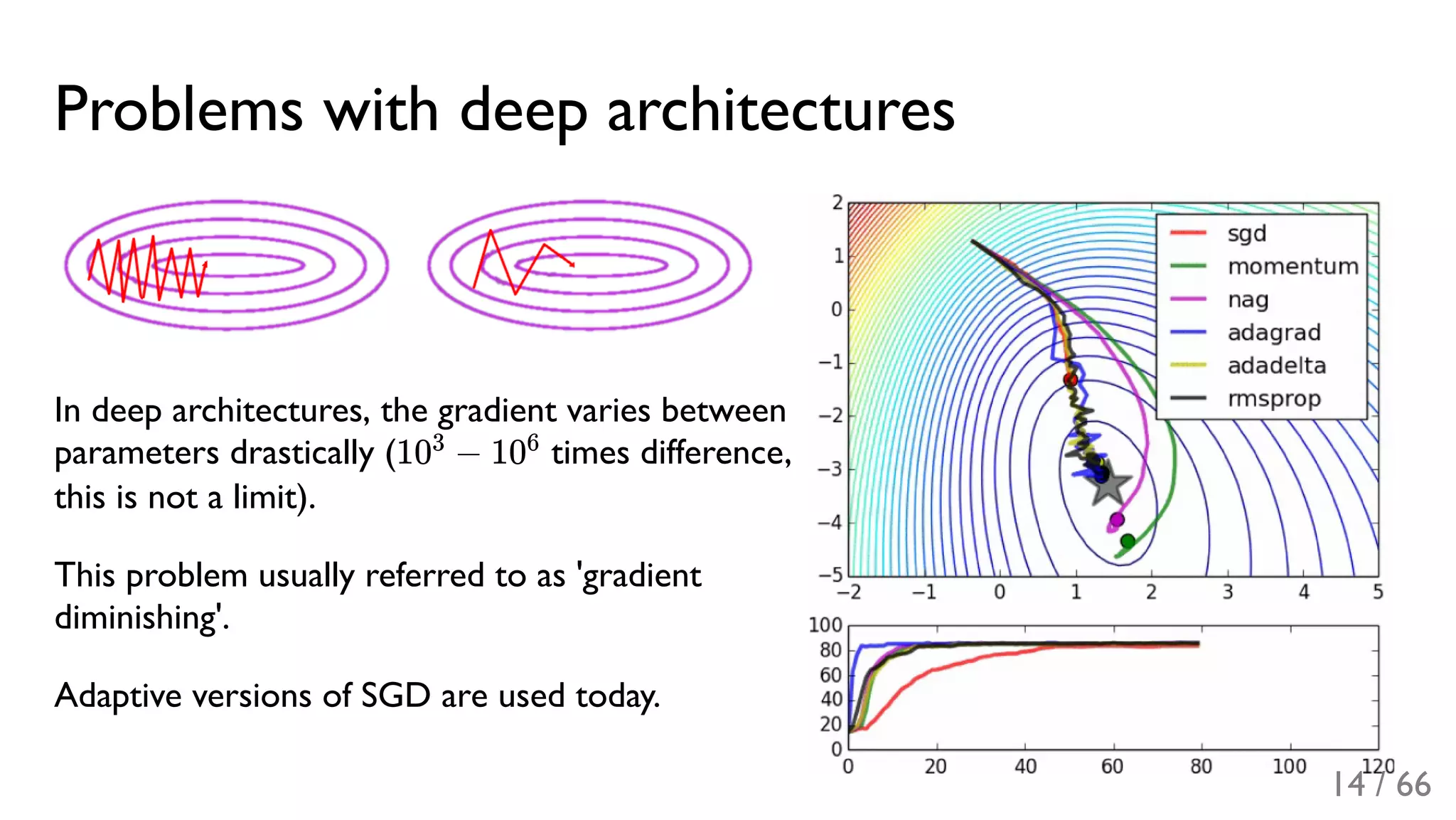

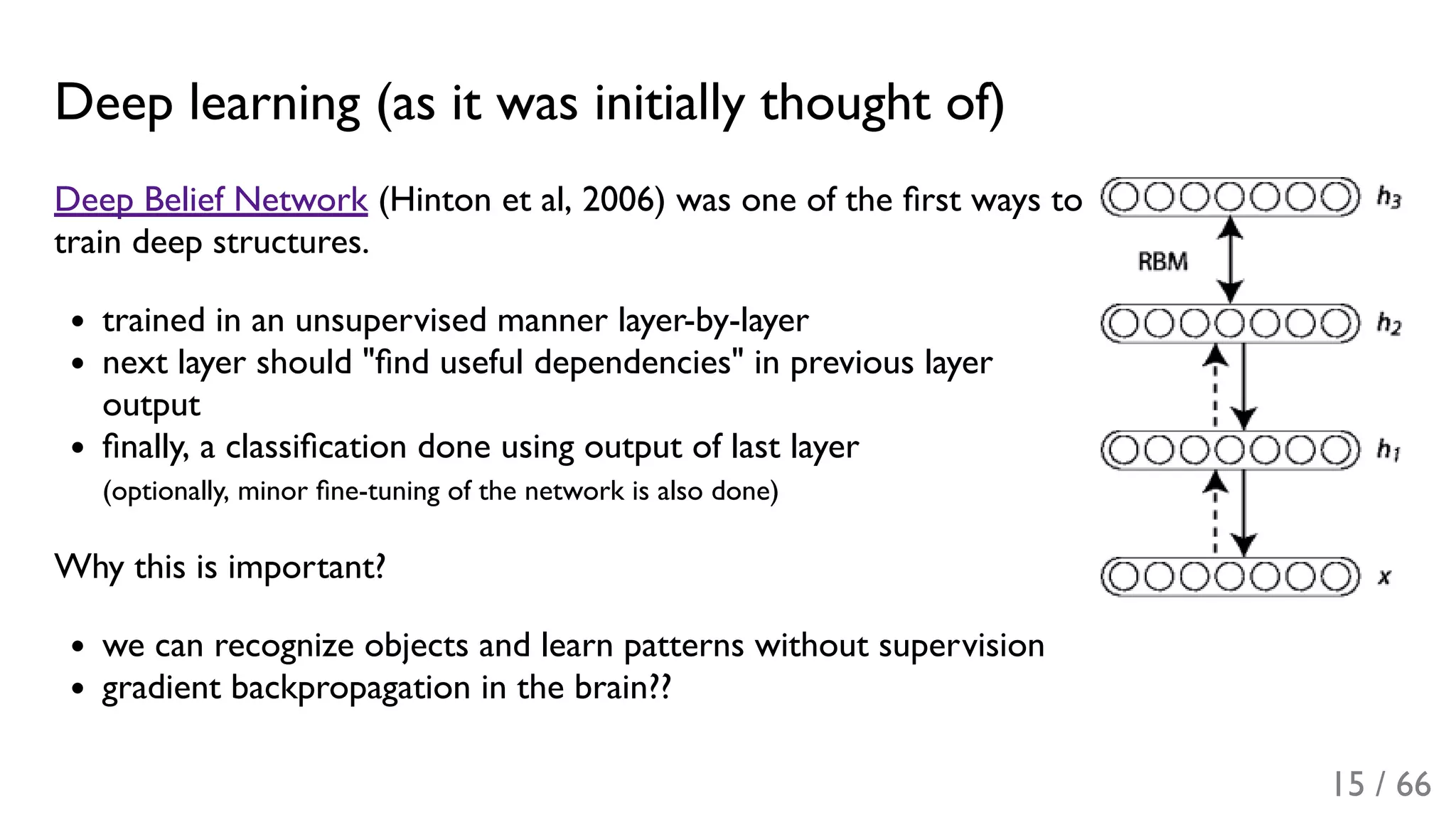

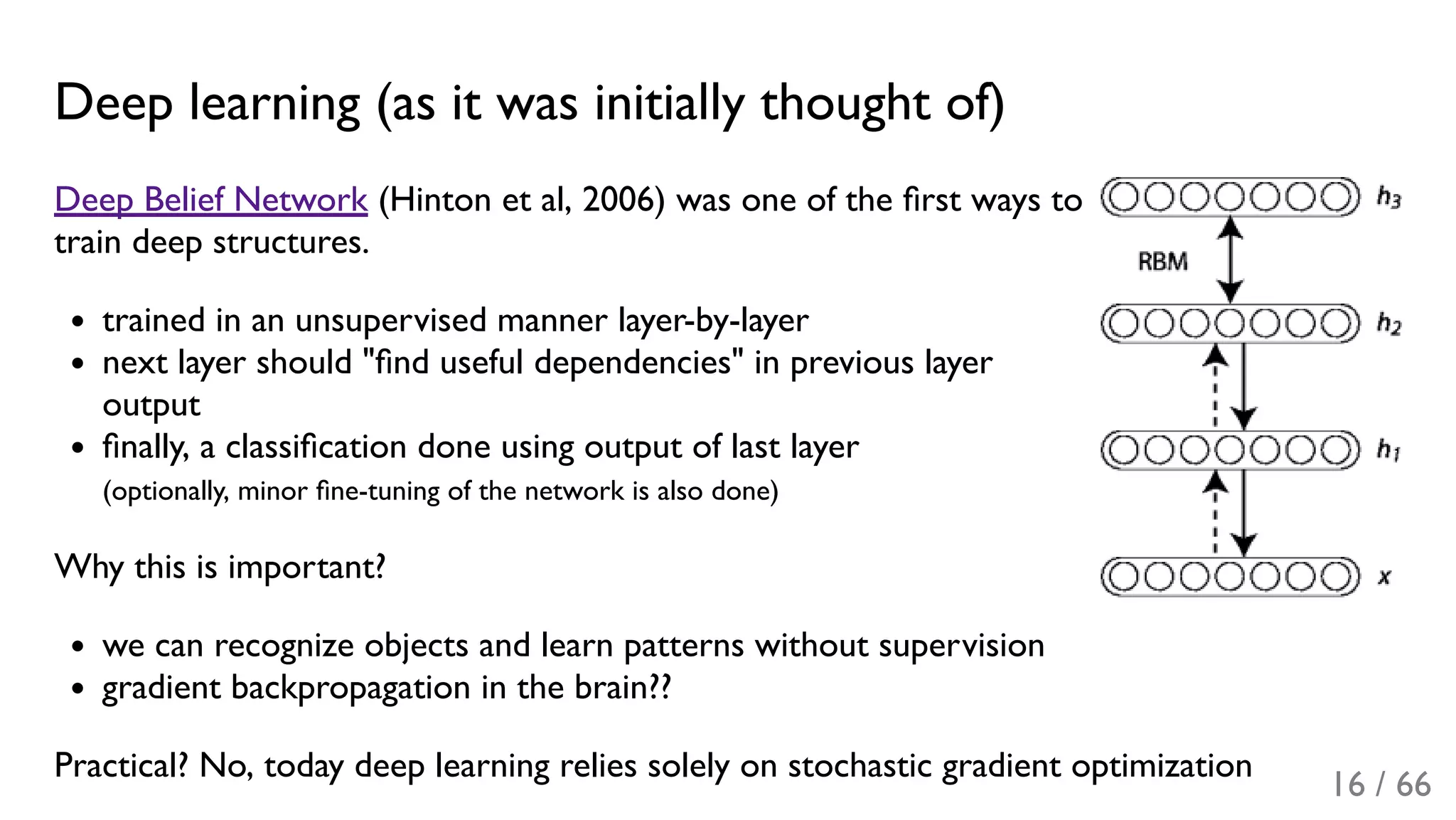

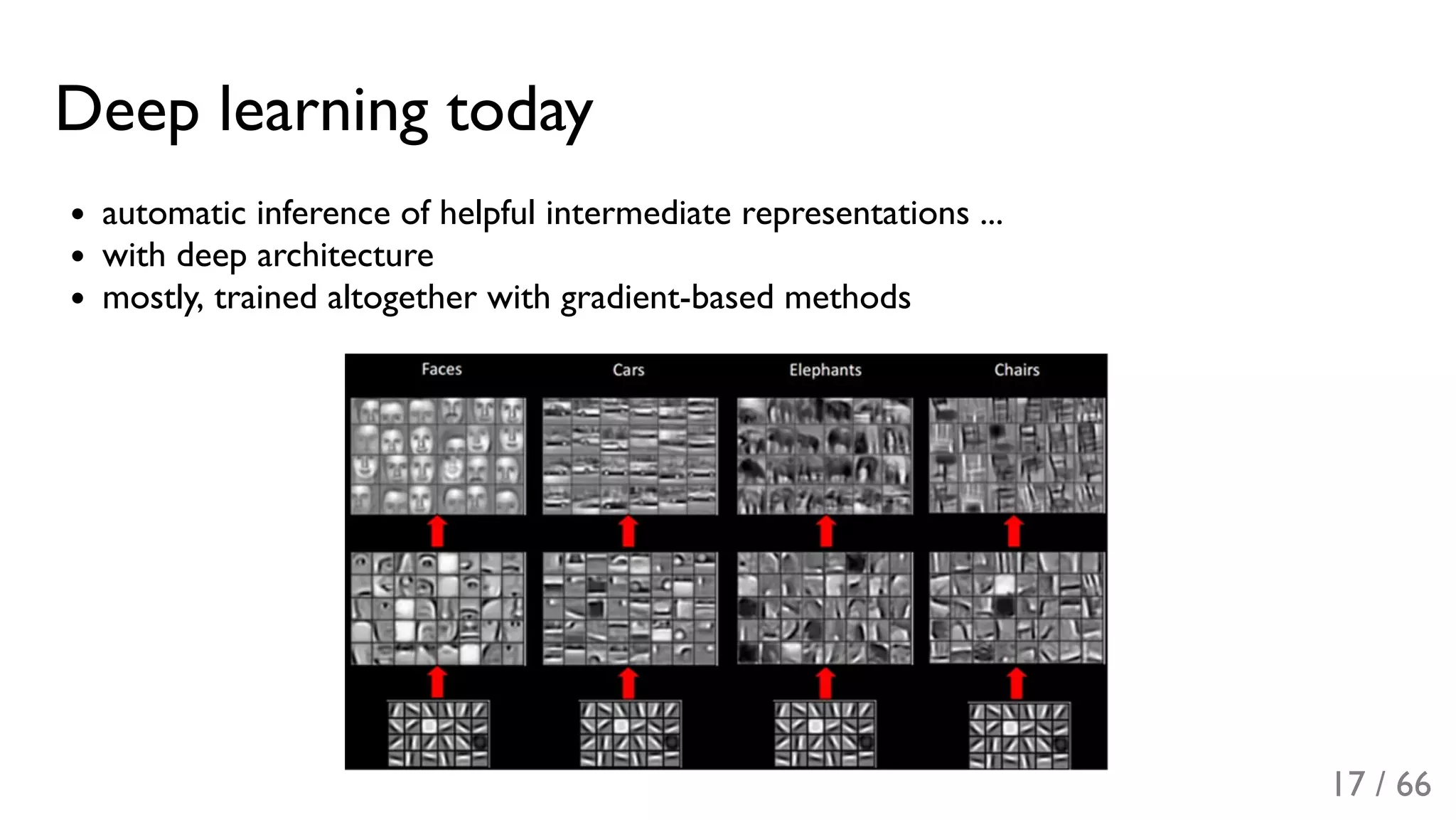

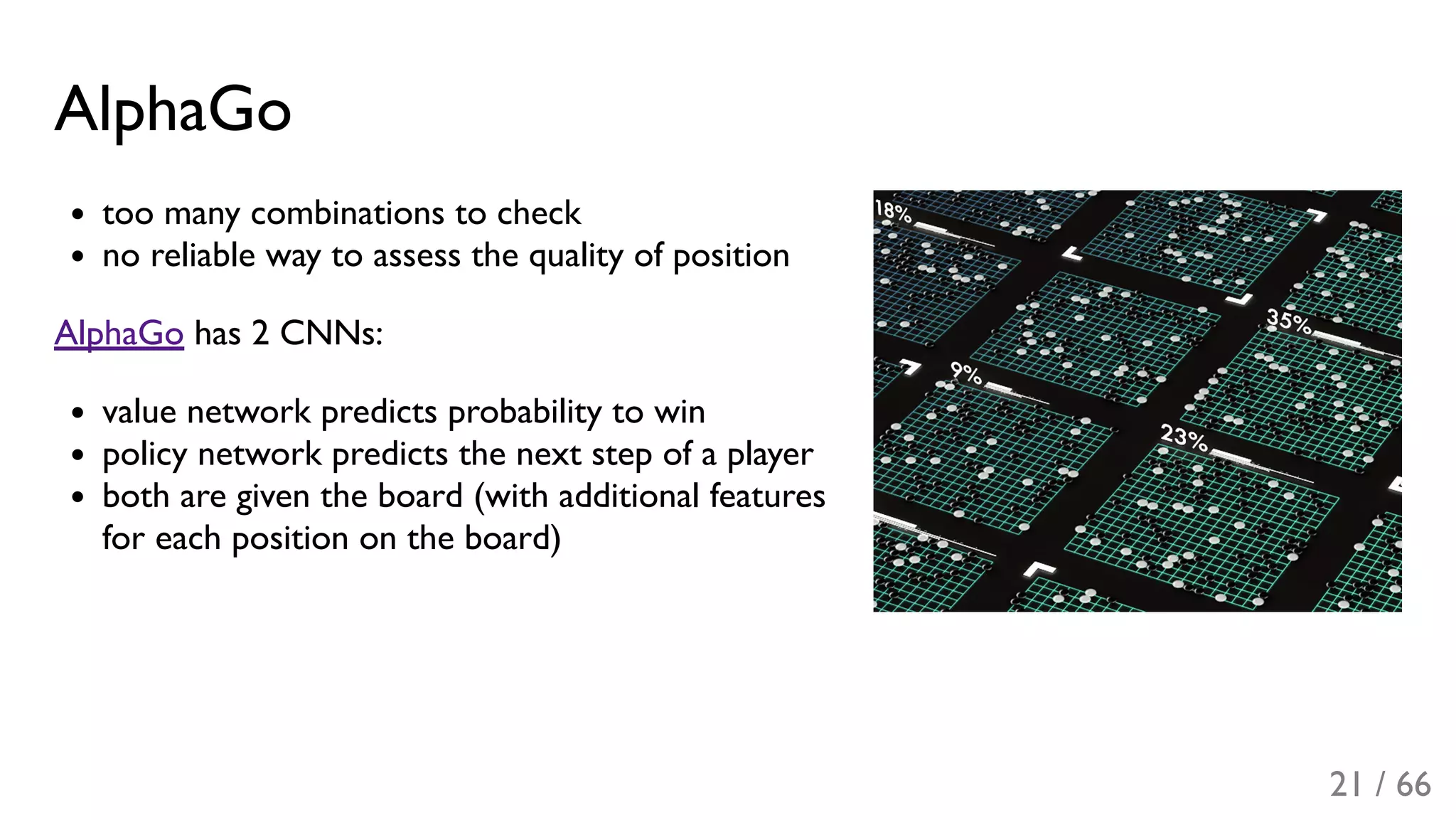

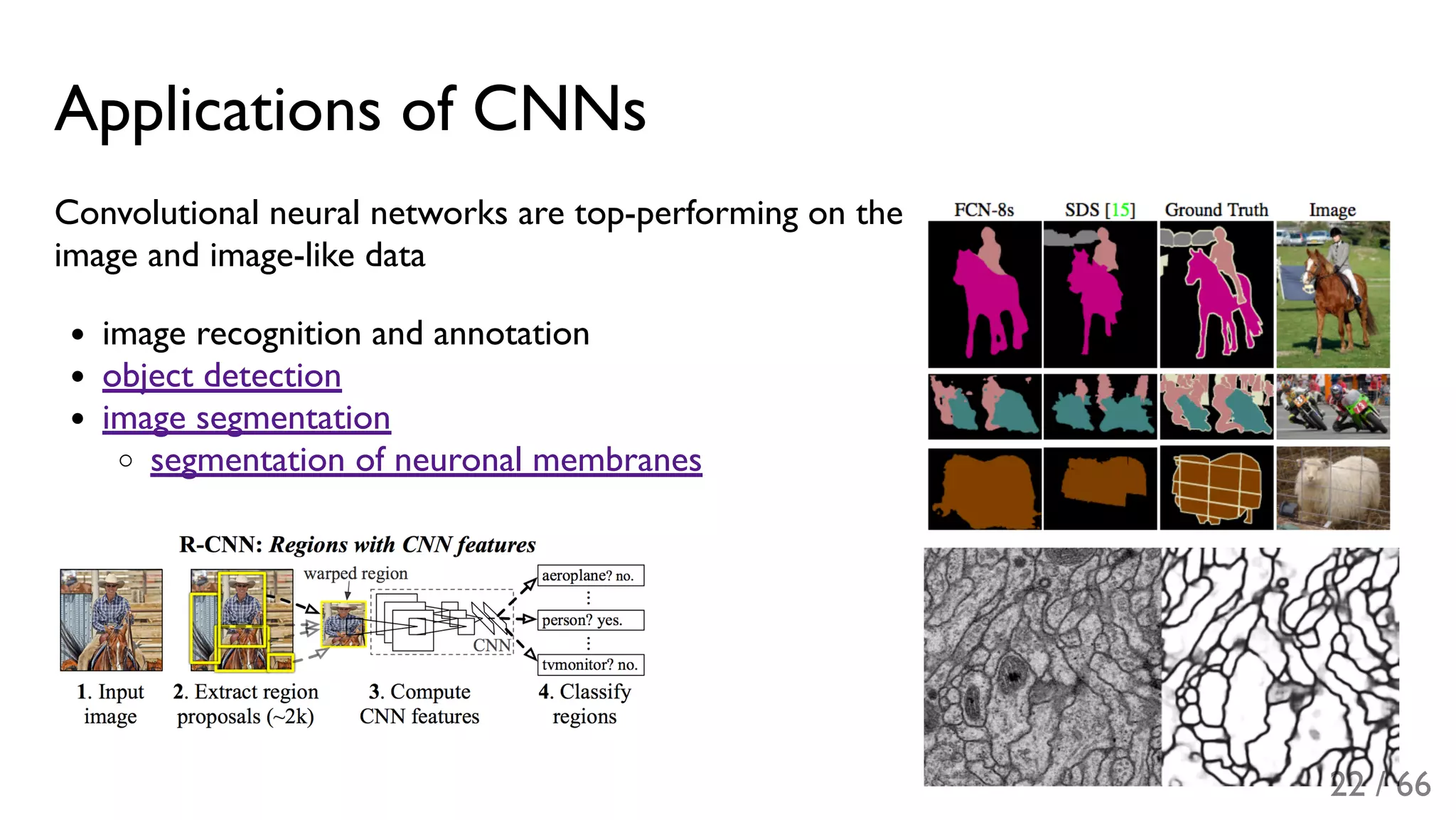

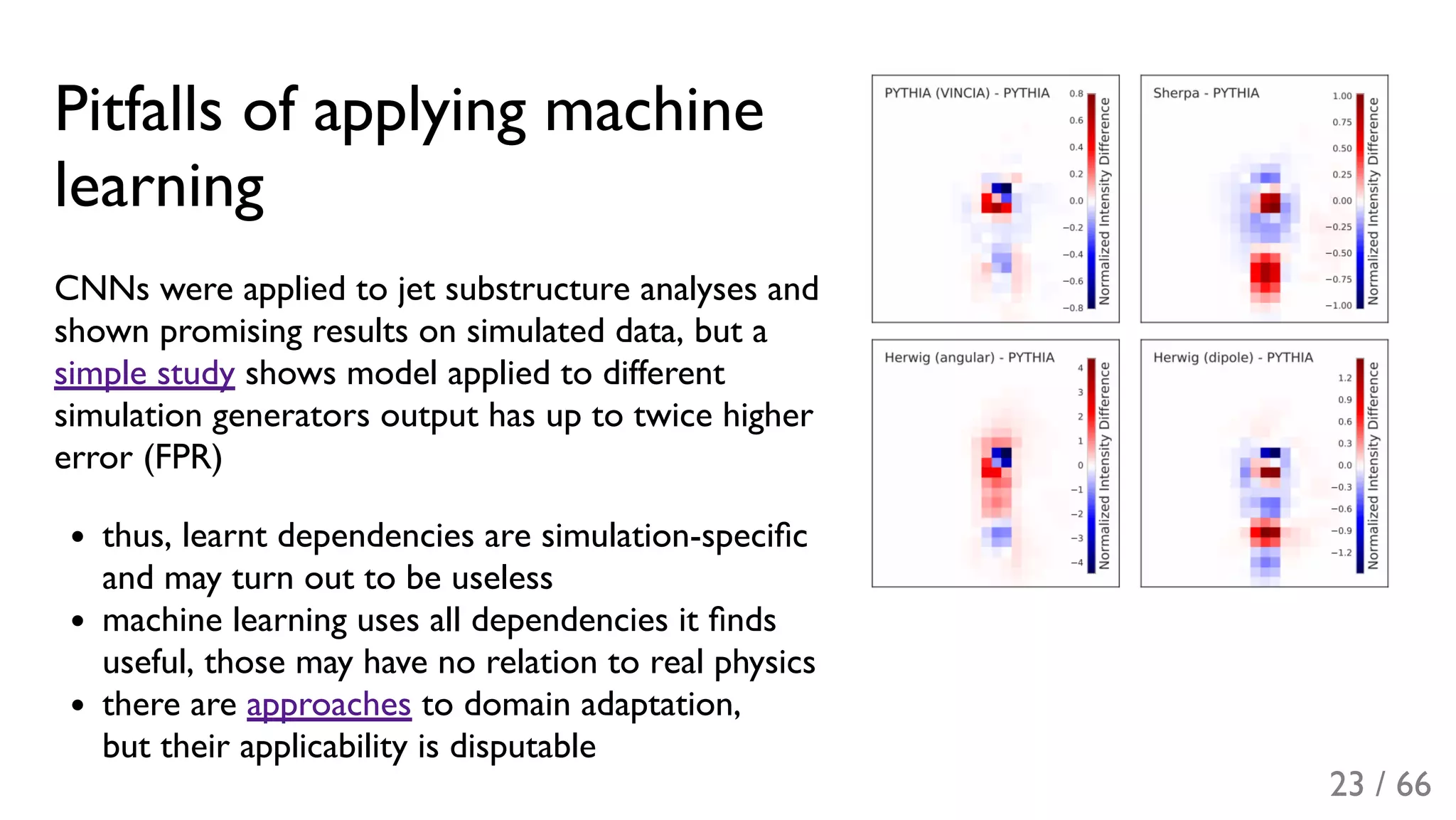

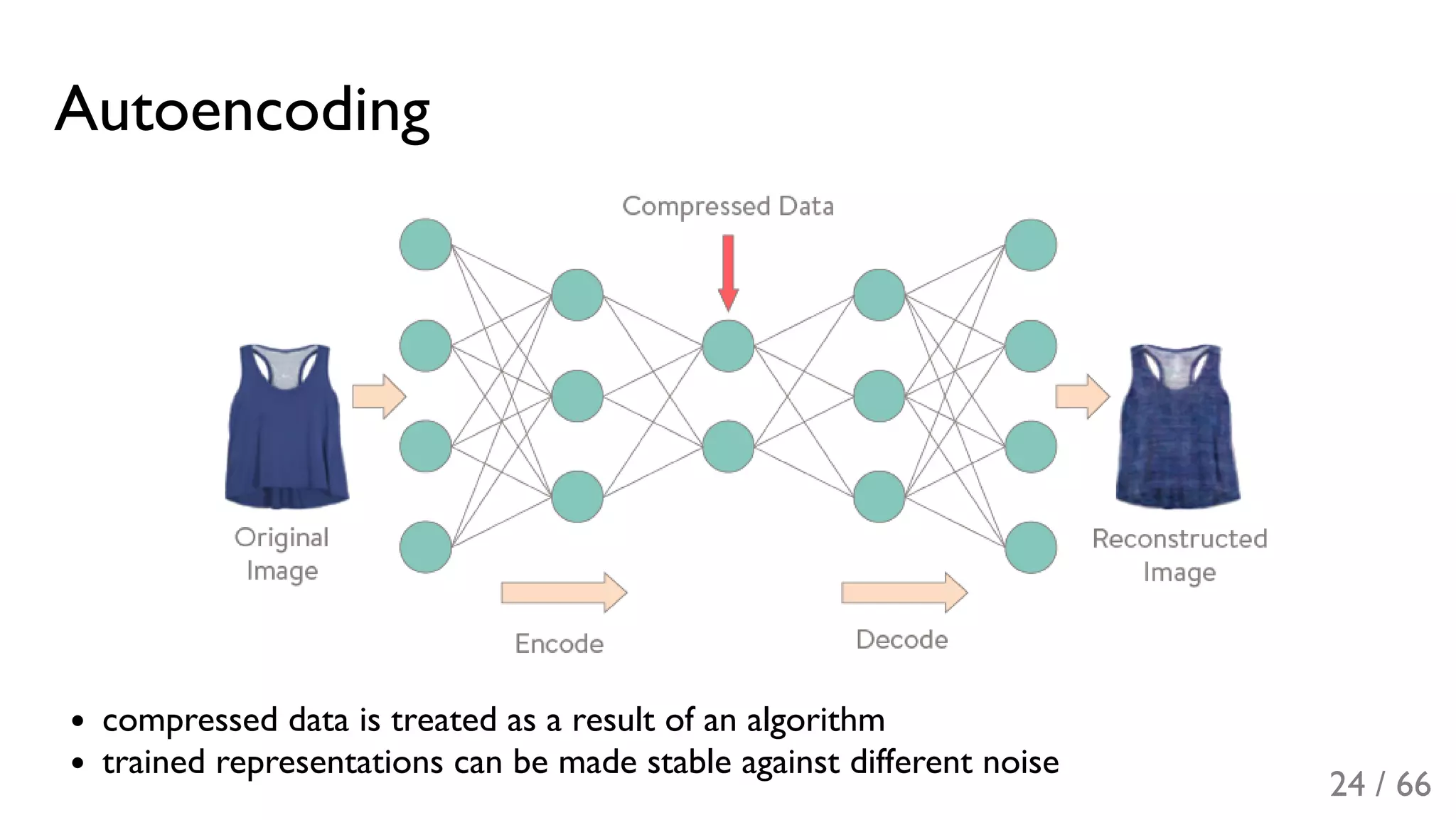

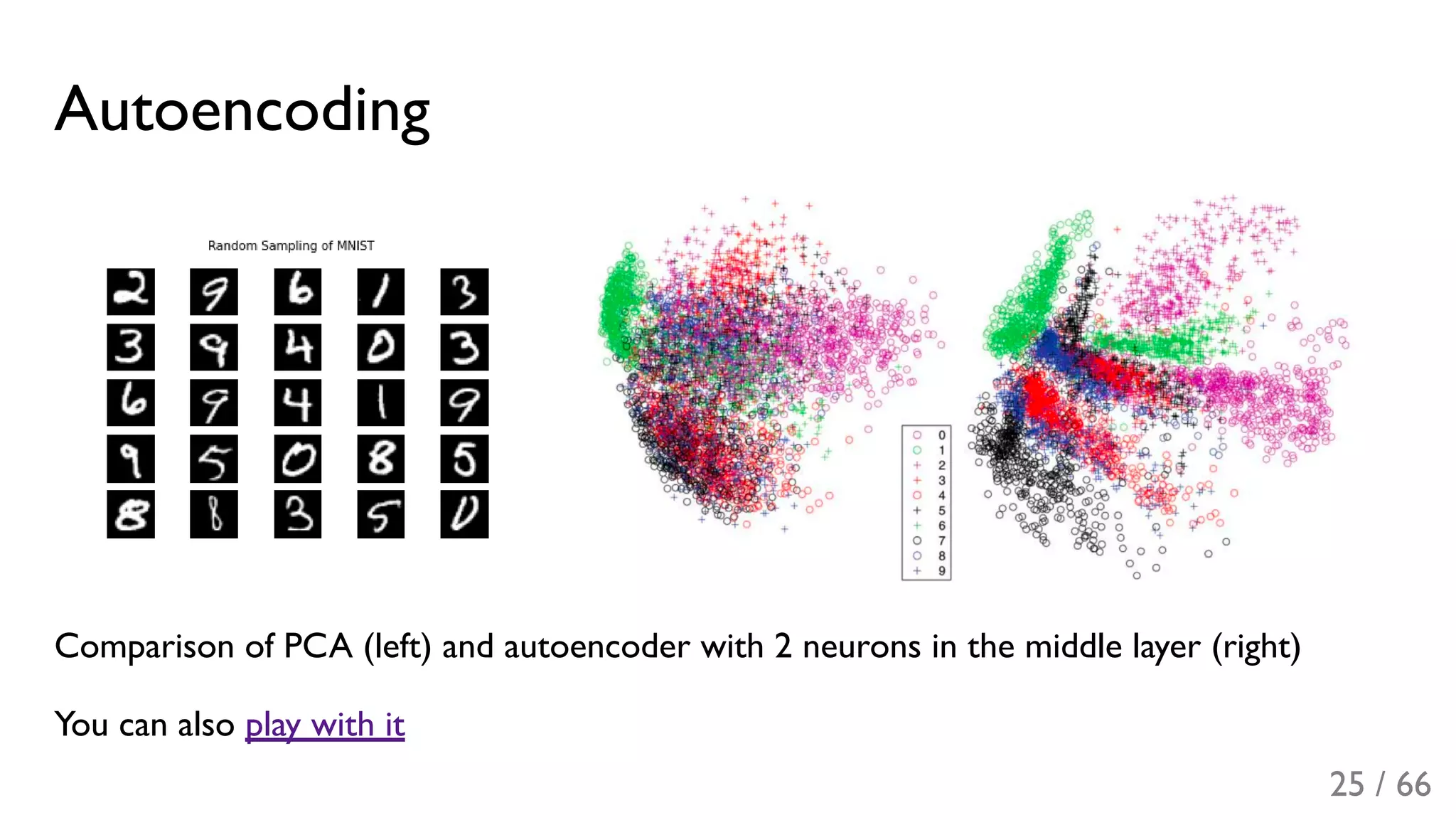

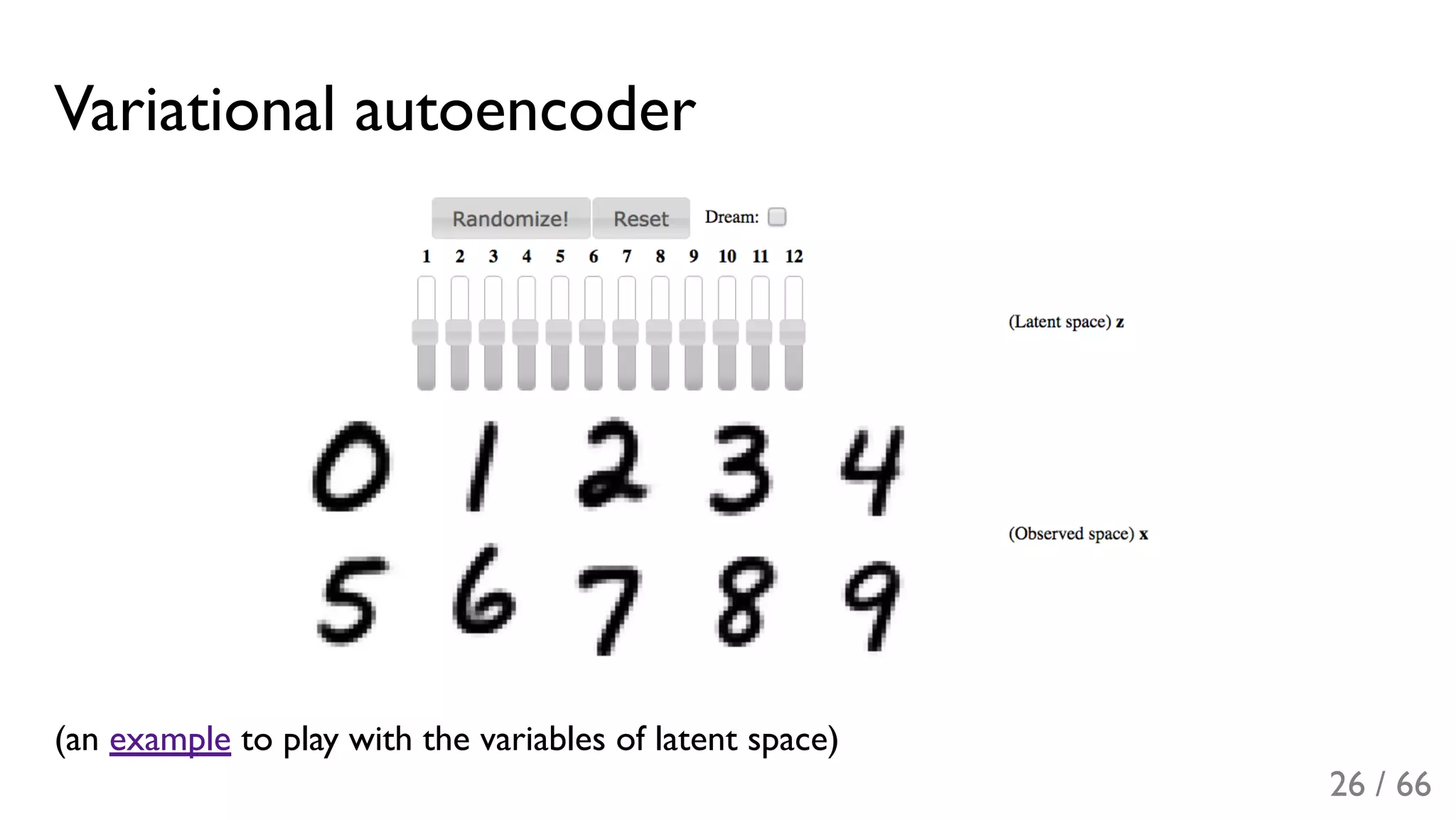

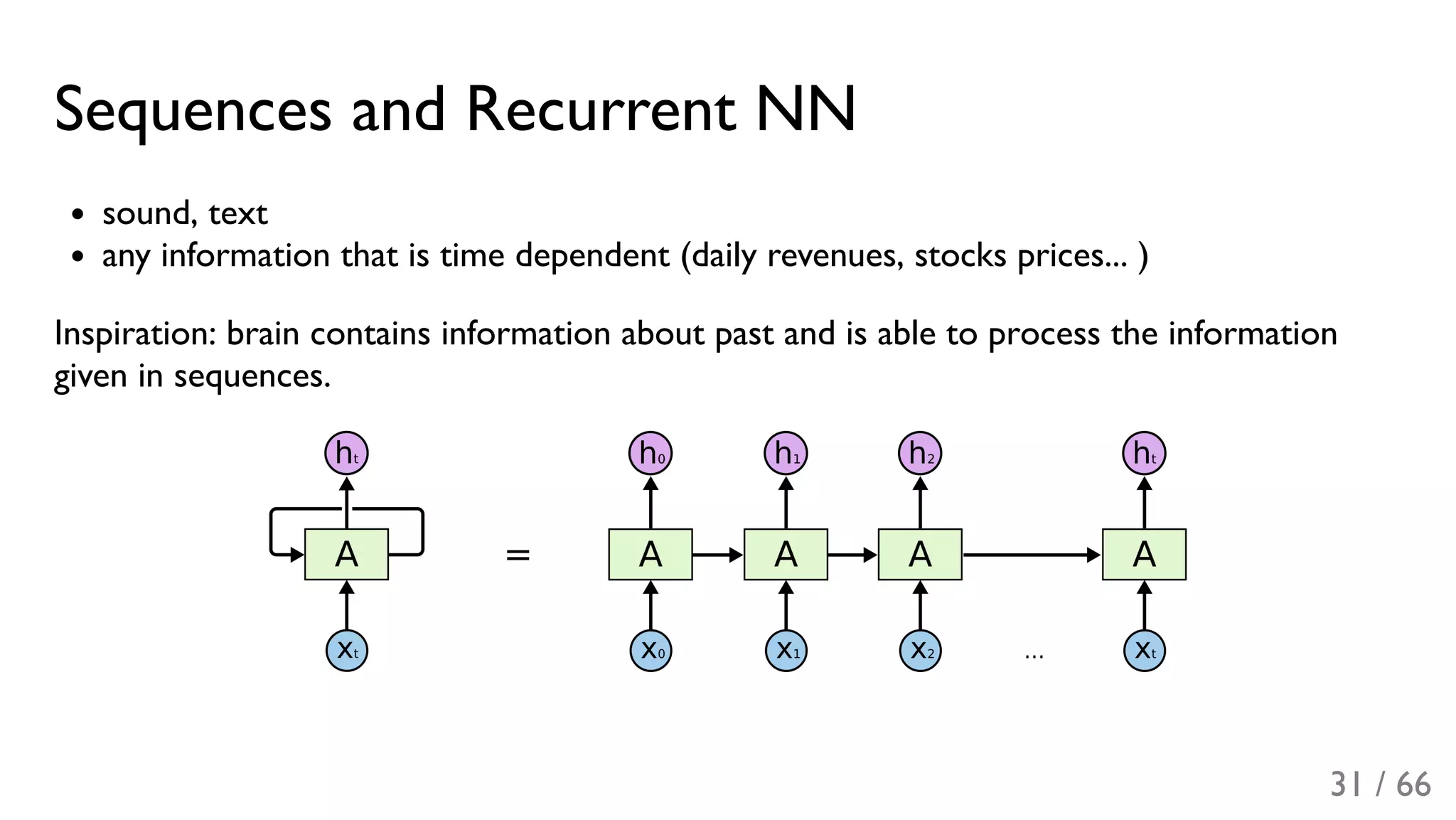

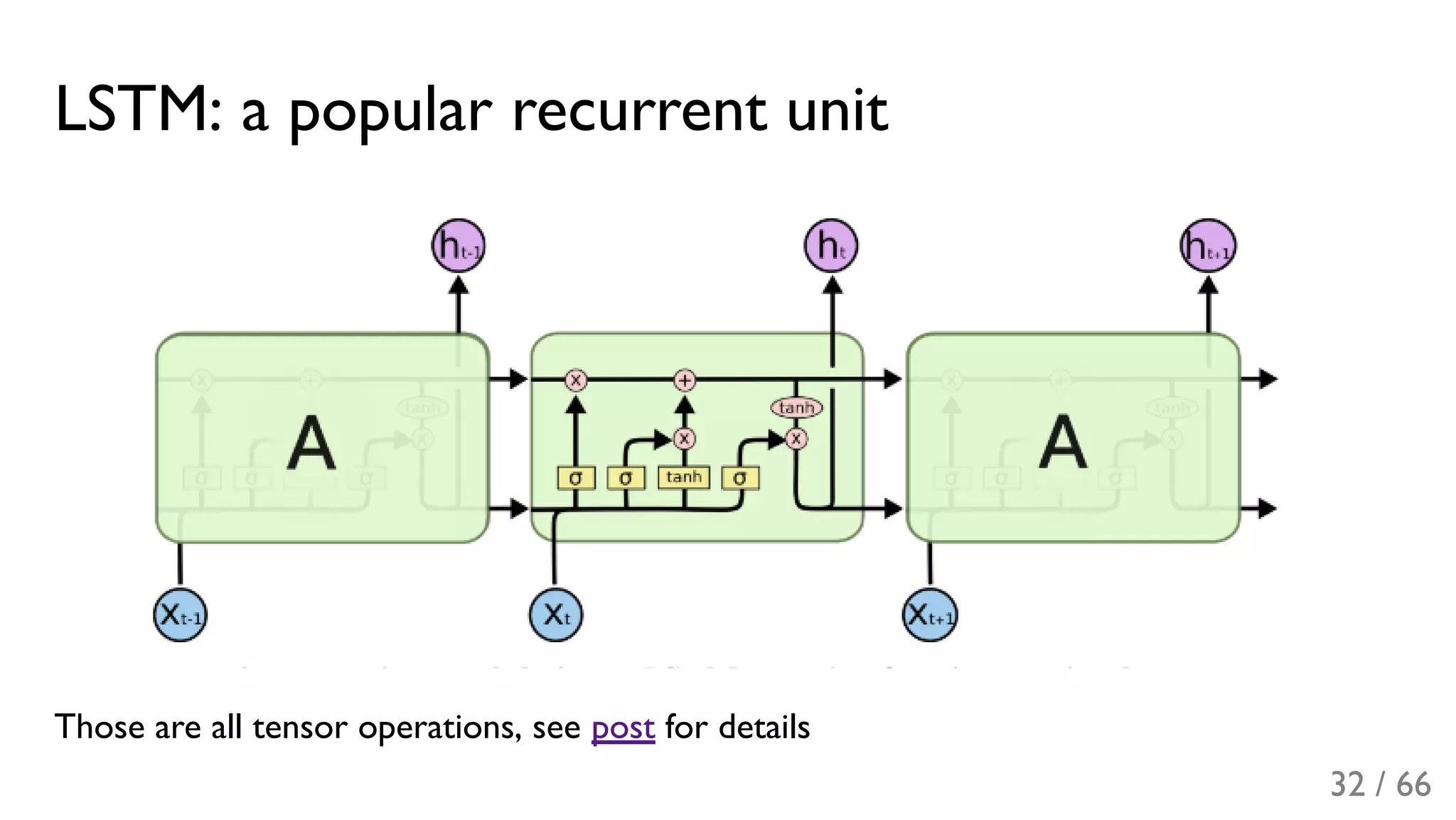

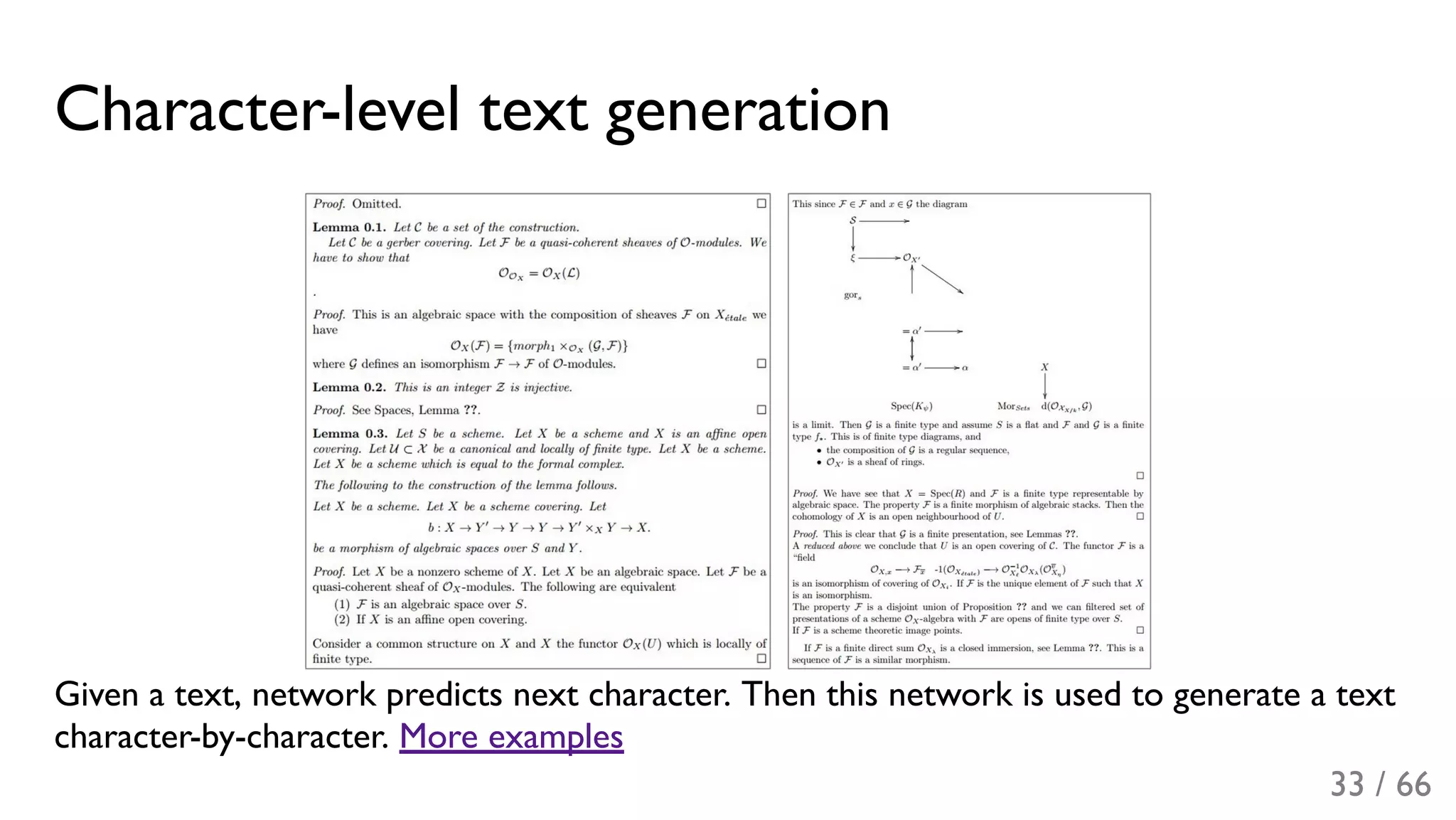

The document covers advanced topics in machine learning as presented in lectures by Alex Rogozhnikov and Tatiana Likhomanenko, addressing techniques like linear models, convolutional neural networks, and recurrent neural networks. It emphasizes the importance of feature engineering, hyperparameter optimization, and the application of deep learning methods in various domains, including image and text analysis. The lectures highlight the growing complexity of problems in science and industry and the evolving capabilities of machine learning to provide insights from large datasets.

![Recapitulation: factorization models (slide 2 / 66)](https://image.slidesharecdn.com/4-lecture-graddays-170420165455/75/Machine-learning-in-science-and-industry-day-4-3-2048.jpg)

Recommender systems:

$$\hat{R}_{u,i} = \langle U_{u,\cdot}, V_{\cdot,i} \rangle$$

$$\sum_{\text{known } R_{u,i}} \left[ \hat{R}_{u,i} - R_{u,i} \right]^2 \to \min$$

Learning representations: learning through the prism of interaction between entities.
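The factorization objective on this slide can be illustrated with a small NumPy sketch. It is not the lecturers' code: the function names `factorize` and `masked_loss`, the toy rating matrix, the mask of known entries, and the choice of plain gradient descent with the given learning rate are all assumptions made for illustration. It fits a user matrix `U` and an item matrix `V` so that their product matches the ratings only where they are known.

```python
import numpy as np

def masked_loss(R, known, U, V):
    """Sum of squared errors over the known entries only."""
    return float(np.sum(known * (U @ V - R) ** 2))

def factorize(R, known, rank=1, lr=0.01, epochs=3000, seed=0):
    """Fit R_hat = U @ V by gradient descent on the masked squared error.

    R      : (n_users, n_items) rating matrix (values at unknown cells are ignored)
    known  : same shape, 1.0 where the rating is observed, 0.0 elsewhere
    """
    rng = np.random.default_rng(seed)
    n_users, n_items = R.shape
    U = 0.1 * rng.standard_normal((n_users, rank))   # user representations
    V = 0.1 * rng.standard_normal((rank, n_items))   # item representations
    for _ in range(epochs):
        err = known * (U @ V - R)    # residuals, zeroed where R is unknown
        grad_U = err @ V.T           # gradient w.r.t. U (factor 2 folded into lr)
        grad_V = U.T @ err           # gradient w.r.t. V
        U -= lr * grad_U
        V -= lr * grad_V
    return U, V

# Toy data (hypothetical): a rank-1 rating matrix with two hidden entries.
R = np.outer([1.0, 2.0, 3.0, 4.0], [1.0, 0.5, 2.0])
known = np.ones_like(R)
known[0, 2] = 0.0
known[3, 1] = 0.0

U, V = factorize(R, known, rank=1)
print("loss on known entries:", masked_loss(R, known, U, V))
print("prediction for hidden entry (0, 2):", (U @ V)[0, 2])
```

The rows of `U` and the columns of `V` are the learned representations: each user and each item is described only through the interactions recorded in `R`. In practice a regularization term and stochastic updates are usually added; they are left out here to keep the sketch short.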

![Code generation

Network trained on the linux source:

/*

* Increment the size file of the new incorrect UI_FILTER group information

* of the size generatively.

*/

static int indicate_policy(void)

{

int error;

if (fd == MARN_EPT) {

/*

* The kernel blank will coeld it to userspace.

*/

if (ss->segment < mem_total)

unblock_graph_and_set_blocked();

else

ret = 1;

goto bail;

}

segaddr = in_SB(in.addr);

selector = seg / 16;

setup_works = true;

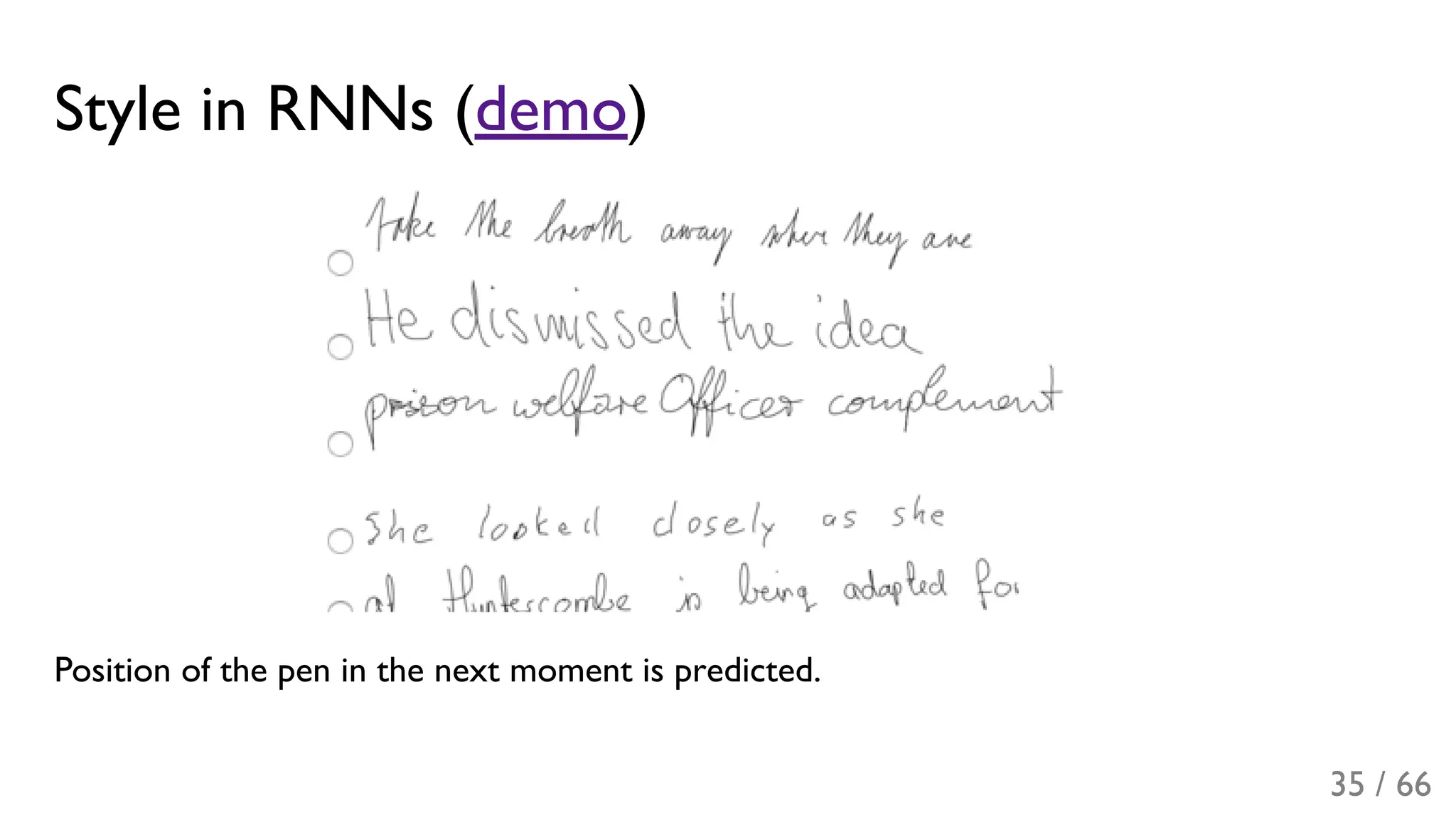

for (i = 0; i < blocks; i++) {

seq = buf[i++];

bpf = bd->bd.next + i * search;

if (fd) {

current = blocked;

}

}

rw->name = "Getjbbregs";

bprm_self_clearl(&iv->version);

regs->new = blocks[(BPF_STATS << info->historidac)] | PFMR_CLOBATHINC_SECONDS << 12;

return segtable;

}

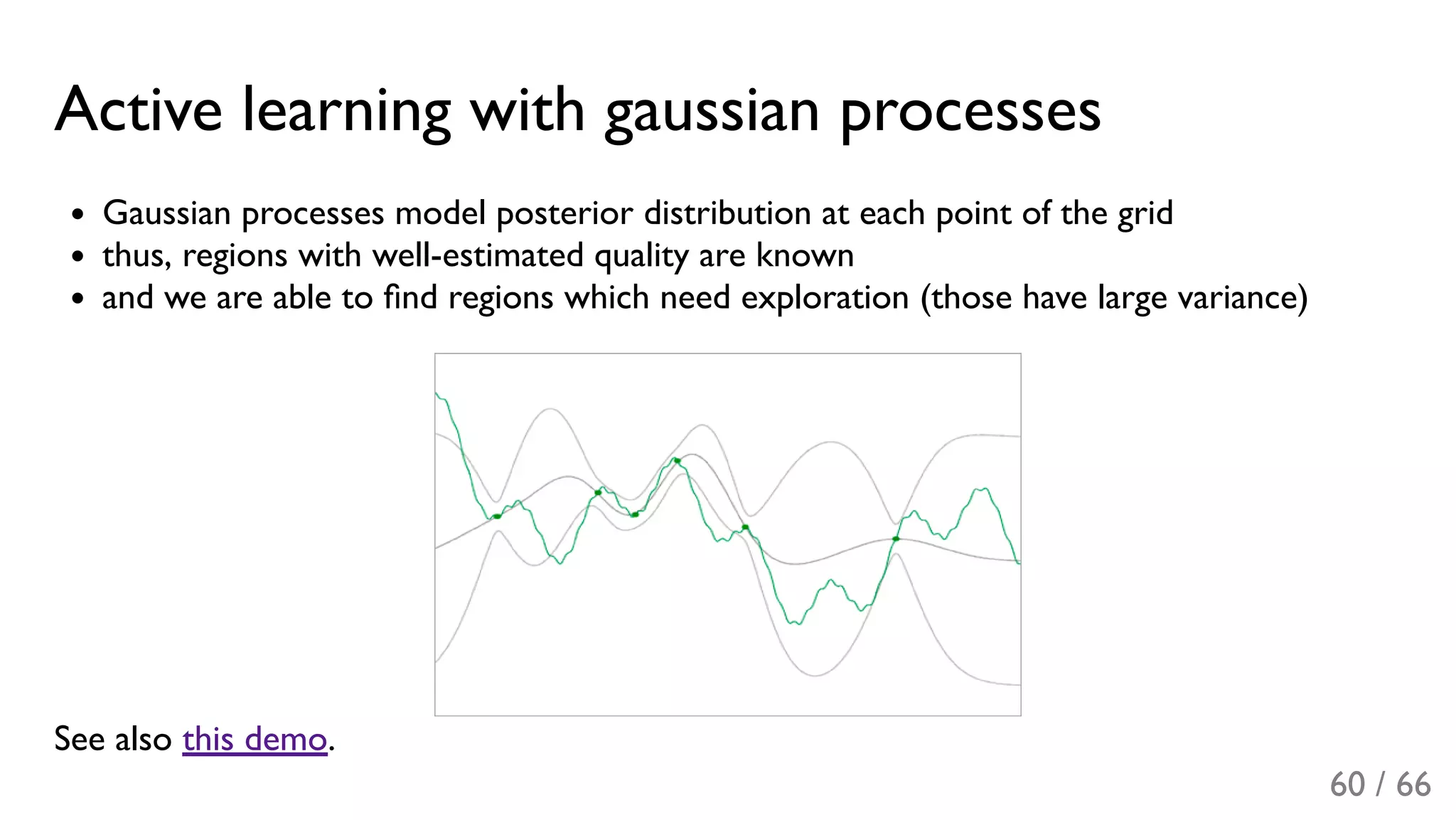

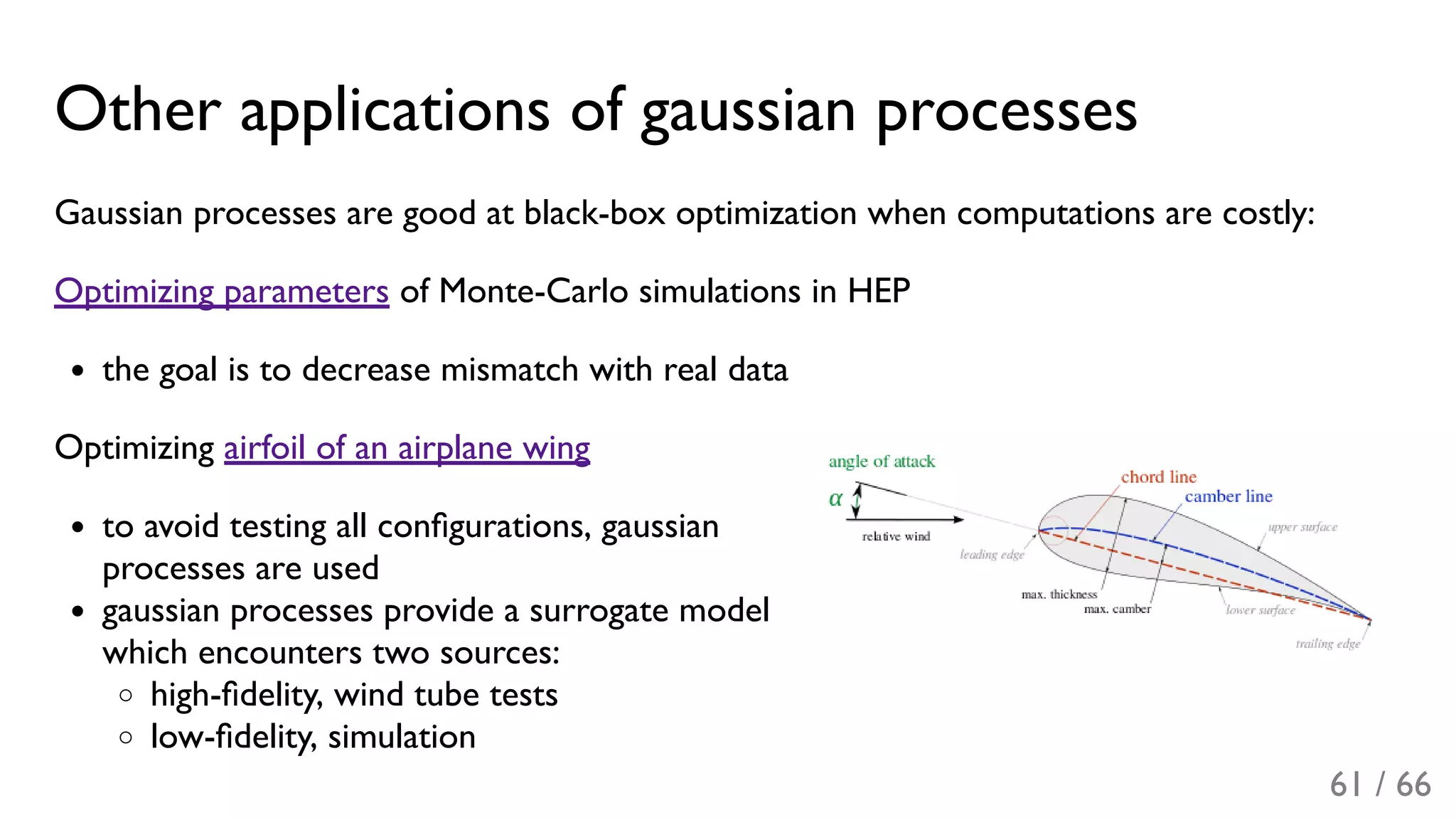

34 / 66](https://image.slidesharecdn.com/4-lecture-graddays-170420165455/75/Machine-learning-in-science-and-industry-day-4-35-2048.jpg)
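Text like the sample on this slide is produced one character at a time: the model gives a distribution over the next character given what has been written so far, a character is sampled from it, appended, and the loop repeats. The sketch below shows only that sampling loop. As a deliberate simplification it replaces the neural network with a count-based n-gram model, so the names `fit_char_model` and `sample`, the context length `order`, and the toy corpus are all assumptions, not anything taken from the lecture.

```python
import random
from collections import Counter, defaultdict

def fit_char_model(text, order=3):
    """Count which character follows each context of `order` characters."""
    counts = defaultdict(Counter)
    for i in range(len(text) - order):
        counts[text[i:i + order]][text[i + order]] += 1
    return counts

def sample(counts, seed_text, length, order=3, rng=None):
    """Extend `seed_text` by sampling up to `length` characters, one at a time."""
    rng = rng or random.Random(0)
    out = seed_text
    for _ in range(length):
        followers = counts.get(out[-order:])
        if not followers:            # context never seen in training: stop early
            break
        chars, weights = zip(*followers.items())
        out += rng.choices(chars, weights=weights, k=1)[0]
    return out

# Toy corpus (hypothetical); a real model would be trained on far more text.
corpus = "static int f(void)\n{\n\tint error;\n\treturn error;\n}\n" * 3
model = fit_char_model(corpus, order=3)
generated = sample(model, "sta", 40, order=3)
print(generated)
```

A recurrent network plays the role of `counts` here, but conditions on the whole preceding sequence through its hidden state rather than on a fixed window of three characters. That longer memory is why the slide's sample keeps braces, comments, and indentation roughly consistent even though the code itself is meaningless.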