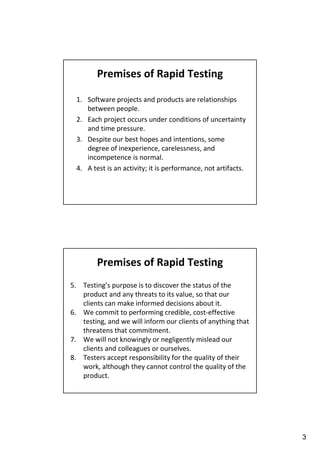

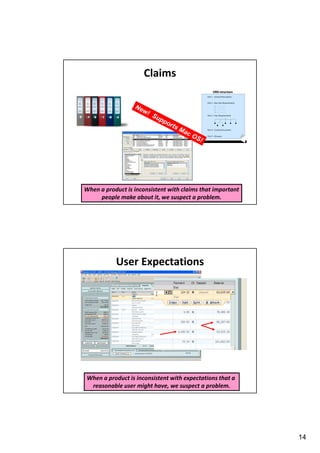

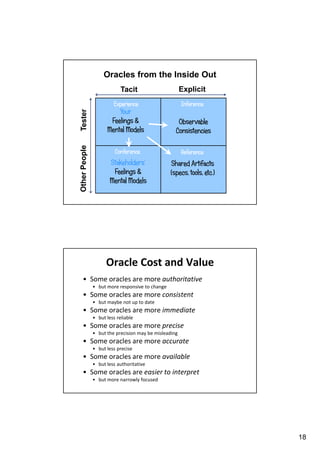

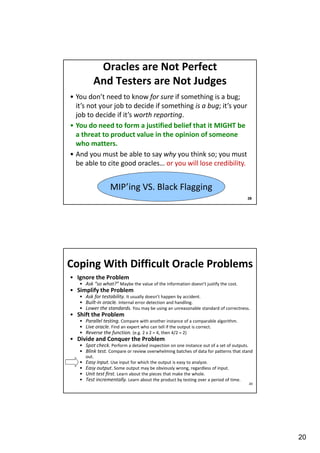

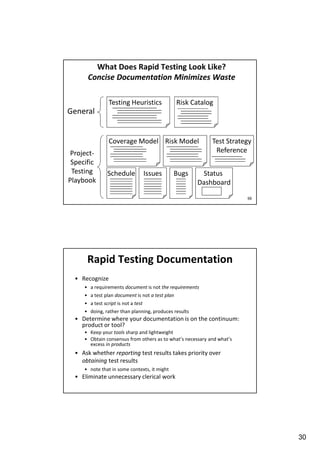

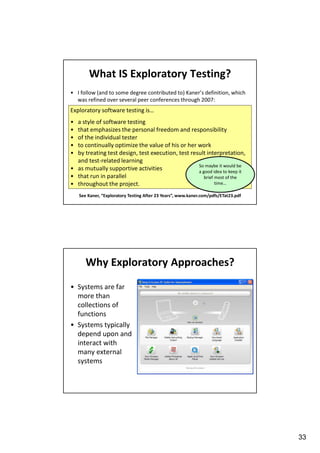

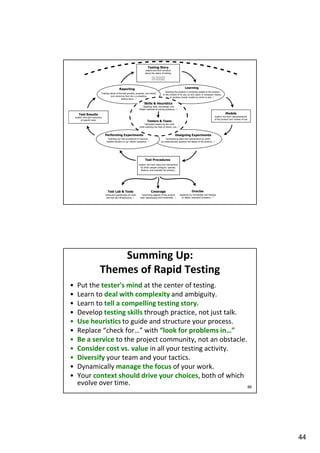

The tutorial 'A Rapid Introduction to Rapid Software Testing', presented by Paul Holland, addresses efficiency in software testing, emphasizing the importance of an adaptive mindset and practical skills. It highlights premises such as the relationship between software projects and people, and the need for rapid testing that identifies important problems under conditions of uncertainty and time pressure. It also discusses heuristics and oracles as tools for recognizing problems during testing.