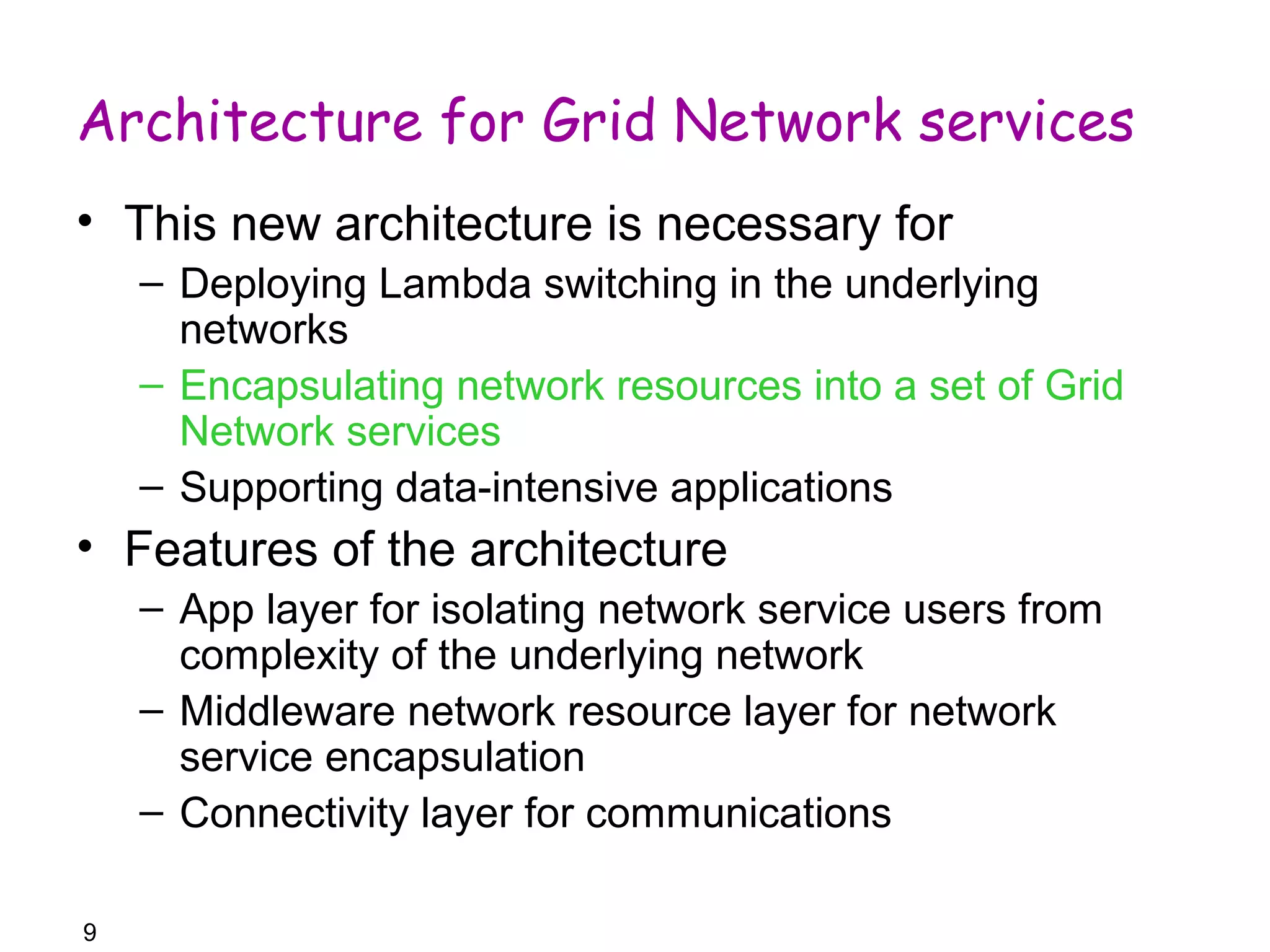

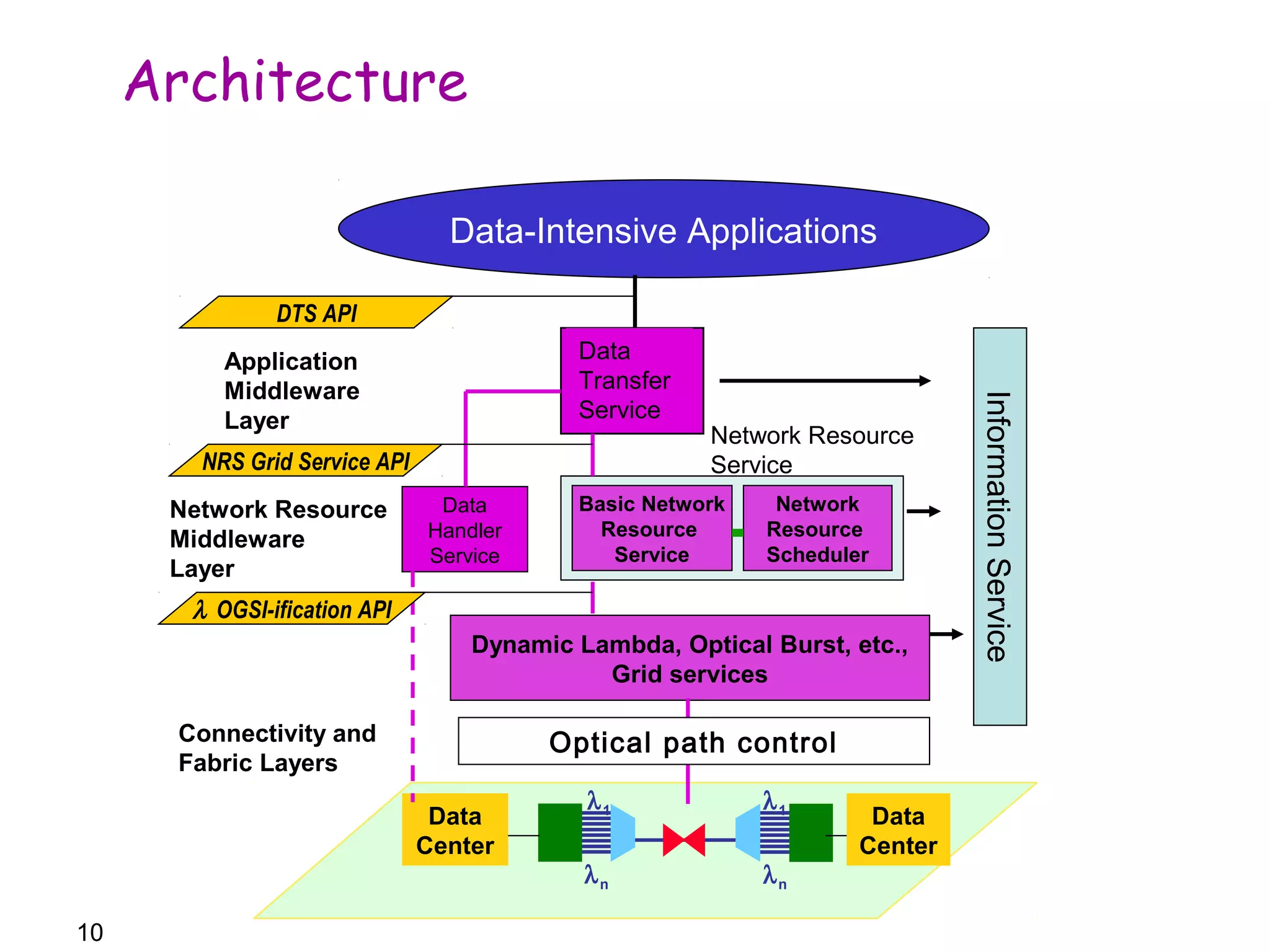

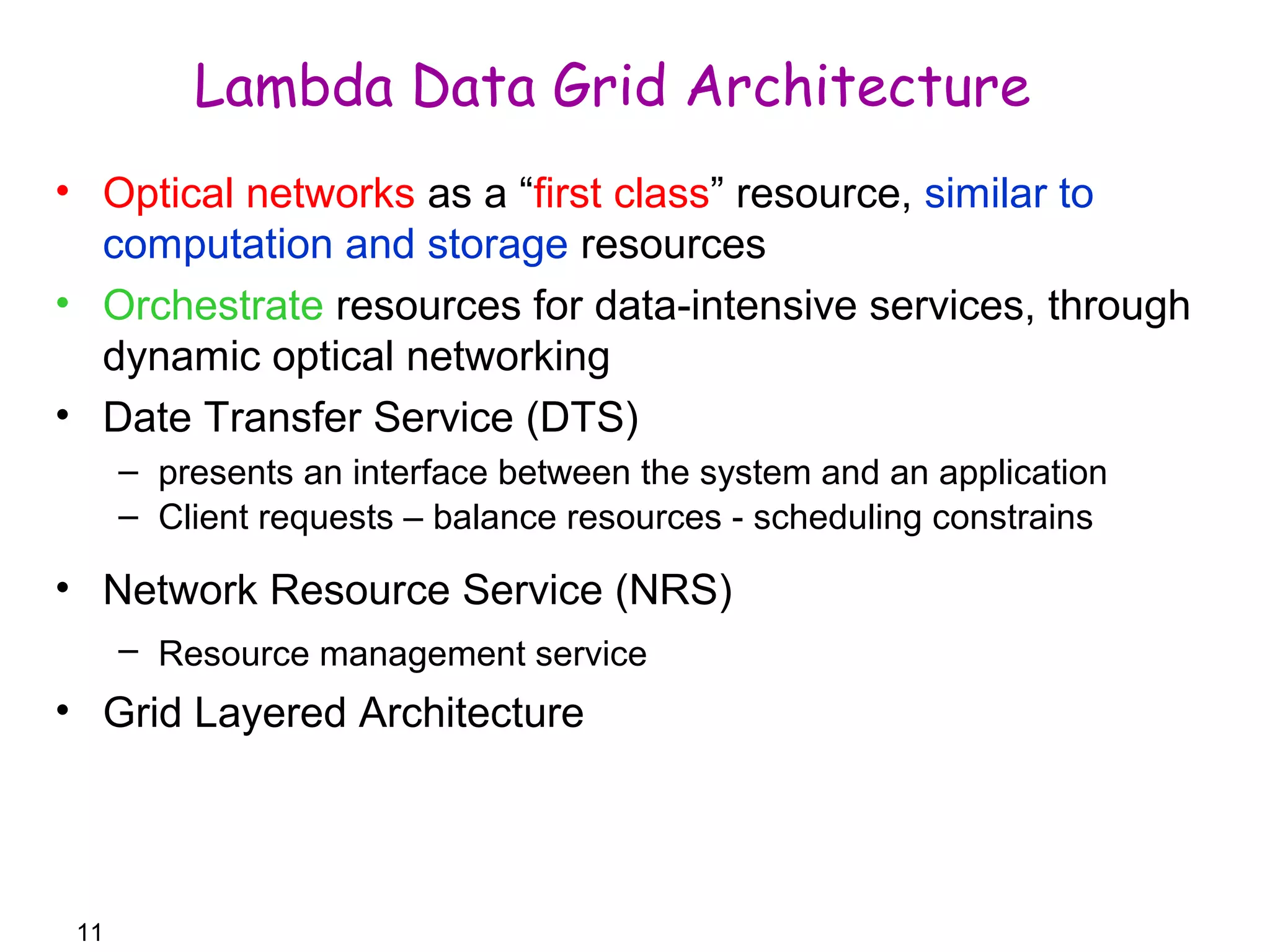

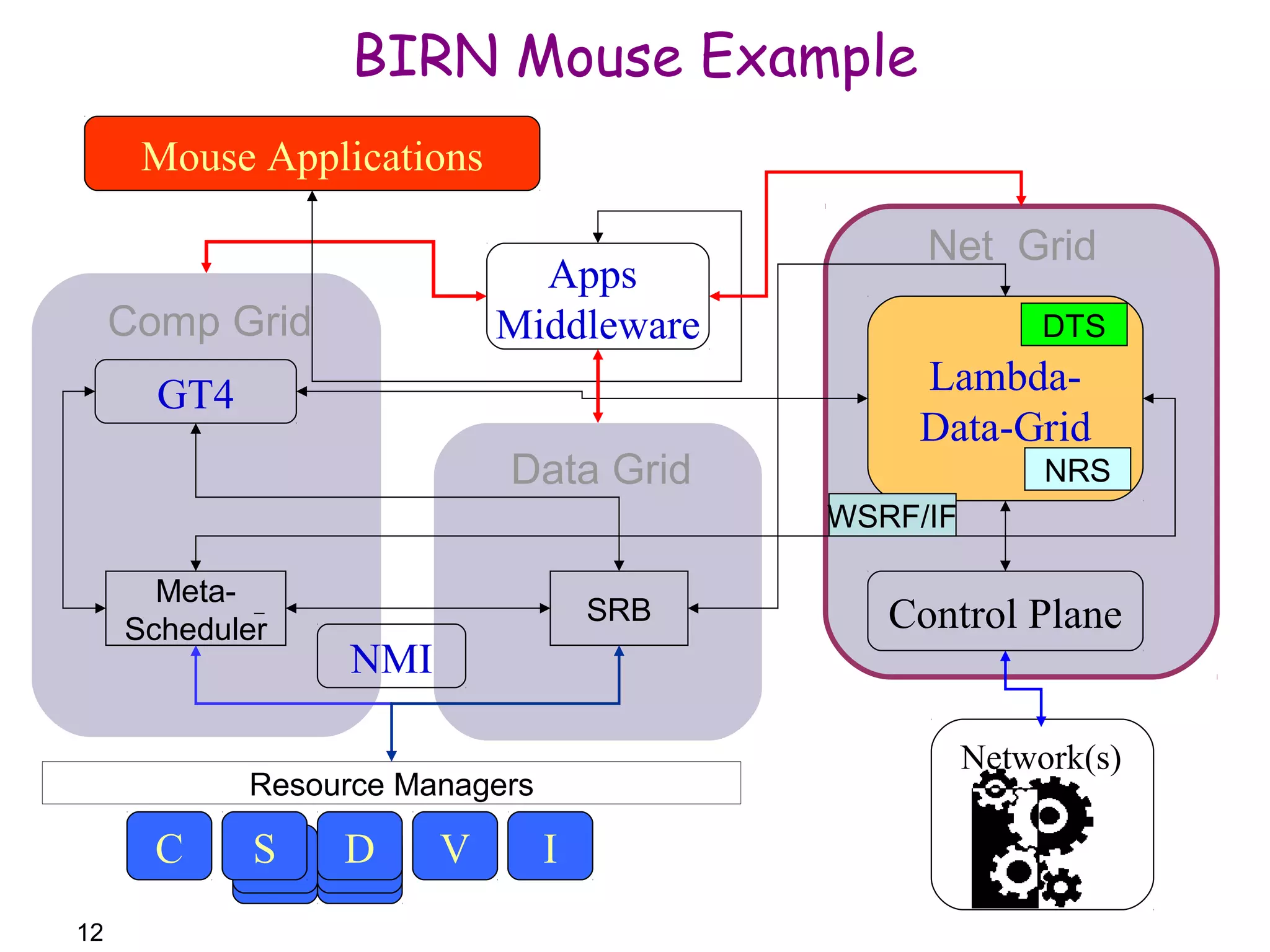

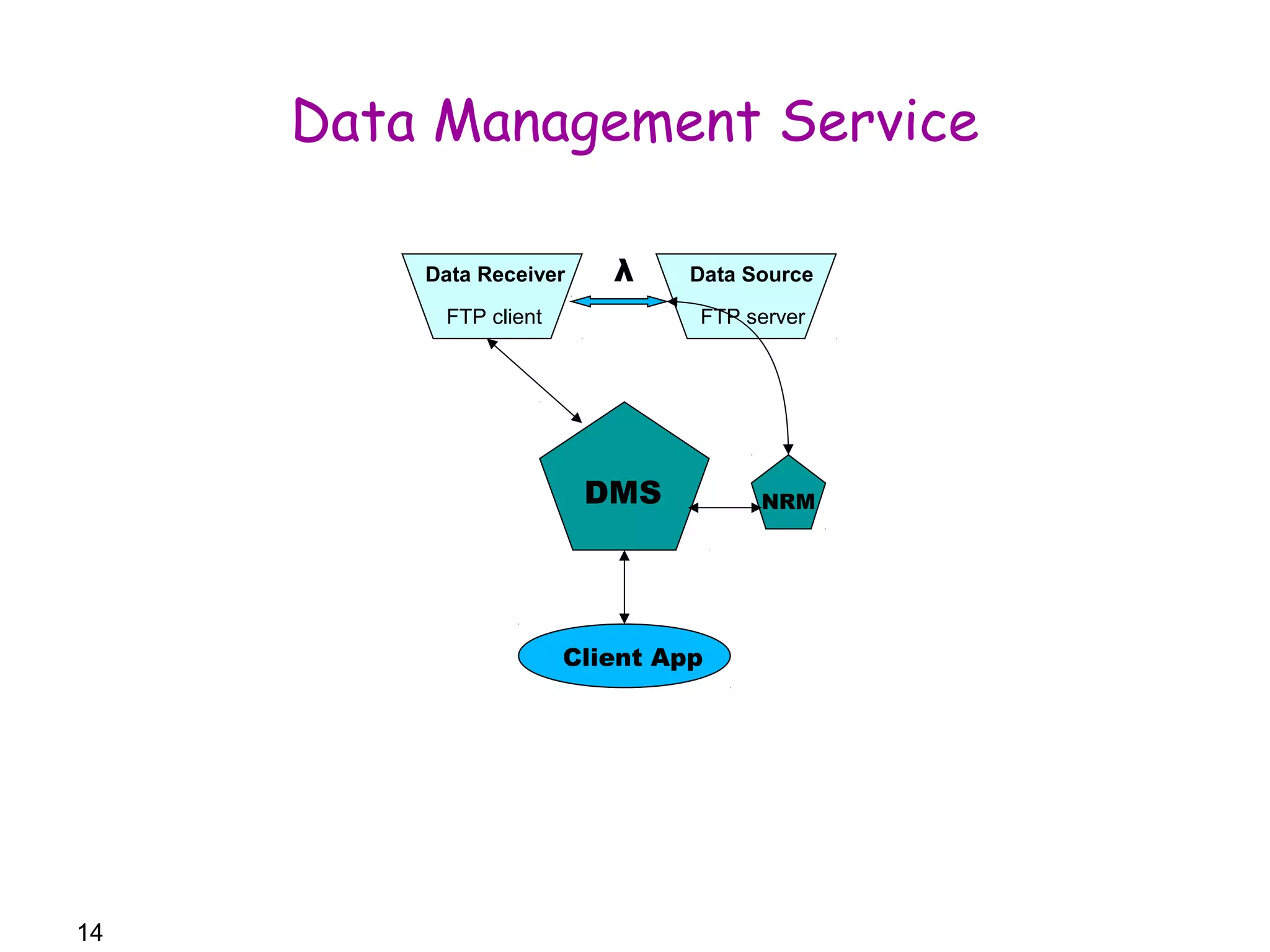

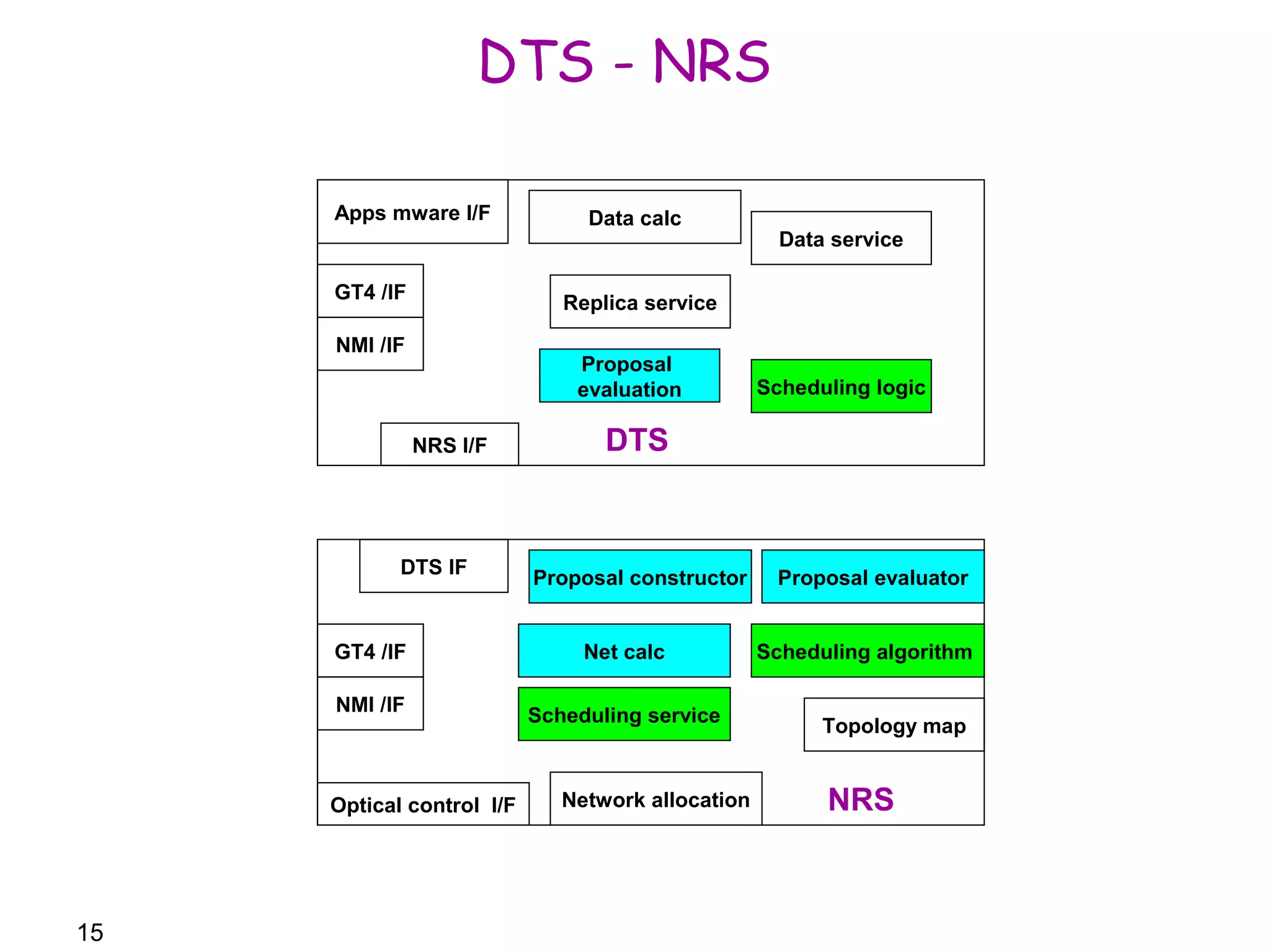

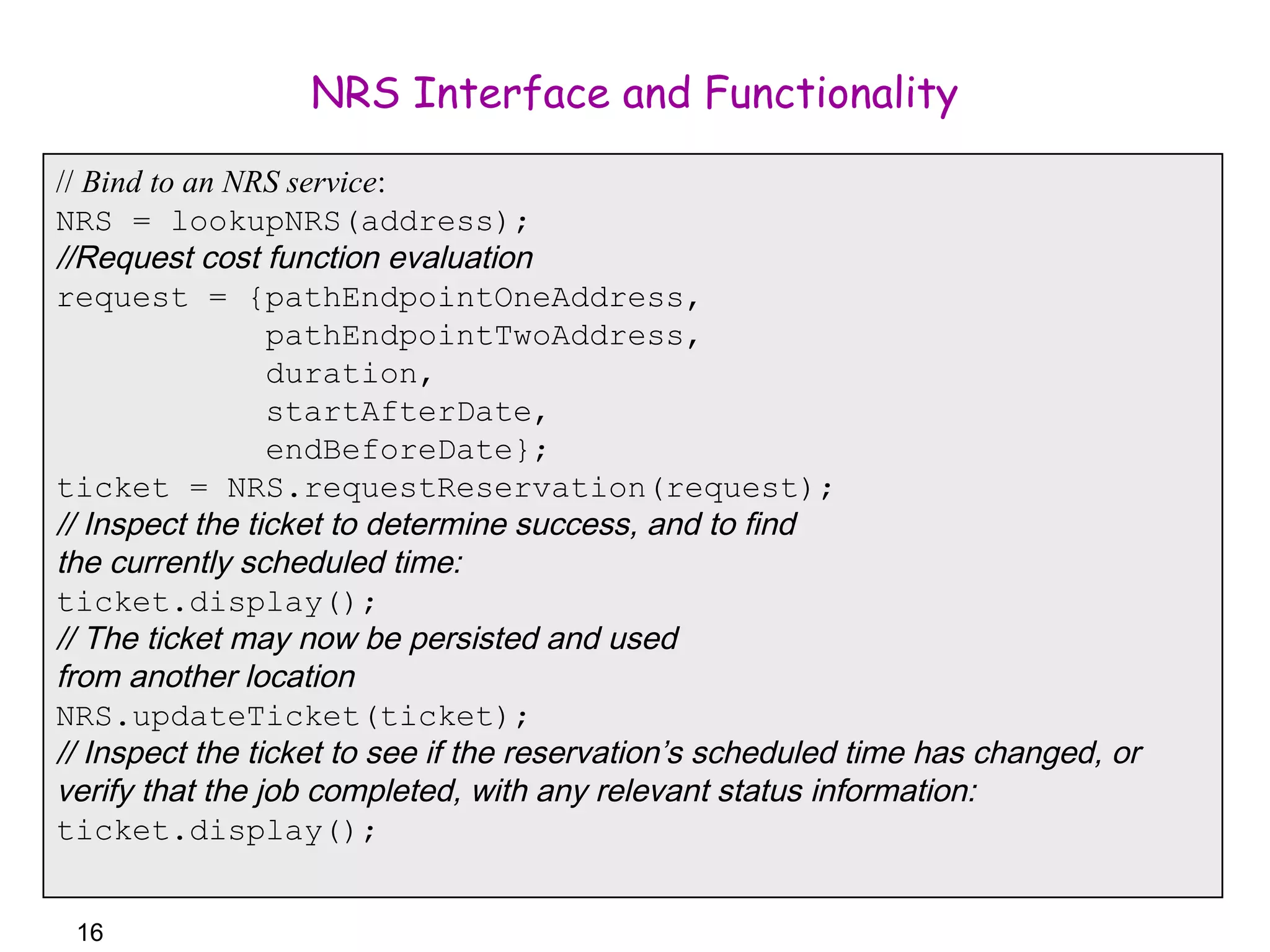

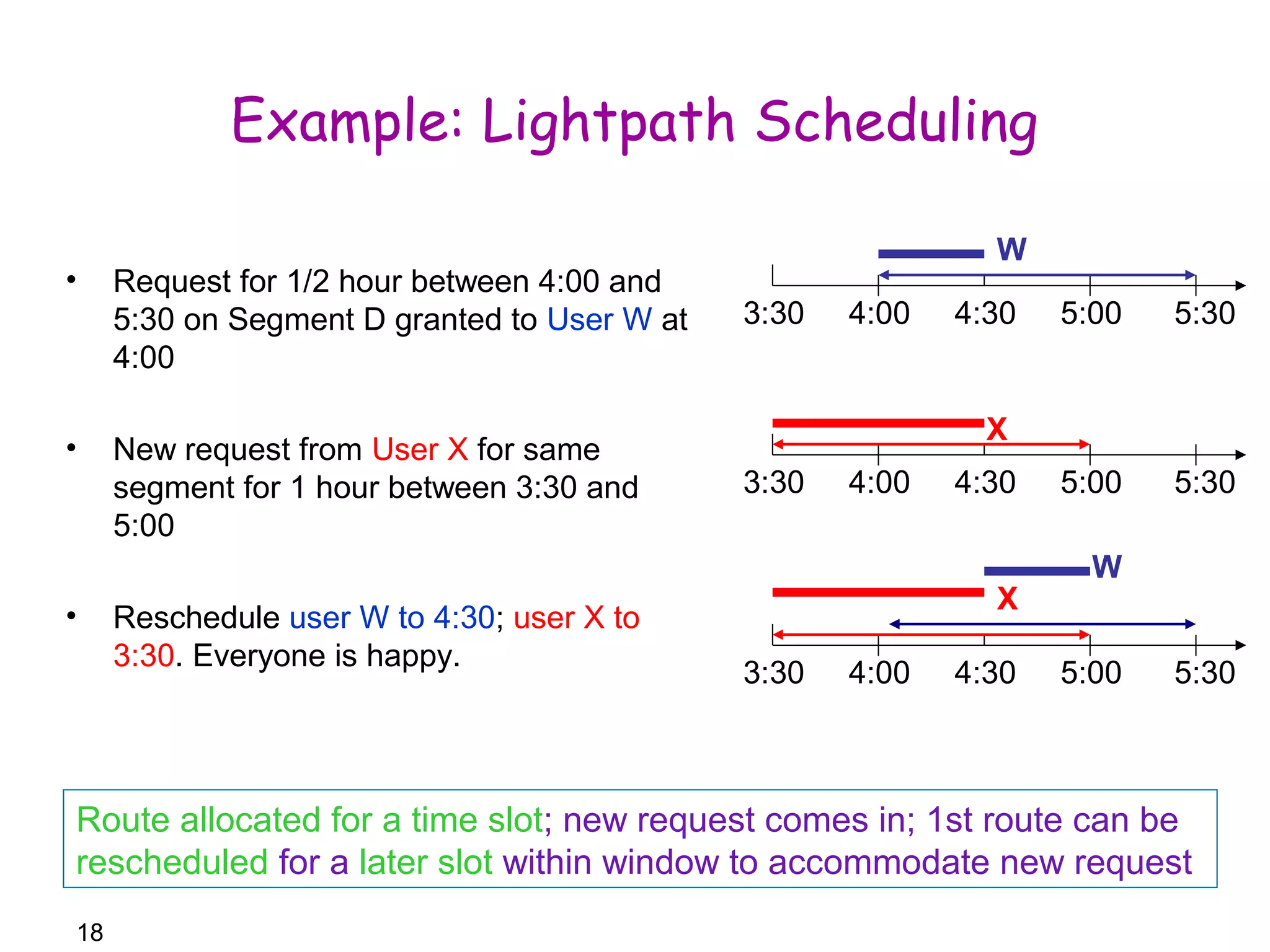

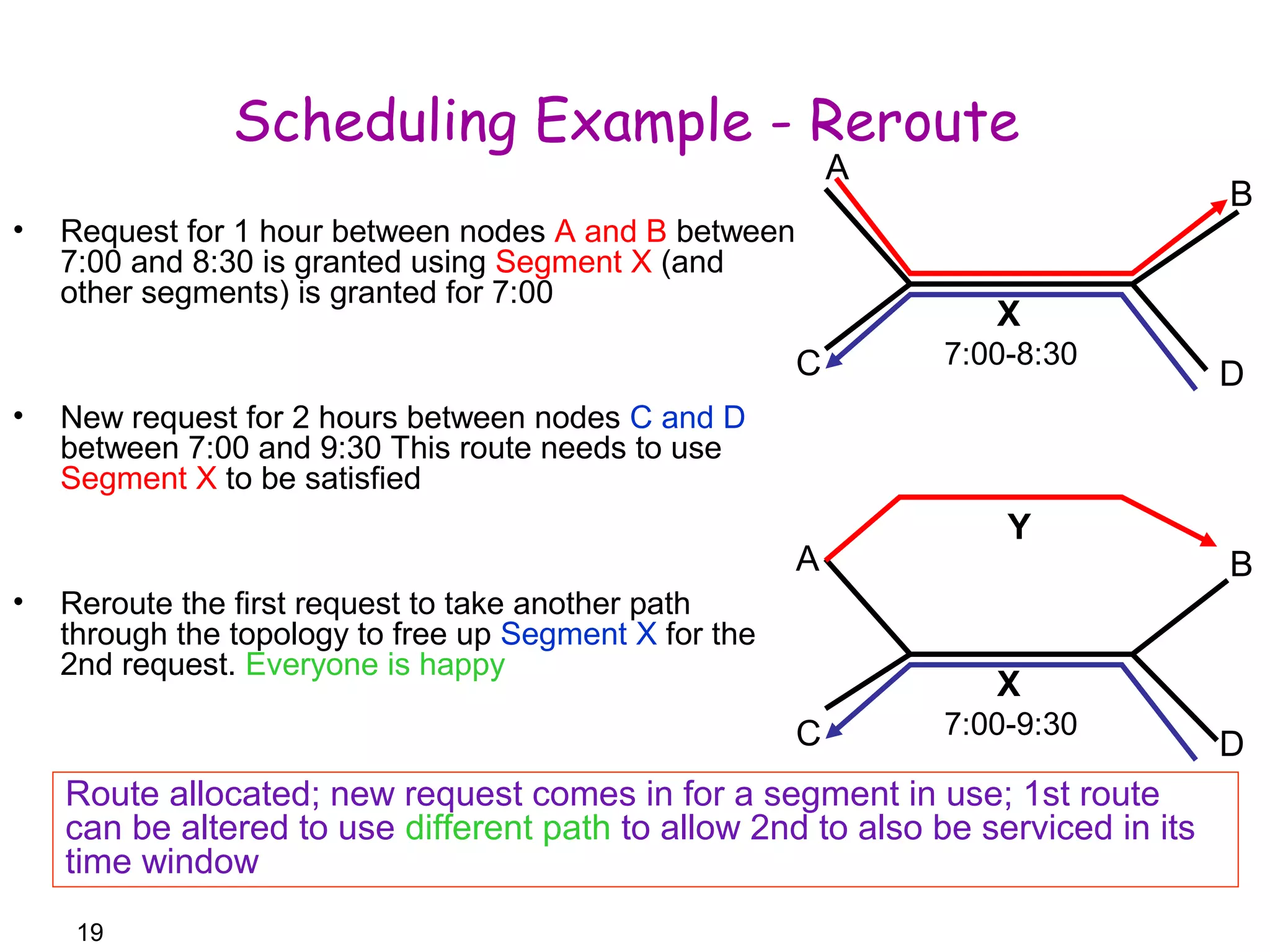

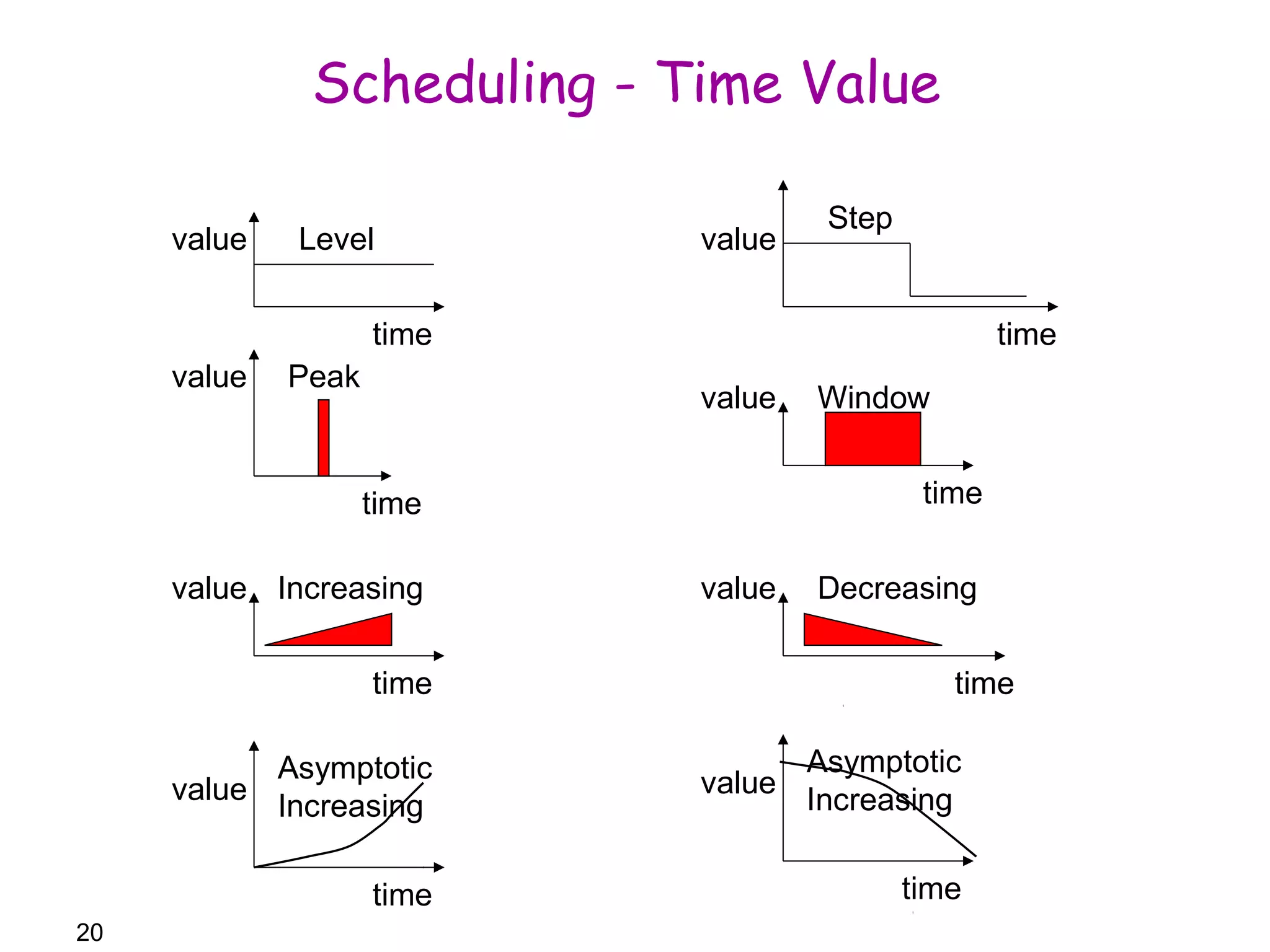

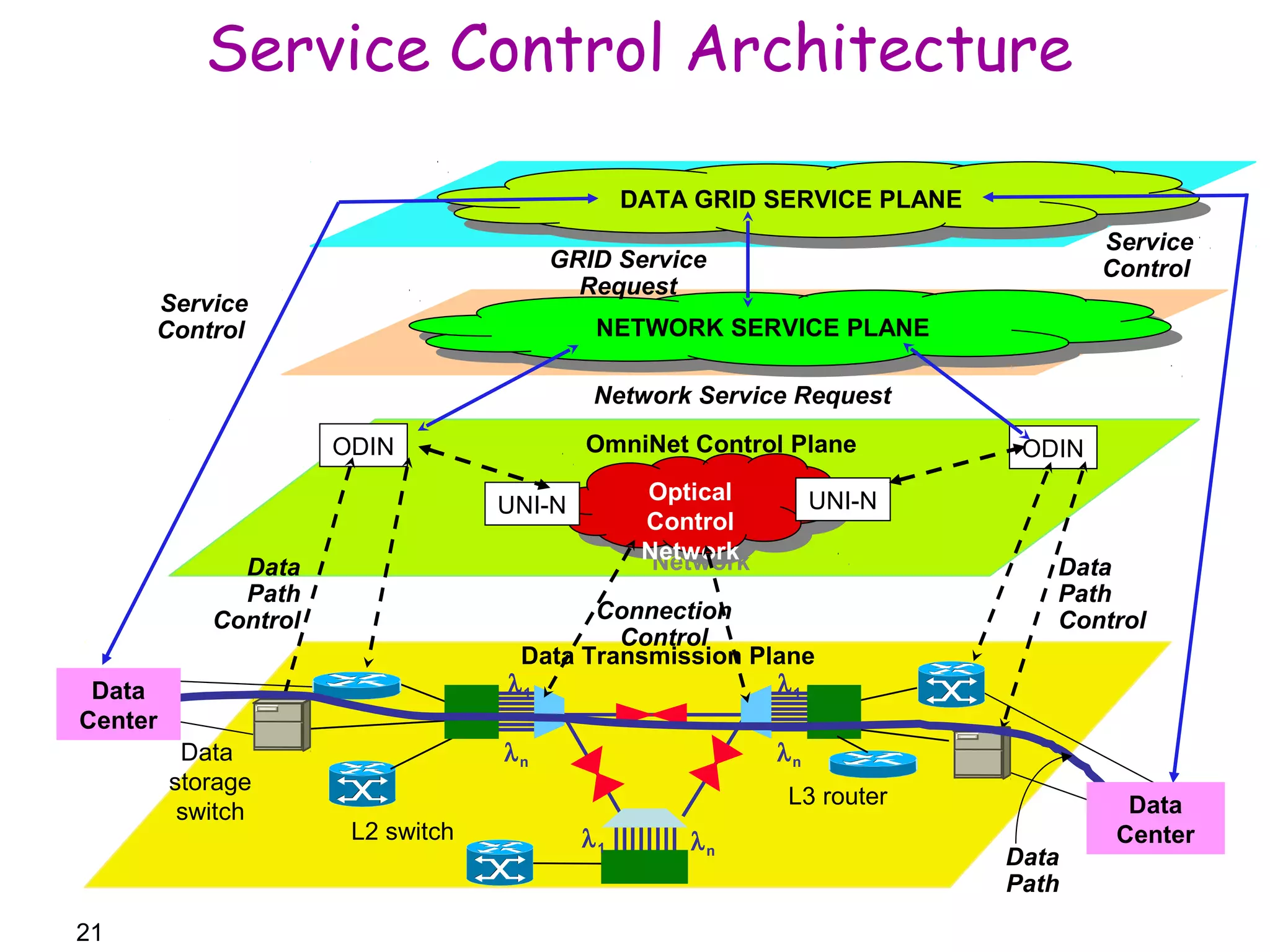

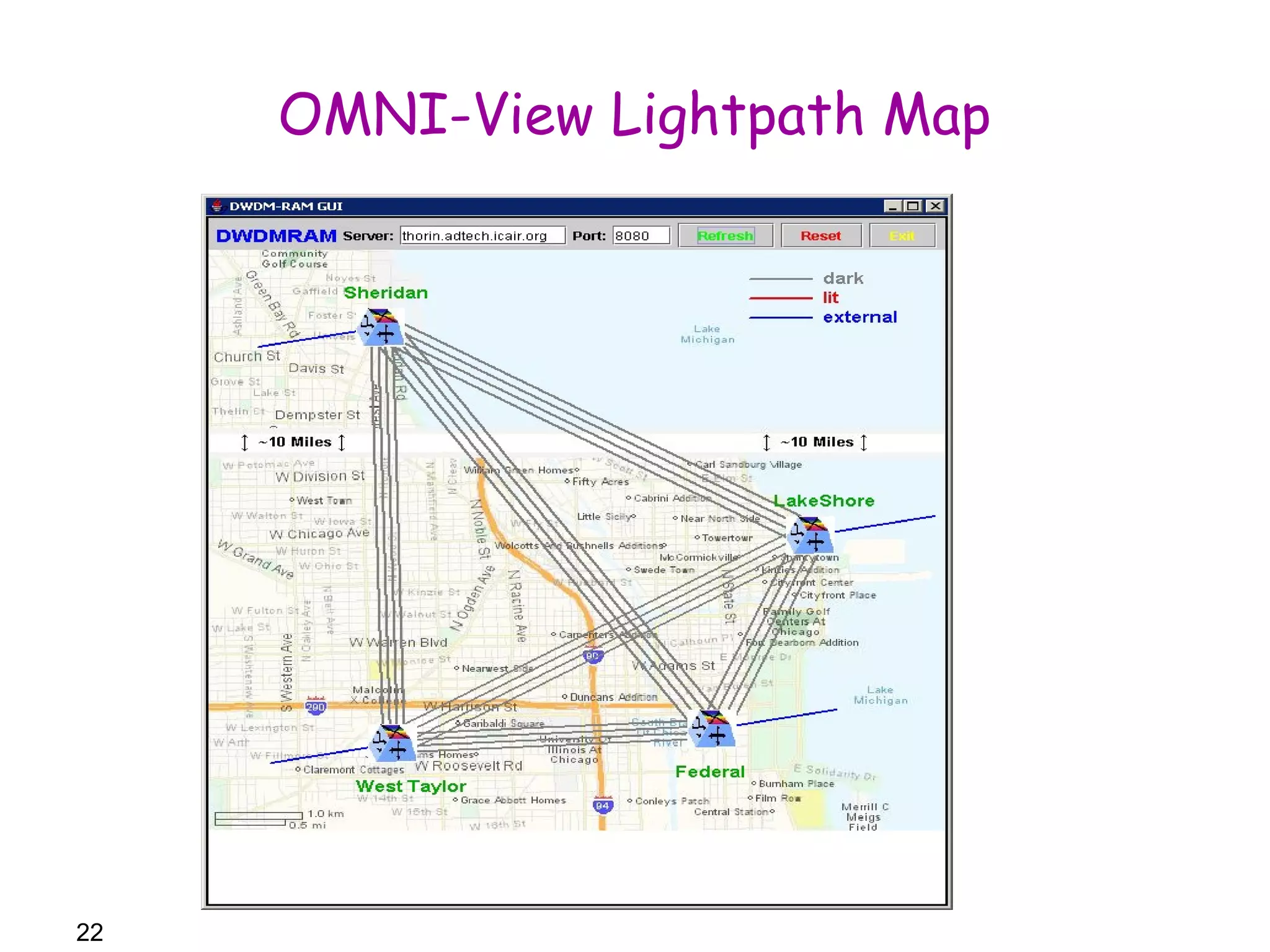

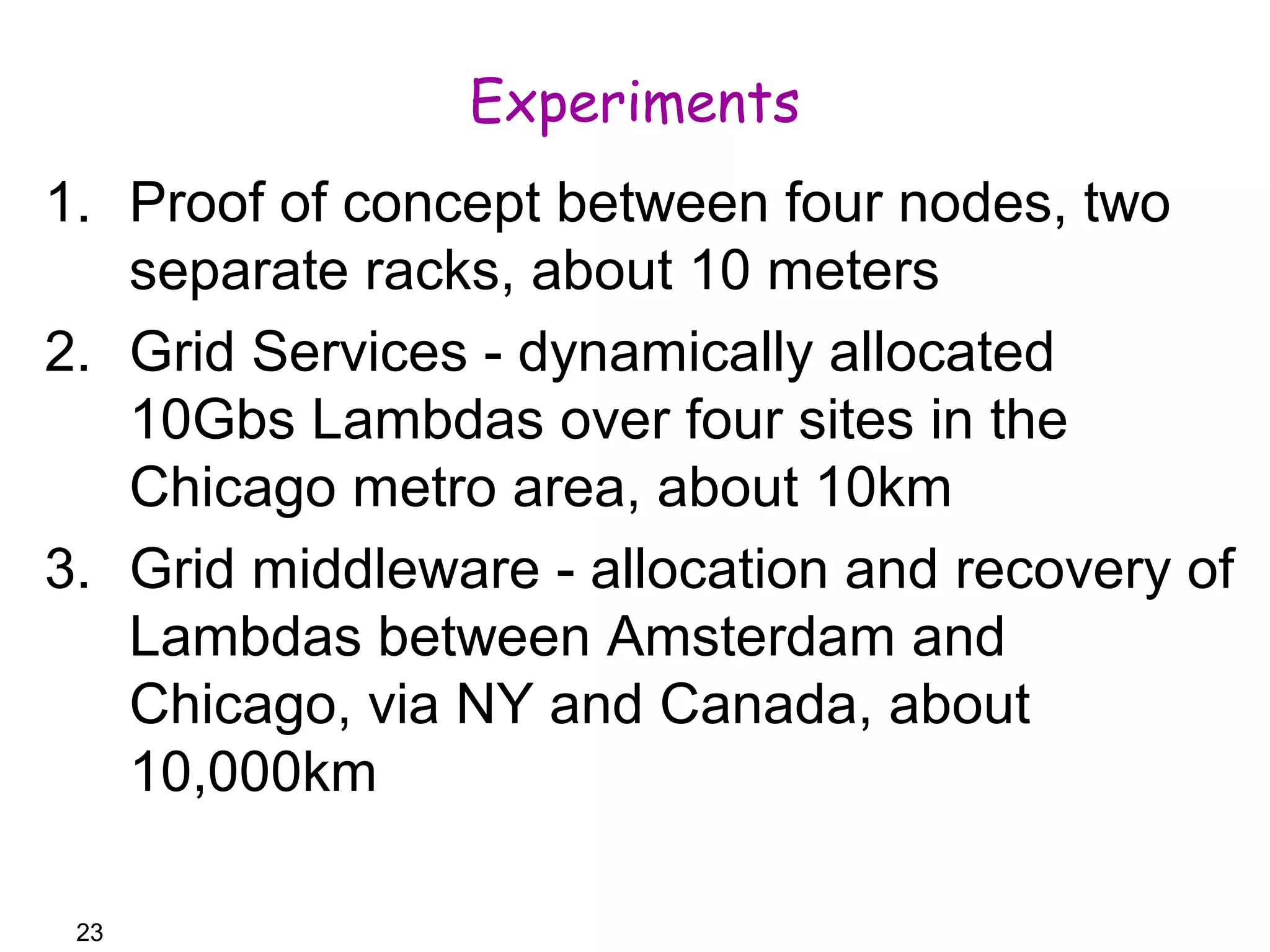

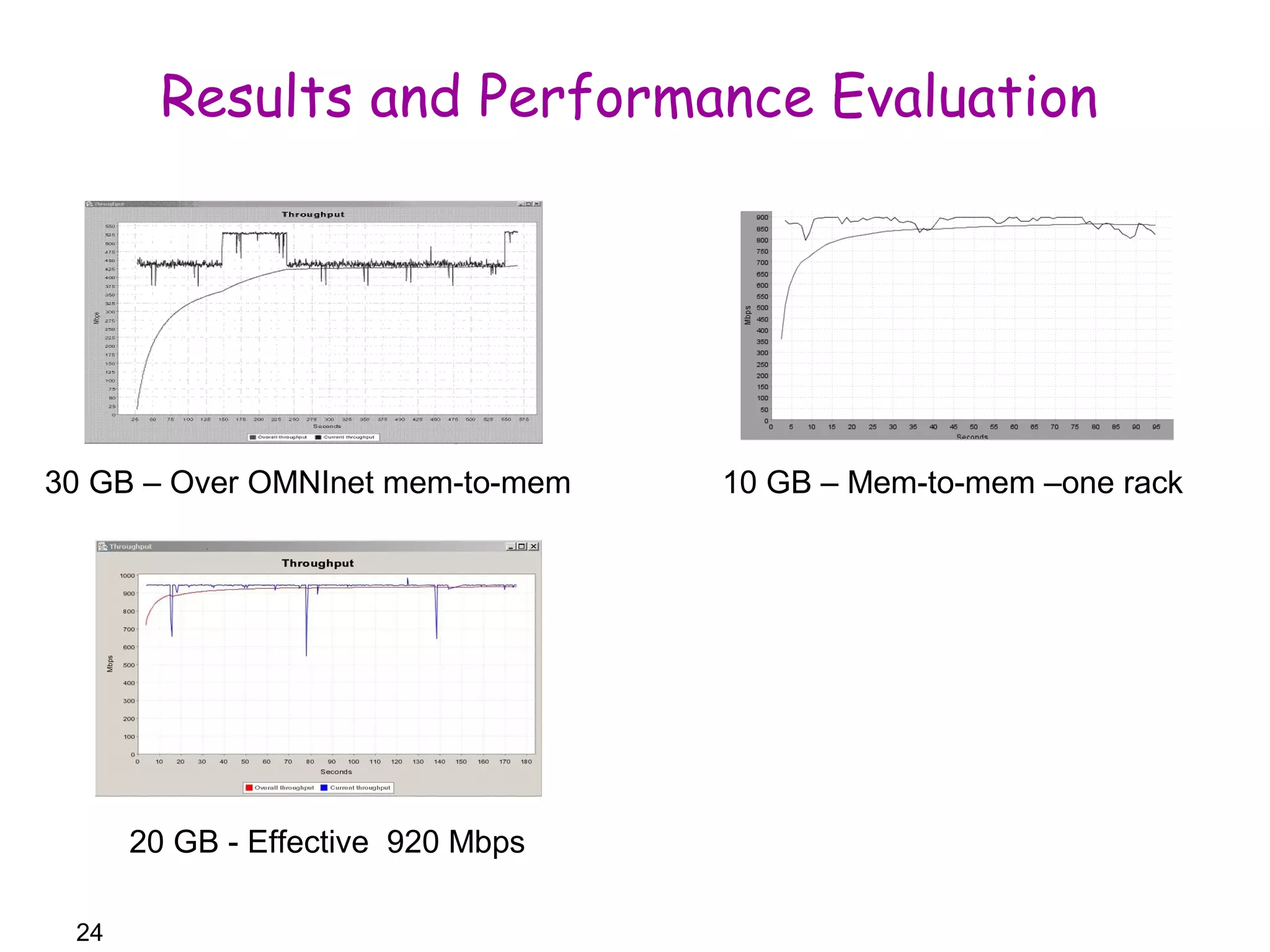

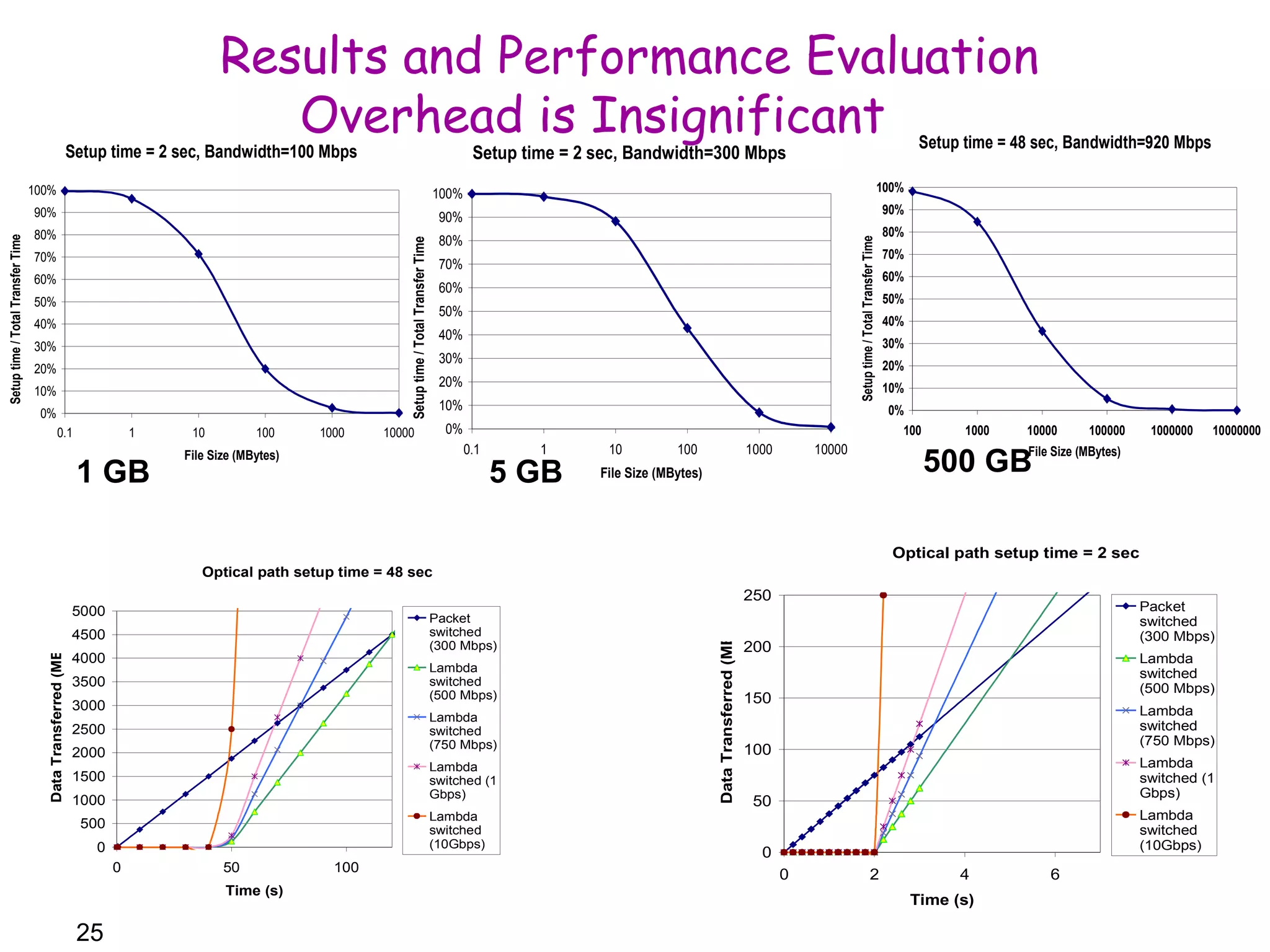

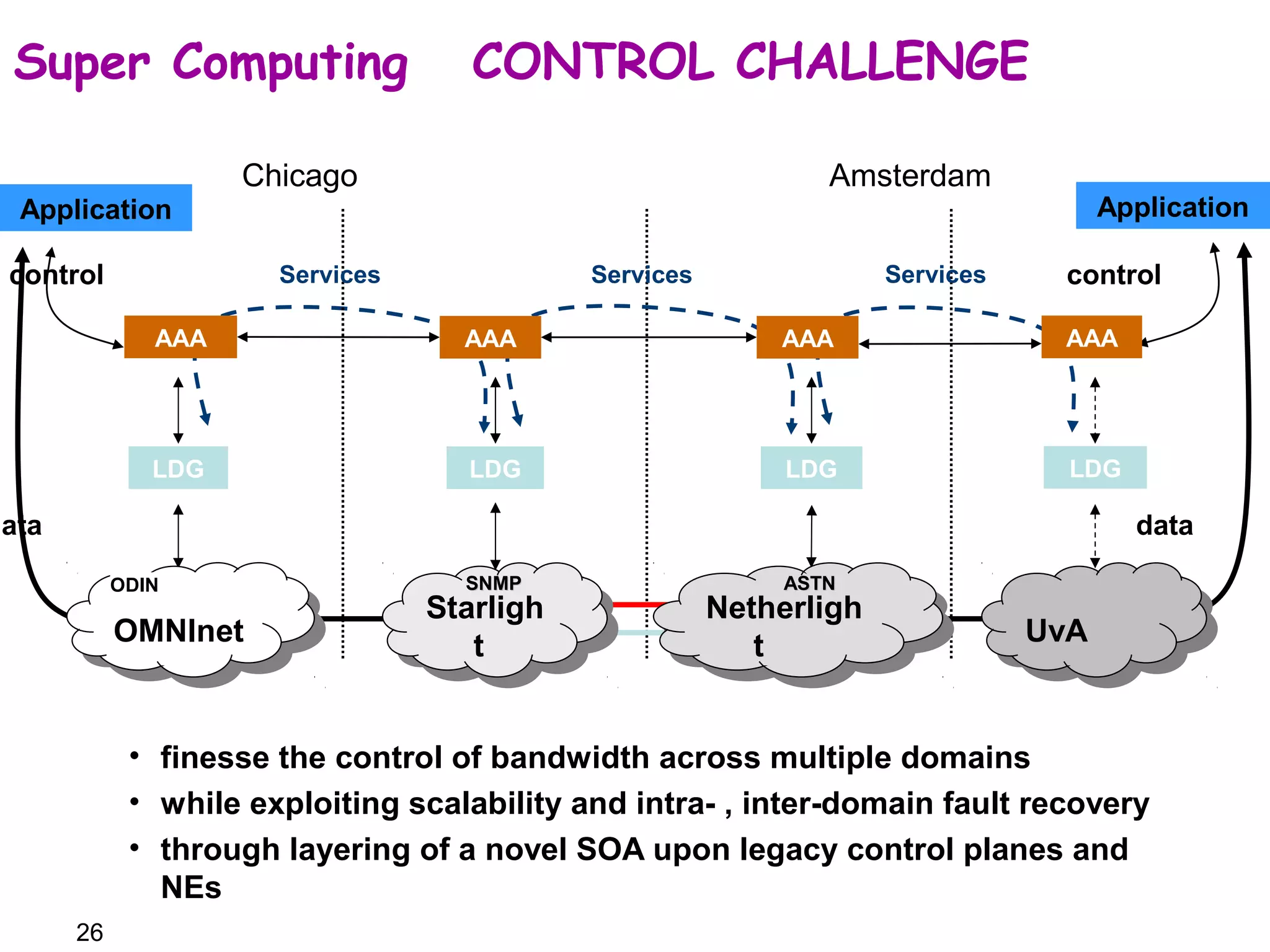

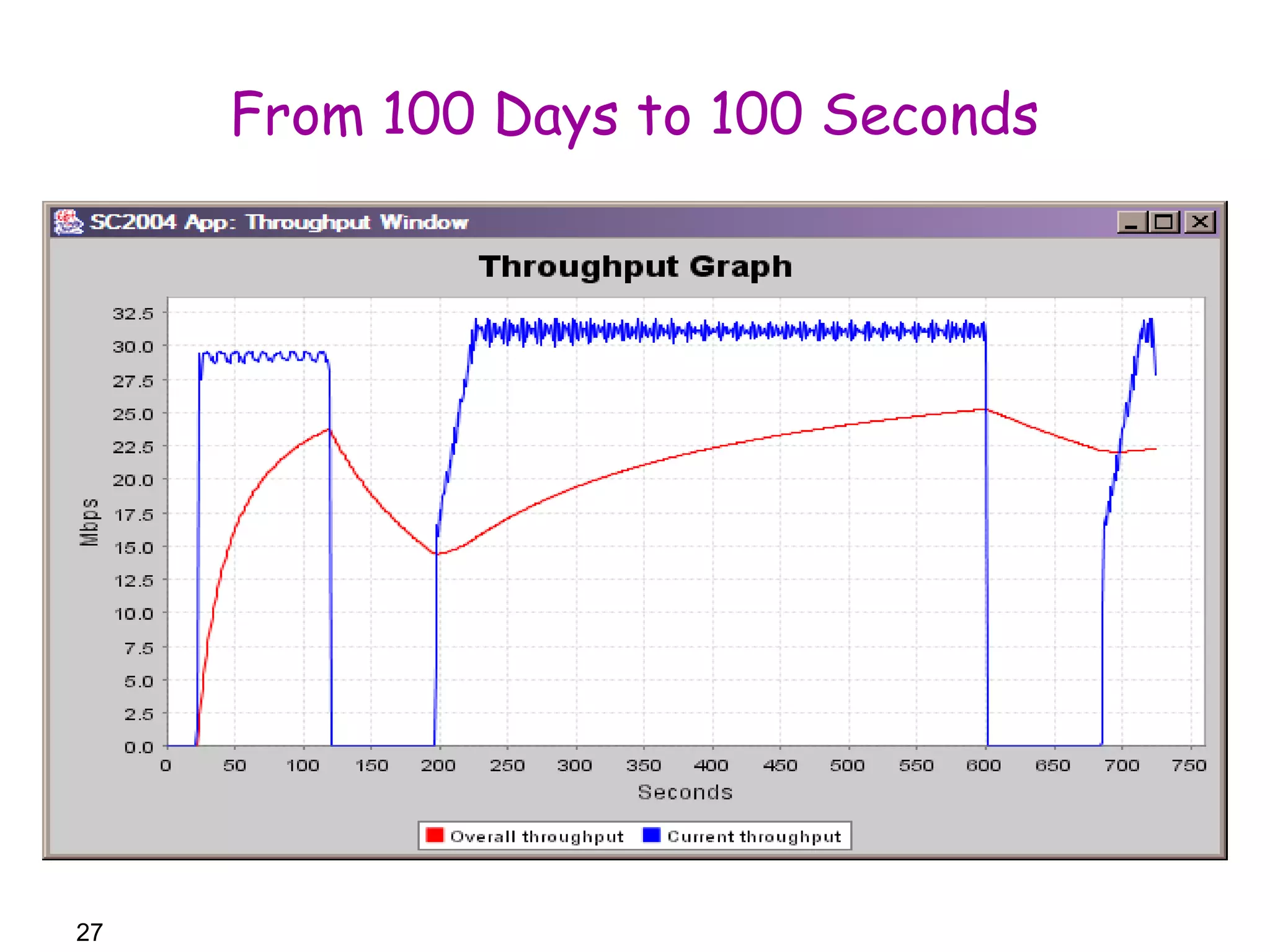

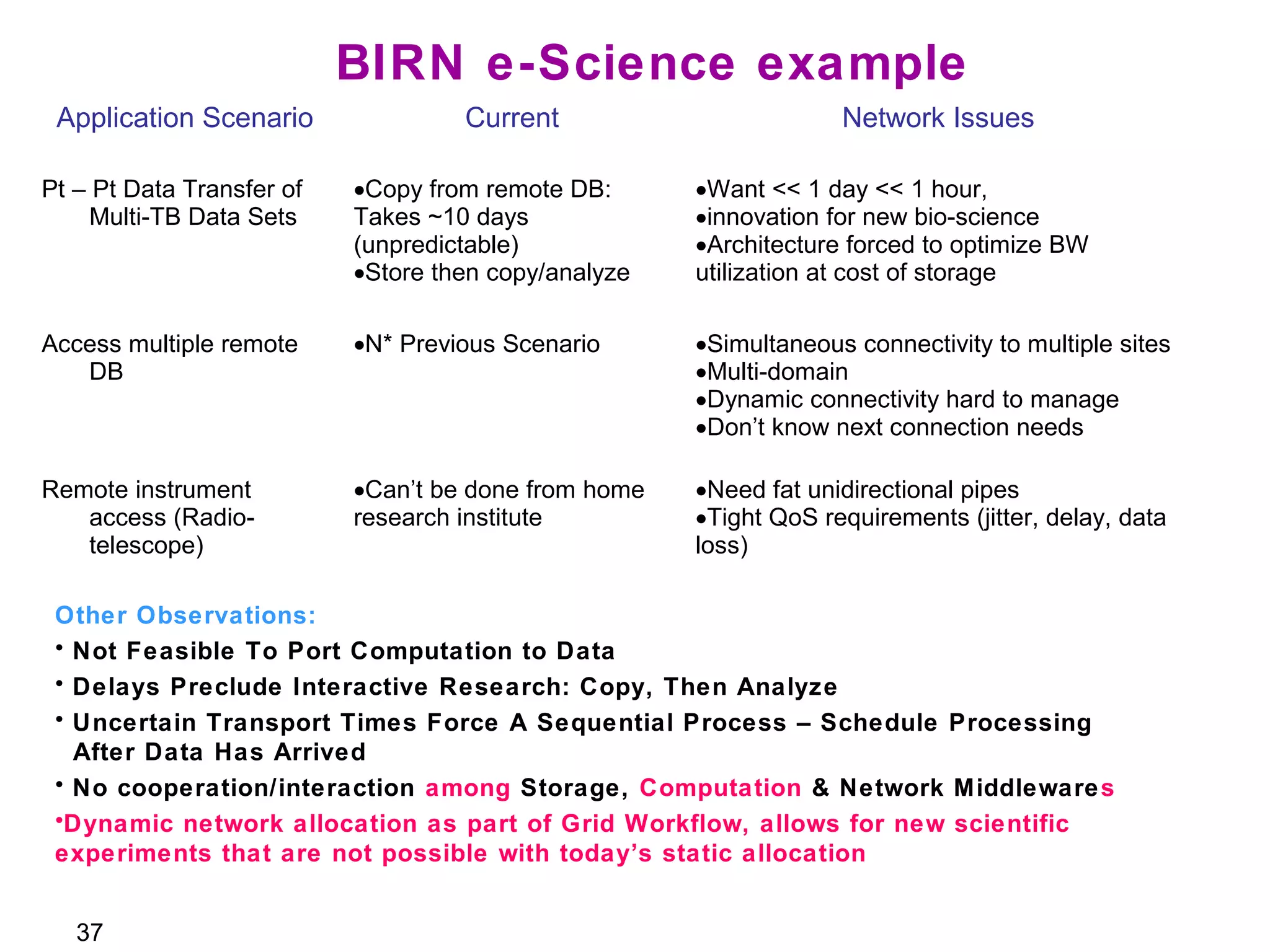

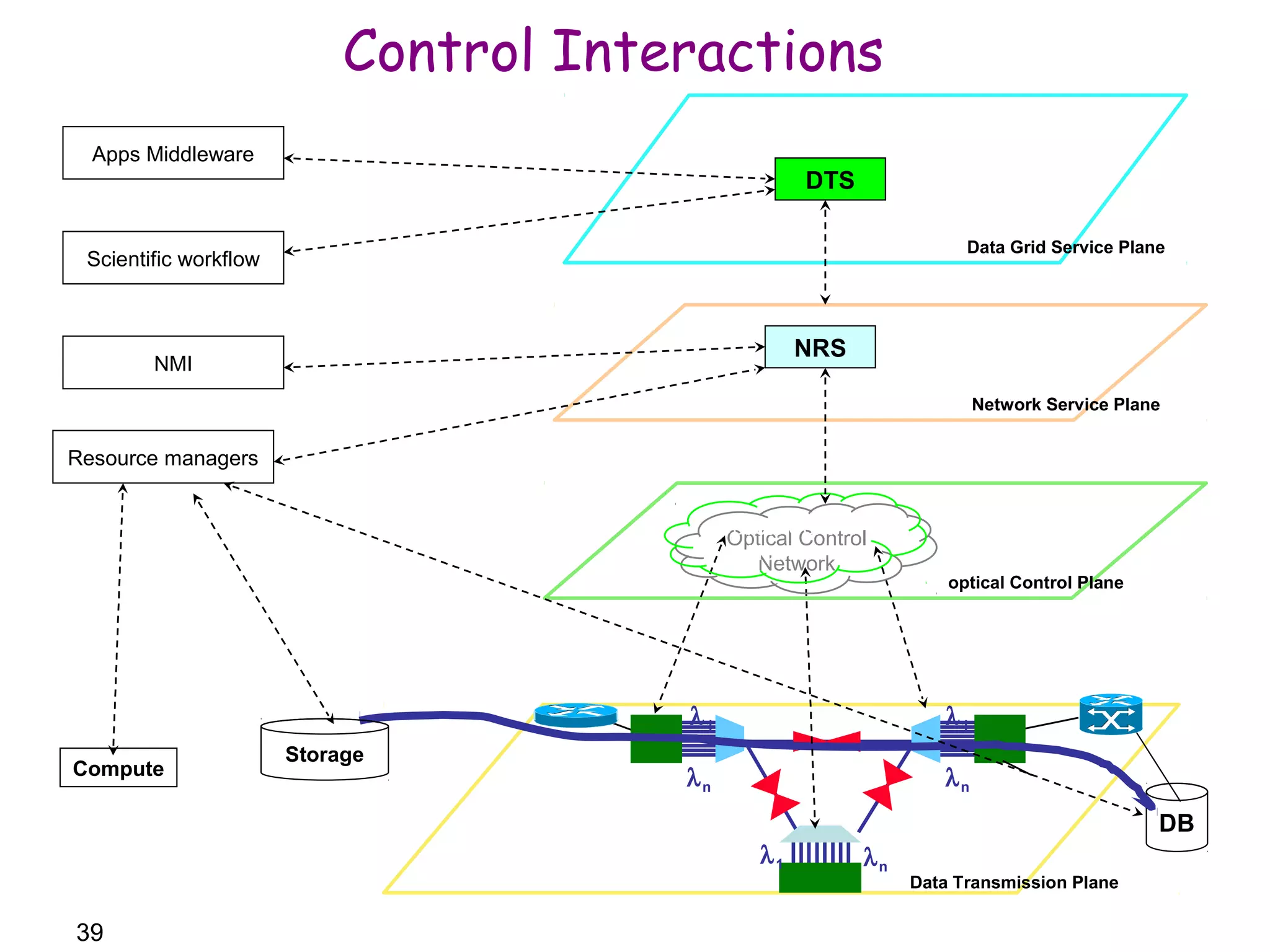

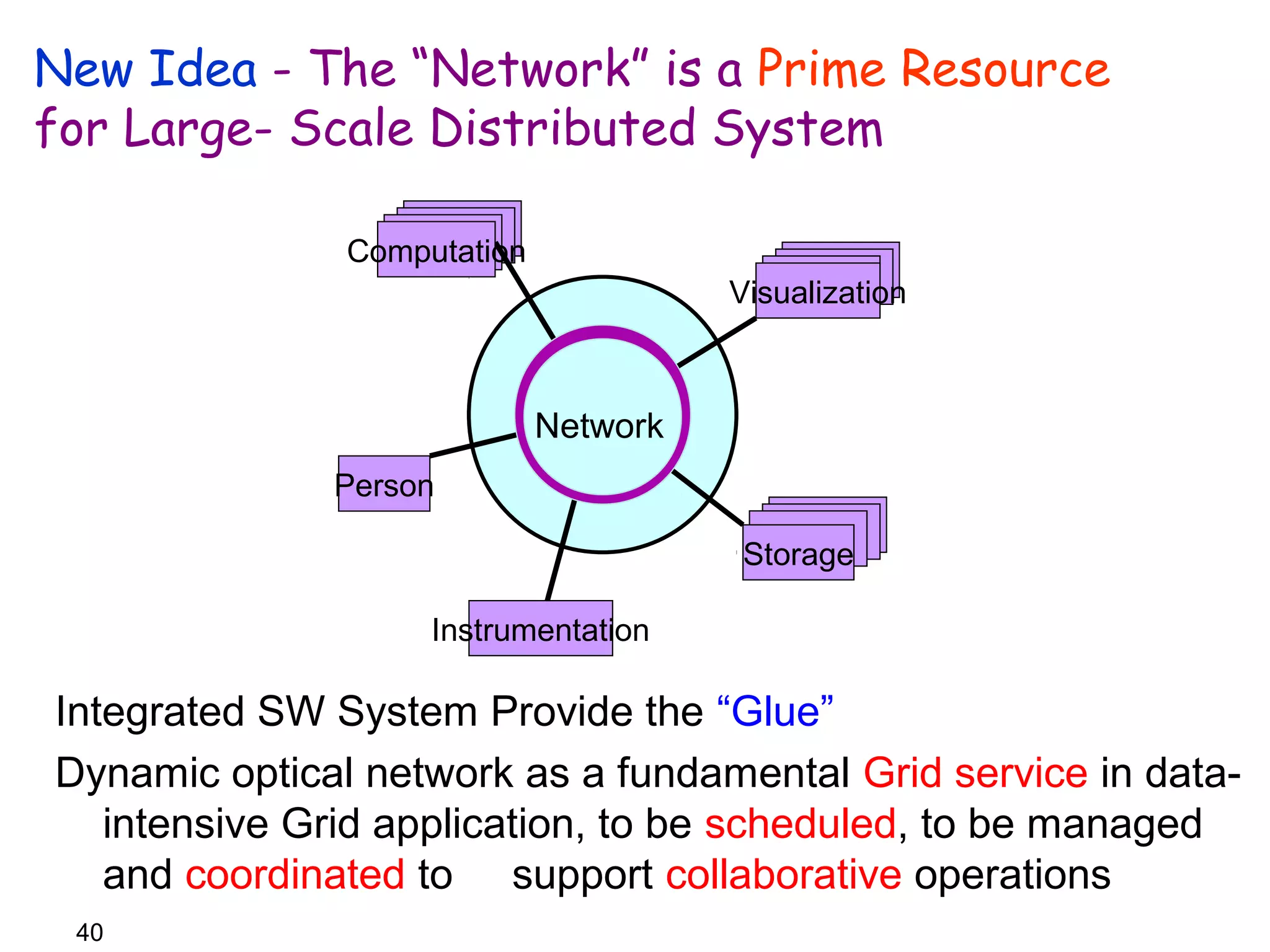

The document introduces the Lambda Data Grid, a novel grid computing architecture that treats the network as a primary resource alongside computation and storage, addressing scalability issues in data-intensive applications. It outlines the challenges of existing grid technologies, presents solutions for managing network resources, and evaluates performance through testbed implementations. The conclusion emphasizes that the network needs to be integrated as a first-class resource in grid computing, so that resources can be orchestrated and scheduled efficiently for advanced e-science applications.