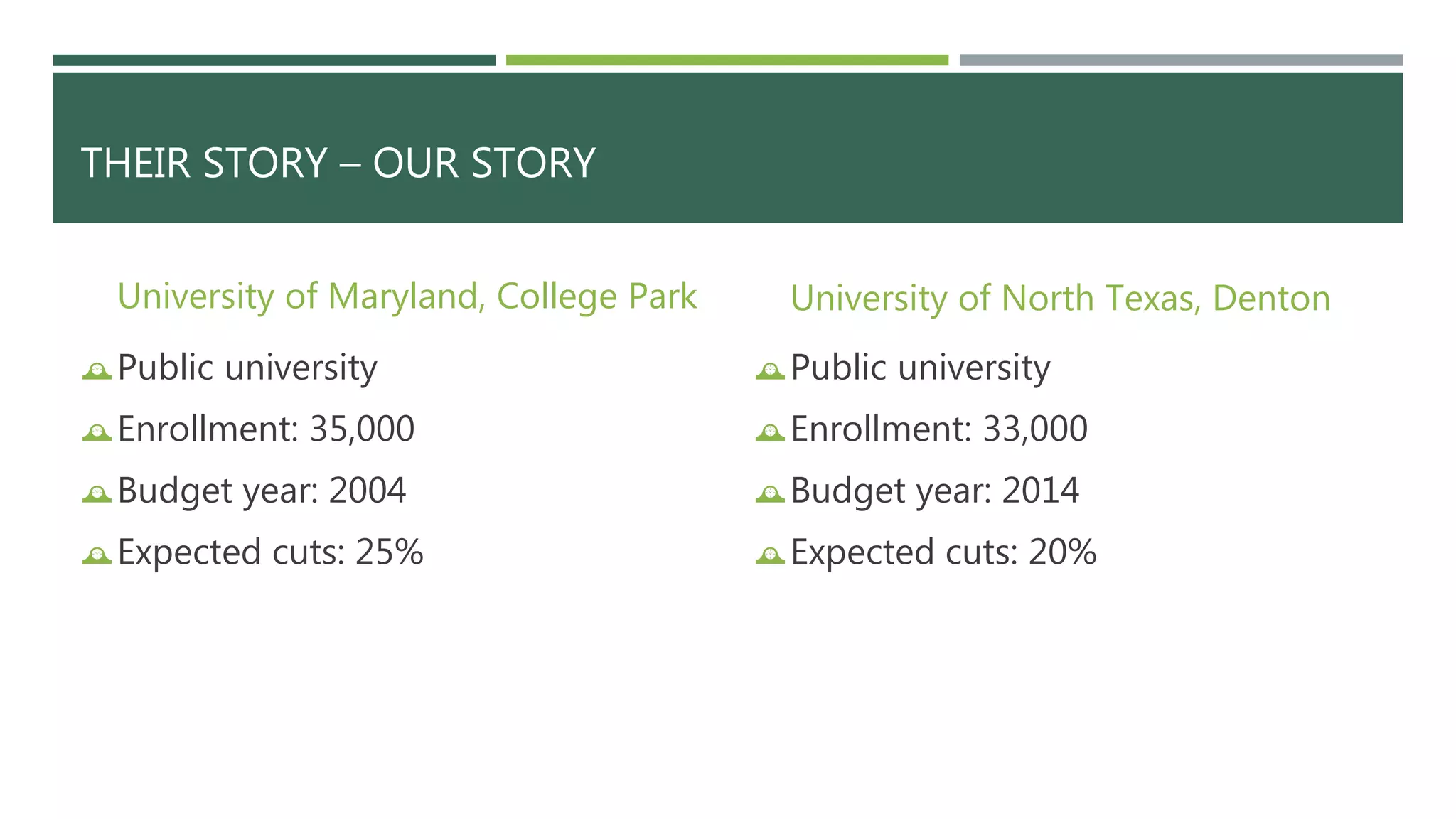

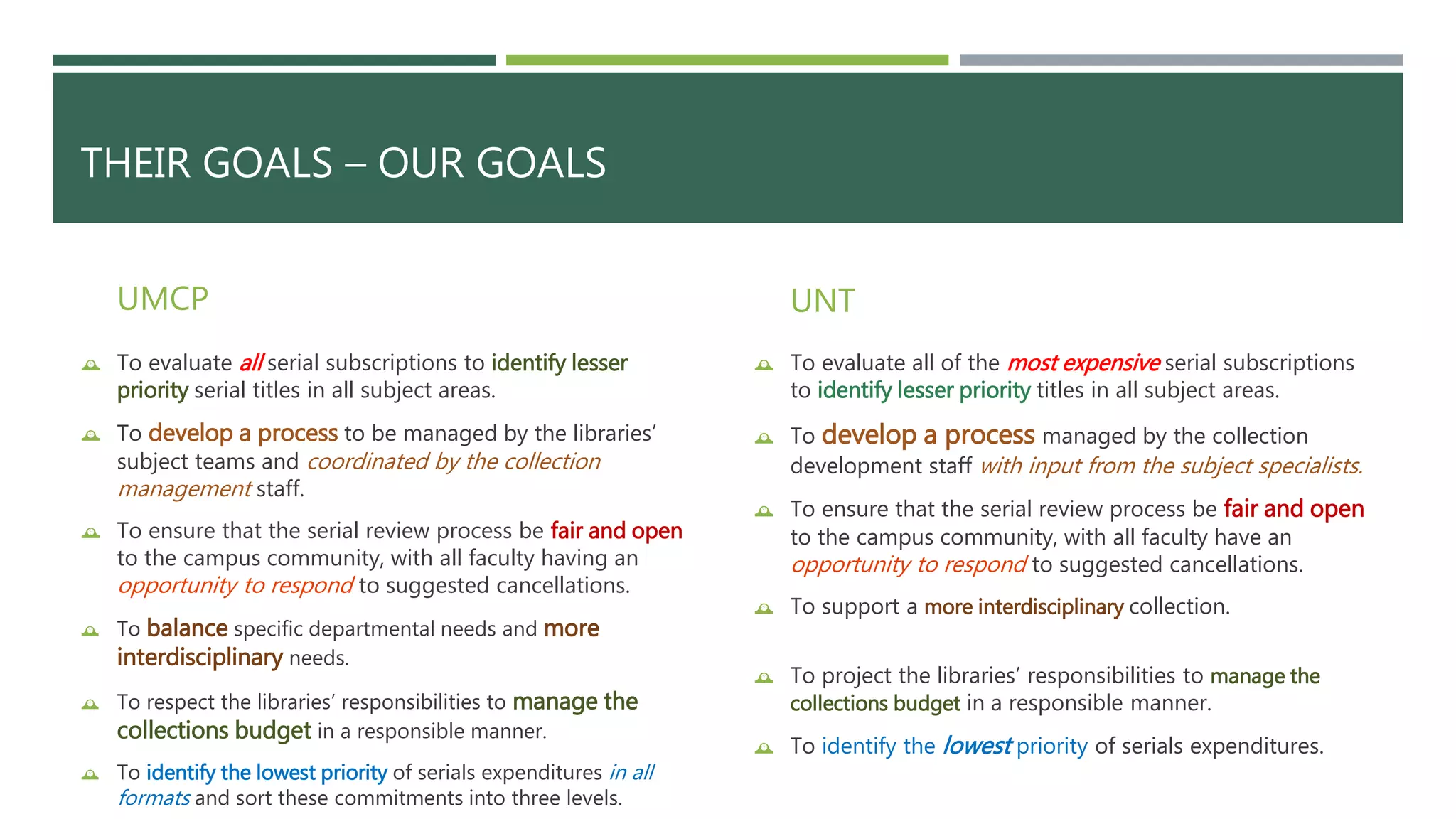

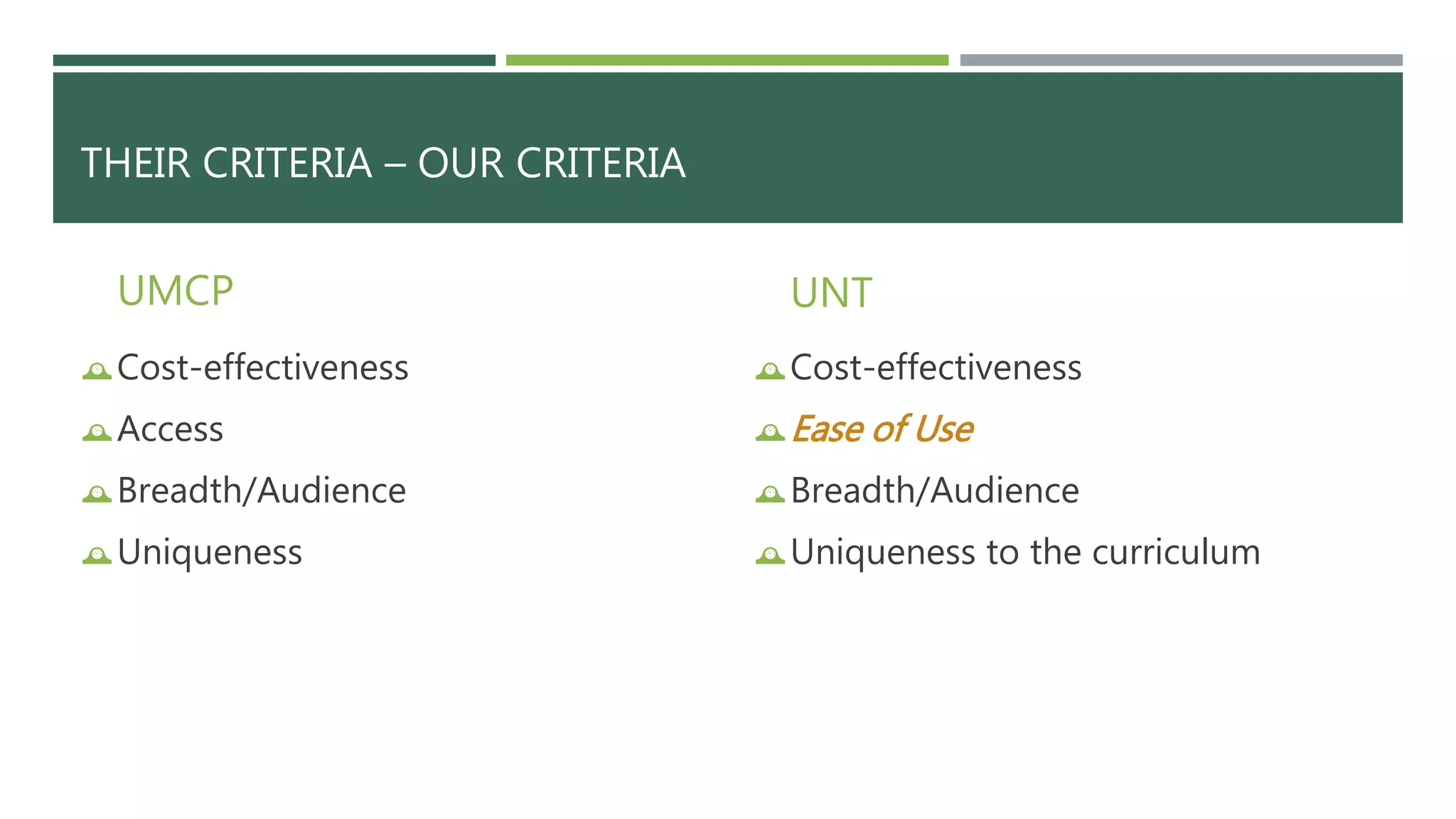

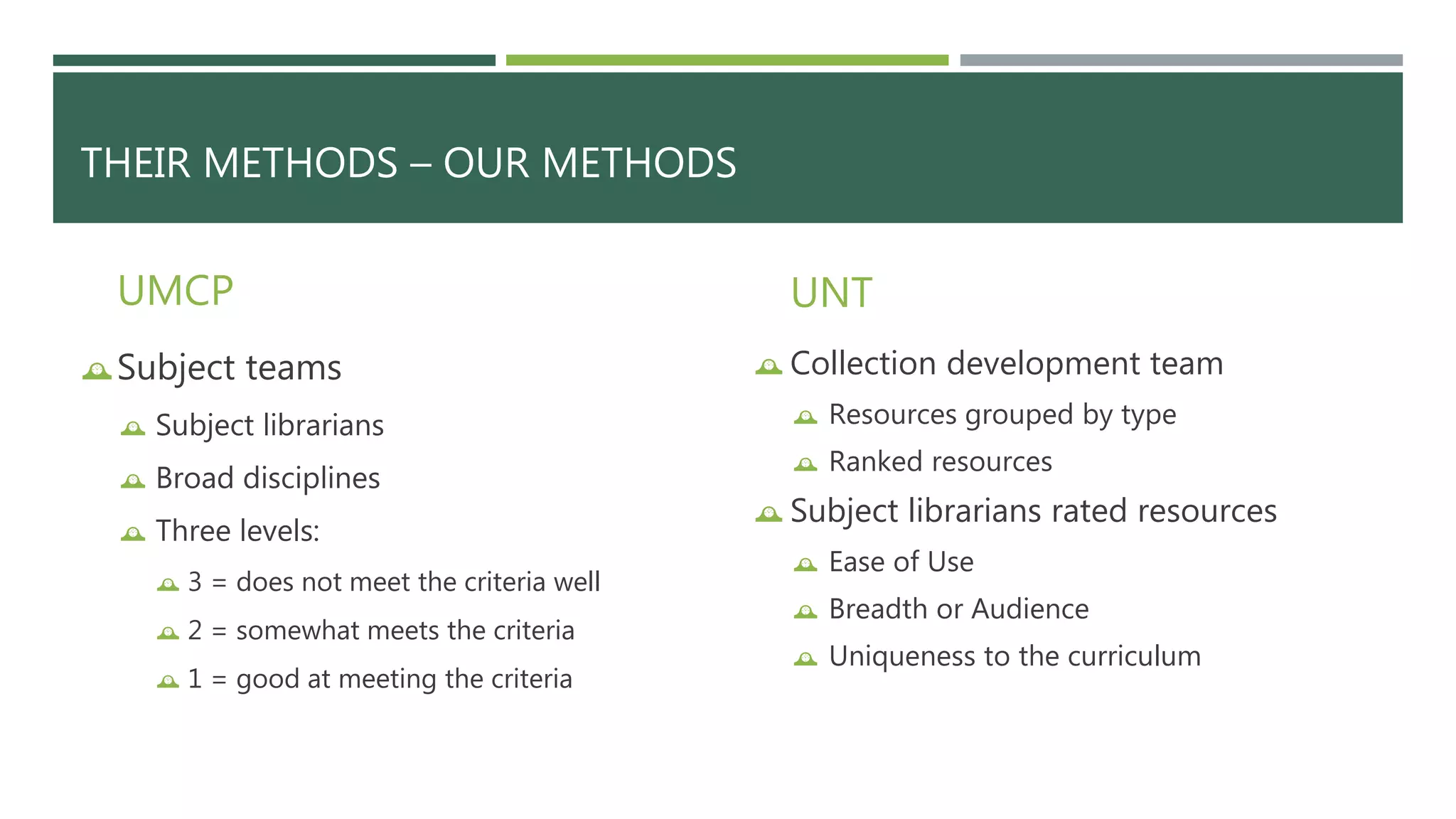

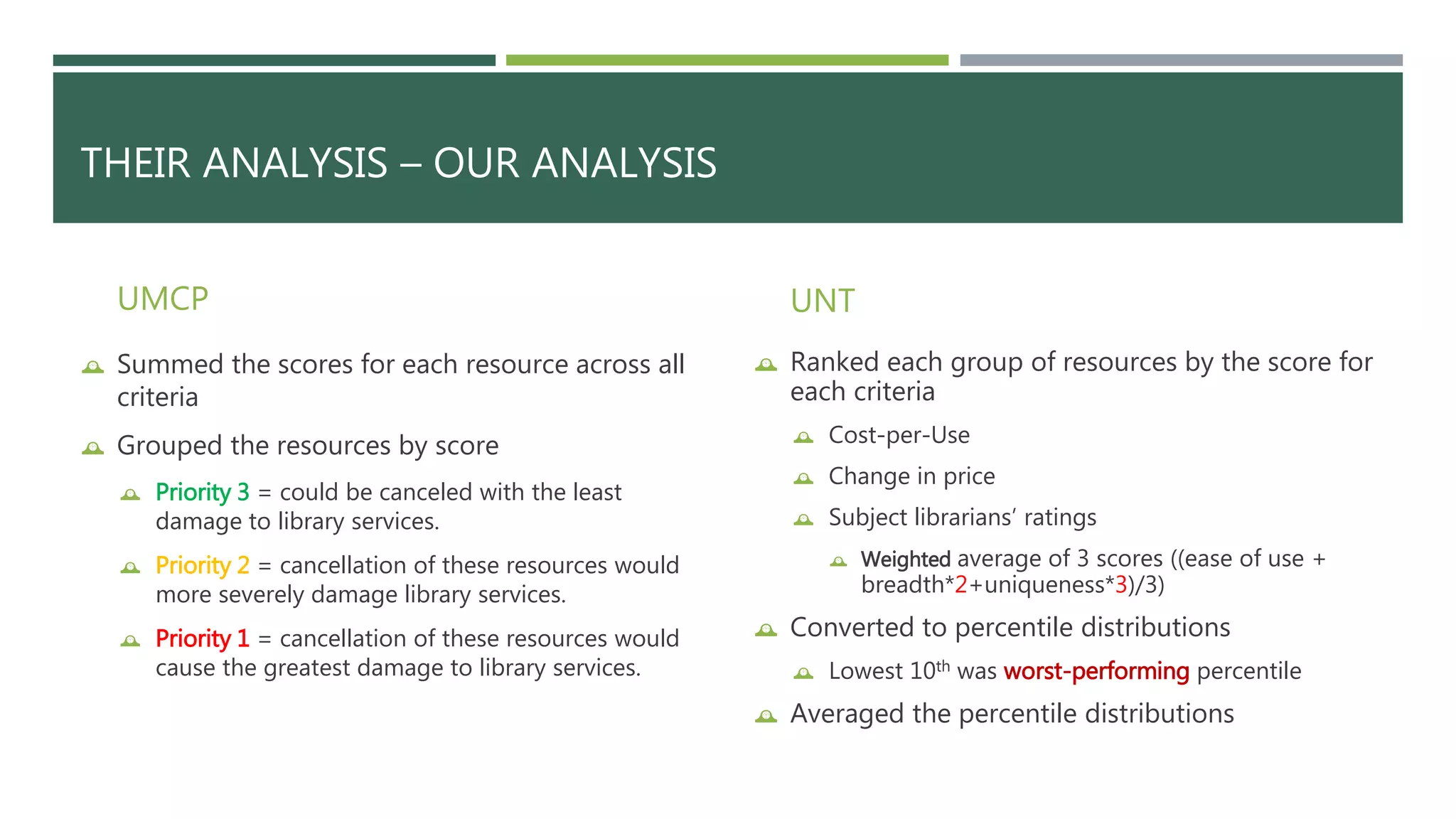

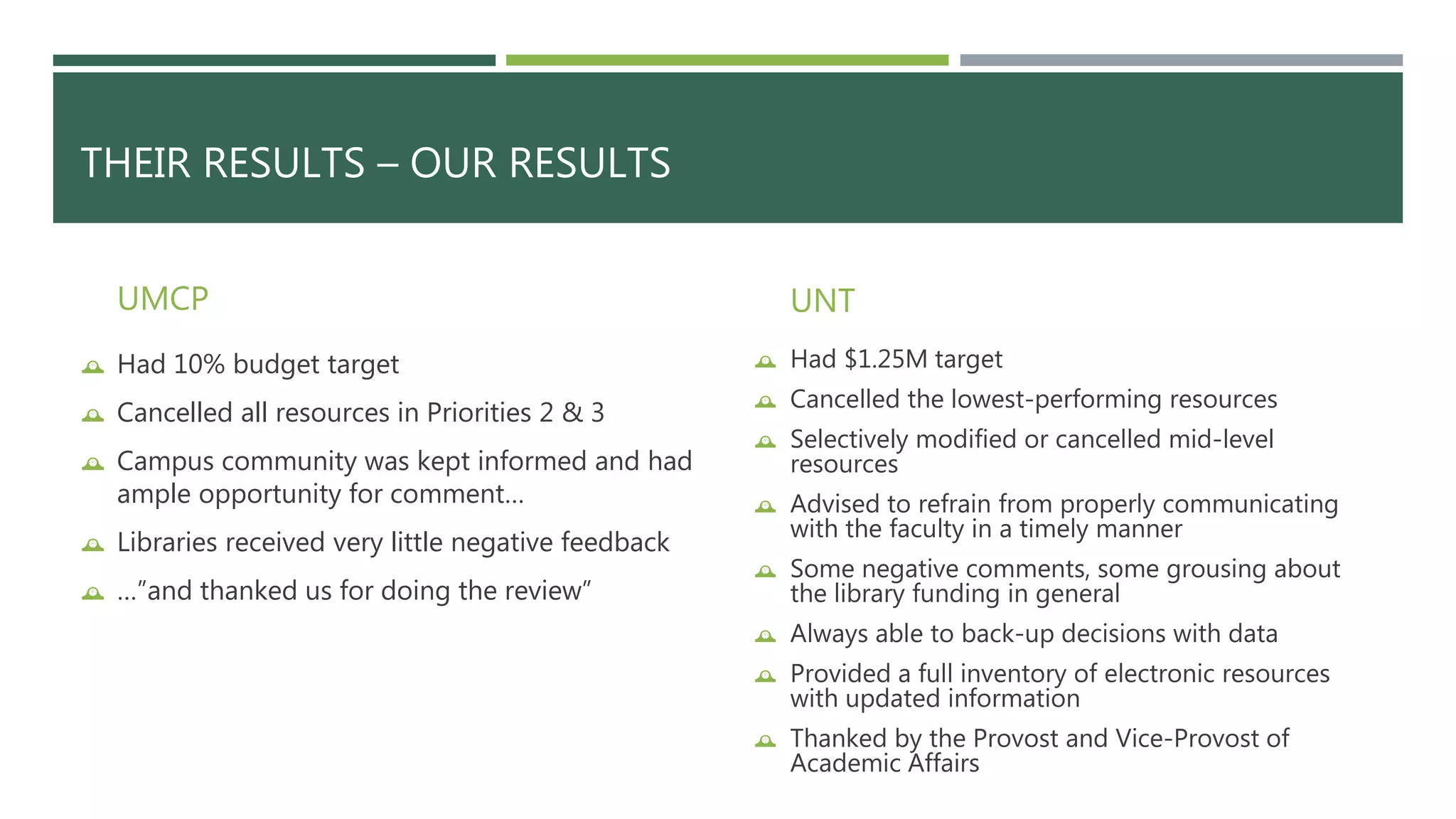

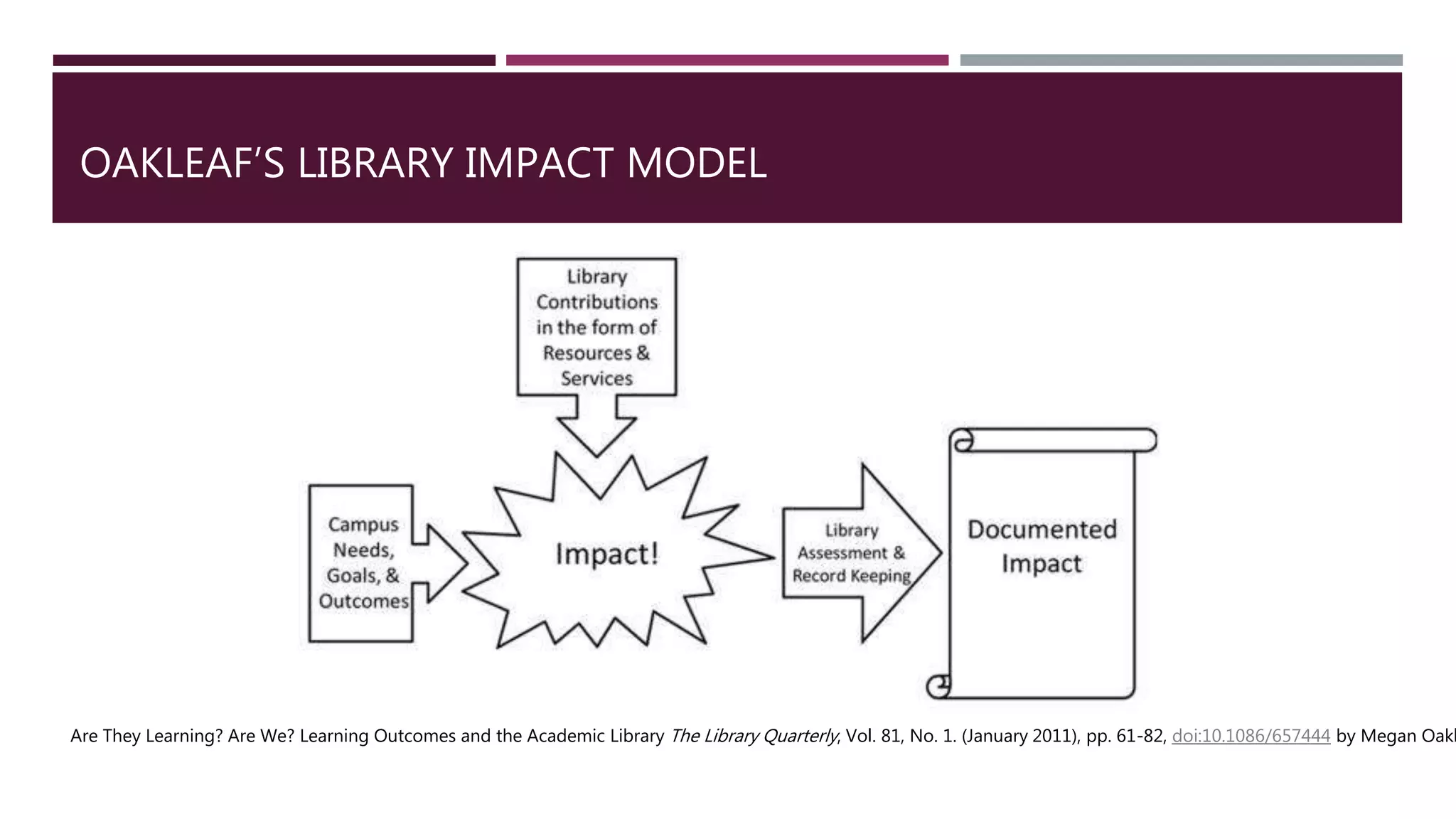

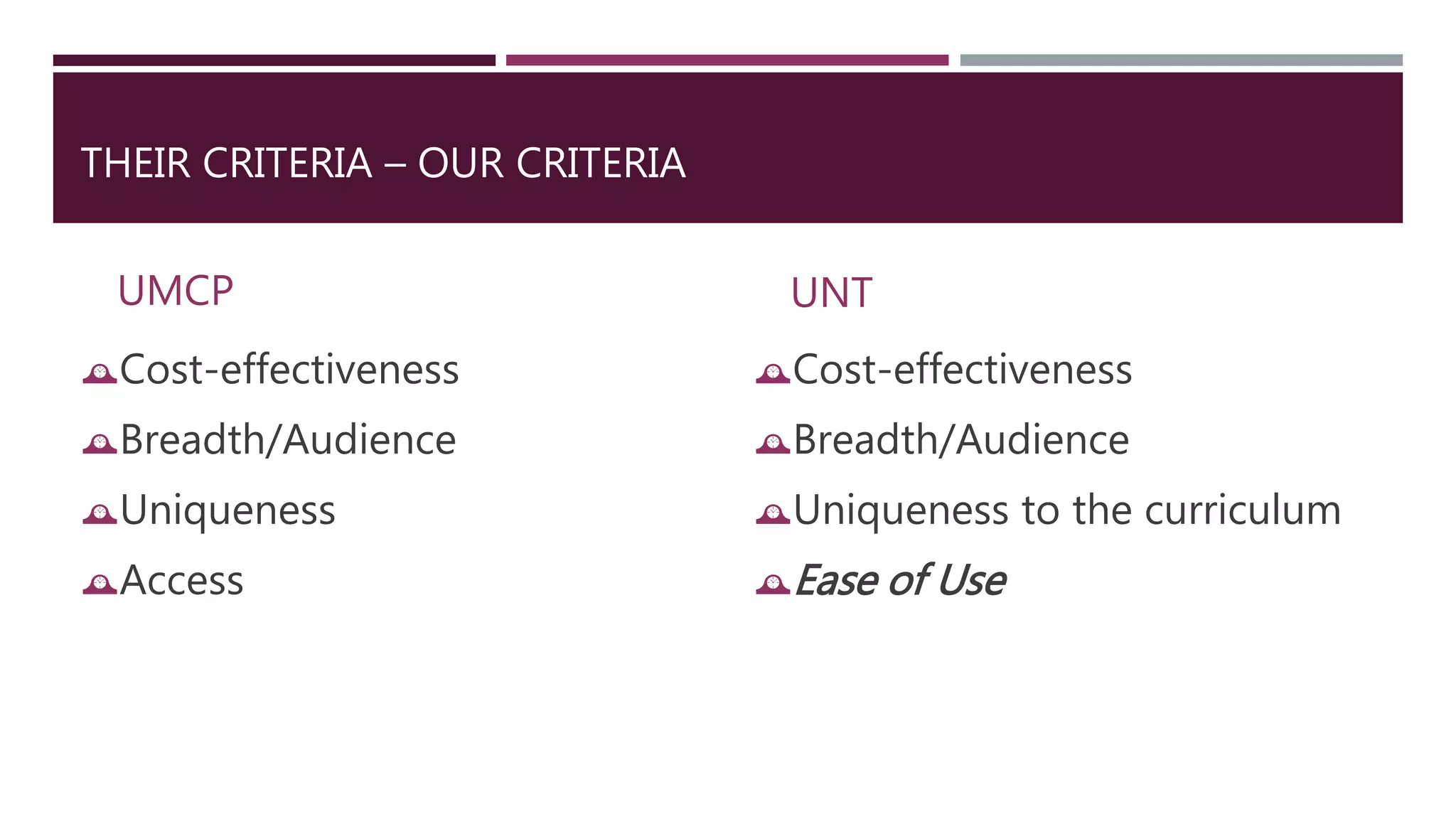

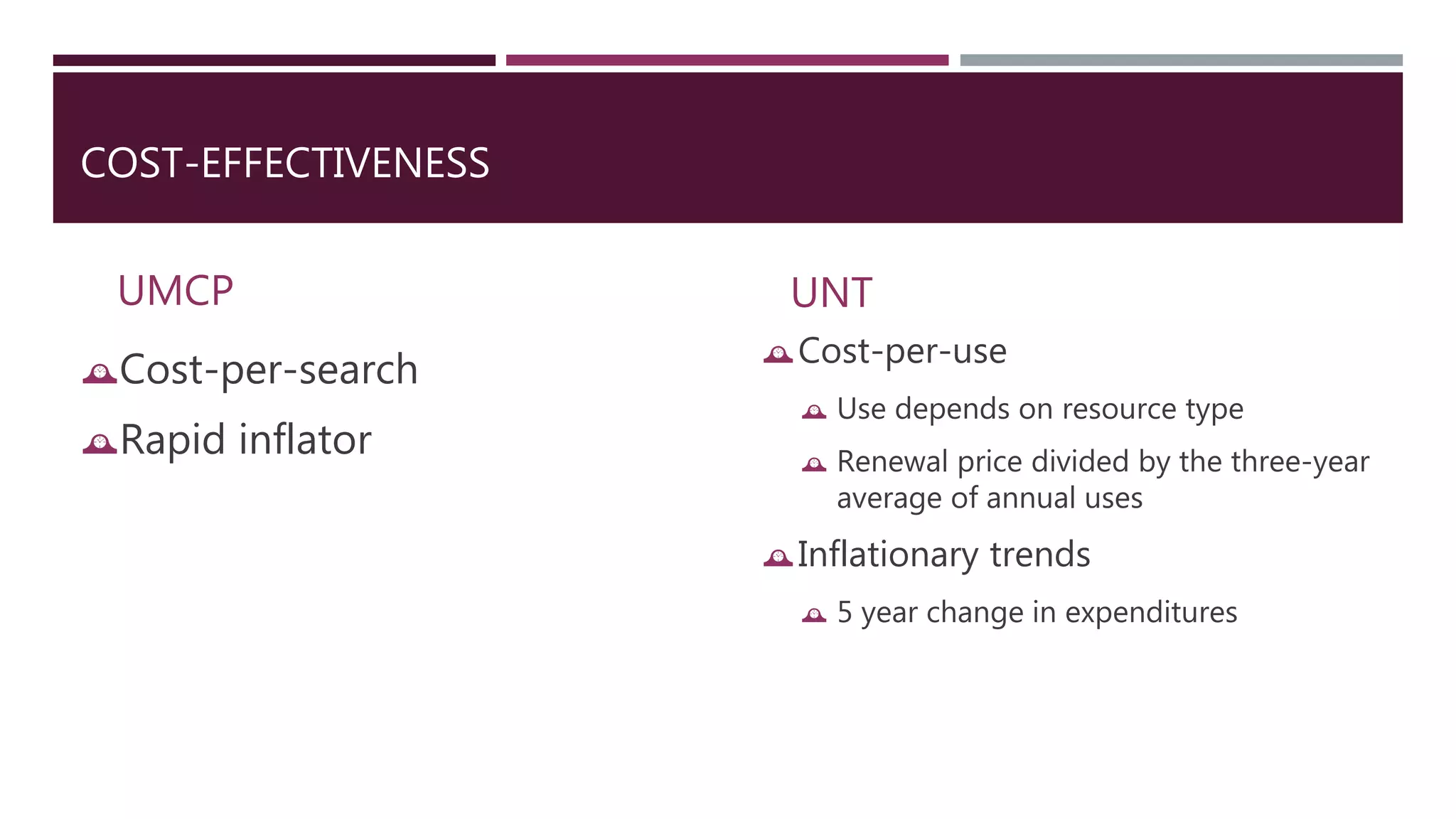

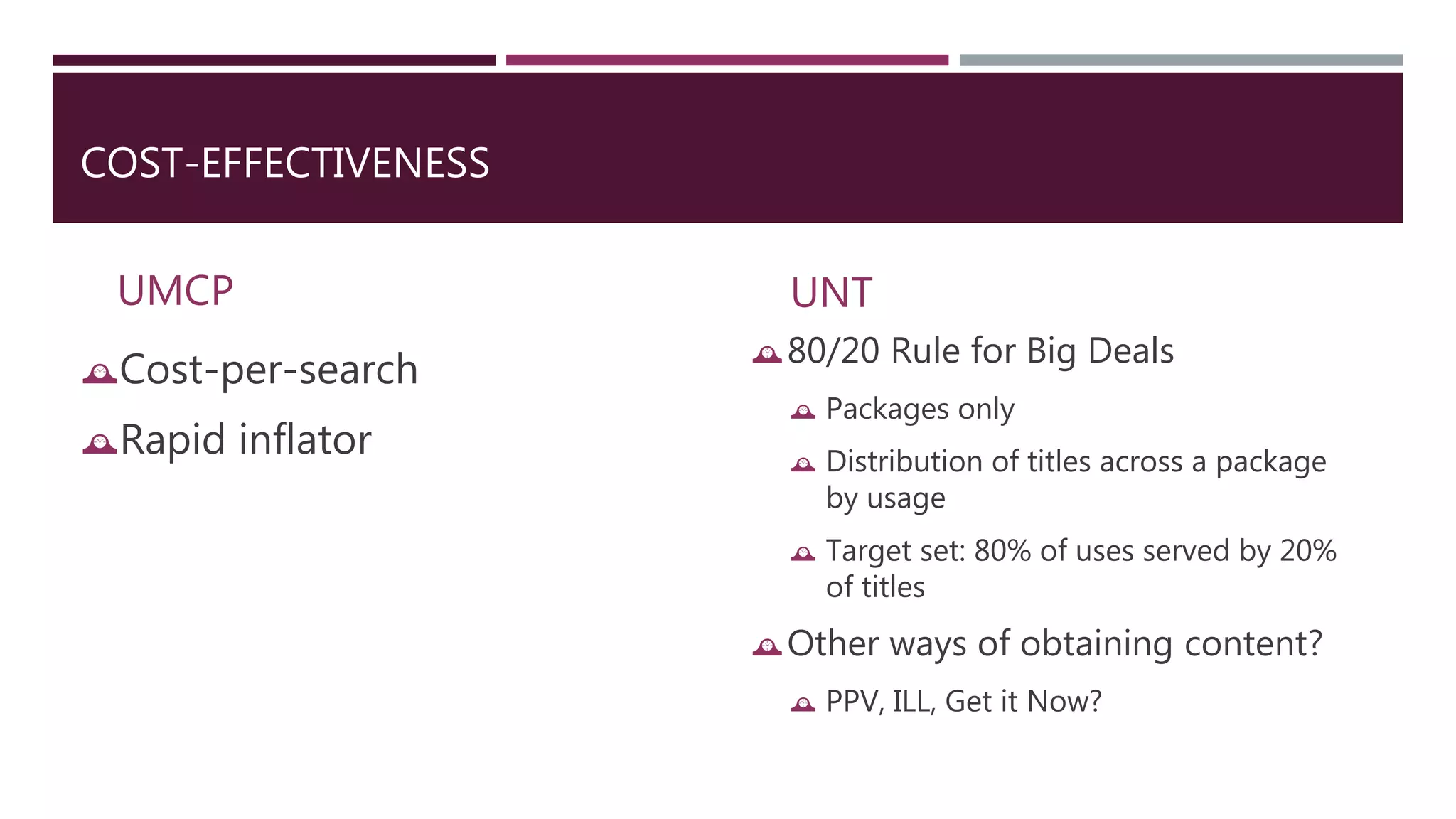

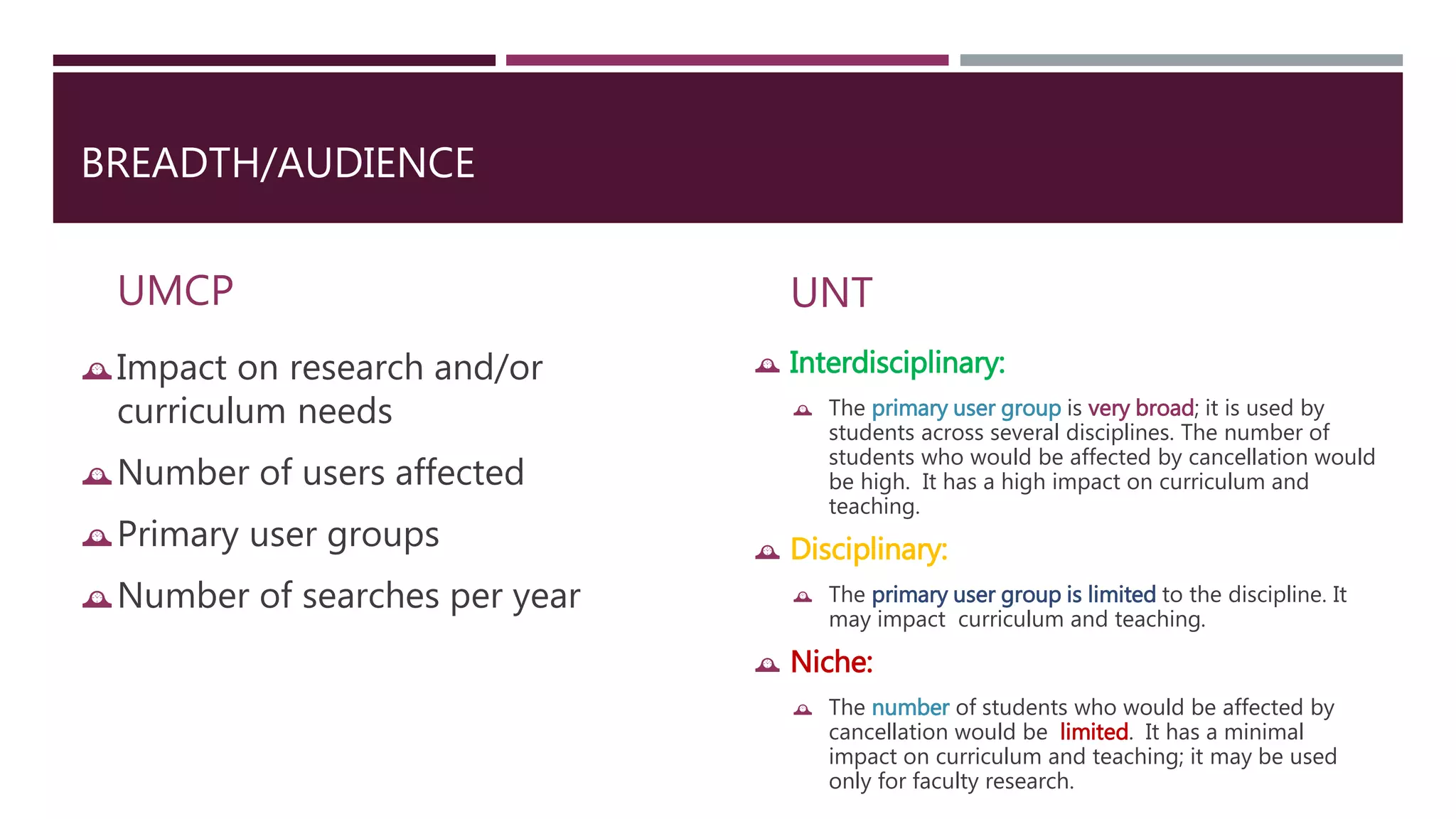

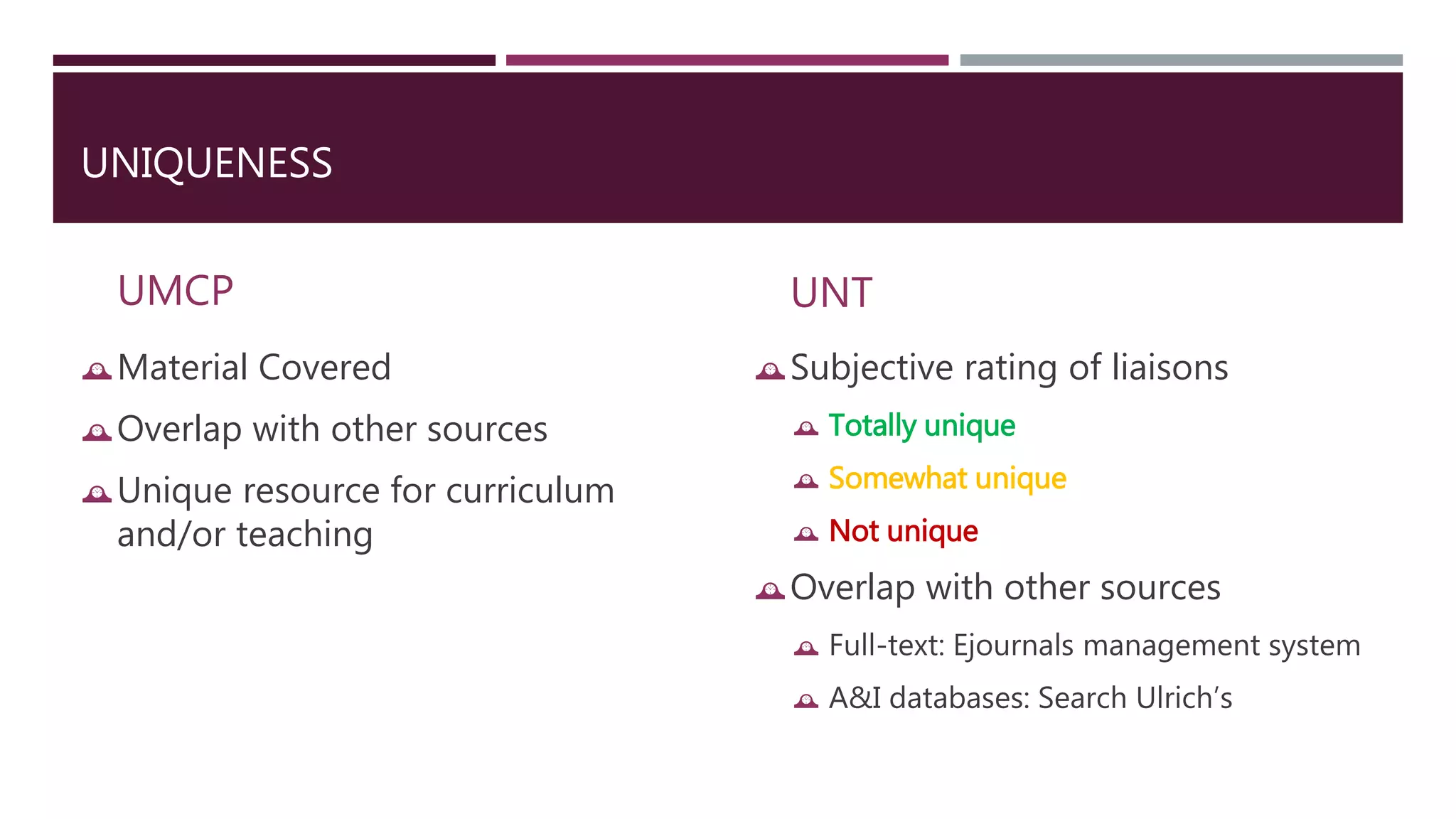

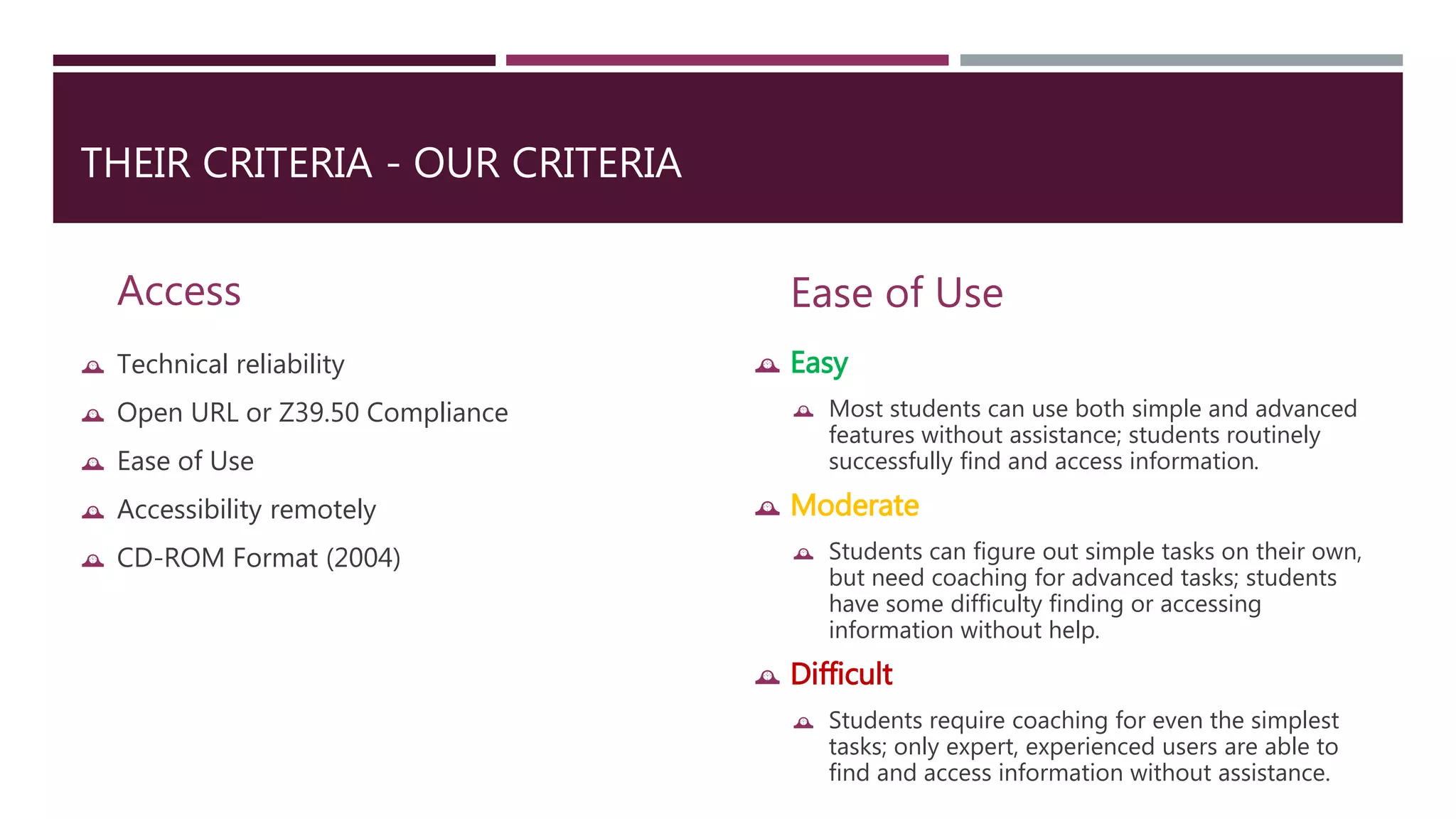

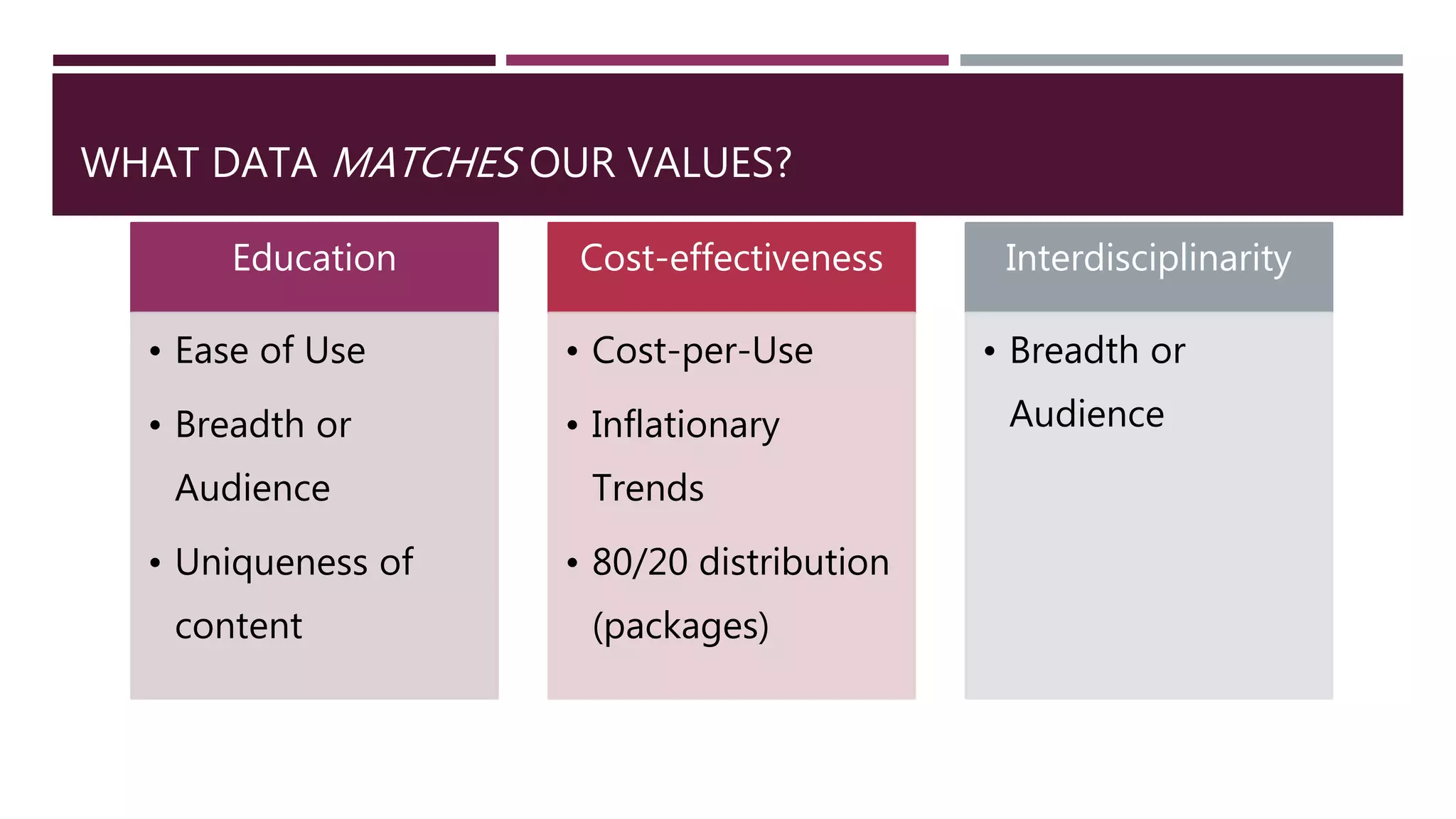

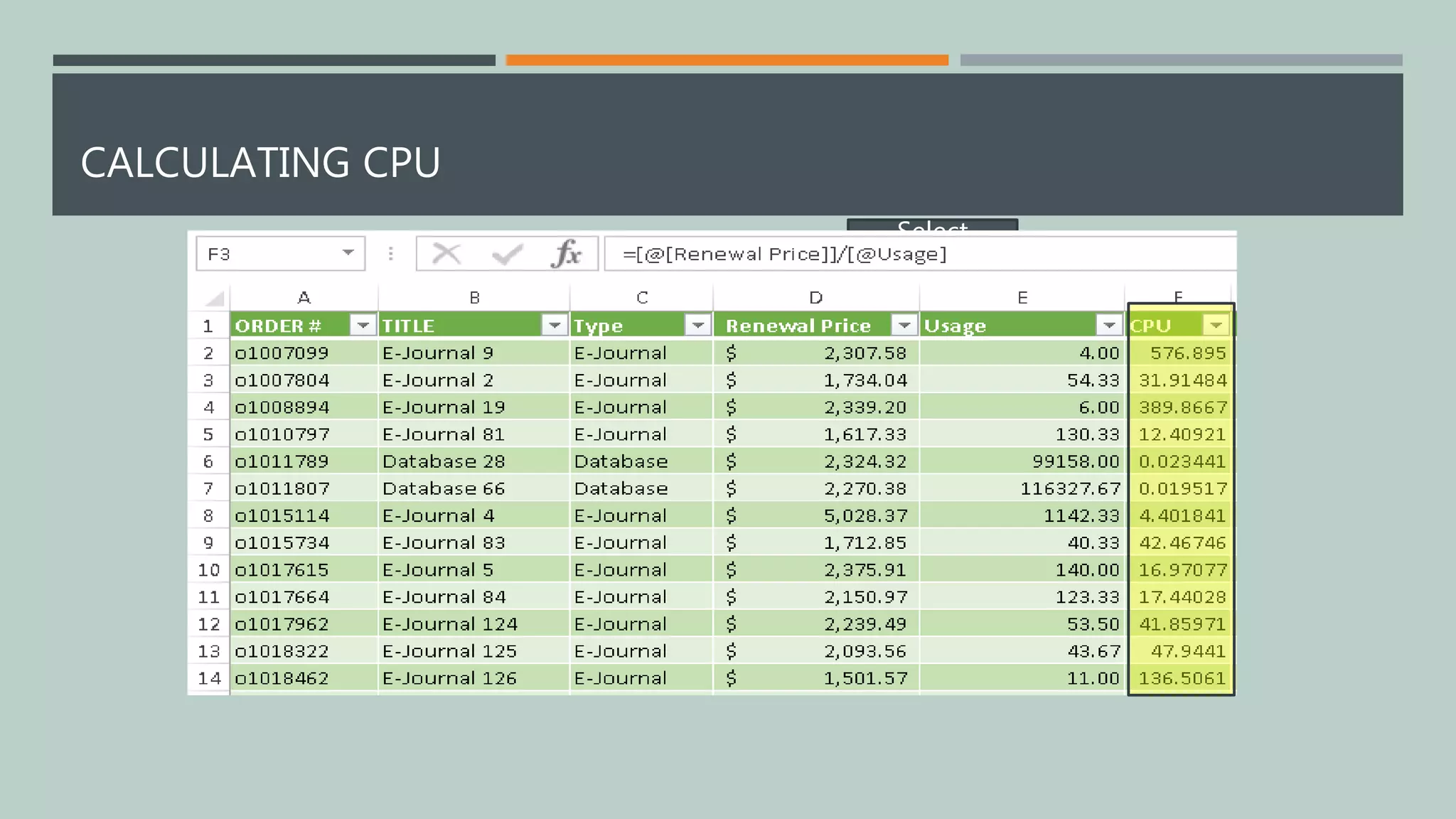

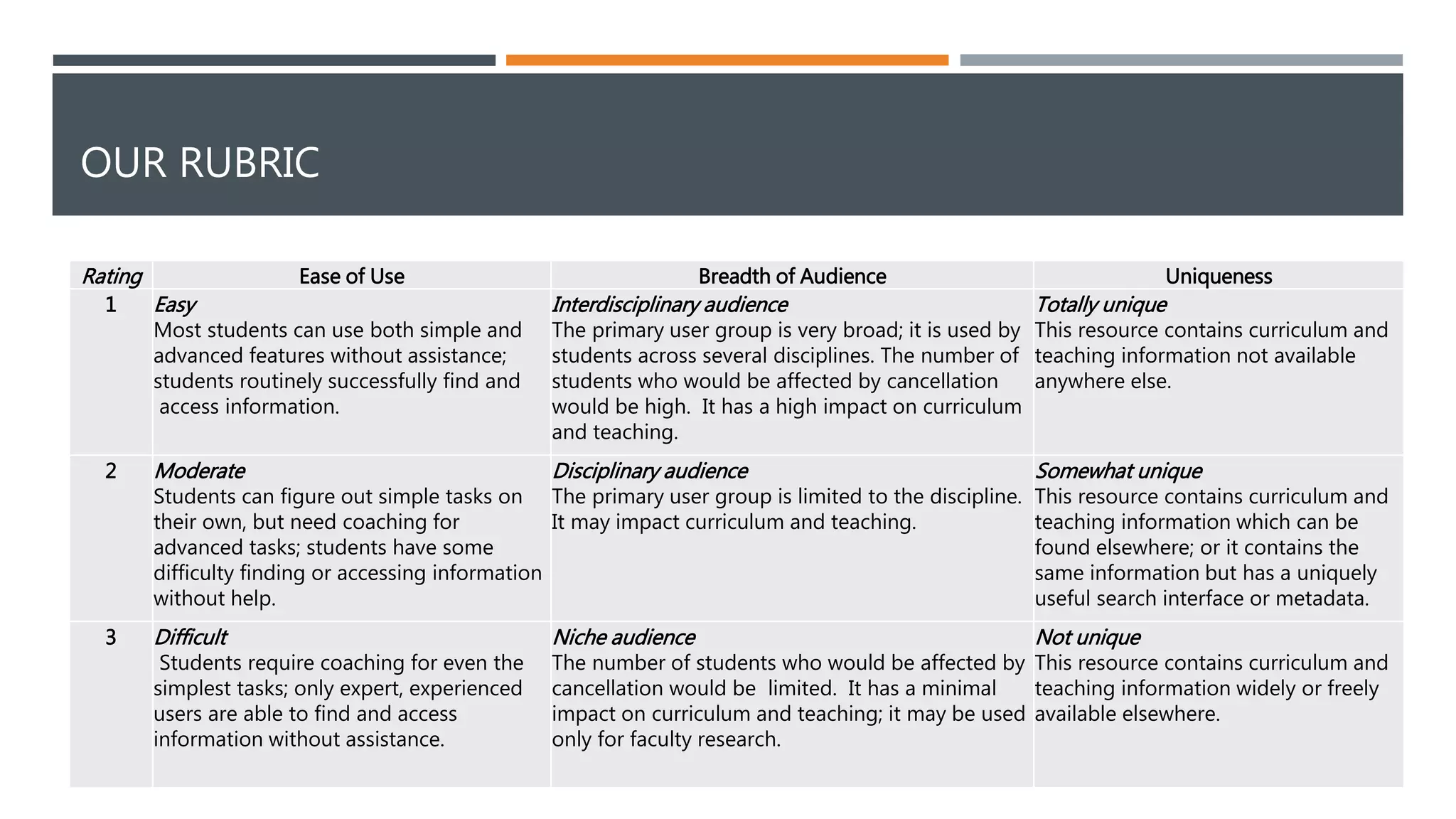

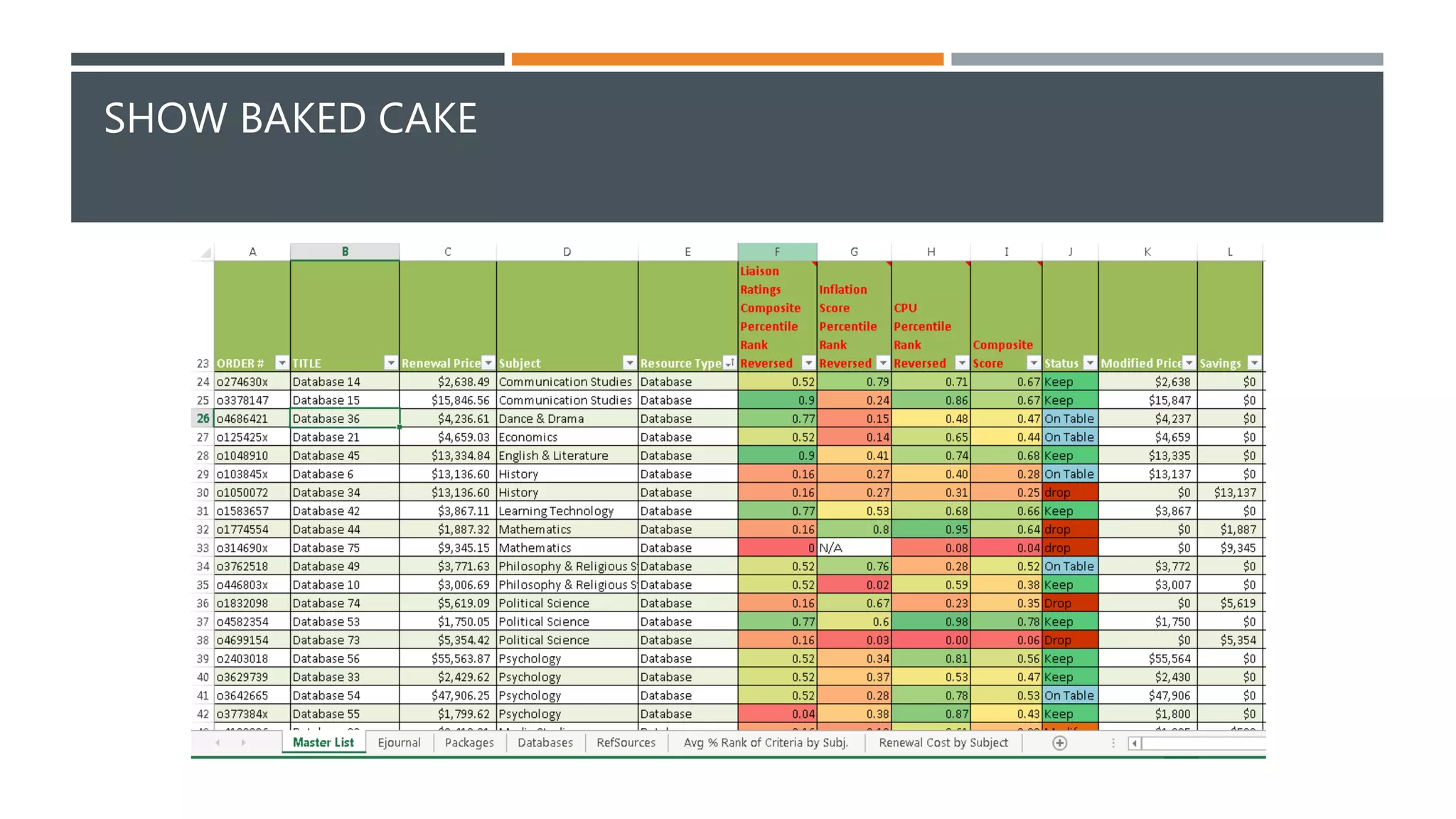

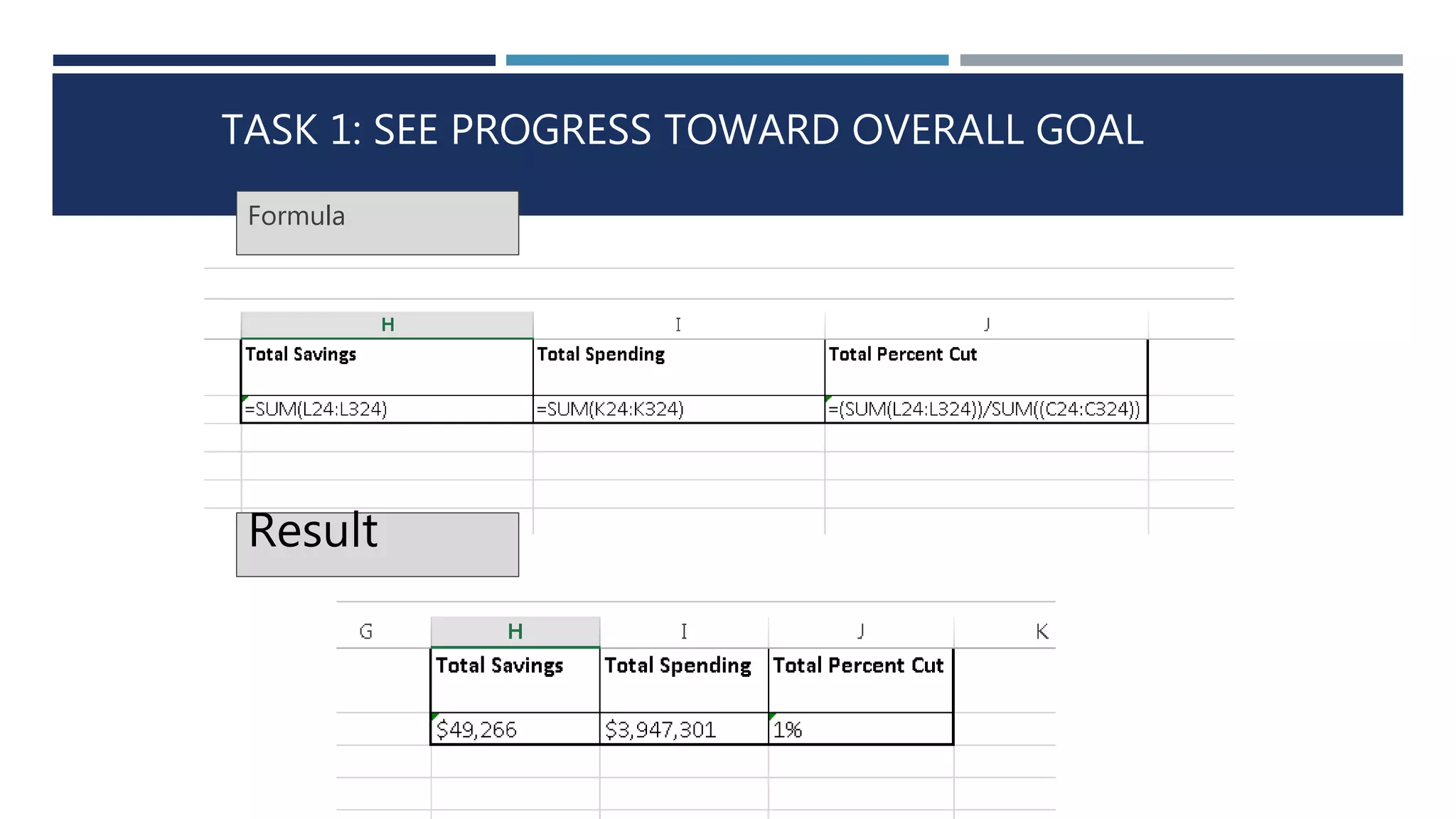

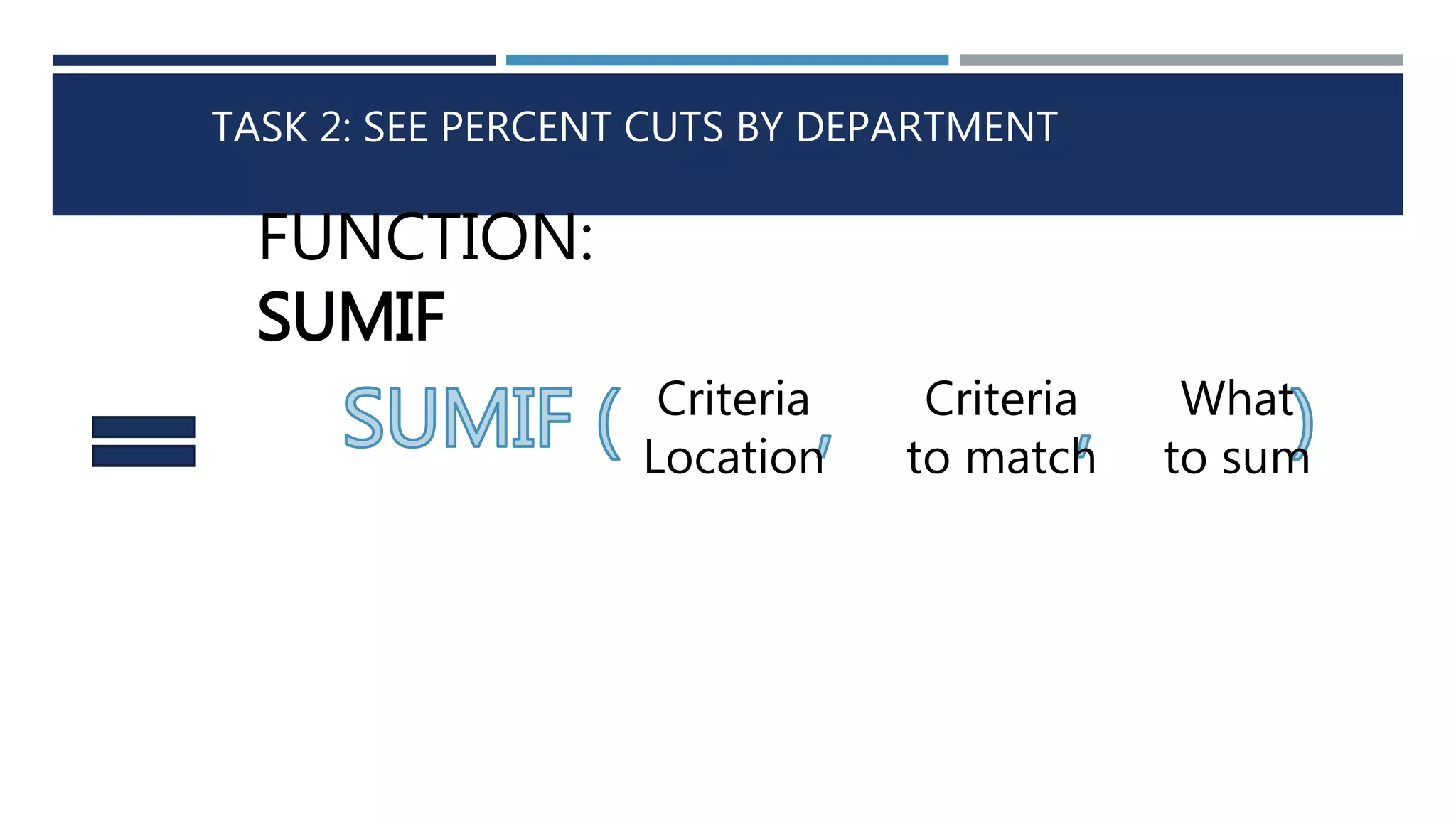

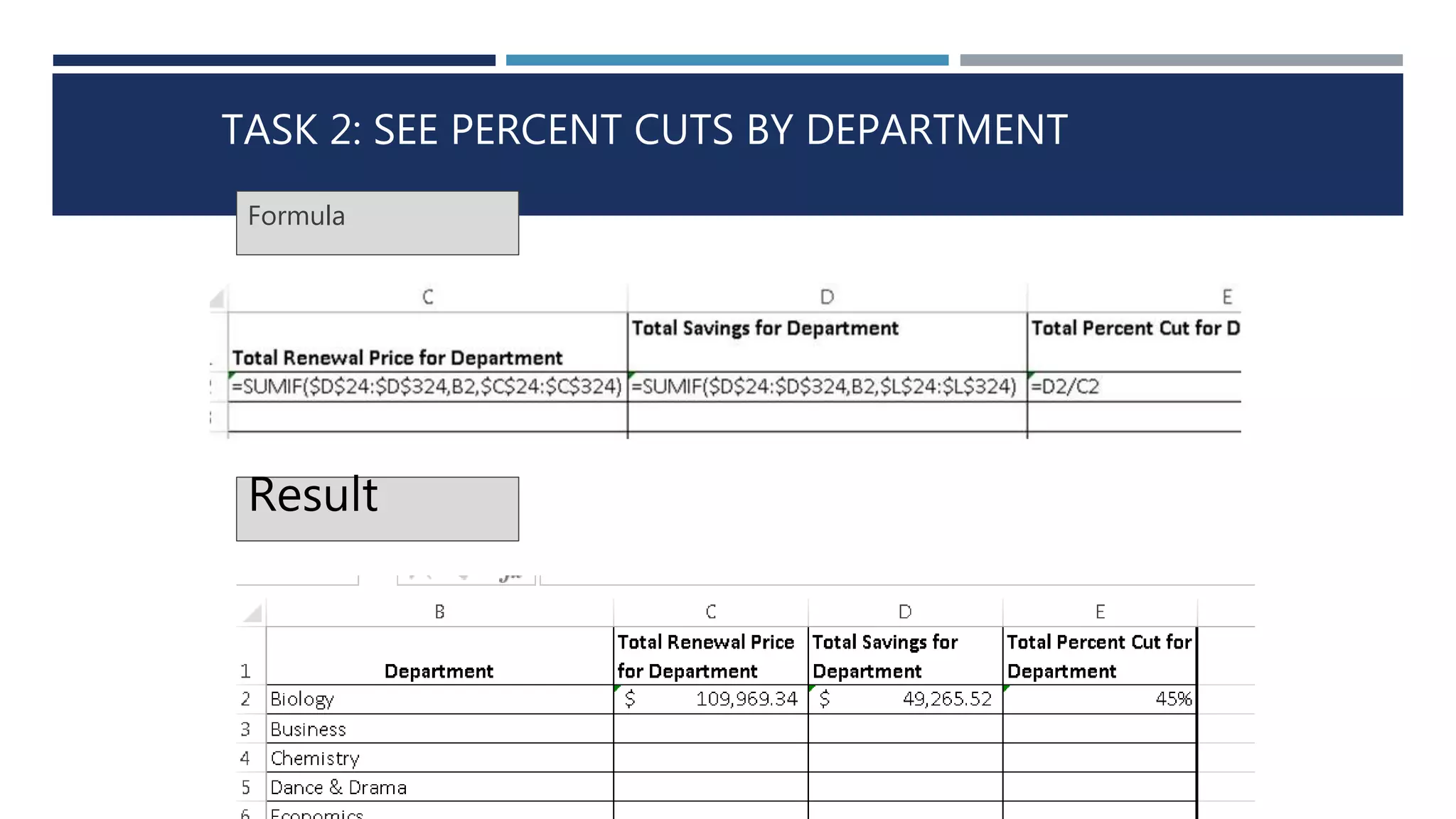

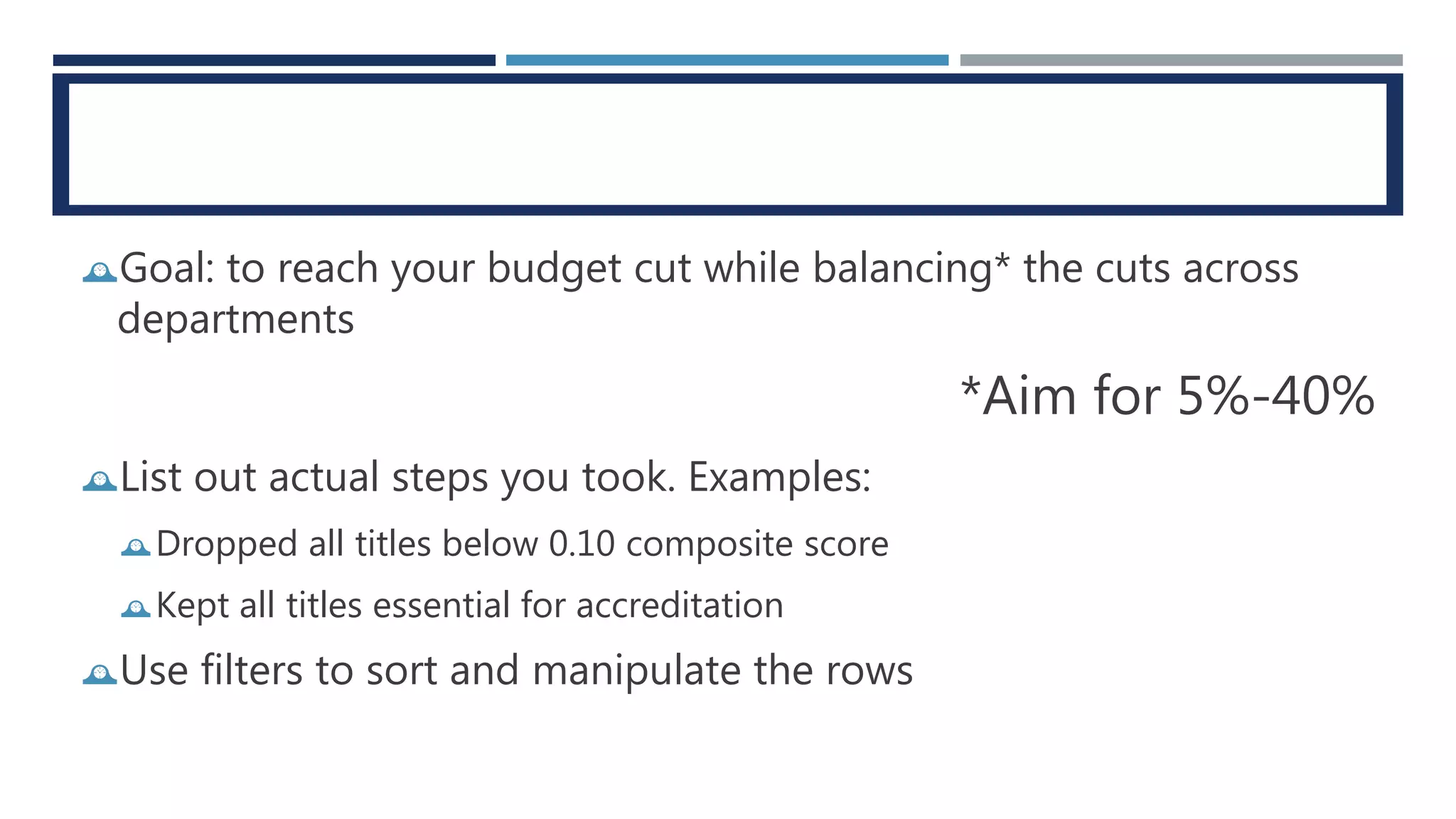

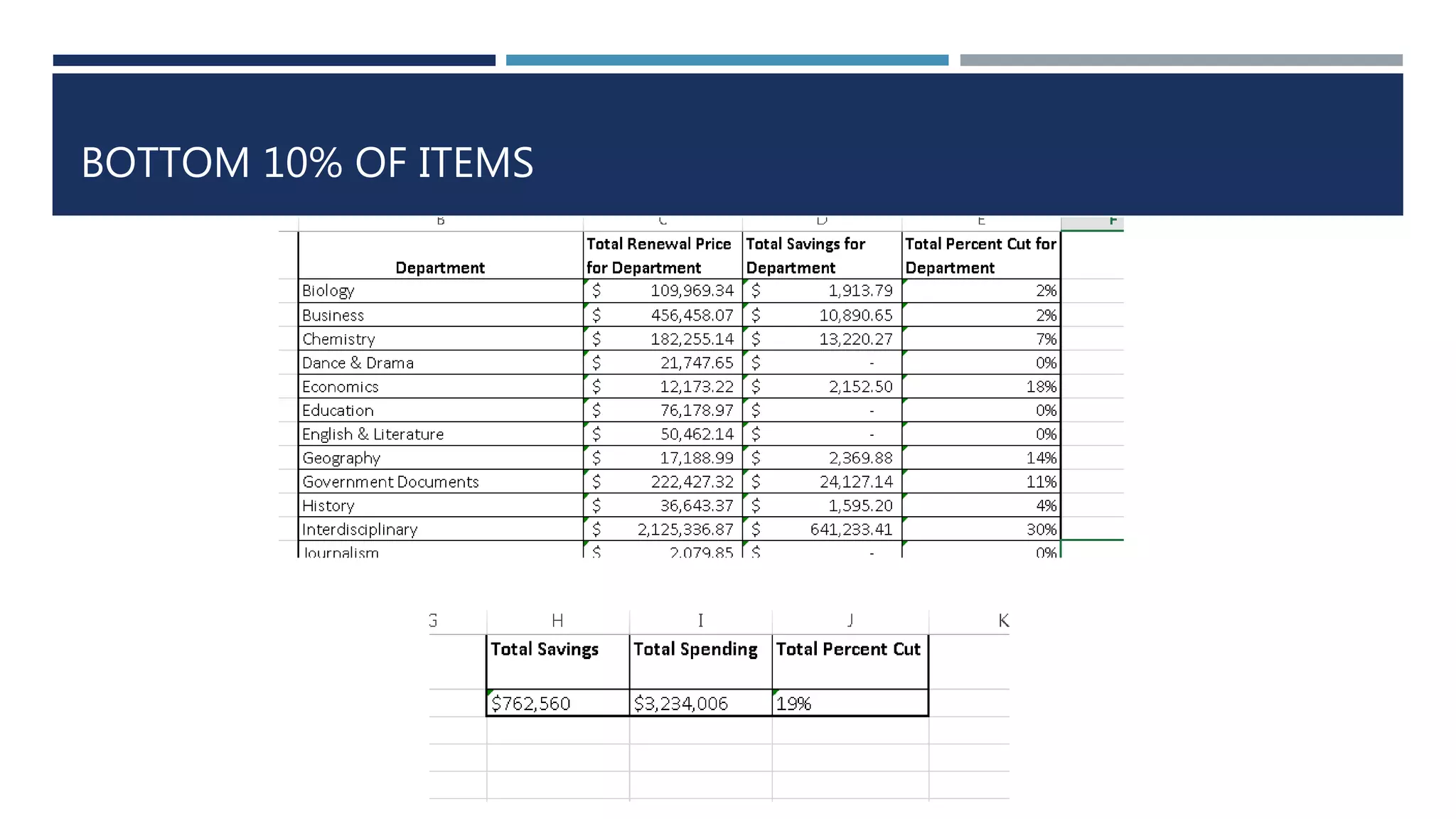

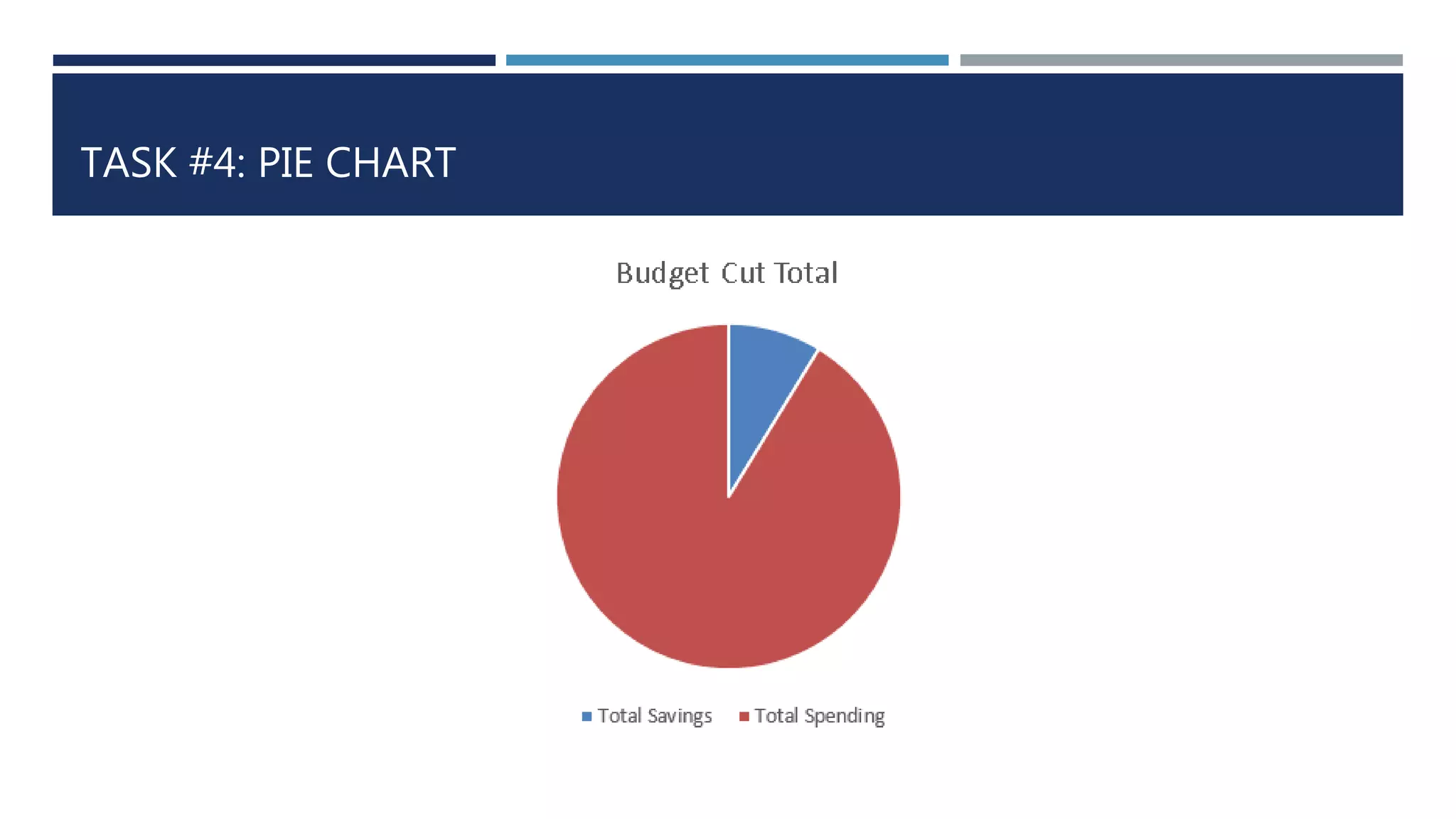

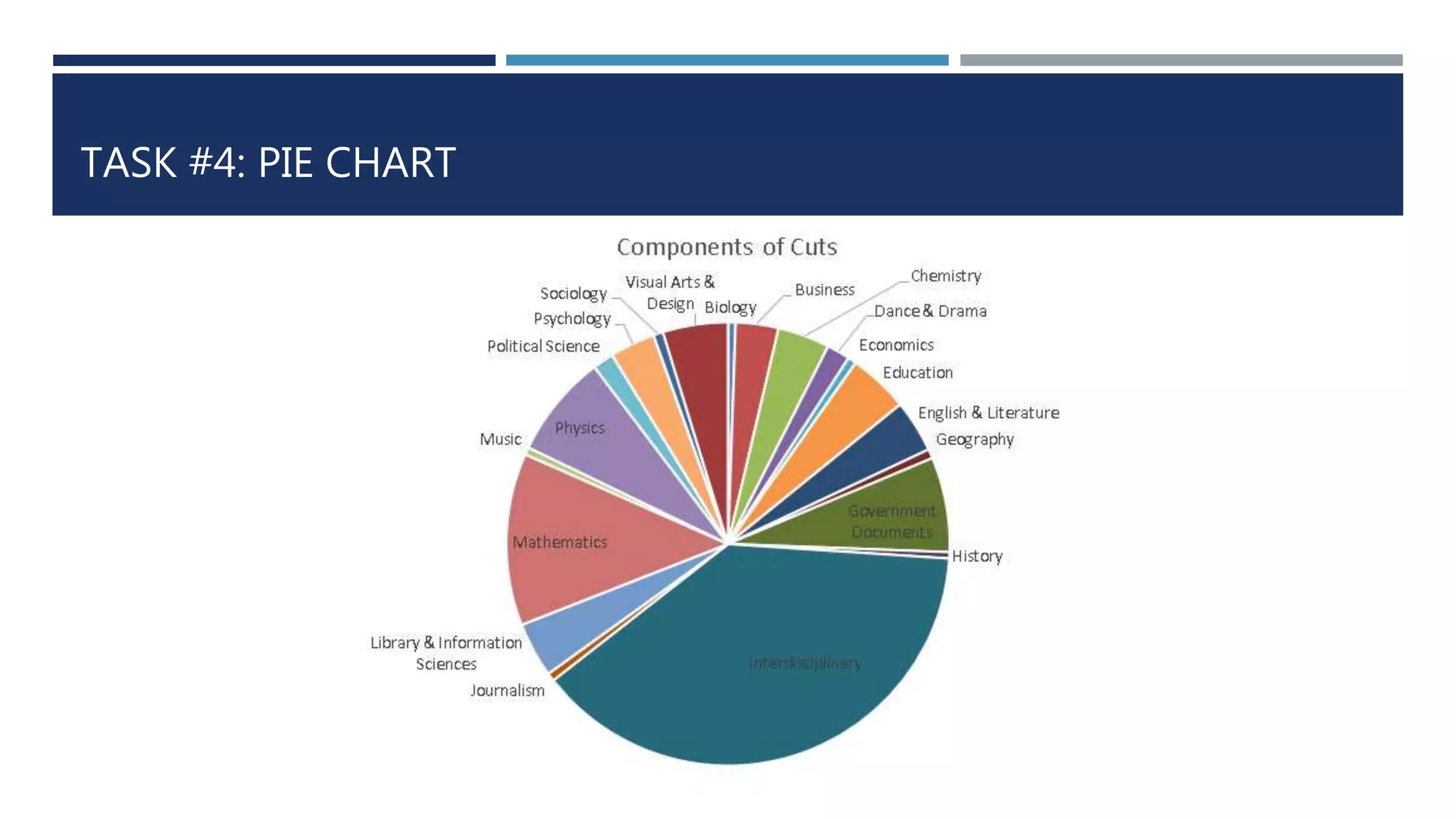

The document outlines a strategy for evaluating electronic resources at university libraries, drawing on the experiences of the University of North Texas (UNT) and the University of Maryland, College Park (UMCP) in managing collections amid budget cuts. It emphasizes data-driven decisions based on criteria such as cost-effectiveness, accessibility, and uniqueness, and it encourages community engagement and transparency in the review process. It also discusses methods of gathering and analyzing data to support future decisions about serial subscriptions and resource management.