John von Neumann

A computer science overview

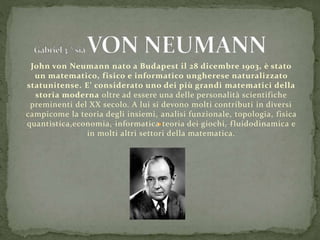

- 1. John von Neumann, born in Budapest on 28 December 1903, was a Hungarian mathematician, physicist and computer scientist who became a naturalized United States citizen. He is regarded as one of the greatest mathematicians of modern history and as one of the preeminent scientific figures of the 20th century. He contributed to many fields, including set theory, functional analysis, topology, quantum physics, economics, computer science, game theory, fluid dynamics and many other areas of mathematics.

- 2. A computer based on the von Neumann architecture is said to follow the "von Neumann model" or, in von Neumann's own term, to be a stored-program computer. In computer science, the von Neumann architecture is a hardware architecture for programmable, stored-program digital computers in which program data and program instructions share the same memory space. This sets it apart from the Harvard architecture, in which program data and program instructions are stored in separate memory spaces. The von Neumann architecture matters because it is the hardware architecture on which most modern programmable computers are based. Considering that it was developed more than seventy years ago, one can appreciate how remarkable the abilities of its designer were.

- 3. The design rests on five fundamental components:
  - CPU (the processing unit), which is in turn divided into an operating unit, one of whose most important subsystems is the arithmetic logic unit (ALU), and a control unit
  - Memory unit, meaning working memory or main memory (RAM, Random Access Memory)
  - Input unit, through which data enters the computer to be processed
  - Output unit, needed to return the processed data to the operator
  - Bus, a channel that connects all the components to one another

  Inside the ALU there is a register called the accumulator, which acts as a bridge between input and output thanks to special instructions that load a word from memory into the accumulator and vice versa. It is worth stressing that this architecture, unlike others, stores both the data of running programs and the code of those programs in the same memory unit. This is a very compact schematization, yet a very powerful one: modern general-purpose computers are designed according to the von Neumann architecture. Indeed, it governs not only the arrangement of the major units but the entire internal logical architecture of these machines, that is, the layout of the logic gates, at least at the elementary level on which later developments were built. Furthermore, "memory unit" here means primary memory, while mass storage devices are treated as I/O devices. The reason is partly historical: in the 1940s, when this architecture originated, the technology gave no hint of devices such as hard disks, CD-ROMs, DVD-ROMs or even magnetic tapes. It is also technical: the data to be processed must in any case be loaded into RAM, whether it comes from the keyboard or from a hard disk.

- 4. The computer is an extremely versatile tool that can be used in virtually every field. Cost rises with the power of the system, while ease of use tends to fall. Manufacturers have therefore distinguished several categories of computer, each suited to particular needs. Classes of computer: supercomputer, mainframe, minicomputer, workstation, personal computer, notebook, network computer.
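
The stored-program idea from slides 2 and 3 can be sketched as a toy accumulator machine. This is only an illustration under stated assumptions: the opcodes `LOAD`, `ADD`, `STORE` and `HALT`, the `run` function and the memory layout are all invented for this example and do not correspond to any real instruction set. What it shows is a single memory holding both instructions and data, and an accumulator that moves words to and from that memory.

```python
def run(memory):
    """Run a toy accumulator machine until HALT and return the final memory.

    Hypothetical machine: each instruction is an (opcode, address) tuple,
    and instructions and data live in the same list (one shared memory).
    """
    memory = list(memory)      # work on a copy so the caller's list is untouched
    accumulator = 0            # the register that bridges memory and the ALU
    program_counter = 0        # address of the next instruction to fetch

    while True:
        opcode, operand = memory[program_counter]   # fetch and decode
        program_counter += 1
        if opcode == "LOAD":       # memory -> accumulator
            accumulator = memory[operand]
        elif opcode == "ADD":      # ALU operation on the accumulator
            accumulator += memory[operand]
        elif opcode == "STORE":    # accumulator -> memory
            memory[operand] = accumulator
        elif opcode == "HALT":
            return memory
        else:
            raise ValueError(f"unknown opcode: {opcode}")


# Addresses 0-3 hold instructions, addresses 4-6 hold data.
program = [
    ("LOAD", 4),
    ("ADD", 5),
    ("STORE", 6),
    ("HALT", 0),
    2,   # address 4: first operand
    3,   # address 5: second operand
    0,   # address 6: result
]

result = run(program)
print(result[6])  # 5
```

Because code and data share one list here, a `STORE` aimed at addresses 0 to 3 would overwrite an instruction. A Harvard-style variant of the same sketch would instead keep the instruction tuples and the data words in two separate lists.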