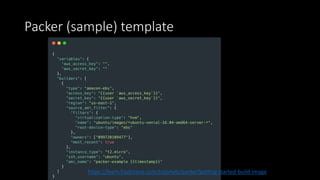

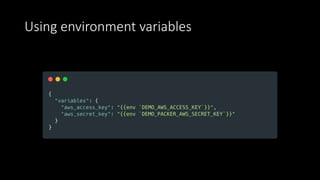

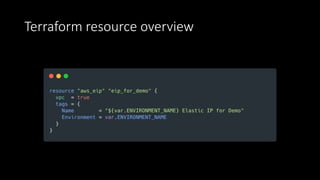

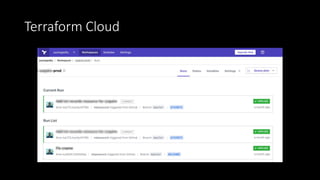

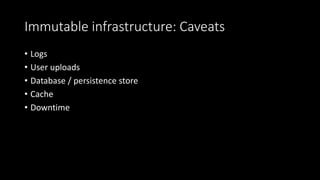

The document discusses implementing immutable infrastructure with Packer, Ansible, and Terraform to address the difficulty of staying confident about the actual state of production servers. It outlines the characteristics of immutable servers, the deployment process, and the benefits of Infrastructure as Code (IaC). It also explains how these tools fit together and presents a sample project that demonstrates them in use.
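The core idea behind immutable servers can be illustrated without any real tooling. The sketch below is a hypothetical Python model, not the document's sample project and not the API of Packer, Ansible, or Terraform. It assumes the usual division of labour: an image is built and configured once (the role Packer with an Ansible provisioner typically plays), and a deployment replaces every server with fresh ones launched from a new image (the role Terraform typically plays) instead of modifying servers in place. The names `Image`, `Server`, `bake_image`, and `deploy` are invented for illustration.

```python
from dataclasses import dataclass
from typing import Iterable, Tuple


@dataclass(frozen=True)
class Image:
    """A versioned machine image (hypothetical model), built once and never changed."""
    version: str
    packages: Tuple[str, ...]


@dataclass(frozen=True)
class Server:
    """A running server (hypothetical model); frozen, so its image cannot be swapped in place."""
    server_id: int
    image: Image


def bake_image(version: str, packages: Iterable[str]) -> Image:
    # All configuration happens here, at build time (assumed to correspond to
    # the Packer + Ansible step), never on a live server.
    return Image(version=version, packages=tuple(sorted(packages)))


def deploy(fleet: Tuple[Server, ...], image: Image, size: int) -> Tuple[Server, ...]:
    # Launch a brand-new fleet from `image` (assumed to correspond to the
    # Terraform step). The old fleet is returned untouched by this function,
    # so it can be destroyed afterwards or kept around for a rollback.
    start = max((s.server_id for s in fleet), default=0) + 1
    return tuple(Server(server_id=start + i, image=image) for i in range(size))


if __name__ == "__main__":
    v1 = bake_image("v1", ["nginx"])
    old_fleet = deploy((), v1, size=2)
    v2 = bake_image("v2", ["nginx", "certbot"])
    new_fleet = deploy(old_fleet, v2, size=2)
    print([(s.server_id, s.image.version) for s in old_fleet])  # [(1, 'v1'), (2, 'v1')]
    print([(s.server_id, s.image.version) for s in new_fleet])  # [(3, 'v2'), (4, 'v2')]
```

Because both classes are frozen dataclasses, an attempt such as `old_fleet[0].image = v2` raises `dataclasses.FrozenInstanceError`. The only way to change what runs is to bake a new image and deploy a new fleet, which is what keeps the state of production predictable in this model.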