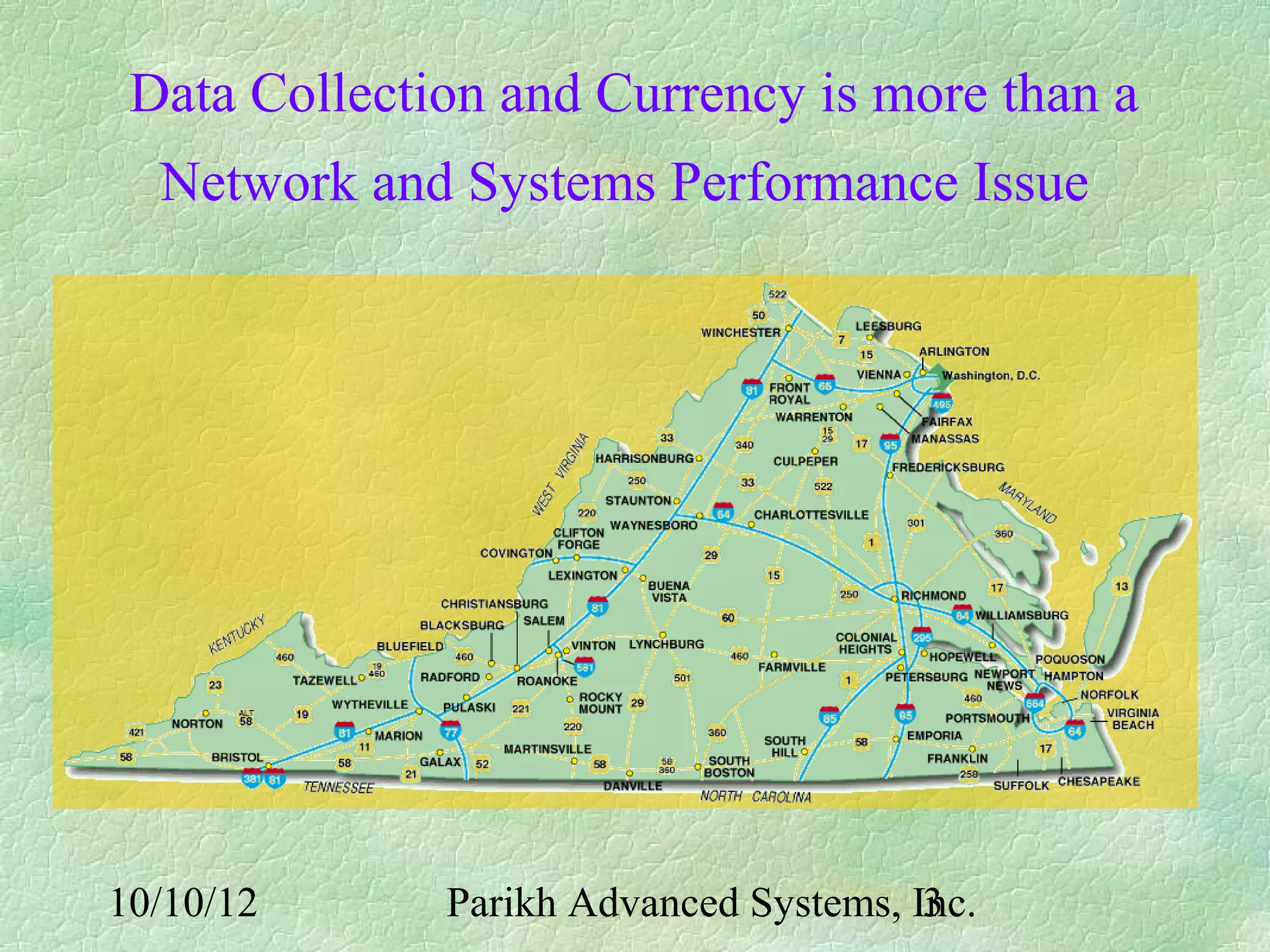

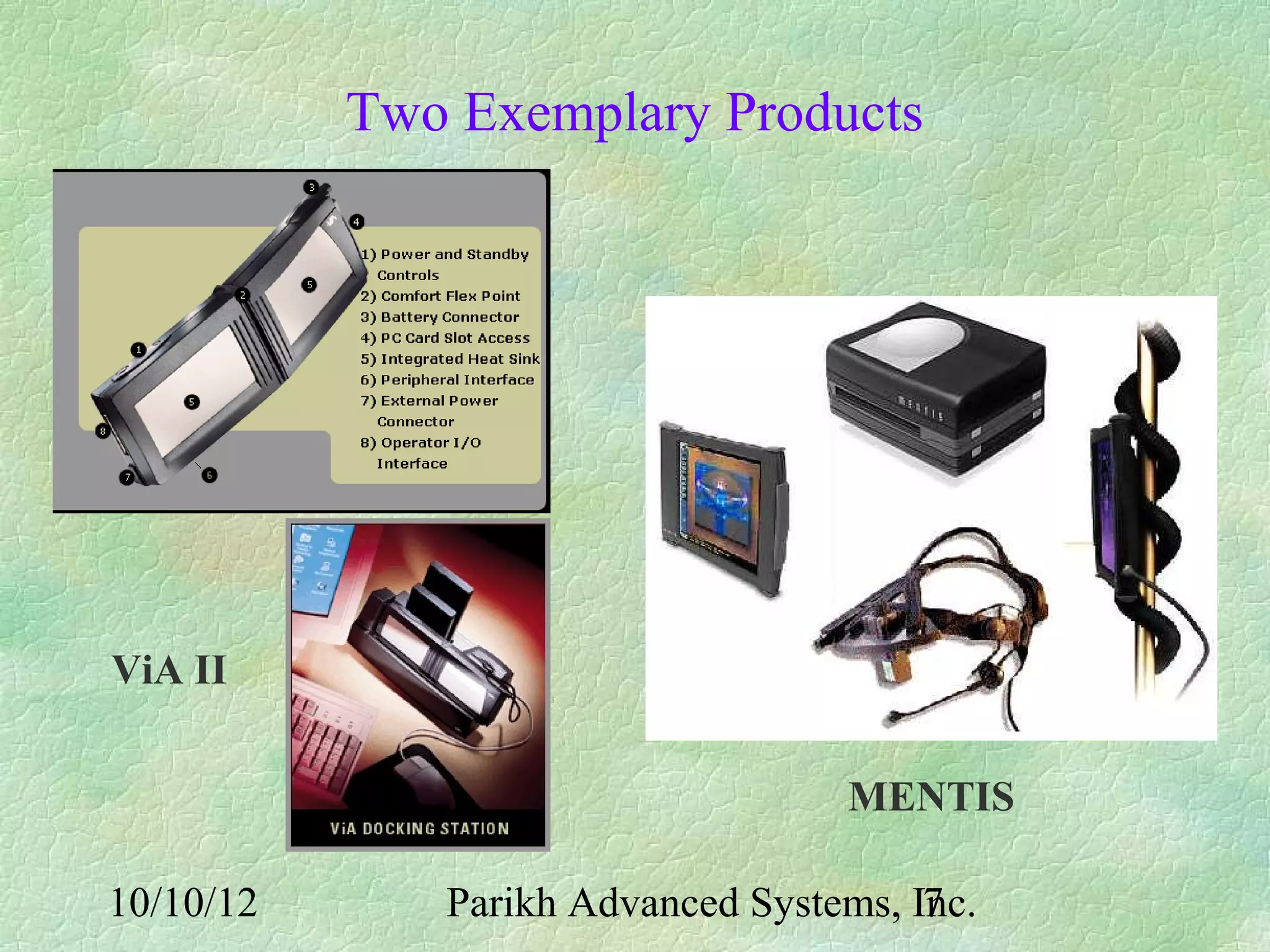

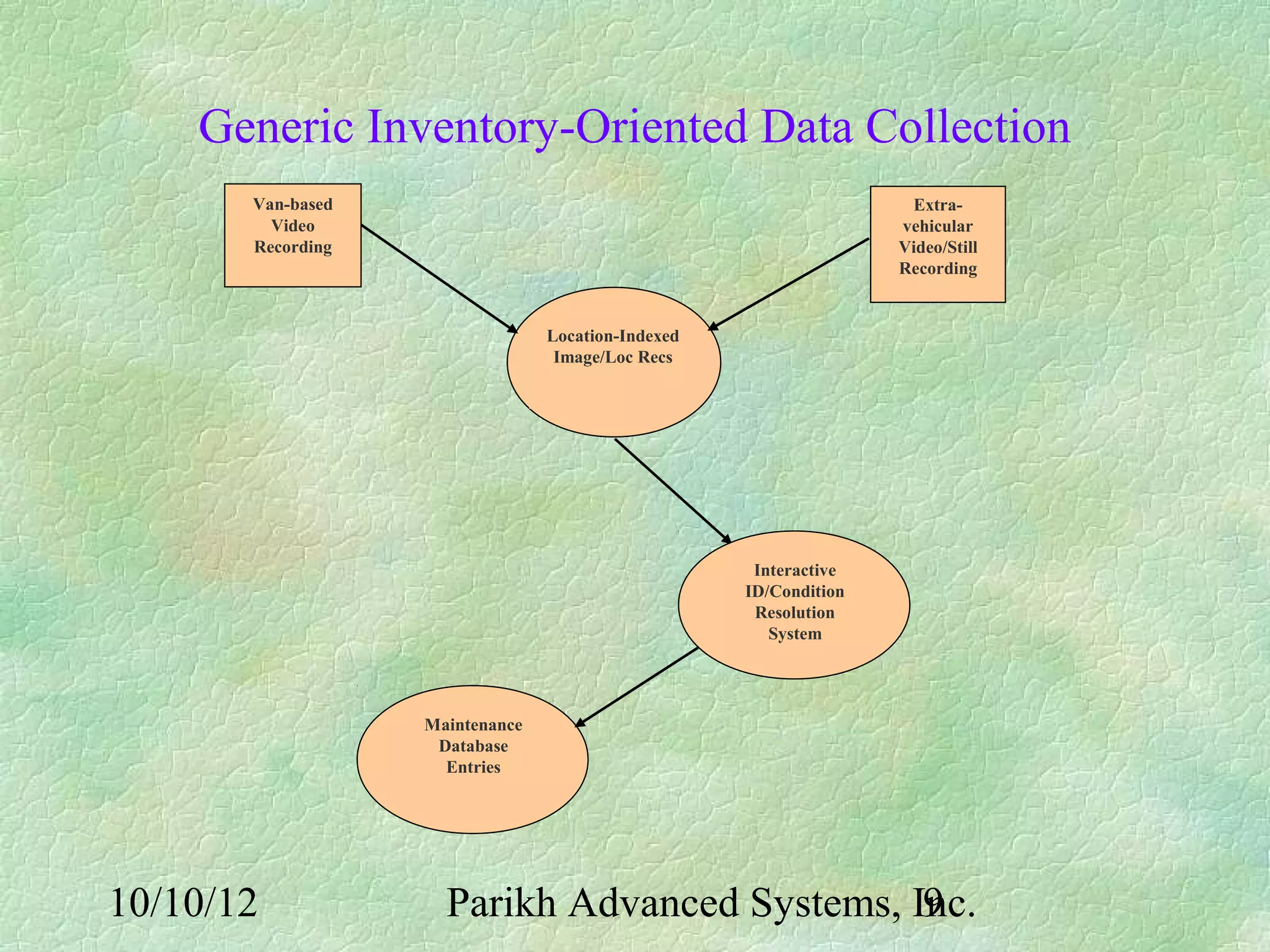

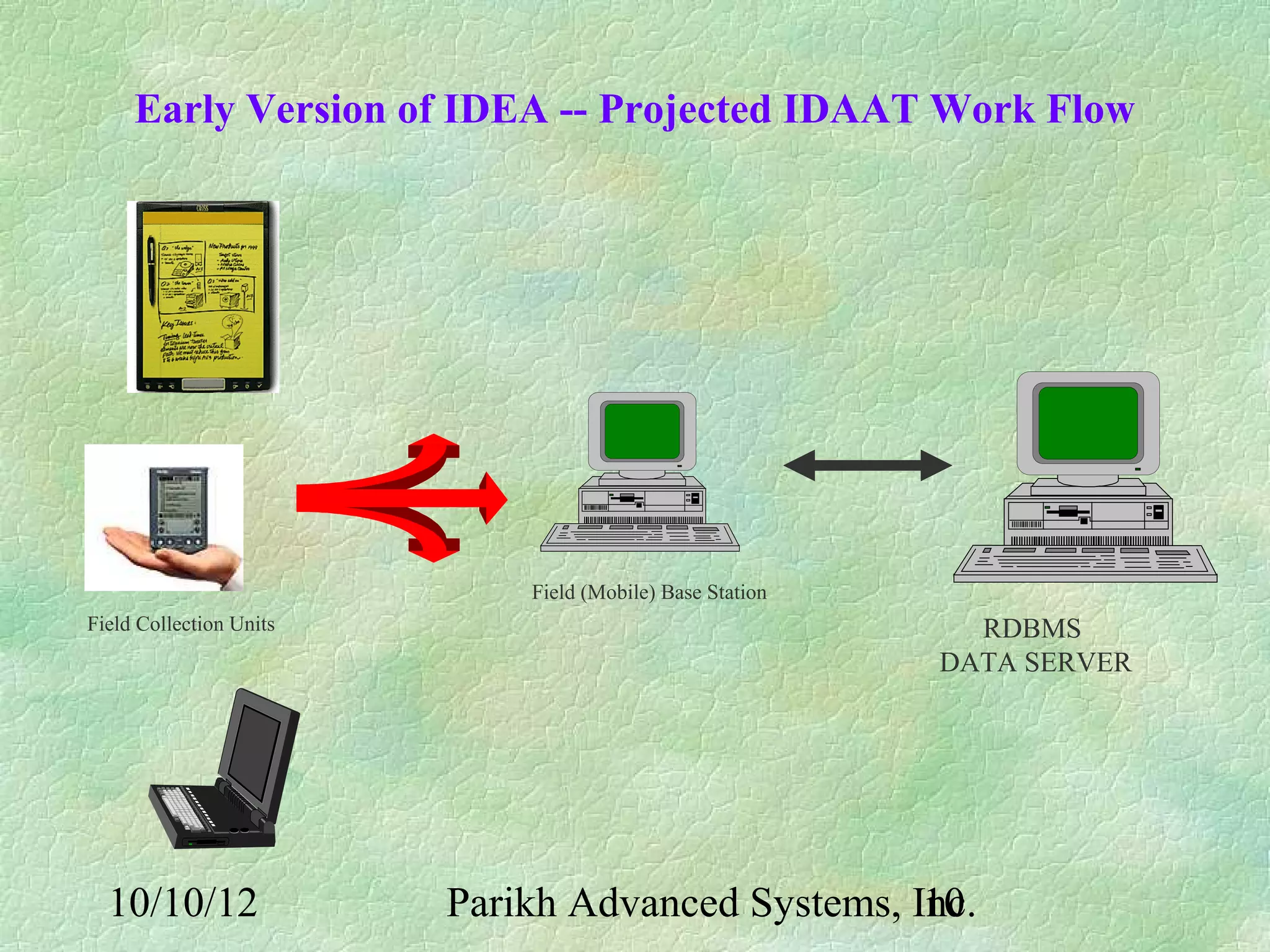

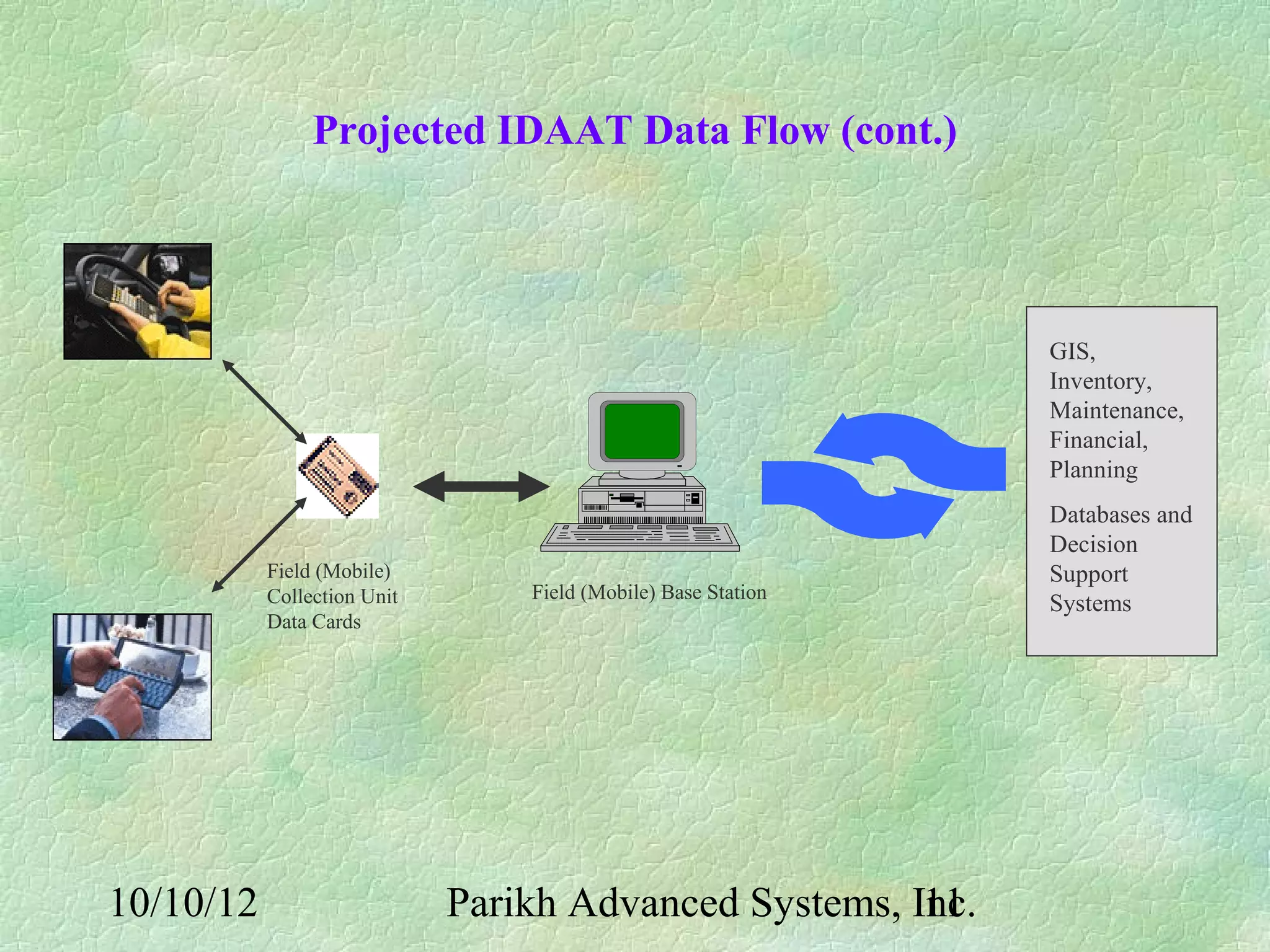

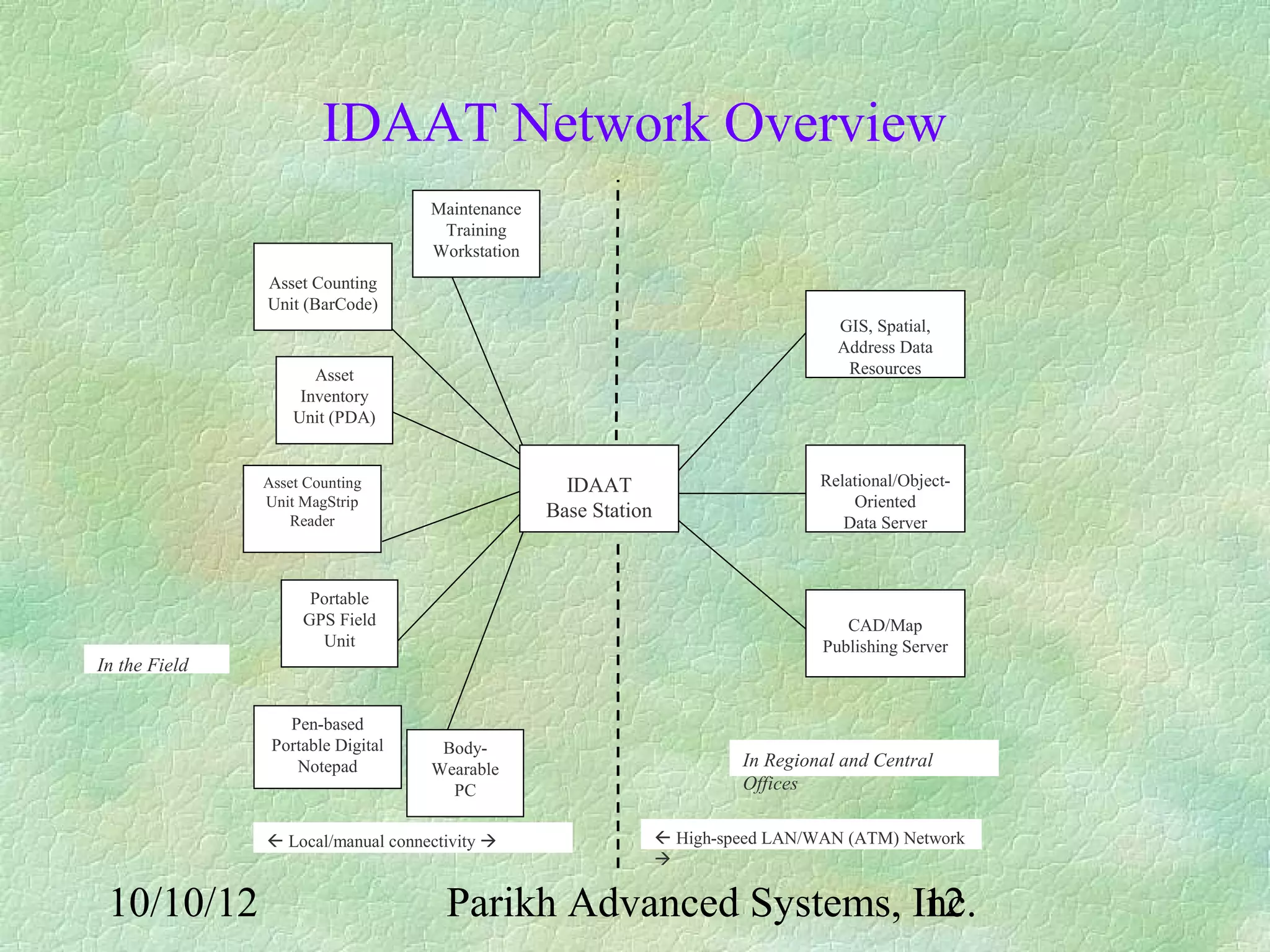

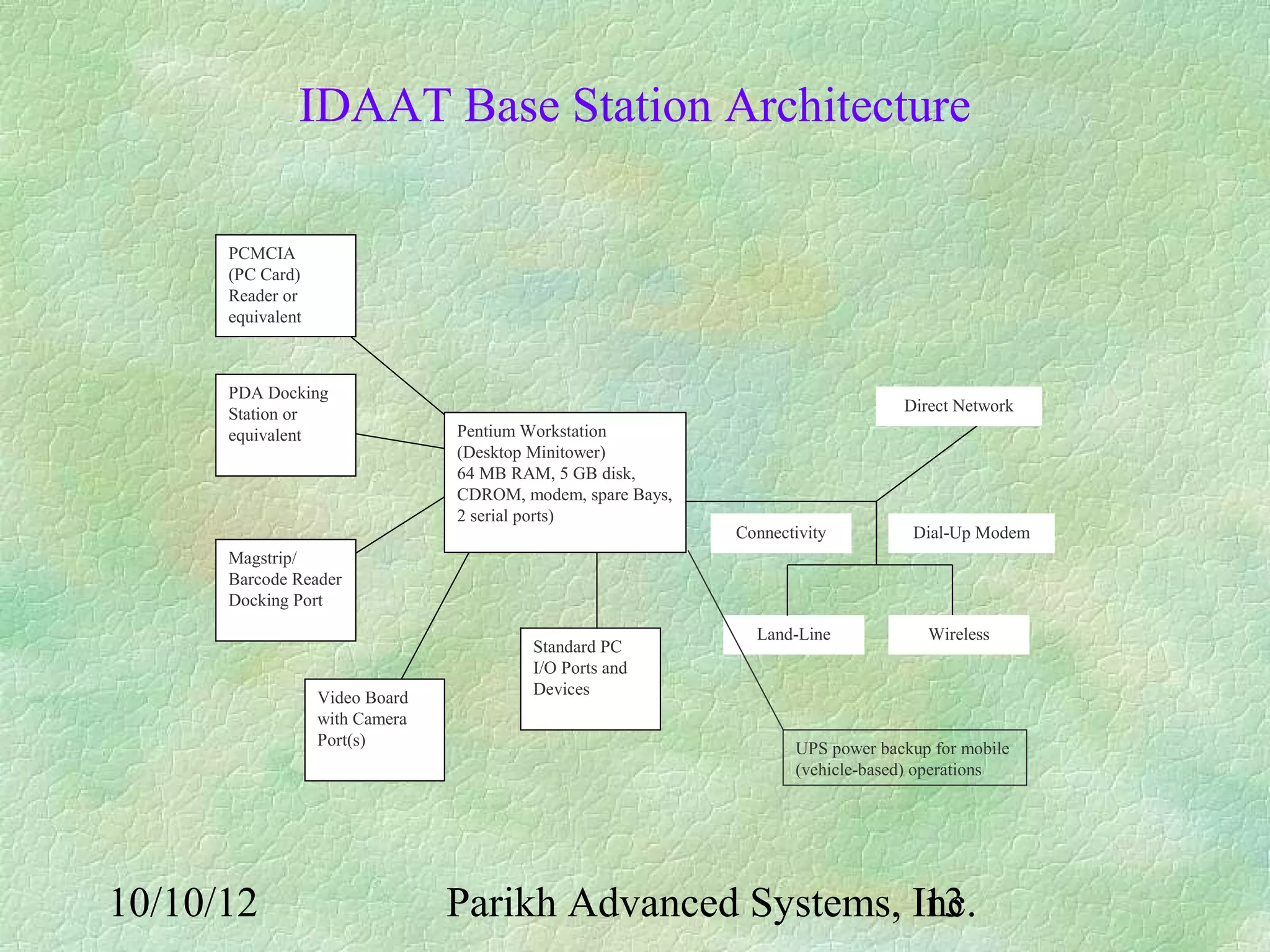

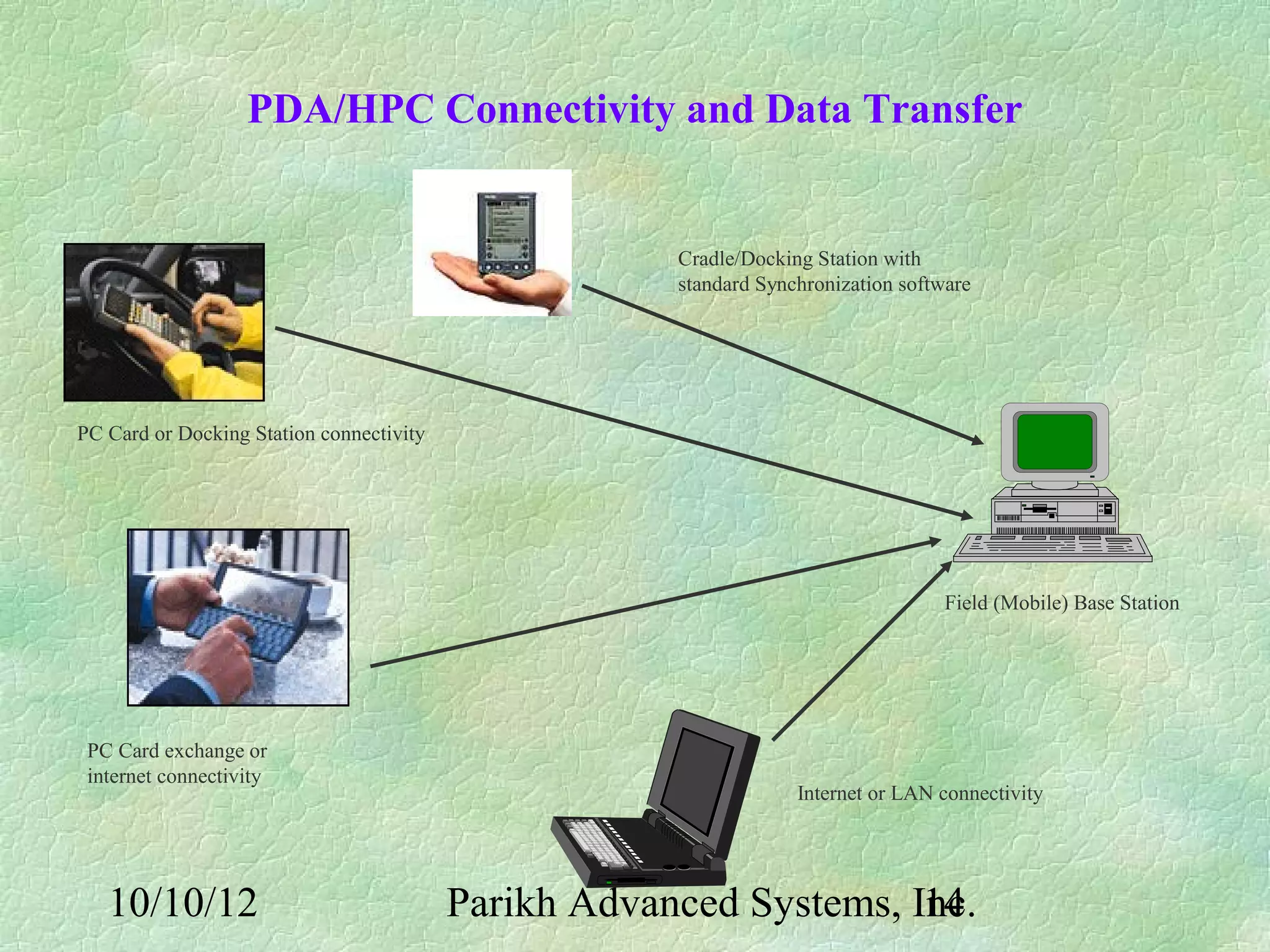

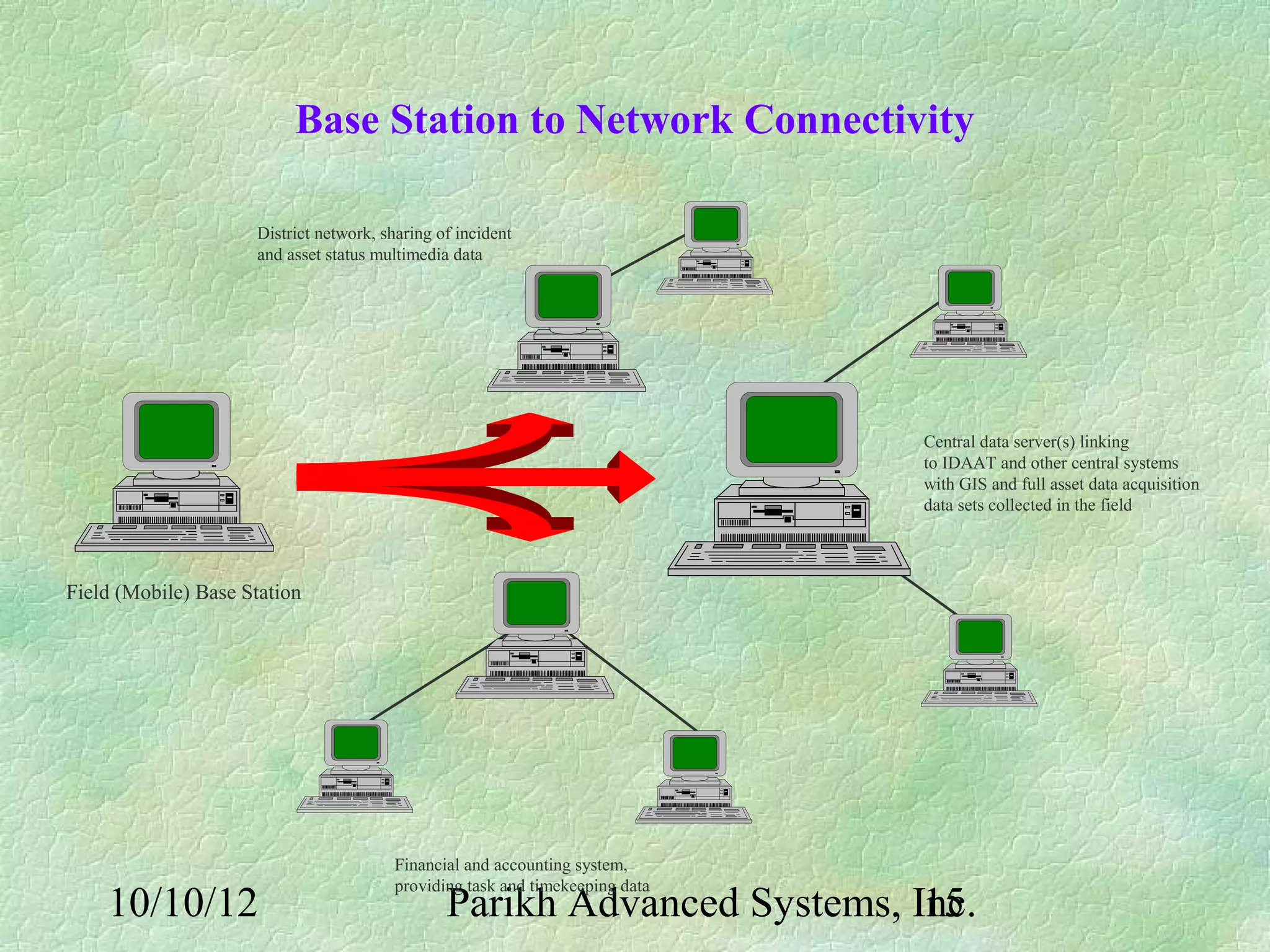

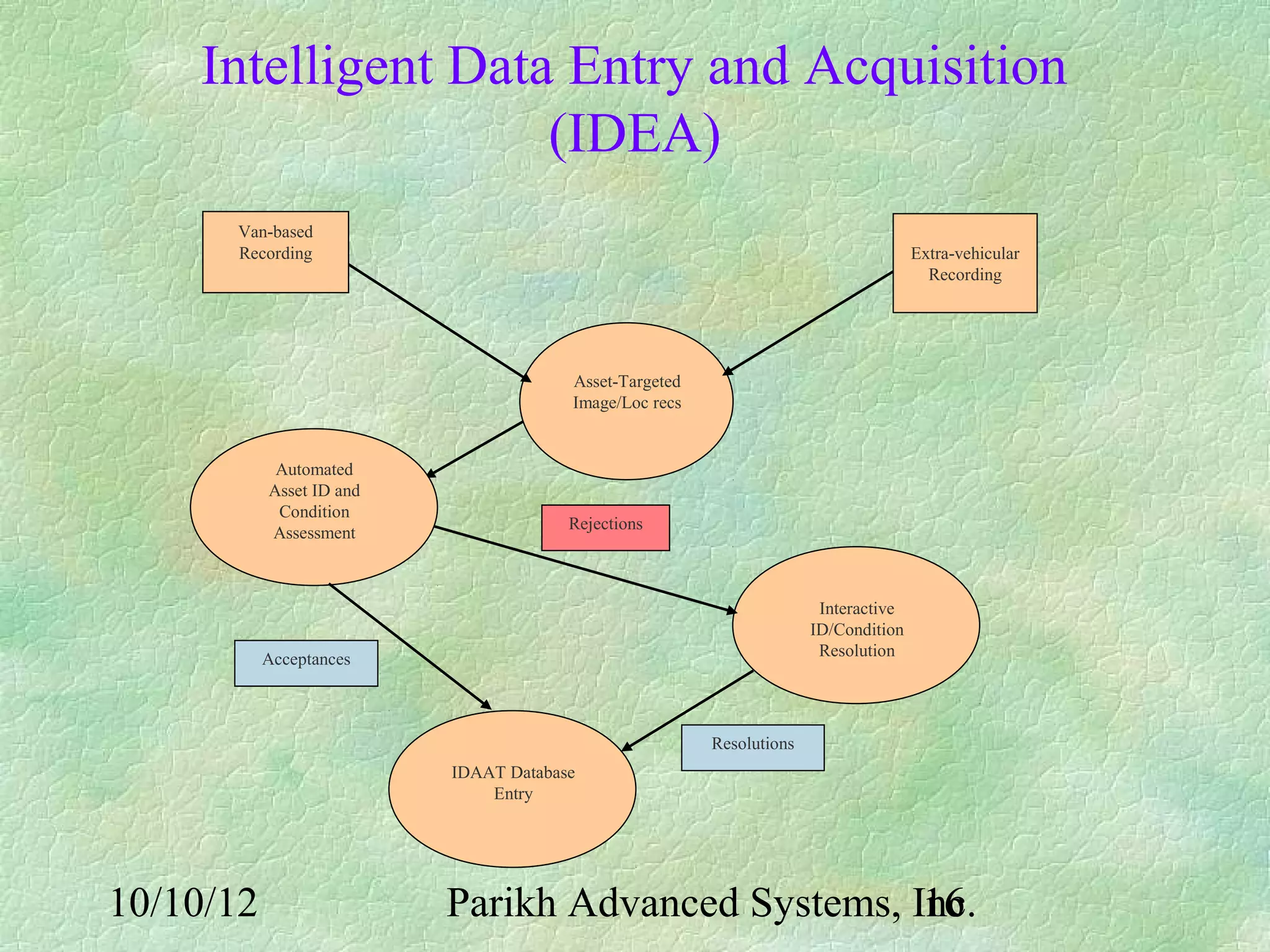

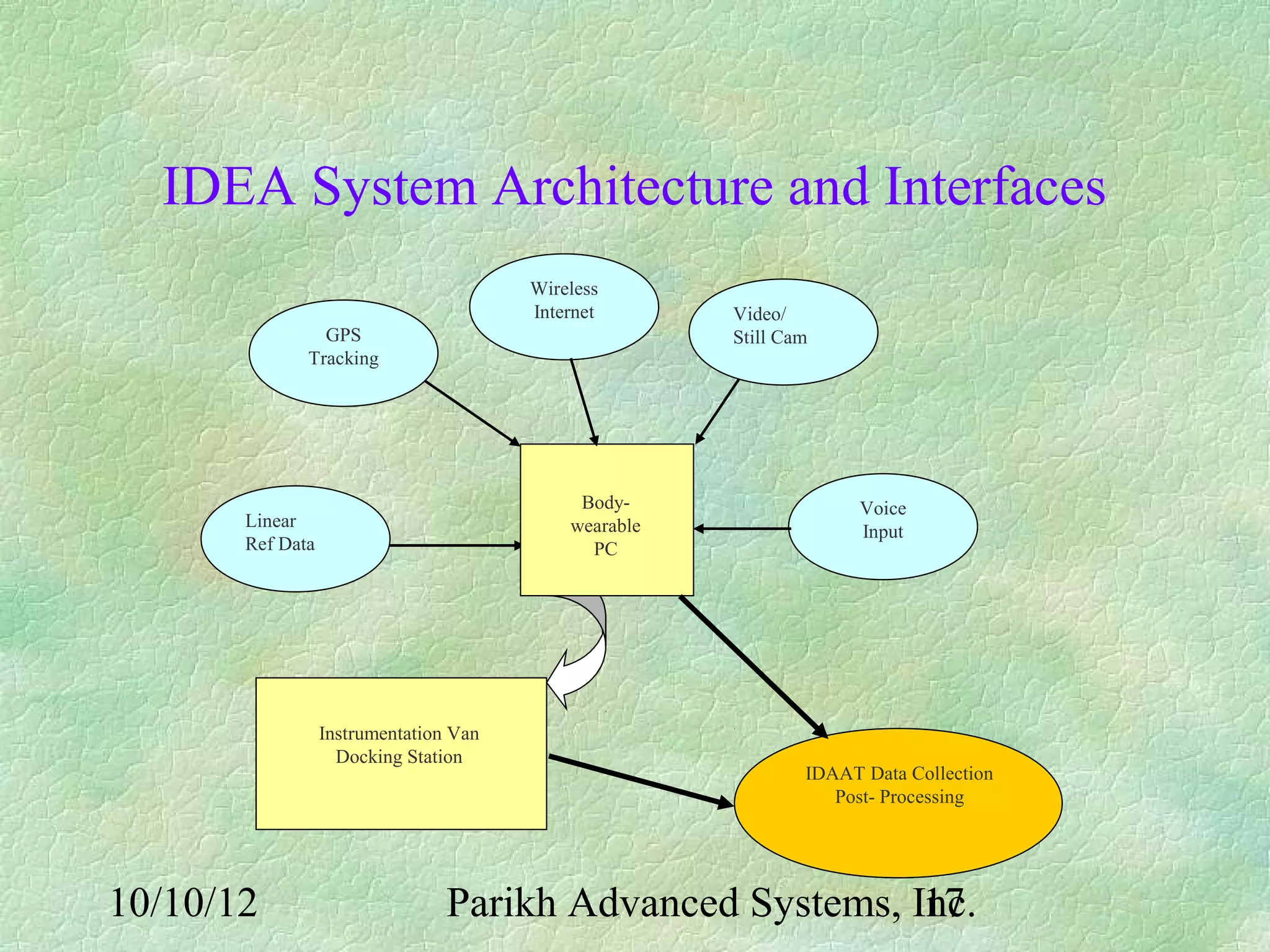

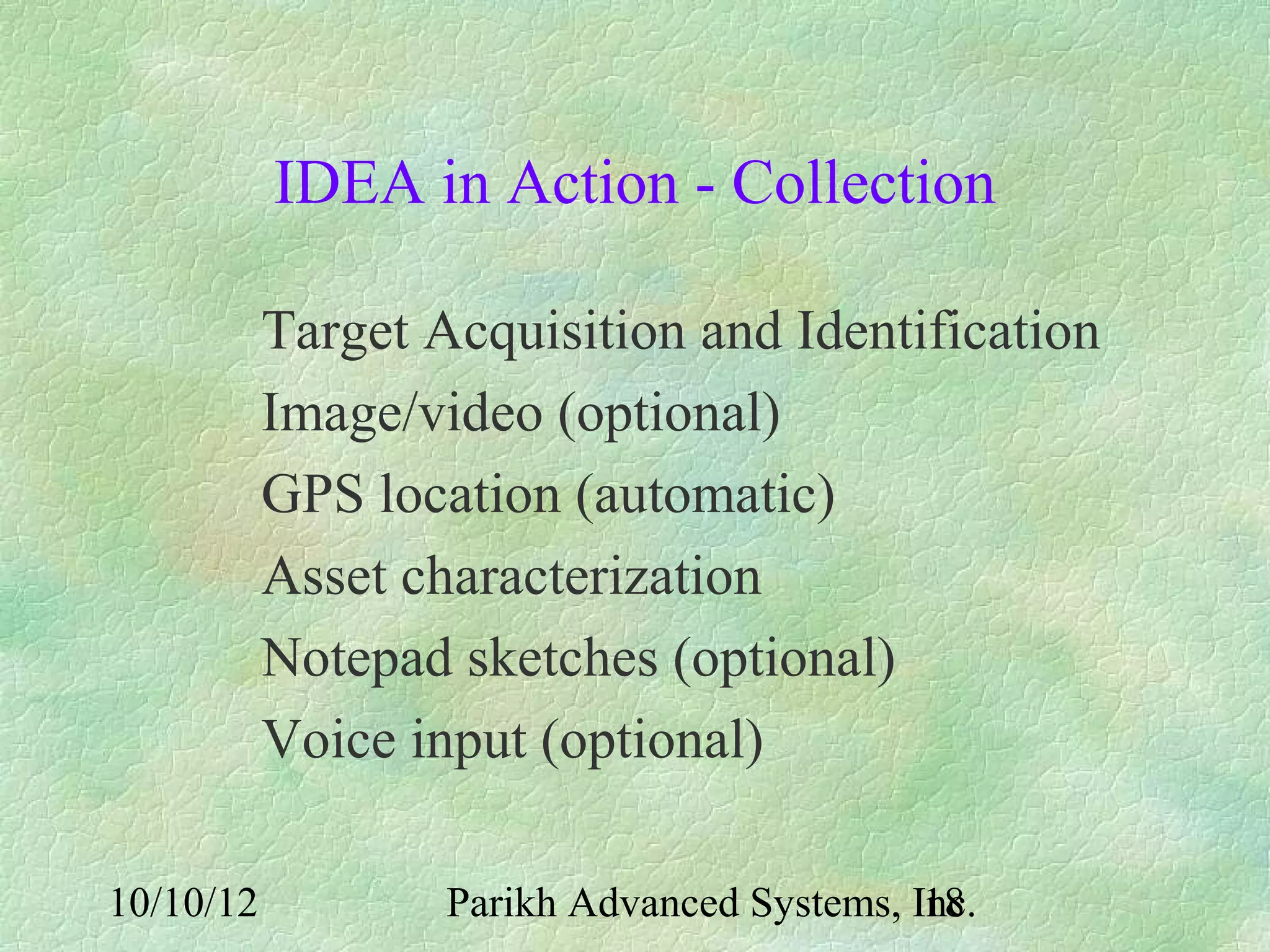

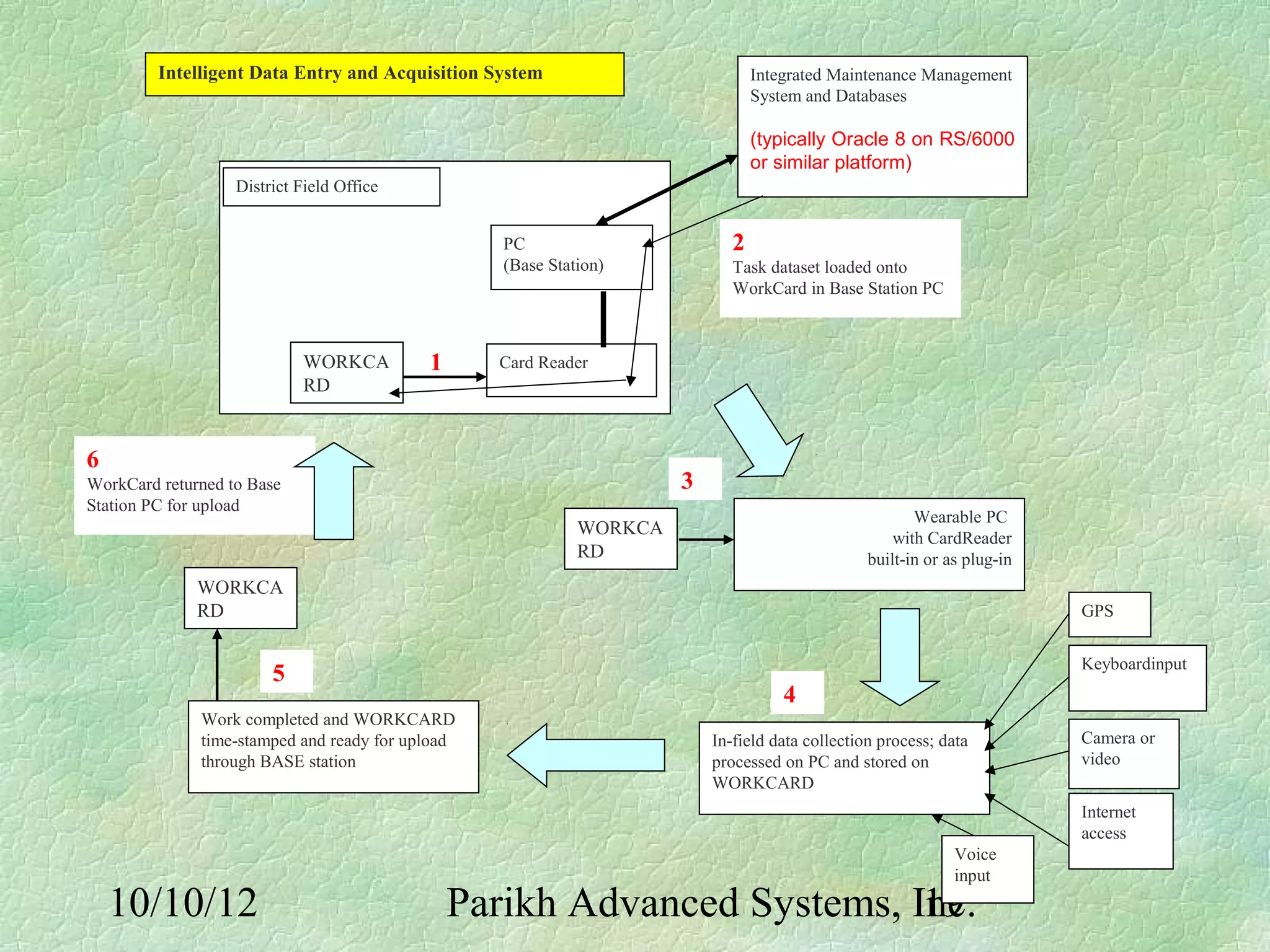

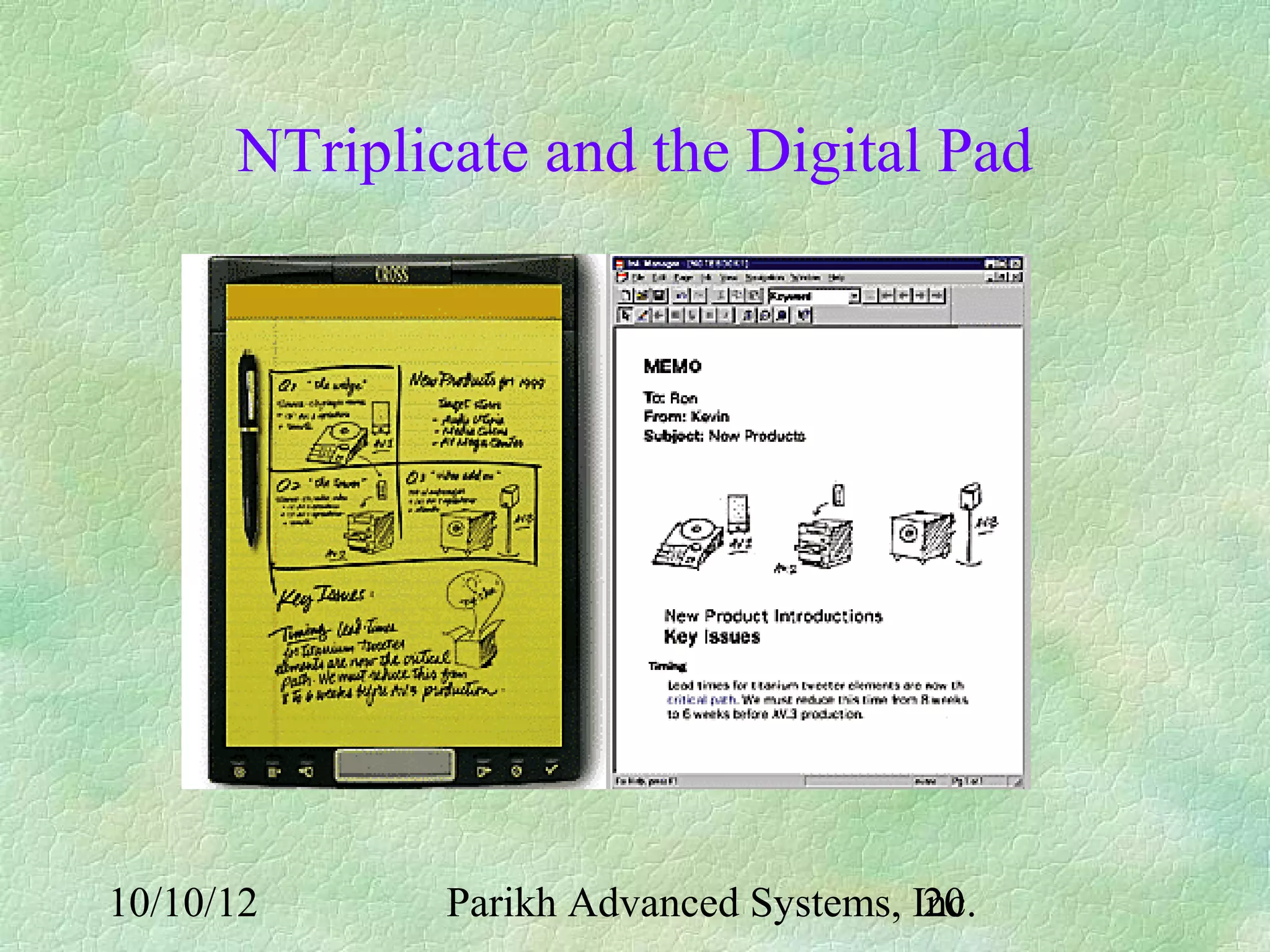

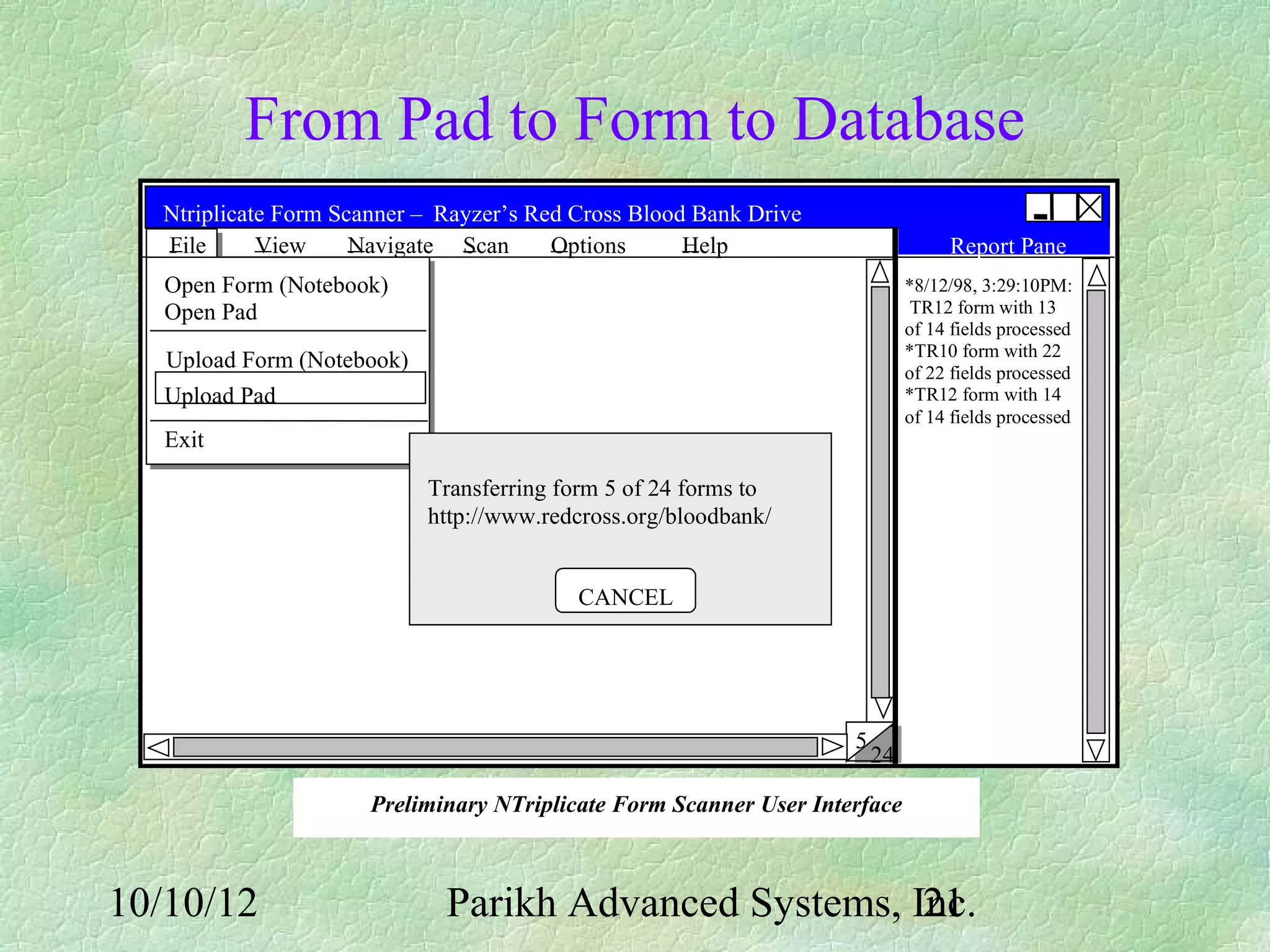

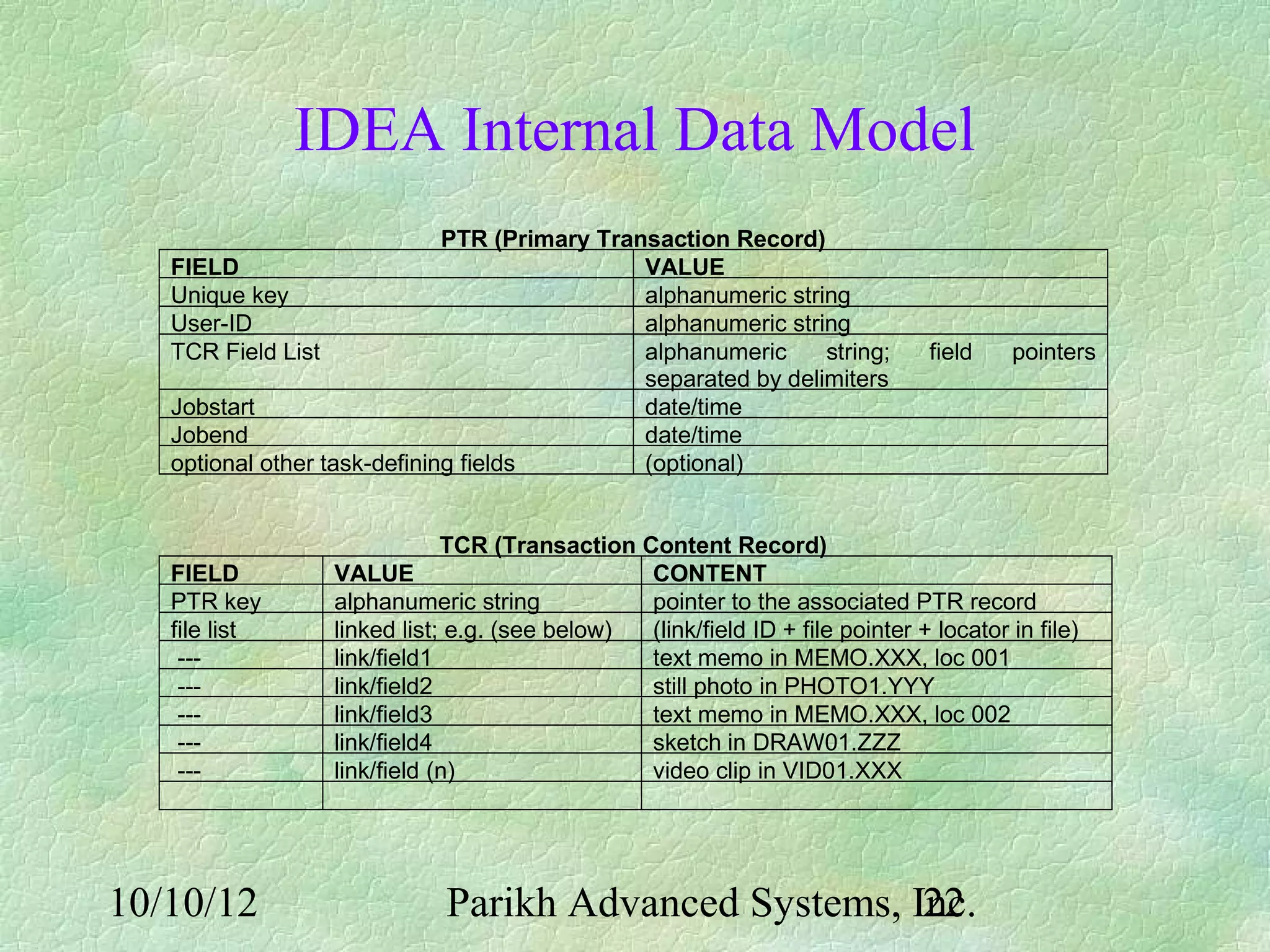

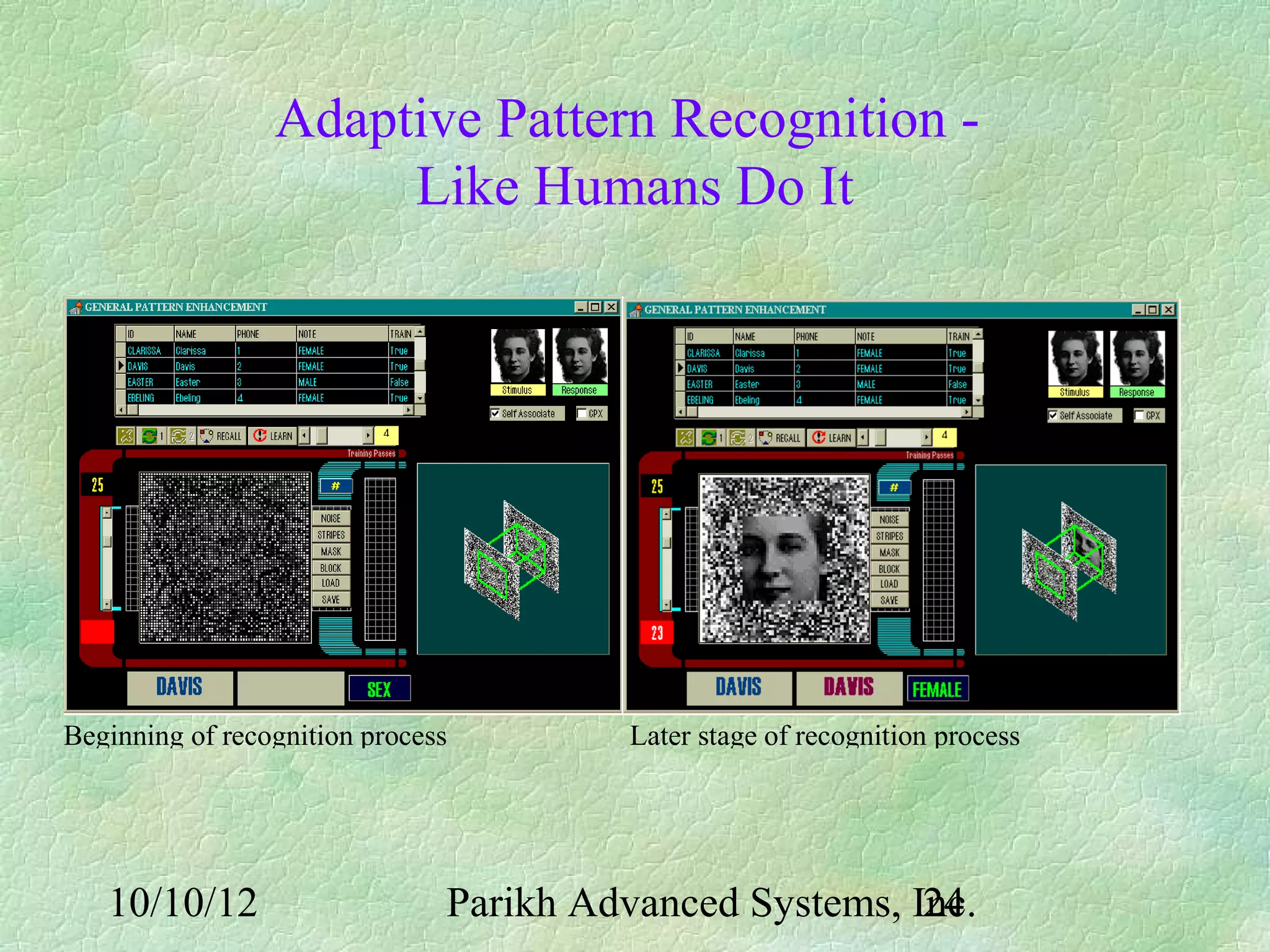

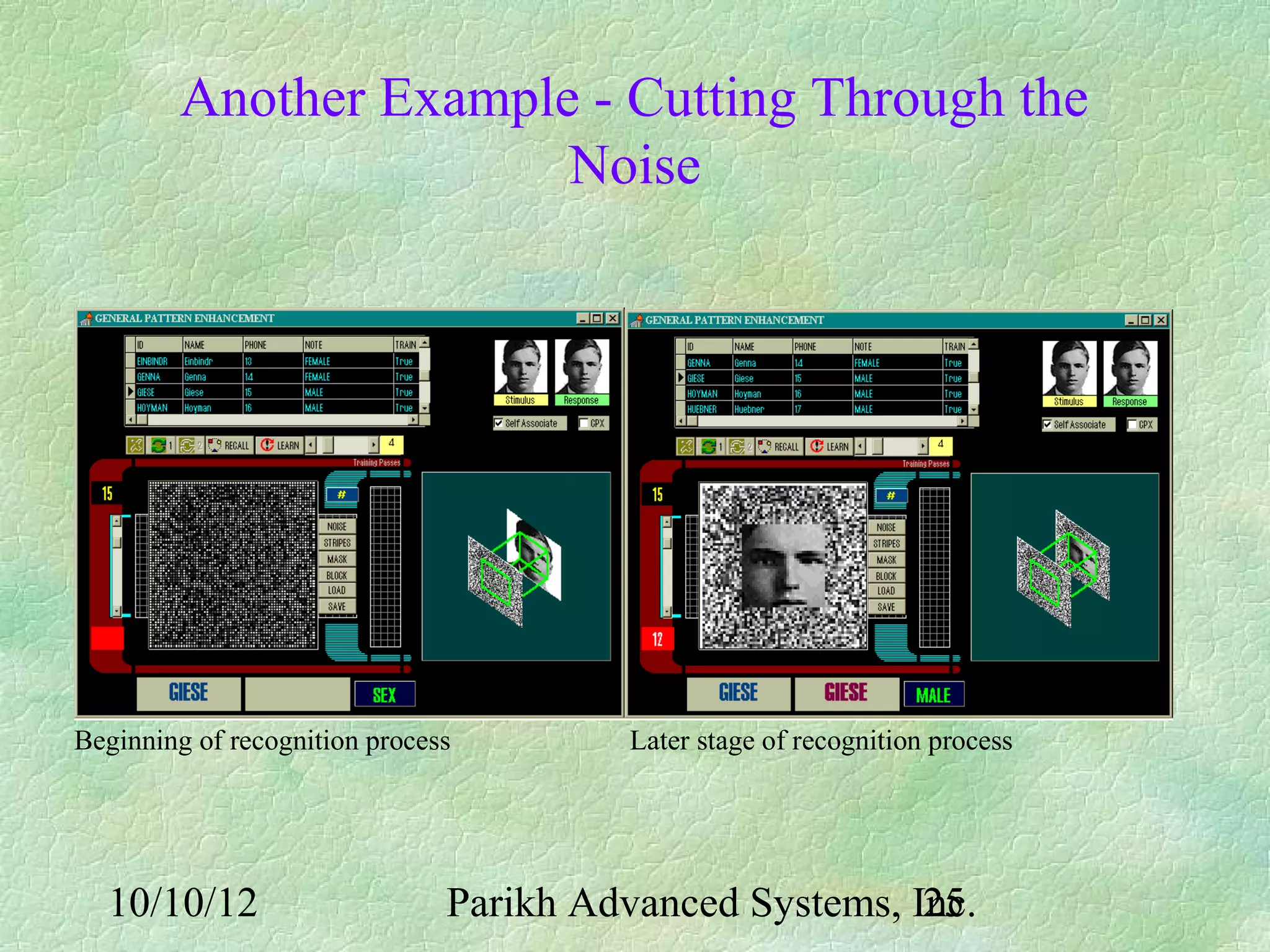

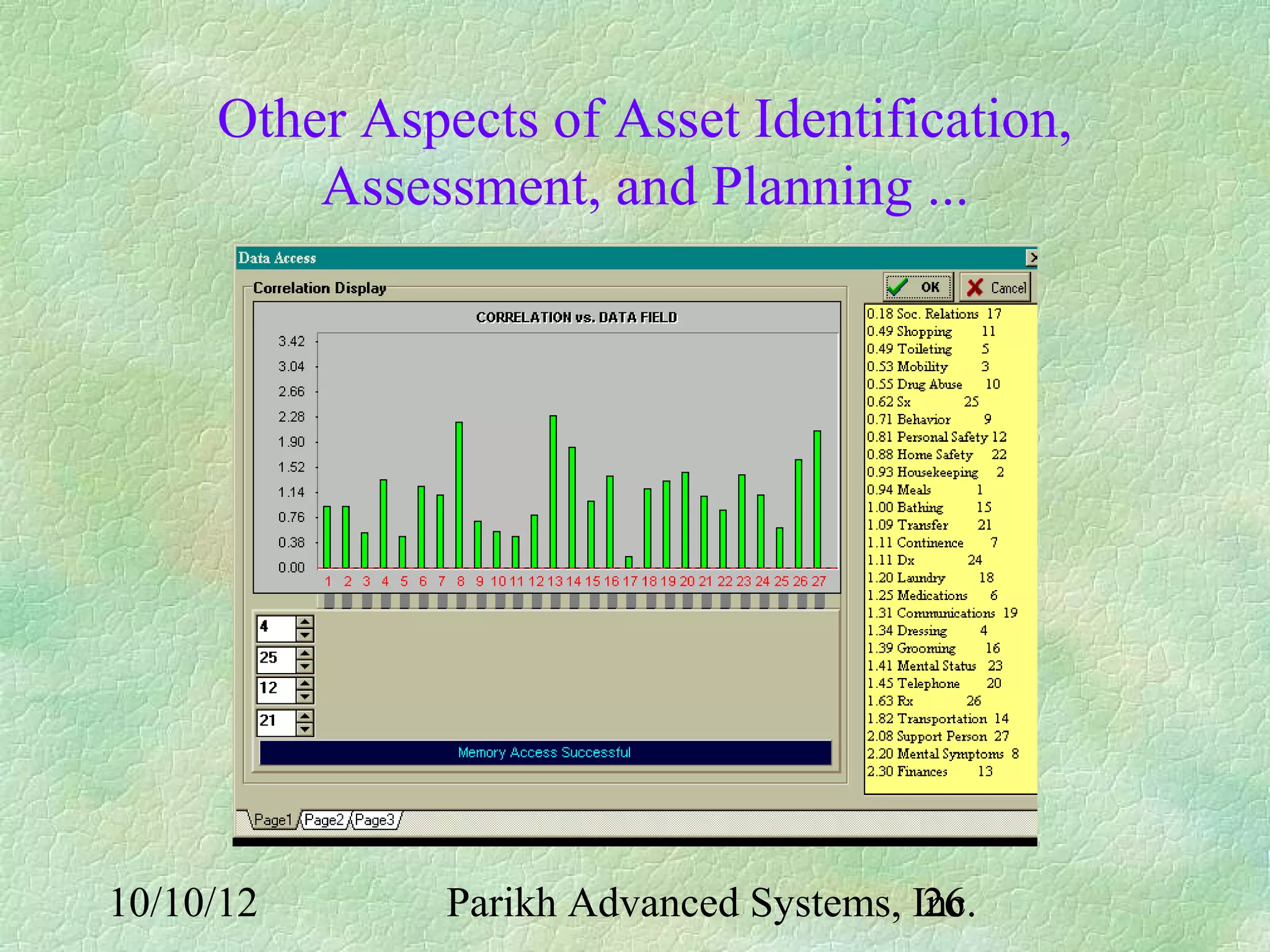

This document proposes an Intelligent Data Entry and Acquisition (IDEA) system to support on-site data collection for highway maintenance and construction. It describes an architecture in which wearable computers and sensors collect asset data in the field, pattern recognition processes that data, and the results are uploaded to centralized databases. Field workers could use tools such as digital notepads, cameras, and GPS receivers to gather location-tagged images, notes and condition reports on assets. The IDEA system would then analyze this material and integrate it into the maintenance planning databases back at the office. The goal is to streamline data collection and improve safety, productivity and data quality for tasks such as infrastructure inspections.
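As a rough illustration of the collect-then-upload flow described above, the following Python sketch shows one way a location-tagged field record might be represented and batched for upload. Everything in it is an assumption made for illustration: the `FieldRecord` fields, the `build_upload_payload` helper, and the JSON layout are hypothetical and do not come from the proposal, and the pattern-recognition step is not modeled at all.

```python
import json
from dataclasses import dataclass, asdict


@dataclass
class FieldRecord:
    """One location-tagged observation of an asset (hypothetical schema)."""
    asset_id: str
    latitude: float            # decimal degrees, from the GPS receiver
    longitude: float           # decimal degrees, from the GPS receiver
    captured_at: str           # ISO 8601 timestamp string
    note: str = ""             # free-text note, e.g. from a digital notepad
    image_path: str = ""       # path to a photo taken in the field
    condition: str = "unrated" # condition report, left unrated by default


def build_upload_payload(records):
    """Serialize records that carry a plausible GPS fix into a JSON string.

    Records whose coordinates fall outside valid latitude/longitude ranges
    are dropped here; a real system might flag them for review instead.
    """
    valid = [
        r for r in records
        if -90 <= r.latitude <= 90 and -180 <= r.longitude <= 180
    ]
    body = {"count": len(valid), "records": [asdict(r) for r in valid]}
    return json.dumps(body, sort_keys=True)
```

A caller would construct one `FieldRecord` per observation and pass the list to `build_upload_payload` before sending the result to whatever office database interface is in use. Filtering on coordinate range is only a placeholder for the data-quality checks the proposal alludes to.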