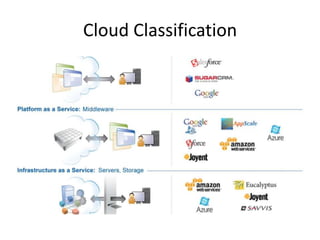

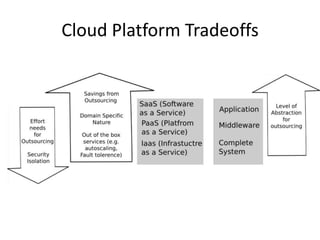

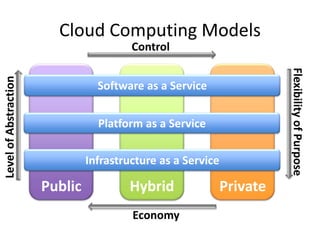

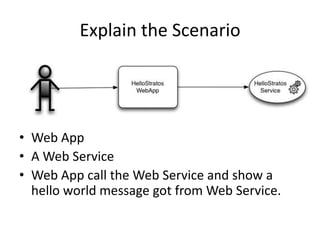

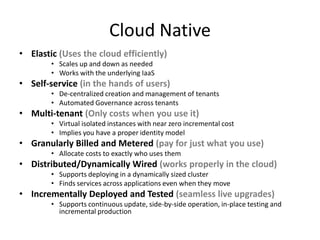

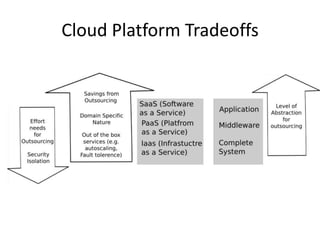

This document provides an overview of cloud computing and demonstrates how to create a sample application and deploy it to the cloud. It begins by defining cloud computing and discussing the benefits of the cloud model. It then walks through deploying a web application and a web service to Amazon EC2 as an example of Infrastructure as a Service (IaaS). Finally, it compares IaaS with Platform as a Service (PaaS), using Google App Engine as the PaaS example.