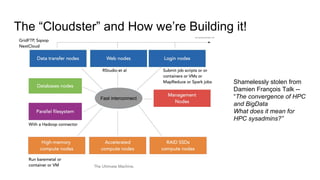

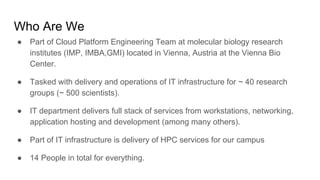

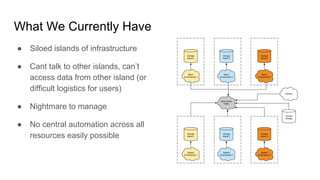

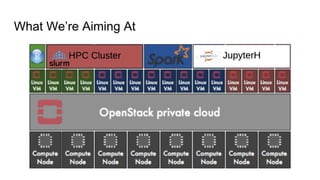

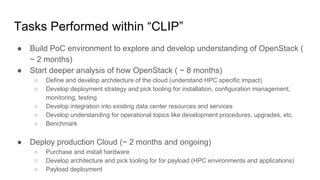

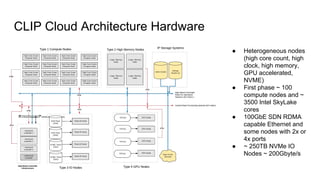

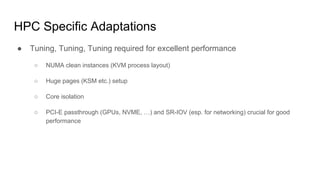

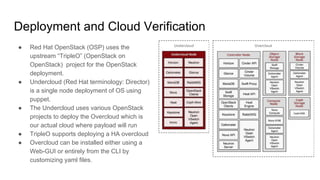

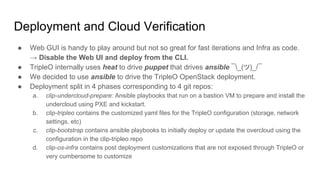

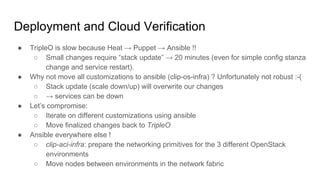

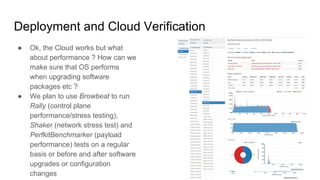

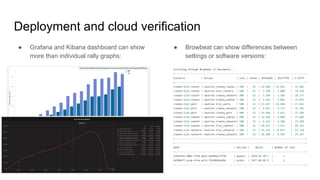

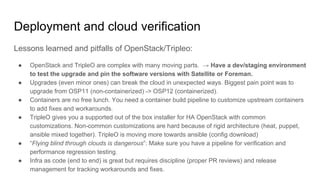

This presentation covers 'Clip', the cloud infrastructure project at Vienna Bio Center, which aims to enhance HPC services using OpenStack. It describes the team's structure, the challenges posed by the existing siloed infrastructure, and the project's phased approach to building and deploying a software-defined data center. Key lessons emphasize the complexity of OpenStack, the need for multiple environments to verify deployments, and strategies for effective monitoring and performance testing.