Haystack 2019 - Custom Solr Query Parser Design Option, and Pros & Cons - Bertrand Rigaldies

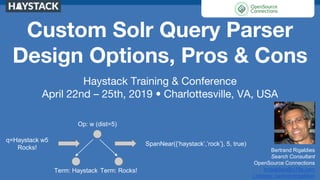

- 1. Custom Solr Query Parser Design Options, Pros & Cons Haystack Training & Conference April 22nd – 25th, 2019 • Charlottesville, VA, USA Bertrand Rigaldies Search Consultant OpenSource Connections brigaldies@o19s.com Linkedin: bertrandrigaldies Op: w (dist=5) Term: Haystack Term: Rocks! SpanNear({‘haystack’,’rock’}, 5, true) q=Haystack w5 Rocks!

- 2. Haystack 2019, April 24-25 Agenda - Query parsers’ purpose - Query parser composition in Solr - When do you need a custom query parser? - How to build a custom query parser? - Pros and cons of various design approaches - Beyond query parsers 2

- 3. Haystack 2019, April 24-25 Search engine big picture Documents Search results Ranked, highlighted, Faceted Matches Query Index Credit: Doug Turnbull, "Think Like a Relevance Engineer” training material, Day #2, Session #1 3

- 4. Haystack 2019, April 24-25 What’s The Problem Here? 1. [ Expression → Search Executable ] compilation 2. Query Understanding 3. How do your users search? ○ “Natural” language, as we increasingly do everyday ○ Or, a more formal search language: ■ With operators like boolean and proximity ■ Advanced custom query syntax ○ Or, some kind of hybrid of the above End-Users Spectrum Casual, Occasional Professional LibrarianSeasoned 4

- 5. Haystack 2019, April 24-25 What’s The Problem? (again) Is it the FIRST relevancy issue in a search application project: How do we translate the end-user’s high-level search expression into an executable that will most effectively approximate what the end-user is looking for? 5

- 6. Haystack 2019, April 24-25 What Can We Do Out-of-the-box? ● A lot! Solr (ES too) offers powerful query parsers out of the box: ○ “Classic” Lucene: ■ df=title, q=I love search → title:i title:love title:search ○ “Swiss Army Knife” edismax: ■ qf=title body, q=I love search → +( (title:i | body:i) (title:love | body:love) (title:search | body:search) ) 6

- 7. Haystack 2019, April 24-25 How far can I go? Search for the capitalized term “Green”, but not the adjective “green”, that is 5 positions or less before the noun “deal”. {!lucene} “green deal”~5 {!surround} green 5w deal {!surround} 5w(2w(green,deal), congress OR legislation) _query_:”{!cap}firstcap(green)” AND _query_:”{!proximity}green 5w deal” 7

- 8. Haystack 2019, April 24-25 Query Parsers Composition ● Solr provides a large variety of QPs (28 and counting, JSON Query DSL), that are composable: _query_:"{!lucene}"green deal"" AND _query_:"{!surround} 5n(congress, democrat)" 8

- 9. Haystack 2019, April 24-25 Query QPs Composition (Cont’d) Solr XML QP: <BooleanQuery fieldName="title_txt"> <Clause occurs="must"> <SpanNear slop="0" inOrder="true"> <SpanTerm>green</SpanTerm> <SpanTerm>deal</SpanTerm> </SpanNear> </Clause> <Clause occurs="must"> <SpanNear slop="5" inOrder="false"> <SpanTerm>congress</SpanTerm> <SpanTerm>democrat</SpanTerm> </SpanNear> </Clause> </BooleanQuery> 9

- 10. Haystack 2019, April 24-25 Query QPs Composition (Cont’d) Solr JSON QP: { "query": { "bool": { "must": [ {"lucene": {"df": "title_t", "query": ""green deal""}}, {"surround": {"df": "title_t", "query": "5n(congress, democrat)"}} ] }}} 10

- 11. Haystack 2019, April 24-25 What If We Need To Go Beyond? ● There are limitations and quirks, e.g., the Solr “Surround” QP: ○ Distance <= 99; ○ Search terms are not analyzed! What? ● What about operators that do not exit? ○ Capitalization: Match Green, but not green ○ Frequency: Must match N times or less ○ As-is: Search for a term as written. ● What do we do now? Enter the world of custom query parsers! 11

- 12. Haystack 2019, April 24-25 Demo: Let’s build a simple proximity query parser! … CVille Haystack w5 Rocks 2019 ... - Analyze terms - Distance >= 0, no upper limit - Operator: Same as surround (w<dist>, n<dist>) - https://github.com/o19s/solr-query-parser-demo 12

- 13. Haystack 2019, April 24-25 Query Parser Plugin Anatomy ProximityQParserPlugin.java: public class ProximityQParserPlugin extends QParserPlugin { public QParser createParser(String s, SolrParams localParams, SolrParams globalParams, SolrQueryRequest solrQueryRequest) { return new ProximityQParser(s, localParams, globalParams, solrQueryRequest); } } In solrconfig.xml: <queryParser name="proximity" class="com.o19s.solr.qparser.ProximityQParserPlugin"/> <requestHandler name="/proximity" class="solr.SearchHandler"> <lst name="defaults"> <str name="defType">proximity</str> ... 13 QP “Factory” Class Solr Config

- 14. Haystack 2019, April 24-25 Custom QP & Request Handler <requestHandler name="/proximity" class="solr.SearchHandler"> <lst name="defaults"> <str name="defType">proximity</str> <str name="qf">title_txt</str> <str name="fl">id, title_txt, pub_dt, popularity_i, $luceneScore, $dateBoost, $popularityBoost, $myscore</str> <str name="dateBoost">recip(ms(NOW,pub_dt),3.16e-11,1,1)</str> <str name="popularityBoost">sum(1,log(sum(1, popularity_i)))</str> <str name="mainQuery">{!proximity v=$q}</str> <str name="luceneScore">query($mainQuery)</str> <str name="myscore">product(product($luceneScore, $dateBoost), $popularityBoost)</str> <str name="order">$myscore desc, pub_dt desc, title_s desc</str> <str name="hl">true</str> <str name="hl.method">unified</str> <str name="hl.fl">title_txt</str> <str name="facet">true</str> <str name="facet.mincount">1</str> <str name="facet.field">popularity_i</str> <str name="facet.range">pub_dt</str> <str name="f.pub_dt.facet.range.start">NOW/DAY-30DAYS</str> <str name="f.pub_dt.facet.range.end">NOW/DAY+1DAYS</str> <str name="f.pub_dt.facet.range.gap">+1DAY</str> </lst> </requestHandler> 14 Solr Config (cont’d) QP Highlighting Faceting Boosting and custom scoring

- 15. Haystack 2019, April 24-25 Query Parser Plugin Anatomy ProximityQParser.java: public class ProximityQParser extends QParser { public ProximityQParser(String qstr, SolrParams localParams, SolrParams params, SolrQueryRequest req) { super(qstr, localParams, params, req); } public Query parse() throws SyntaxError { // Parse and build the Lucene query Query query = parseAndComposeQuery(qstr); return query; } } 15 Parse end-user’s search string; Generate the Lucene query, and return it to Solr.

- 16. Haystack 2019, April 24-25 Query Parser’s “Parse Flow” 16 Op: w (dist=5) Term: Haystack Term: Rocks! SpanNear({‘haystack’,’rock’}, 5, true) q=Haystack w5 Rocks! 1. Parse 2. Analyze 3. Generate Op: w (dist=5) Term: haystack Term: rock

- 17. Haystack 2019, April 24-25 Demo 1. Overview of the Java code 2. Run unit tests 3. Deploy the plugin jar 4. Run test queries 5. Examine scoring 17

- 18. Haystack 2019, April 24-25 Score 18

- 19. Haystack 2019, April 24-25 Query Parser Strategies “Natural” Query Language Application Search box: green deal Solr q={!edismax} green deal QP: edismax Custom Query Language (Moderate Complexity) Application Search box: dog near/5 house Solr QP: surround q={!surround} green 5n deal Custom Query Language (Any Complexity) Application Search box: cap(green) near/5 deal Solr QP: MyQP q={!myqp} cap(green) near/5 deal 19 QP: MyQP

- 20. Haystack 2019, April 24-25 Query Parser Strategies Comparison Criteria edismax Solr QPs Composition Custom QP Software R&D No Moderate High 20 Ease of Solr upgrade Very Good Good To be managed Performance Good Good Better vs. Solr QPs composition But be careful! Deployment - - Plugin jar(s) Ease of Relevancy Tuning The good ol’ edismax Individual QPs’ knobs and dials More software to write!

- 21. Haystack 2019, April 24-25 Entities Recognition vs. Query Parsing Search Requests Load Balancer ...Solr Node MyQP Solr Node MyQP Solr Node MyQP Solr Node MyQP Load Balancer Entities Recognition Service Search Service Search Service Search Service ... 21

- 22. Haystack 2019, April 24-25 Closing Remarks ● QPs are a lot of fun, BUT: ○ Make sure you really need to go beyond the out- the-box features! ○ Great power comes with great responsibility. Careful what you write! ○ Relevancy knobs and dials can be tricky to re- implement: Multi-field, term- vs. fields-centric, mm, field boosting, etc. ● The next frontier: Custom Lucene queries ○ Multi-terms synonyms w/ equalized scoring ○ Frequency operators 22

Editor's Notes

- Good morning everyone. My name is Bertrand Rigaldies. I am an OpenSource Connections search consultant. I joined OSC in early 2017. I have worked primarily in Solr, working on a variety of search relevancy issues. Lately, I have been very fortunate to work on a custom query parser for a great client, represented by some of you in the audience. A large part of this talk has been inspired by this work. In this talk I would like to share with you my experience with Query Parsers: Why and when do we need them? How to write one? The different design and implementation options, their pros and cons, and some pitfalls. This talk is definitely more engineering than science, more back-to-the-fundamentals than let’s-re-invent-search. It’s a let’s lift the hood and see the different nuts and bolts of Query Parsers. Hopefully the talk will give some ideas you can take back to your jobs. Quick polling of the audience on the topic: Raise your arm if you have no idea or only a vague idea of what a query parser is Raise your arm if you have participated in the development of a custom query parser? Raise your arm if you are a developer, and/or you’re comfortable with Java?

- Some basics first. Let’s locate the Query Parser, as an architecture component, in the overall Solr (or ES) architecture. This slide was borrowed and adapted from the OSC Solr training material. Talking Points: Where does query parsers belong in the big picture of a search engine? Left side handles documents indexing Right side handles querying The concentric circles should be from center going out Query goes through the following 4 concentric circles of processing: Normalization of the search terms (tokenization, filters, etc.) Matching and ranking, which is responsibility of query parsers Decoration, such as snipetting, highlighting, term vectors spell checking Analytics, such as facets In this talk we’ll be focusing on the Matching and Ranking ring.

- SLIDE CAPTION ONLY So, what is the problem? Well, with Query Parsers, we are staring at one of the core challenges of search engines: How to understand the text that the end-user typed or spoke, and turn it into code that can be executed to search. Click NEXT The first part of the problem is essentially the classic problem of compiling a high-level formalism (e.g., everyday language English, or other more formal form of search expressions) to low-level executable code). For the most part, the first issue is well understood from a computer science standpoint. There are several great tools to generate compilers (javacc, Antler, etc.; Note: The Lucene classic search language is implemented in javacc). And, as we’ll see, Lucene provides a rich set of search primitives that we can use to create executable search code. CLICK NEXT The second part of the problem is more challenging, and has to do with “query understanding”: What is the end-user saying, and what is the appropriate executable search construct? The Holy Grail of search is to search what the end-user means, not what he/she typed. Well, we haven’t invented a compiler that can do that yet! Ha ha. Now, more practically for our applications design, we should ask ourselves how end-users will search. That understanding will inform how to parse the text they type or speak. NEXT, NEXT, etc. So-called “Natural” language, a la Google Or, more formal languages: Boolean and proximity like the Classic Lucene syntax More advanced than the Classic, with operators like as-is, capitalization, clause frequency, etc. Or, some kind of hybrid

- So, to wrap up this context-setting slide: At a philosophical level, this PROBLEM may the FIRST relevancy issue in a search application project: How do we translate the end-user’s high-level search expression into an executable that will most effectively approximate what the end-user is looking for?

- ANIMATION! What can we do in Solr (or ES) in order to address the problem? Good news is: Solr offers powerful out-of-the-box Query Parsers. The edismax is like a power tool: With it comes great responsibility. And Doug wrote three chapters on the subtleties and pros and cons of multi-field queries, and the pros and cons of terms- vs. fields-centric approaches. I spent a year tuning a system using the edismax with many fields. It’s hard work! Which requires a mature relevancy testing infrastructure by the way, but you knew that. Ask the audience who has been using the edismax in their applications? There is a very rich query parsers eco-system in Solr: Solr 7.7 Other Query Parsers

- But, how far can I go with the Solr query parsers? Pretty far actually! For example, in terms of queries specifying some proximity between terms, there is little-known query parser called the “surround” query parser. Check it out in the Solr doc. It’s implemented in Lucene by a separate javacc grammar (See the package org.apache.lucene.queryparser.surround.parser). Solr demo: http://localhost:8983/solr/demo/select?debugQuery=on&df=title_t&q=%7B!surround%7D%205n(2n(donald%2Ctrump)%2C%20impeached%20OR%20impeachment)&wt=json TODO: Change to an example that is not (too) political Green legislation Search for the capitalized term “Green” (as if Green New Deal), but not the color “green”, which is within X positions of the term “legislation” cap(green) w/5 legislation Note: the position count in the surround operator is “slop + 1” (Number of positions between terms + 1)

- So, the Solr toolbox provides many query parsers that can be combined in arbitrarily complex compositions. Solr demo: http://localhost:8983/solr/demo/select?debugQuery=on&df=title_t&q=_query_%3A%22%7B!lucene%7D%5C%22green%20deal%5C%22%22%20%0AAND%20%0A_query_%3A%22%7B!surround%7D%205n(congress%2C%20democrat)%22&wt=json

- Example of QPs composition with XML. Show on Postman > Haystack 2019 > XML Query Parser Demo: Postman

- Example of QPs composition with ES-esque JSON Query DSL Show on Postman > Haystack 2019 > JSON Query Parser Demo

- ANIMATION! NEXT: But there are limitations that could be showstoppers to meet your functional requirements. E.g., in the surround QP NEXT: What about operators that do not exist in Lucene or Solr? NEXT :Enter the world of custom query parsers...

- Show, explain, and run the code from IntelliJ. We’re going to improve the surround QP in two areas: Analyze the search terms Not have any distance limitation

- Quick Query Parser anatomy with a couple of slides and then we’ll do a high-level code walk through. This is Java code, so hopefully we’re okay with that. My apology to those in the room that will have a hard time seeing the code. But, one takeaway should be for all that there is an easy and convenient set of Solr plugin patterns as well as Lucene search primitives that make the creation of custom query parsers very approachable for you all. Show request handler in IntelliJ.

- Note that the query parser does not execute the query. Its sole responsibility is to produce the executable query and return to Solr. Good news is that, as a query parser writer, we don’t have to worry about query execution concerns such as: filtering, pagination, highlights, facets, boosting (more on that later). Let’s walk through the code (next slide).

- Go to the Solr UI to play with our proximity query parser FIRST, show the plugin in action: Query with it: {!proximity} GREEN w5 Deal http://localhost:8983/solr/demo/select?debugQuery=on&fl=*&q=%7B!proximity%7D%20GREEN%20w5%20Deal&qf=title_t&wt=json Analyzed search terms (Donald and IMPEACHED analyzed to trump and impeached) No limit to the distance (100) Show the generate Lucene query with debugQuery=on Show where the jar is deployed (Normally pushed to an artifacts repository, and deployed to your Solr nodes) Show the plugin is listed: http://localhost:8983/solr/#/demo/plugins?type=queryparser&entry=com.o19s.solr.qparser.ProximityQParserPlugin Query using the request handler /proximity: No match (mm=100, “democrat” does not match because we have no stemmer): http://localhost:8983/solr/demo/proximity?=&debugQuery=on&fl=*&mm=100&q=green%20new%20w5%20deal%20democrat&qf=title_t&wt=json mm=50%: http://localhost:8983/solr/demo/proximity?=&debugQuery=on&fl=*&mm=50&q=green%20new%20w5%20deal%20democrat&qf=title_t&wt=json Show Boosting, Highlights, Sort, Facets

- SCORING? You get the behavior of the underlying Lucene primitives and how they are composed? Go to Splainer live: http://splainer.io/#?solr=http:%2F%2Flocalhost:8983%2Fsolr%2Fdemo%2Fproximity%3FdebugQuery%3Don%26q%3DDonald%20w100%20IMPEACHED%26qf%3Dtitle_t&fieldSpec=* Just showing that the scoring is provided by the underlying Lucene primitives. TIDBITS: IDF(phrase) = sum of the IDFs of the phrase’s terms The span’s “phrase frequency” is 1 / (distance + 1) The span frequency (0.333…) is calculated in the BM25Similarity class (See line 77 in the 7_7 branch): protected float sloppyFreq(int distance) { return 1.0f / (distance + 1); } Can the score be customized? Yes, but that involves peeling the next layer of the Lucene onion and get into the Weigh and Scorer classes. A presentation for another time. Also, show Quepid is fine.

- Recap: Different approaches to QPs. Notice the location of the Query Parser in the center solution: It is in the application! The application parses the end-user’s string and produces a Solr search using the Perl-like notation, XML, or JSON.

- Ease of Relevance Tuning: Edismax: the good, bad, and ugy QPs composition: Underlying QPs’ knobs and dials Custom Query Parser: More software to write: Multi-fields Synonyms Compounds DisMax behavior Terms- vs fields-centric Tie mm etc.

- Often times, entities such as dates, numbers, people, places, institutions, etc. must be recognized as a first-pass before producing the parse tree so that the recognized entities are leaves themselves. In a large SolrCloud cluster, if the entity recognition is an expensive operation, perhaps involving a call to an external service, it is a good idea to not have Solr be responsible for entities recognition, and let the application layer handle it.

- [