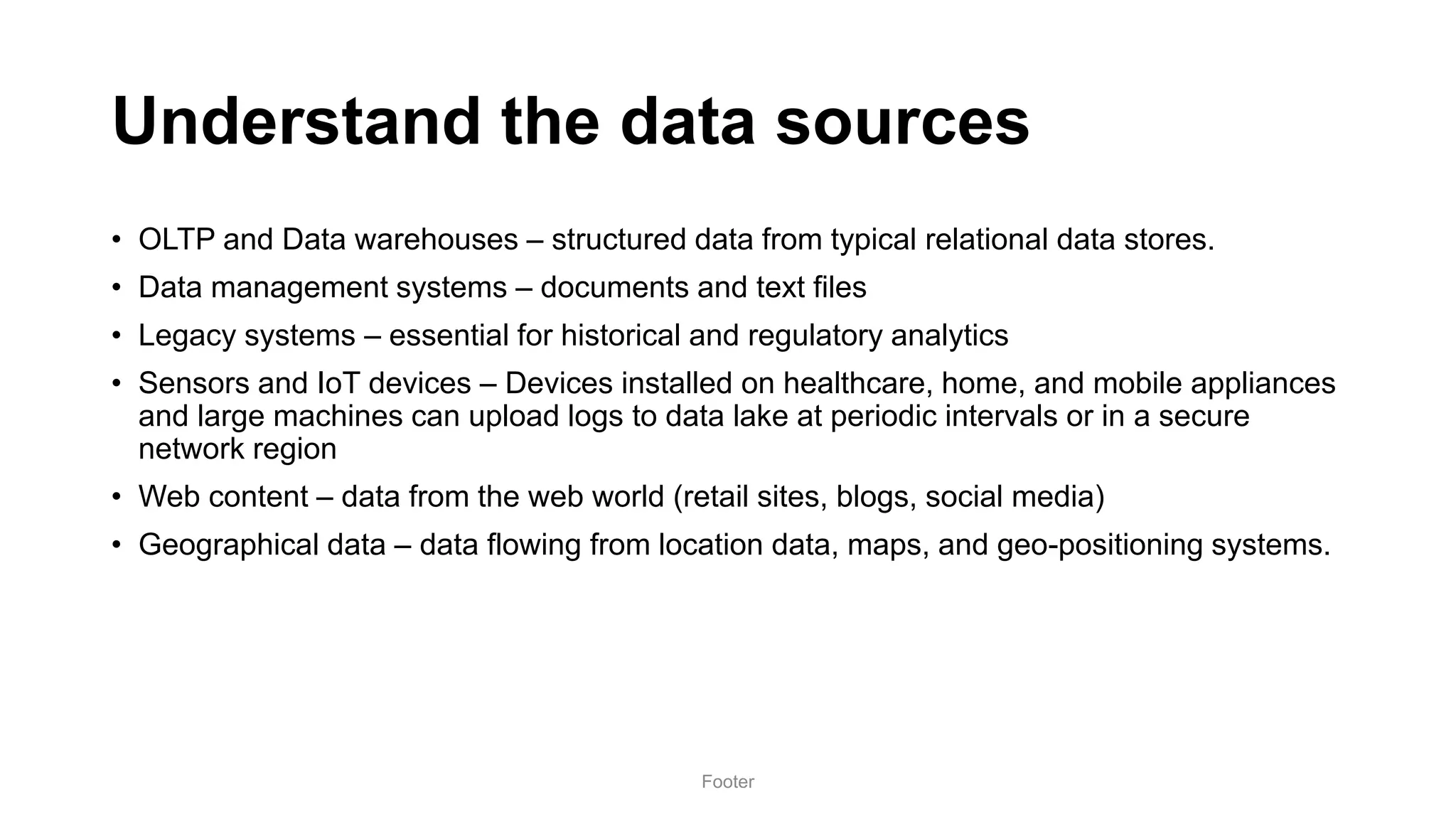

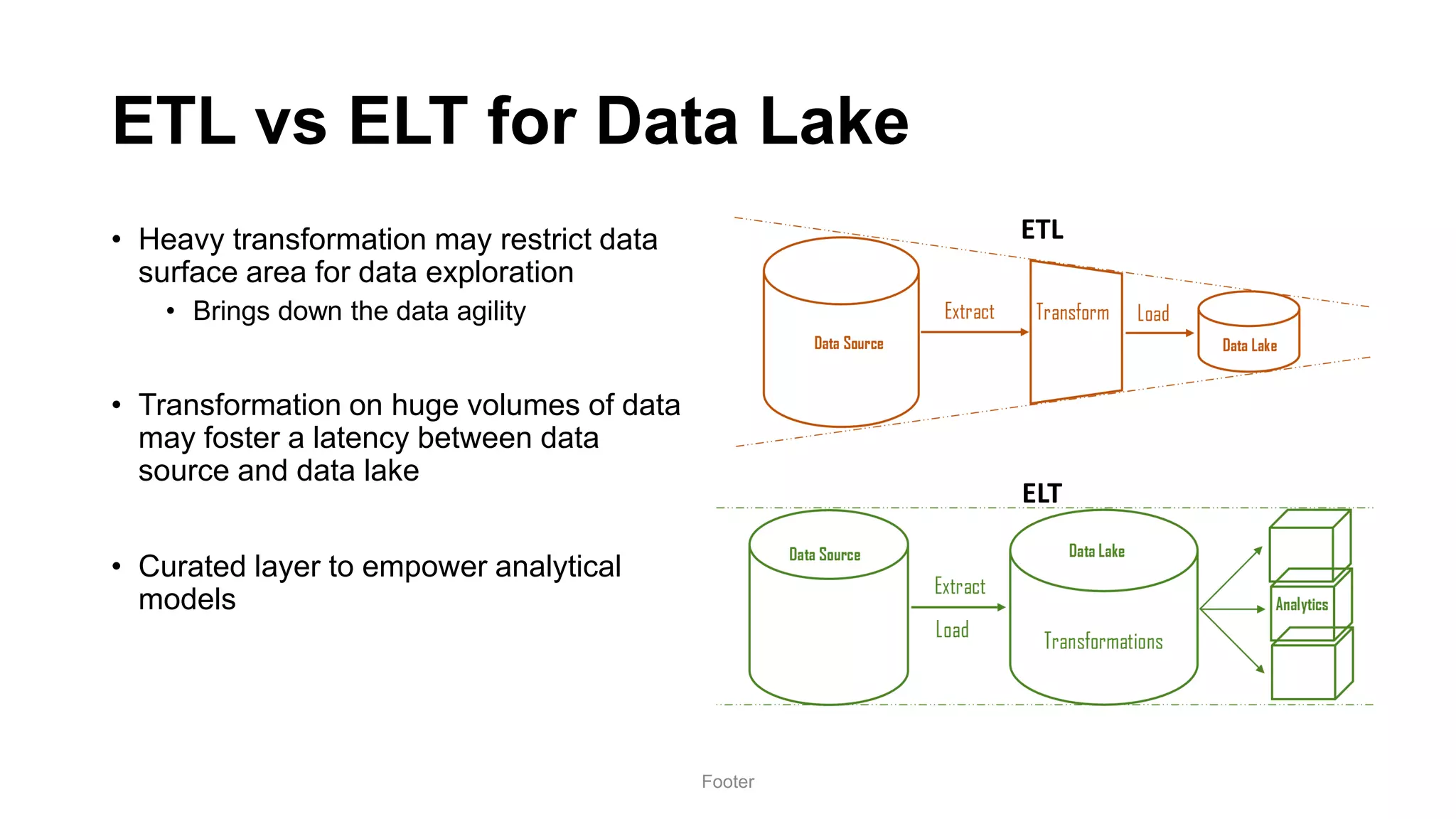

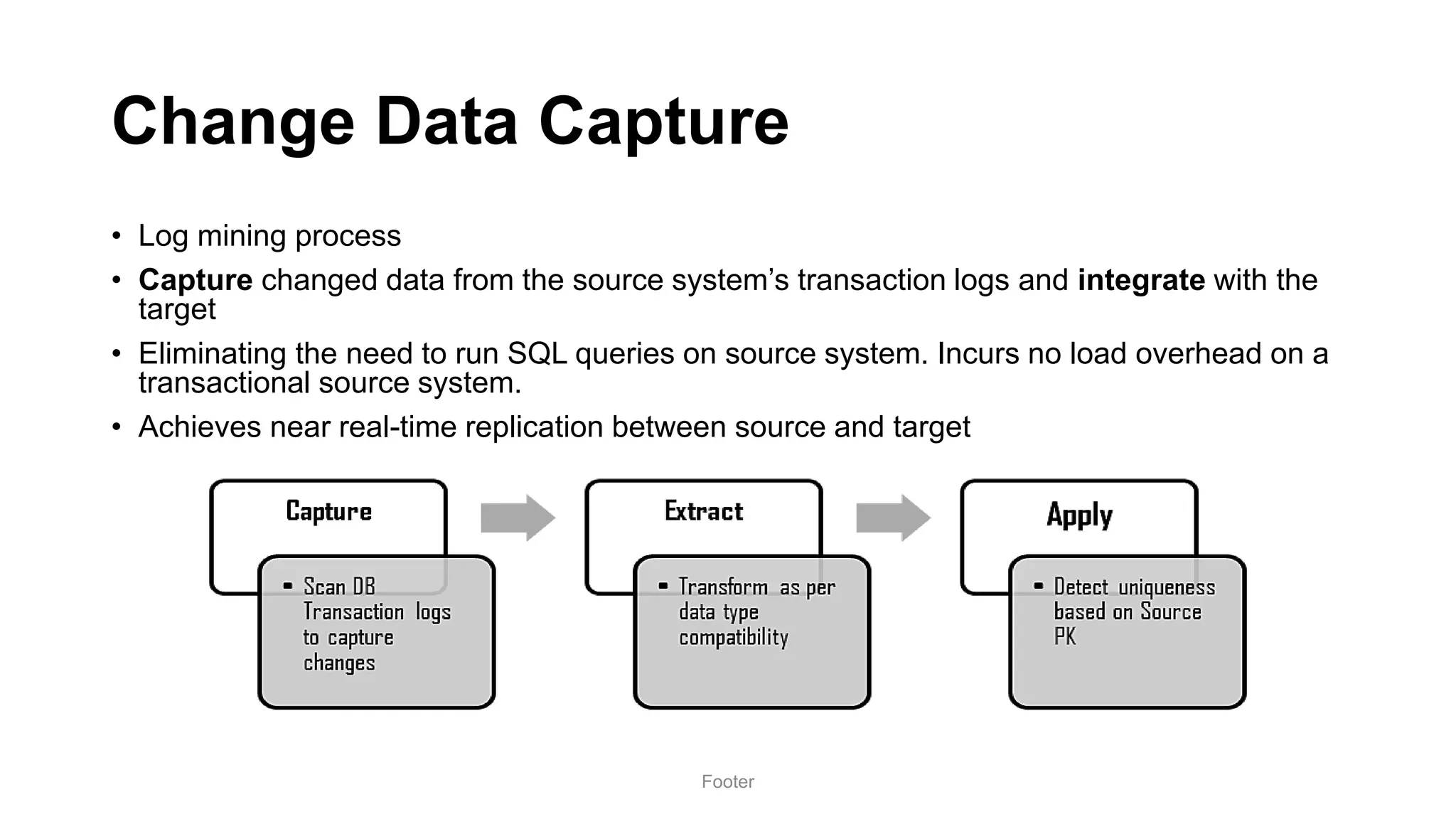

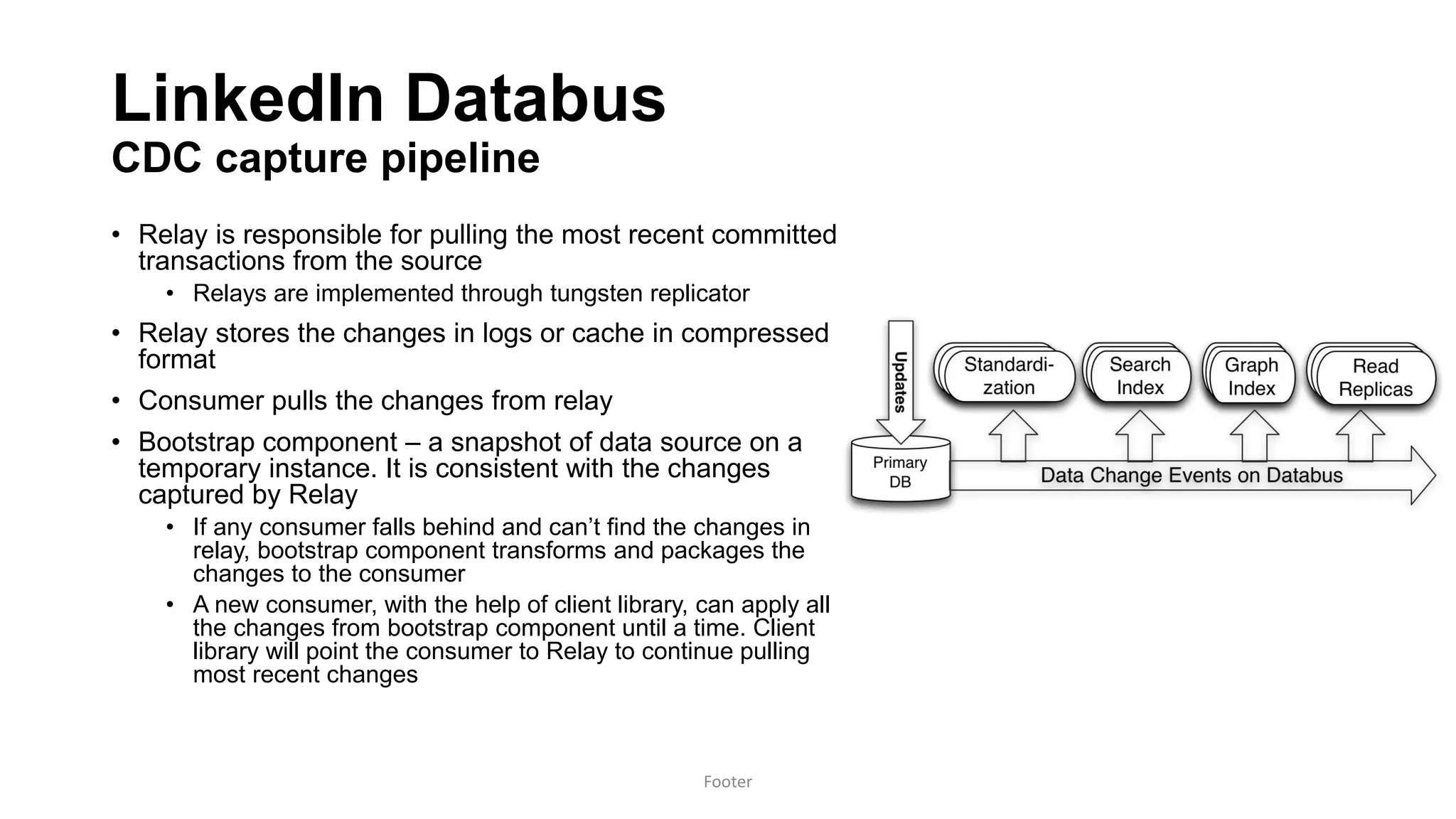

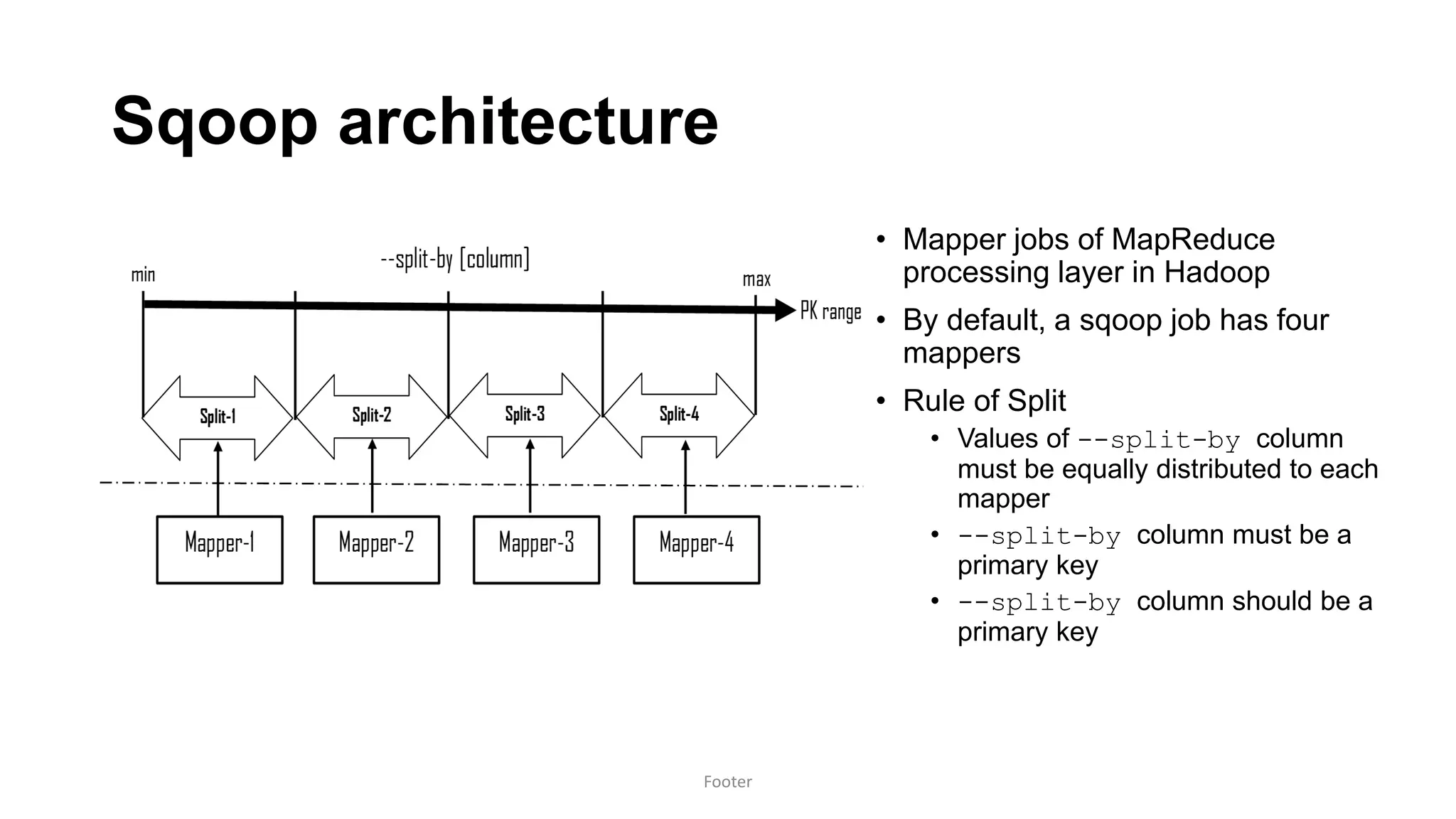

"Harness the Power of Data in a Big Data Lake" discusses strategies for ingesting and processing data in a data lake. It describes how to design a data ingestion framework that accounts for data format, source, size, and location. It contrasts the ETL and ELT approaches, covers batch and change data capture (CDC) ingestion of both structured and unstructured data, and gives an overview of tools such as Sqoop that can ingest data from relational databases into a data lake.

![Sqoop

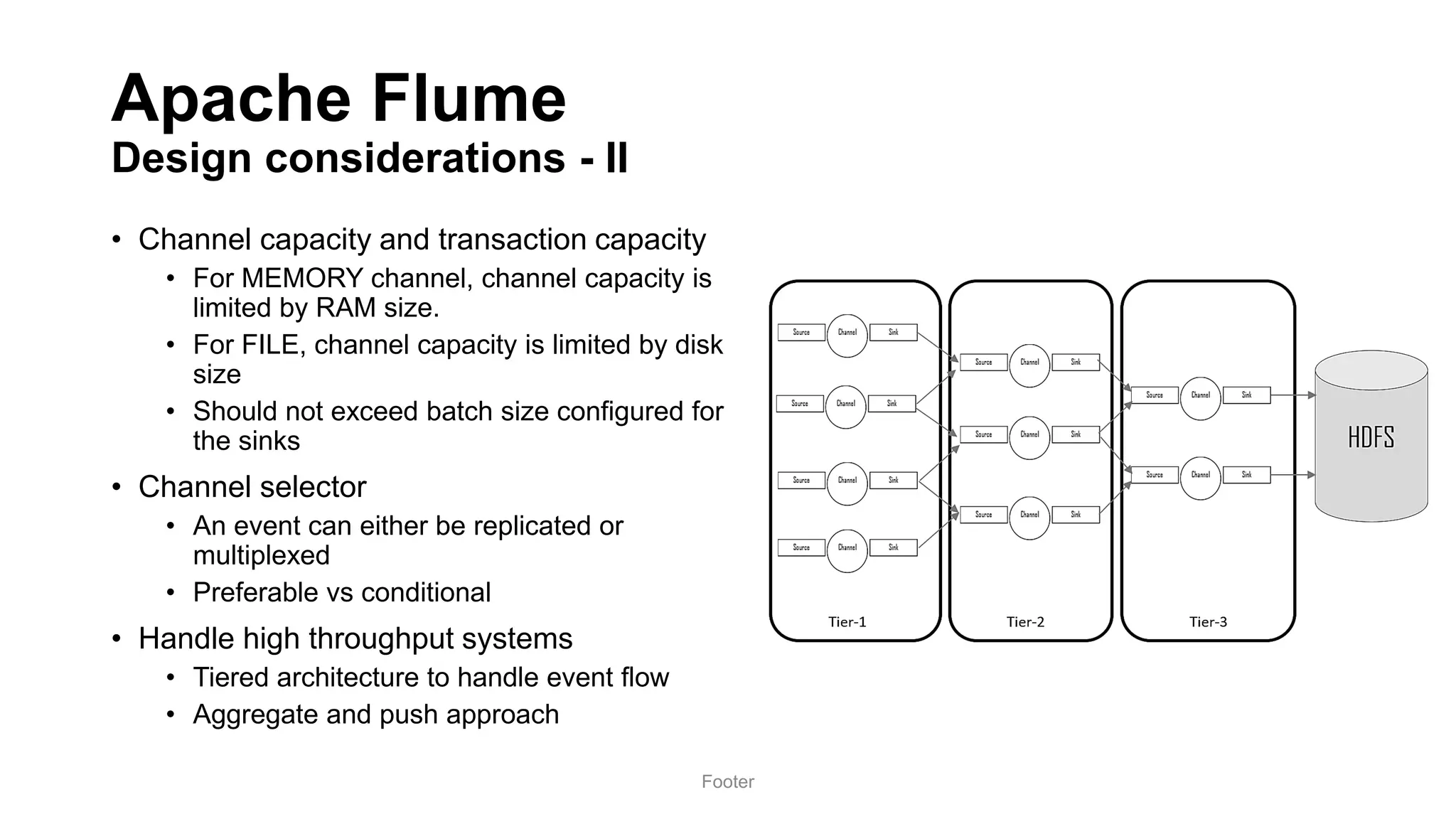

Design considerations - I

• Mappers

  • Set the count with the --num-mappers [n] argument

  • Mappers run in parallel within Hadoop; there is no formula for the right count, so set it judiciously

• Table cannot be split

  • Use --autoreset-to-one-mapper to fall back to an unsplit, single-mapper extraction

• Source has no PK

  • Split on a natural or surrogate key (--split-by)

• Source has character keys

  • Divide and conquer: define partitions manually and run one mapper per partition

  • If the key value is an integer, no such workaround is needed

](https://image.slidesharecdn.com/sangam17dataindatalake-180907194418/75/Harness-the-power-of-Data-in-a-Big-Data-Lake-24-2048.jpg)