Generic steps in Informatica

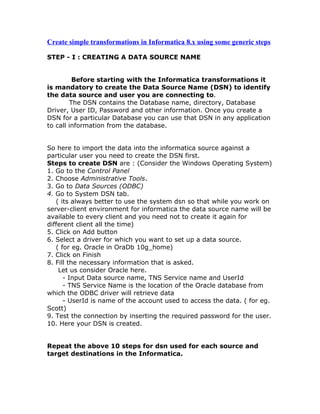

Create simple transformations in Informatica 8.x using some generic steps

STEP - I : CREATING A DATA SOURCE NAME

Before starting with Informatica transformations, you must create a Data Source Name (DSN) that identifies the data source and the user you are connecting as. The DSN contains the database name, directory, database driver, user ID, password and other information. Once you create a DSN for a particular database, you can use that DSN in any application to retrieve information from the database. So, to import data into an Informatica source for a particular user, you need to create the DSN first.

Steps to create a DSN (on the Windows operating system):

1. Go to the Control Panel.
2. Choose Administrative Tools.
3. Go to Data Sources (ODBC).
4. Go to the System DSN tab. (It is always better to use a System DSN, because it is available to every user of the machine and you do not need to create it again for each one.)
5. Click the Add button.
6. Select the driver for which you want to set up a data source (e.g. Oracle in OraDb 10g_home).
7. Click Finish.
8. Fill in the information that is requested. Let us consider Oracle here.
   - Enter the Data Source Name, TNS Service Name and User ID.
   - TNS Service Name is the location of the Oracle database from which the ODBC driver will retrieve data.
   - User ID is the name of the account used to access the data (e.g. Scott).
9. Test the connection by entering the password for that user.
10. Your DSN is now created.

Repeat the above 10 steps to create a DSN for each source and target used in Informatica.
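Once a DSN exists, any ODBC-aware application can refer to it by name instead of repeating the driver and server details. The sketch below is an illustration only: it builds the kind of connection string such an application would use, from the standard ODBC keywords `DSN`, `UID` and `PWD`. The function name `build_dsn_connection_string`, the DSN name `ora_source_dsn` and the password are hypothetical placeholders, not values from this tutorial.

```python
def build_dsn_connection_string(dsn: str, user_id: str, password: str) -> str:
    """Build an ODBC connection string that refers to an existing DSN.

    Sketch only: values containing ';' or braces would need extra
    escaping, which is not handled here.
    """
    parts = {"DSN": dsn, "UID": user_id, "PWD": password}
    for key, value in parts.items():
        if not value:
            raise ValueError(f"{key} must not be empty")
    # ODBC connection strings are 'KEY=value' pairs separated by ';'
    return ";".join(f"{key}={value}" for key, value in parts.items())


# Hypothetical values: a System DSN named 'ora_source_dsn' and the user 'scott'
conn_str = build_dsn_connection_string("ora_source_dsn", "scott", "example_password")
print(conn_str)
```

With an ODBC driver and a library such as pyodbc installed, a string like this could be passed to `pyodbc.connect(conn_str)` to check the DSN from outside Informatica; that step is left out here so that the sketch runs without a database.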

STEP - II : CREATE YOUR DIRECTORY IN REPOSITORY MANAGER

The repository is a relational database that stores information, or metadata, used by the Informatica Server and Client tools. The metadata can include mappings that describe how to transform the source data, sessions that indicate when you want the Informatica Server to perform the transformations, and connect strings for sources and targets. The repository also stores the usernames, passwords, permissions and privileges assigned. We have to create a folder (a directory, or simply a work area) in which the user will perform and store tasks.

Steps to create a folder in the repository:

1. Go to the menu.
2. Click the Folder option.
3. Choose Create a folder.

- Once you have created the folder, you do not need to work in Repository Manager any more.
- Close it.

-------------------------------------------------------------------------------------

Now we shall enter the Informatica Designer. You probably know how to get there already, but these steps will guide you:

1. Go to Programs (Start Menu option in Windows).
2. Choose Informatica PowerCenter 8.5.
3. Go to Client.
4. Choose PowerCenter Designer.

Then connect to the repository: right-click the repository and choose Connect. You will have to sign in to the repository with your user name. Only after that can you view and open the folders. Connect to your folder.

Now we move on to building the transformations. The main steps are:

1. Create the source in Source Analyzer.
2. Create the target in Target Designer.
3. Create the mapping in Mapping Designer.
4. Create a workflow and a task in Workflow Designer.
5. Execute the workflow and watch its progress in the Workflow Monitor.

Details follow, so that you are not lost while designing.

Source Analyzer:

Go through the following steps to import the source:

1. Go to Menu Bar --> Sources.
2. Select the option Import from Database. (You will get the Import Tables screen.)
3. Select the ODBC data source and enter the username and password.
4. Click Connect to connect to the database under that particular DSN.
5. After connecting, all the tables are displayed alphabetically.
6. Select the table; it is now imported as a source in Informatica.

After a table is imported into the Source Analyzer, you can simply drag it to the workspace to use it.

Target Designer:

The target table is one that, once created, is generally not altered. It resides in the data warehouse. The target table can be constructed in two ways:

1. By manually creating a new schema:
   - Right-click and choose Create.

   - Enter the new table name.
   - Select the database name.
2. By borrowing the schema from the source:
   - Drag the table from the source to the target workspace. Remove the joins in the table, if any.
   - Select the target table, right-click and choose Edit. Don't forget to rename the table.

At this point only the target definition has been imported; its structure (schema) has not yet been generated in the database, so it cannot be mapped further. It is therefore mandatory to generate the schema.

- Go to Menu --> Targets.
- Click Generate/Execute SQL.
- The Database Object Generation screen is displayed.
- The generated code is stored in .sql format.
- Generate from: all tables or the selected table.
- Generate options:
  - Check the Create Table option.
  - Check the Drop Table option (in case the table already exists).
  - Uncheck the primary key and foreign key options. (Otherwise it becomes constraint-based loading, which does not work in bulk mode; we will ignore this case here.)
- Click the Generate and Execute option.
- The status is displayed in the output window. If the processing fails, don't proceed further.

Any transaction in Informatica involves two database instances (e.g. Oracle): the source and the target. To perform any task, it is mandatory to map these two tables. Hence the third step is to create the mapping.

Mapping Designer:

Close any previously opened mapping.

To create a new mapping:

- Go to Menu --> Mapping.
- Click Create.
- Enter the mapping name.
- Click OK.

Your mapping is created, but it is still empty. To bring in the source and target, just drag them from the repository contents into the mapping workspace.

[When we drag in a source, it is always accompanied by another component, the Source Qualifier. Don't get confused. It acts as an interpreter and converts the data into a generic format that is not specific to any RDBMS, vendor or file. This is because we don't use the source definition directly: the target or any transformation is always connected to the Source Qualifier and not to the source itself.]

If you only want to pass the data from source to target, just map the Source Qualifier columns to the target columns one to one.

The various transformation functions extend what a mapping can do beyond this simple pass-through. The transformations available in Informatica include (to list a few important ones):

- Source Qualifier
- Filter
- Expression
- Aggregator
- Joiner
- Router

and many more. (I shall not explain them now. The aim of this article is to highlight the general steps that any transformation needs to follow.)

To use a transformation in the mapping, go through the following steps:

1. Go to Menu --> Transformation.
2. Click Create.
3. Select the transformation function from the drop-down list and name it.
   - In a transformation, the columns are called ports.

   - Drag the necessary ports to the transformation.
   - Click Edit and enable the settings it needs. (For the required settings of each function, it is best to consult the Help contents.)

Now simply map the ports to the source and the target.

Validate the mapping:

- Go to Menu --> Mapping --> Validate.
- Unless the mapping is valid, the transformation will not take place.

To execute the mapping, the next step is to create a workflow and create a task in it. Go to Menu --> Tools --> Workflow Manager. Workflow Manager is the interface used to create the workflow and task and to execute the mapping. Its three components are:

- Task Developer: used to create tasks
- Workflow Designer: used to create workflows
- Worklet Designer: used to create worklets

Workflow Designer:

For this simple transformation we will use only Workflow Designer, and create the workflow and the task together.

Steps:

1. Go to Menu --> Workflow.
   - Click Create.
   - Name the workflow and click OK.
   - A Start symbol appears in the designer.
2. Go to Menu --> Task.
   - Click Create.
   - In the drop-down list, select Session task. (A session is a task that allows you to load the data.)
   - Name the task.
   - Click OK.
   - From the list displayed, select the mapping that you created previously.

Now, in order to execute the flow, we have to link the workflow and the task.

- Go to Menu --> Task.
- Select Link Task.
- Immediately drag the pointer from Start to the task. The workflow is now connected, as shown by the connecting line.

Now the source and the target (if they are physical tables taken from the database) need to be configured again with their respective Data Source Names.

Steps:

1. Double-click the task to open 'Edit Task'.
2. Go to the Mapping tab.
   - Select the source (it appears under the qualifier's name).
   - Select Connections.
   - Choose the DSN name and connect the component to the table. (You have to authenticate with username and password.)

   Select the target and repeat step 2 for the target table.
3. Click OK.
4. Go to Menu --> Workflow --> Validate.
5. Go to Menu --> Repository --> Save. (Unless you save, the workflow will not start.)

To start the workflow:

- Go to Menu --> Workflow --> Start Workflow.

The progress of the workflow can be observed in the Workflow Monitor.

Workflow Monitor:

- Go to Menu --> Tools --> Workflow Monitor.
- Connect to the repository and the Integration Service.

The Workflow Monitor shows a timeline with hourly time stamps, where you can see whether a workflow started at a particular time failed or succeeded. Remember that the workflow itself may be reported as succeeded even when a task in it has failed. You can read the log files if the task fails.

-------------------------------------------------------------------------------------------------

To cross-check whether the data has been transformed, follow these steps:

- Go to Informatica Designer again.
- Go to the mapping.

- Right-click the target table.
- Choose 'Preview Data'.
- After you authenticate with your username and password, the transformed data is previewed.
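The same cross-check can also be made outside the Designer by comparing the source and target tables directly. The sketch below is not Informatica code: it uses Python's built-in `sqlite3` module as a stand-in for the Oracle source and target, and the table names `emp_src` and `emp_tgt`, the sample rows, and the helper functions are all hypothetical. It mimics a simple pass-through mapping (every source column mapped one to one) and then checks that the target holds the same rows.

```python
import sqlite3


def load_pass_through(conn, source, target):
    """Copy every row from source to target, column for column.

    Table names are interpolated directly, so they must be trusted values.
    """
    conn.execute(f"INSERT INTO {target} SELECT * FROM {source}")
    conn.commit()


def tables_match(conn, source, target):
    """Return True when both tables hold the same rows (row order ignored)."""
    src_rows = sorted(conn.execute(f"SELECT * FROM {source}").fetchall())
    tgt_rows = sorted(conn.execute(f"SELECT * FROM {target}").fetchall())
    return src_rows == tgt_rows


# In-memory database standing in for the real source and target connections
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE emp_src (empno INTEGER, ename TEXT, sal REAL)")
# Target 'borrows' the source schema, without primary or foreign keys
conn.execute("CREATE TABLE emp_tgt (empno INTEGER, ename TEXT, sal REAL)")
conn.executemany(
    "INSERT INTO emp_src VALUES (?, ?, ?)",
    [(7369, "SMITH", 800.0), (7499, "ALLEN", 1600.0)],
)

load_pass_through(conn, "emp_src", "emp_tgt")
print(tables_match(conn, "emp_src", "emp_tgt"))
```

For a mapping that filters or aggregates, an exact row comparison like this would not apply; comparing row counts or a few known values against the expected result would be the closer equivalent.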