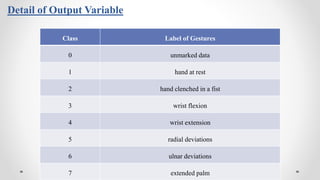

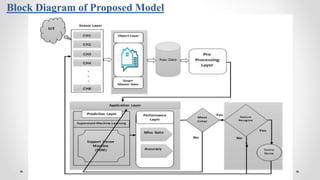

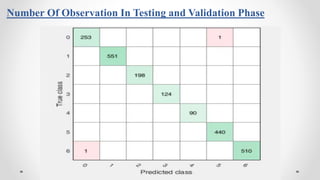

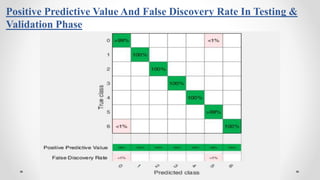

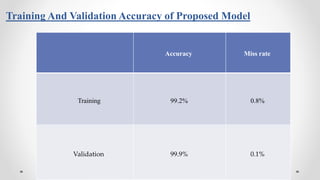

The document presents a thesis on an intelligent gesture classification system based on a support vector machine (SVM) that uses electromyography (EMG) signals to recognize hand movements. The proposed model reports an accuracy of 99.9% in classifying gestures by analyzing data gathered from eight EMG sensors. The research advocates future applications in real-time scenarios and further improvements in gesture classification methodologies.

![Introduction

With the growing presence of computerized systems in our day-to-day lives, the importance of Human-Computer Interface (HCI) systems has increased. HCI defines optimal usage for storage, connectivity, and display capabilities used in the information flow. The development of simple applications that understand the movements of the user's body and transform them into machine commands has been of considerable importance in recent years [1].

Specific biological signals can be used for neuronal communication with devices, and can be obtained from a particular organ, body, or cell network such as the nervous system.

Reference[1]](https://image.slidesharecdn.com/thesisdefencesajidrasheed-201202101219/85/Final-Thesis-Presentation-4-320.jpg)

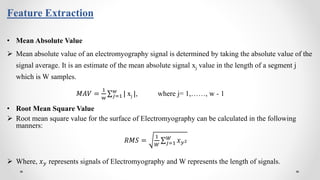

![Electromyography (EMG) is the study of electrical signals in the muscles, called myoelectric activity, which are recorded from the surface of the skin via sensors [2].

Electromyography is a medical technique that assesses the health of the muscles and of the nerve cells that control them (motor neurons). EMG signals acquired from muscles lend themselves to sophisticated methods for identification, decomposition, sorting, and classification.

Different EMG signal analysis methodologies and techniques serve this objective by providing quick and accurate ways to understand electromyographic signals and their nature.

Electromyography (EMG)

Reference[2]](https://image.slidesharecdn.com/thesisdefencesajidrasheed-201202101219/85/Final-Thesis-Presentation-6-320.jpg)

![Images of Electromyography

Reference[3,4]](https://image.slidesharecdn.com/thesisdefencesajidrasheed-201202101219/85/Final-Thesis-Presentation-7-320.jpg)

![Literature Review

• Lobov et al. [5]

Worked on the classification of hand gestures and implemented it in a dynamic gaming environment.

The proposed model classified seven hand movements using an Artificial Neural Network (ANN).

For data acquisition, a Thalmic EMG bracelet containing eight sensors was used.

The proposed model achieved an accuracy of up to 91.5% using the ANN.

• Alejandro et al. [6]

Presented an automated hand or wrist gesture identification system based on supervised machine learning techniques.

The proposed model used an open-access collection of EMG signal recordings from 36 subjects.

Six hand gestures were classified using a Convolutional Neural Network (CNN) and a random forest model, which obtained accuracies of up to 94.77% and 95.39%, respectively.

Reference[5,6]](https://image.slidesharecdn.com/thesisdefencesajidrasheed-201202101219/85/Final-Thesis-Presentation-8-320.jpg)

![Continue…

• Benalcázar et al. [7]

Proposed a real-time method for classifying hand movements.

The model used raw surface EMG data from eight sensors to measure the movement of the forearm.

The model used a K-Nearest Neighbors (KNN) classifier to identify five hand gestures without any feature extraction method.

The proposed model showed a best classification accuracy of 89.5% in recognizing hand gestures.

• Bian et al. [8]

Discussed four classification systems used to identify hand gestures, relying on pattern recognition of surface electromyographic (sEMG) signals.

The results indicate that the support vector machine outperforms the other classifiers in both accuracy and model preparation time. The classification accuracy of the system reaches about 92.25%.

Reference[7,8]](https://image.slidesharecdn.com/thesisdefencesajidrasheed-201202101219/85/Final-Thesis-Presentation-9-320.jpg)

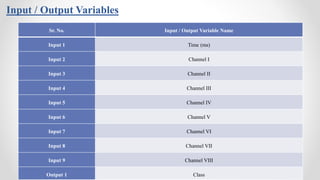

![Datasets

The proposed model uses a publicly available dataset from the UCI Machine Learning Repository [8] to classify hand gestures. The EMG data were acquired with a MYO Thalmic bracelet worn by the user on the forearm.

For the collection of data, 36 subjects participated, wearing Thalmic bracelets and performing seven basic gestures. The dataset contains ten attributes: one attribute is the time, recorded in milliseconds; eight attributes hold the eight EMG channels that record the movement of the gestures; and one attribute is the class, which contains eight gestures.

Reference[8]](https://image.slidesharecdn.com/thesisdefencesajidrasheed-201202101219/85/Final-Thesis-Presentation-13-320.jpg)
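The ten-attribute layout described on this slide can be sketched as a small parser. This is a minimal sketch, not the thesis code: the column names (`time`, `channel1` to `channel8`, `class`), the tab-separated format, and the two sample rows are assumptions made for illustration, and the sample values are invented.

```python
import csv
import io

# Assumed column layout: one time column, eight EMG channels, one class label.
COLUMNS = ["time"] + [f"channel{i}" for i in range(1, 9)] + ["class"]


def load_emg_rows(text):
    """Parse tab-separated EMG records into (features, labels).

    Each feature vector holds the eight channel readings of one record;
    the time column is read but not used as a feature.
    """
    reader = csv.DictReader(io.StringIO(text), delimiter="\t")
    features, labels = [], []
    for row in reader:
        features.append([float(row[f"channel{i}"]) for i in range(1, 9)])
        labels.append(int(row["class"]))
    return features, labels


# Two invented rows, used only to show the expected shape of the data.
SAMPLE = (
    "\t".join(COLUMNS) + "\n"
    "1\t0.1\t-0.2\t0.0\t0.3\t-0.1\t0.2\t0.0\t-0.3\t0\n"
    "2\t0.2\t-0.1\t0.1\t0.4\t-0.2\t0.1\t0.1\t-0.2\t1\n"
)

features, labels = load_emg_rows(SAMPLE)
```

Keeping the eight channel values as the feature vector and the class column as the label matches the way the slide describes the inputs to the classifier; any windowing or feature extraction would be a separate step.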

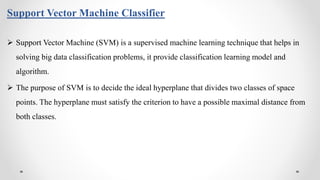

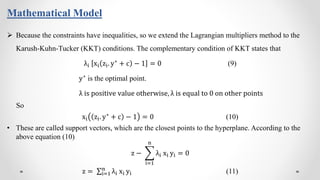

![Mathematical Model

When comparing hyperplanes, the hyperplane with the largest F is selected, where F is called the geometric margin of the dataset.

Our objective is to find an optimal hyperplane, which means we need to find the values of z and c of the optimal hyperplane.

The SVM optimization problem is a constrained optimization problem, so Lagrange multipliers are used to solve it.

• The Lagrangian function is

$$\mathcal{L}(z, c, \lambda) = \frac{1}{2}\, z \cdot z - \sum_{i=1}^{n} \lambda_i \left[ x_i \left( z \cdot y_i + c \right) - 1 \right]$$

With respect to z

$$\nabla_z \mathcal{L}(z, c, \lambda) = z - \sum_{i=1}^{n} \lambda_i x_i y_i = 0 \tag{5}$$

With respect to c

$$\nabla_c \mathcal{L}(z, c, \lambda) = -\sum_{i=1}^{n} \lambda_i x_i = 0 \tag{6}$$](https://image.slidesharecdn.com/thesisdefencesajidrasheed-201202101219/85/Final-Thesis-Presentation-22-320.jpg)
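The two stationarity conditions on this slide can be checked on a toy problem. The sketch below assumes, as the form of equations (5) and (6) suggests, that x_i is a class label in {-1, +1} and y_i is the feature vector. The two data points and the multipliers are chosen by hand for illustration and do not come from the thesis.

```python
def dot(u, v):
    """Inner product of two equal-length vectors."""
    return sum(a * b for a, b in zip(u, v))


def weights_from_multipliers(lambdas, labels, points):
    """Recover z from equation (5): z = sum_i lambda_i * x_i * y_i."""
    dim = len(points[0])
    z = [0.0] * dim
    for lam, x, y in zip(lambdas, labels, points):
        for k in range(dim):
            z[k] += lam * x * y[k]
    return z


def bias_from_support_vector(z, label, point):
    """For a support vector x_i * (z . y_i + c) = 1, so c = x_i - z . y_i
    when the label x_i is +1 or -1."""
    return label - dot(z, point)


# Toy data (assumption): one point per class, mirrored through the origin.
labels = [1.0, -1.0]                   # x_i
points = [[1.0, 1.0], [-1.0, -1.0]]    # y_i
lambdas = [0.25, 0.25]                 # hand-picked multipliers

z = weights_from_multipliers(lambdas, labels, points)
c = bias_from_support_vector(z, labels[0], points[0])

# Equation (6): the label-weighted multipliers must sum to zero.
balance = sum(lam * x for lam, x in zip(lambdas, labels))

# Both points should sit exactly on the margin: x_i * (z . y_i + c) = 1.
margins = [x * (dot(z, y) + c) for x, y in zip(labels, points)]
```

With these values both points are support vectors, so every multiplier is positive and every margin constraint is tight. In a real dataset most multipliers would be zero and only the support vectors would contribute to z.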

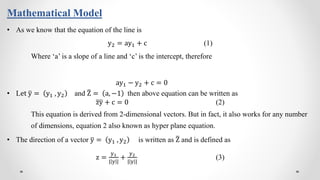

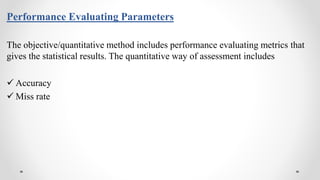

![Comparison of Proposed IGCS-SVM With Previous Work

| Model | Accuracy | Miss Rate |
|---|---|---|
| Benalcázar et al. (2017) [10] | 86% | 14% |
| Chawathe (2019) [9] | 89% | 11% |
| Lobov et al. (2018) [5] | 91.5% | 8.5% |
| Alejandro et al. (2020) [6], CNN model | 94.77% | 5.23% |
| Alejandro et al. (2020) [6], random forest model | 95.39% | 4.61% |
| Proposed IGCS-SVM model | 99.9% | 0.1% |

Reference[5,6,9,10]](https://image.slidesharecdn.com/thesisdefencesajidrasheed-201202101219/85/Final-Thesis-Presentation-36-320.jpg)
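In this comparison the miss rate is the complement of the accuracy, that is, miss rate = 100% minus accuracy. A short sketch of that arithmetic follows, using the accuracy figures reported on the slide. The dictionary keys and the `miss_rate` helper are illustrative names, not part of the thesis.

```python
# Accuracy values in percent, as reported in the comparison slide.
reported_accuracy = {
    "Benalcazar et al. (2017)": 86.0,
    "Chawathe (2019)": 89.0,
    "Lobov et al. (2018)": 91.5,
    "Alejandro et al. (2020), CNN": 94.77,
    "Alejandro et al. (2020), random forest": 95.39,
    "Proposed IGCS-SVM": 99.9,
}


def miss_rate(accuracy_percent):
    """Miss rate in percent, rounded to two decimals to hide float noise."""
    return round(100.0 - accuracy_percent, 2)


miss_rates = {model: miss_rate(acc) for model, acc in reported_accuracy.items()}
```

Rounding to two decimals matches the precision of the reported figures and avoids floating-point residue such as 5.230000000000004.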

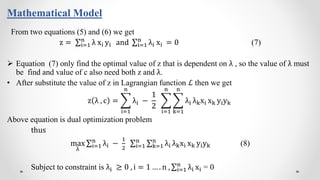

![Conclusion

In this thesis, an IGCS-SVM model is proposed for an intelligent gesture classification system based on electromyography (EMG) signals.

The proposed model collects data from eight EMG sensors and analyzes it to classify gestures. A support vector machine classifies the hand gestures in this model.

EMG signals are acquired from different muscle locations through the Thalmic Myo armband, and the support vector machine then classifies the acquired signals. The proposed model communicates with computing devices through IoT.

The presented IGCS-SVM model achieved a gesture classification accuracy of 99.9% using SVM. Computational results show that the support vector machine is a good choice for classifying hand gestures.

The simulation findings show that the suggested methodology produced better outcomes than the previous approaches of Lobov et al. (2018) [5], Alejandro et al. (2020) [6], Chawathe (2019) [9], and Benalcázar et al. (2017) [10].](https://image.slidesharecdn.com/thesisdefencesajidrasheed-201202101219/85/Final-Thesis-Presentation-37-320.jpg)

![[1]. Ahsan, M. R., Ibrahimy, M. I., & Khalifa, O. O. (2009). EMG signal classification for human computer interaction: a

review. European Journal of Scientific Research, 33(3), 480-501.

[2]. Reaz, M. B. I., Hussain, M. S., & Mohd-Yasin, F. (2006). Techniques of EMG signal analysis: detection, processing,

classification and applications. Biological procedures online, 8(1), 11-35.

[3]. https://encrypted-tbn0.gstatic.com/images?q=tbn%3AANd9GcQAApwTeIx8t4NV9Yz5kA71grP2wepYR1-p8g&usqp=CAU

[4]. https://www.mdpi.com/sensors/sensors-18-00183/article_deploy/html/images/sensors-18-00183-g001.png

[5]. Lobov, S., Krilova, N., Kastalskiy, I., Kazantsev, V., & Makarov, V. A. (2018). Latent factors limiting the performance of

sEMG-interfaces. Sensors, 18(4), 1122.

[6]. Alejandro Mora Rubio, J. A. A. G., Reinel Tabares-Soto, Simón Orozco-Arias, Cristian Felipe Jiménez Varón, Jorge

Iván Padilla Buriticá (2020). Identification of Hand Movements from Electromyographic Signals Using Machine

Learning. doi: 10.20944/preprints202002.0443.v1

[7]. Benalcázar, M. E., Jaramillo, A. G., Zea, A., Páez, A., & Andaluz, V. H. (2017). Hand gesture recognition using

machine learning and the Myo armband. Paper presented at the 2017 25th European Signal Processing Conference

(EUSIPCO).

References](https://image.slidesharecdn.com/thesisdefencesajidrasheed-201202101219/85/Final-Thesis-Presentation-39-320.jpg)

![Continued…

[8]. https://archive.ics.uci.edu/ml/datasets/EMG+data+for+gestures

[9]. Chawathe, S. S. (2019). Hand Gestures from Low-Cost Surface-Electromyographs. IEEE National Aerospace and

Electronics Conference (NAECON).

[10]. Benalcázar, M. E., Motoche, C., Zea, J. A., Jaramillo, A. G., Anchundia, C. E., Zambrano, P., . . . Pérez, M. (2017). Real-

time hand gesture recognition using the Myo armband and muscle activity detection. Paper presented at the 2017

IEEE Second Ecuador Technical Chapters Meeting (ETCM).](https://image.slidesharecdn.com/thesisdefencesajidrasheed-201202101219/85/Final-Thesis-Presentation-40-320.jpg)