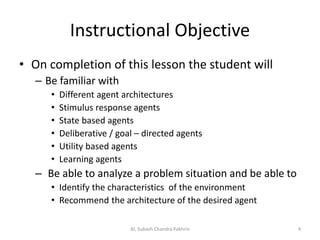

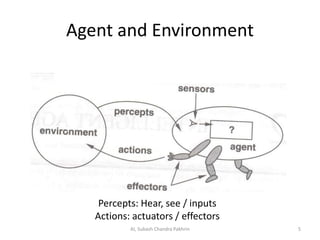

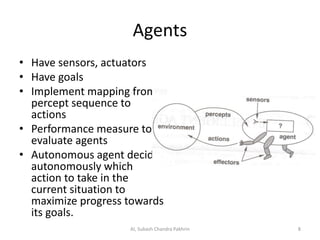

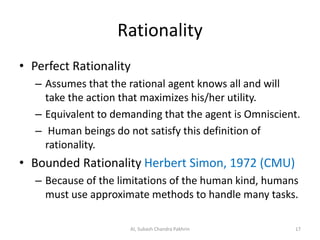

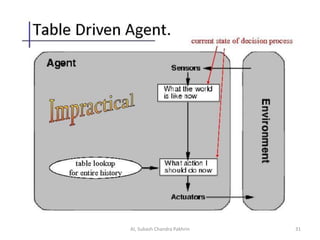

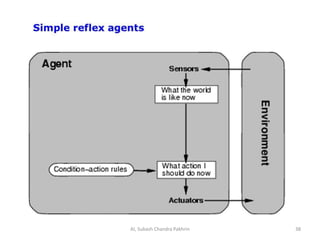

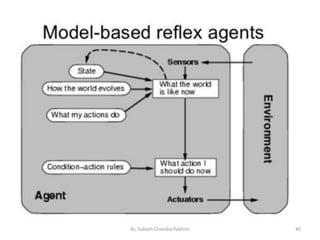

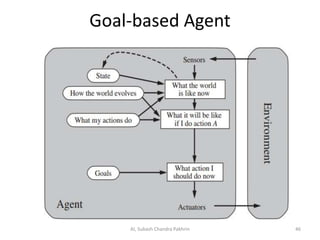

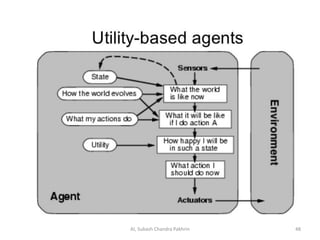

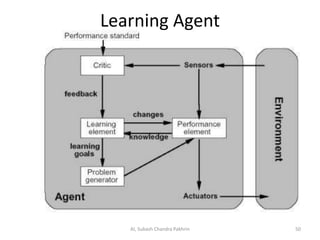

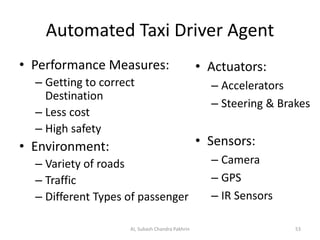

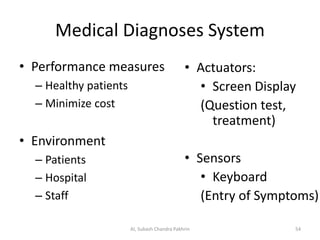

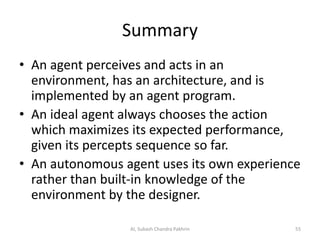

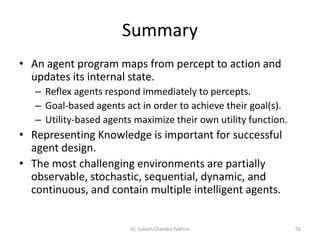

This document surveys intelligent agents and their architectures. It defines an agent as an entity that operates in an environment, perceiving it through sensors and acting on it through effectors to achieve its goals. It outlines five architectures: stimulus-response, state-based, deliberative, utility-based, and learning agents. It also covers rational agents, bounded rationality, and the PEAS (Performance measure, Environment, Actuators, Sensors) description of an agent's task.
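To make the sense-then-act loop and the PEAS description concrete, here is a minimal Python sketch. It is an illustration only and is not taken from the slides: the two-cell vacuum world, the class names (`PEAS`, `StimulusResponseAgent`, `StateBasedAgent`), and the rule table are all assumptions chosen for brevity. It contrasts a stimulus-response agent, which maps the current percept straight to an action, with a state-based agent, which also remembers what it has already seen.

```python
from dataclasses import dataclass
from typing import Dict, List, Set, Tuple

# A percept in this hypothetical world is (location, is_dirty).
Percept = Tuple[str, bool]


@dataclass
class PEAS:
    """Task description: Performance measure, Environment, Actuators, Sensors."""
    performance: List[str]
    environment: List[str]
    actuators: List[str]
    sensors: List[str]


# Assumed PEAS entries for the toy vacuum task (illustrative values only).
VACUUM_TASK = PEAS(
    performance=["cells cleaned", "moves taken"],
    environment=["cell A", "cell B", "dirt"],
    actuators=["wheels", "suction"],
    sensors=["location sensor", "dirt sensor"],
)


class StimulusResponseAgent:
    """Chooses an action from the current percept alone, via a fixed rule table."""

    def __init__(self, rules: Dict[Percept, str], default: str = "noop"):
        self.rules = rules
        self.default = default

    def act(self, percept: Percept) -> str:
        return self.rules.get(percept, self.default)


class StateBasedAgent:
    """Keeps internal state (cells seen so far) and uses it when choosing an action."""

    def __init__(self) -> None:
        self.visited: Set[str] = set()

    def act(self, percept: Percept) -> str:
        location, dirty = percept
        self.visited.add(location)
        if dirty:
            return "suck"
        # Once both cells have been seen clean-or-cleaned, stop moving.
        if self.visited >= {"A", "B"}:
            return "noop"
        return "right" if location == "A" else "left"


# Hypothetical rule table for the stimulus-response agent.
VACUUM_RULES: Dict[Percept, str] = {
    ("A", True): "suck",
    ("B", True): "suck",
    ("A", False): "right",
    ("B", False): "left",
}

if __name__ == "__main__":
    reflex = StimulusResponseAgent(VACUUM_RULES)
    stateful = StateBasedAgent()
    for percept in [("A", False), ("B", False)]:
        print(percept, reflex.act(percept), stateful.act(percept))
```

In this sketch the stimulus-response agent keeps shuttling between clean cells because it has no memory, while the state-based agent stops once it has visited both. Deliberative, utility-based, and learning agents would add planning, a utility function, and rule updates respectively on top of the same `act` interface.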