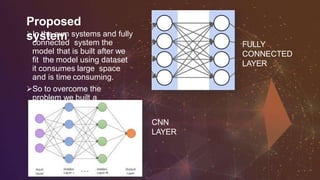

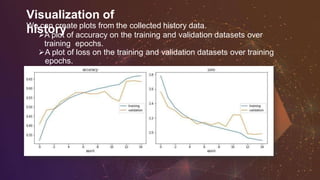

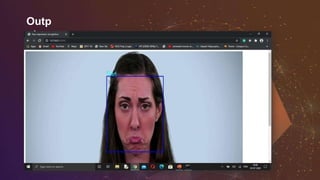

This document describes a mini project on facial expression recognition that uses a convolutional neural network (CNN) to classify seven basic emotions from image data. The objective is accurate emotion detection for improved human-computer interaction, built with Python, Keras, and TensorFlow. To address the challenge of recognizing emotions in real time, the project develops and trains a CNN model with data augmentation, reaching a validation accuracy of 96.24%.
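
As a rough illustration of two steps mentioned above, the sketch below shows one simple augmentation (a horizontal flip) and how a seven-way softmax output could be mapped to an emotion label. It uses only the Python standard library so it runs without Keras or TensorFlow. It is not the project's actual code: the label names (the seven classes commonly used in the FER-2013 dataset), the choice of augmentation, the function names, and the example scores are all assumptions made for illustration.

```python
import math

# Assumed label set (the seven classes commonly used in FER-2013); the source
# only says "seven basic emotions" and does not name them.
EMOTIONS = ["angry", "disgust", "fear", "happy", "sad", "surprise", "neutral"]


def horizontal_flip(image):
    """Mirror a 2-D grayscale image (a list of pixel rows) left to right."""
    return [list(reversed(row)) for row in image]


def softmax(logits):
    """Convert raw class scores into probabilities that sum to 1."""
    peak = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def predict_emotion(logits):
    """Return the most probable emotion label and its probability."""
    probs = softmax(logits)
    best = max(range(len(probs)), key=probs.__getitem__)
    return EMOTIONS[best], probs[best]


if __name__ == "__main__":
    tiny_face = [[0, 1, 2], [3, 4, 5]]  # stand-in for a real face crop
    print(horizontal_flip(tiny_face))
    # Hypothetical scores, as if produced by the CNN's final dense layer.
    print(predict_emotion([0.1, -1.2, 0.3, 2.5, 0.0, 0.7, 1.1]))
```

In a Keras pipeline, the flip would typically be handled by an augmentation layer or generator, and the softmax by the model's final layer. The plain-Python versions here only make the underlying operations explicit.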