These are the slides from a study-group talk I gave internally at my company. A highlights version is available as the blog post below.

"Playing Around with Self-Play in Deep Reinforcement Learning" (original title: 「深層強化学習のself-playで遊んでみた」): https://recruit.gmo.jp/engineer/jisedai/blog/self-play/

Animations of the results are available in the following GitHub repository.

https://github.com/jkatsuta/17_4q_supplement

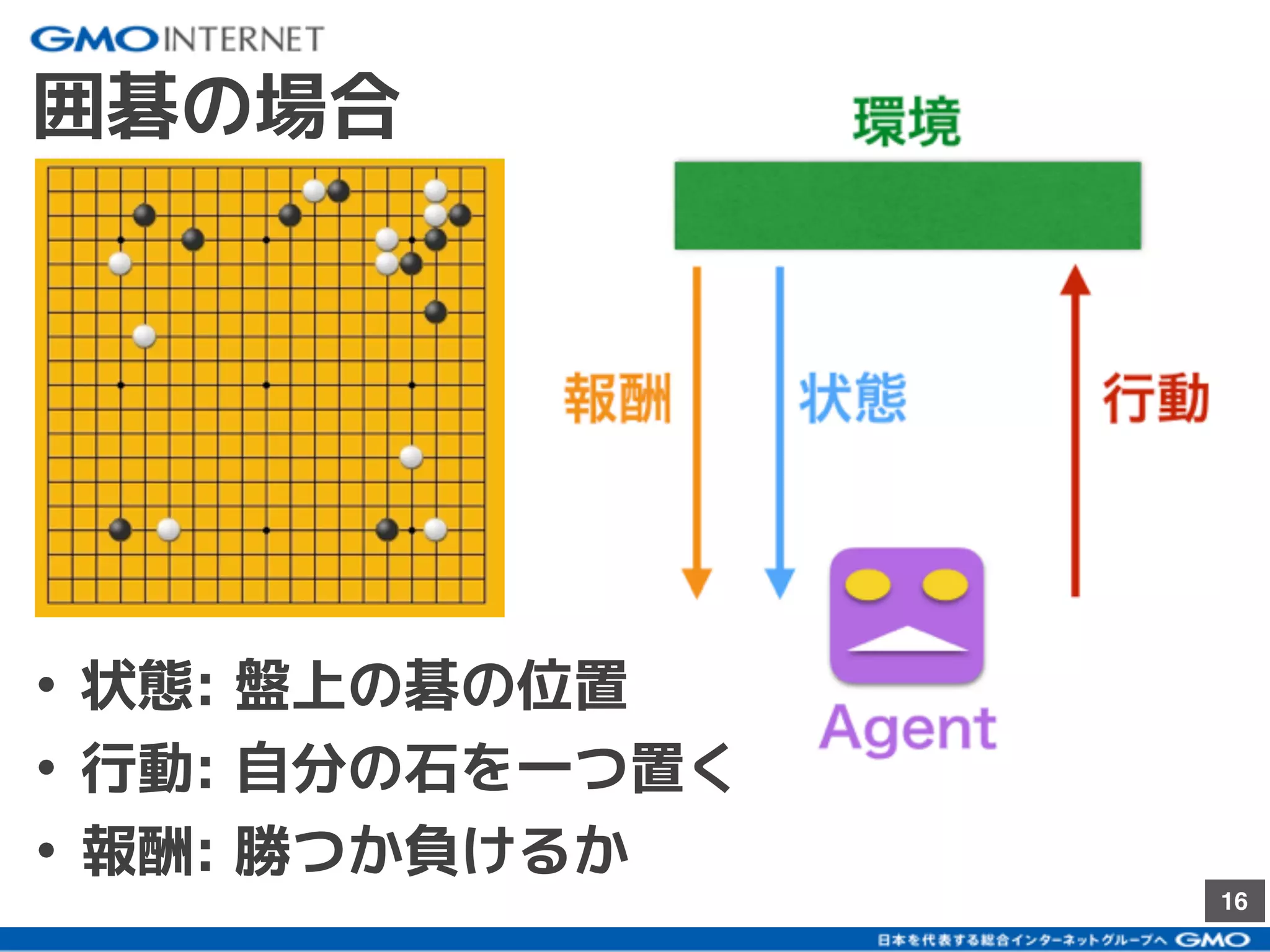

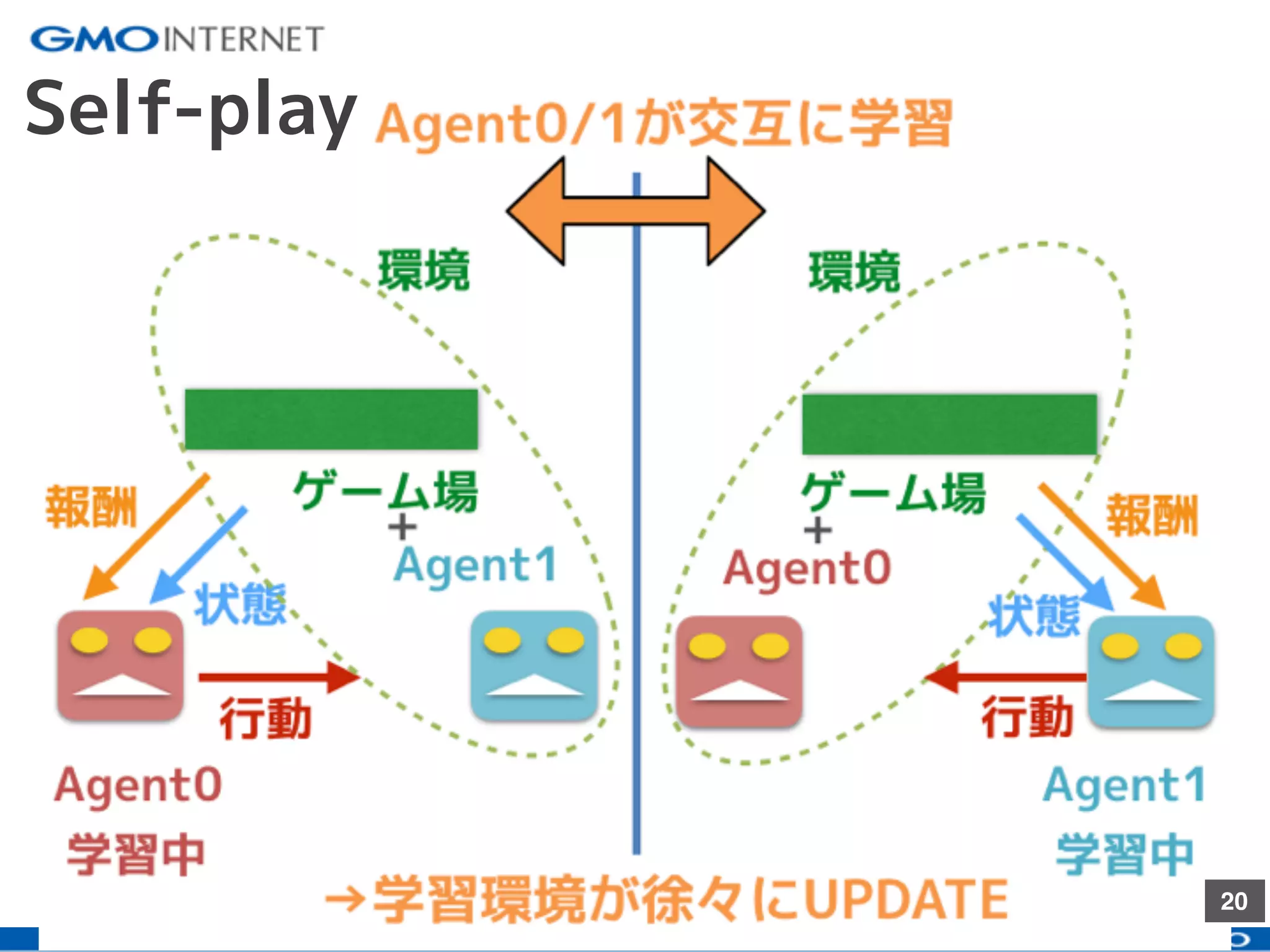

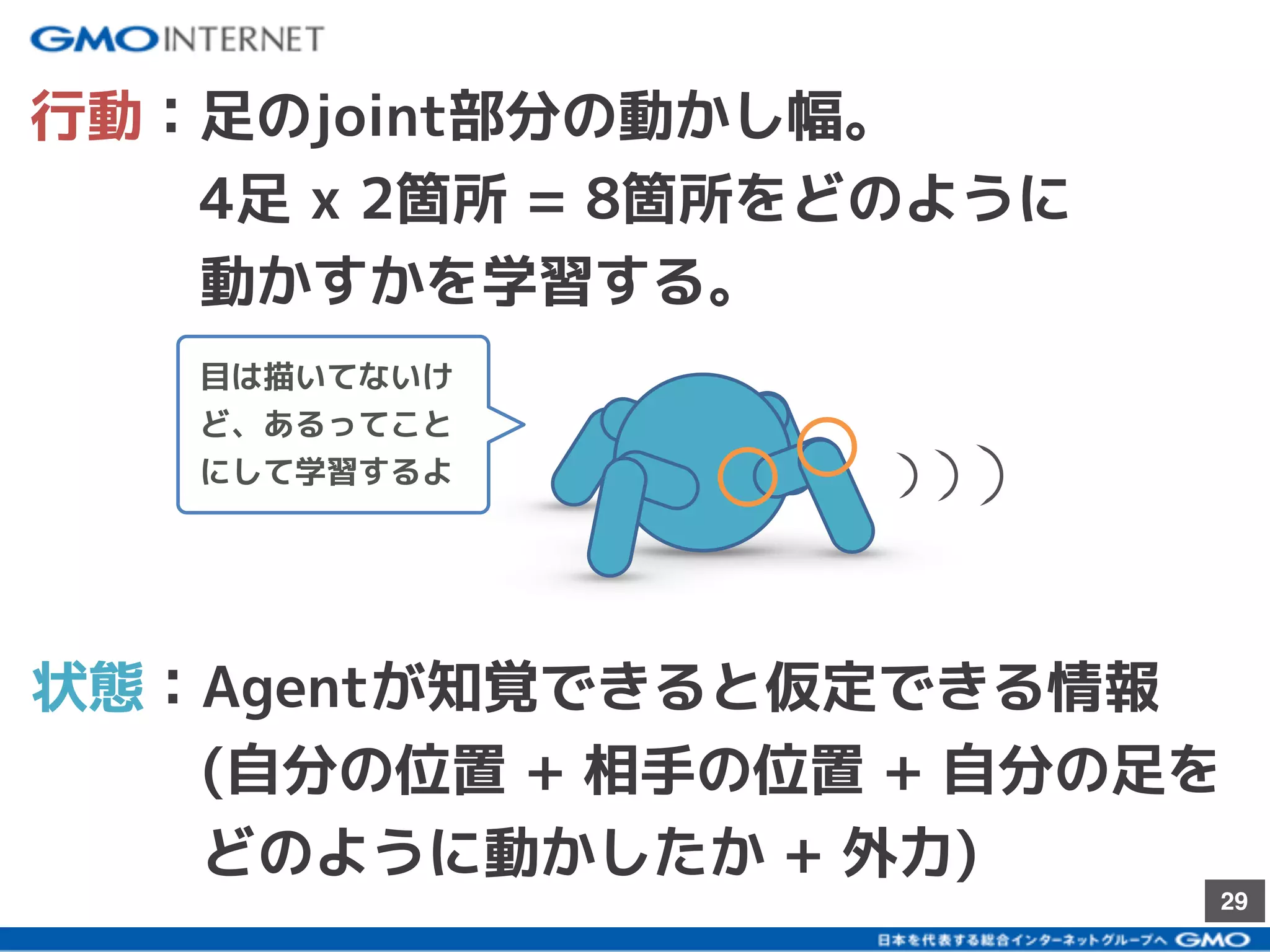

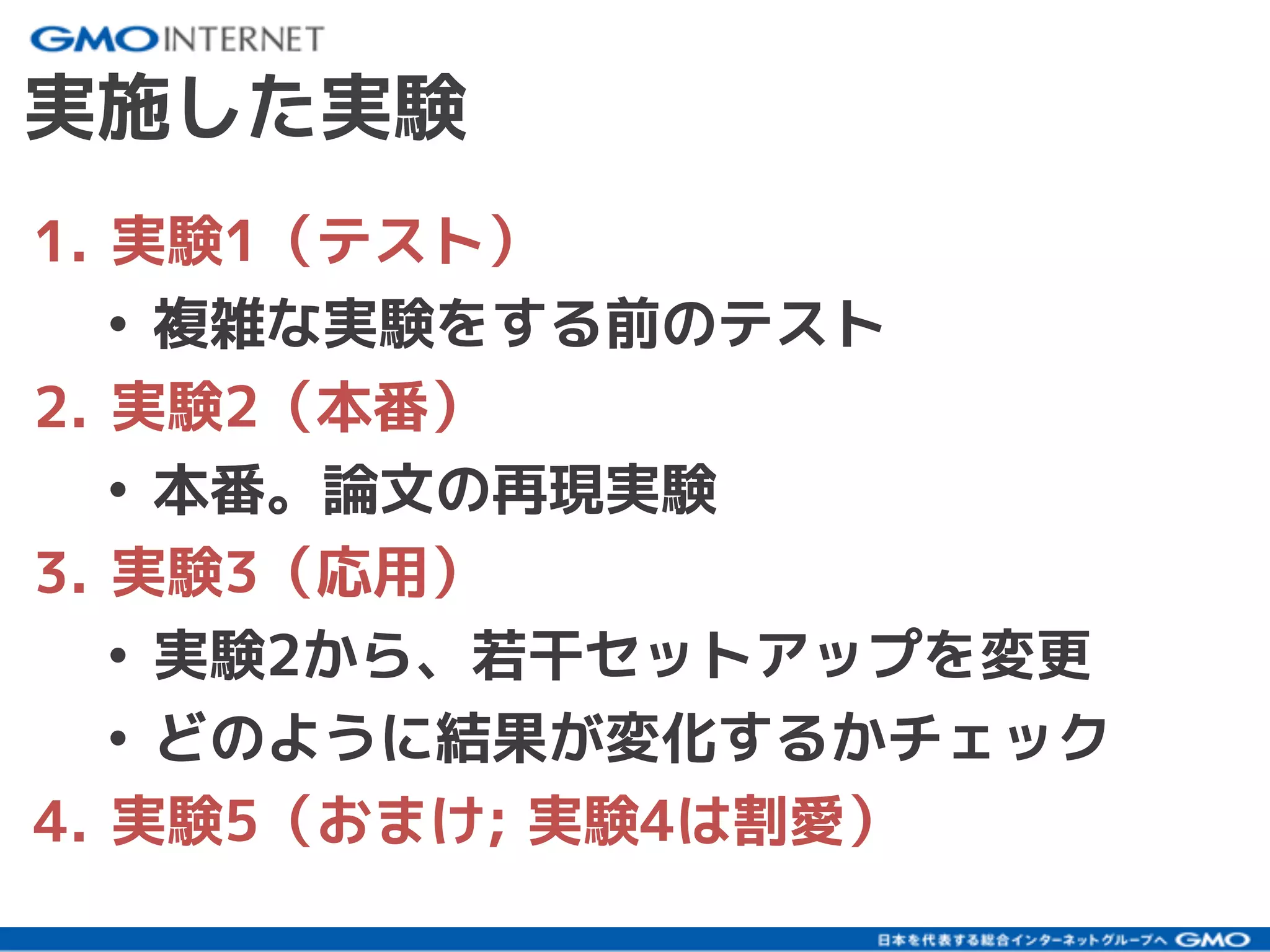

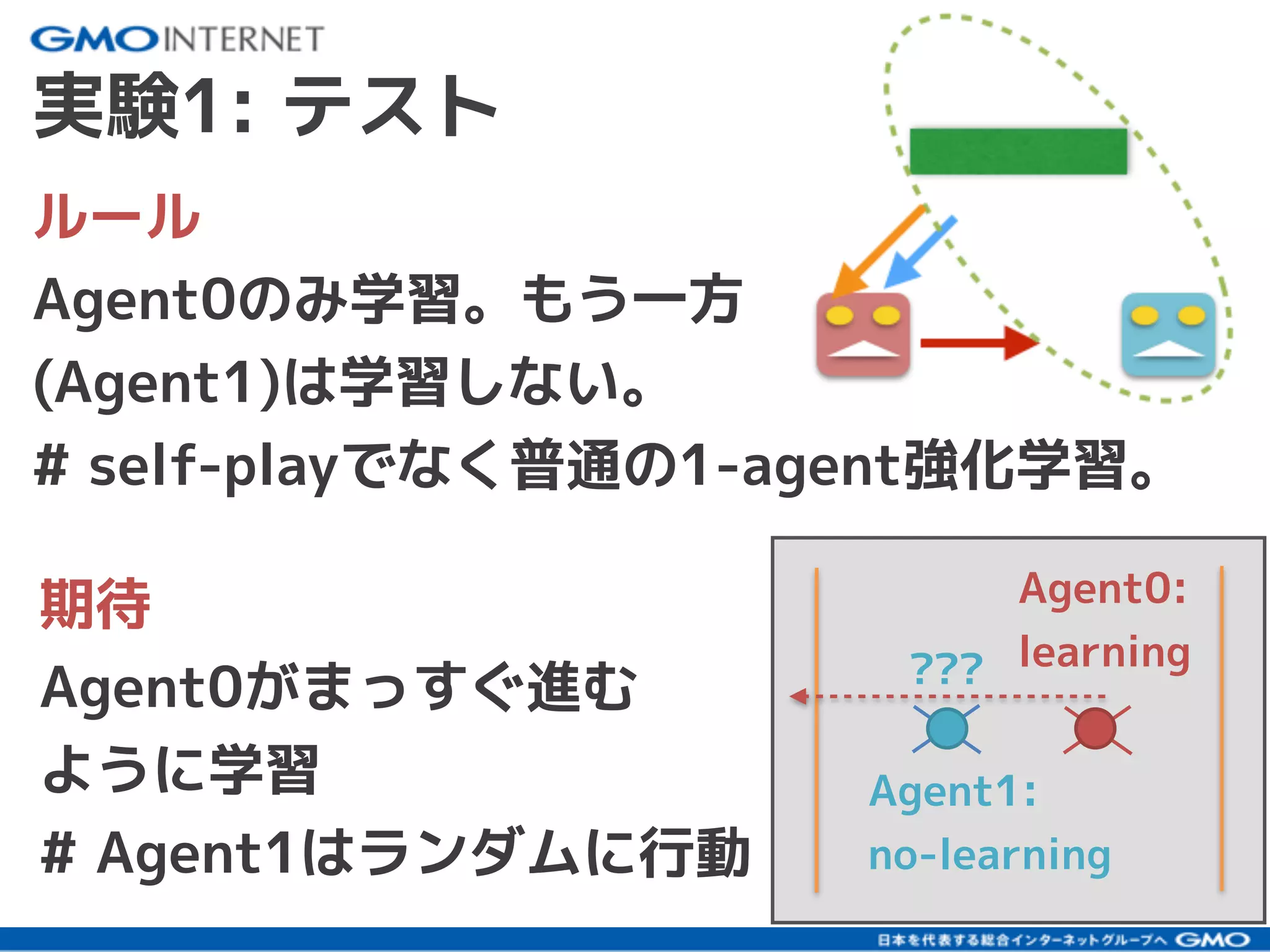

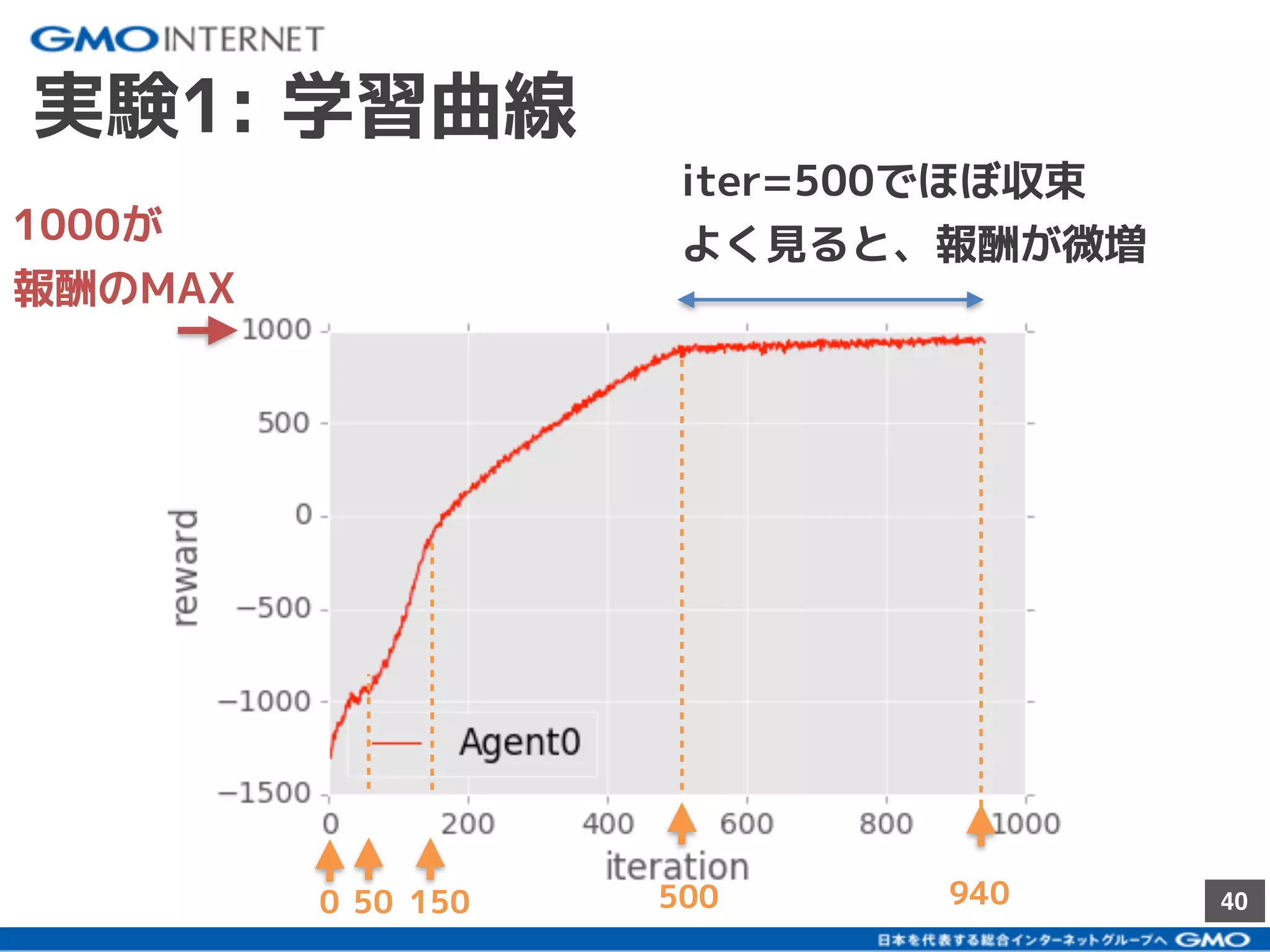

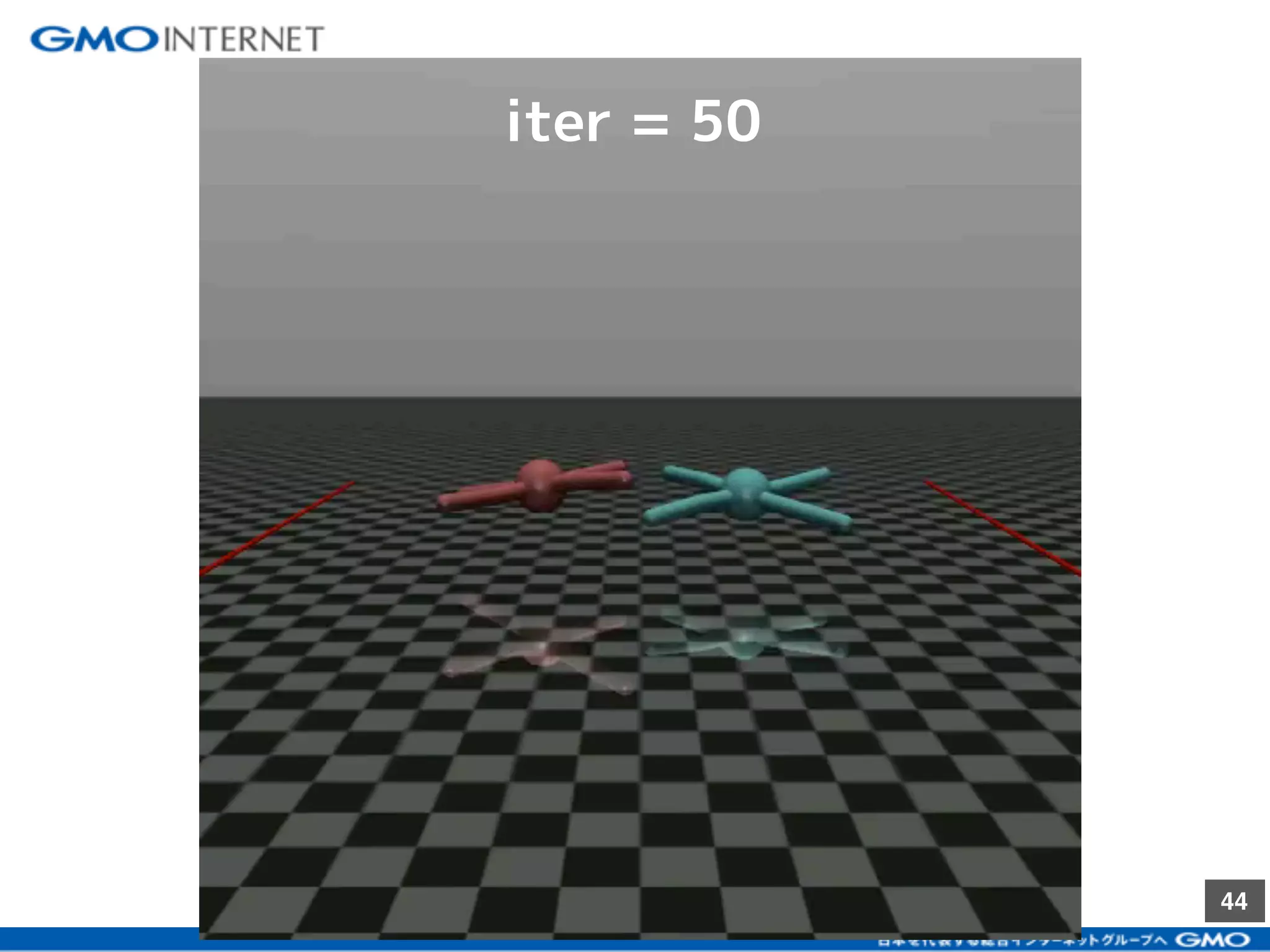

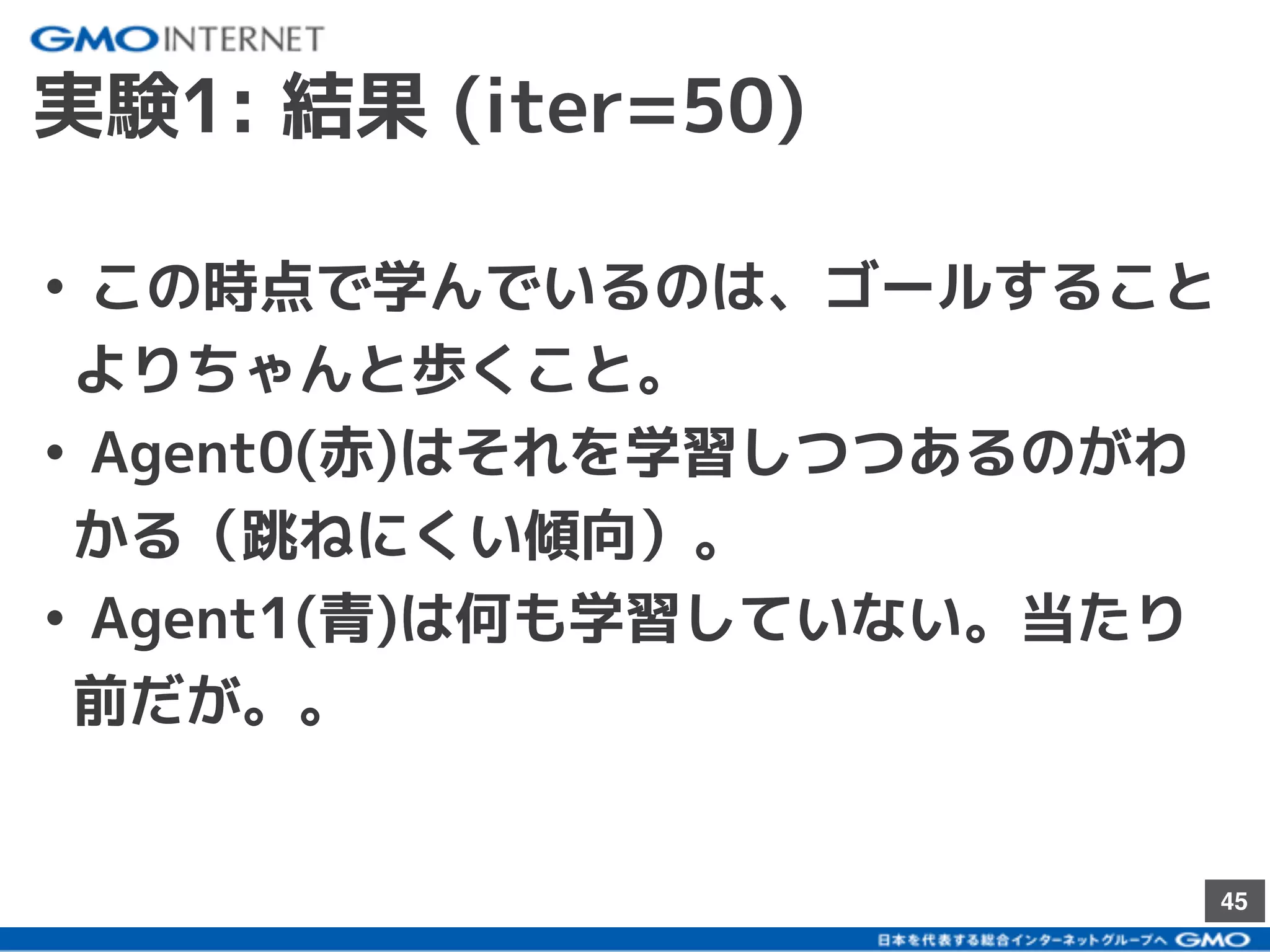

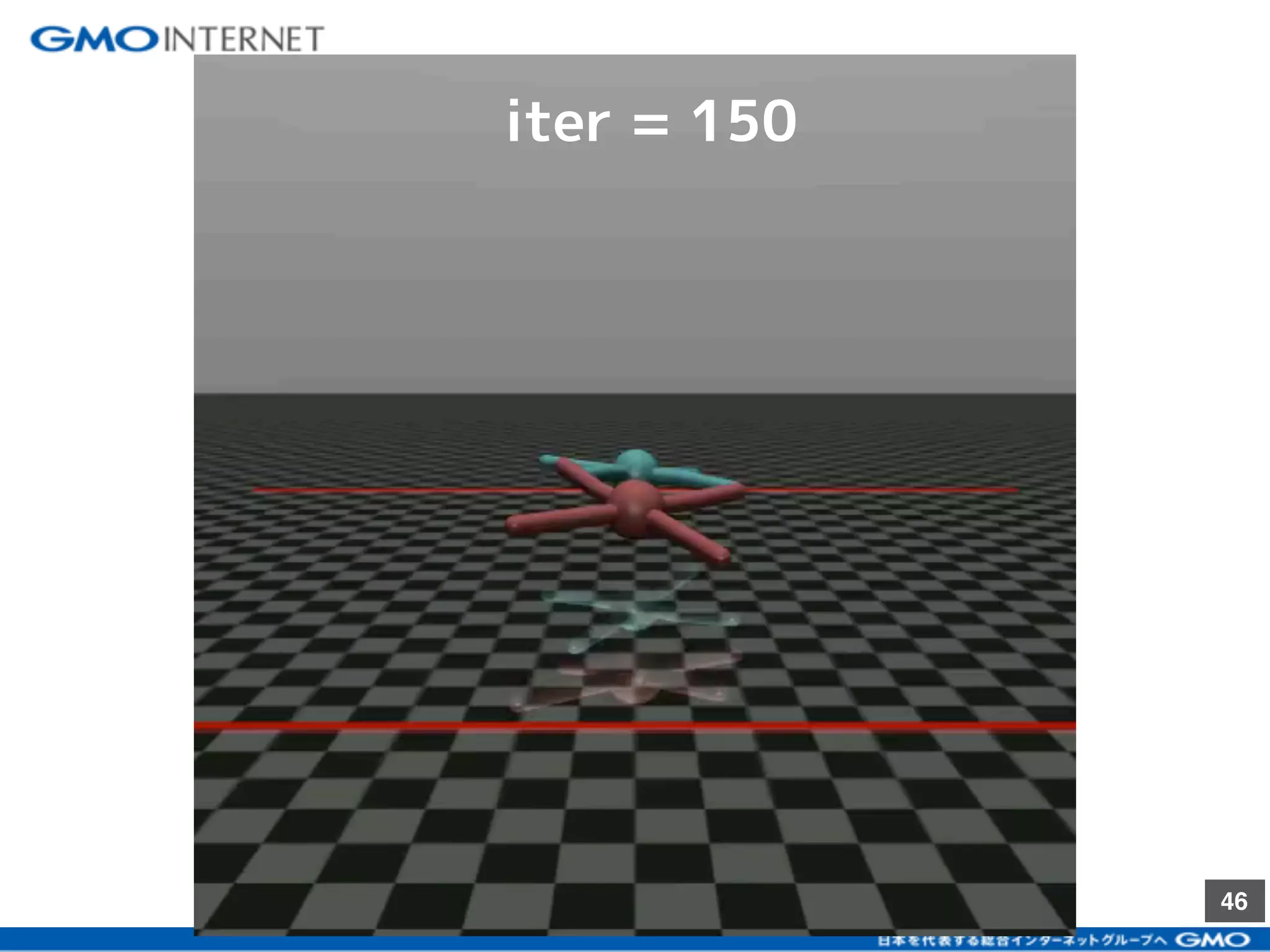

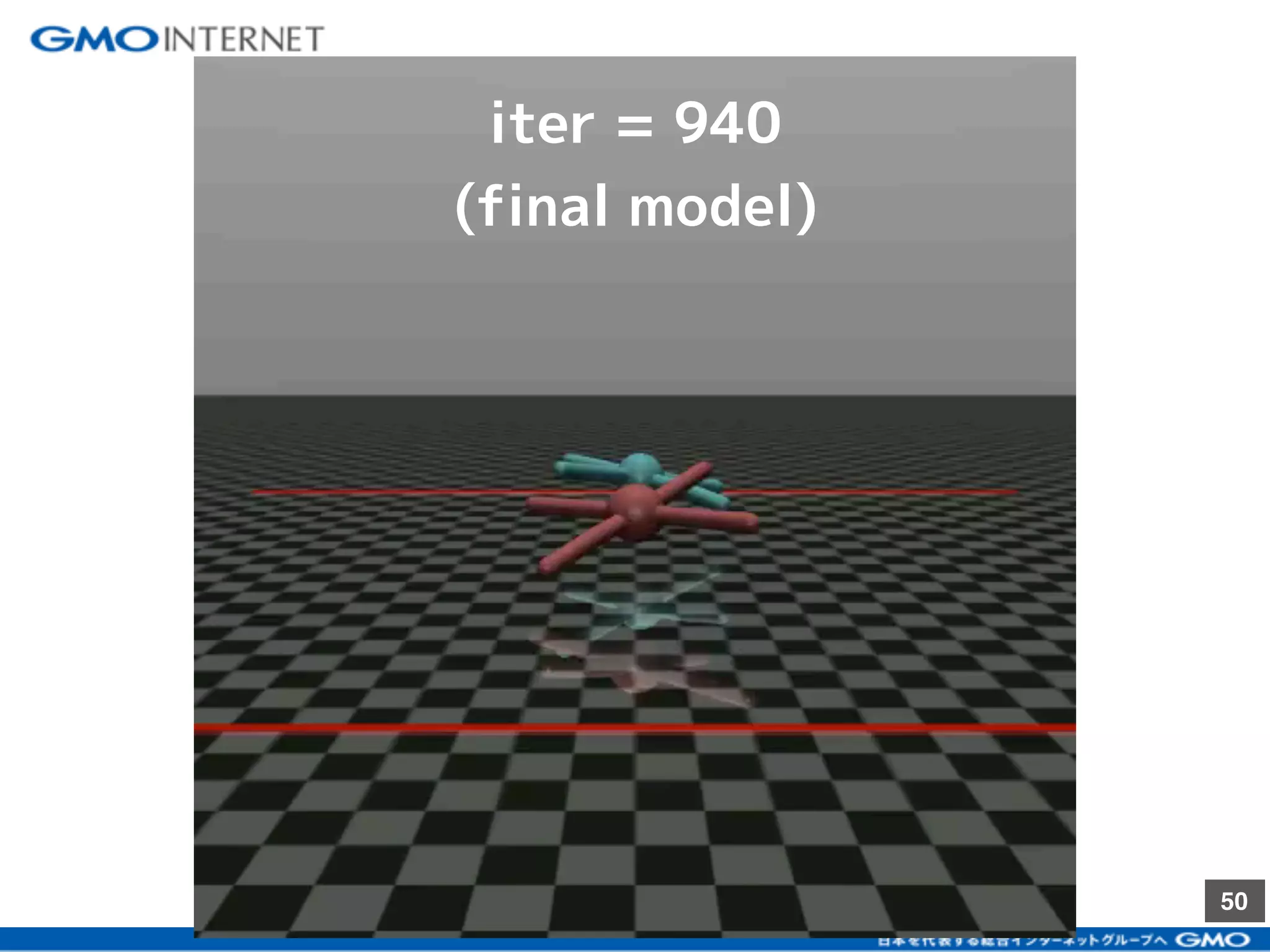

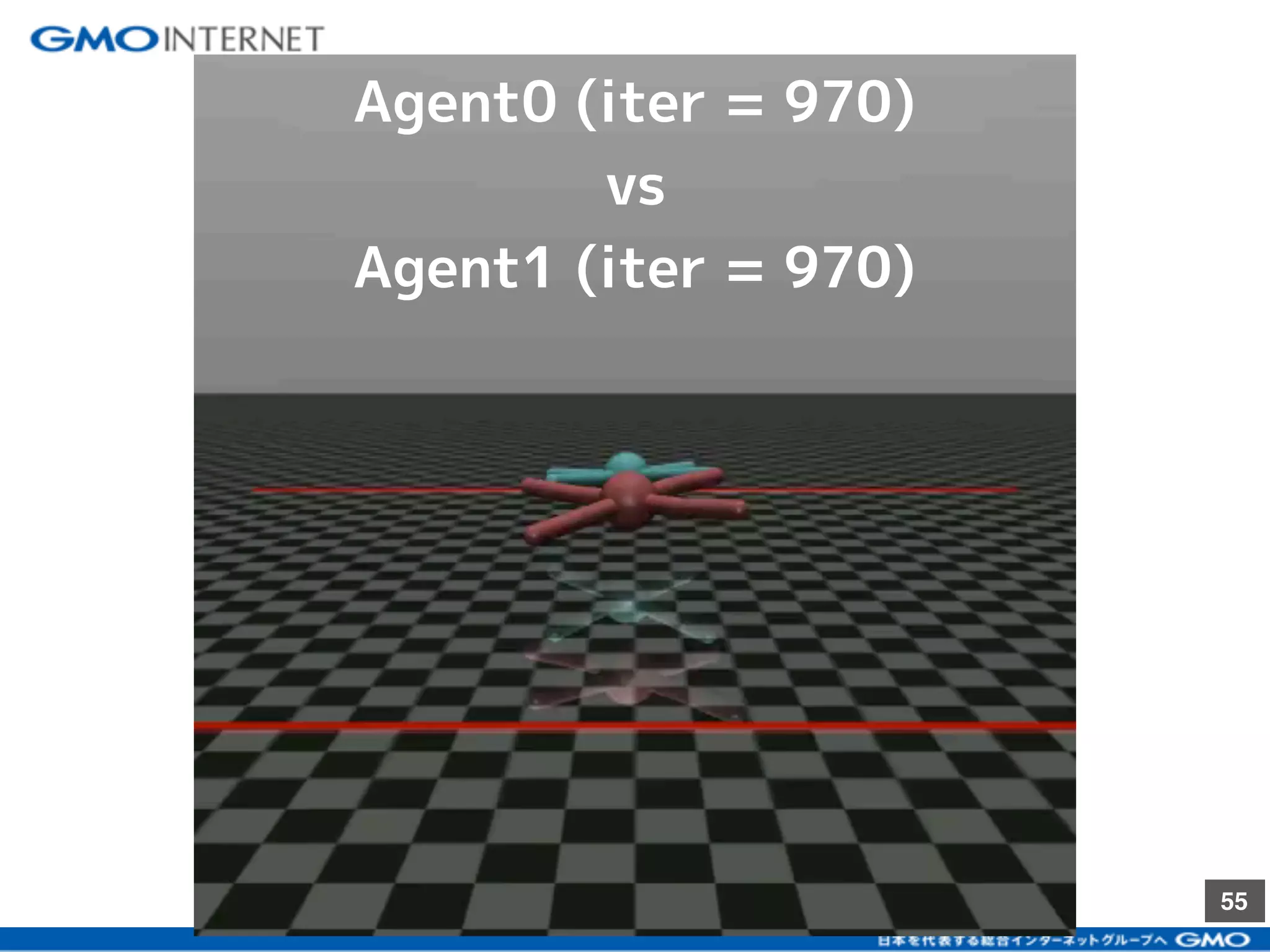

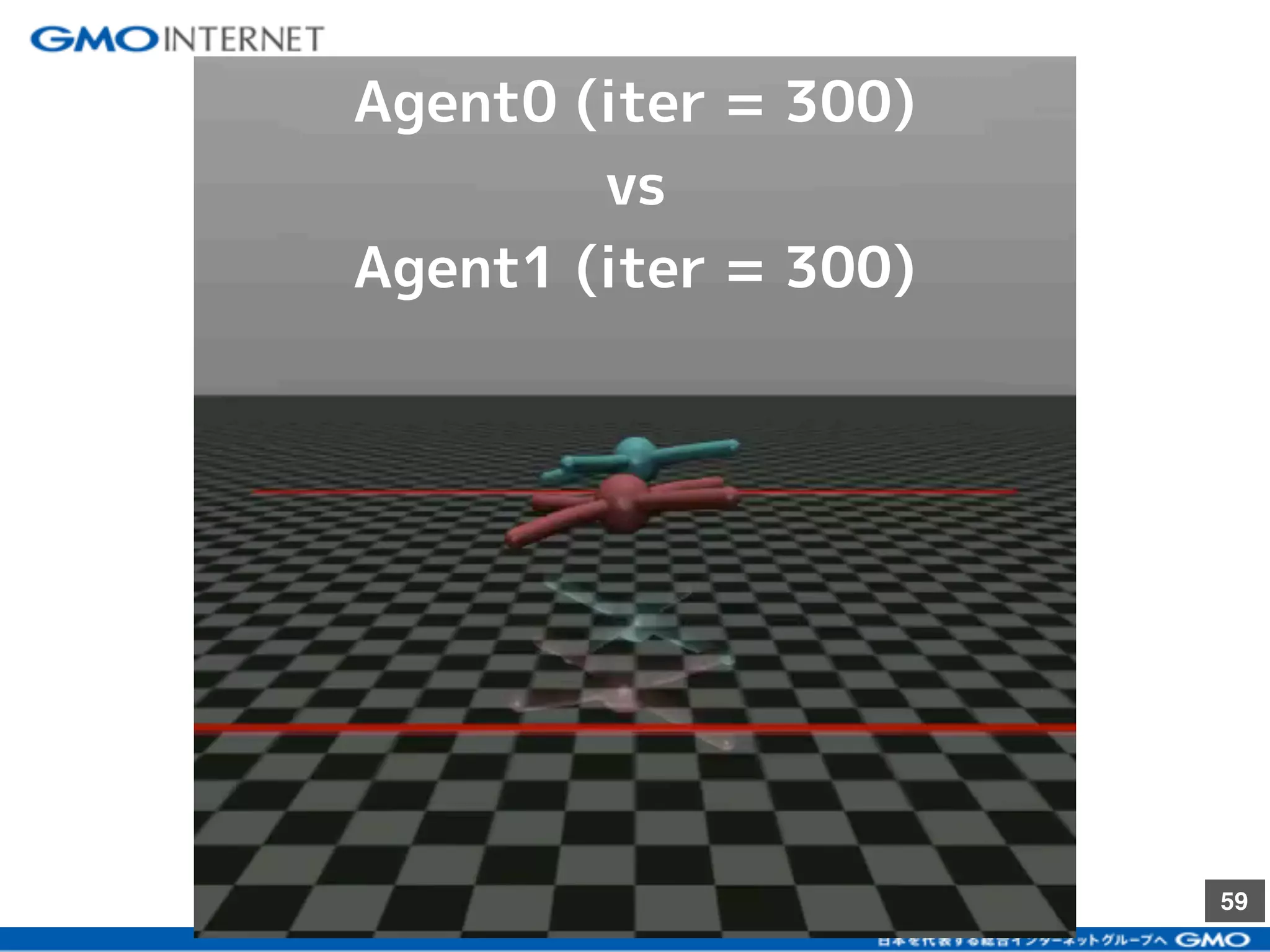

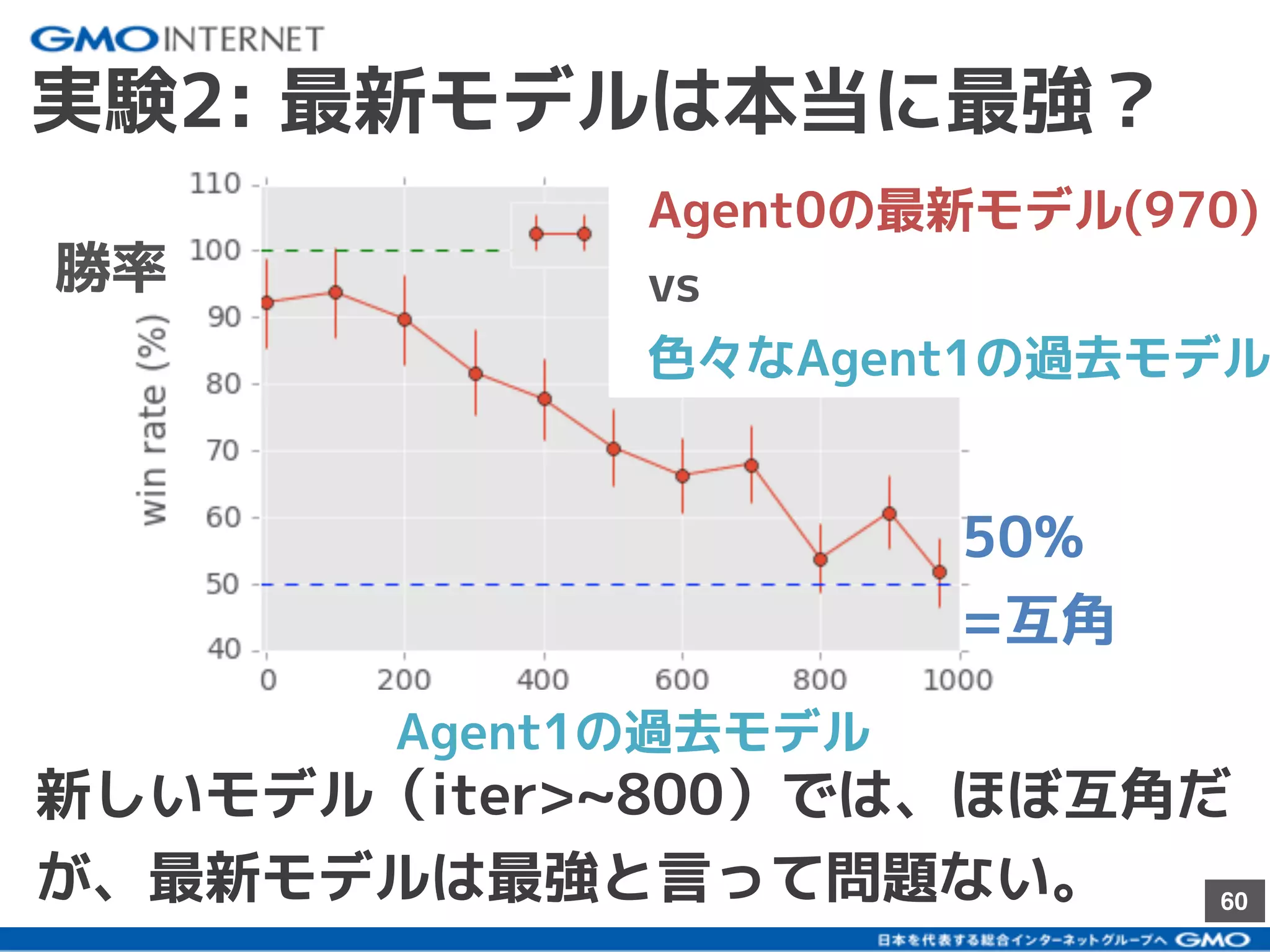

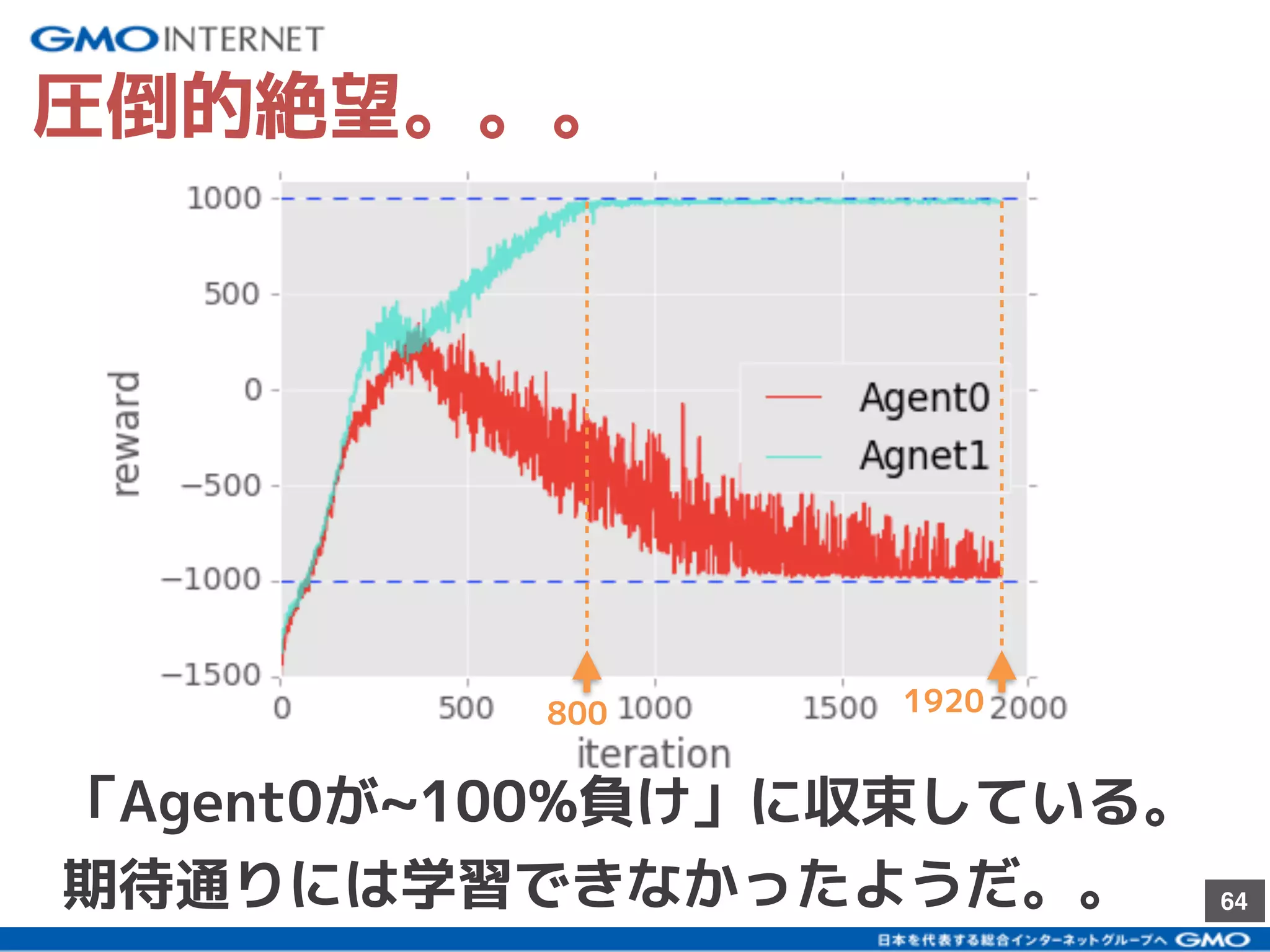

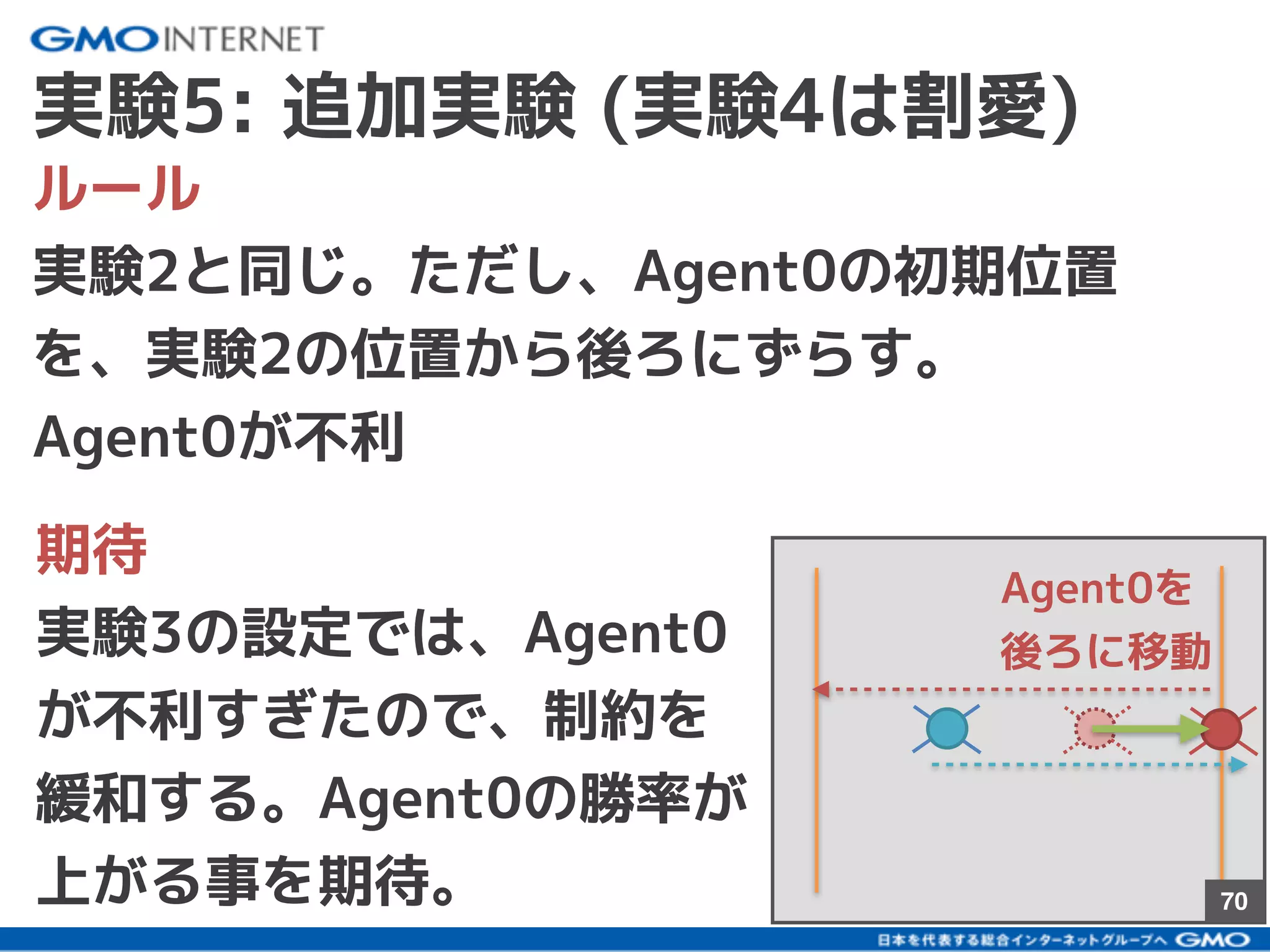

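Drawing on Bansal+17, published in October 2017, I talked about the results of using self-play in deep reinforcement learning to pit two agents against each other in an attempt to learn complex behaviors. I also ran training under conditions not covered in the paper, such as different initial positions, and discussed how the learned behavior changed.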