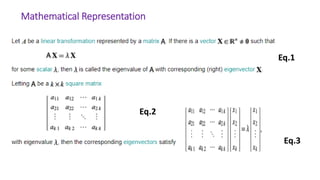

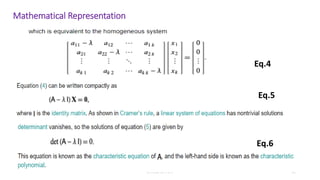

The presentation discusses eigenvalues and eigenvectors, their mathematical significance, and various applications in fields such as physics, engineering, and machine learning. Key points include using these concepts for dimensionality reduction in data analysis, and their importance in the stability of structures like bridges and in communication systems. It concludes that eigenvalues and eigenvectors are crucial for feature extraction and have widespread implications across multiple disciplines.

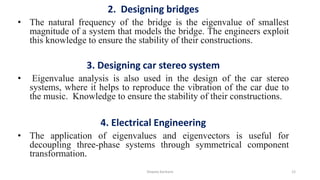

![Shweta Kanhere 21

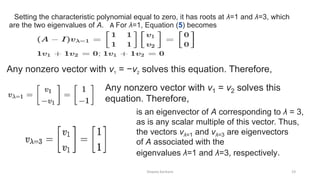

The transformation matrix A preserves the direction of purple vectors parallel

to vλ=1 = [1 −1]T and blue vectors parallel to vλ=3 = [1 1]T.The red vectors are

not parallel to either eigenvector, so, their directions are changed by the

transformation. The lengths of the purple vectors are unchanged after the

transformation (due to their eigenvalue of 1), while blue vectors are three times

the length of the original (due to their eigenvalue of 3)](https://image.slidesharecdn.com/eigenvalueandeigenvectorsshwetak-220106105416/85/Eigen-value-and-eigen-vectors-shwetak-21-320.jpg)
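The behaviour described in the slide caption can be checked numerically. The sketch below is illustrative only: the slide text does not print the matrix, so `A = [[2, 1], [1, 2]]` is inferred here from the two stated eigenpairs (a 2×2 matrix is fixed by two independent eigenvectors and their eigenvalues). The red test vector `[1, 0]` and the helper names `apply`, `cross`, and `length` are hypothetical choices made for this example.

```python
import math

# Assumption: A is inferred from the eigenpairs in the caption, not quoted from the slide.
# A [1, -1]^T = 1 * [1, -1]^T  and  A [1, 1]^T = 3 * [1, 1]^T  give  A = [[2, 1], [1, 2]].
A = [[2.0, 1.0],
     [1.0, 2.0]]


def apply(matrix, v):
    """Multiply a 2x2 matrix by a 2-component vector."""
    return [matrix[0][0] * v[0] + matrix[0][1] * v[1],
            matrix[1][0] * v[0] + matrix[1][1] * v[1]]


def cross(u, v):
    """2D cross product; it is zero exactly when u and v lie on the same line."""
    return u[0] * v[1] - u[1] * v[0]


def length(v):
    """Euclidean length of a 2-component vector."""
    return math.hypot(v[0], v[1])


purple = [1.0, -1.0]  # eigenvector for eigenvalue 1
blue = [1.0, 1.0]     # eigenvector for eigenvalue 3
red = [1.0, 0.0]      # hypothetical vector that is not an eigenvector

for name, v in [("purple", purple), ("blue", blue), ("red", red)]:
    w = apply(A, v)
    same_line = cross(v, w) == 0.0
    scale = length(w) / length(v)
    print(f"{name}: A v = {w}, stays on its line: {same_line}, length ratio: {scale:.3f}")
```

The purple and blue vectors stay on their original lines and are scaled by their eigenvalues, while the red vector is rotated off its line, which is the distinction the caption draws.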