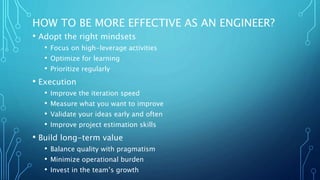

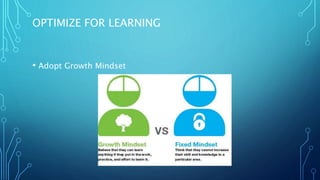

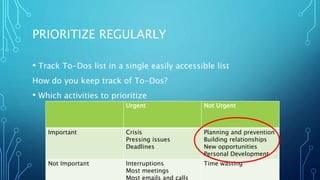

The document outlines strategies engineers can use to become more effective: adopting a growth mindset, focusing on high-leverage activities, and optimizing for learning. It also covers measuring improvements, validating ideas early, and improving project estimation skills, while balancing quality with pragmatism and minimizing operational burden. Finally, it emphasizes investing in team growth and fostering a supportive engineering culture.