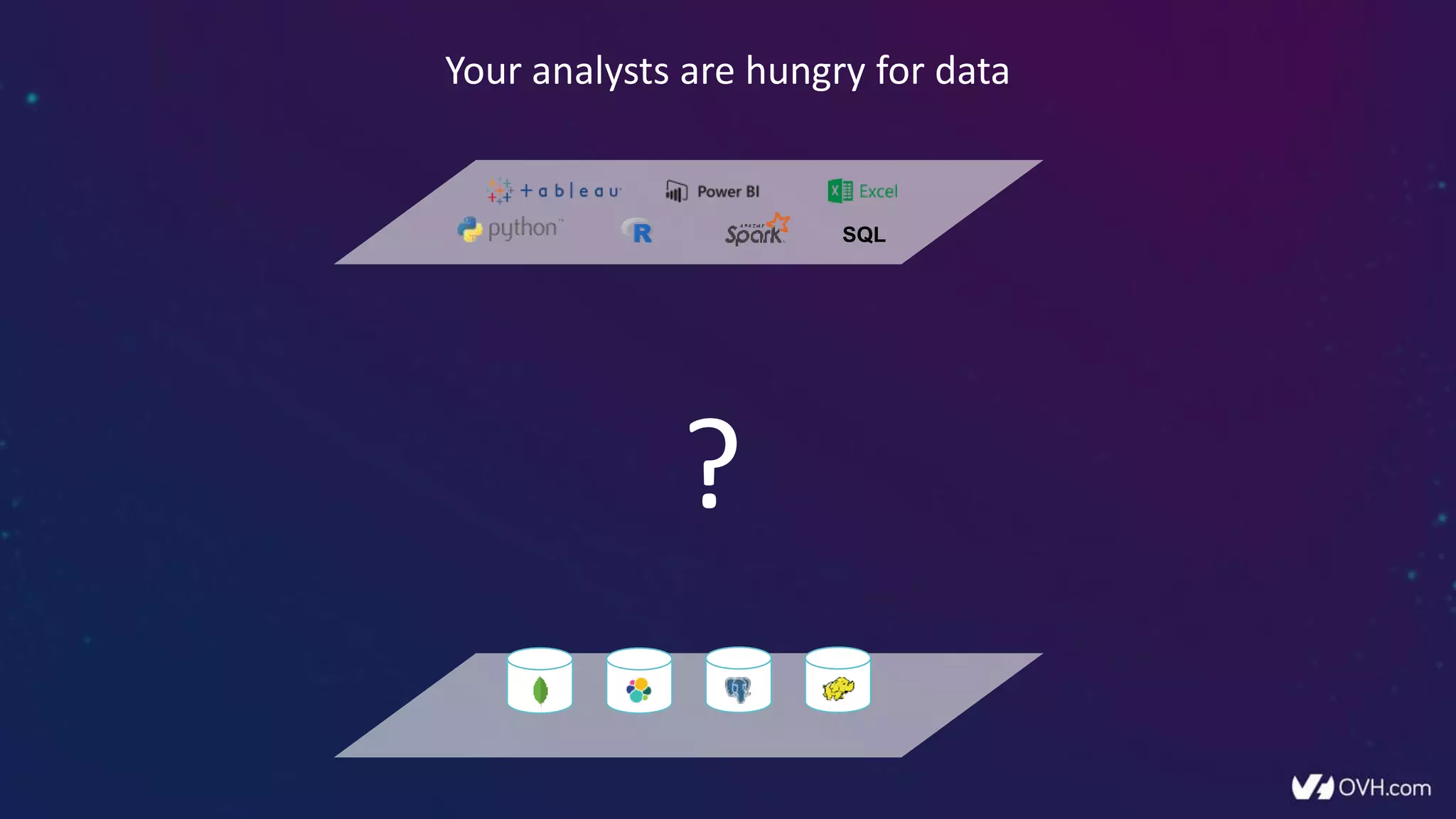

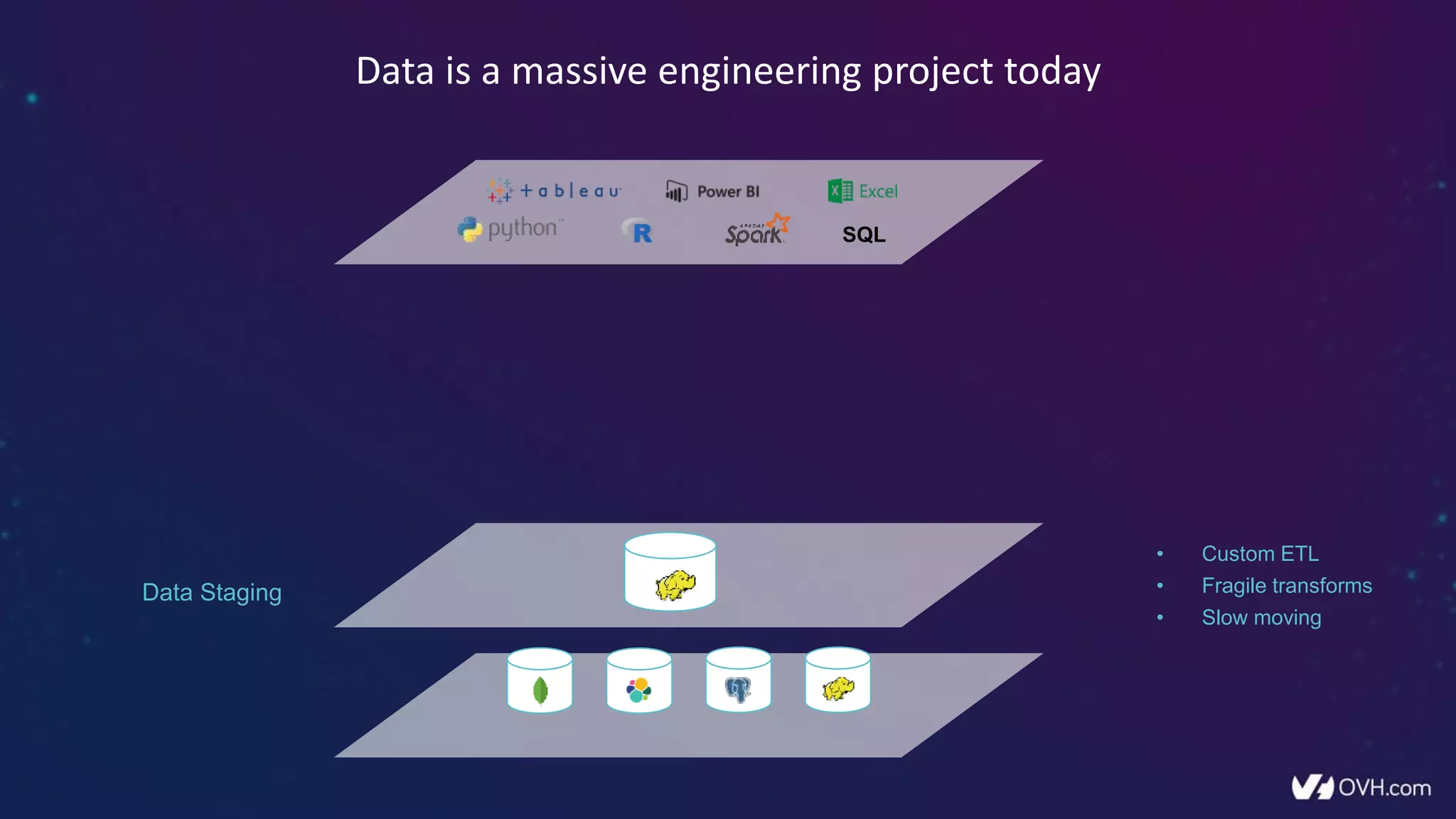

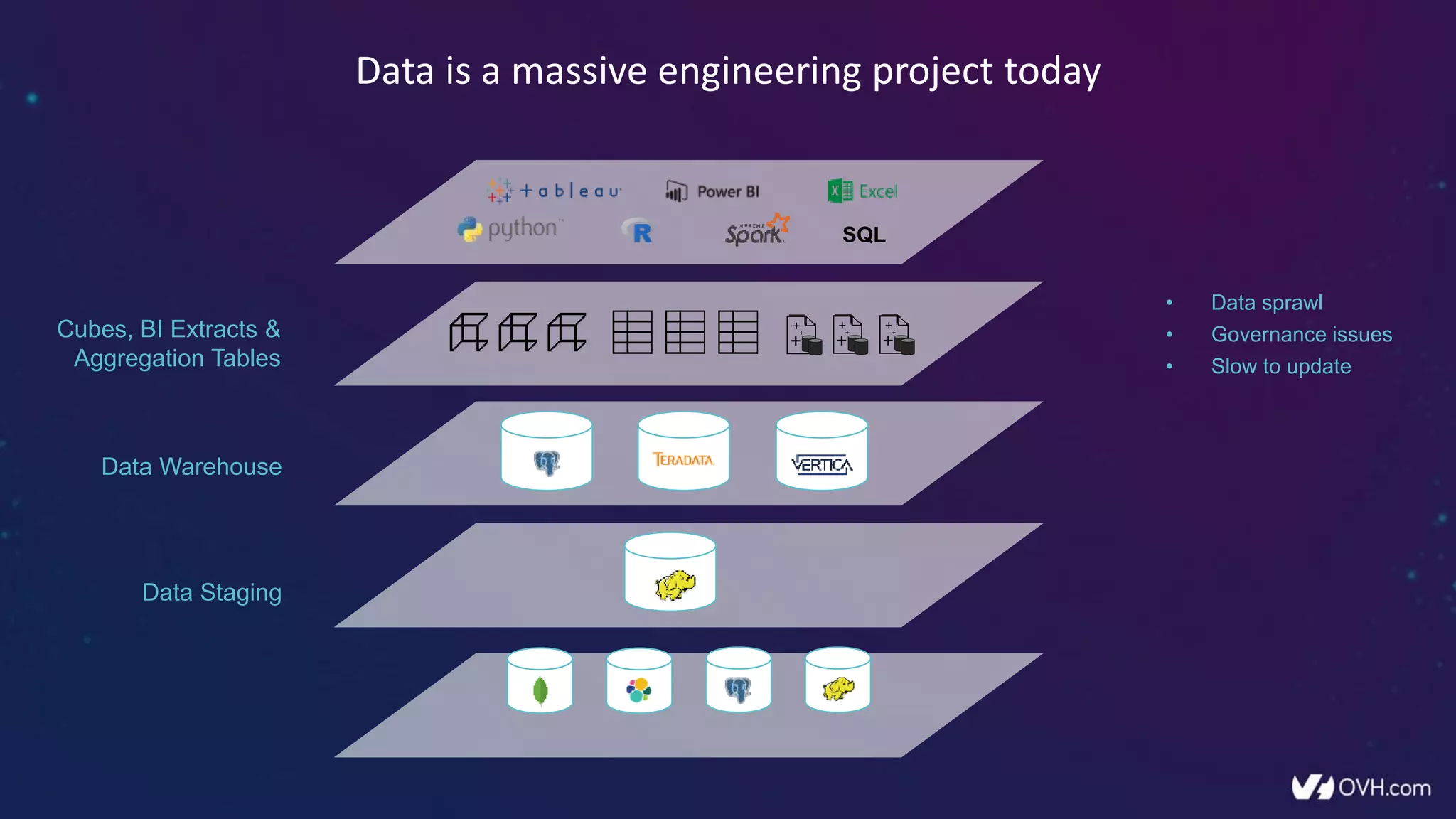

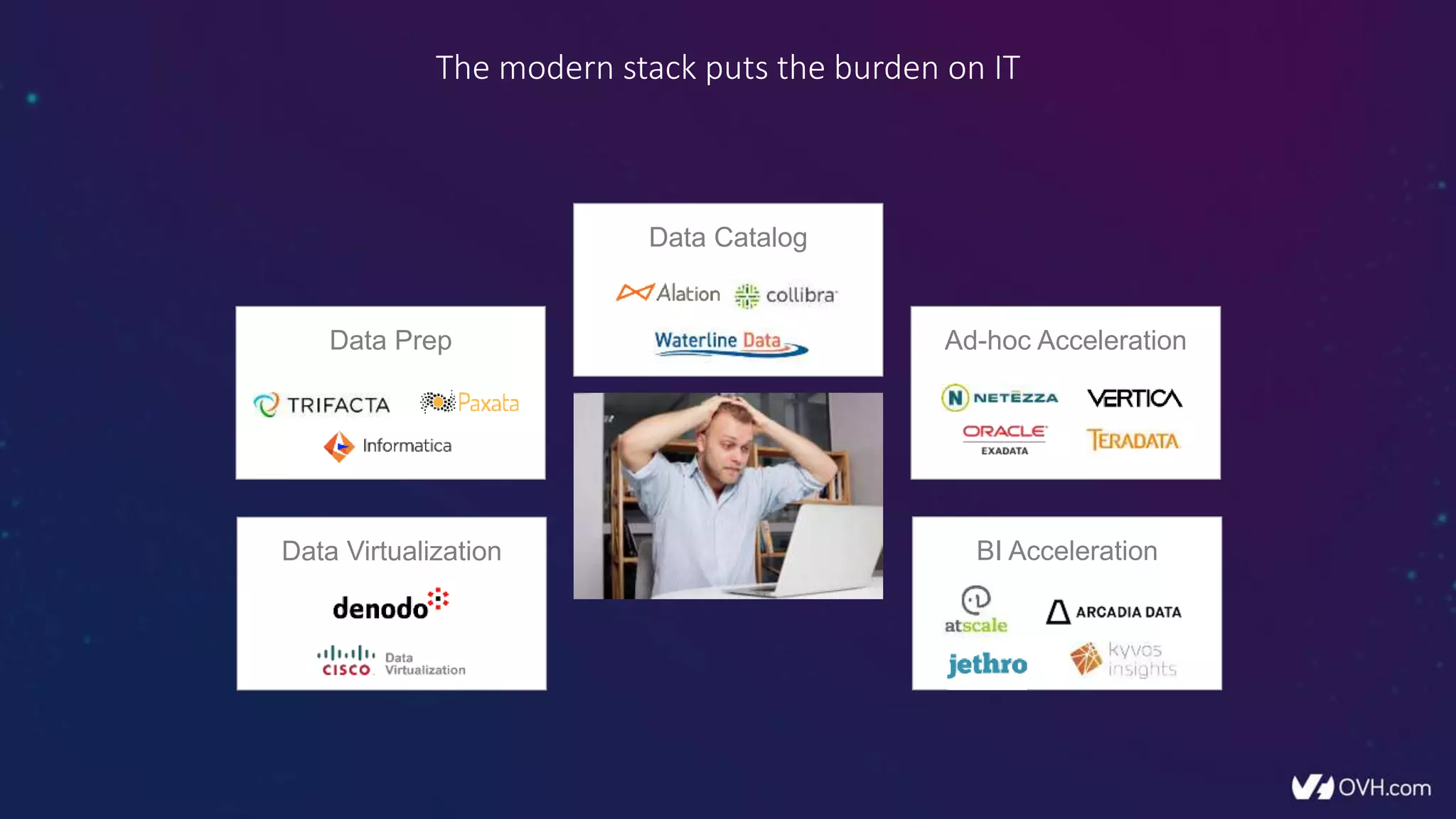

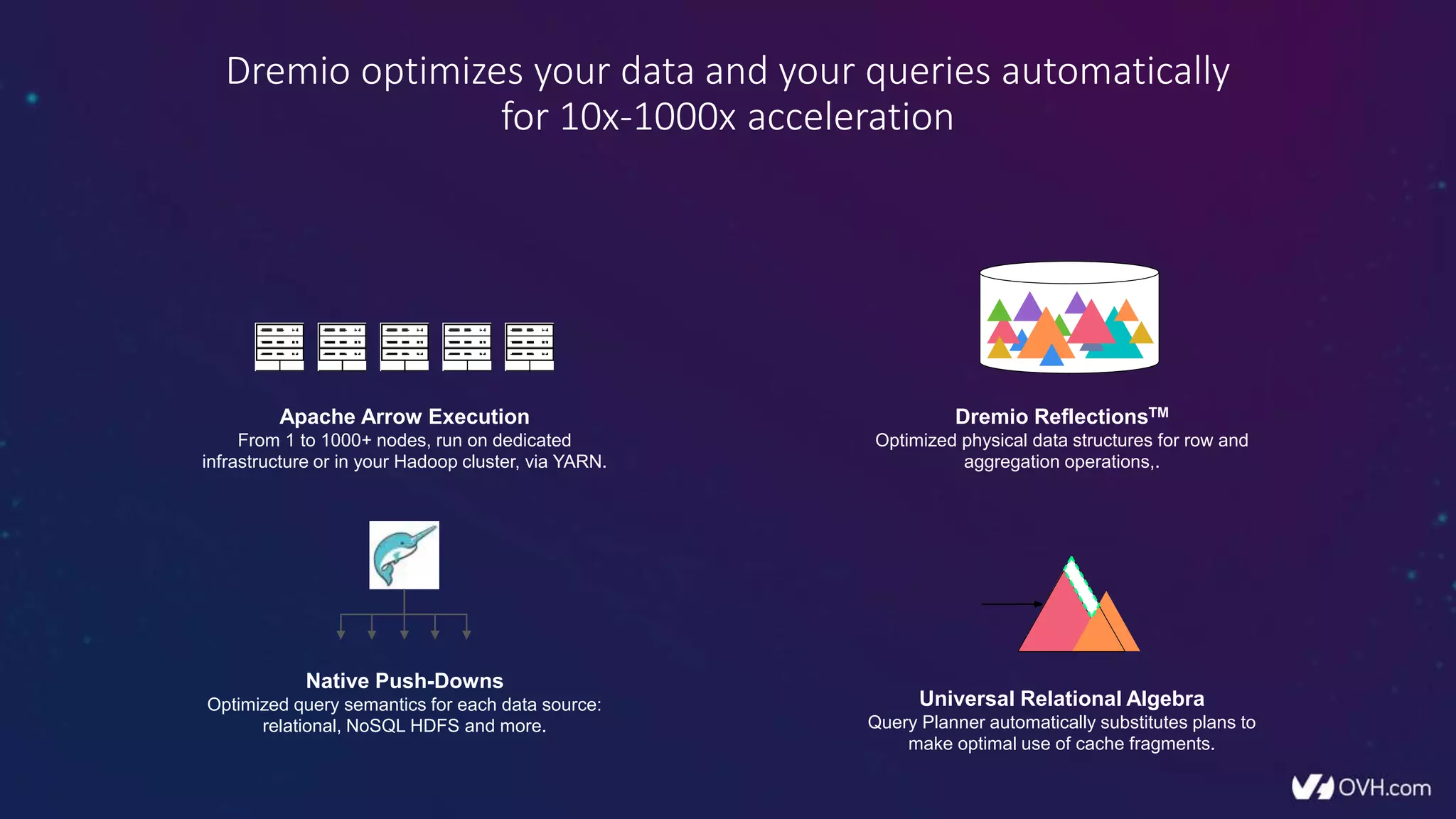

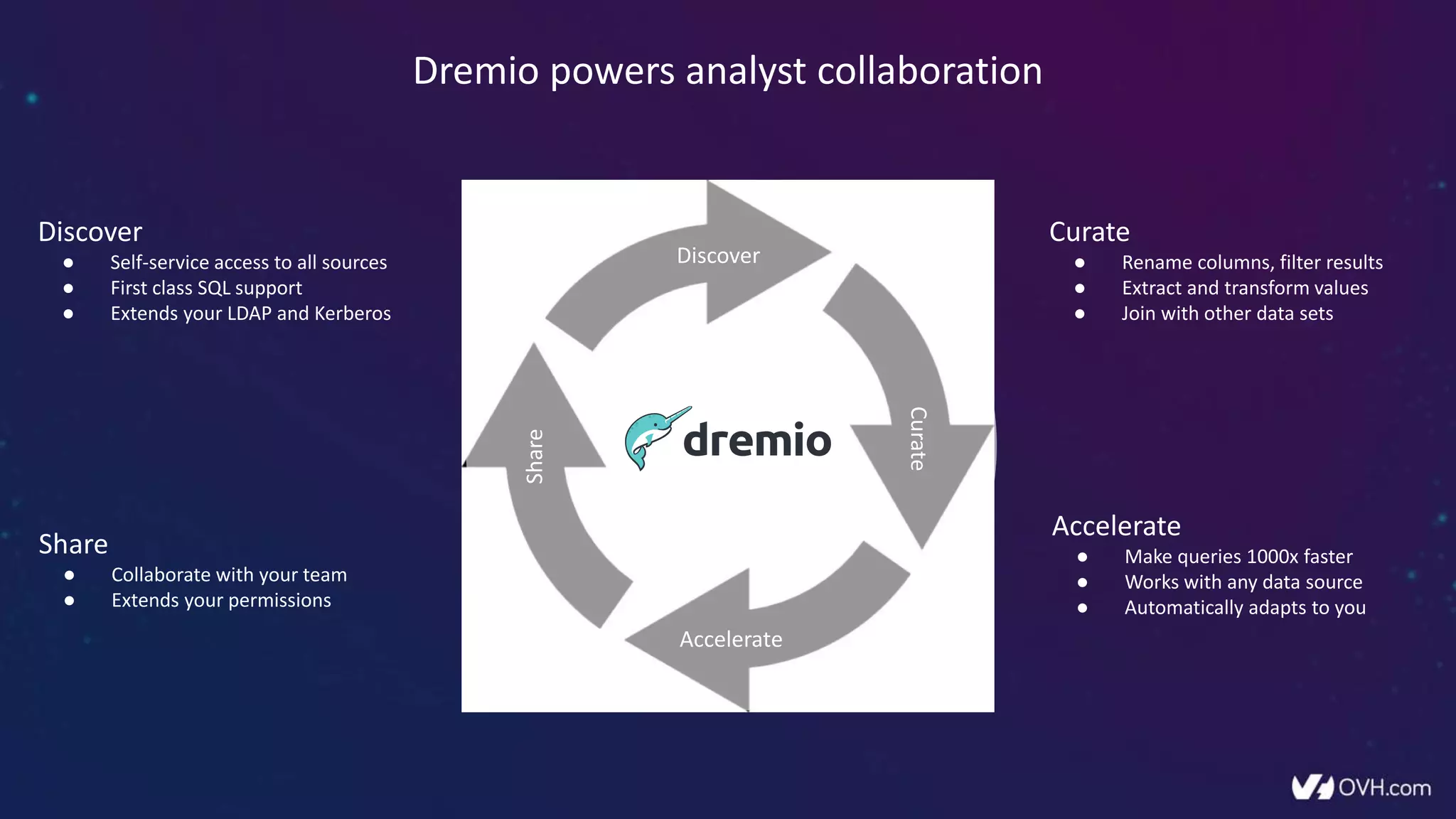

The document outlines how Dremio removes the need for traditional data warehouses, ETL processes, and cubes, enabling self-service, collaborative analytics. It introduces a data fabric approach that accelerates queries and simplifies data curation across multiple data sources. It also highlights the technology behind Dremio, including Apache Arrow and built-in security features, which is presented as optimizing analytics and supporting team collaboration.