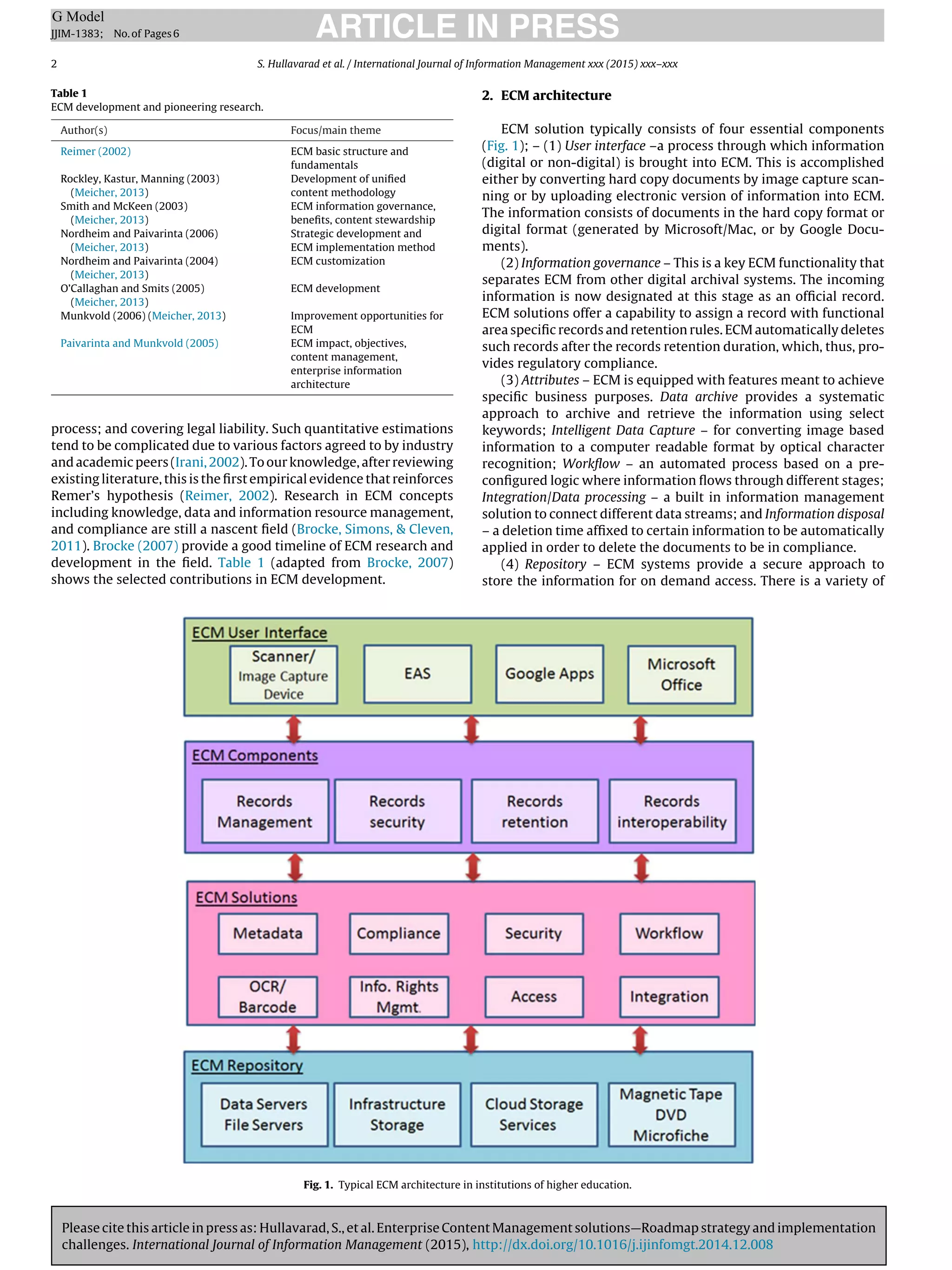

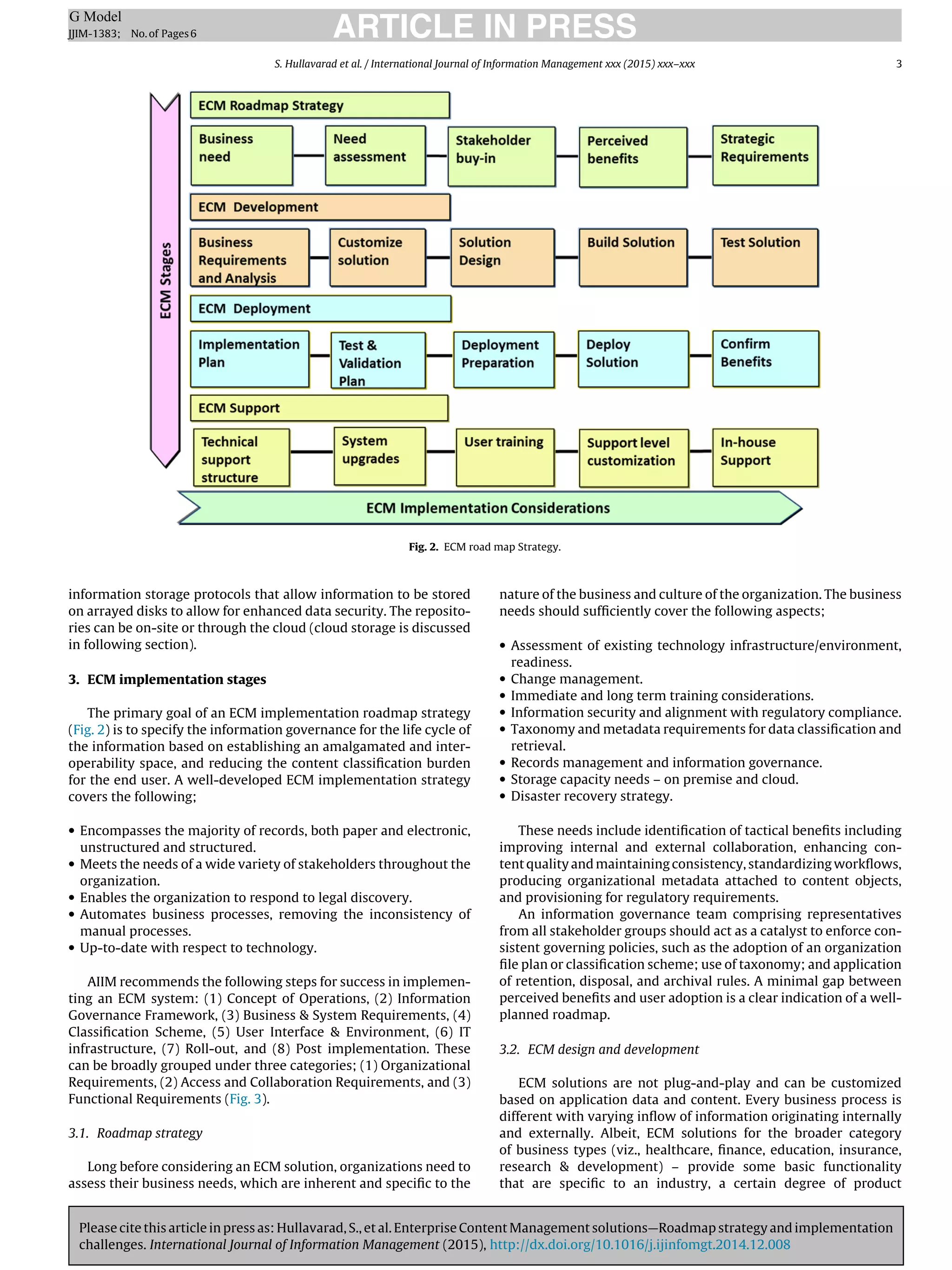

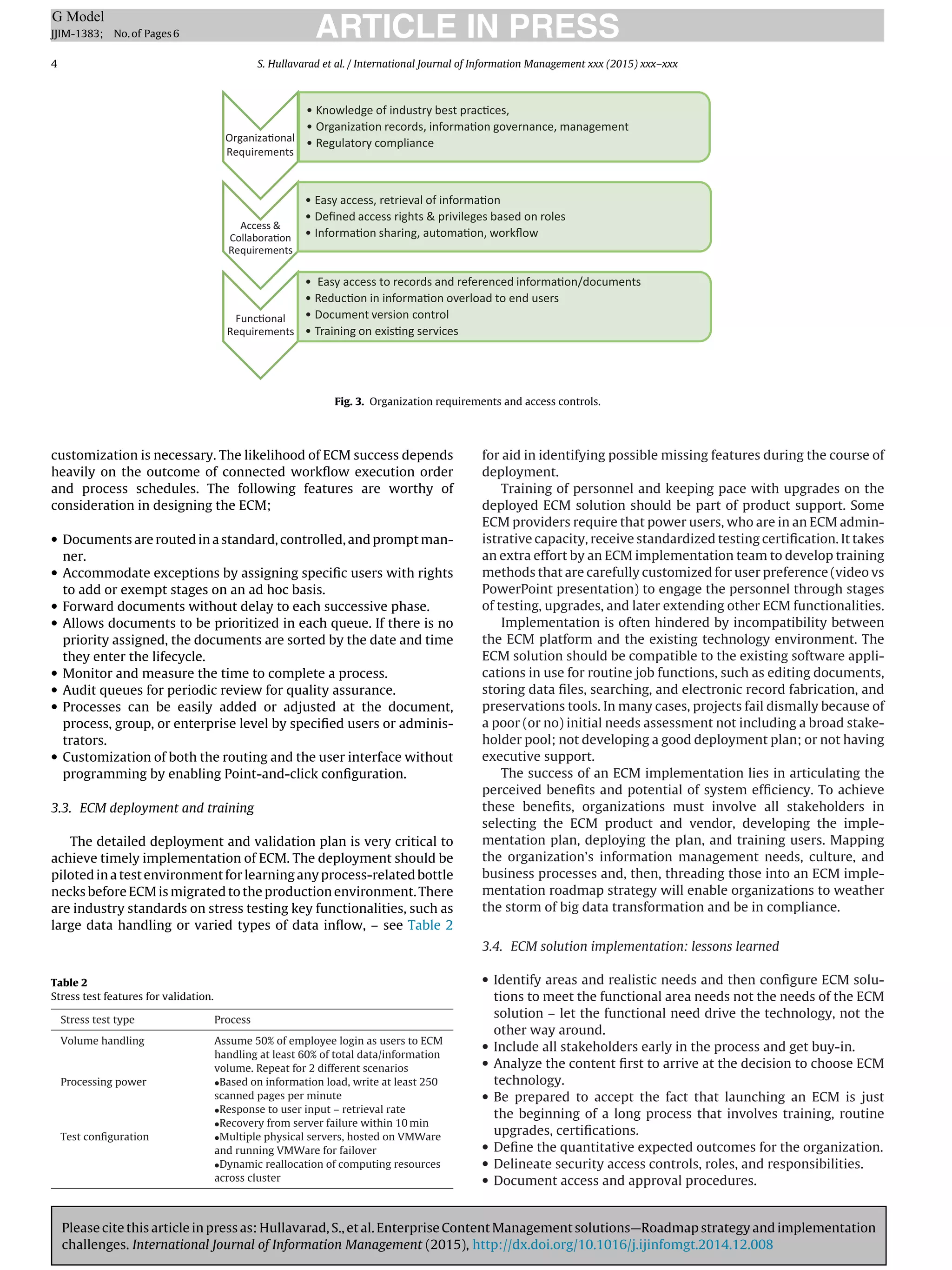

This document discusses enterprise content management (ECM) solutions, covering their typical architecture and the key challenges of implementing them. It describes the four main components of an ECM architecture: (1) the user interface, (2) information governance, (3) attributes such as data archiving and workflow, and (4) the repository for secure storage. It also outlines the stages of an ECM implementation roadmap, highlighting the need to specify information governance across the lifecycle and to establish interoperability between systems.