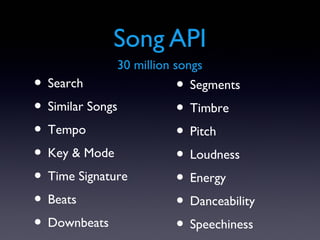

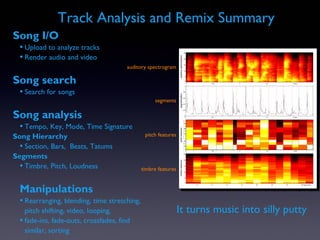

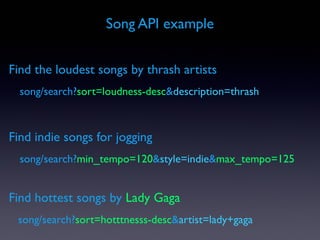

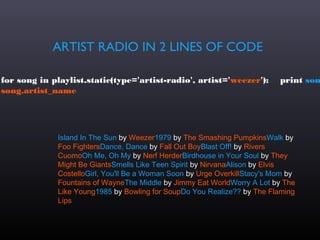

The Echo Nest provides an API for music analysis and discovery, built on research at top universities. It covers over 30 million songs and 2 million artists and supports tasks such as retrieving song attributes and generating playlists from various criteria. The platform also integrates with major music services and has been used to build music projects and applications.

![Similar artists in 2 lines of code: for a in artist.similar(names=['lady gaga']): print a.name. Output: Madonna, Christina Aguilera, Britney Spears, Kylie Minogue, Katy Perry, Scissor Sisters, Rihanna, Beyoncé, Ashley Tisdale, Livvi Franc, La Roux, Paris Hilton, She Wants Revenge, The Pussycat Dolls, Marina and The Diamonds](https://image.slidesharecdn.com/echo-nest-api-boston-2012-130109141726-phpapp02/85/Echo-nest-api-boston-2012-5-320.jpg)

![Top recent news stories for Adele: adele = artist.Artist('Adele'); for news in adele.news: print news['date_posted'], news['name']. Output: 2012-02-06T17:37:00 Grammys: Who Should Win the Major Categories; 2012-02-06T00:00:00 Noel Gallagher: Adele's Music Career Won't Last; 2012-02-06T00:00:00 Noel Gallagher Admits He Feels Sorry For Adele; 2012-02-06T00:00:00 Dave Grohl's Grammy pride; 2012-02-06T00:00:00 British Artists Dominate 2011 Market: Adele, Jessie J; 2012-02-06T00:00:00 Adele called 'too fat'](https://image.slidesharecdn.com/echo-nest-api-boston-2012-130109141726-phpapp02/85/Echo-nest-api-boston-2012-6-320.jpg)

![Audio properties in a few lines of code: results = song.search(artist='Michael Jackson', title='billie jean'); if len(results) > 0: print 'tempo', results[0].audio_summary['tempo']; print 'dance', results[0].audio_summary['danceability']; print 'energy', results[0].audio_summary['energy']. Output: tempo 117.128, dance 0.97, energy 0.47](https://image.slidesharecdn.com/echo-nest-api-boston-2012-130109141726-phpapp02/85/Echo-nest-api-boston-2012-10-320.jpg)
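The snippet on this slide is Python 2 and needs a live API connection, so it cannot be run as shown. The following is a minimal Python 3 sketch of the same guard-then-read pattern, assuming (as the slide suggests) that each search result exposes `audio_summary` as a dictionary. `FakeSong` and `summarize` are hypothetical names introduced only for illustration; the numbers are the ones printed on the slide.

```python
from dataclasses import dataclass, field


@dataclass
class FakeSong:
    """Hypothetical stand-in for a search result; not part of any real library."""
    title: str
    audio_summary: dict = field(default_factory=dict)


def summarize(results, keys=("tempo", "danceability", "energy")):
    """Return the requested audio attributes of the first result, or None if empty."""
    if not results:  # same guard as `len(results) > 0` on the slide
        return None
    summary = results[0].audio_summary
    # .get() avoids a KeyError if an attribute is missing from the summary
    return {key: summary.get(key) for key in keys}


# Values copied from the slide's printed output for "Billie Jean".
results = [FakeSong("Billie Jean", {"tempo": 117.128, "danceability": 0.97, "energy": 0.47})]
for name, value in summarize(results).items():
    print(name, value)
```

Returning `None` for an empty result list keeps the "no match" case explicit, so the caller does not have to index into a possibly empty list.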

![Slicing and dicing: create a remix from beat one of every bar. bars = audiofile.analysis.bars; collect = []; for bar in bars: collect.append(bar.children()[0]); out = audio.getpieces(audiofile, collect); out.encode(output_filename)](https://image.slidesharecdn.com/echo-nest-api-boston-2012-130109141726-phpapp02/85/Echo-nest-api-boston-2012-35-320.jpg)
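The selection step on this slide (take the first child of every bar) can be tried without any audio library. The sketch below assumes a bar is a time span whose children are its beats, which is what the slide's `bar.children()[0]` implies. `Span`, `make_bar`, and `first_beats` are hypothetical helpers, and the timings are made-up toy values, not analysis output.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Span:
    """Hypothetical time span in seconds; stands in for a bar or beat object."""
    start: float
    duration: float
    parts: List["Span"] = field(default_factory=list)

    def children(self):
        # Mirrors the children() call on the slide: the beats inside a bar.
        return self.parts


def first_beats(bars):
    """Collect beat one of every bar, skipping bars that contain no beats."""
    return [bar.children()[0] for bar in bars if bar.children()]


def make_bar(start, beats_per_bar=4, beat_len=0.5):
    """Build a toy bar of equal-length beats starting at `start` seconds."""
    beats = [Span(start + i * beat_len, beat_len) for i in range(beats_per_bar)]
    return Span(start, beats_per_bar * beat_len, beats)


bars = [make_bar(0.0), make_bar(2.0), make_bar(4.0)]
collect = first_beats(bars)
print([(beat.start, beat.duration) for beat in collect])
```

The `if bar.children()` filter is an addition to the slide's loop: it avoids an `IndexError` on a bar that has no detected beats.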

![Beat reversing: beats = audiofile.analysis.beats; collect = []; beats.reverse(); for beat in beats: collect.append(beat); out = audio.getpieces(audiofile, collect); out.encode(output_filename)](https://image.slidesharecdn.com/echo-nest-api-boston-2012-130109141726-phpapp02/85/Echo-nest-api-boston-2012-36-320.jpg)
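To make the effect of beat reversing concrete, here is a small sketch on toy data. It assumes audio can be treated as a flat list of samples and each beat as a `(start, end)` index pair. `get_pieces` is a hypothetical stand-in for the slide's `audio.getpieces` call, not the real function.

```python
def get_pieces(samples, spans):
    """Concatenate the sample ranges named by spans, in the order given.

    Hypothetical stand-in for the slide's audio.getpieces call.
    """
    out = []
    for start, end in spans:
        out.extend(samples[start:end])
    return out


def reverse_beats(samples, beats):
    """Play the beats in reverse order; audio inside each beat stays forward."""
    # reversed() leaves the original beat list untouched, unlike beats.reverse()
    return get_pieces(samples, list(reversed(beats)))


samples = list(range(12))            # toy "audio": 12 samples
beats = [(0, 4), (4, 8), (8, 12)]    # three beats of four samples each
print(reverse_beats(samples, beats))
```

Only the order of the beats is reversed, so each beat still plays forward. Using `reversed()` instead of the slide's in-place `beats.reverse()` is a design choice here that keeps the original beat list usable afterwards.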