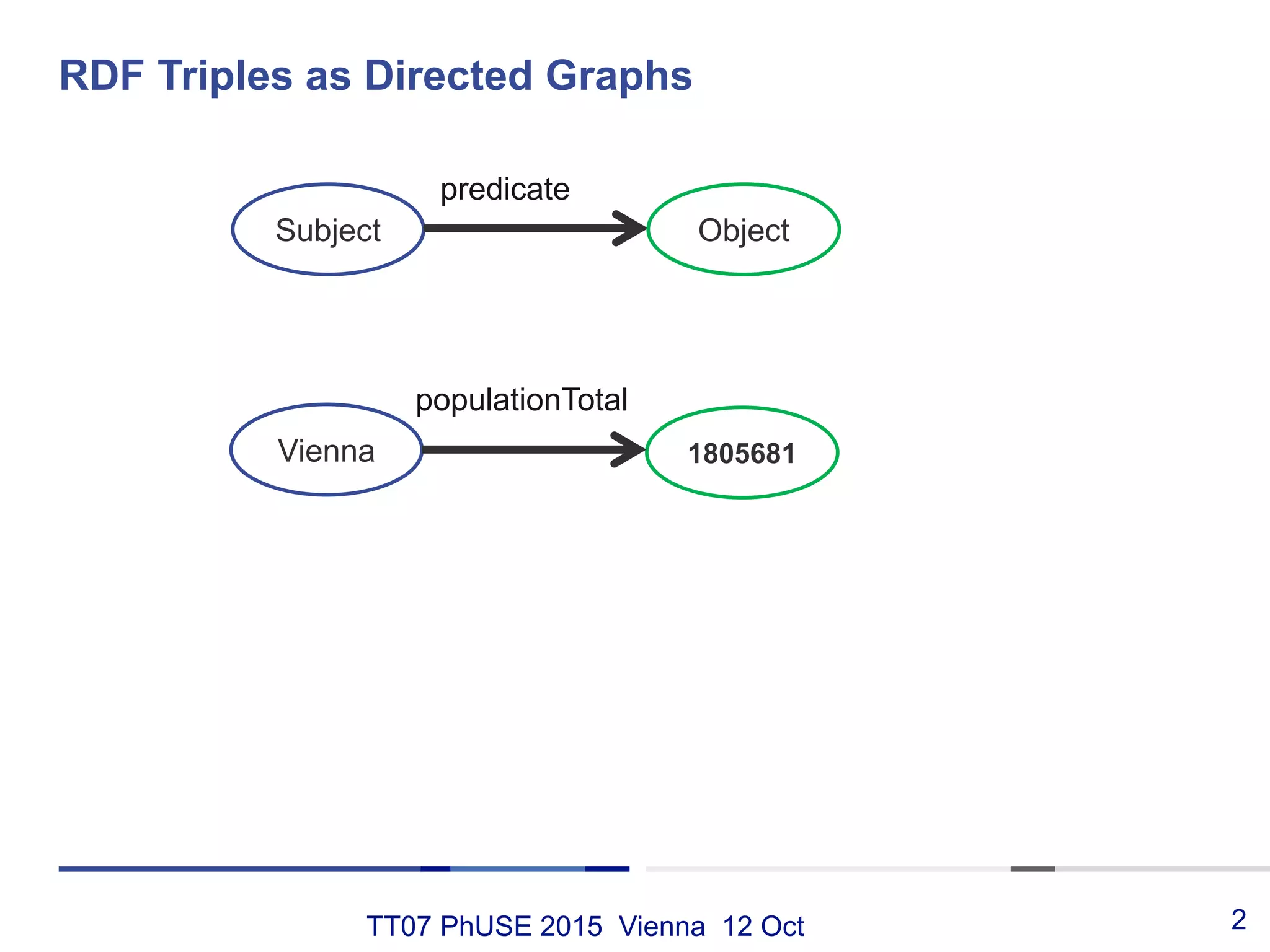

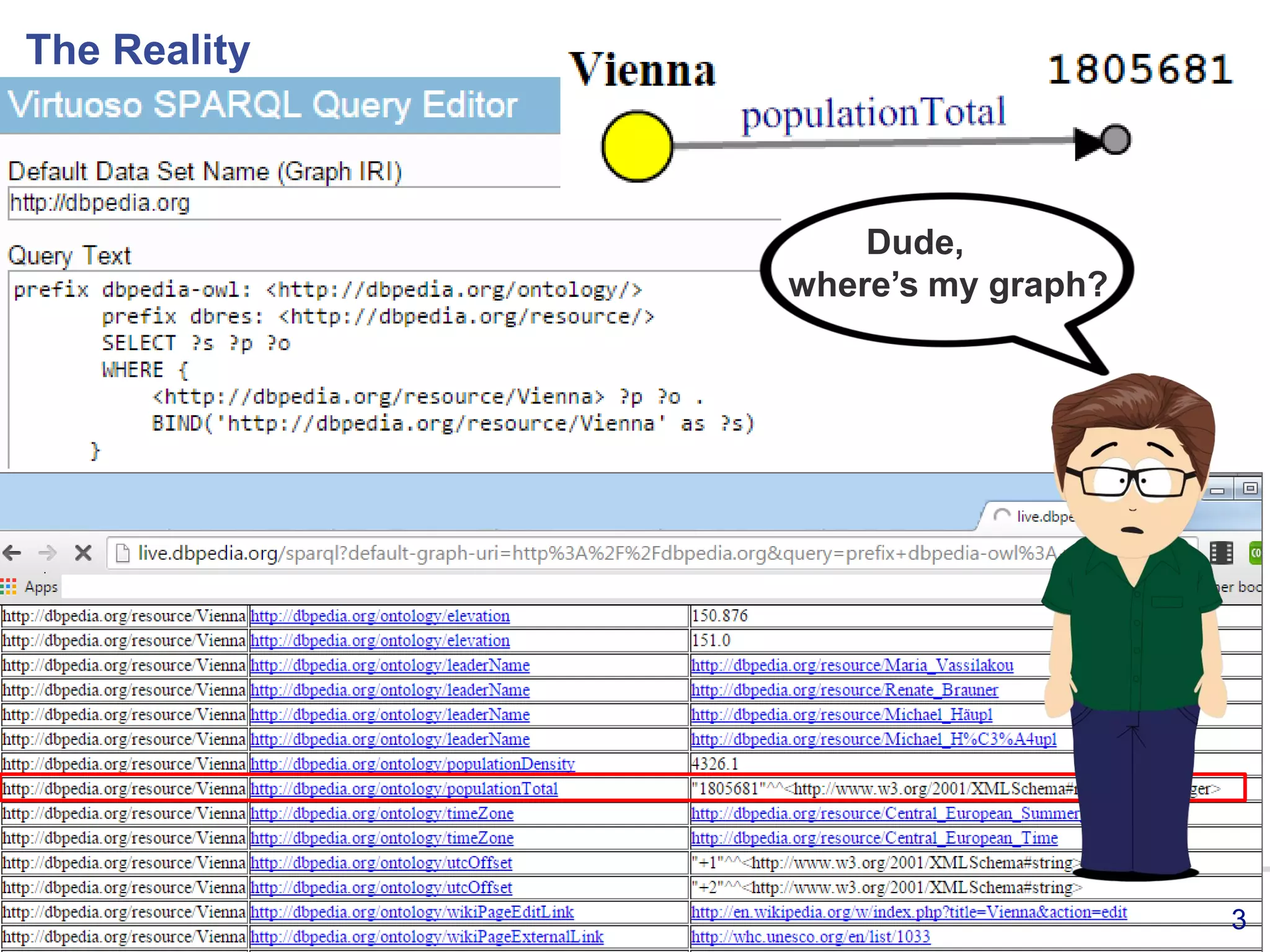

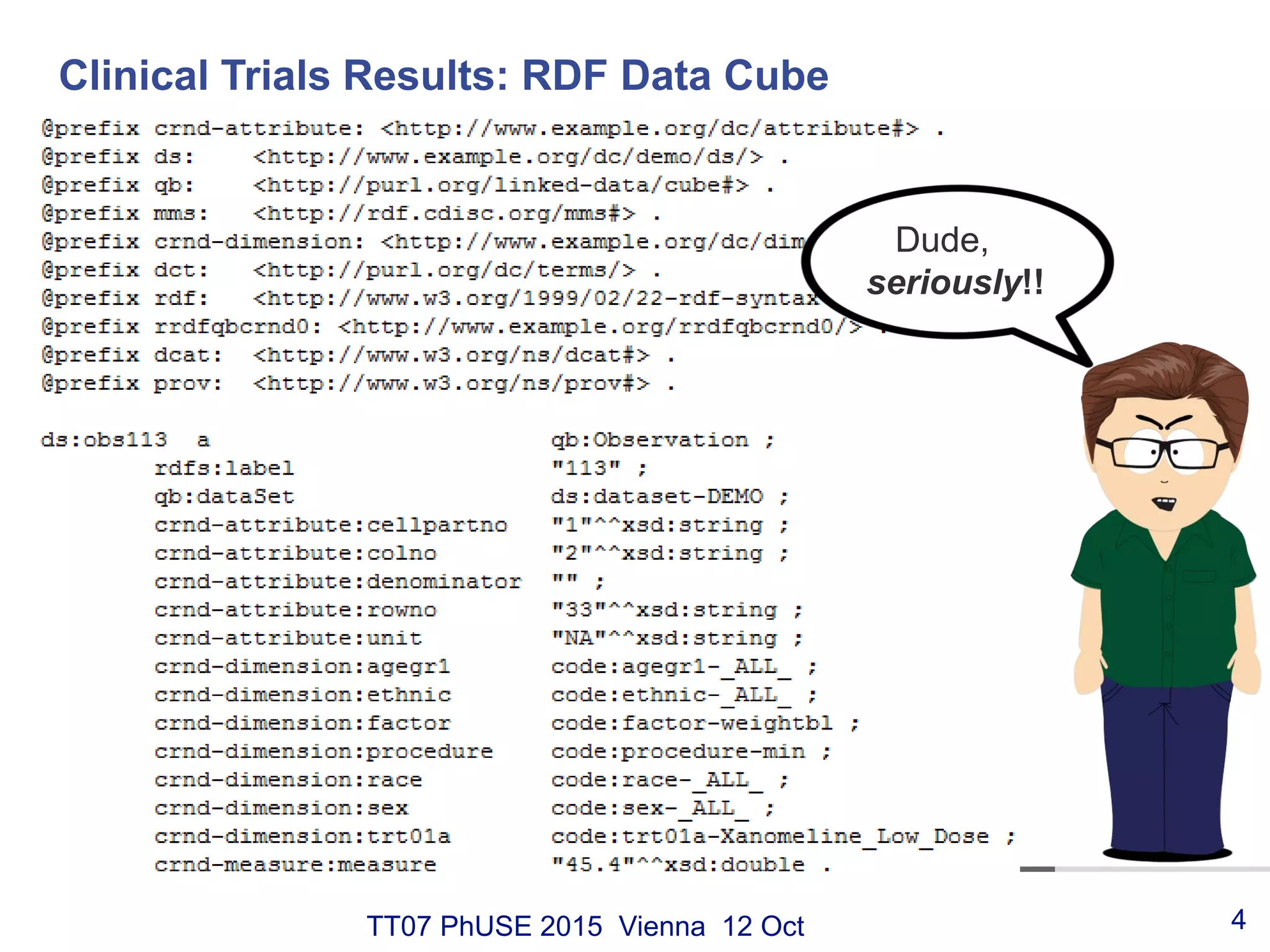

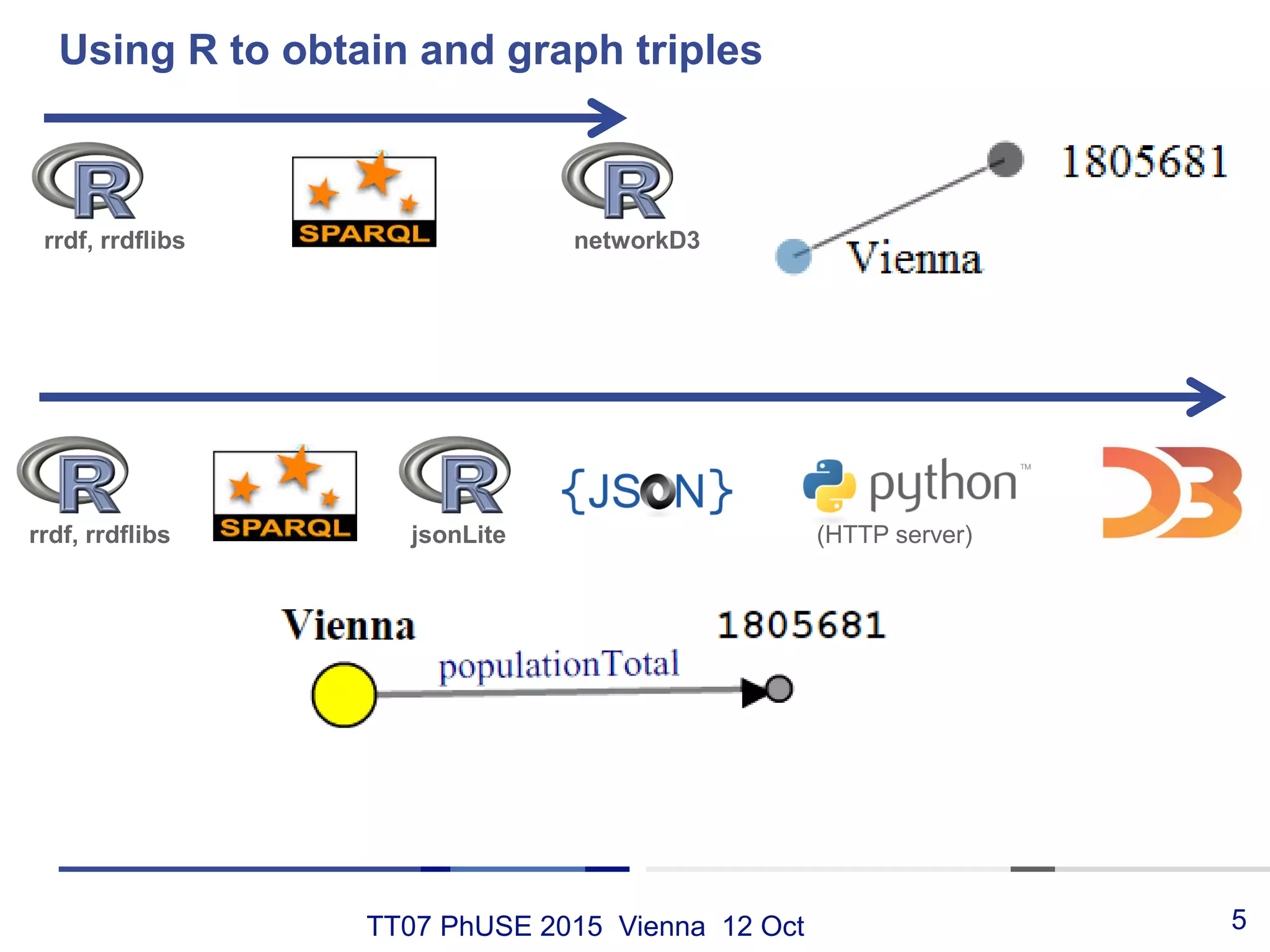

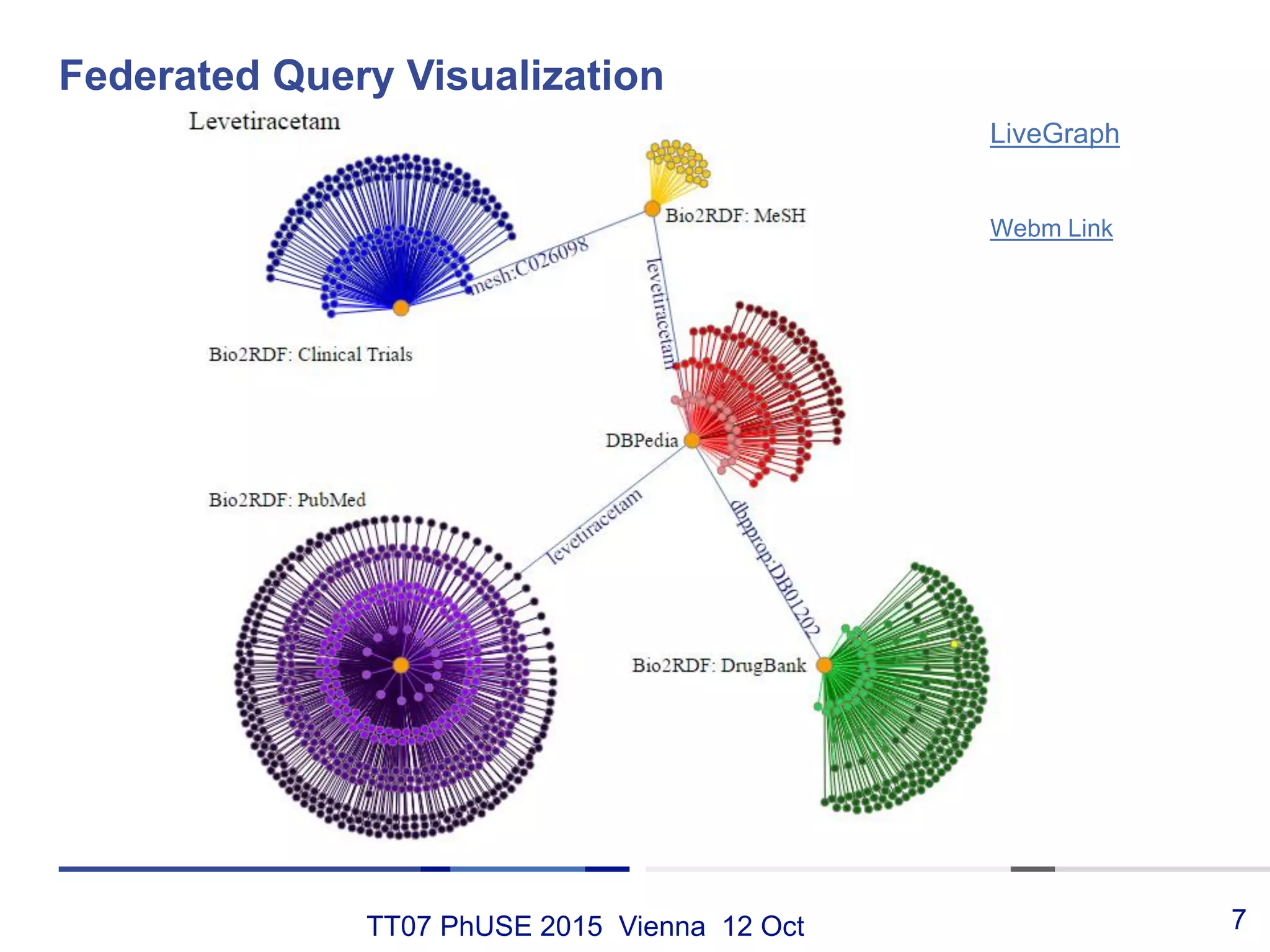

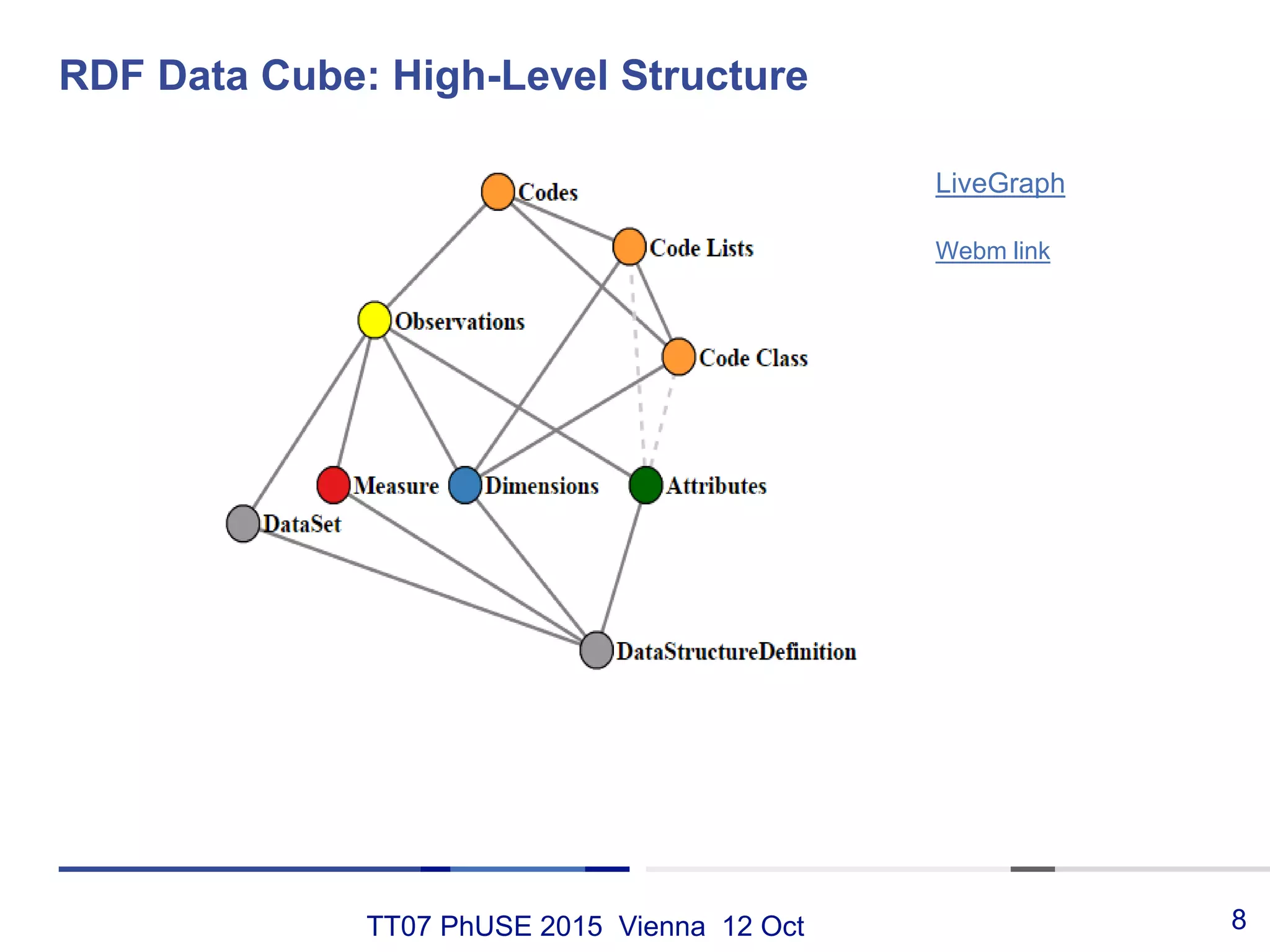

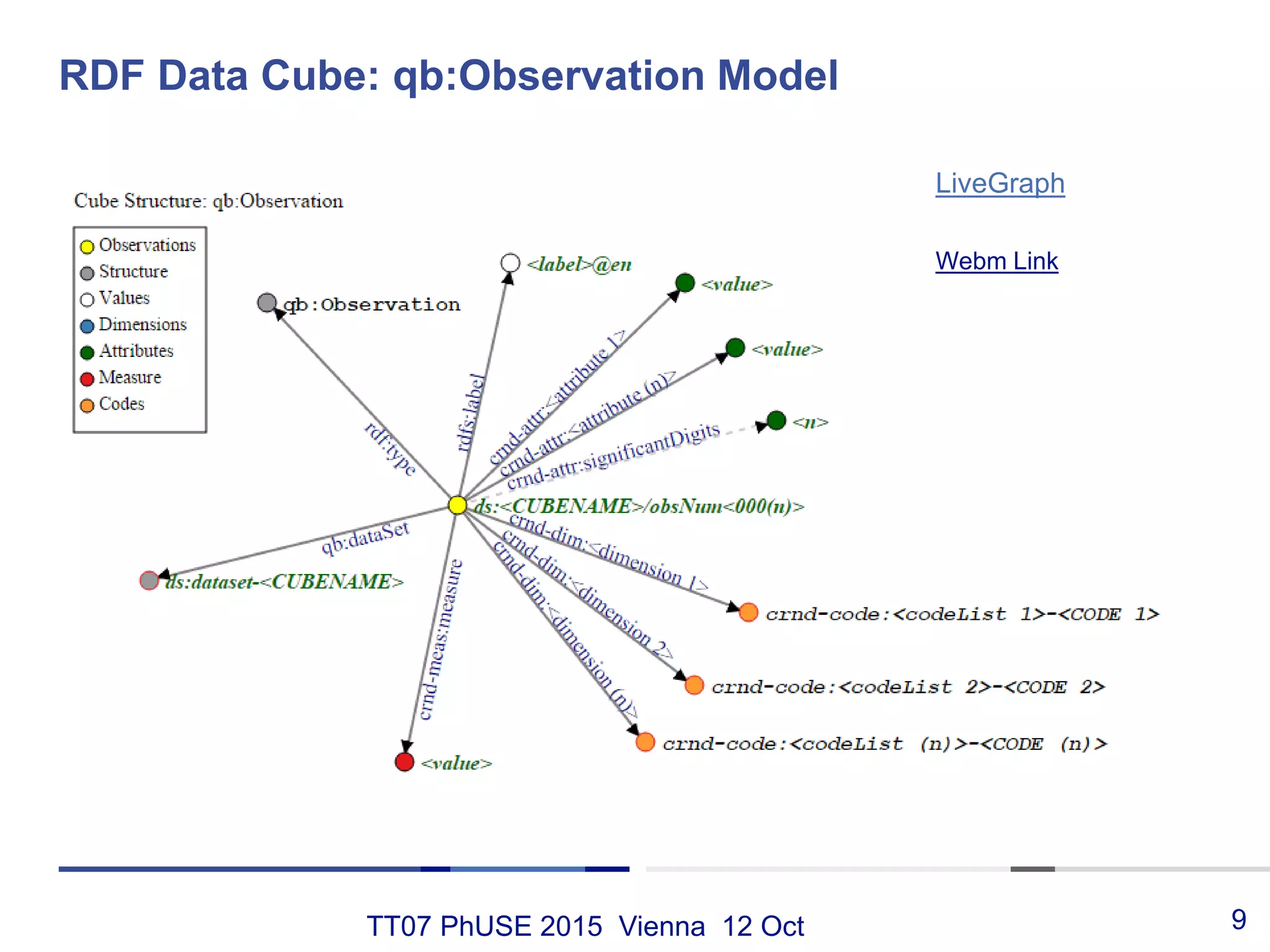

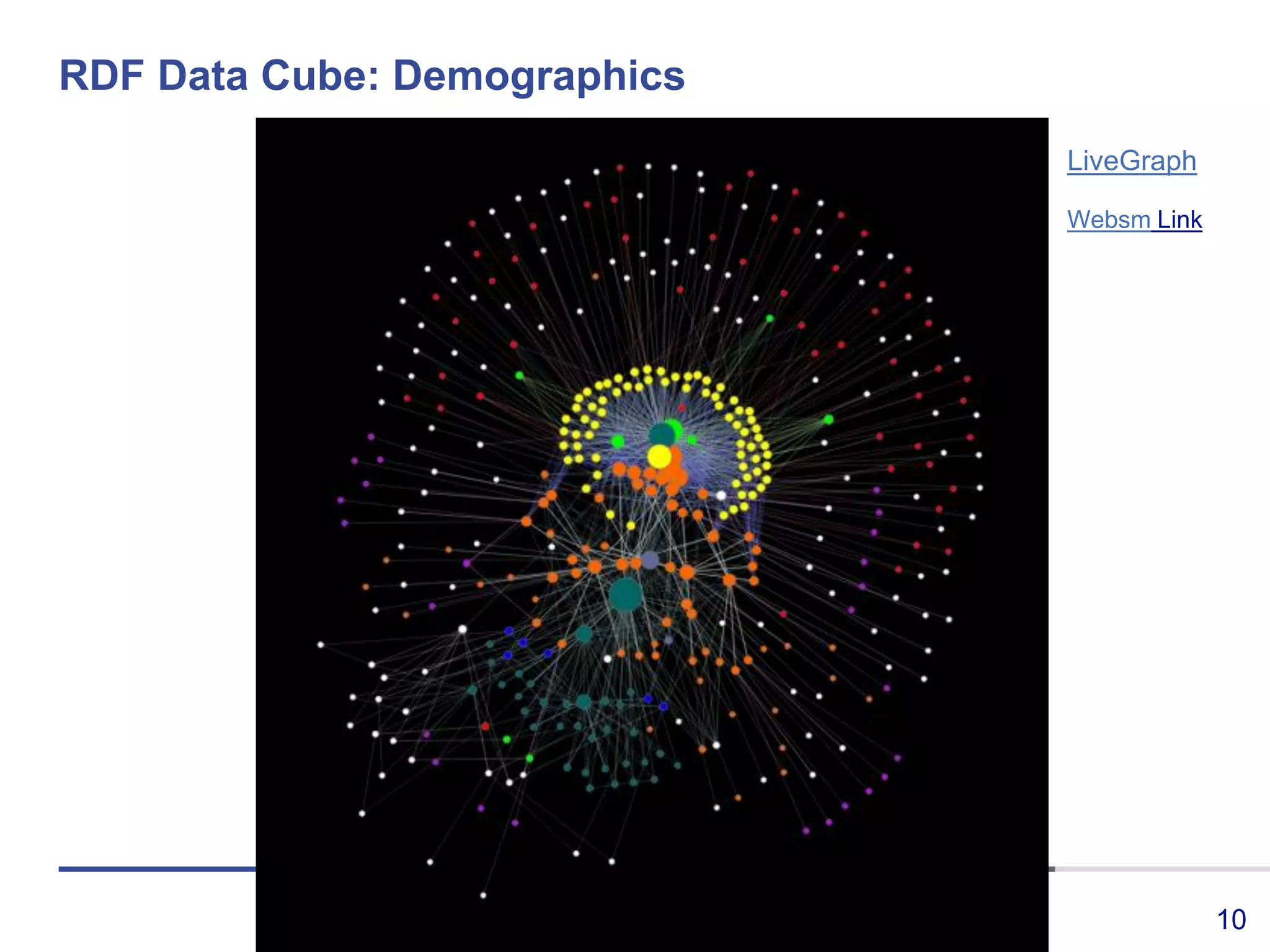

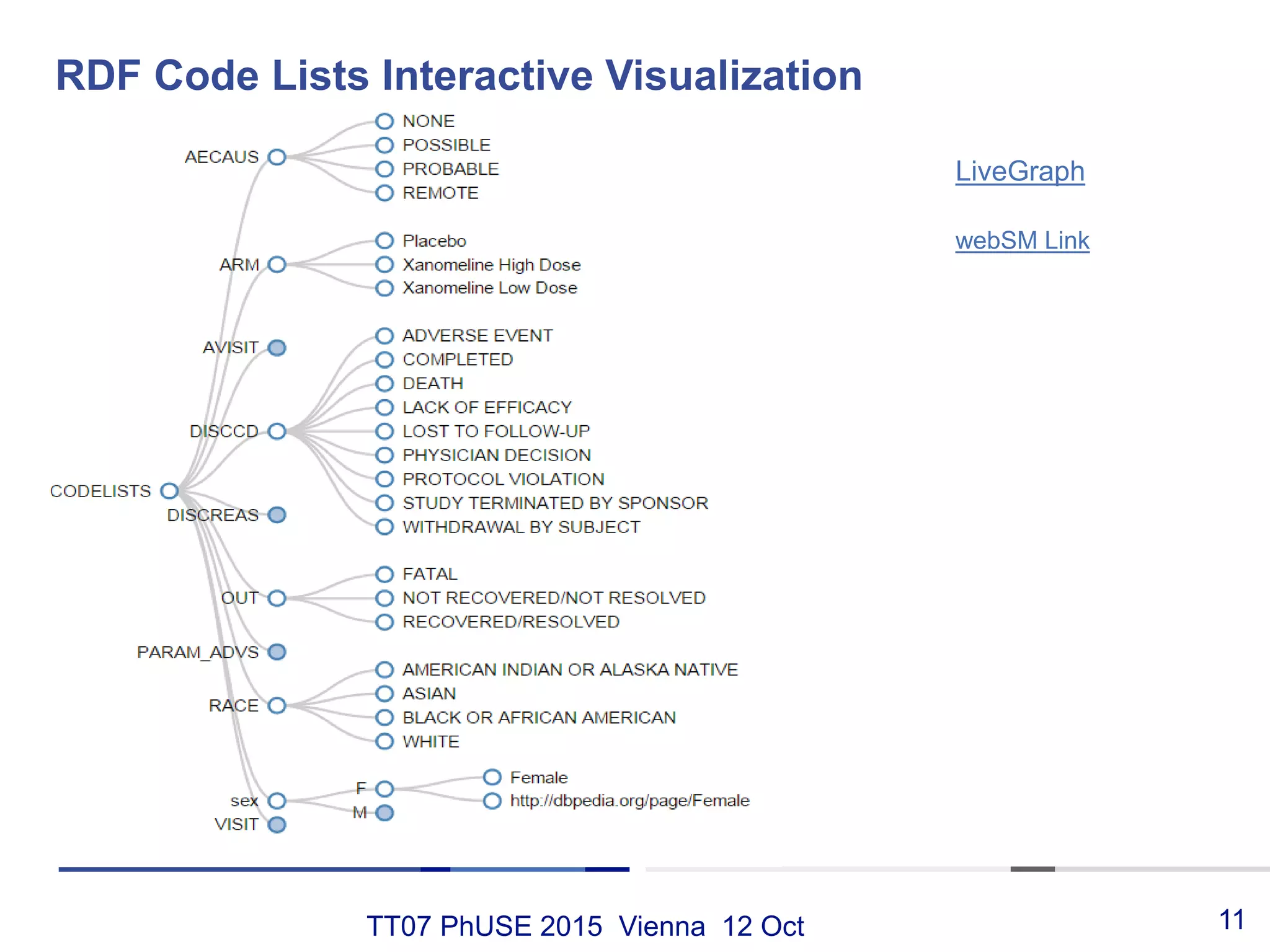

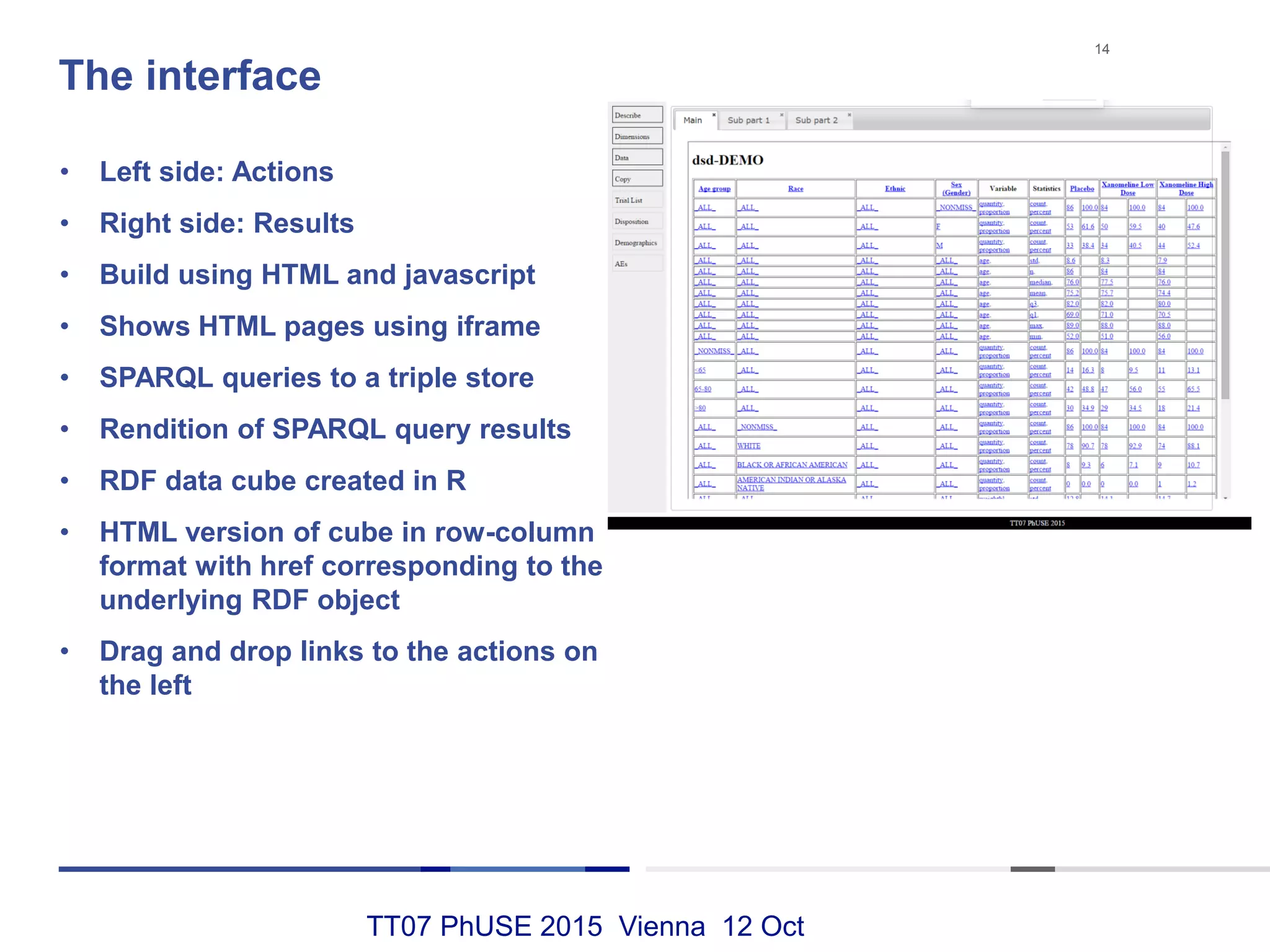

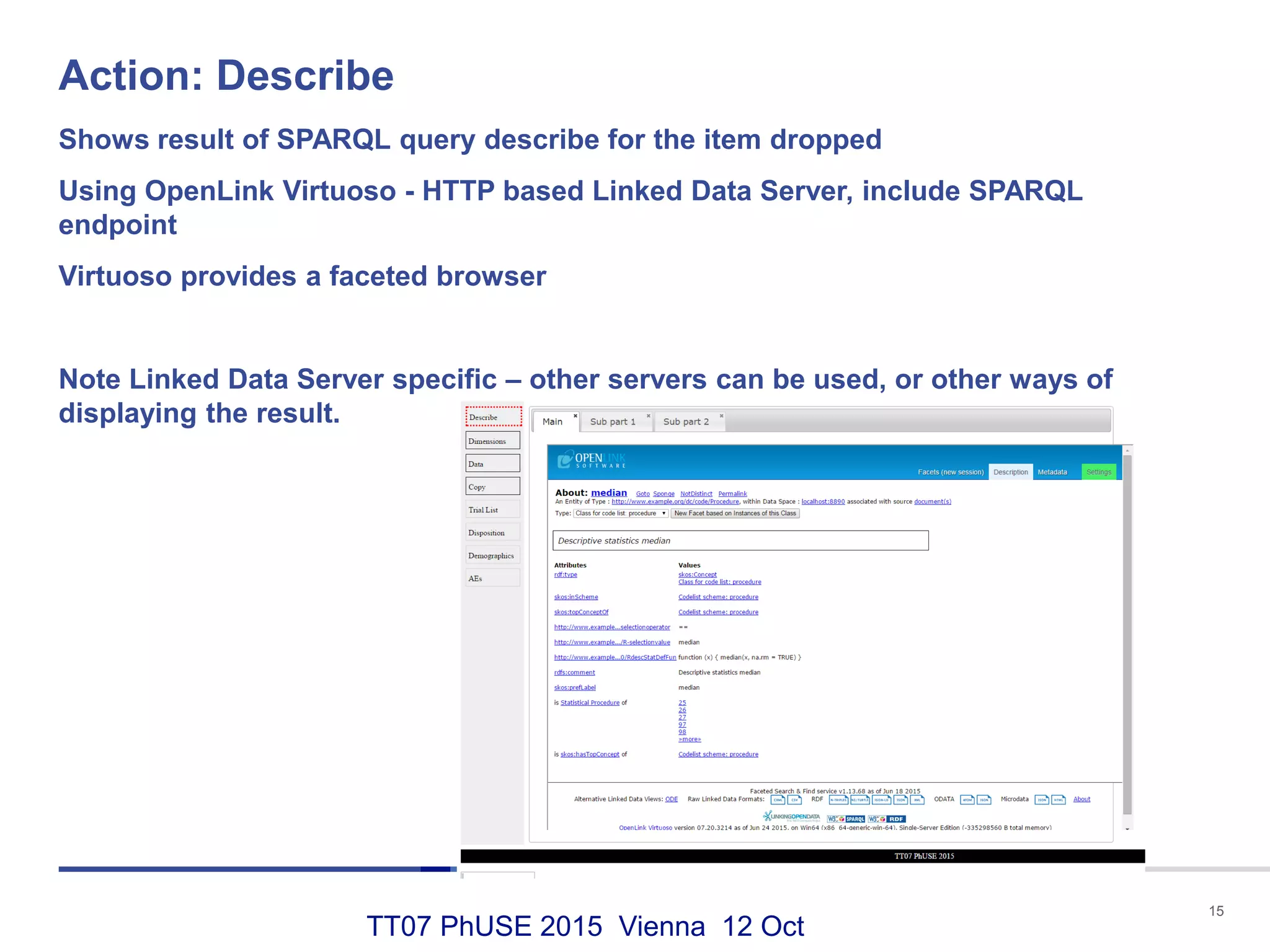

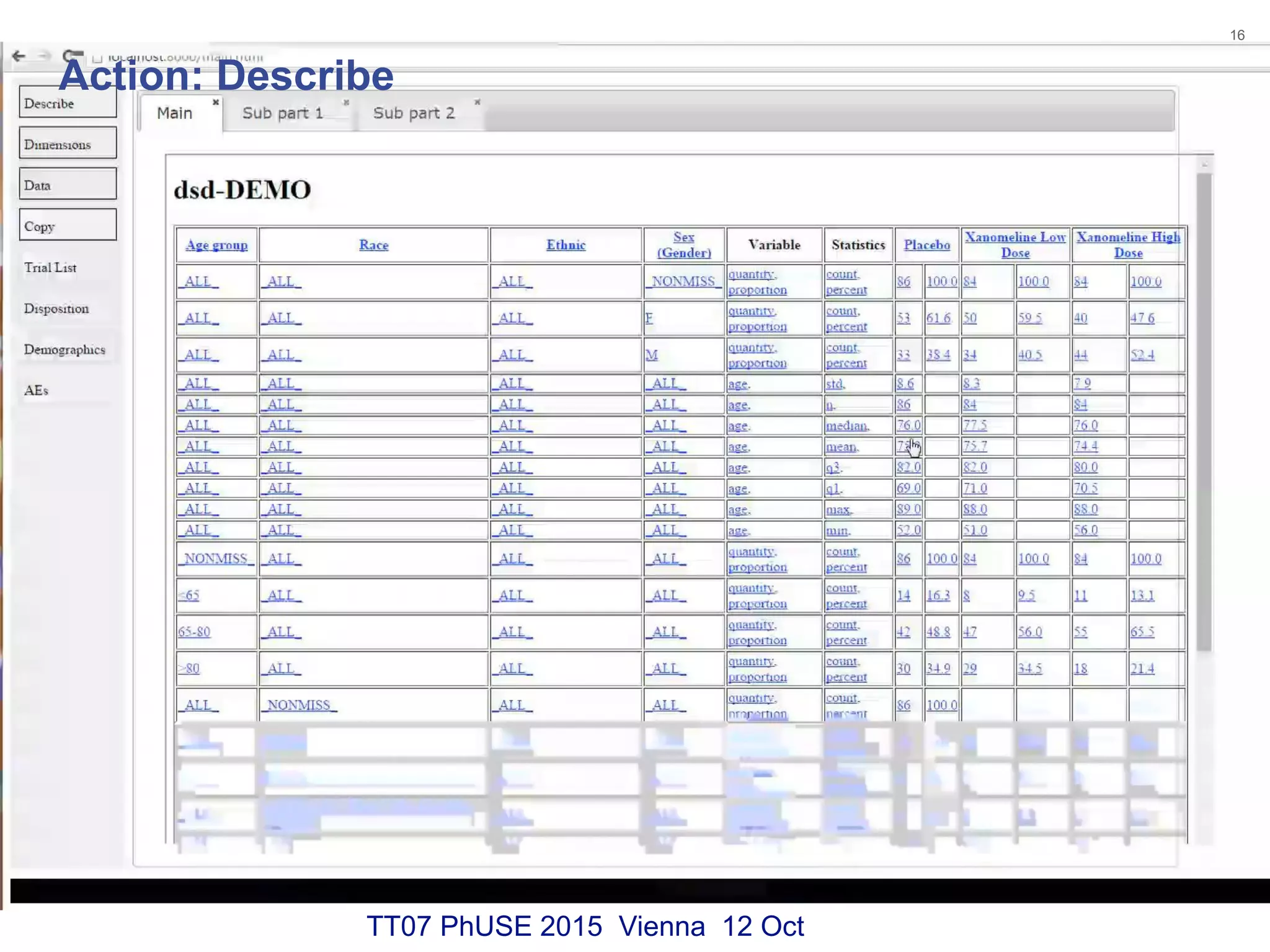

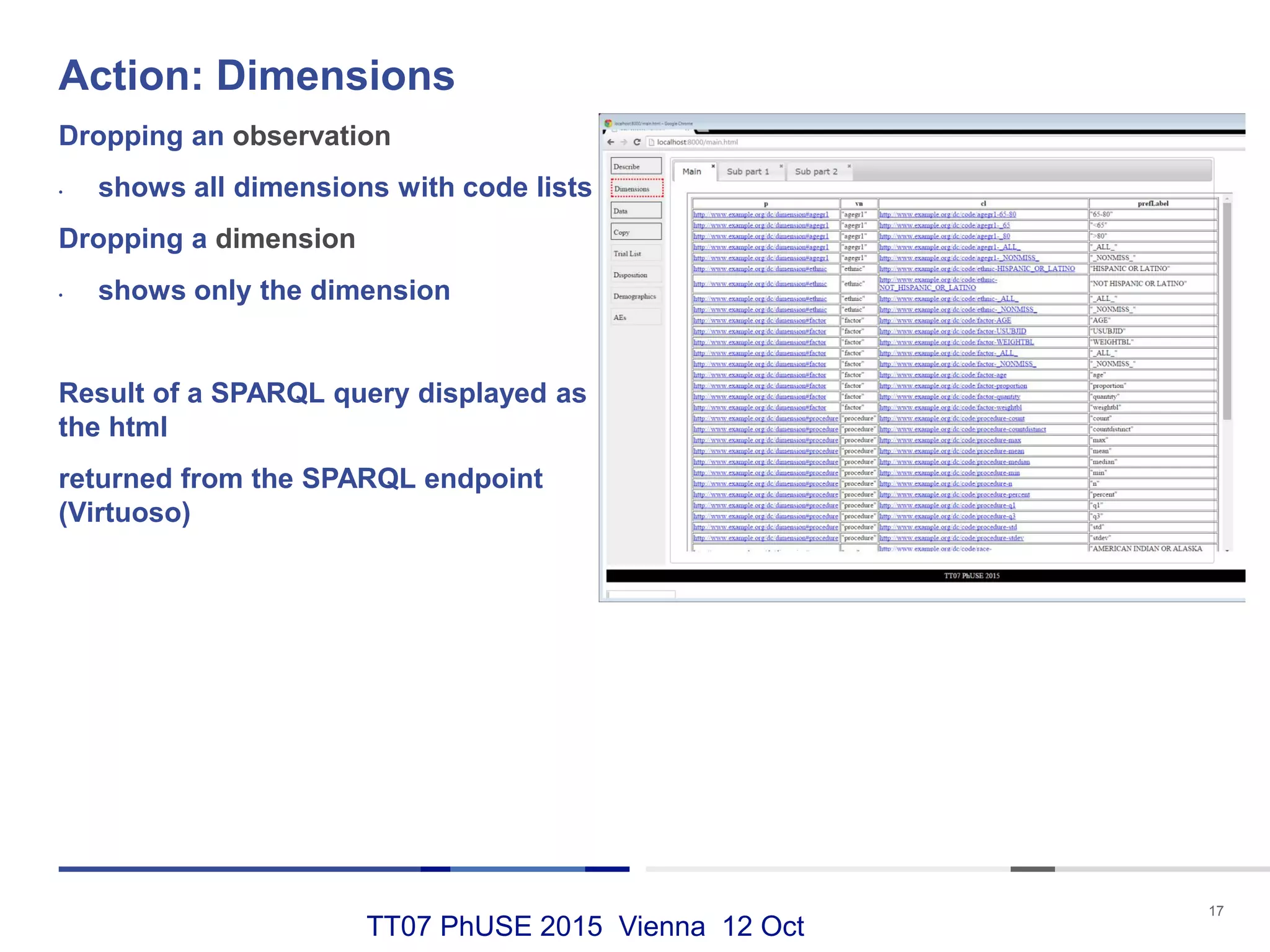

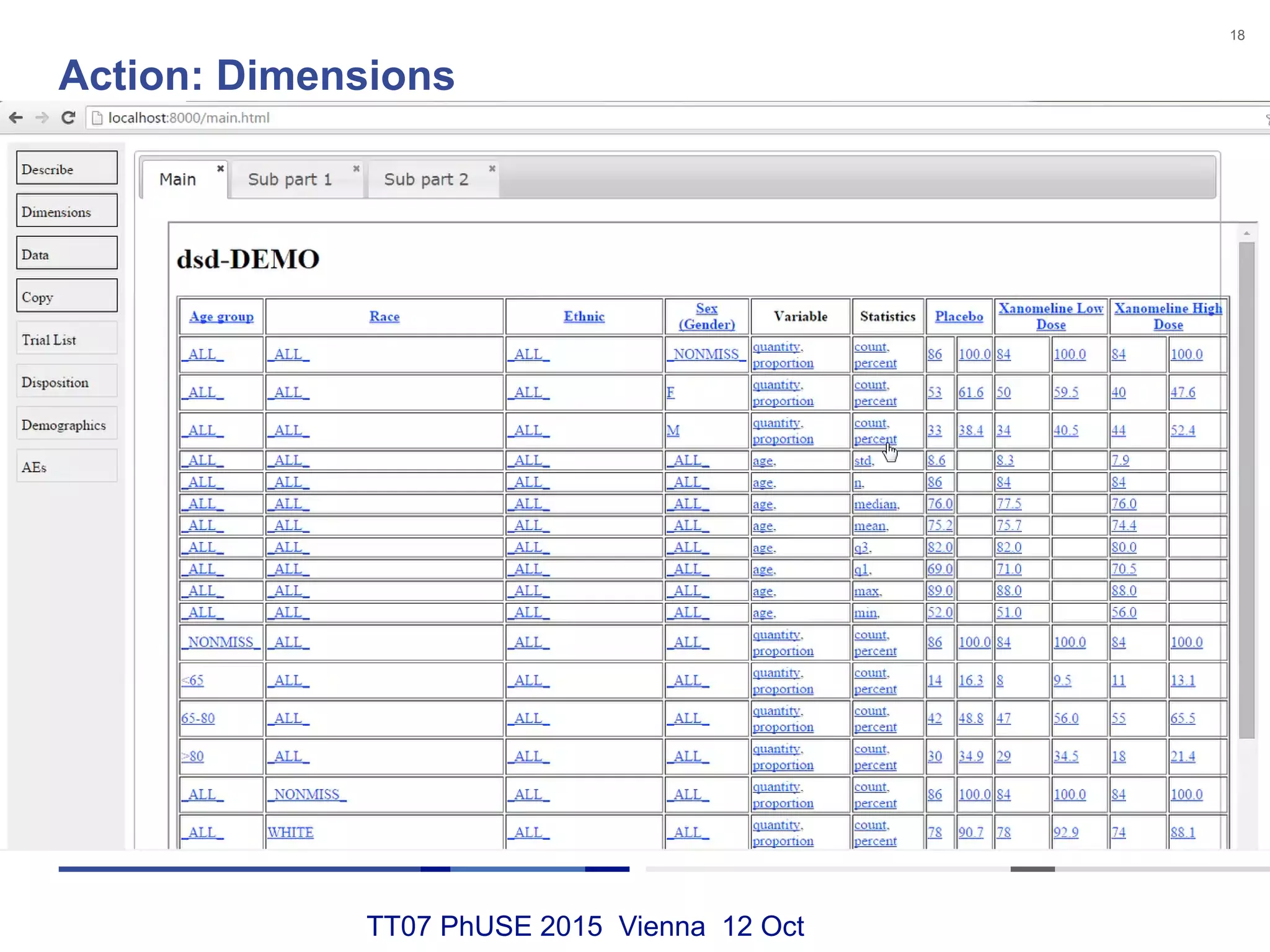

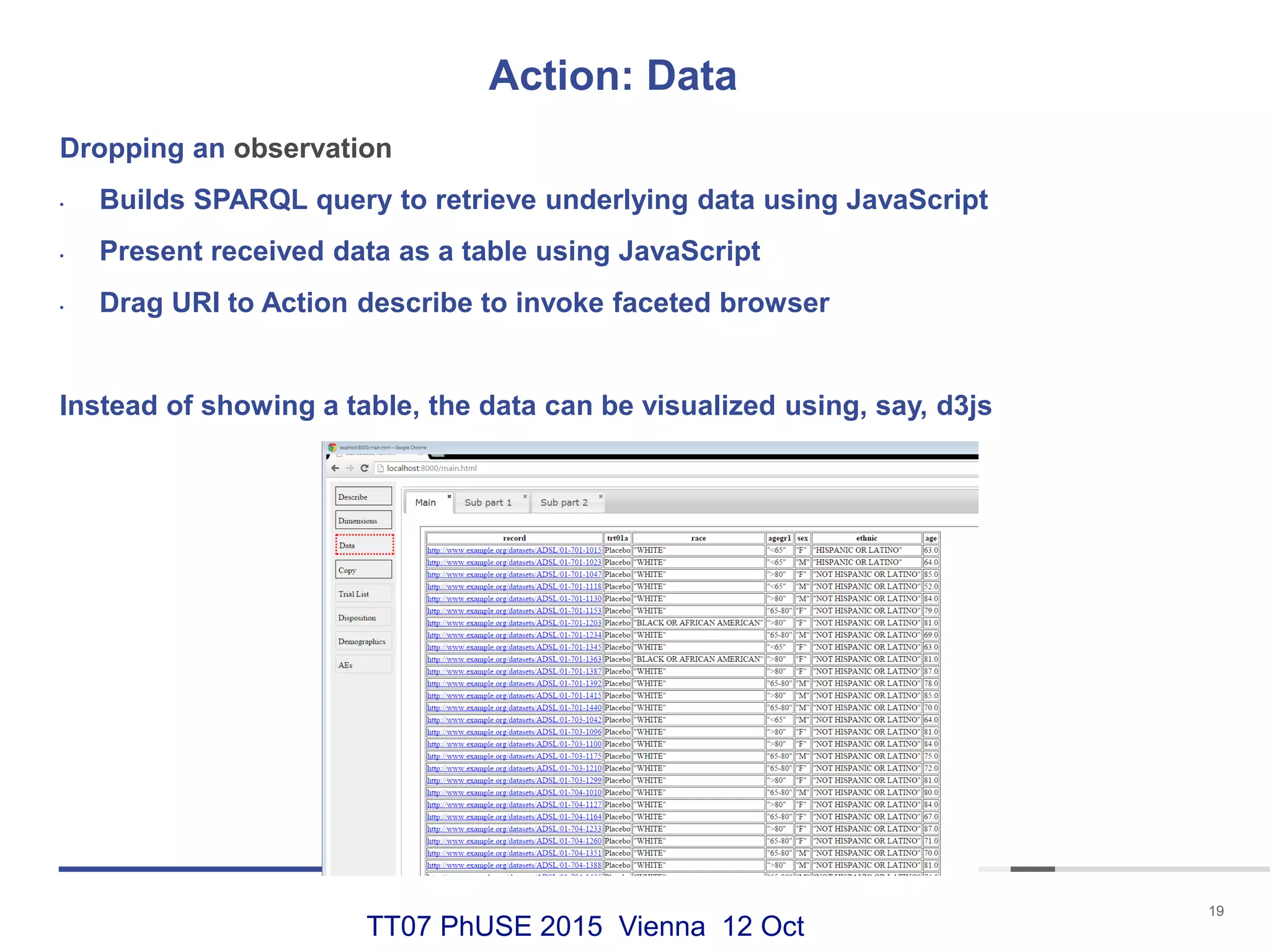

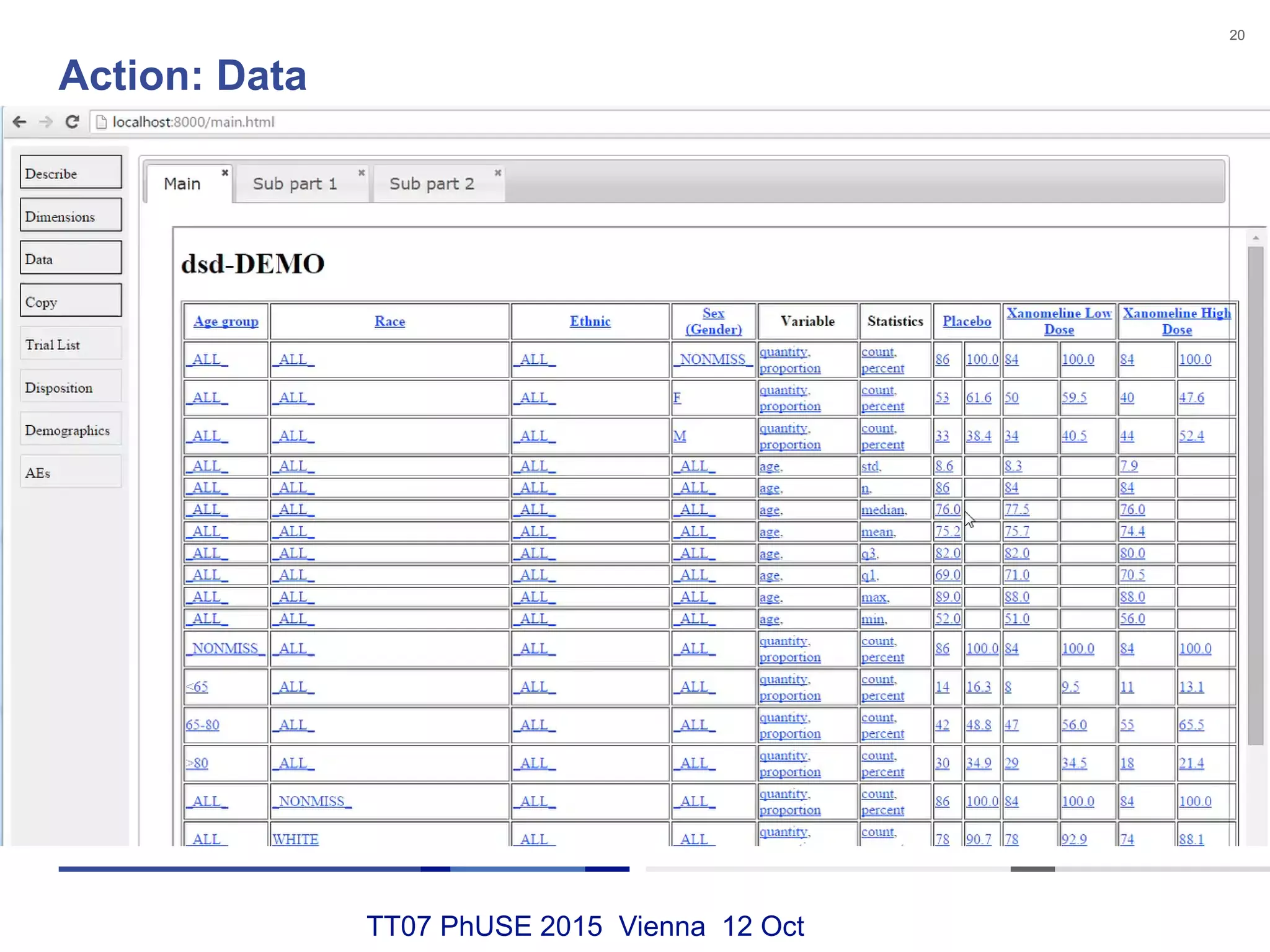

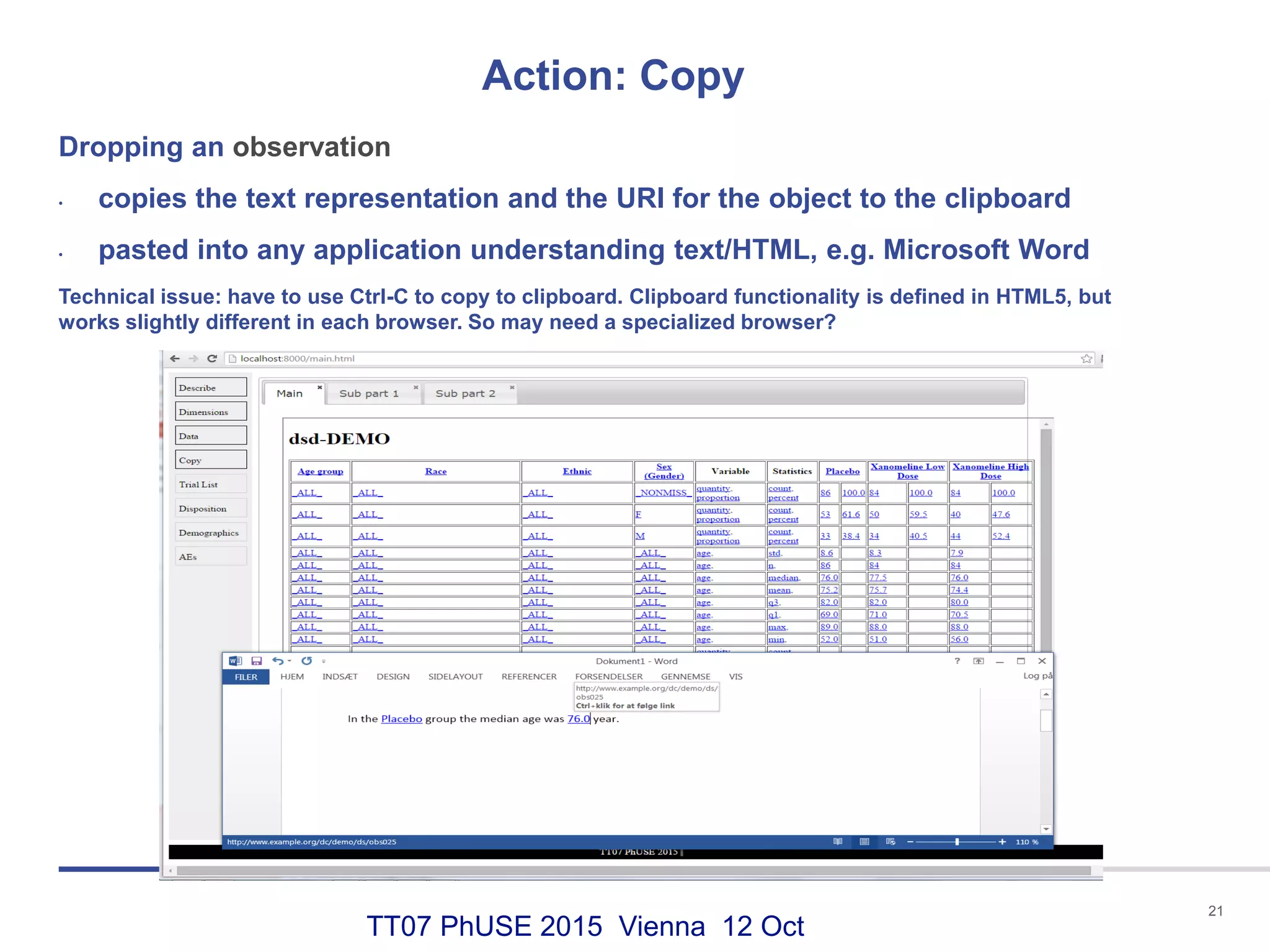

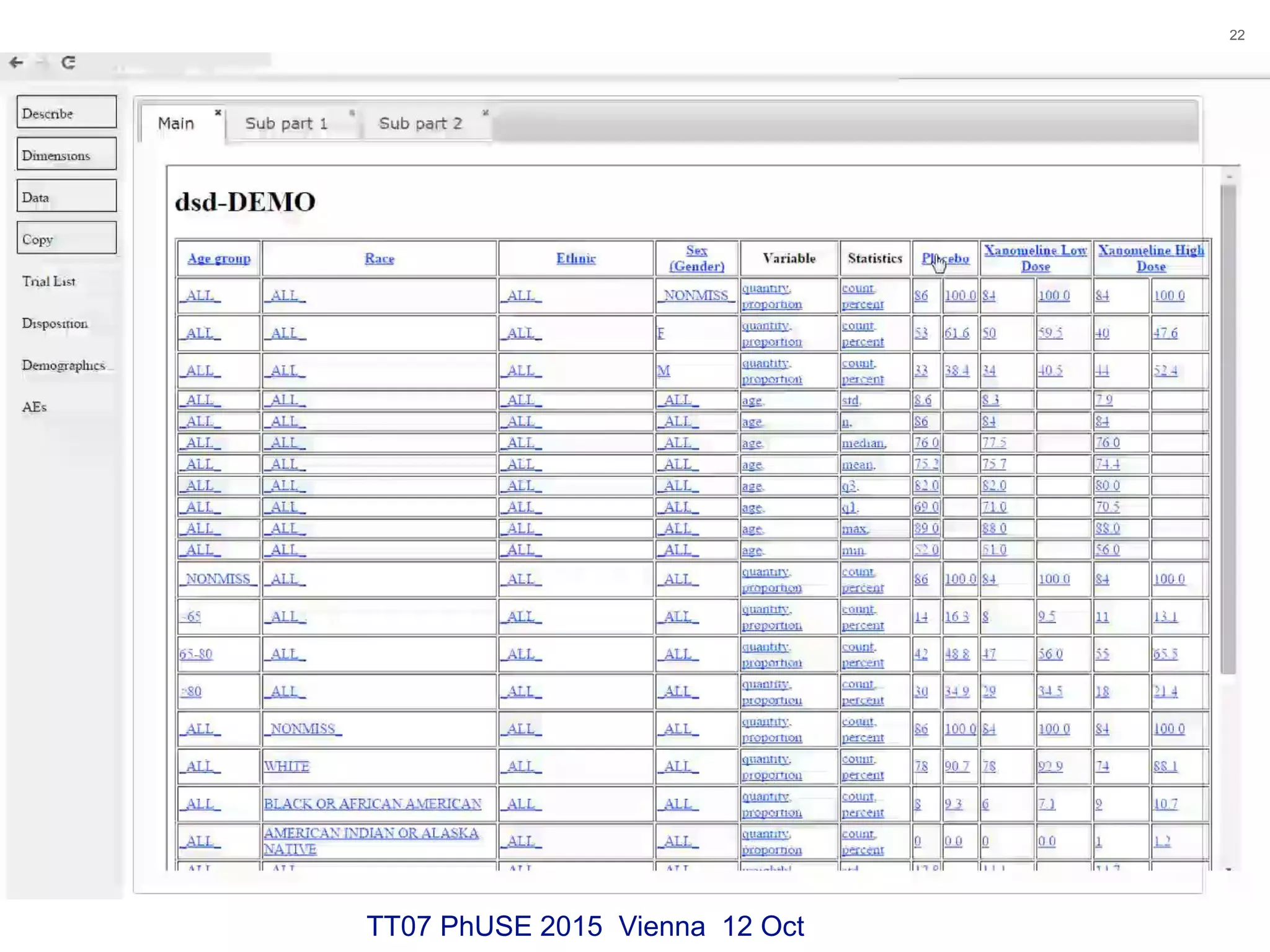

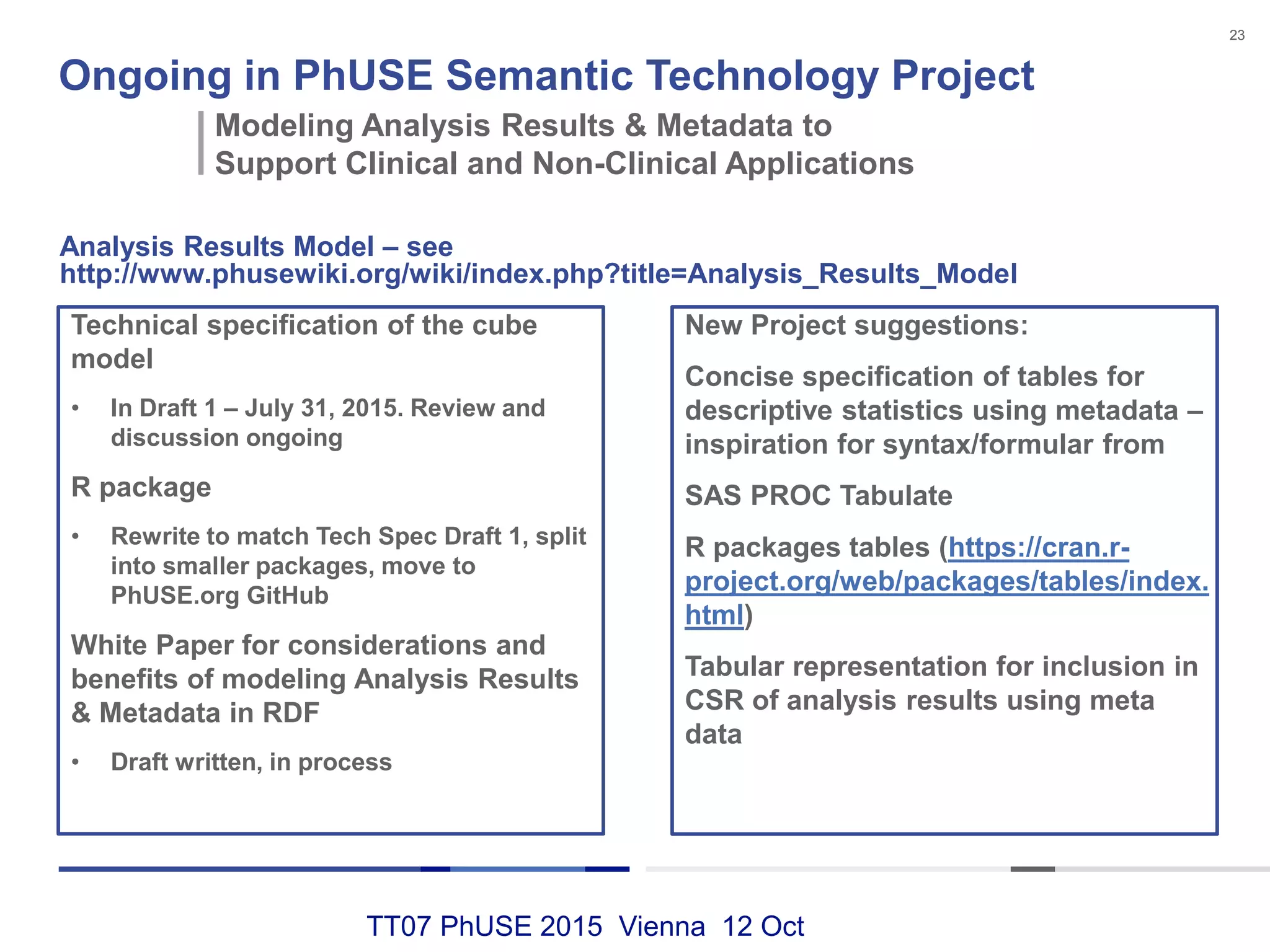

This document discusses using RDF and linked data principles to display clinical trial results as interactive graphs and summary tables. It describes how clinical data can be represented as RDF triples and rendered as directed graphs using D3.js. It also presents an interface with actions such as "Describe", "Dimensions", and "Data" that build and display SPARQL queries against an RDF data cube, allowing linked exploration and visualization of results. Ongoing work in the PhUSE Semantic Technology Project aims to further specify the RDF data cube model and to develop supporting R packages and documentation.
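The following is a minimal sketch of turning RDF triples into a directed graph that D3.js can draw. It assumes the triples are already available as `(subject, predicate, object)` tuples. The prefixed names (`ex:obs1`, `ex:treatment`, and so on) are hypothetical placeholders, and so is the rule that treats a term starting with a double quote as a literal. The output uses the `nodes`/`links` shape that a D3 force-directed layout usually consumes. Each subject and object becomes a node, and each predicate becomes a labelled, directed link.

```python
import json

# Hypothetical example triples; URIs, predicates and values are illustrative only.
TRIPLES = [
    ("ex:obs1", "rdf:type", "qb:Observation"),
    ("ex:obs1", "ex:treatment", "ex:Placebo"),
    ("ex:obs1", "ex:ageGroup", "ex:Age65plus"),
    ("ex:obs1", "ex:count", '"12"'),
]


def triples_to_d3(triples):
    """Convert (subject, predicate, object) triples into a nodes/links dict
    suitable for a D3.js force-directed graph."""
    seen = set()
    nodes = []
    links = []
    for s, p, o in triples:
        # Subjects and objects both become nodes, each added only once.
        for term in (s, o):
            if term not in seen:
                seen.add(term)
                # Simplifying assumption: a leading double quote marks a literal.
                nodes.append({"id": term, "literal": term.startswith('"')})
        # Each predicate becomes a directed, labelled edge from subject to object.
        links.append({"source": s, "target": o, "label": p})
    return {"nodes": nodes, "links": links}


if __name__ == "__main__":
    print(json.dumps(triples_to_d3(TRIPLES), indent=2))
```

Links reference nodes by their `id` string rather than by array index. On the JavaScript side, such links would be paired with an id accessor on the link force, for example `d3.forceLink(links).id(d => d.id)`. Marking literals lets the page style them differently from resource nodes.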
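The "Describe", "Dimensions", and "Data" actions each build a SPARQL query against the data cube. The sketch below shows one way they could be composed, assuming the cube follows the W3C RDF Data Cube vocabulary (`qb:`). The function names, the `ACTIONS` mapping, and the example dataset URI are all hypothetical. They are not the queries used by the interface in the slides.

```python
# W3C RDF Data Cube vocabulary namespace.
QB_PREFIX = "PREFIX qb: <http://purl.org/linked-data/cube#>\n"


def describe_query(dataset_uri):
    """'Describe' (hypothetical): ask the endpoint for an RDF description of the dataset."""
    return f"DESCRIBE <{dataset_uri}>"


def dimensions_query(dataset_uri):
    """'Dimensions' (hypothetical): list the dimension properties declared in
    the dataset's data structure definition."""
    return (
        QB_PREFIX
        + "SELECT DISTINCT ?dimension WHERE {\n"
        + f"  <{dataset_uri}> qb:structure ?dsd .\n"
        + "  ?dsd qb:component ?component .\n"
        + "  ?component qb:dimension ?dimension .\n"
        + "}"
    )


def data_query(dataset_uri, limit=100):
    """'Data' (hypothetical): fetch observations of the dataset with all their
    property/value pairs, capped at `limit` rows."""
    return (
        QB_PREFIX
        + "SELECT ?obs ?property ?value WHERE {\n"
        + "  ?obs a qb:Observation ;\n"
        + f"       qb:dataSet <{dataset_uri}> ;\n"
        + "       ?property ?value .\n"
        + "}\n"
        + f"LIMIT {limit}"
    )


# Hypothetical mapping from interface action names to query builders.
ACTIONS = {
    "Describe": describe_query,
    "Dimensions": dimensions_query,
    "Data": data_query,
}

if __name__ == "__main__":
    ds = "http://example.org/ds/demographics"  # hypothetical dataset URI
    for name, build in ACTIONS.items():
        print(f"# {name}\n{build(ds)}\n")
```

Keeping the query text separate from its execution means the interface can show users the exact SPARQL it is about to run. The same string can then be sent to whichever SPARQL endpoint hosts the cube.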