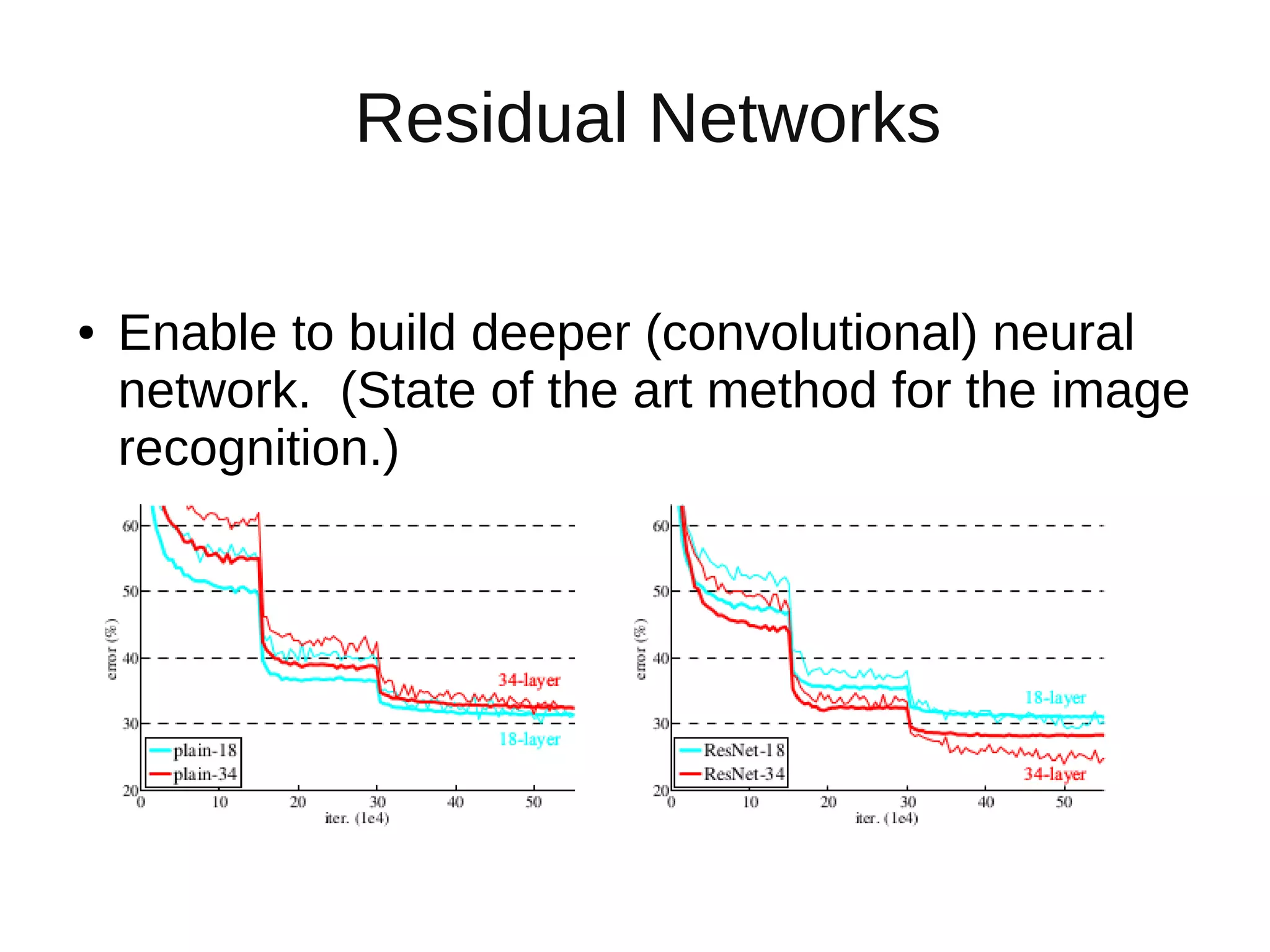

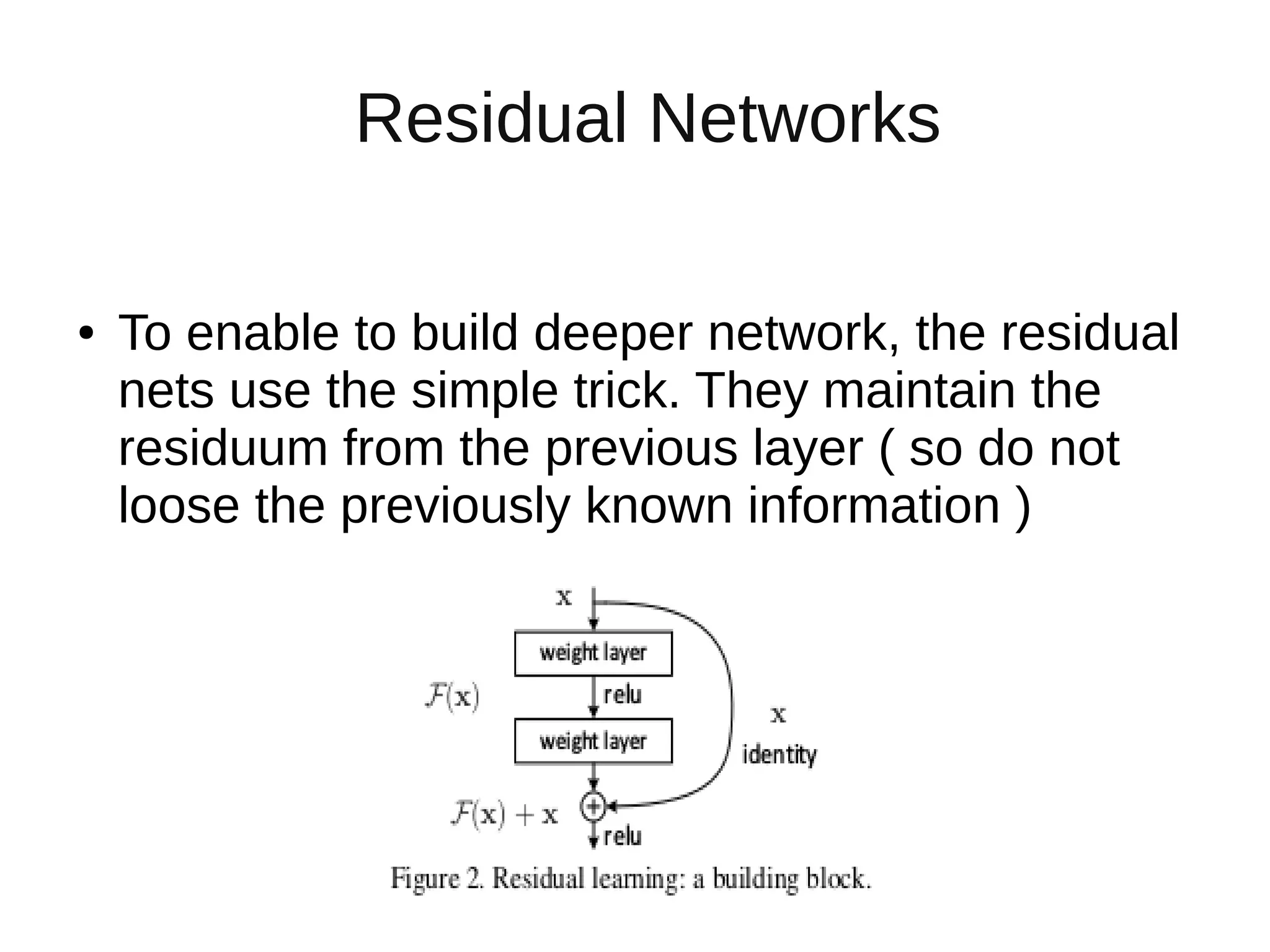

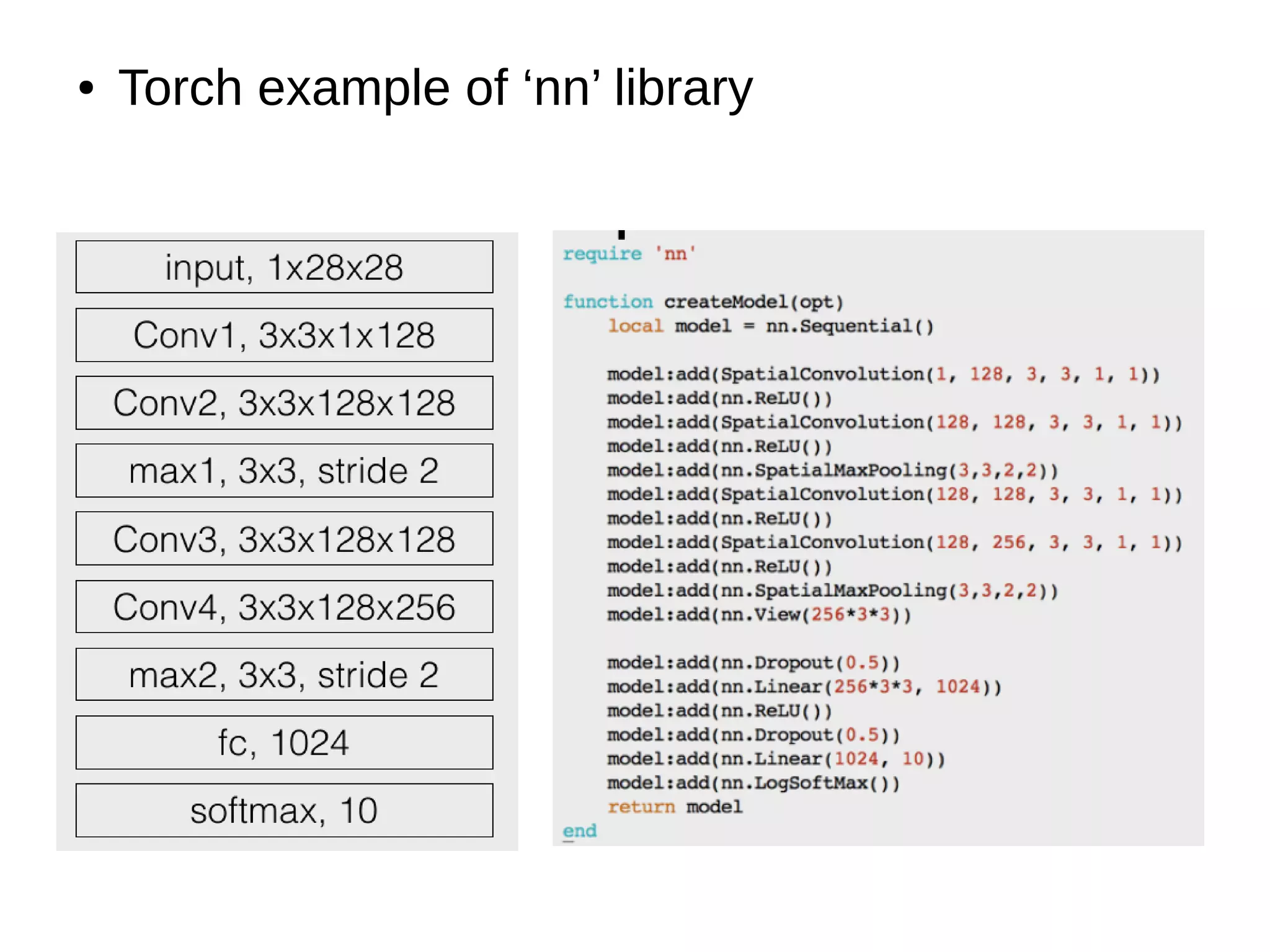

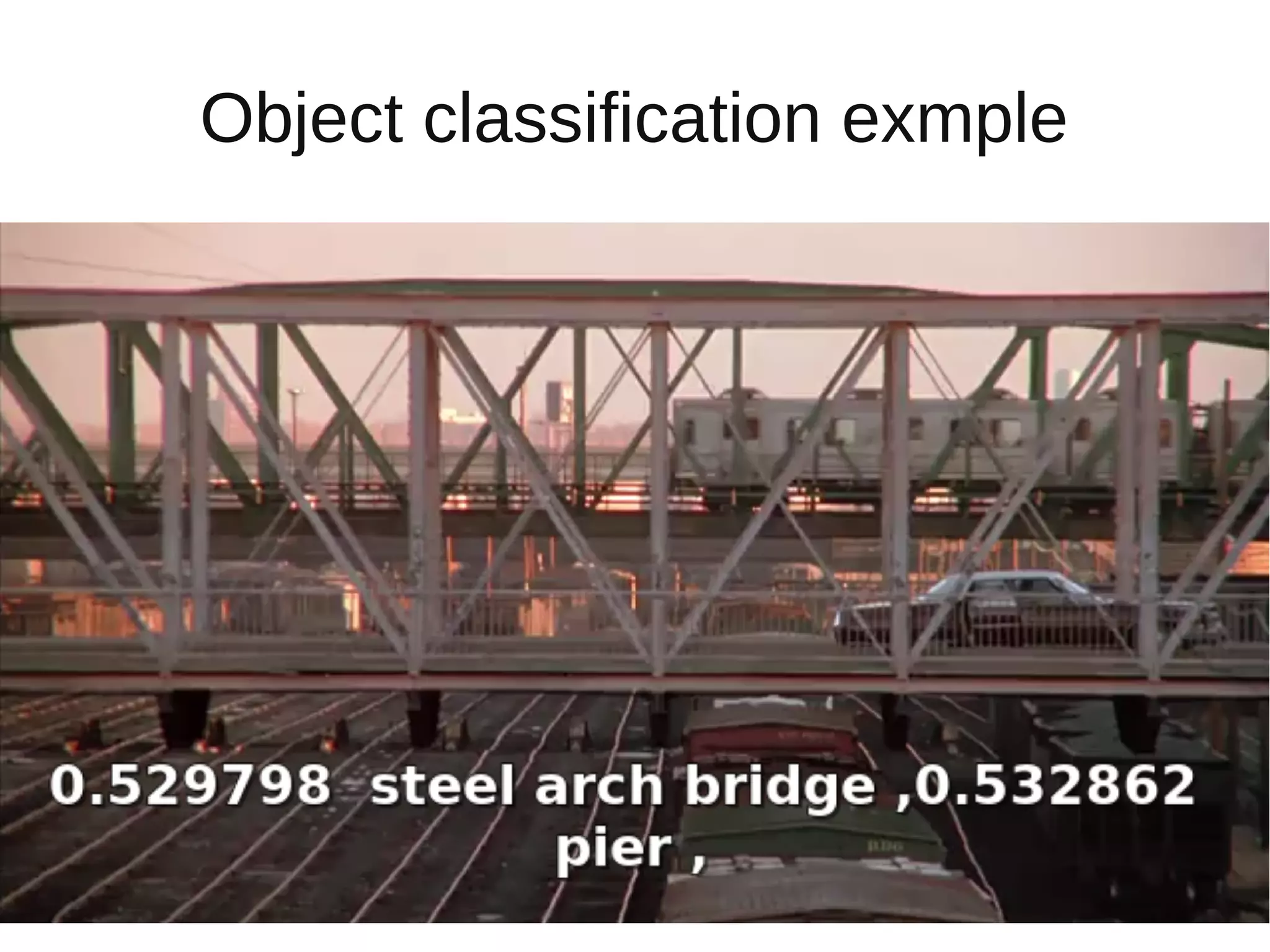

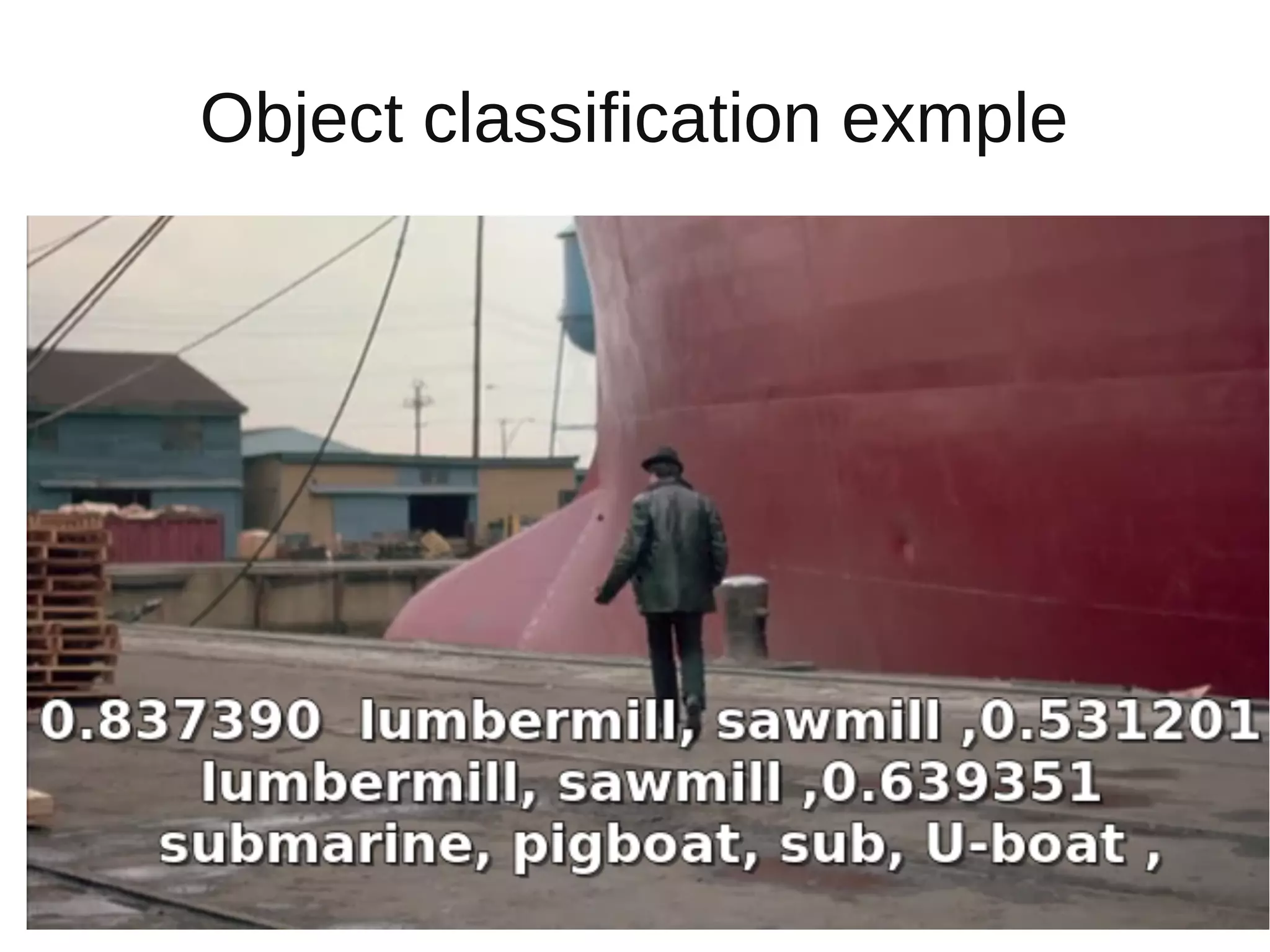

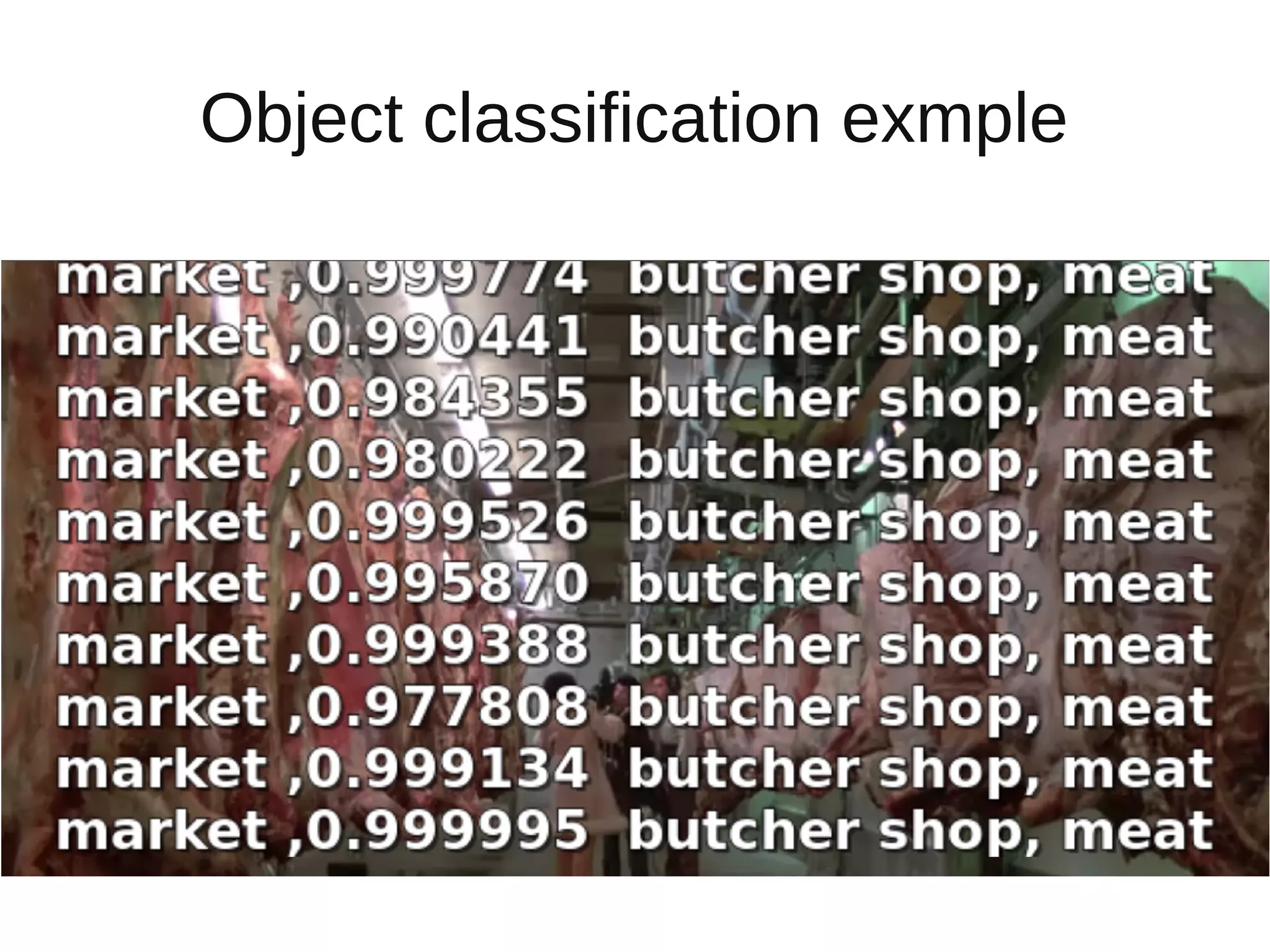

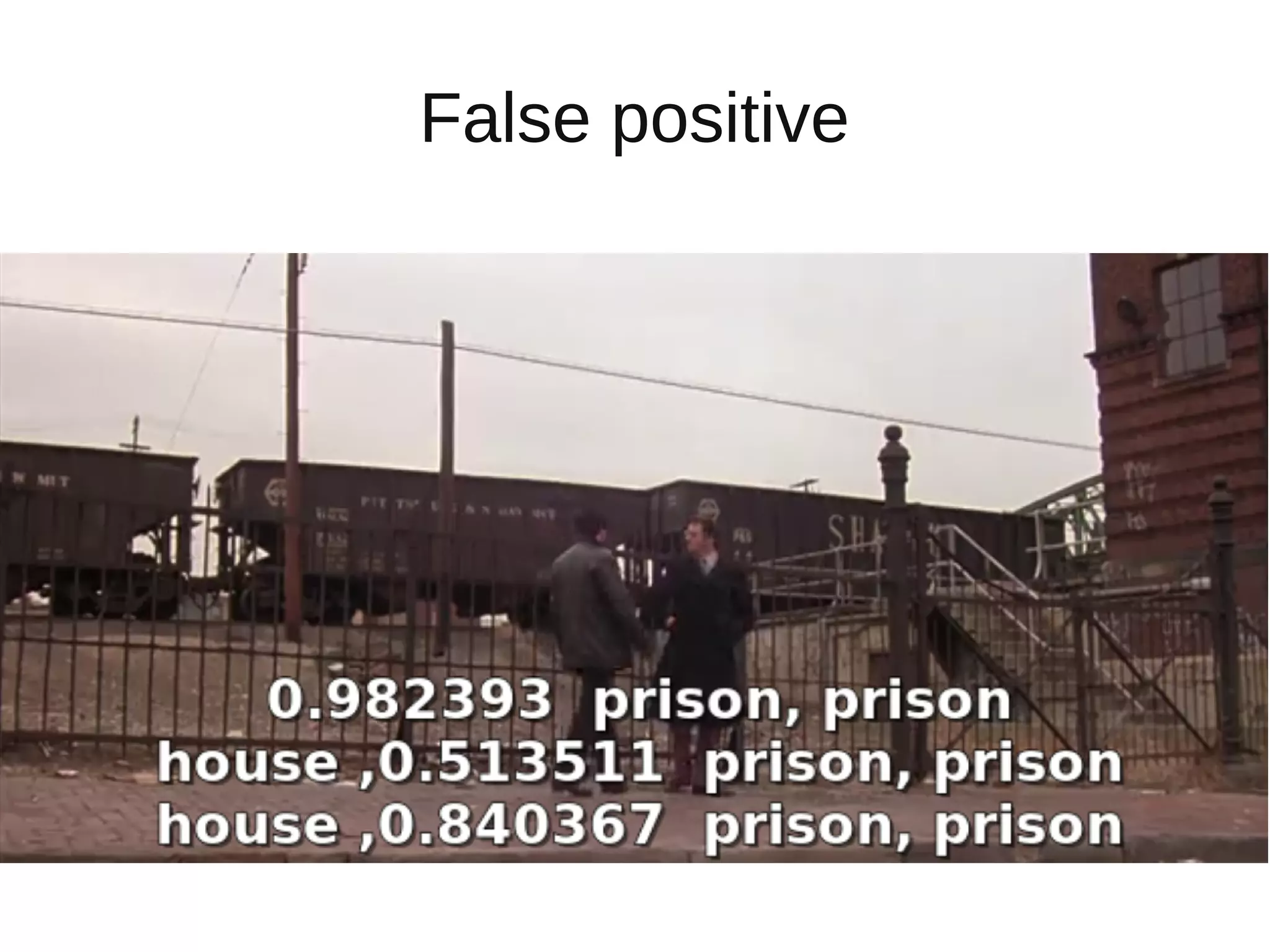

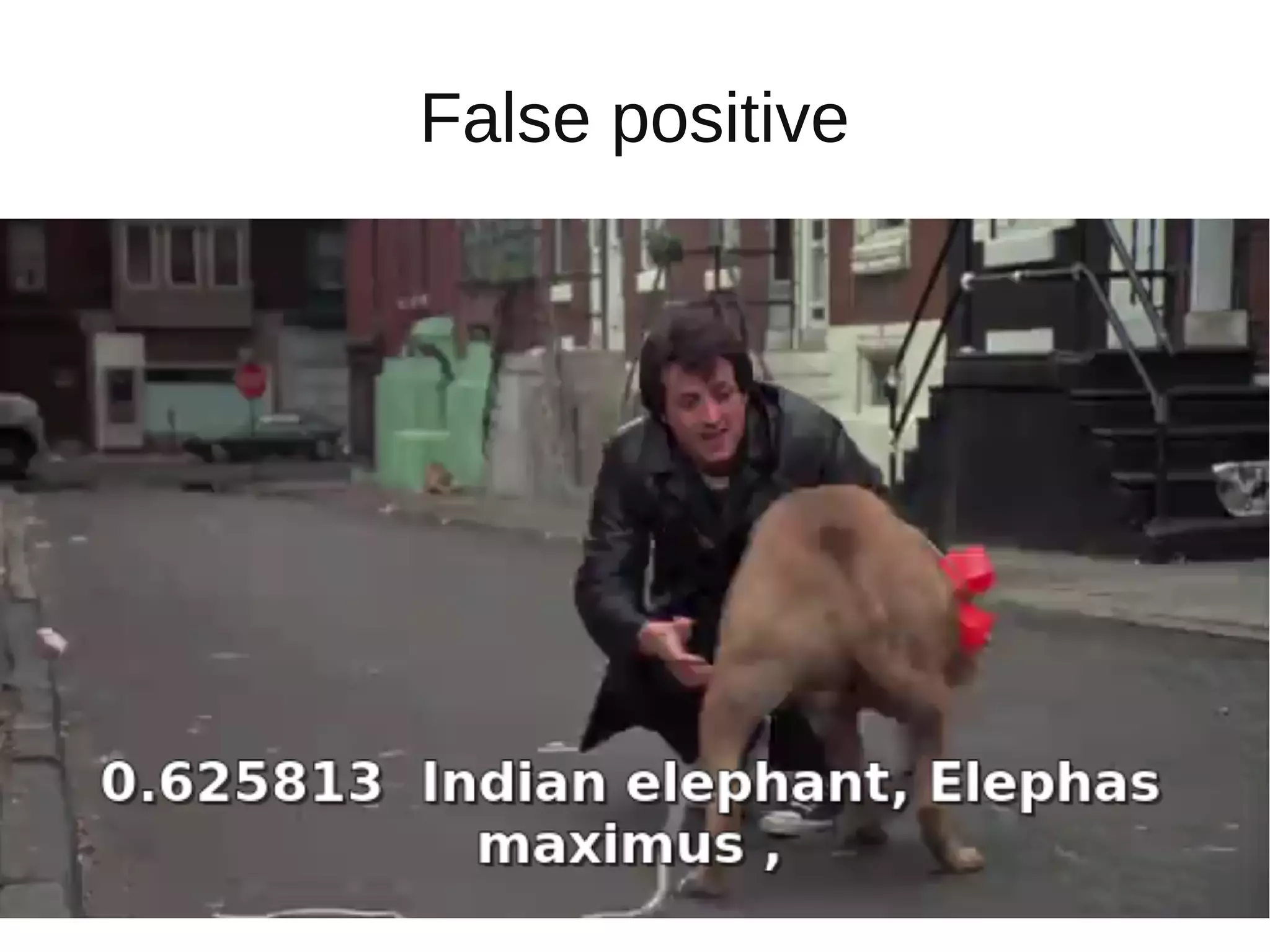

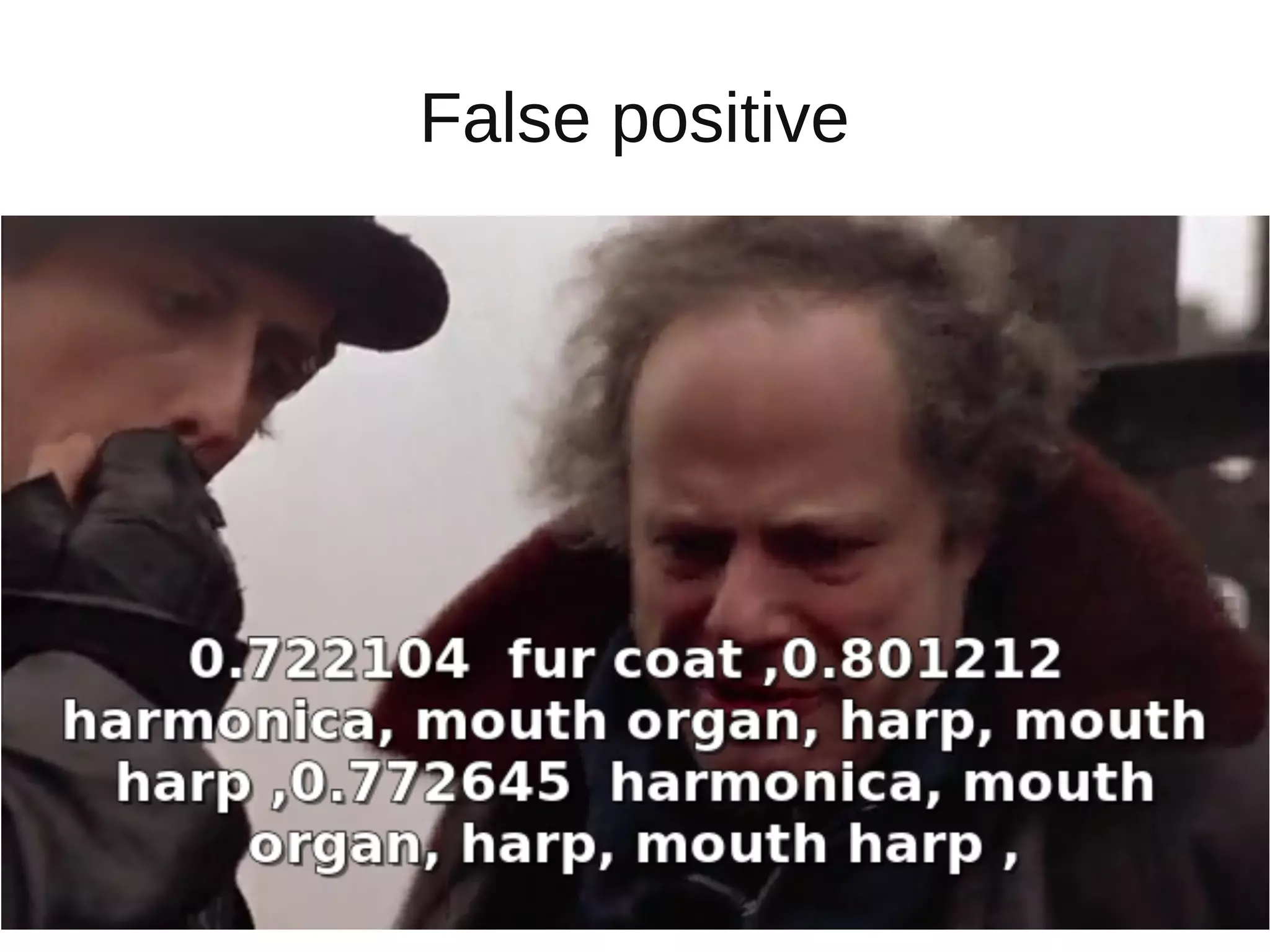

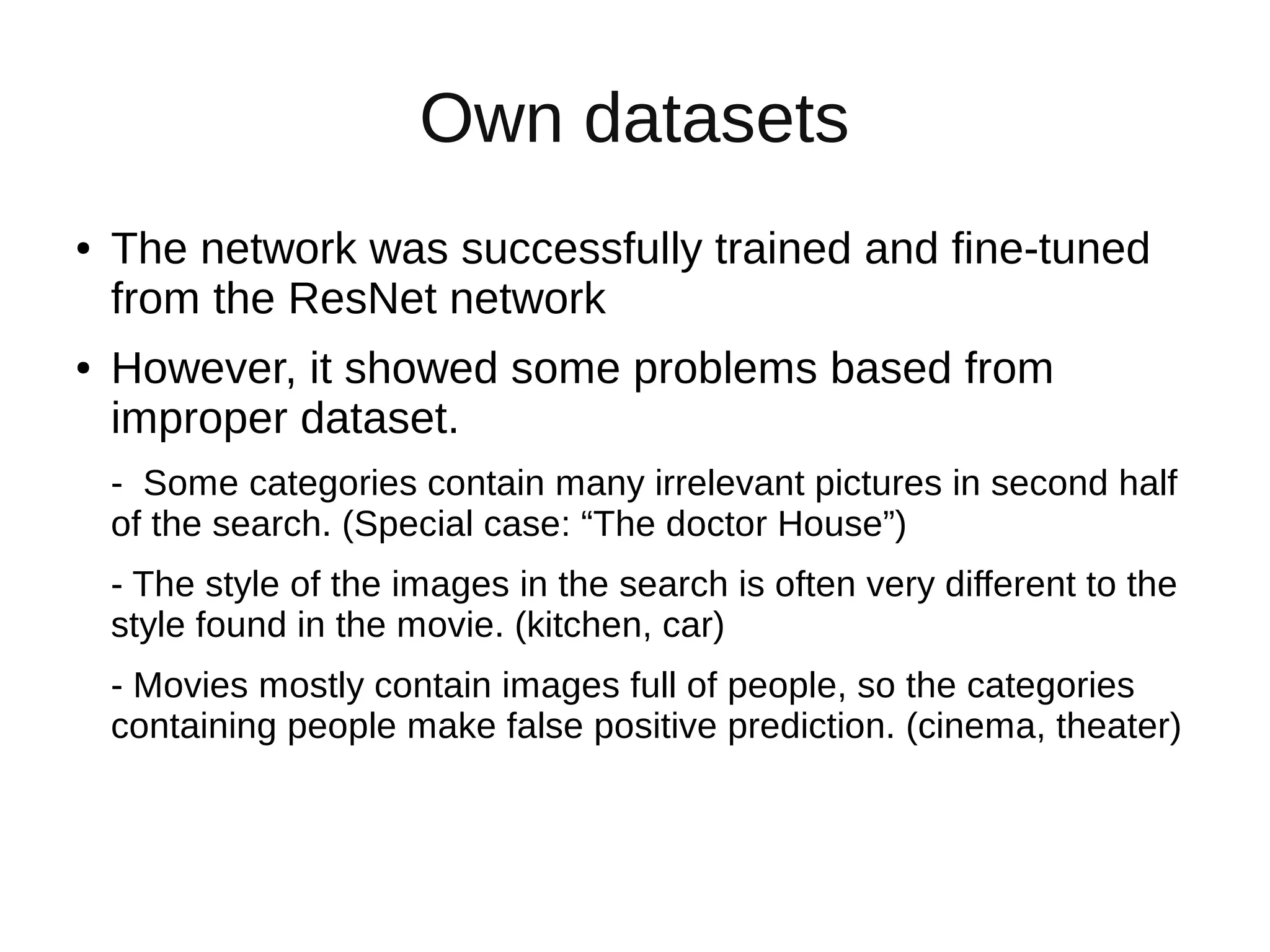

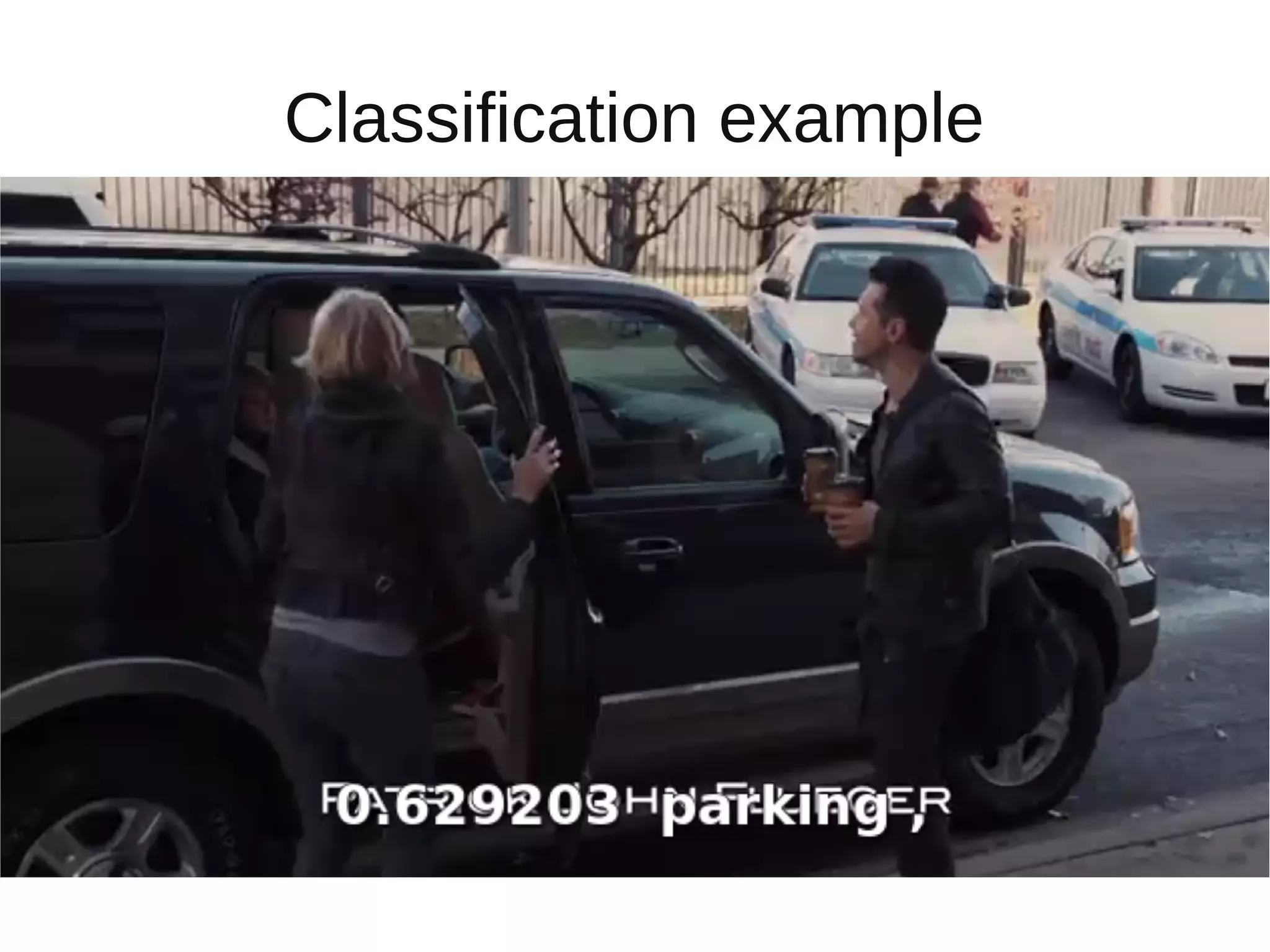

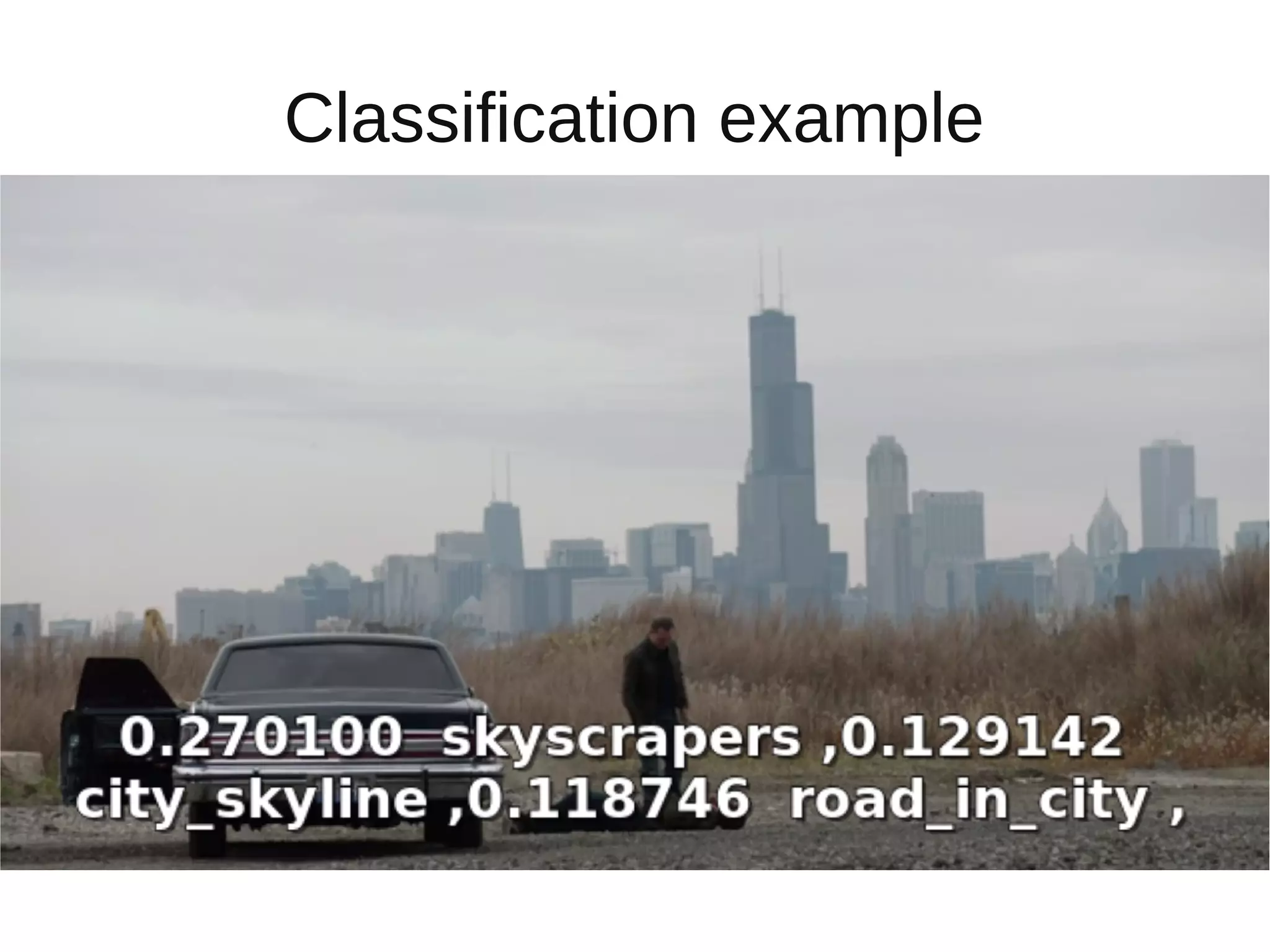

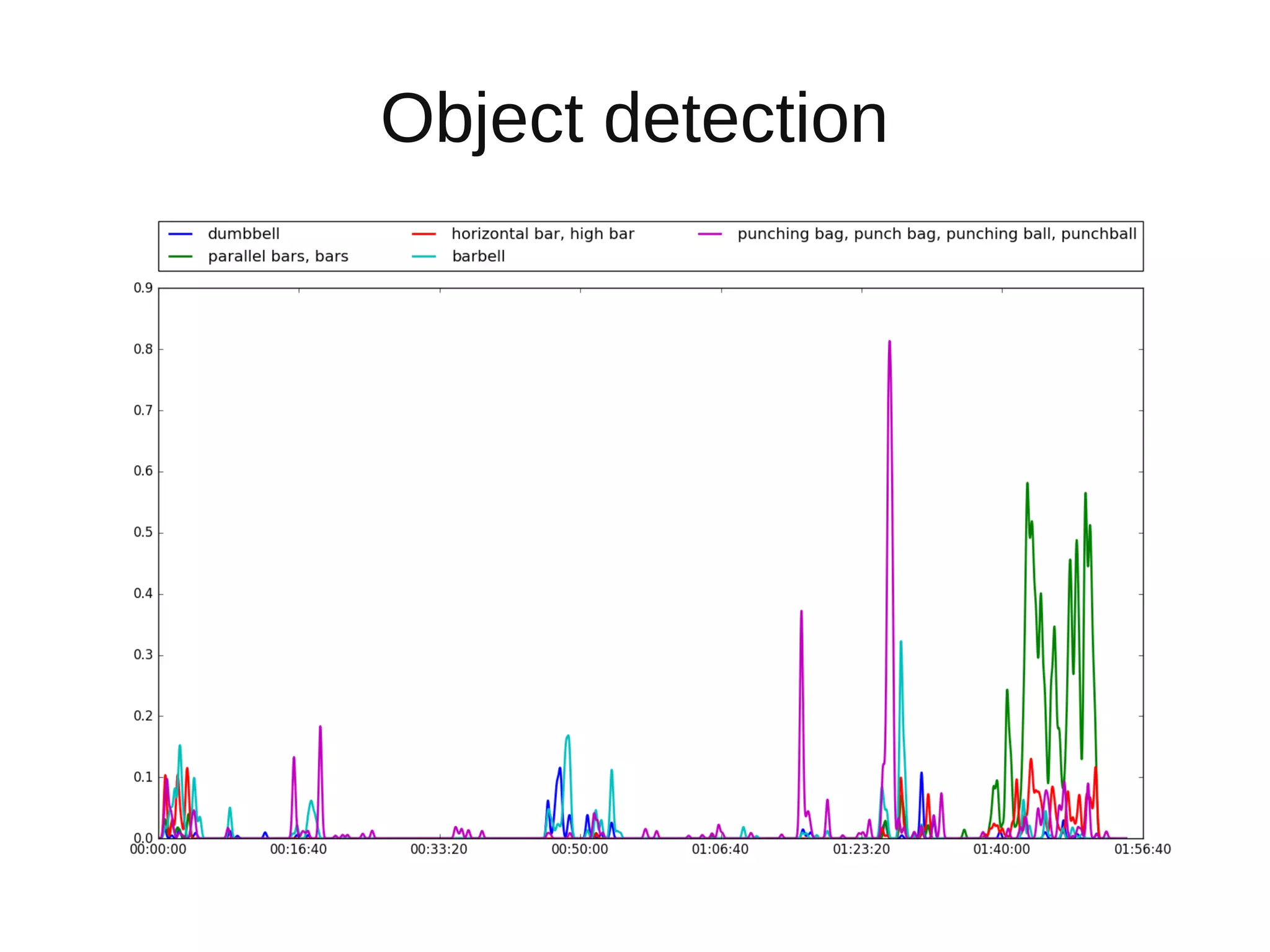

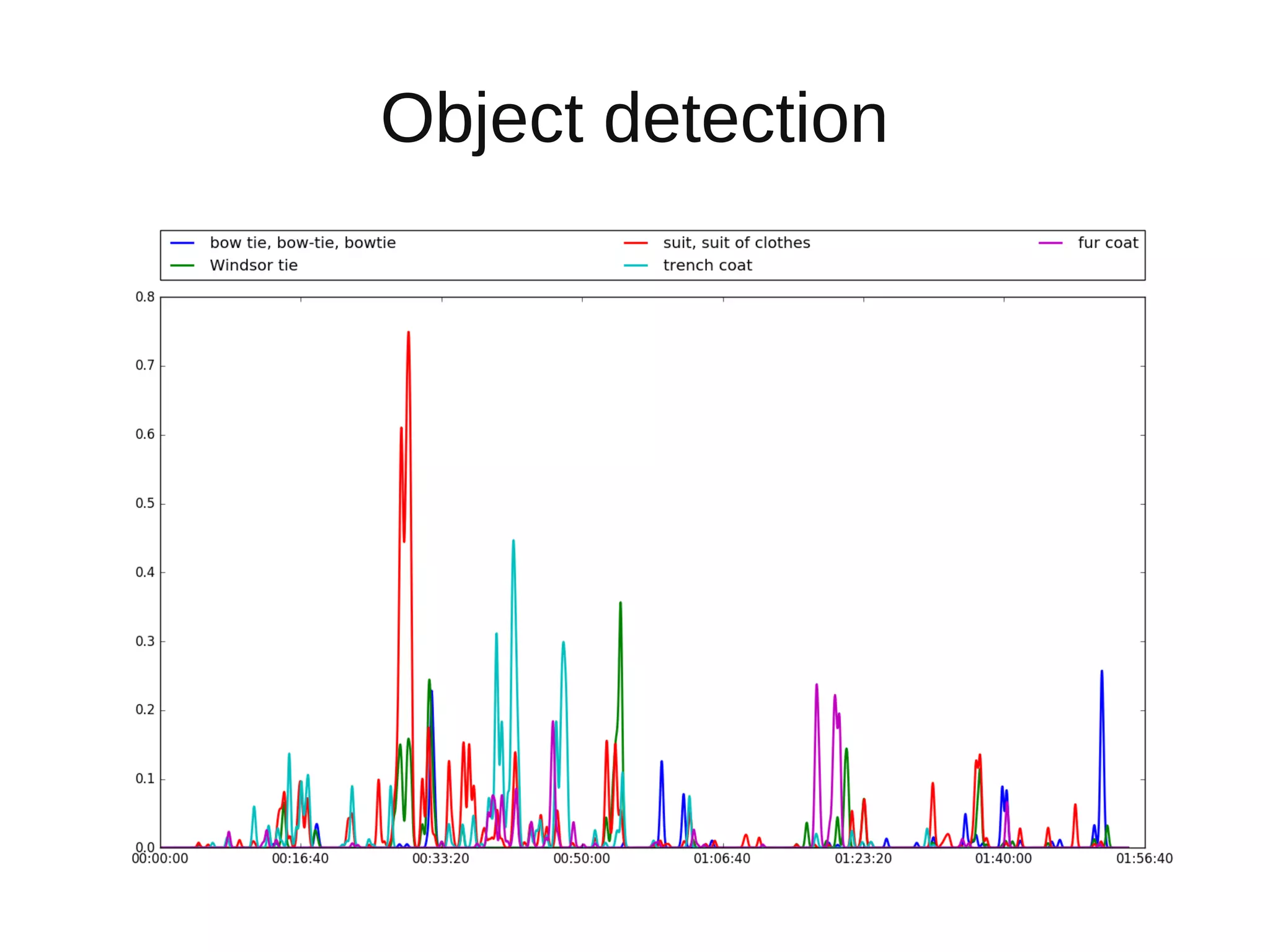

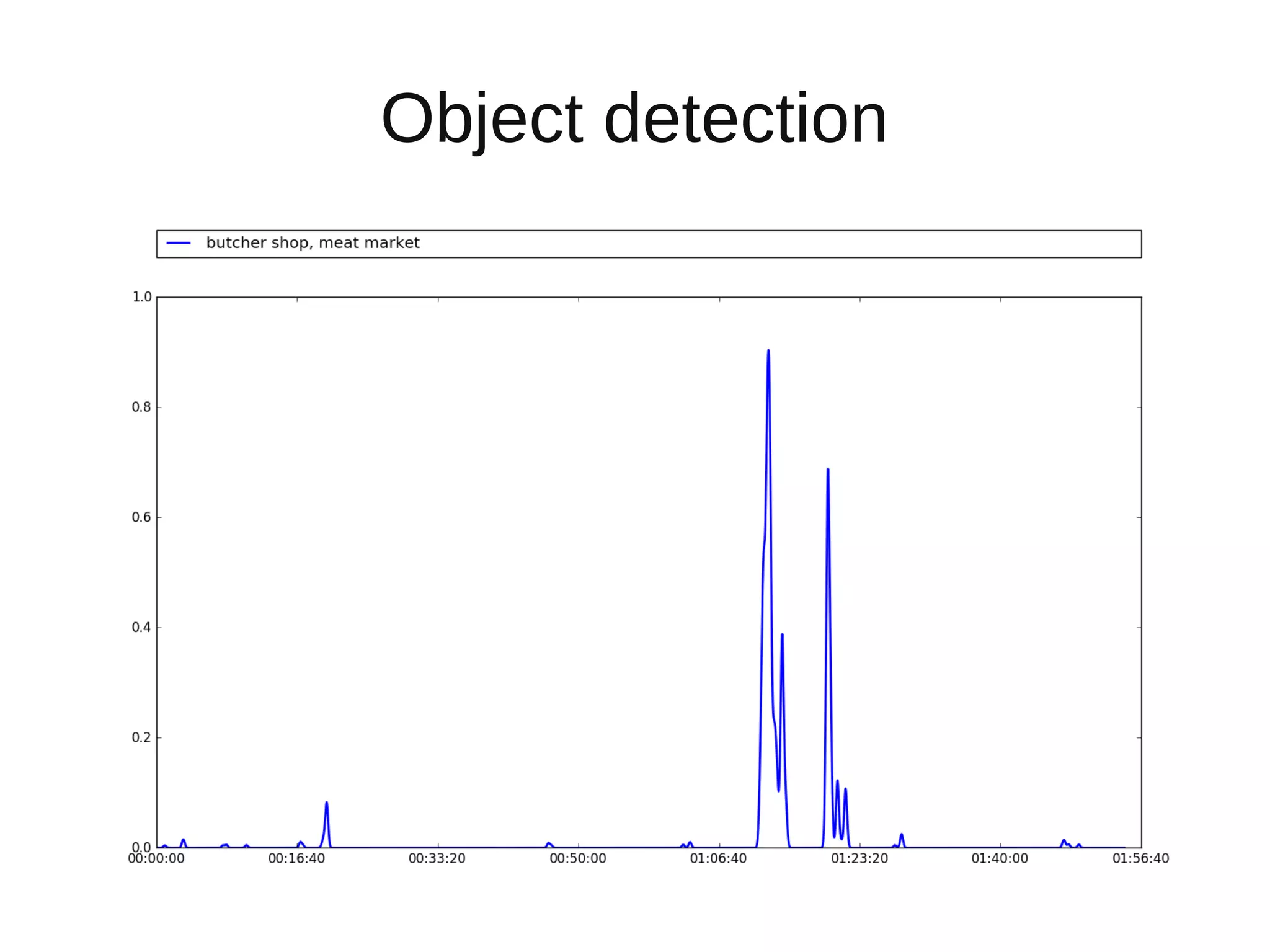

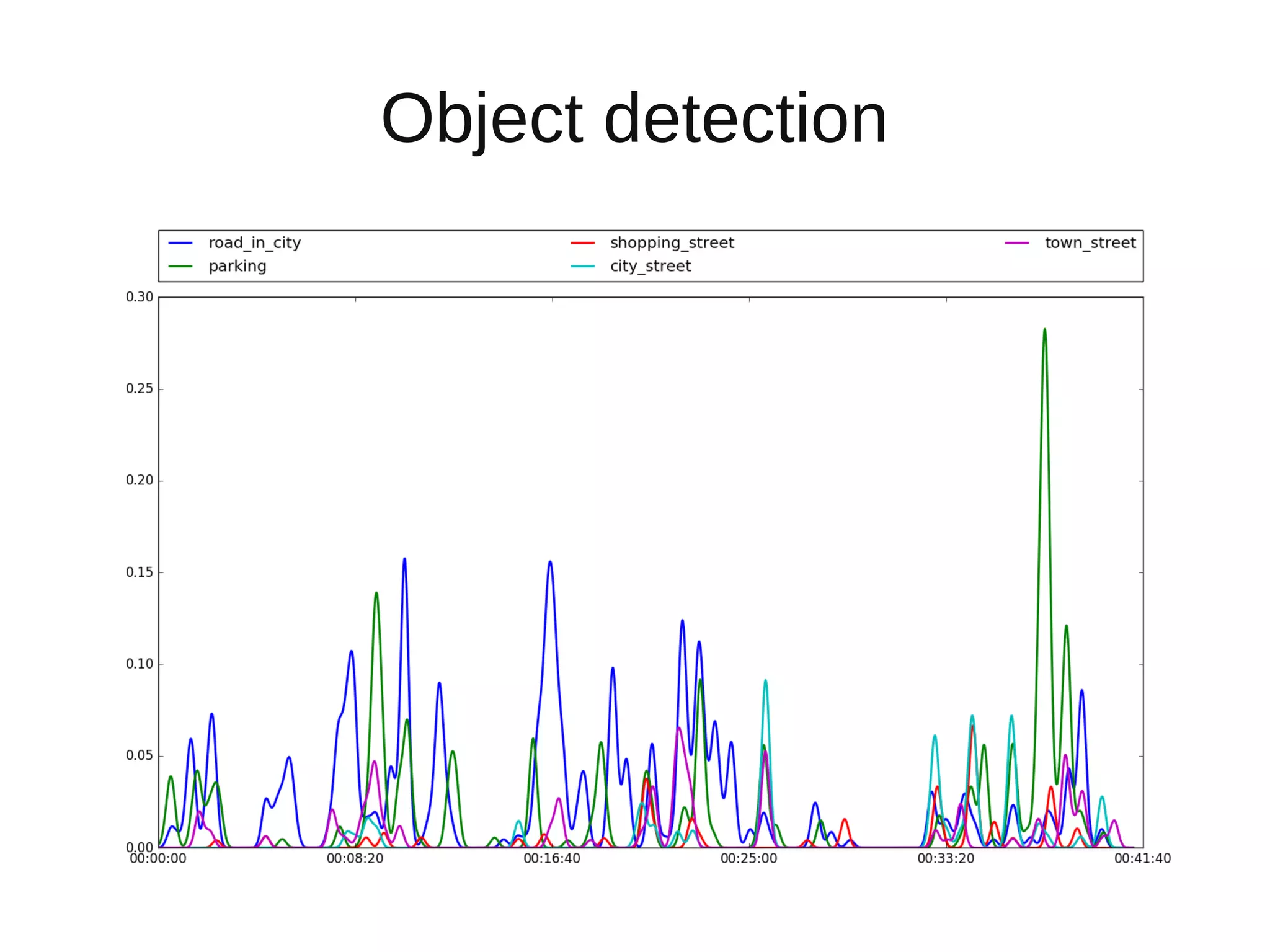

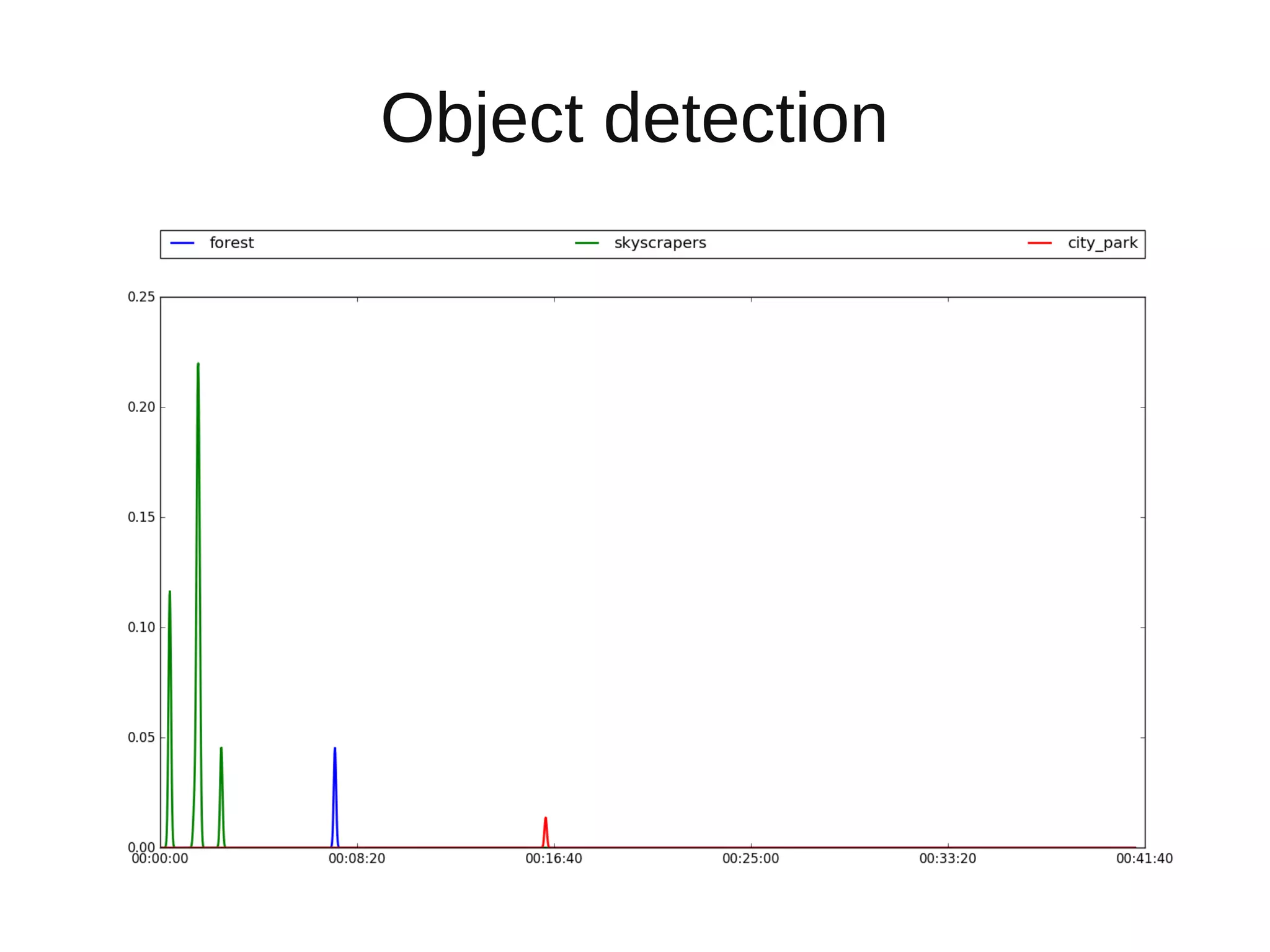

The document describes how deep learning can be used to extract features from video frames of movies on the Showmax streaming platform in order to explore similarities among films. It covers the use of residual networks and the Torch library to build the models, the frame classification that was achieved, and the classification challenges that came up along the way. Future steps include making the tools more user-friendly and integrating the feature vector analyses that prove successful into existing movie recommendation systems.
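
To make the idea of comparing films through frame features more concrete, here is a minimal sketch in plain Python. It is not taken from the slides: it assumes that each frame has already been turned into a feature vector (for example by a residual network), that a film can be represented by the mean of its frame vectors, and that cosine similarity is a reasonable way to compare films. The names `mean_vector`, `cosine_similarity`, `most_similar`, and the toy film titles and numbers are all hypothetical.

```python
import math
from typing import Dict, List, Sequence, Tuple

Vector = List[float]


def mean_vector(frames: Sequence[Sequence[float]]) -> Vector:
    """Average per-frame feature vectors into a single vector for one film."""
    if not frames:
        raise ValueError("need at least one frame vector")
    dim = len(frames[0])
    if any(len(frame) != dim for frame in frames):
        raise ValueError("all frame vectors must have the same length")
    return [sum(frame[i] for frame in frames) / len(frames) for i in range(dim)]


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine of the angle between two vectors; 0.0 if either has zero length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


def most_similar(
    title: str, film_vectors: Dict[str, Vector], top_k: int = 3
) -> List[Tuple[str, float]]:
    """Rank the other films by cosine similarity to the given film."""
    query = film_vectors[title]
    scored = [
        (other, cosine_similarity(query, vector))
        for other, vector in film_vectors.items()
        if other != title
    ]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:top_k]


# Toy stand-ins for frame features; real vectors would come from the network
# and would have far more dimensions. These values are made up for illustration.
frame_features = {
    "film_a": [[1.0, 0.0, 0.0], [0.8, 0.2, 0.0]],
    "film_b": [[0.9, 0.1, 0.0], [1.0, 0.0, 0.1]],
    "film_c": [[0.0, 0.0, 1.0], [0.1, 0.0, 0.9]],
}

film_vectors = {title: mean_vector(frames) for title, frames in frame_features.items()}
ranking = most_similar("film_a", film_vectors)

for other, score in ranking:
    print(f"{other}: {score:.3f}")
```

With this toy data, `film_b` ranks above `film_c` for `film_a` because its averaged vector points in nearly the same direction. Averaging frames is only one possible way to summarise a film, and a recommendation system might weight or cluster frames differently.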