The document discusses different database locking strategies in Hibernate and Spring, including:

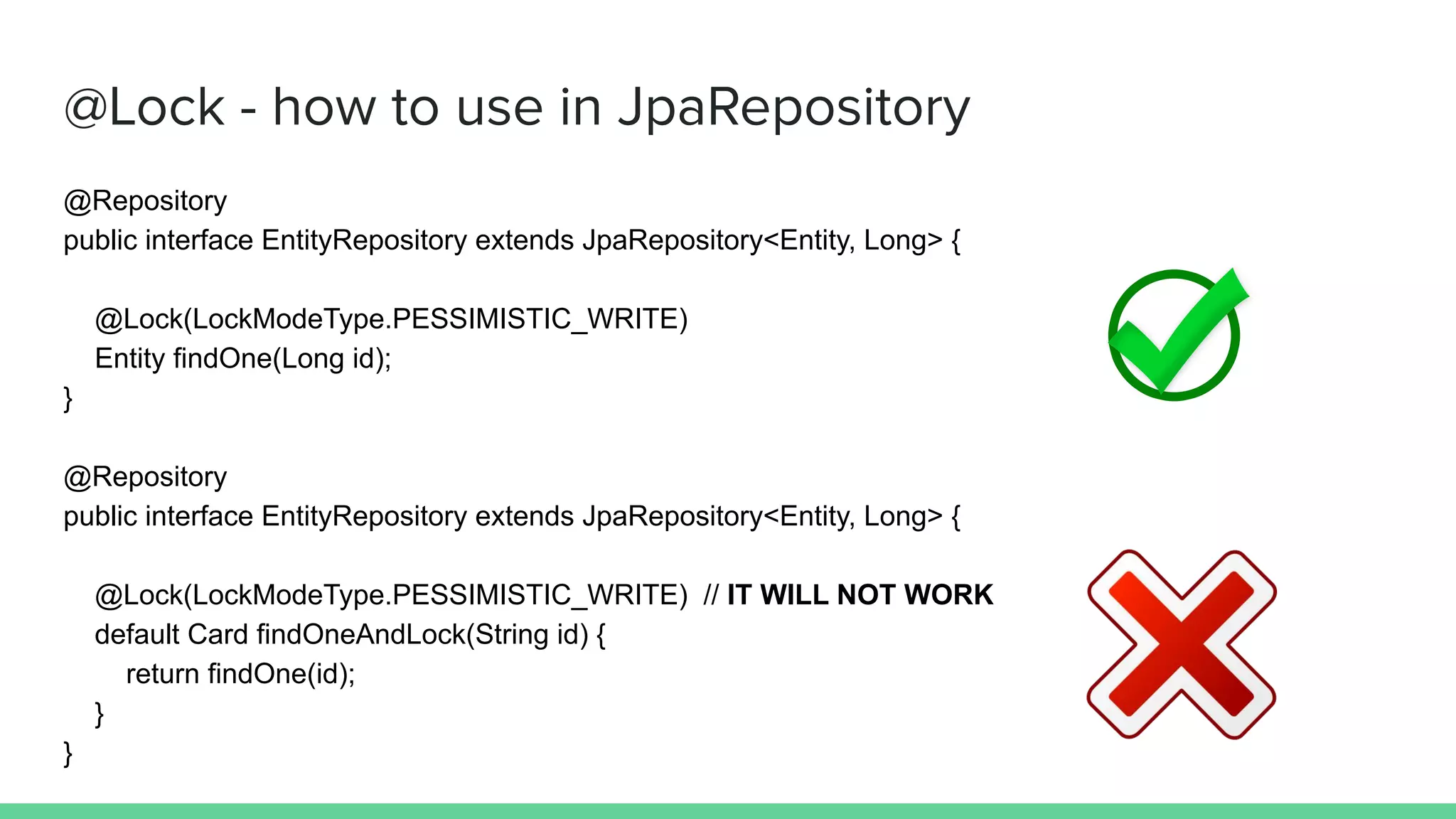

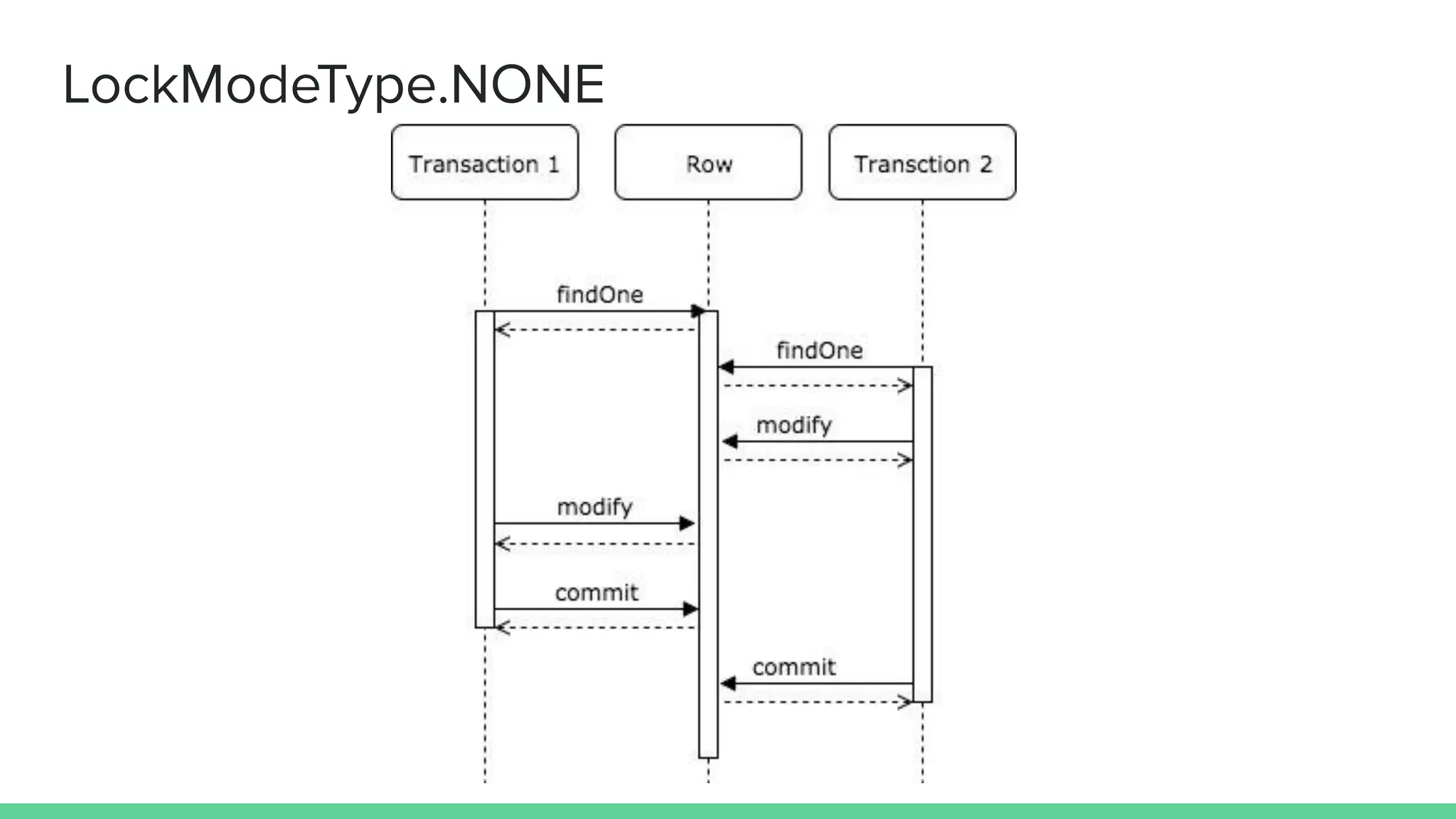

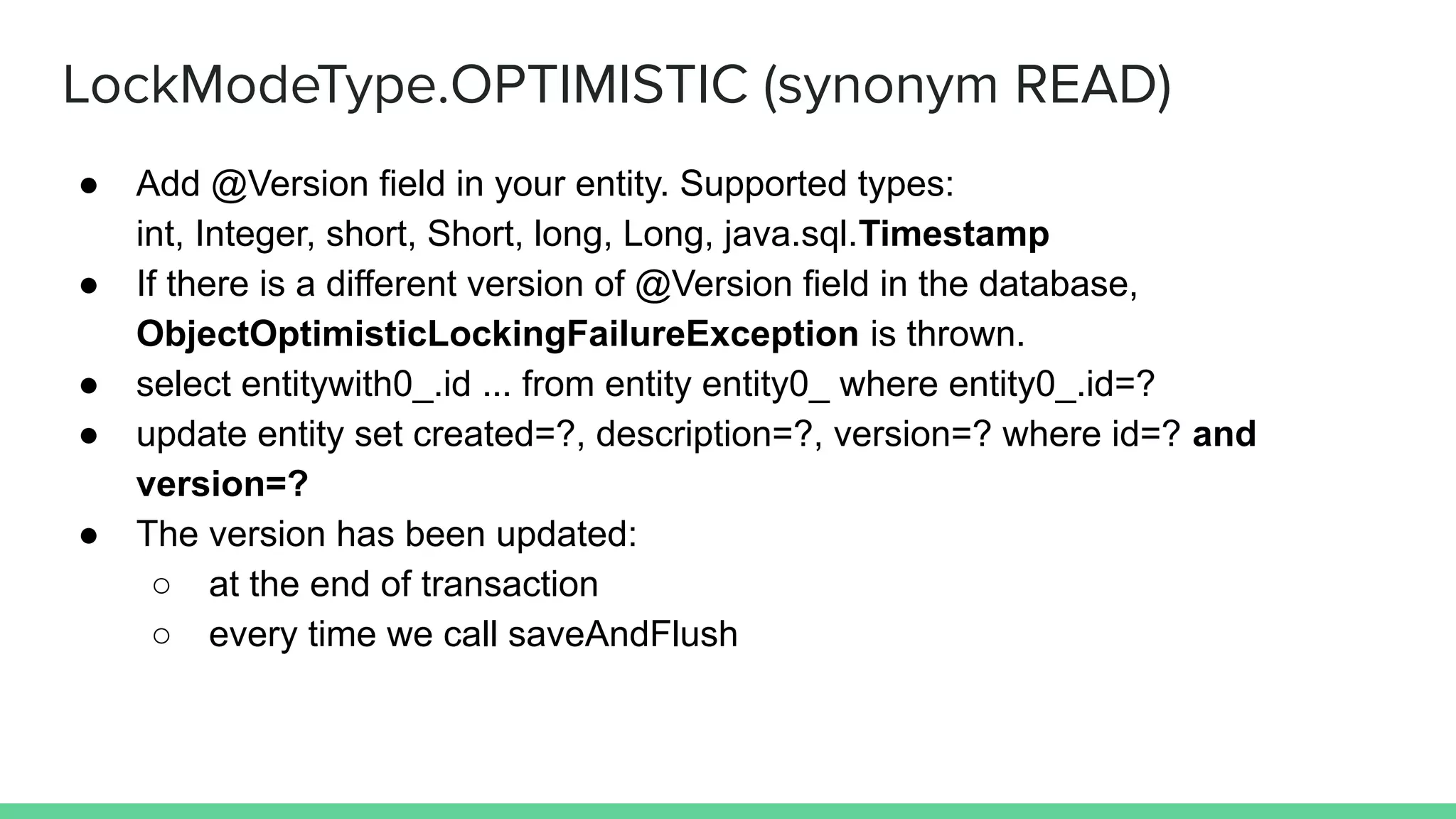

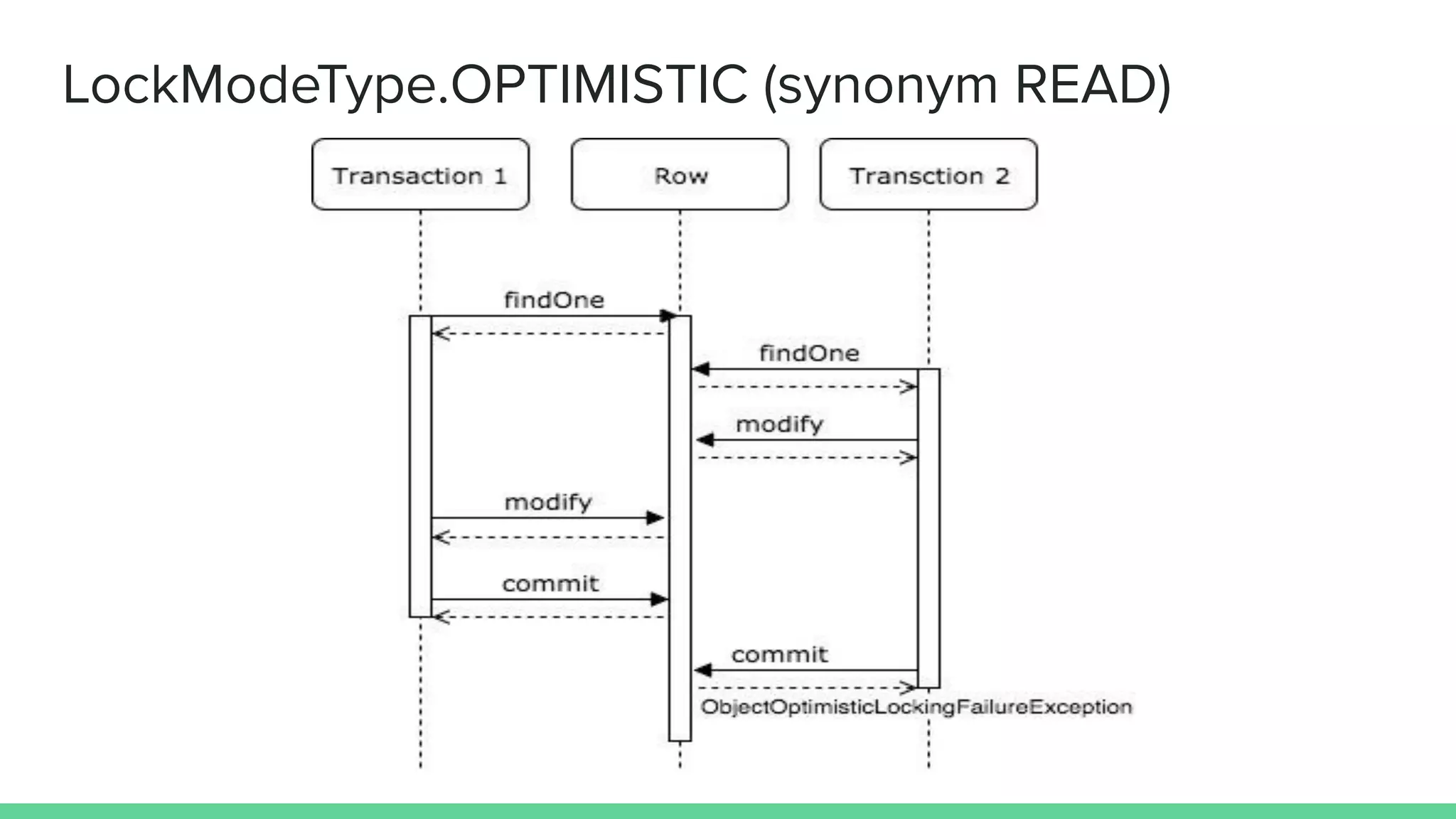

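- Optimistic locking adds a version field to the entity. Each update checks that the version has not changed since the row was read, and throws an exception if another transaction modified the row in the meantime. Pessimistic locking instead takes a database lock on the row, so that concurrent transactions are blocked from reading or writing it, depending on the lock mode.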
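
The version check can be illustrated without a database. The sketch below is a plain-Java, in-memory stand-in and not Hibernate code: `AccountRow` and `StaleVersionException` are hypothetical names invented for this example. In JPA the same role is played by a field annotated with `@Version` and by `OptimisticLockException`.

```java
final class OptimisticLockSketch {

    // Hypothetical exception standing in for an optimistic-lock failure.
    static final class StaleVersionException extends RuntimeException {
        StaleVersionException(long expected, long actual) {
            super("expected version " + expected + " but row is at version " + actual);
        }
    }

    // Hypothetical in-memory stand-in for a single row with a version column.
    static final class AccountRow {
        private long version = 0;
        private long balance;

        AccountRow(long balance) {
            this.balance = balance;
        }

        synchronized long version() {
            return version;
        }

        synchronized long balance() {
            return balance;
        }

        // Mimics: UPDATE account SET balance = ?, version = version + 1
        //         WHERE id = ? AND version = ?
        synchronized void update(long expectedVersion, long newBalance) {
            if (version != expectedVersion) {
                throw new StaleVersionException(expectedVersion, version);
            }
            balance = newBalance;
            version++;
        }
    }

    public static void main(String[] args) {
        AccountRow row = new AccountRow(100);

        // Two "transactions" read the row at the same version.
        long seenByA = row.version();
        long seenByB = row.version();

        // A commits first and bumps the version.
        row.update(seenByA, 150);

        // B still holds the old version, so its update is rejected.
        try {
            row.update(seenByB, 50);
        } catch (StaleVersionException e) {
            System.out.println("Transaction B rejected: " + e.getMessage());
        }
    }
}
```

The rejected transaction is not blocked at any point; it only learns about the conflict when it tries to write, which is why callers usually retry or report the failure.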

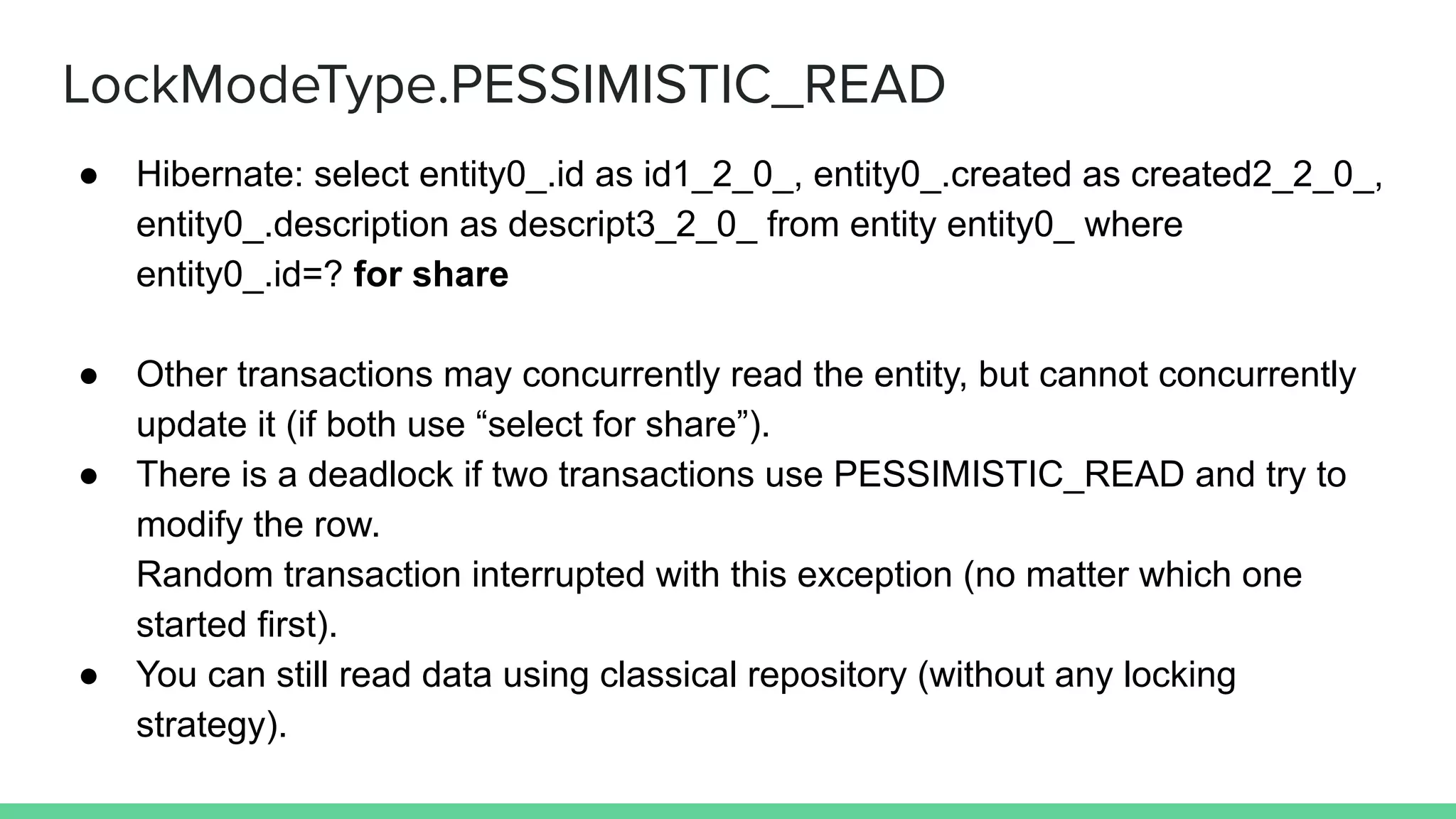

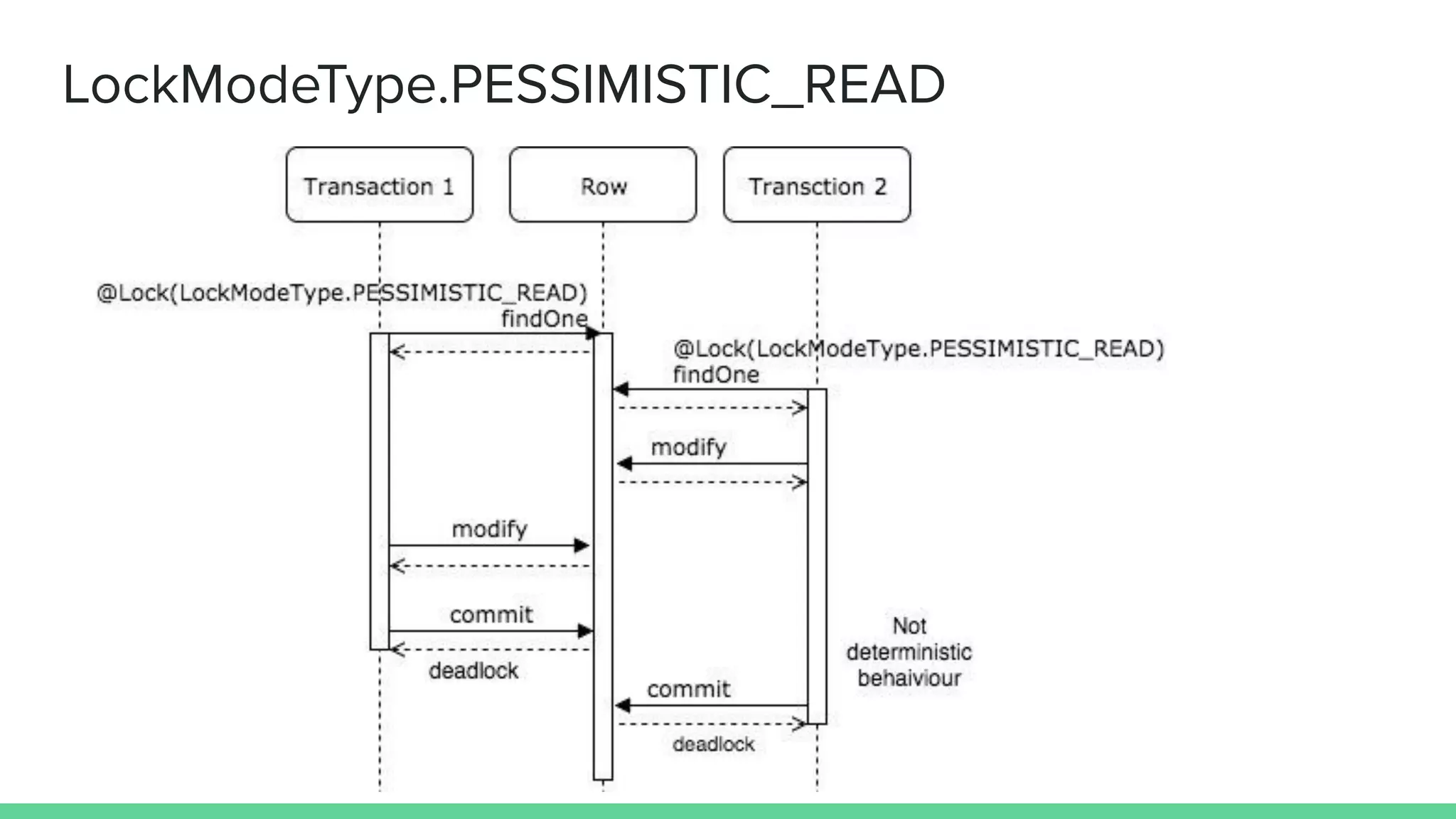

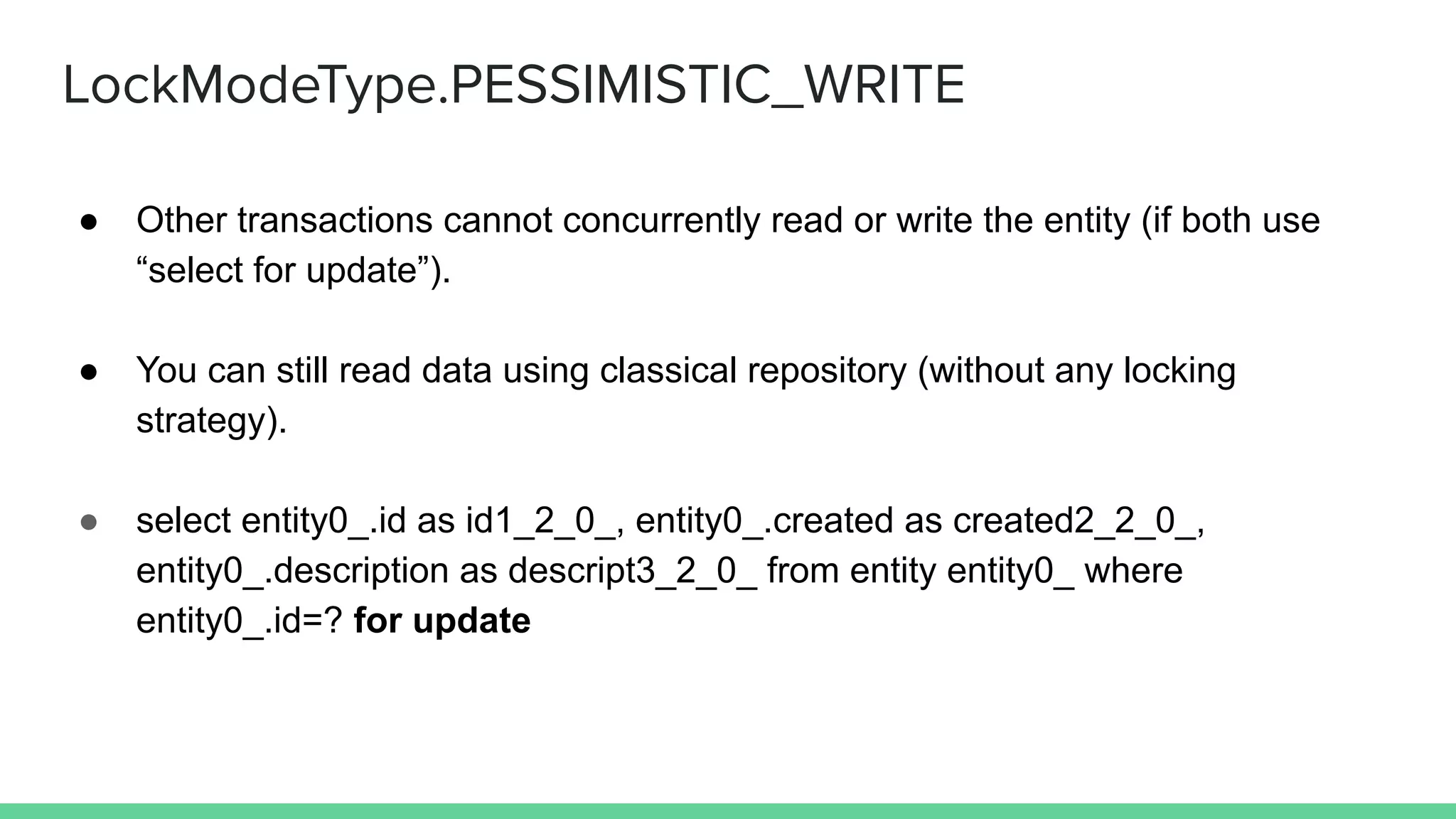

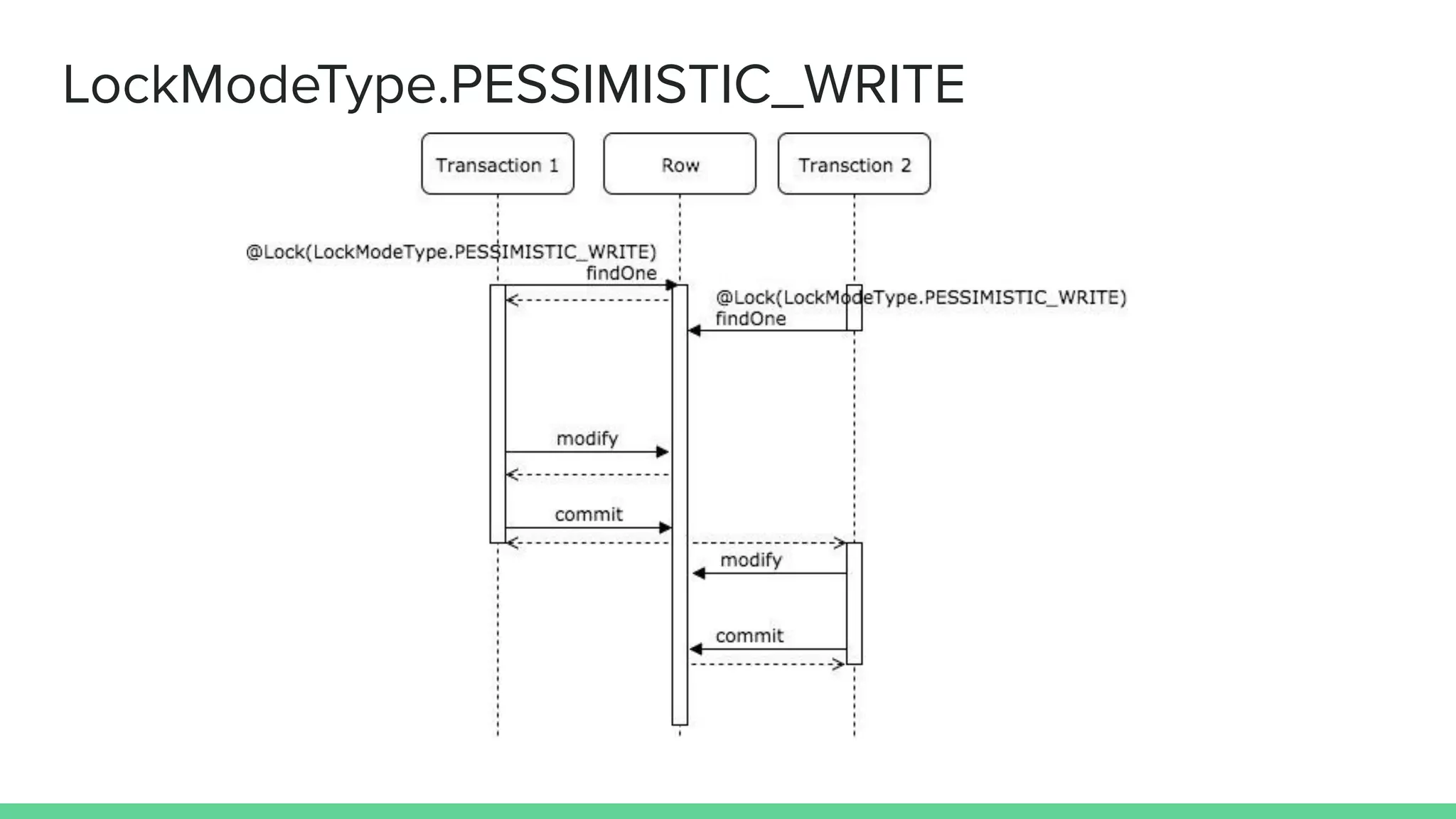

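- A pessimistic read lock is a shared lock: other transactions can still read the row, but cannot update or delete it until the lock is released. A pessimistic write lock is an exclusive lock: other transactions cannot update, delete, or lock the row. Whether plain, non-locking reads are also blocked depends on the database; under MVCC (for example, in PostgreSQL) they are not.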
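
The difference between shared and exclusive locks can be seen in an in-process analogy. The sketch below uses `java.util.concurrent.locks.ReentrantReadWriteLock` as a stand-in for a database row lock, with the read lock playing the role of `LockModeType.PESSIMISTIC_READ` and the write lock the role of `LockModeType.PESSIMISTIC_WRITE`. It is an assumption-laden illustration of lock compatibility, not how Hibernate or the database implements row locks; `RowLockSketch` and its methods are hypothetical names.

```java
import java.util.concurrent.atomic.AtomicBoolean;
import java.util.concurrent.locks.Lock;
import java.util.concurrent.locks.ReentrantReadWriteLock;

final class RowLockSketch {

    // Tries to take the lock from a separate thread (a second "transaction")
    // without waiting, and reports whether the attempt succeeded.
    static boolean otherTransactionCanLock(Lock lock) {
        AtomicBoolean acquired = new AtomicBoolean(false);
        Thread other = new Thread(() -> {
            if (lock.tryLock()) {
                acquired.set(true);
                lock.unlock();
            }
        });
        other.start();
        try {
            other.join();
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            throw new IllegalStateException("interrupted while waiting", e);
        }
        return acquired.get();
    }

    // Returns, in order: shared-while-shared, exclusive-while-shared,
    // shared-while-exclusive, exclusive-while-exclusive.
    static boolean[] compatibility() {
        ReentrantReadWriteLock row = new ReentrantReadWriteLock();
        boolean[] result = new boolean[4];

        row.readLock().lock(); // first transaction holds a shared lock
        try {
            result[0] = otherTransactionCanLock(row.readLock());
            result[1] = otherTransactionCanLock(row.writeLock());
        } finally {
            row.readLock().unlock();
        }

        row.writeLock().lock(); // first transaction holds an exclusive lock
        try {
            result[2] = otherTransactionCanLock(row.readLock());
            result[3] = otherTransactionCanLock(row.writeLock());
        } finally {
            row.writeLock().unlock();
        }
        return result;
    }

    public static void main(String[] args) {
        boolean[] r = compatibility();
        System.out.println("holding shared,    other asks shared:    " + r[0]);
        System.out.println("holding shared,    other asks exclusive: " + r[1]);
        System.out.println("holding exclusive, other asks shared:    " + r[2]);
        System.out.println("holding exclusive, other asks exclusive: " + r[3]);
    }
}
```

Only the shared-plus-shared combination is compatible. Note that the analogy covers locking reads only; it says nothing about plain reads that take no lock at all.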

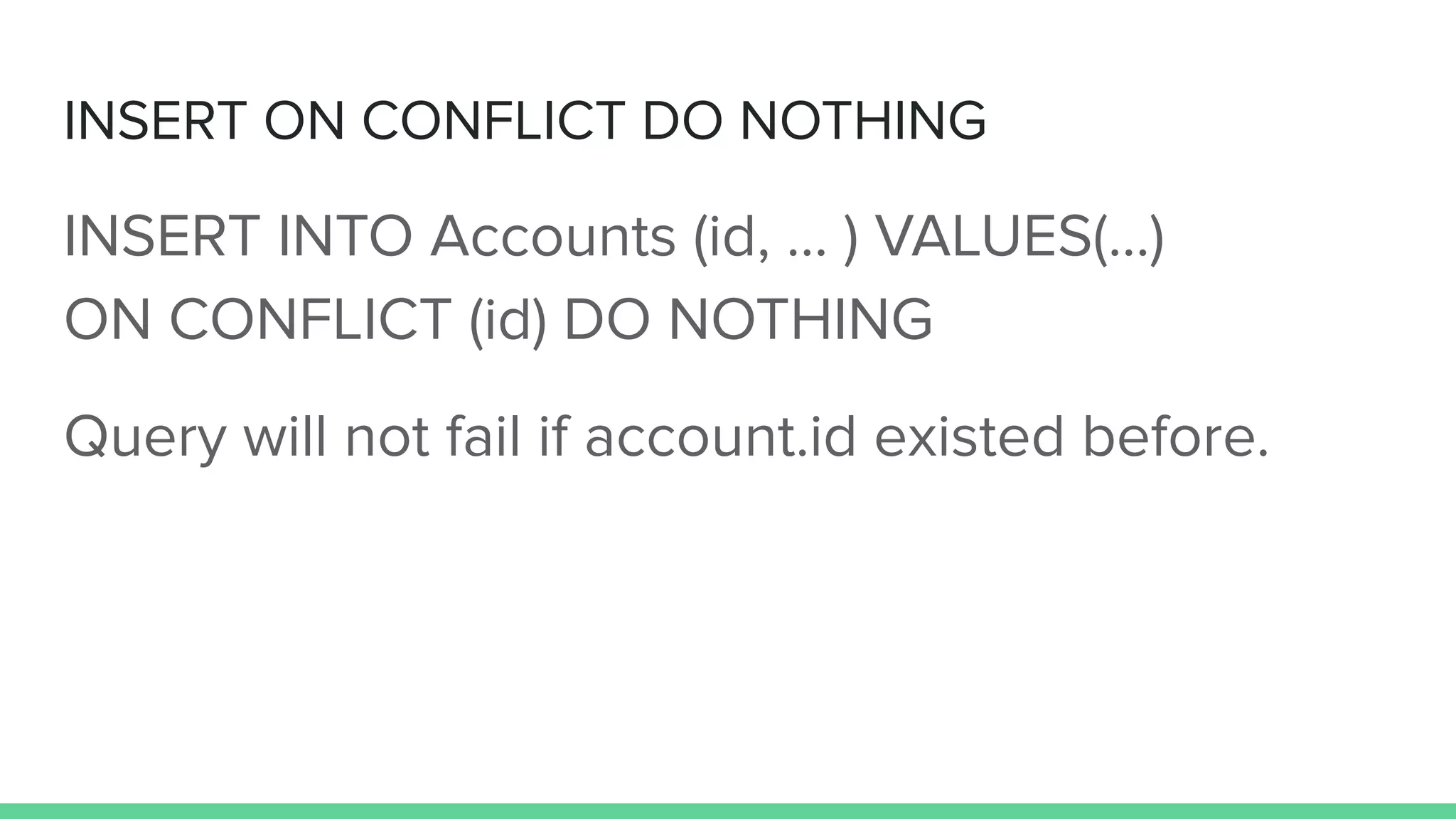

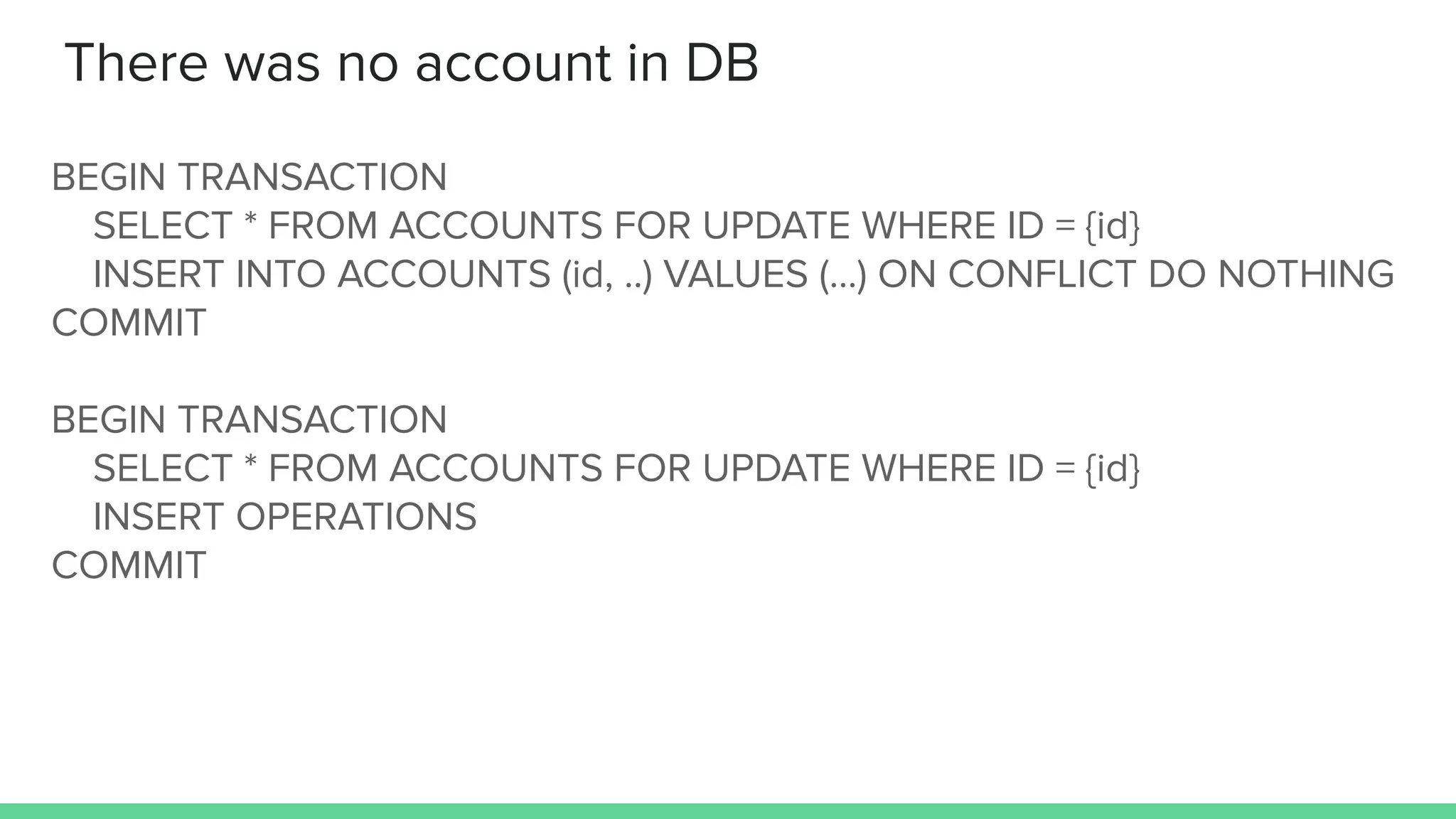

- When concurrent requests may or may not find an existing account, take a pessimistic write lock on the account row with `SELECT ... FOR UPDATE`. The lock forces the requests to run one after another, which prevents conflicts when they insert related data.

- Other options include the serializable isolation level, read committed isolation combined with table-level locking, or an external locking mechanism such as Redis.
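
The effect of locking the account row before inserting related data can be sketched in memory. The example below is a rough analogy under stated assumptions, not database code: a per-account `ReentrantLock` stands in for the row lock taken by `SELECT ... FOR UPDATE`, and the class name, the account id `42`, and the `"welcome-bonus"` row are all hypothetical.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.concurrent.ConcurrentHashMap;
import java.util.concurrent.CountDownLatch;
import java.util.concurrent.locks.ReentrantLock;

final class AccountLockSketch {

    // One exclusive lock per account id; stands in for the row lock.
    private final ConcurrentHashMap<Long, ReentrantLock> rowLocks = new ConcurrentHashMap<>();
    // Hypothetical child table: account id -> related rows.
    private final ConcurrentHashMap<Long, List<String>> relatedRows = new ConcurrentHashMap<>();

    // Holds the account's lock for the whole check-then-insert sequence,
    // so two requests cannot both decide that the row is missing.
    boolean insertIfAbsent(long accountId, String row) {
        ReentrantLock lock = rowLocks.computeIfAbsent(accountId, id -> new ReentrantLock());
        lock.lock();
        try {
            List<String> rows = relatedRows.computeIfAbsent(accountId, id -> new ArrayList<>());
            if (rows.contains(row)) {
                return false;
            }
            rows.add(row);
            return true;
        } finally {
            lock.unlock();
        }
    }

    int relatedRowCount(long accountId) {
        ReentrantLock lock = rowLocks.computeIfAbsent(accountId, id -> new ReentrantLock());
        lock.lock();
        try {
            List<String> rows = relatedRows.get(accountId);
            return rows == null ? 0 : rows.size();
        } finally {
            lock.unlock();
        }
    }

    // Fires the given number of simultaneous requests at one account
    // and returns how many related rows exist afterwards.
    static int runConcurrentRequests(int requests) {
        AccountLockSketch db = new AccountLockSketch();
        CountDownLatch start = new CountDownLatch(1);
        List<Thread> threads = new ArrayList<>();
        for (int i = 0; i < requests; i++) {
            Thread t = new Thread(() -> {
                try {
                    start.await();
                } catch (InterruptedException e) {
                    Thread.currentThread().interrupt();
                    return;
                }
                db.insertIfAbsent(42L, "welcome-bonus");
            });
            threads.add(t);
            t.start();
        }
        start.countDown();
        for (Thread t : threads) {
            try {
                t.join();
            } catch (InterruptedException e) {
                Thread.currentThread().interrupt();
                throw new IllegalStateException("interrupted while waiting", e);
            }
        }
        return db.relatedRowCount(42L);
    }

    public static void main(String[] args) {
        System.out.println("related rows after 16 requests: " + runConcurrentRequests(16));
    }
}
```

In a real application the lock would be held by the database for the duration of the transaction, so the check and the insert must run inside the same transaction that issued the locking query.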