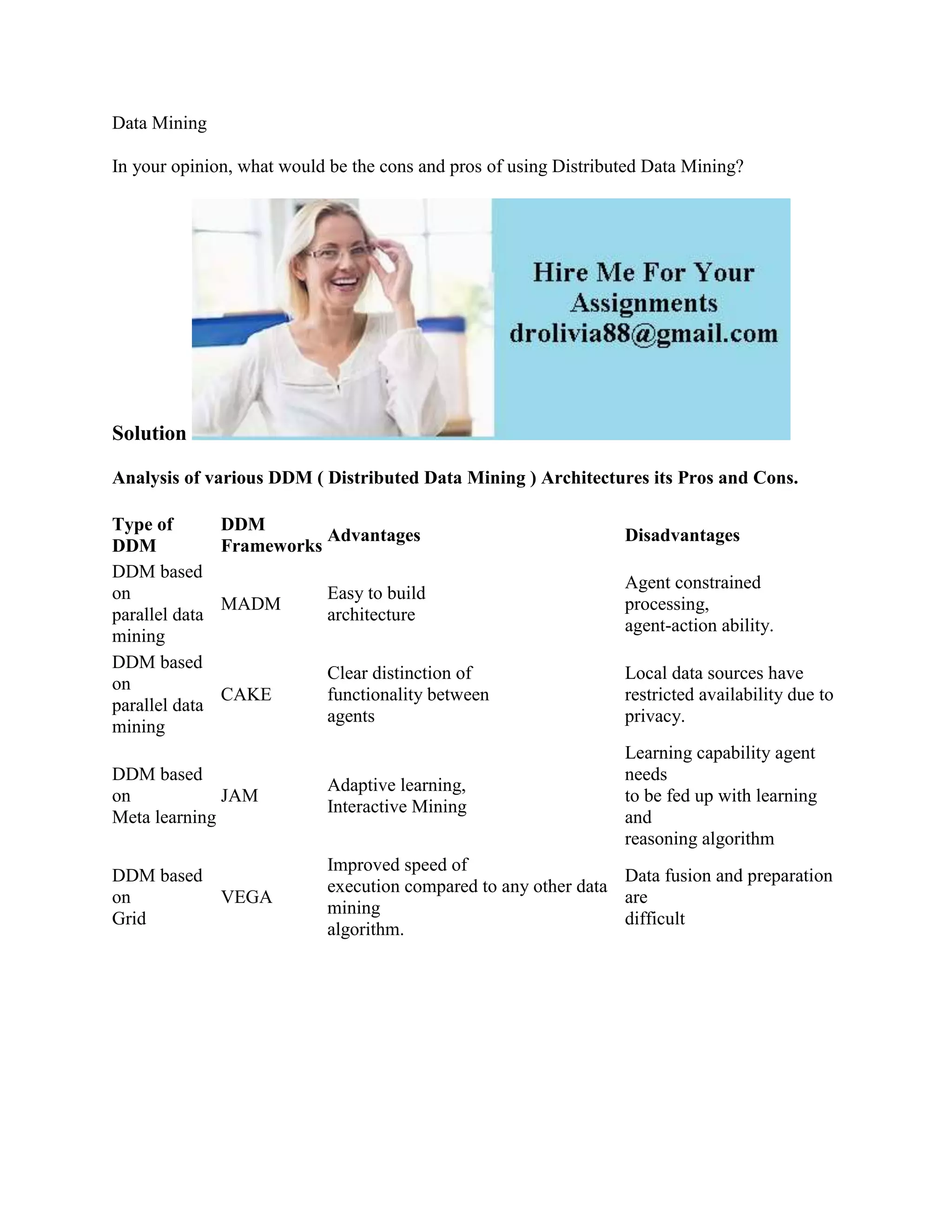

The document discusses the pros and cons of distributed data mining (DDM) architectures and highlights several types, including parallel data mining and meta-learning. It identifies advantages such as faster execution and ease of building the architecture, and notes disadvantages related to privacy and data preparation. Overall, it emphasizes that the architectural framework needs careful consideration in DDM.