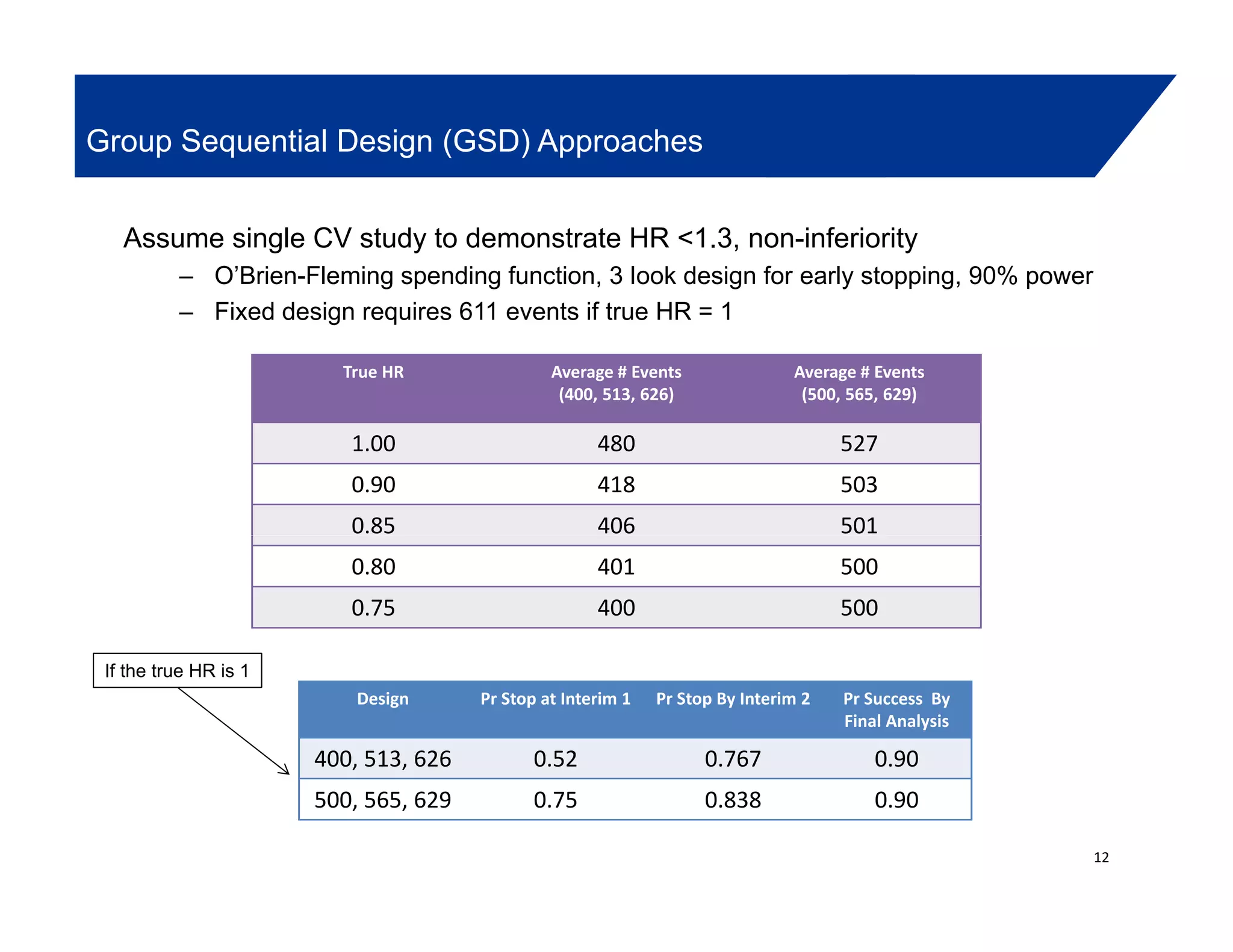

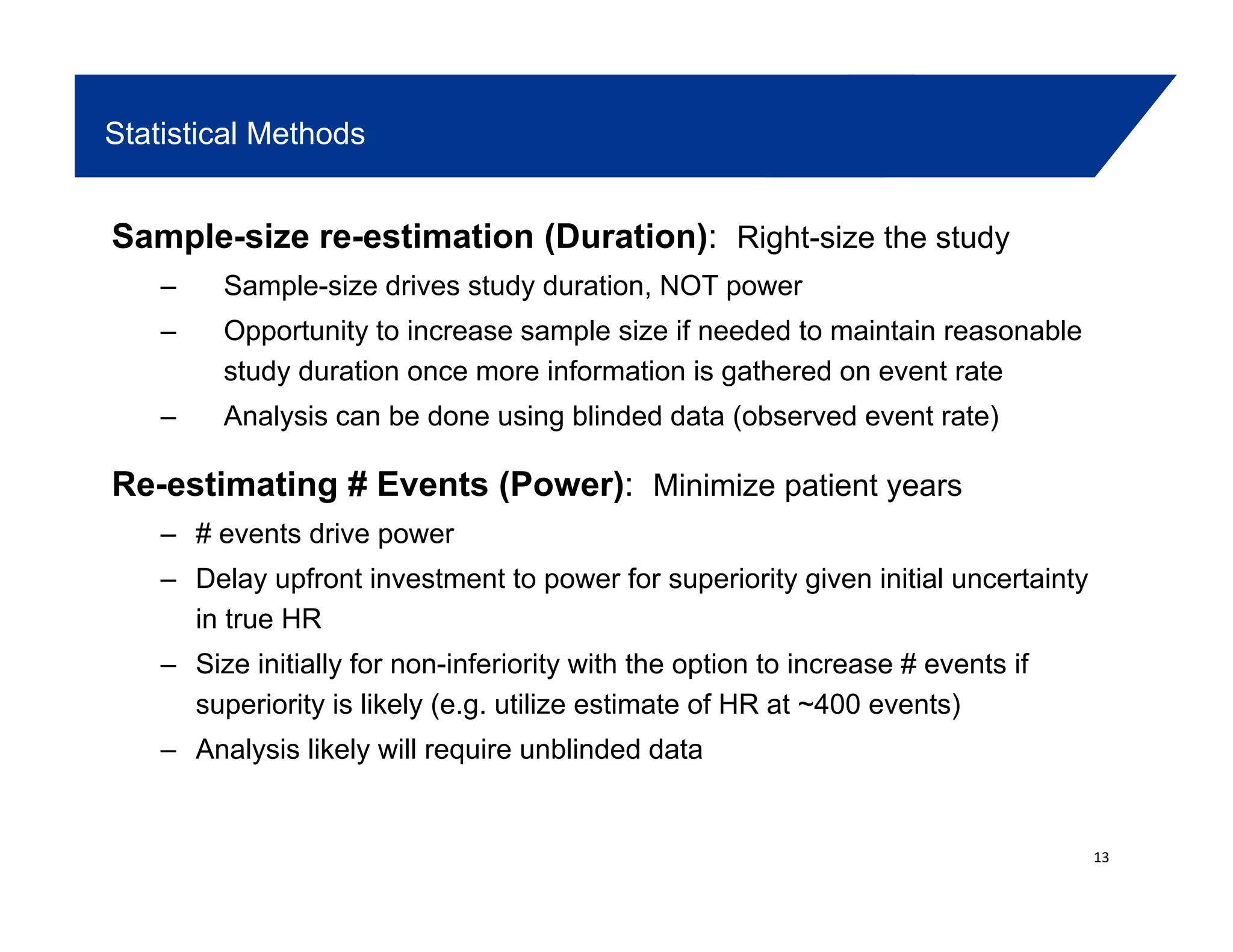

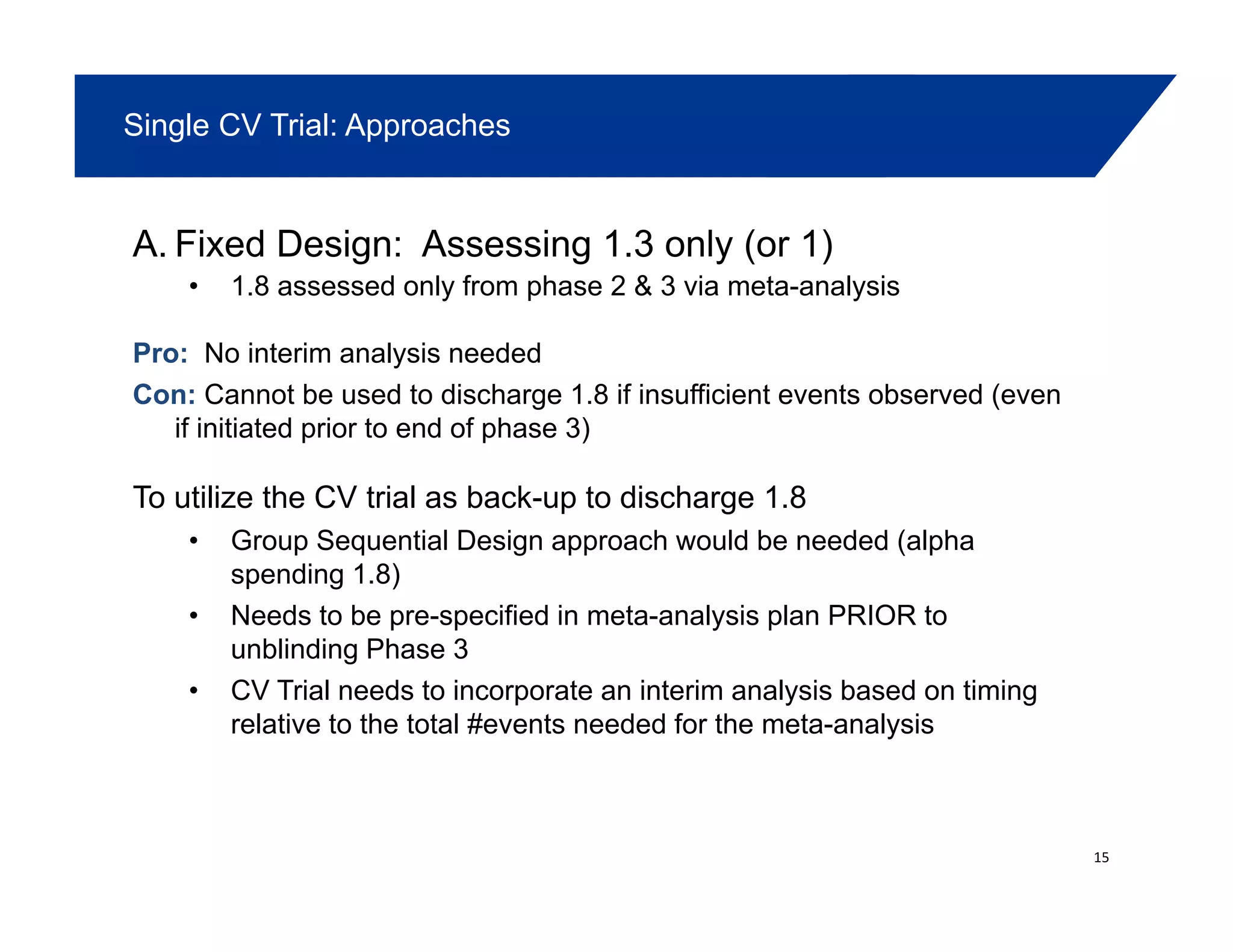

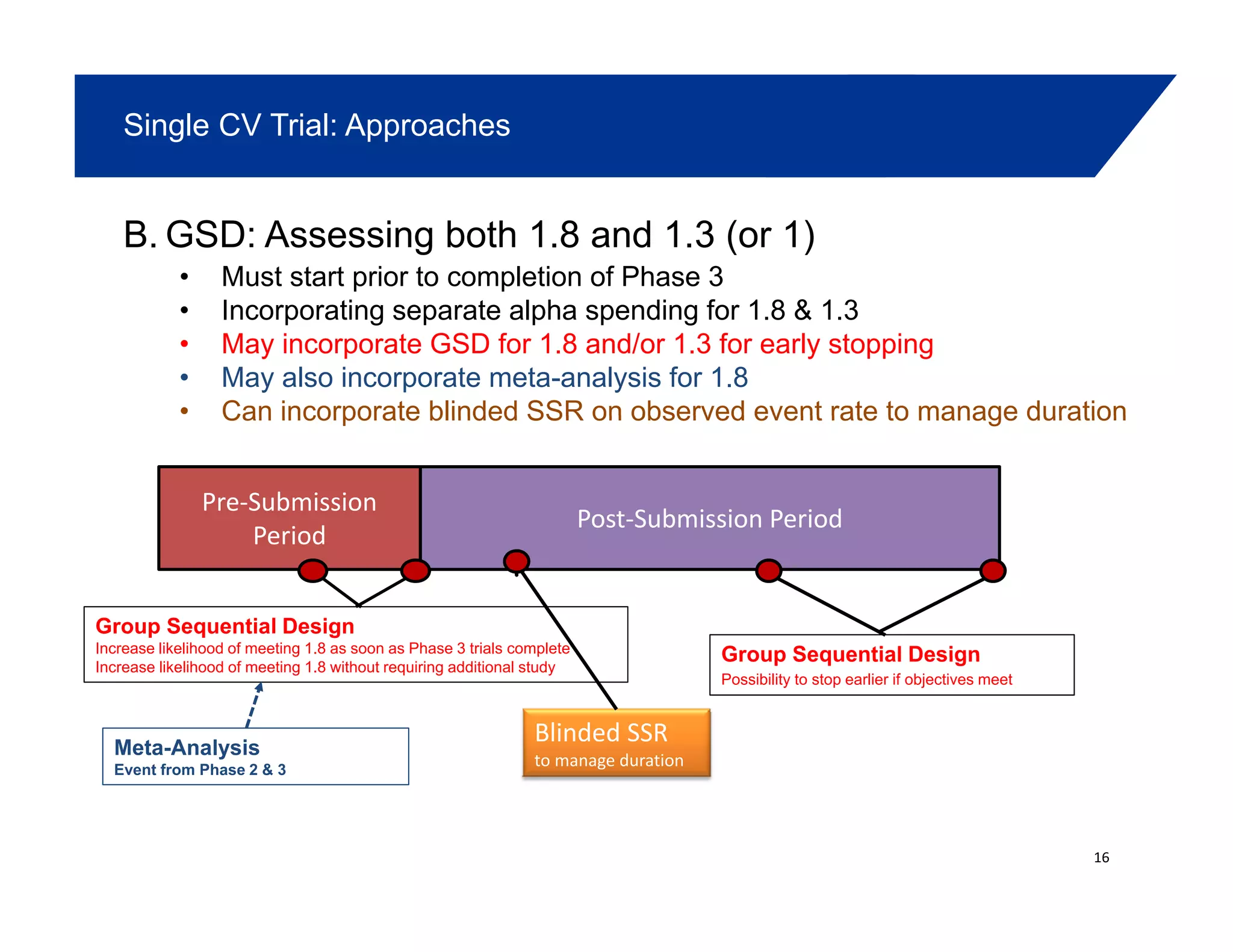

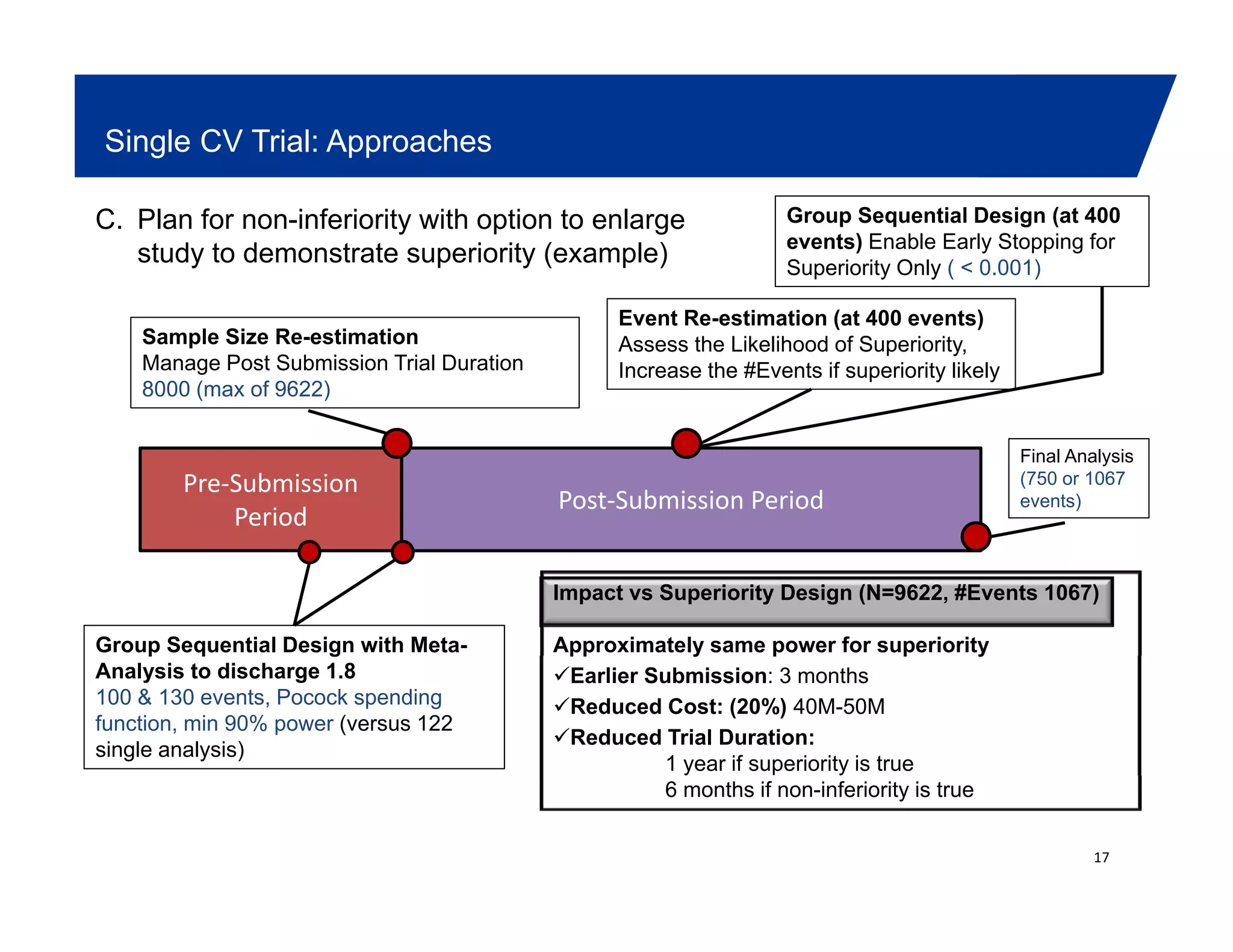

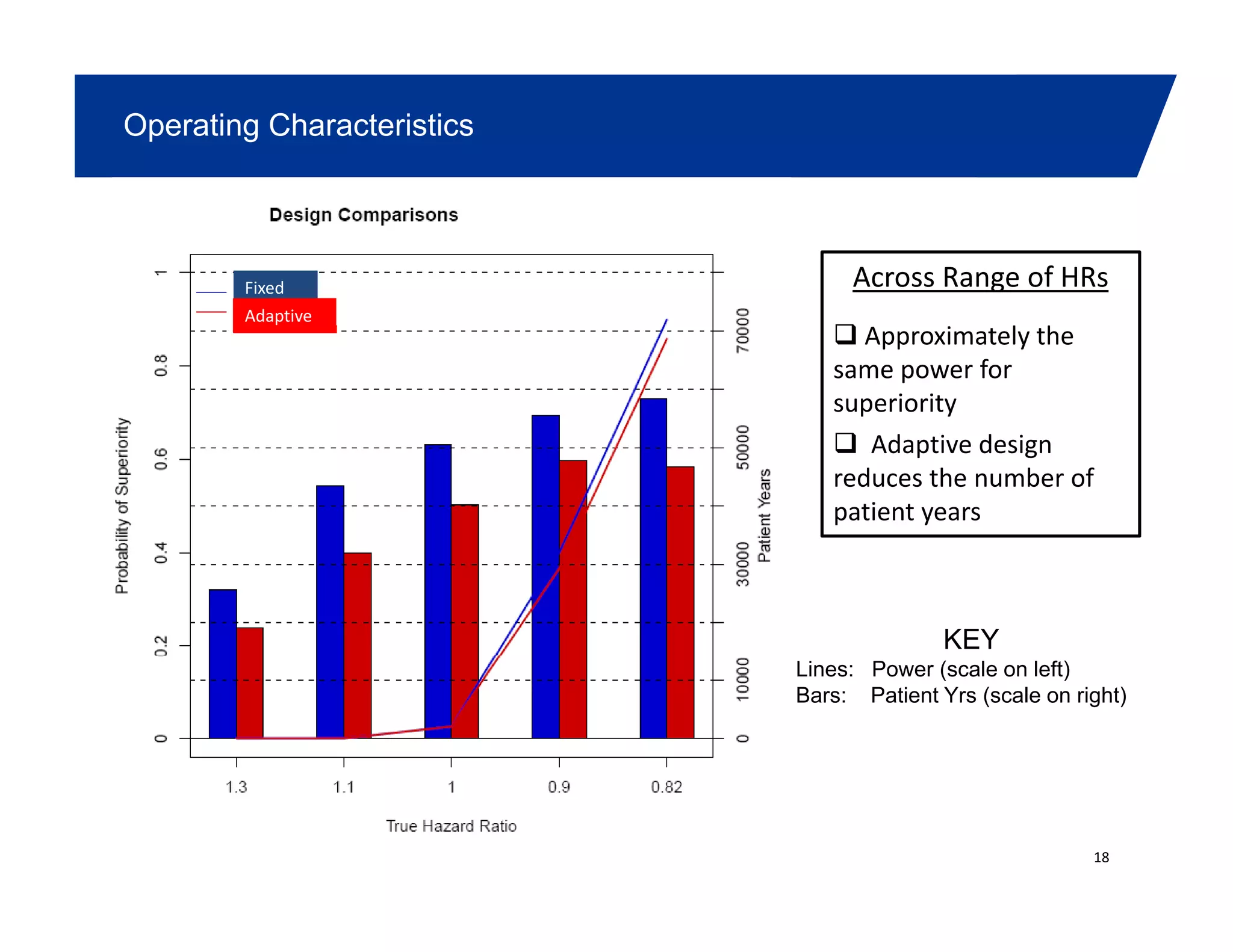

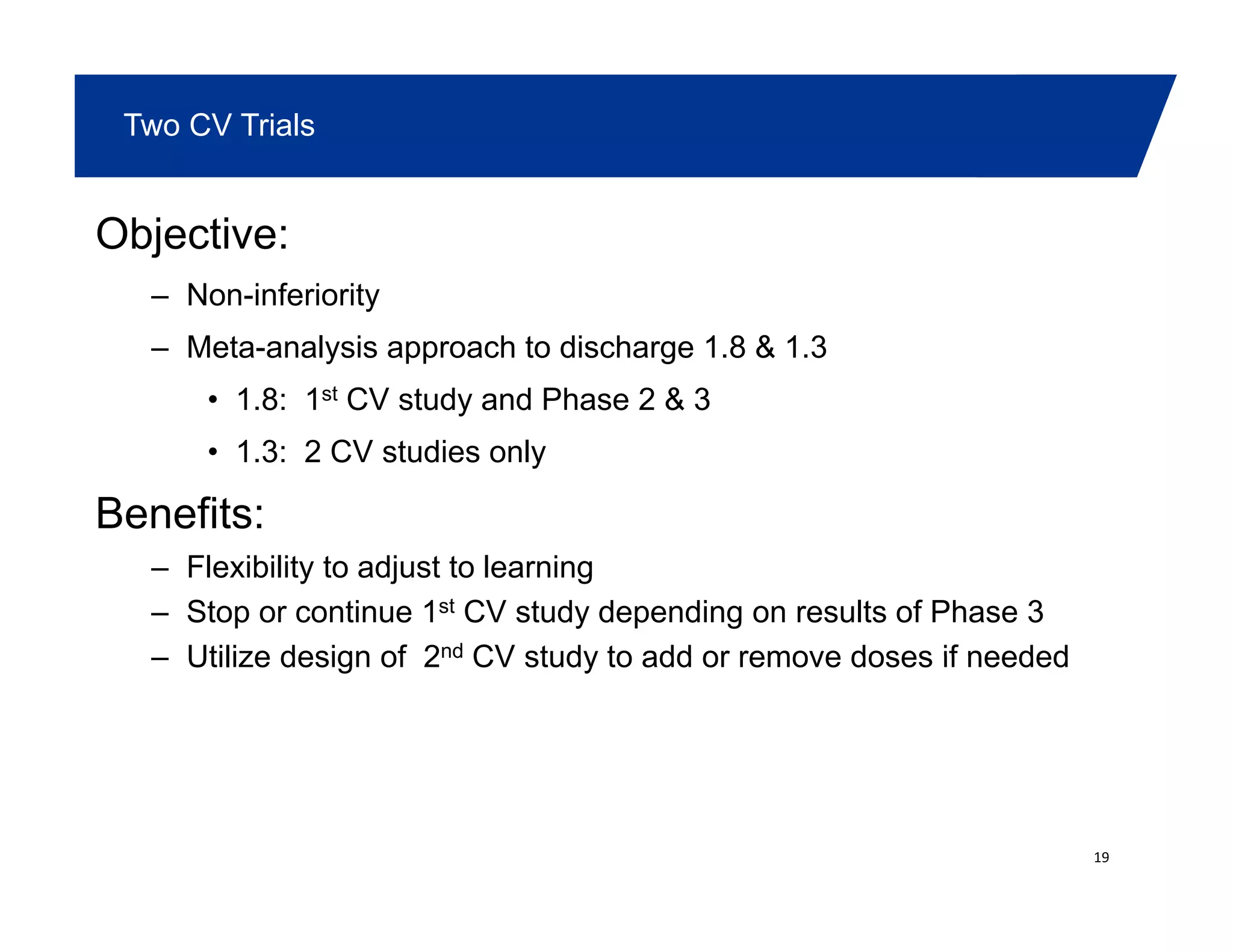

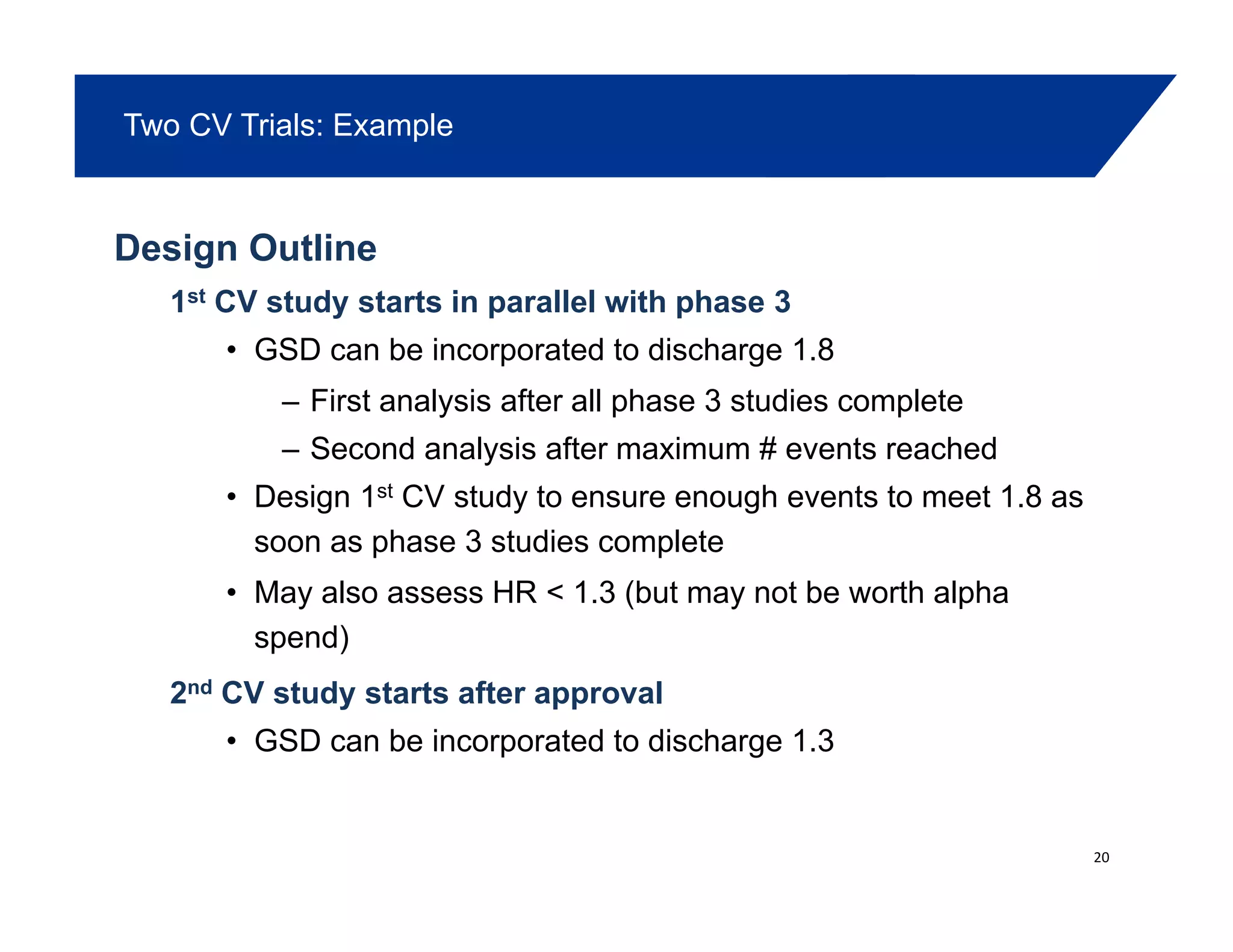

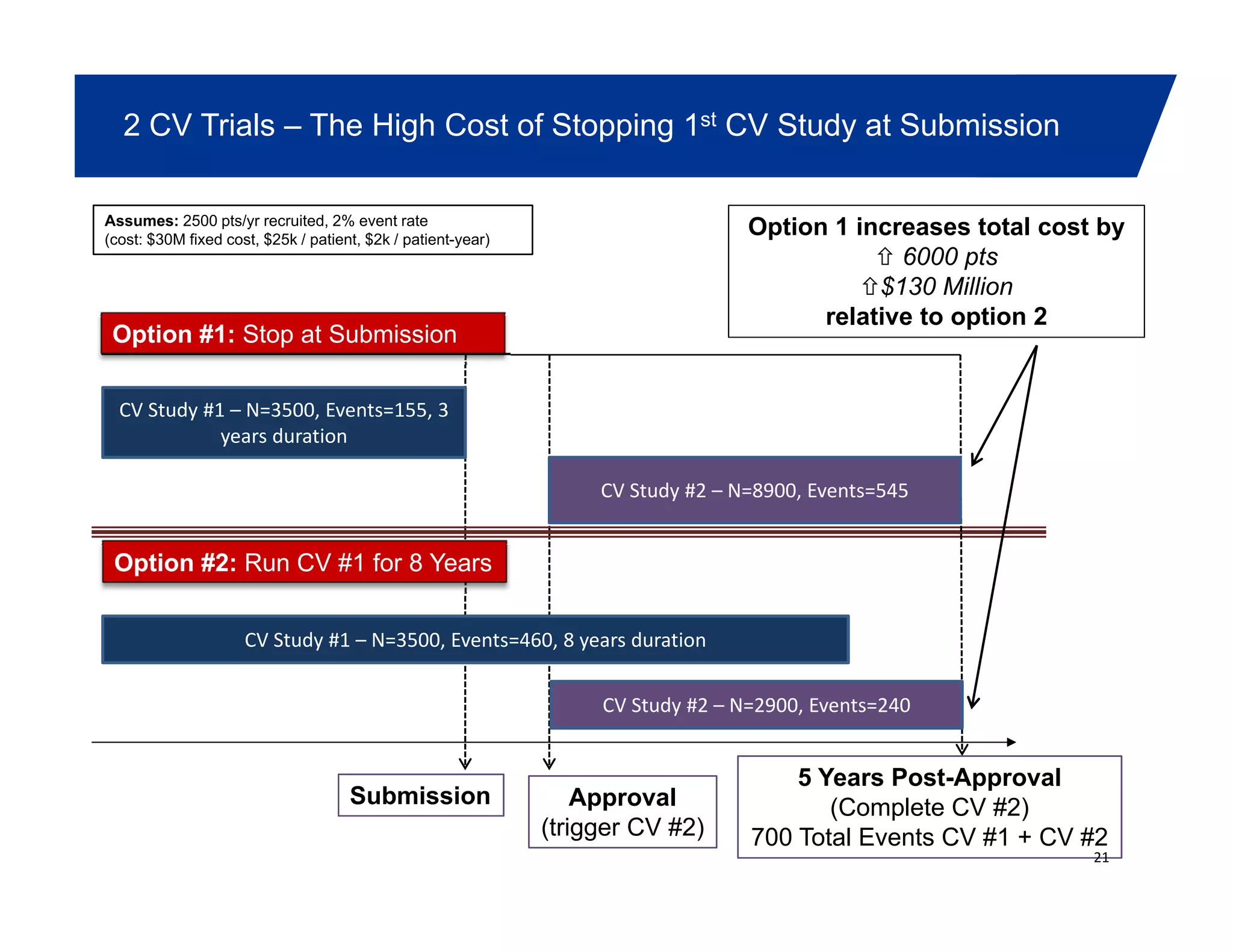

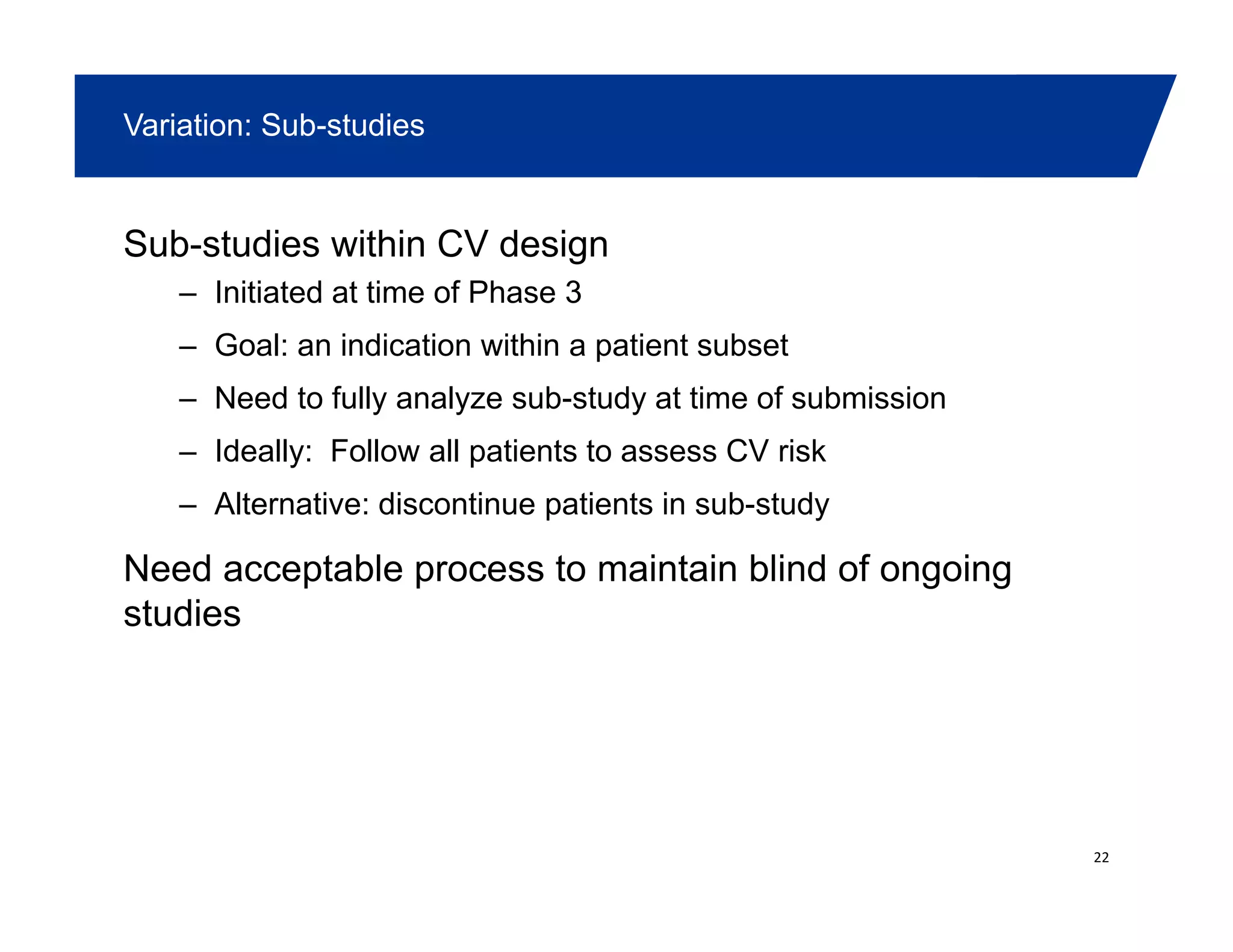

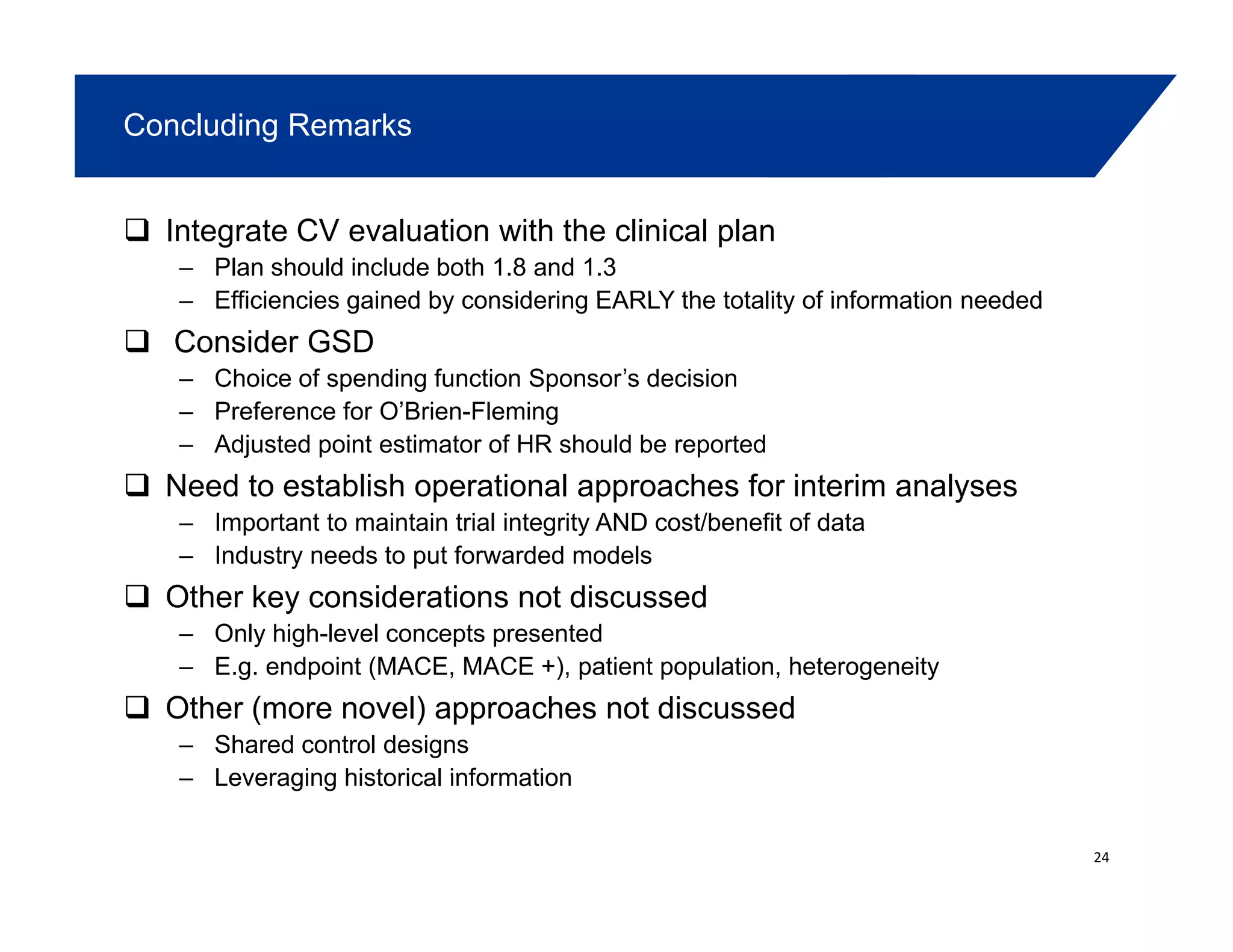

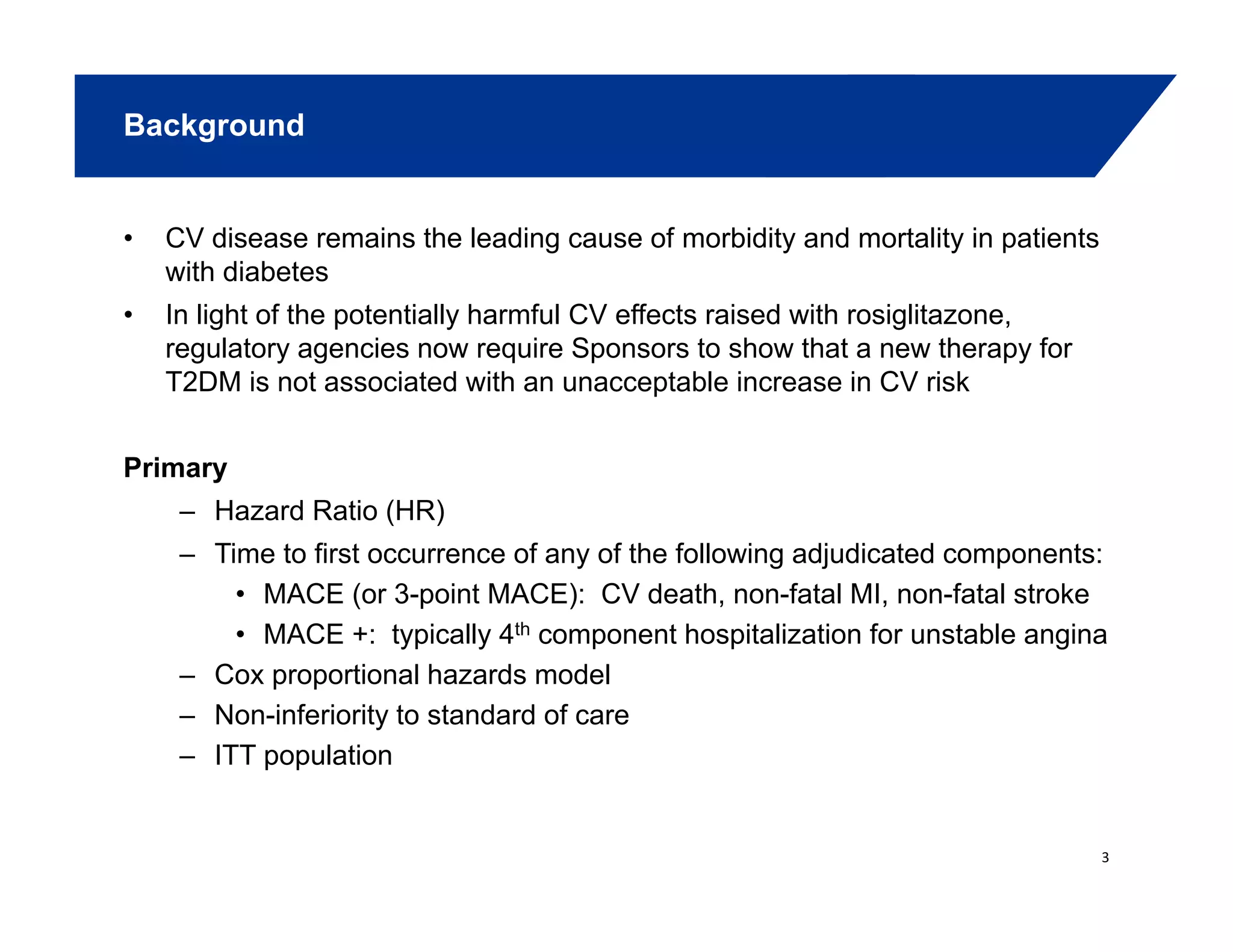

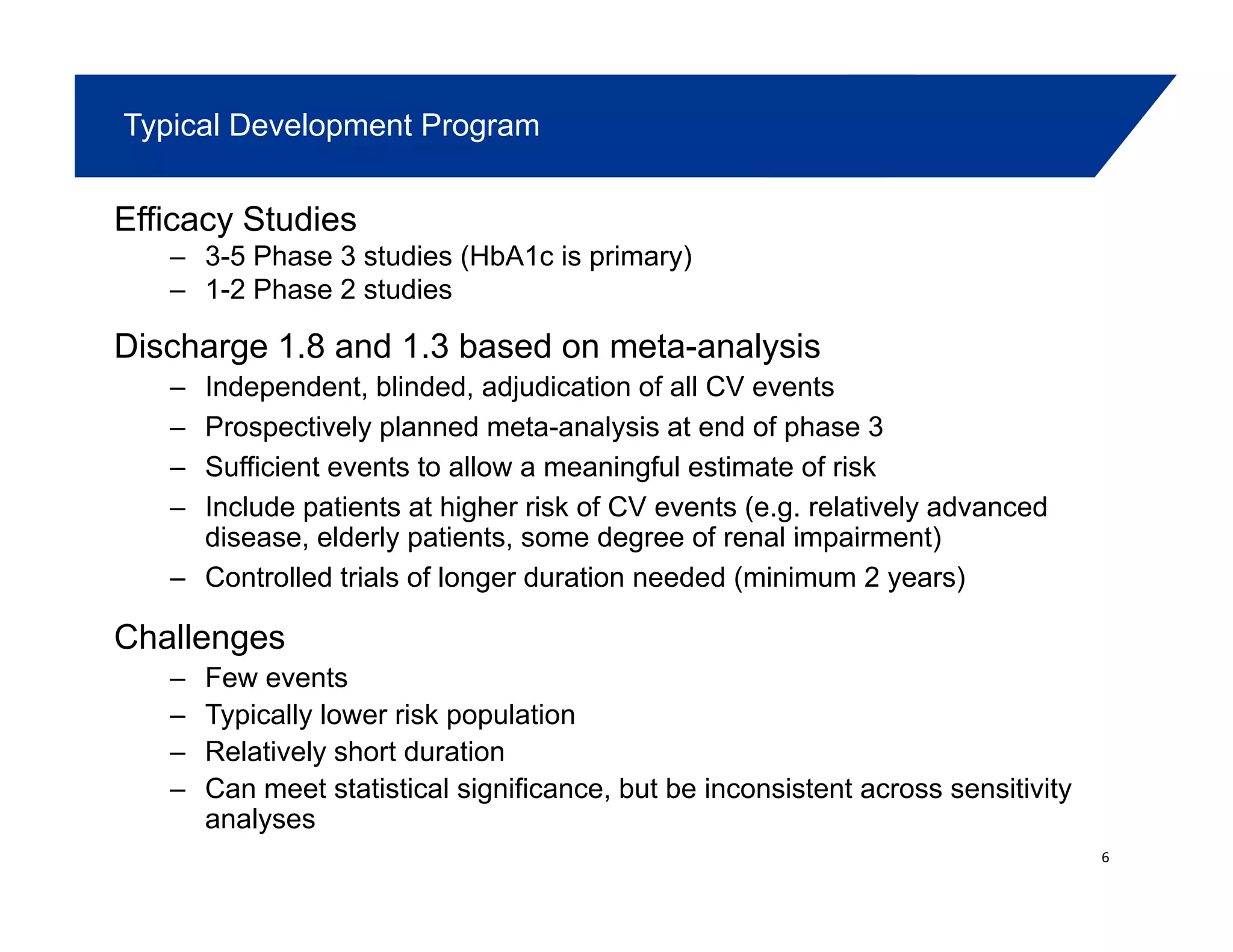

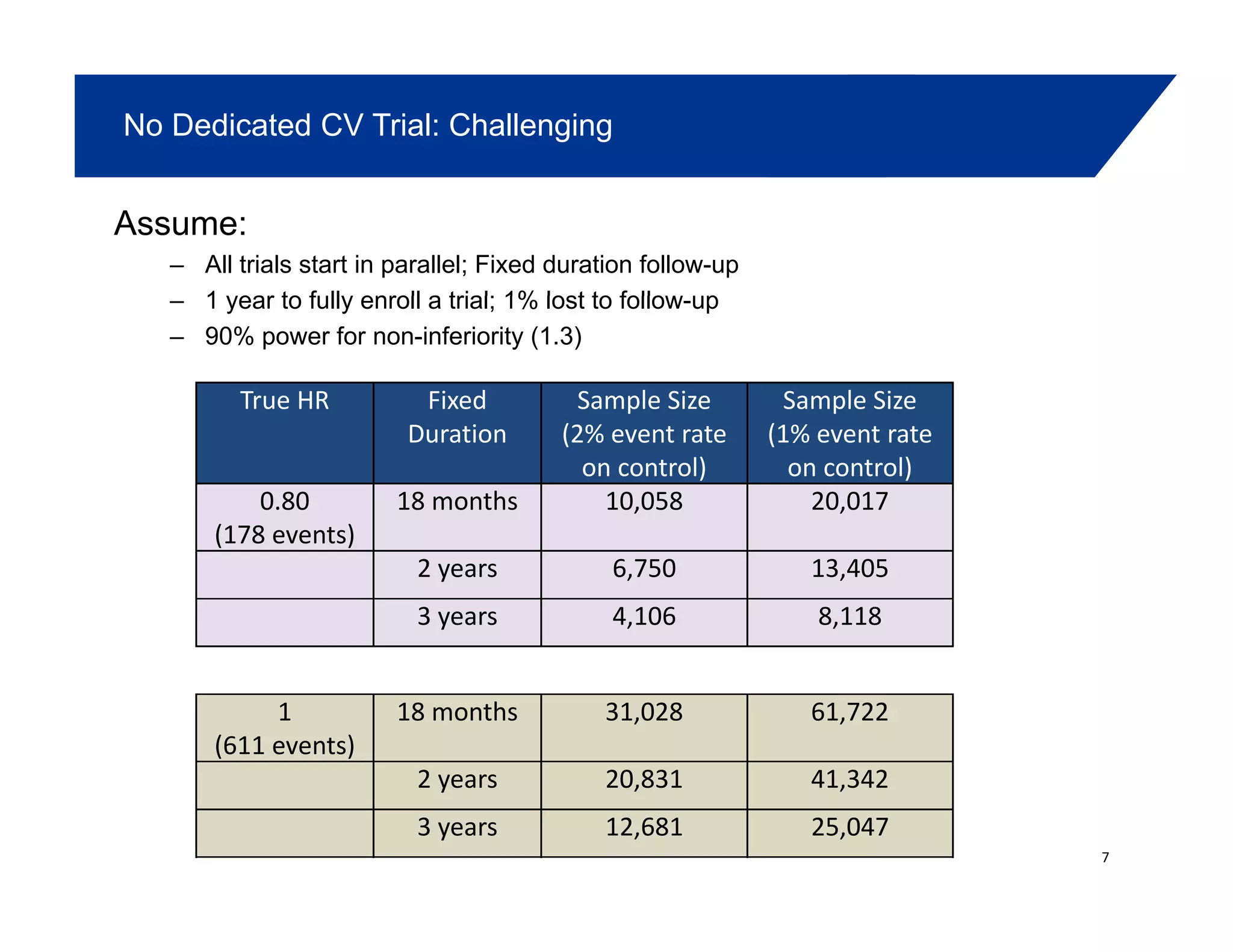

This document summarizes a presentation on approaches for evaluating cardiovascular (CV) risk in diabetes drug development. It discusses using meta-analysis and group sequential designs to integrate cardiovascular evaluation into clinical trials and potentially reduce patient exposure. It also compares options such as conducting a single large outcome study, running two separate cardiovascular outcome trials, or incorporating sub-studies into a cardiovascular outcome trial. The presentation emphasizes planning for both non-inferiority and superiority assessments, and considering operational aspects such as maintaining trial blinding during interim analyses.
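The exposure argument rests on event counts. The number of CV events needed to rule out a hazard-ratio margin grows roughly with 1/(log margin)², so a loose margin needs far fewer events than a tight one. The sketch below illustrates this with Schoenfeld's approximation, which is our choice and not necessarily the presenter's method. It assumes a true HR of 1, 1:1 randomization, one-sided α = 0.025 (equivalent to requiring the upper bound of a two-sided 95% CI to fall below the margin), and 90% power. `schoenfeld_events` is a hypothetical helper name.

```python
import math
from statistics import NormalDist


def schoenfeld_events(margin, alpha_one_sided=0.025, power=0.90,
                      true_hr=1.0, alloc=0.5):
    """Approximate number of CV events needed so that the upper bound of the
    two-sided (1 - 2*alpha) CI for the hazard ratio falls below `margin`.

    Uses Schoenfeld's log-rank approximation:
        D = (z_alpha + z_beta)^2 / (p * (1 - p) * (log(margin) - log(true_hr))^2)
    where p is the fraction of patients randomized to the test drug.
    """
    z_alpha = NormalDist().inv_cdf(1 - alpha_one_sided)  # about 1.96
    z_beta = NormalDist().inv_cdf(power)                  # about 1.28 for 90%
    effect = math.log(margin) - math.log(true_hr)
    events = (z_alpha + z_beta) ** 2 / (alloc * (1 - alloc) * effect ** 2)
    return math.ceil(events)


for margin in (1.8, 1.3):
    print(f"HR margin {margin}: ~{schoenfeld_events(margin)} events")
```

Under these assumptions, ruling out 1.8 needs about 122 events versus about 611 for 1.3. This illustrates why an early 1.8 assessment from pooled phase 2/3 data is feasible with much less exposure than the 1.3 assessment.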

![Statistical Methods](https://image.slidesharecdn.com/d1-designandanalysisapproachestoevaluatecardiovascularrisk-2012eugm-150220132855-conversion-gate01/75/D1-design-and-analysis-approaches-to-evaluate-cardiovascular-risk-2012-eugm-10-2048.jpg)

Statistical Methods

Meta-Analysis: Reduce patient exposure by using events efficiently

– Acceptable for ruling out 1.8 (upper bound of the 95% CI for the CV hazard ratio; phase 2/3 trials & possibly the CV trial)

– Acceptable for ruling out 1.3 (CV trials & possibly phase 2/3 trials)

– Acceptable for 1, i.e. superiority (CV trials)?

• Does superiority need to be demonstrated in a single CV outcomes trial?

• Gated hypothesis testing within the meta-analysis is typical: first test HR < 1.3, then test HR < 1

• If an interim analysis is used to assess 1.8:

– An acceptable process is needed to maintain the blind of ongoing studies

– Completely blind the sponsor (CRO or some other body)

– Blind the study team, but not the sponsor (e.g., a team internal to the sponsor but firewalled from the study team; an internal steering committee with a CRO)

• What will be published in the SBA (Summary Basis of Approval)? [Transparency / Data Confidentiality]
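The slide's meta-analysis and gated-testing points can be sketched as follows. This assumes fixed-effect inverse-variance pooling of per-trial log hazard ratios. A random-effects model, or a stratified Cox model on pooled patient-level data, are common alternatives, and the presentation does not say which it uses. The fixed-sequence gate tests HR < 1.3 first and claims HR < 1 only if the first step succeeds. The function names and the three trials' HRs and standard errors are hypothetical, made-up inputs.

```python
import math
from statistics import NormalDist


def pooled_hazard_ratio(log_hrs, std_errs, level=0.95):
    """Fixed-effect (inverse-variance) pooling of per-study log hazard ratios.

    Returns (pooled HR, lower CI bound, upper CI bound)."""
    weights = [1.0 / se ** 2 for se in std_errs]
    total = sum(weights)
    pooled_log = sum(w * b for w, b in zip(weights, log_hrs)) / total
    pooled_se = math.sqrt(1.0 / total)
    z = NormalDist().inv_cdf(1 - (1 - level) / 2)  # about 1.96 for a 95% CI
    return (math.exp(pooled_log),
            math.exp(pooled_log - z * pooled_se),
            math.exp(pooled_log + z * pooled_se))


def gated_test(steps):
    """Fixed-sequence (gated) testing.

    `steps` is an ordered list of (label, ci_upper, margin). A hypothesis is
    claimed only if its CI upper bound is below its margin AND every earlier
    hypothesis in the sequence was also claimed; once a step fails, all
    later steps are reported as not claimed."""
    results = {}
    gate_open = True
    for label, ci_upper, margin in steps:
        results[label] = gate_open and ci_upper < margin
        gate_open = results[label]
    return results


# Hypothetical, made-up inputs for three trials: HRs 0.90, 1.05, 0.95.
log_hrs = [math.log(0.90), math.log(1.05), math.log(0.95)]
std_errs = [0.20, 0.25, 0.12]
hr, lo, hi = pooled_hazard_ratio(log_hrs, std_errs)
print(f"pooled HR {hr:.2f} (95% CI {lo:.2f} to {hi:.2f})")
print(gated_test([("non-inferiority, HR < 1.3", hi, 1.3),
                  ("superiority, HR < 1", hi, 1.0)]))
```

Each step is tested at the full α only after every earlier step succeeds, so the fixed sequence controls the familywise error rate without splitting α. In this sketch both steps read the same pooled CI. In practice the superiority step might instead rest on a single CV outcomes trial, which the slide leaves as an open question.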