D 17


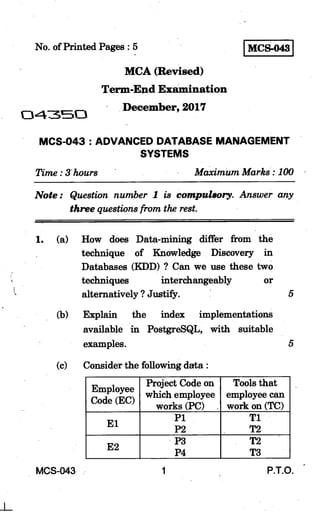

This document outlines exam questions for an Advanced Database Management Systems exam. It covers topics such as data mining vs knowledge discovery in databases, index implementations in PostgreSQL, database normalization, granularity and security/performance, semantic databases, shadow paging, clustering vs classification, database queries, views, XML documents, data dictionaries, multi-version schemes, and the Apriori algorithm for association rule mining. The exam questions require students to define concepts, provide examples, and demonstrate techniques like database normalization and joining normalized relations.


Recommended

QUERY INVERSION TO FIND DATA PROVENANCE

Data volumes grow day by day, and most of this data is stored in a database after manual transformations and derivations. Scientists use data-intensive applications to study and understand the behaviour of complex systems. In such an application, a scientific model consumes raw data products to produce new data products, and the data is collected from various sources (physical, geological, environmental, chemical, biological, and so on). It is therefore important to be able to trace an output data product back to its source values when that output appears to have an unexpected value. Data provenance helps scientists investigate the origin of an unexpected value. In this paper, our aim is to find the reason behind an unexpected value in a database using query inversion, and we propose some hypotheses for constructing an inverse query for complex aggregation functions and multiple-relationship (join, set operation) functions.

EFFICIENT SCHEMA BASED KEYWORD SEARCH IN RELATIONAL DATABASES

Keyword search in relational databases allows users to search for information without knowing the database schema or using Structured Query Language (SQL). In this paper, we address the problem of generating and evaluating candidate networks. In candidate network generation, overhead is caused by the number of joining tuples increasing with the size of the minimal candidate network. To reduce this overhead, we propose candidate network generation algorithms that generate a minimum number of joining tuples according to the maximum number of tuple sets. We first generate a set of joining tuples, the candidate networks (CNs). It is difficult to obtain an optimal query processing plan while generating a number of joins. We also develop a dynamic CN evaluation algorithm (D_CNEval) that generates connected tuple trees (CTTs) by reducing the size of intermediate joining results. The proposed algorithms are evaluated on the IMDB and DBLP datasets and compared with existing algorithms.

CONTEXT-AWARE CLUSTERING USING GLOVE AND K-MEANS

In this paper we propose a novel method to cluster categorical data while retaining their context. Typically, clustering is performed on numerical data. However, it is often useful to cluster categorical data as well, especially when dealing with data in real-world contexts. Several methods exist which can cluster categorical data, but our approach is unique in that we use recent text-processing and machine learning advancements like GloVe and t-SNE to develop a context-aware clustering approach (using pre-trained word embeddings). We encode words or categorical data into numerical, context-aware vectors that we use to cluster the data points using common clustering algorithms like K-means.

Enhancing the labelling technique of

Clustering the results of a search helps the user get an overview of the information returned. In this paper, we treat the clustering task as cataloguing the search results. By catalogue we mean a structured label list that helps the user understand the labels and search results. Cluster labelling is crucial because meaningless or confusing labels may mislead users into checking the wrong clusters for the query and losing time. Additionally, labels should accurately reflect the contents of the documents within the cluster. To label clusters effectively, a new cluster labelling method is introduced. More emphasis is given to producing comprehensible and accurate cluster labels, in addition to discovering document clusters. We also present a new metric for assessing the success of cluster labelling. We adopt a comparative evaluation strategy to derive the relative performance of the proposed method with respect to two prominent search result clustering methods: Suffix Tree Clustering and Lingo. We perform the experiments using the publicly available datasets Ambient and ODP-239.

Search as-you-type (Exact search)

This presentation covers search-as-you-type in databases using SQL and describes exact search in depth.

A unified approach for spatial data query

With the rapid development of Geographic Information Systems (GISs) and their applications, more and more geographical databases have been developed by different vendors. However, data integration and access remain a big problem for the development of GIS applications, as no interoperability exists among different spatial databases. In this paper we propose a unified approach for spatial data query. The paper describes a framework for integrating information from repositories containing different vector data set formats and repositories containing raster datasets. The presented approach converts different vector data formats into a single unified format (File Geo-Database, “GDB”). In addition, we employ metadata to support a wide range of user queries that retrieve relevant geographic information from heterogeneous and distributed repositories. This use of metadata enhances both query processing and performance.


USING ONTOLOGIES TO IMPROVE DOCUMENT CLASSIFICATION WITH TRANSDUCTIVE SUPPORT...

Many applications of automatic document classification require learning accurately with little training data. The semi-supervised classification technique uses labeled and unlabeled data for training. This technique has been shown to be effective in some cases; however, the use of unlabeled data is not always beneficial.

On the other hand, the emergence of web technologies has given rise to the collaborative development of ontologies. In this paper, we propose the use of ontologies to improve the accuracy and efficiency of semi-supervised document classification.

We use support vector machines, one of the most effective algorithms studied for text. Our algorithm enhances the performance of transductive support vector machines through the use of ontologies. We report experimental results from applying our algorithm to three different datasets. Our experiments show an accuracy increase of 4% on average and up to 20% in comparison with the traditional semi-supervised model.

ModelDR - the tool that untangles complex information

‘Whether upgrading data aggregation processes or improving system governance architecture, ModelDR solves the major problem that prevents financial institutions from earning more than their cost of capital: the increasing burden of regulation.’

Duet @ TREC 2019 Deep Learning Track

This report discusses three submissions based on the Duet architecture to the Deep Learning track at TREC 2019. For the document retrieval task, we adapt the Duet model to ingest a "multiple field" view of documents—we refer to the new architecture as Duet with Multiple Fields (DuetMF). A second submission combines the DuetMF model with other neural and traditional relevance estimators in a learning-to-rank framework and achieves improved performance over the DuetMF baseline. For the passage retrieval task, we submit a single run based on an ensemble of eight Duet models.

D0373024030

The International Journal of Engineering & Science is aimed at providing a platform for researchers, engineers, scientists, or educators to publish their original research results, to exchange new ideas, to disseminate information in innovative designs, engineering experiences and technological skills. It is also the Journal's objective to promote engineering and technology education. All papers submitted to the Journal will be blind peer-reviewed. Only original articles will be published.

Papers for publication in The International Journal of Engineering & Science are selected through rigorous peer review to ensure originality, timeliness, relevance, and readability.

Theoretical work submitted to the Journal should be original in its motivation or modeling structure. Empirical analysis should be based on a theoretical framework and should be capable of replication. It is expected that all materials required for replication (including computer programs and data sets) should be available upon request to the authors.

Conformer-Kernel with Query Term Independence @ TREC 2020 Deep Learning Track

We benchmark Conformer-Kernel models under the strict blind evaluation setting of the TREC 2020 Deep Learning track. In particular, we study the impact of incorporating: (i) Explicit term matching to complement matching based on learned representations (i.e., the “Duet principle”), (ii) query term independence (i.e., the “QTI assumption”) to scale the model to the full retrieval setting, and (iii) the ORCAS click data as an additional document description field. We find evidence which supports that all three aforementioned strategies can lead to improved retrieval quality.

RELATIONAL STORAGE FOR XML RULES

Very little research has been done on XML security over relational databases, even though XML has become the de facto standard for data representation and exchange on the internet and many XML documents are stored in RDBMSs. In [14], the author proposed an access control model for schema-based storage of XML documents in relational storage, translating XML access control rules to relational access control rules. However, the proposed algorithms had performance drawbacks. In this paper, we use the same access control model as [14] and try to overcome its drawbacks by proposing an efficient technique for storing the XML access control rules in a relational storage of the XML DTD. The mapping of the XML DTD to a relational schema is proposed in [7]. We also propose an algorithm to translate XPath queries to SQL queries based on the mapping algorithm in [7].

Analysis of different similarity measures: Simrank

SimRank exploits object-to-object relationships to measure the similarity between two objects. We used it in our project to find similar research papers in the DBLP dataset, which provides a comprehensive list of research papers in the computer science domain. SimRank is a generic approach, and its basic idea can be applied to other domains of interest as well.

14. Files - Data Structures using C++ by Varsha Patil


Document clustering for forensic analysis an approach for improving compute...

Document clustering for forensic analysis an approach for improving computer inspection

Fast and scalable range query processing with strong privacy protection for c...

Networking Domain title 2016-2017

Shakas Technologies provides 2016-2017 project titles, real-time project titles, IEEE project titles 2016-2017, non-IEEE project titles, latest project titles 2016, MCA project titles, and final year project titles 2016-2017.

Contact us:

Shakas Technologies

#13/19, 1st Floor, Municipal Colony, Kangeyanellore Road, Gandhi Nagar, Vellore-632006

Cell: 9500218218

Mobile: 0416-2247353 / 6066663

15. STL - Data Structures using C++ by Varsha Patil


Papers We Love Kyiv, July 2018: A Conflict-Free Replicated JSON Datatype

Presentation about the paper "A Conflict-Free Replicated JSON Datatype" by Martin Kleppmann and Alastair R. Beresford.

COLOCATION MINING IN UNCERTAIN DATA SETS: A PROBABILISTIC APPROACH

In this paper we investigate the colocation mining problem in the context of uncertain data. Uncertain data is partially complete data. Much real-world data is uncertain, for example demographic data, sensor network data, and GIS data. Handling such data is a challenge for knowledge discovery, particularly in colocation mining. One straightforward method is to find the probabilistic prevalent colocations (PPCs). This method tries to find all colocations that are generated from a random world. For this, we first apply an approximation error to find all the PPCs, which reduces the computations. Next, we find all the possible worlds, split them into two different worlds, and compute the prevalence probability. These worlds are compared against a minimum probability threshold to decide whether a colocation is a probabilistic prevalent colocation (PPC) or not. Experimental results on the selected data set show a significant improvement in computational time compared with some of the existing methods used in colocation mining.



Similar to D 17

BPSC Previous Year Question for AP, ANE, AME, ADA, AE

BPSC Previous Year Question for Assistant Programmer, Assistant Maintenance Engineer, Assistant Network Engineer by Stack IT job Solution

Book: Stack IT job Solution (A pattern Based IT job solution)

The only book for job preparation in any pattern, including BUET, KUET, RUET, DUET, PSC, telecom, IBA, and MIS.

Order Link: https://stackvaly.com/product/stack-it-job-solution-p3qxo

WhatsApp: 01789741518

S2 DATA PROCESSING FIRST TERM C.A 2

This is the Continuous Assessment Test for SS2 DP, with a Test of Practicals included. It is accurately measured so that more than one student can get a copy from a single printout.

Bangladesh Bank Assistant Maintenance Engineer Question Solution.

Maintenance Engineer question solution of Bangladesh Bank, for Bangladesh Bank assistant programmer job preparation.

For each question provide a one to two sentences per questi.pdf

For each question, provide one to two sentences.

Assignments must be submitted as a Microsoft Word or PDF document. Other file formats may not be accepted.

The file name of the assignment should include the student's name, the assignment number, and the course name (e.g., John_Smith_Assignment1_Networking101).

All written responses must be typed, double-spaced, and use a standard font (e.g., Times New Roman or Arial) with 12-point font size.

Assessment questions for the topics:

What is the internet?

a) Define the internet in your own words.

b) What are some of the key components that make up the internet?

Network Edge and Network Core:

a) What is the difference between the network edge and the network core?

b) What types of devices are typically found at the network edge? What types of devices are typically found in the network core?

Delay, Loss, and Throughput in Packet Switched Networks:

a) What is packet loss? How can it impact network performance?

b) Explain the difference between latency and throughput in the context of packet-switched networks.

Protocol Layers:

a) What is the OSI model? What are its seven layers?

b) Why is it useful to organize protocols into distinct layers?

Service Models:

a) What is Infrastructure as a Service (IaaS)? How does it differ from Platform as a Service (PaaS) and Software as a Service (SaaS)?

b) What are some advantages of using a service model for providing computing resources?

Network Security Under Attack:

a) What is a denial-of-service (DoS) attack? How can it be prevented?

b) Describe the difference between phishing and malware attacks.

Is infrastructure network security enough to protect against all cyber threats?

a) Explain why or why not.

b) What are some common vulnerabilities in network infrastructure that can be exploited by cybercriminals?

What is application security?

a) How does application security differ from infrastructure security?

b) Give an example of an application security vulnerability.

How can a security culture be developed within an organization?

a) What are some ways to promote security awareness and best practices among employees?

b) What are some benefits of having a strong security culture?

What is the role of risk management in network security?

a) Explain why risk management is important for network security.

b) What are some common risk assessment methodologies used in network security?

Database-management-system-dbms-ppt.pptx

A database management system (or DBMS) is essentially nothing more than a computerized data-keeping system. Users of the system are given facilities to perform several kinds of operations on such a system for either manipulation of the data in the database or the management of the database structure itself.

Preparing for BIT – IT2301 Database Management Systems 2001e

Preparing for BIT – IT2301 Database Management Systems, on Rupavahini by Gihan Wikramanayake, 19th July 2001, 2200-2230 hrs.

USING ONTOLOGIES TO OVERCOMING DRAWBACKS OF DATABASES AND VICE VERSA: A SURVEY

For the same domain, several databases (DBs) exist. The evolution from the classical web to the semantic web has contributed to the appearance of the notion of ontology, which offers a shared and consensual vocabulary. For a given domain, it is more interesting to take advantage of existing databases to build an ontology, since most of the data is already stored in these databases. Many DBs can thus be integrated to enable reuse of existing data for the semantic web. Even for existing ontologies, the relevance of the information they contain requires regular updating, and these databases can be useful sources for enriching them. On the other hand, the larger the ratio of the size of the instances to the size of working memory, the more difficult it is to manage these instances in memory. Finding a way to store these instances in a structured manner that satisfies the performance and reliability needs of many applications becomes an obligation. As a consequence, defining query languages to support these structures becomes a challenge for the semantic web (SW) community. In this paper we show how ontologies can benefit from DBs to increase system performance and facilitate their design cycle. DBs, in turn, suffer from several drawbacks, namely the complexity of the design cycle and a lack of semantics. Since ontologies are rich in semantics, DBs can profit from this advantage to overcome their drawbacks.

D 17

- 1. No. of Printed Pages: 5

  MCS-043

  MCA (Revised) Term-End Examination, December, 2017

  MCS-043: ADVANCED DATABASE MANAGEMENT SYSTEMS

  Time: 3 hours. Maximum Marks: 100

  Note: Question number 1 is compulsory. Answer any three questions from the rest.

  1. (a) How does Data-mining differ from the technique of Knowledge Discovery in Databases (KDD)? Can we use these two techniques interchangeably or alternatively? Justify. [5]

  (b) Explain the index implementations available in PostgreSQL, with suitable examples.

  (c) Consider the following data:

  | Employee Code (EC) | Project Code on which employee works (PC) | Tools that employee can work on (TC) |
  |---|---|---|
  | E1 | P1, P2 | T1, T2 |
  | E2 | P3, P4 | T2, T3 |

- 2. Employee with code E1 works on two projects, P1 and P2, and can use two tools, T1 and T2. Likewise, E2 works on projects P3 and P4 and can use tools T2 and T3. You may assume that projects and tools are independent of each other, but depend only on employee. Perform the following tasks for the data:

  (i) Represent the data as above in a relation, namely, Employee_Project_Tool (EC, PC, TC). [2]

  (ii) List all the MVDs in the relation created in part (i) above. Also identify the primary key of the relation.

  (iii) Normalise the relation created in part (i) into 4NF. [3]

  (iv) Join the relations created in part (iii) above and show that they will produce the same data on joining as per the relation created in part (i).

  (d) What is Granularity in databases? How does granularity relate to the security of databases? In a concurrent environment, how does granularity affect the performance? [5]
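The tasks in question 1(c) can be checked mechanically. The following is a minimal Python sketch, not a model answer: it builds Employee_Project_Tool (EC, PC, TC) from the data given in the question, projects it onto (EC, PC) and (EC, TC), and joins the projections back on EC. The names `employee_project` and `employee_tool` are assumptions chosen for illustration; the paper does not name the decomposed relations.

```python
from itertools import product

# Data from question 1(c): projects and tools depend only on the employee.
projects = {"E1": ["P1", "P2"], "E2": ["P3", "P4"]}
tools = {"E1": ["T1", "T2"], "E2": ["T2", "T3"]}

# Part (i): every project of an employee is paired with every tool of that employee.
employee_project_tool = {
    (ec, pc, tc)
    for ec in projects
    for pc, tc in product(projects[ec], tools[ec])
}

# Part (iii): decompose along the two MVDs (EC ->> PC and EC ->> TC).
# Relation names here are hypothetical.
employee_project = {(ec, pc) for ec, pc, _ in employee_project_tool}
employee_tool = {(ec, tc) for ec, _, tc in employee_project_tool}

# Part (iv): natural join of the two projections on EC.
rejoined = {
    (ec, pc, tc)
    for ec, pc in employee_project
    for ec2, tc in employee_tool
    if ec == ec2
}

print(len(employee_project_tool))         # 8 tuples: 2 x 2 per employee
print(rejoined == employee_project_tool)  # True: the join loses and adds nothing
```

Using sets of tuples mirrors the relational model, where a relation has no duplicate rows, so equality of the two sets is exactly the lossless-join check the question asks for.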

- 3. (e) What are Semantic databases? Give the features of semantic databases. Discuss the process of searching the knowledge in these databases.

  (f) Illustrate the concept of Shadow Paging with a suitable example. Give the advantages and disadvantages of shadow paging.

  (g) How does Clustering differ from Classification? Briefly discuss one approach for both, i.e., clustering and classification.

  2. (a) What are Alerts, Cursors, Stored Procedures and Triggers? Give the utility of each. Explain each with suitable code of your choice. [10]

  (b) What is Simple Hash Join? Discuss the algorithm and cost calculation process for simple hash join. Explain how Hash join is applicable to Equi join and Natural join. [10]

  3. (a) Differentiate between the following:

  (i) Database Queries and Data-mining Queries

  (ii) Star Schema and Snowflake Schema

  Give an example for each while differentiating. [10]

- 4. (b) What are Deadlocks? How are they detected? Explain with the help of an example.

  (c) What are Mobile Databases? Explain the characteristics of mobile databases. Give an application of mobile databases. [5]

  4. (a) What are Views in SQL? What is the significance of views? Give an example code in SQL to create a view. [5]

  (b) (i) Create an XML document that stores information about students of a class. The document should use appropriate tags/attributes to store information like student_id (unique), name (consisting of first name and last name), class (consisting of class no. and section) and books issued to the student (minimum 0, maximum 2). Create at least two such student records. [6]

  (ii) Create the DTD for verification of the above student data. [4]

  (c) Explain the features and challenges of multimedia databases. [5]

  5. (a) What is a Data Dictionary? Give the features and benefits of a data dictionary. What are the disadvantages of a data dictionary? [8]

- 5. (b) What is the utility of Multi-Version schemes in databases? Discuss any one multi-version scheme, with a suitable example. [5]

  (c) What is Association Rule Mining? Write the Apriori Algorithm for finding frequent itemsets. Discuss it with a suitable example. [7]
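For question 5(c), the level-wise idea behind Apriori can be sketched in a few lines of Python. This is an illustrative sketch under stated assumptions, not the answer the examiners expect: the function name `apriori`, the use of an absolute support count as `min_support`, and the small grocery dataset are all hypothetical.

```python
from itertools import combinations

def apriori(transactions, min_support):
    """Return {itemset: support count} for every frequent itemset.

    min_support is an absolute count (an assumption of this sketch).
    """
    transactions = [frozenset(t) for t in transactions]

    # Level 1: count single items and keep the frequent ones.
    counts = {}
    for t in transactions:
        for item in t:
            key = frozenset([item])
            counts[key] = counts.get(key, 0) + 1
    current = {s: c for s, c in counts.items() if c >= min_support}
    frequent = dict(current)

    k = 2
    while current:
        candidates = set()
        # Join step: merge frequent (k-1)-itemsets that differ in one item.
        for a, b in combinations(list(current), 2):
            union = a | b
            if len(union) != k:
                continue
            # Prune step: every (k-1)-subset of a candidate must be frequent.
            if all(frozenset(s) in current for s in combinations(union, k - 1)):
                candidates.add(union)
        # Count the support of each surviving candidate.
        counts = {c: sum(1 for t in transactions if c <= t) for c in candidates}
        current = {s: c for s, c in counts.items() if c >= min_support}
        frequent.update(current)
        k += 1
    return frequent

# Hypothetical example data.
transactions = [
    {"bread", "milk"},
    {"bread", "butter"},
    {"bread", "milk", "butter"},
    {"milk"},
]
result = apriori(transactions, min_support=2)
for itemset, count in sorted(result.items(), key=lambda kv: (len(kv[0]), sorted(kv[0]))):
    print(sorted(itemset), count)
```

With a minimum support count of 2, the pair {milk, butter} occurs only once and is dropped, so the candidate {bread, milk, butter} is pruned without being counted. That pruning is the property the algorithm relies on: every subset of a frequent itemset must itself be frequent.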