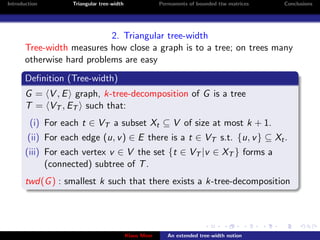

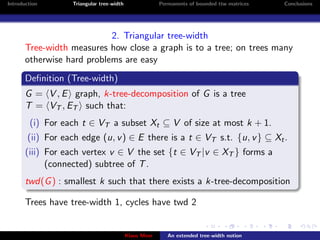

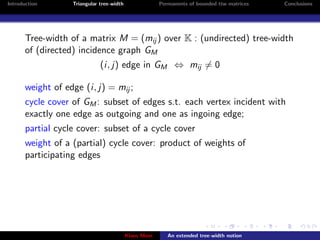

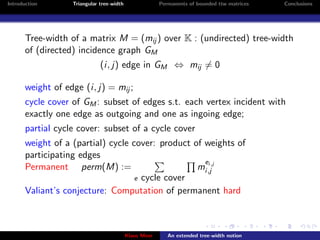

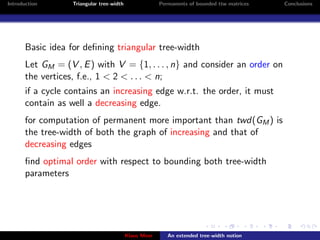

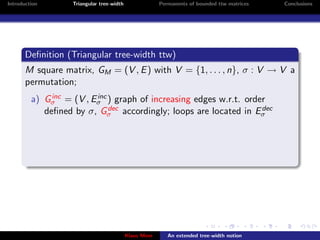

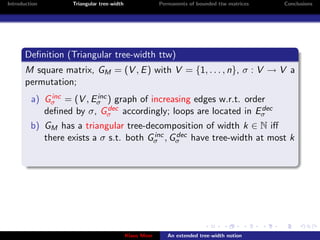

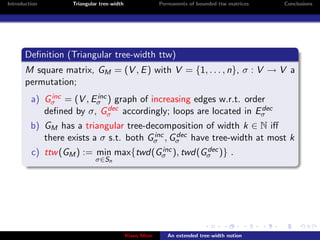

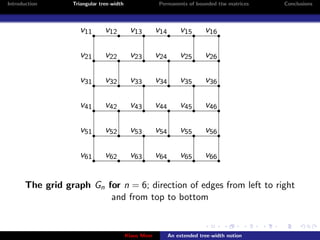

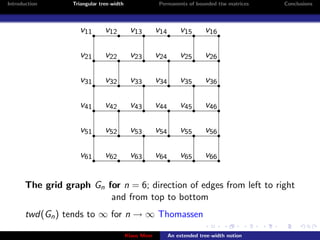

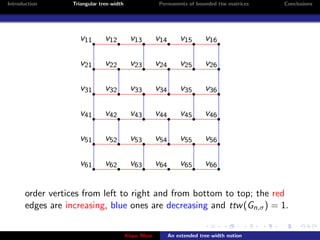

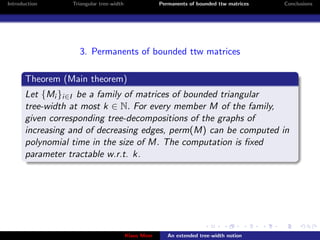

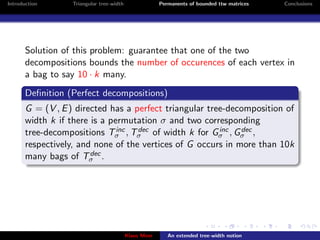

This document discusses triangular tree-width, a new measure for directed graphs that is related to efficiently computing matrix permanents. Triangular tree-width is defined based on the tree-widths of the increasing and decreasing edge subgraphs of a directed graph with respect to a particular vertex ordering. The goal is that bounding the triangular tree-width of a matrix's incidence graph would allow its permanent to be computed efficiently, whereas the standard tree-width may be unbounded. The document outlines the basics of tree-width and motivates the definition of triangular tree-width.