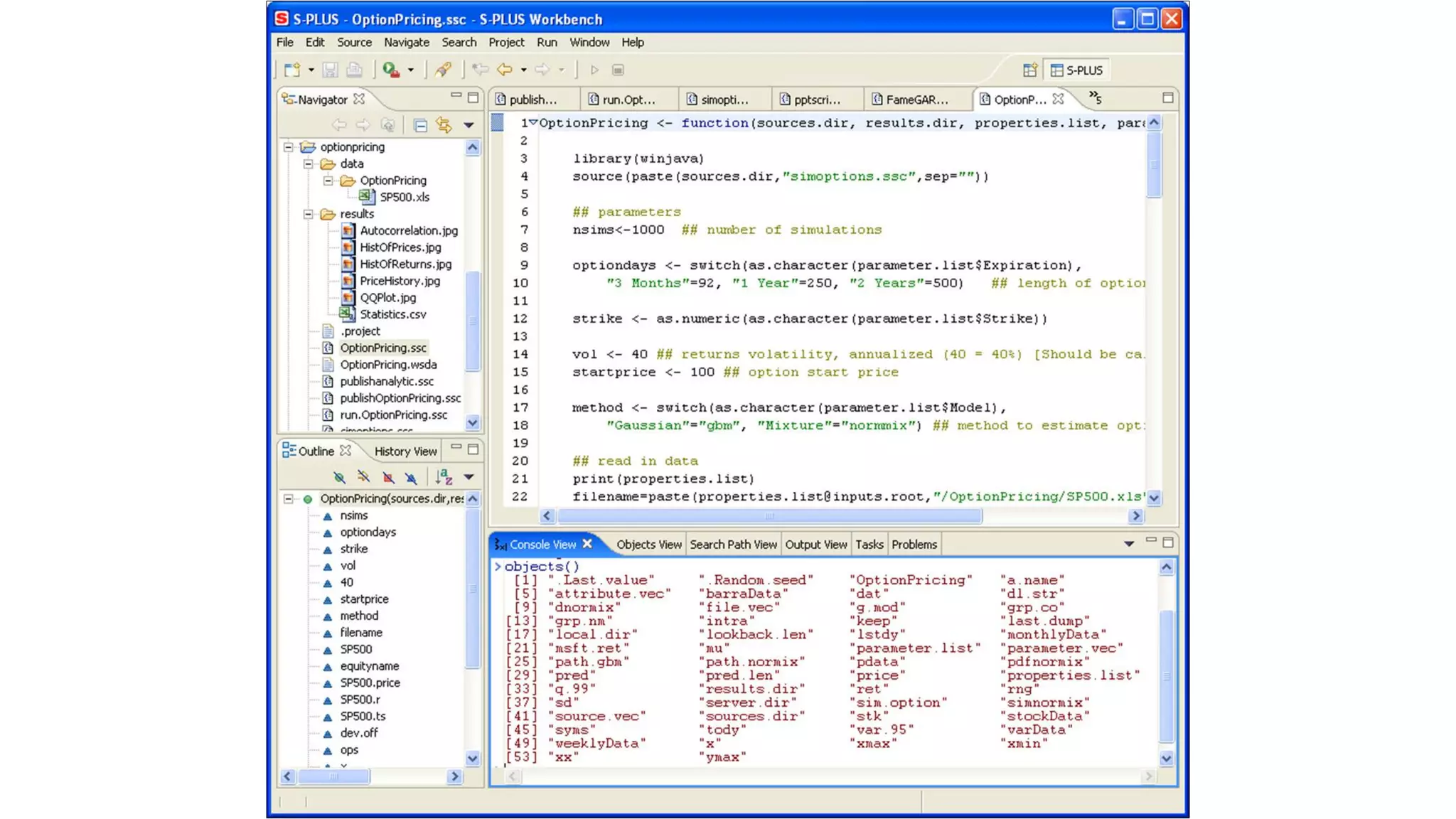

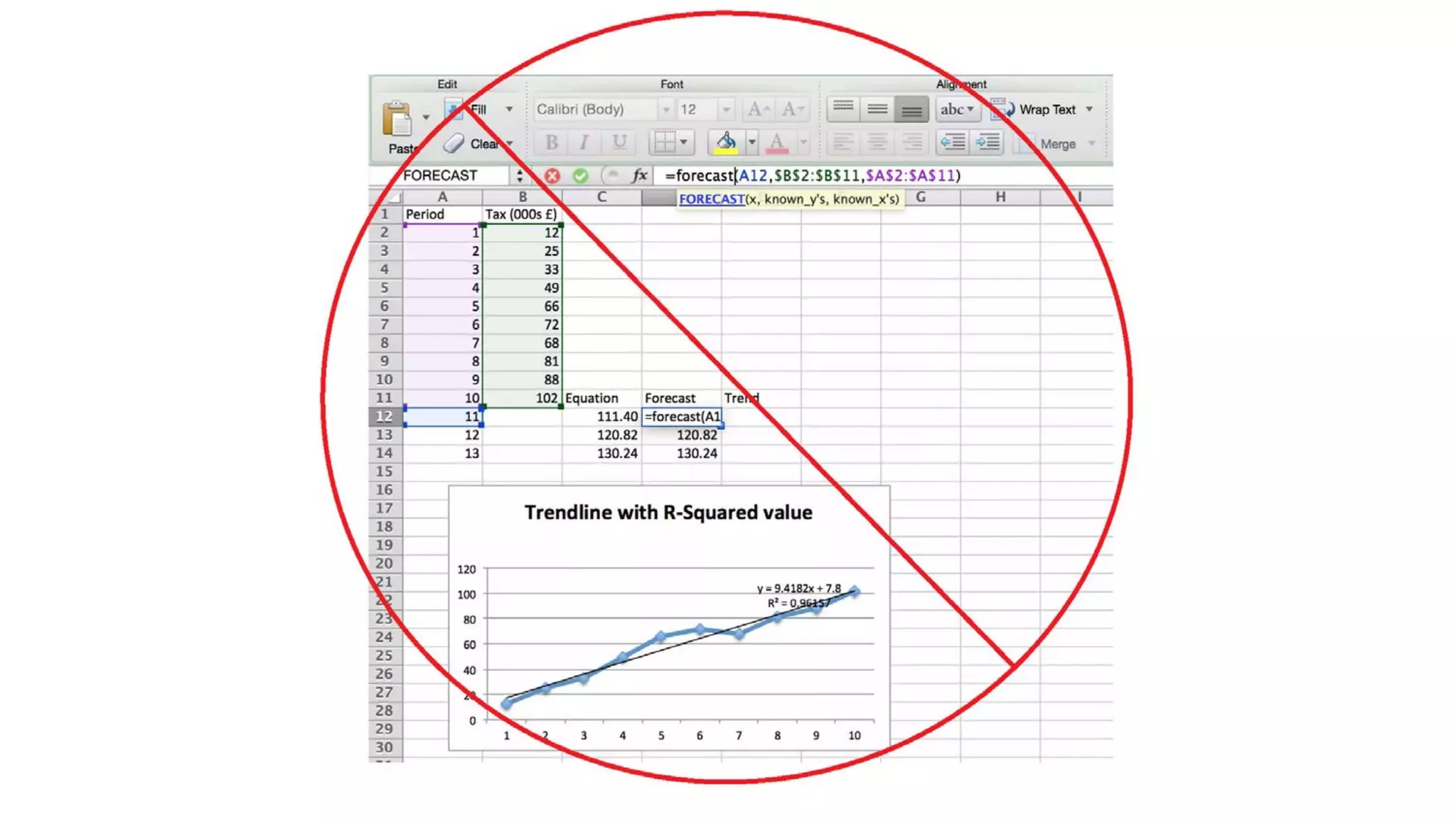

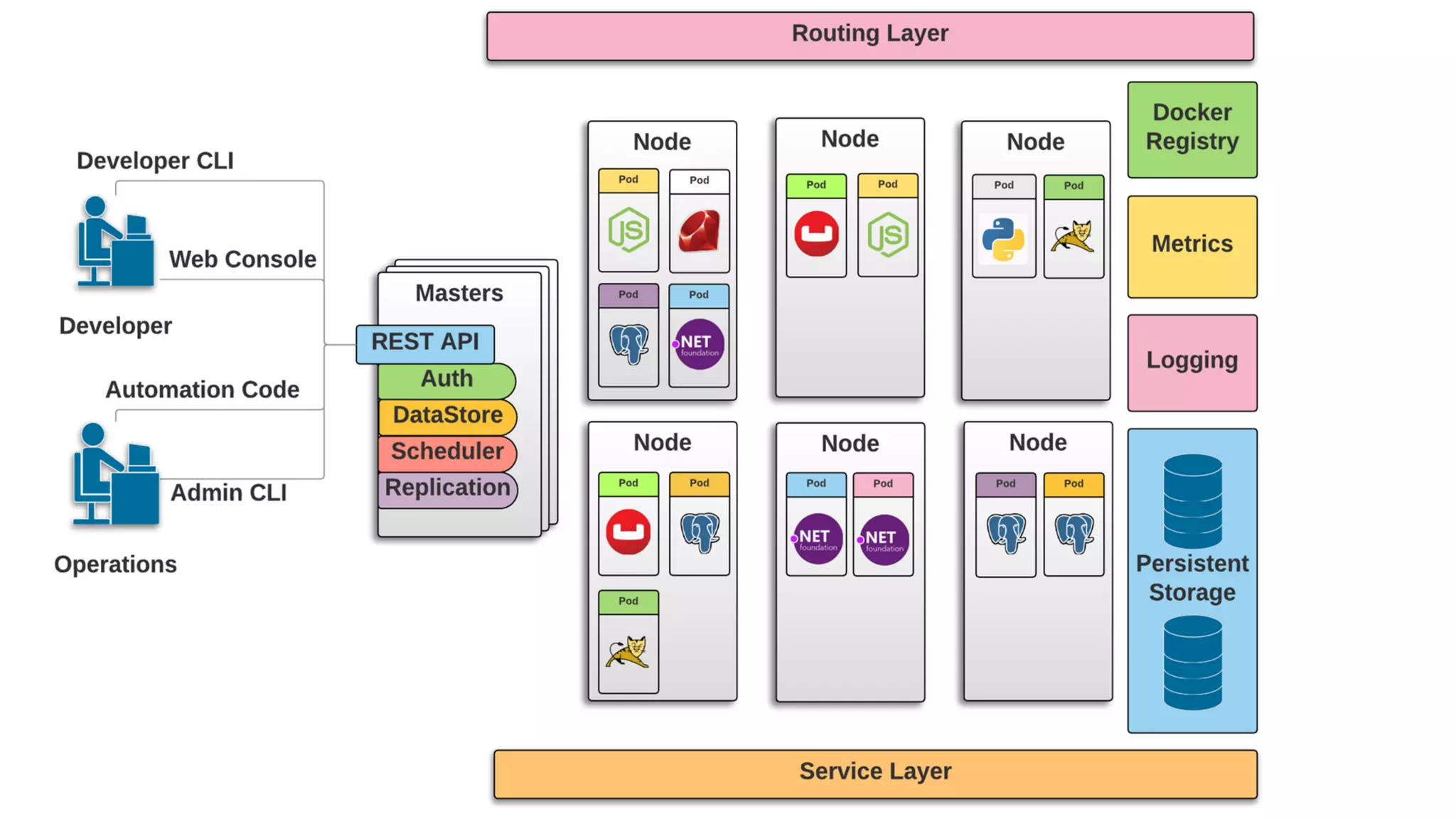

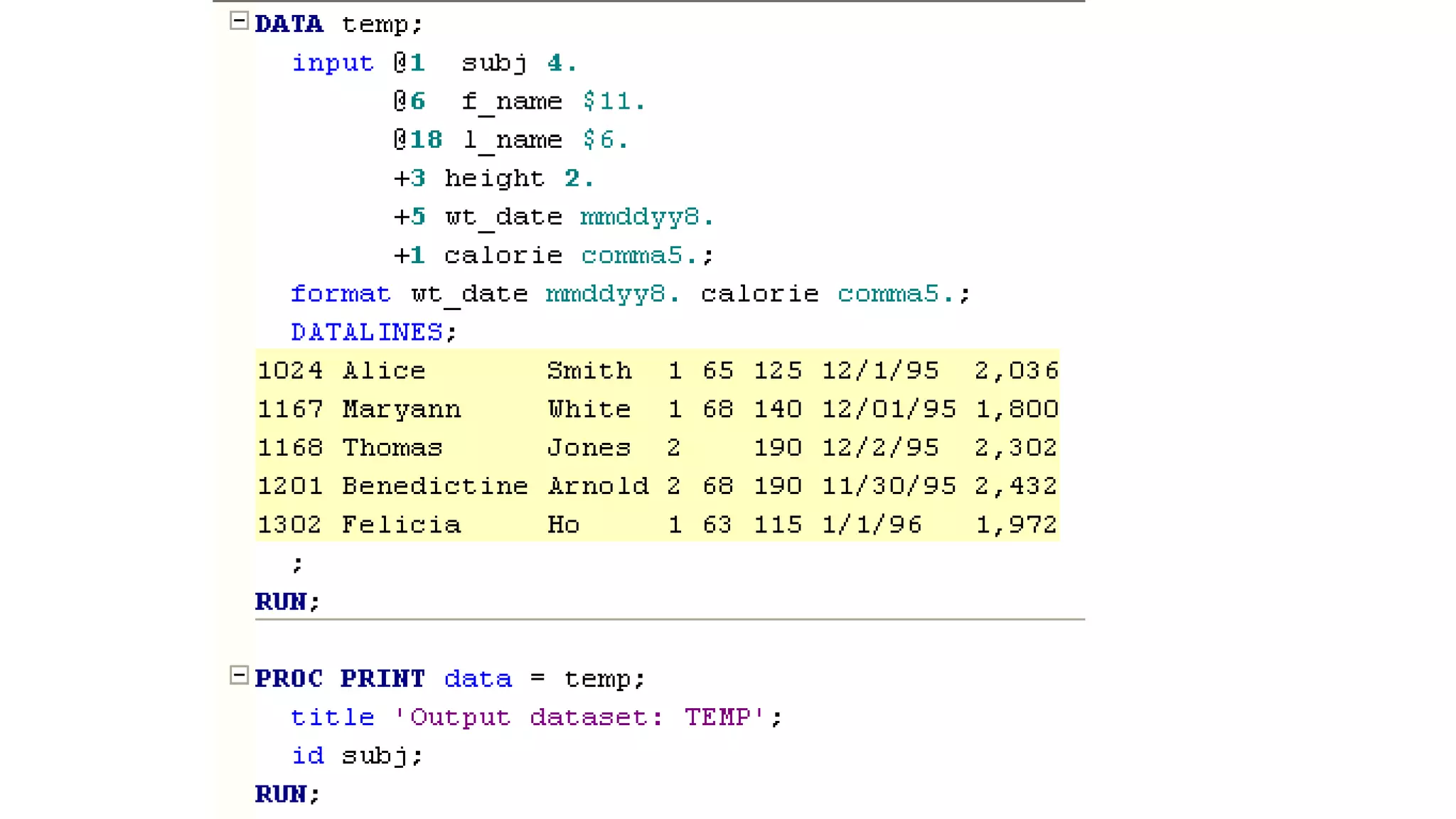

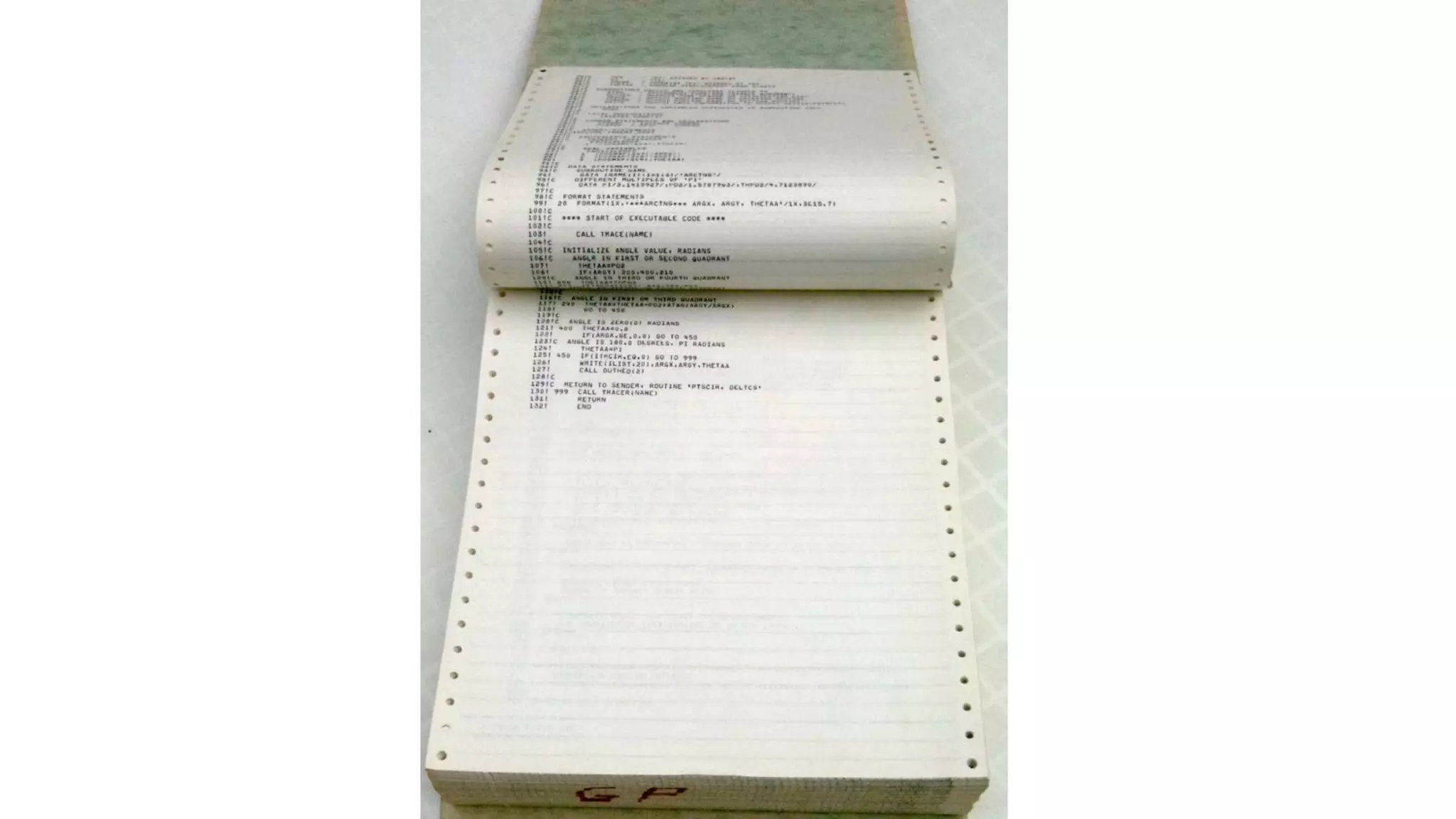

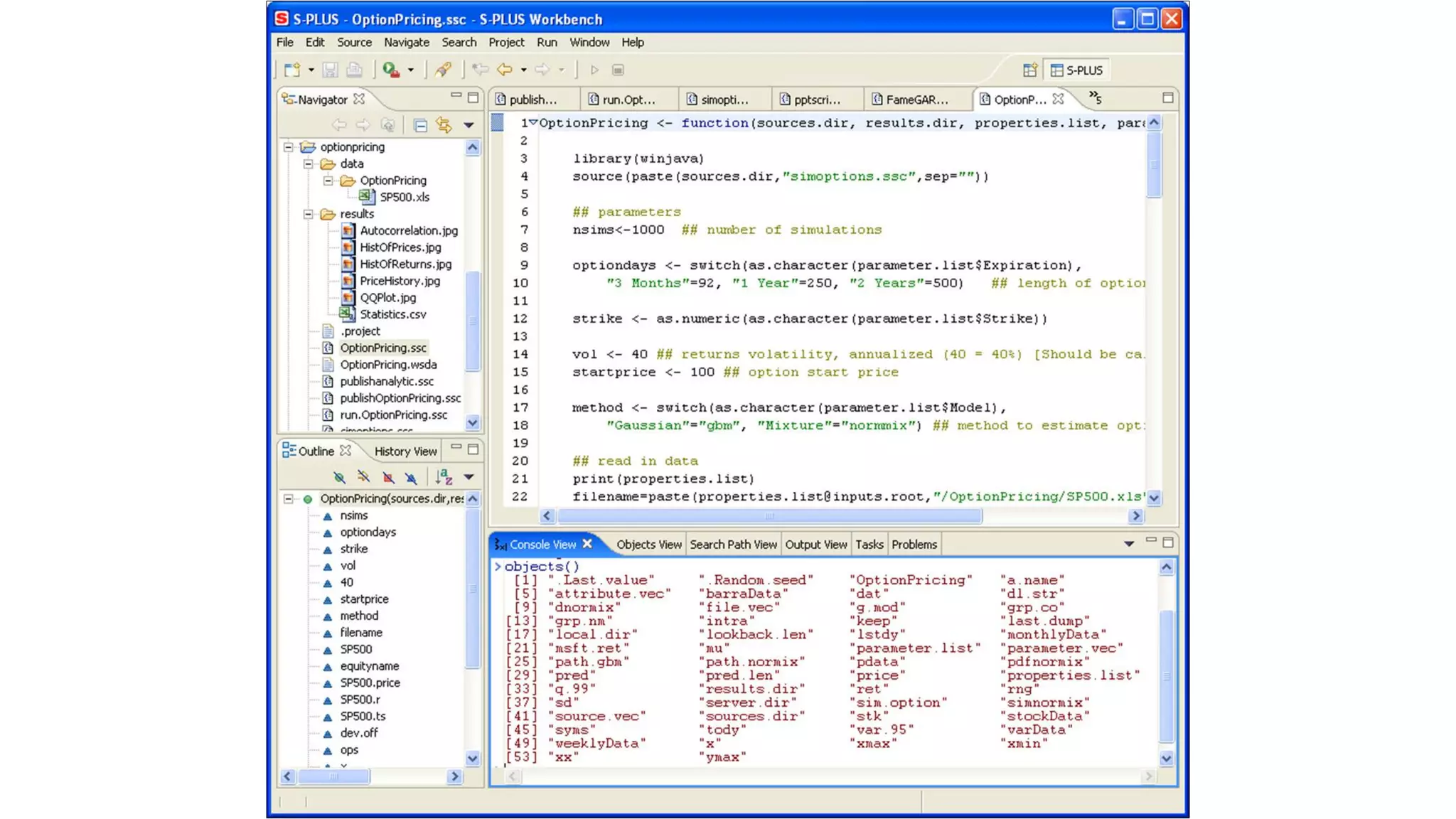

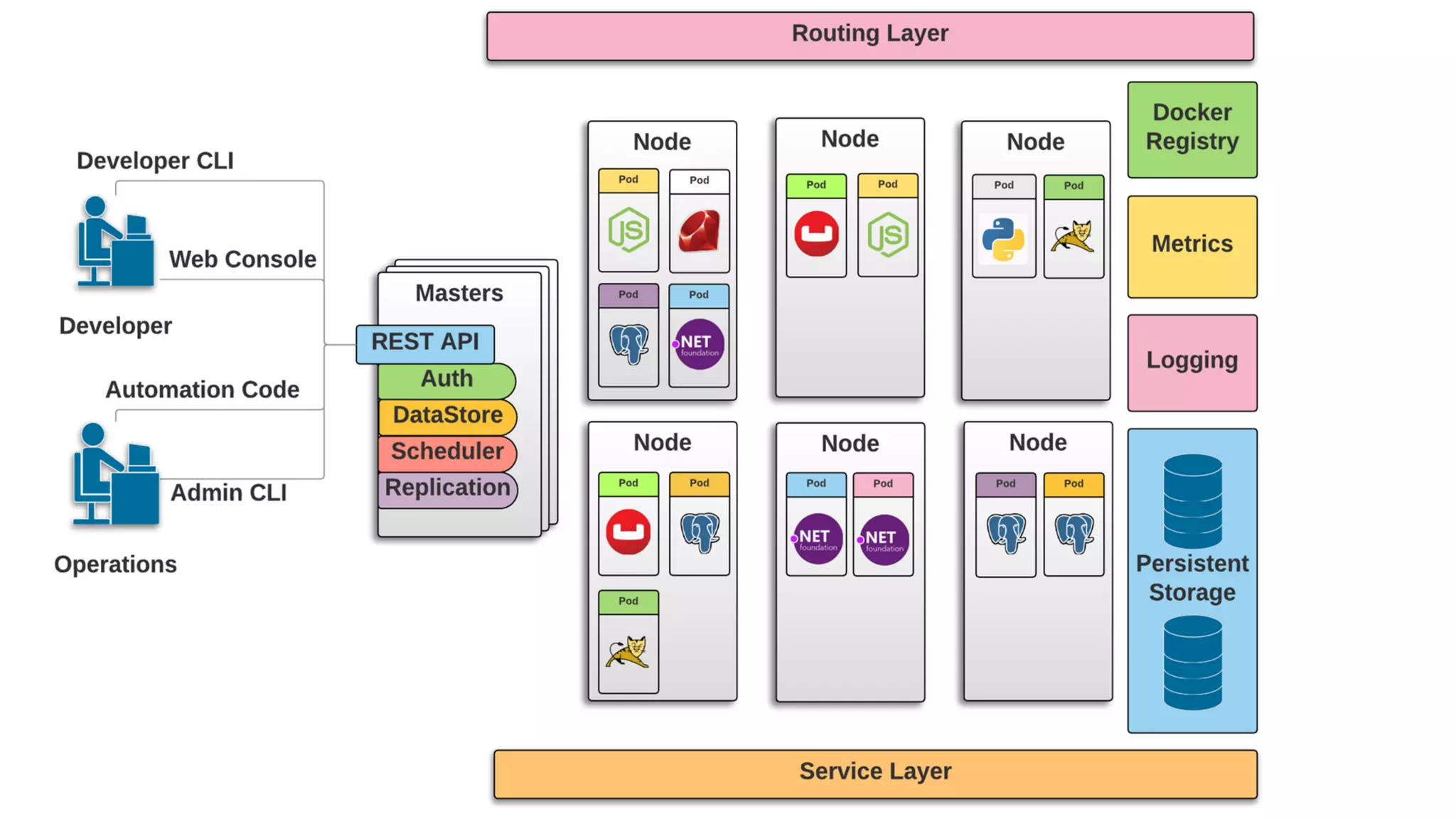

The document introduces the evolution of application infrastructure, highlighting advancements such as distributed software, easier programming languages, and improved statistical libraries. It emphasizes the integration of big data, APIs, and machine learning with web applications using tools like Docker, Kubernetes, and OpenShift. The document provides resources for setting up OpenShift and related tools for analysis and encourages contributions through pull requests.