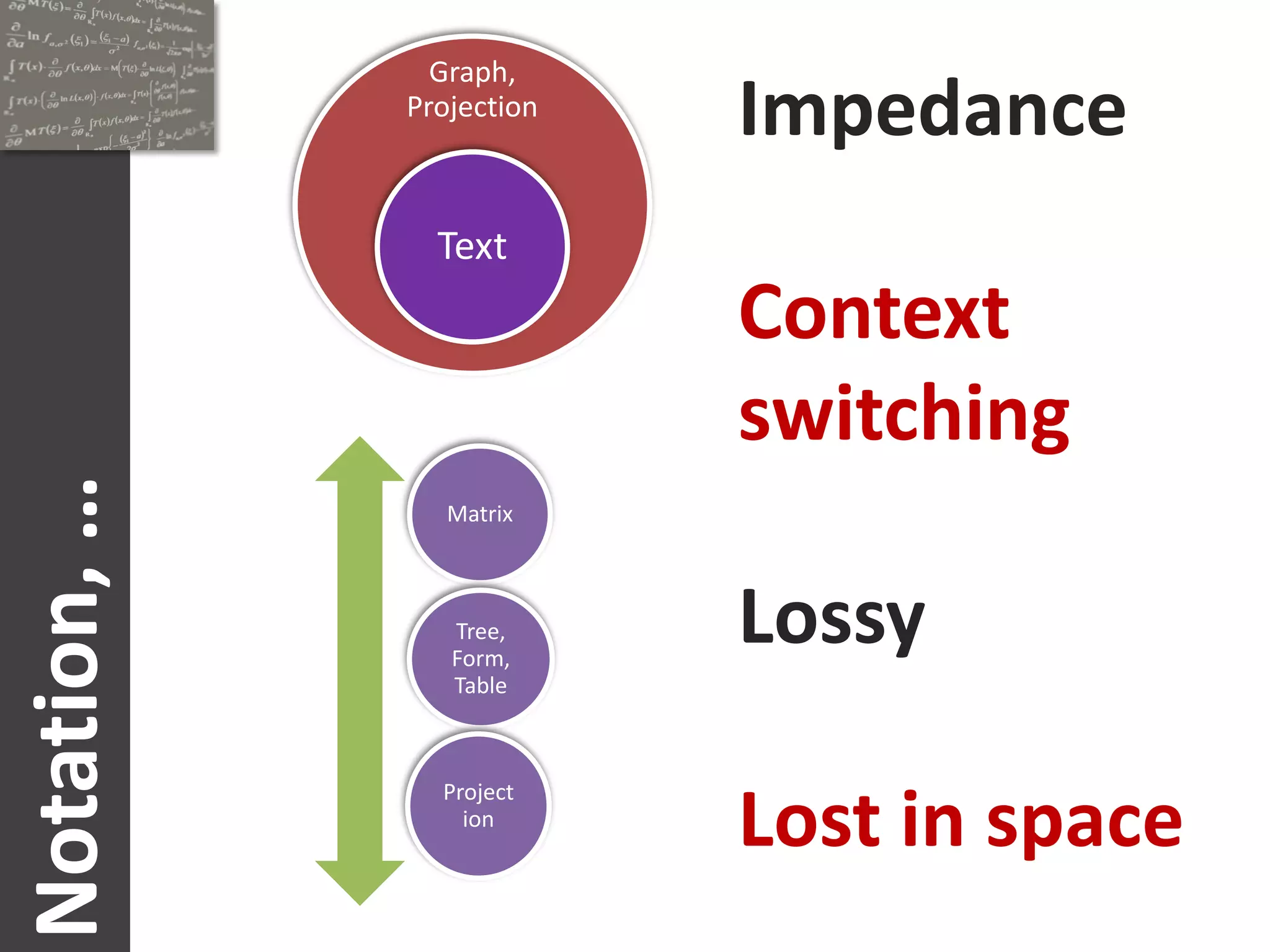

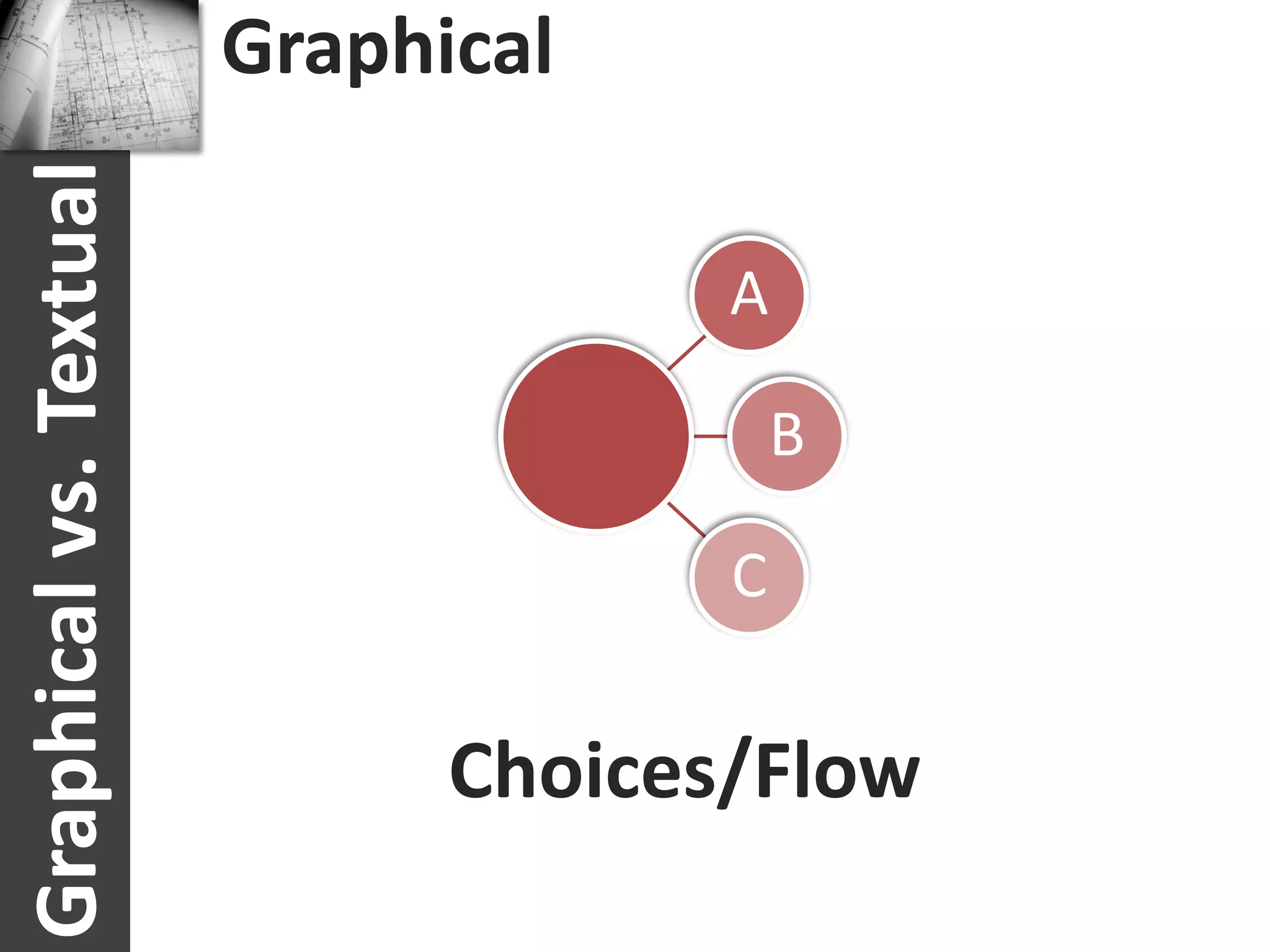

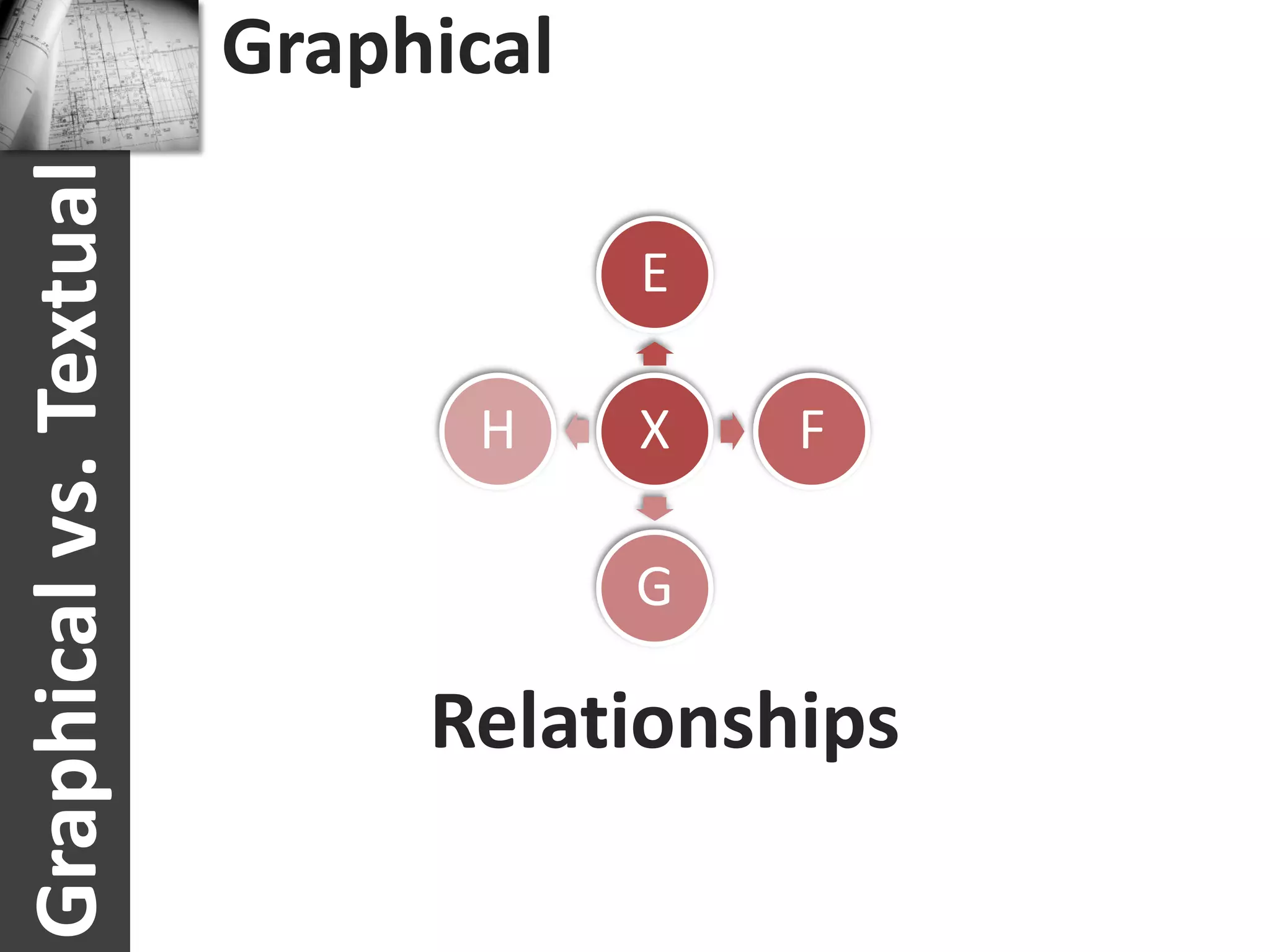

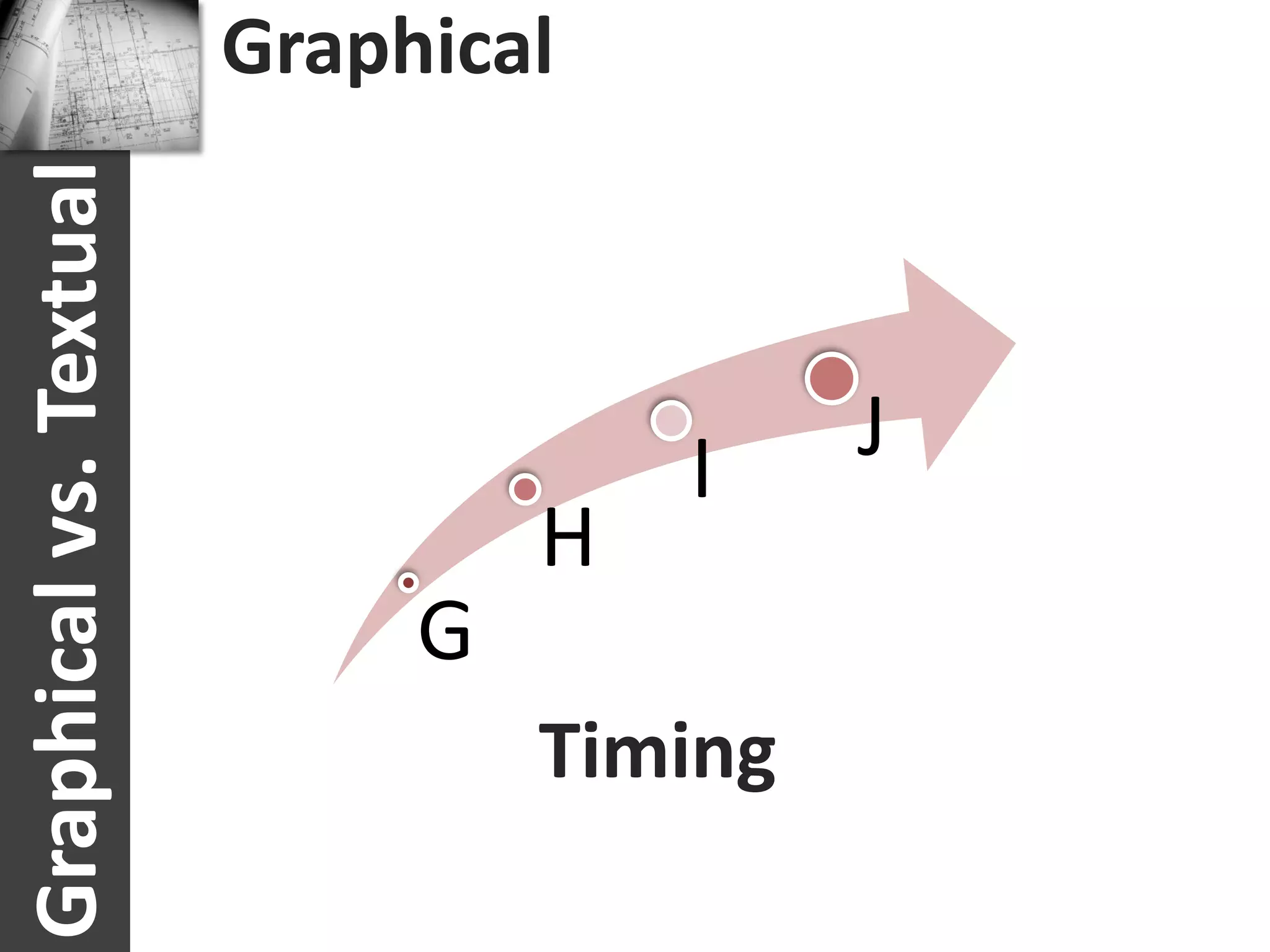

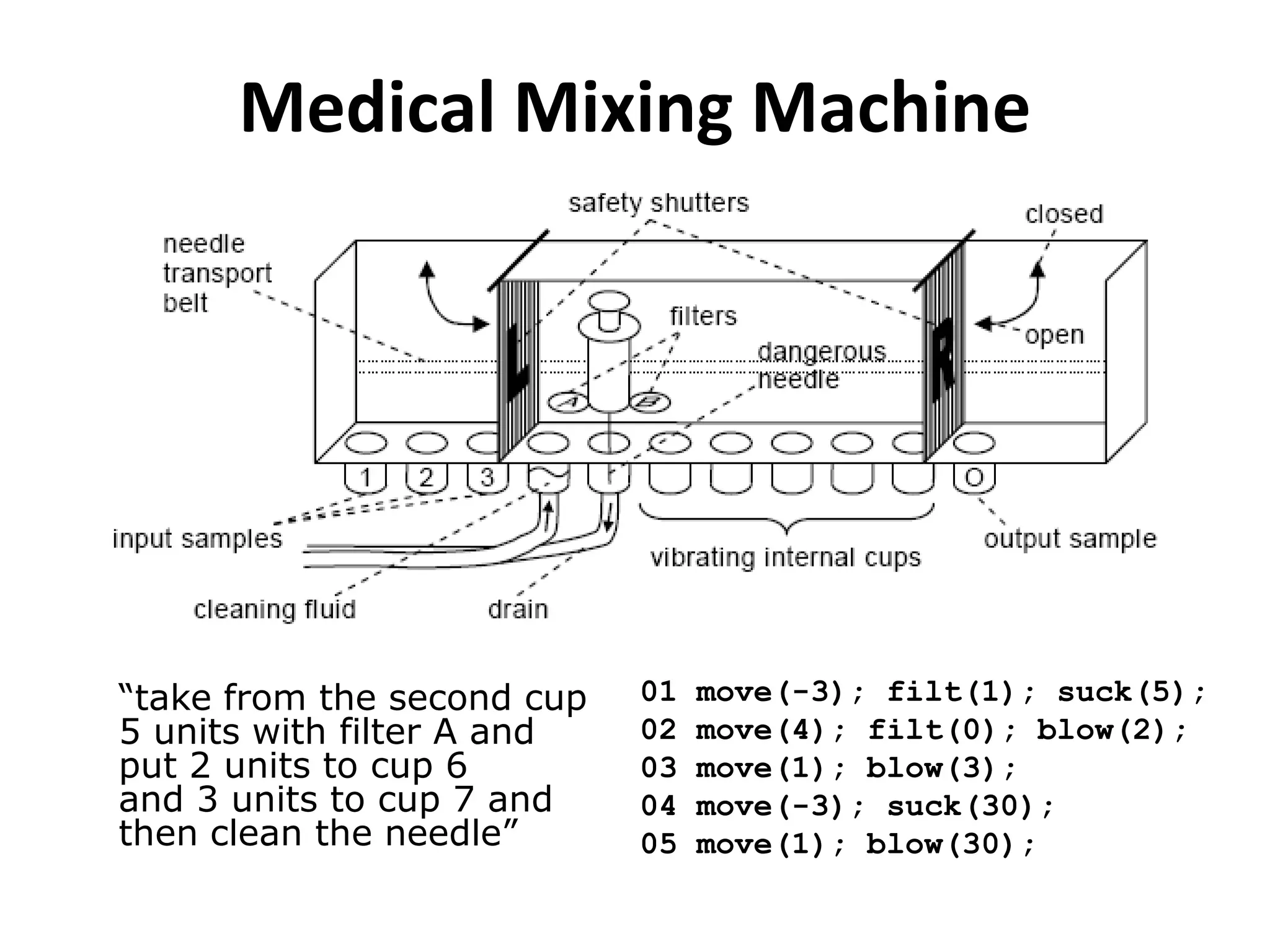

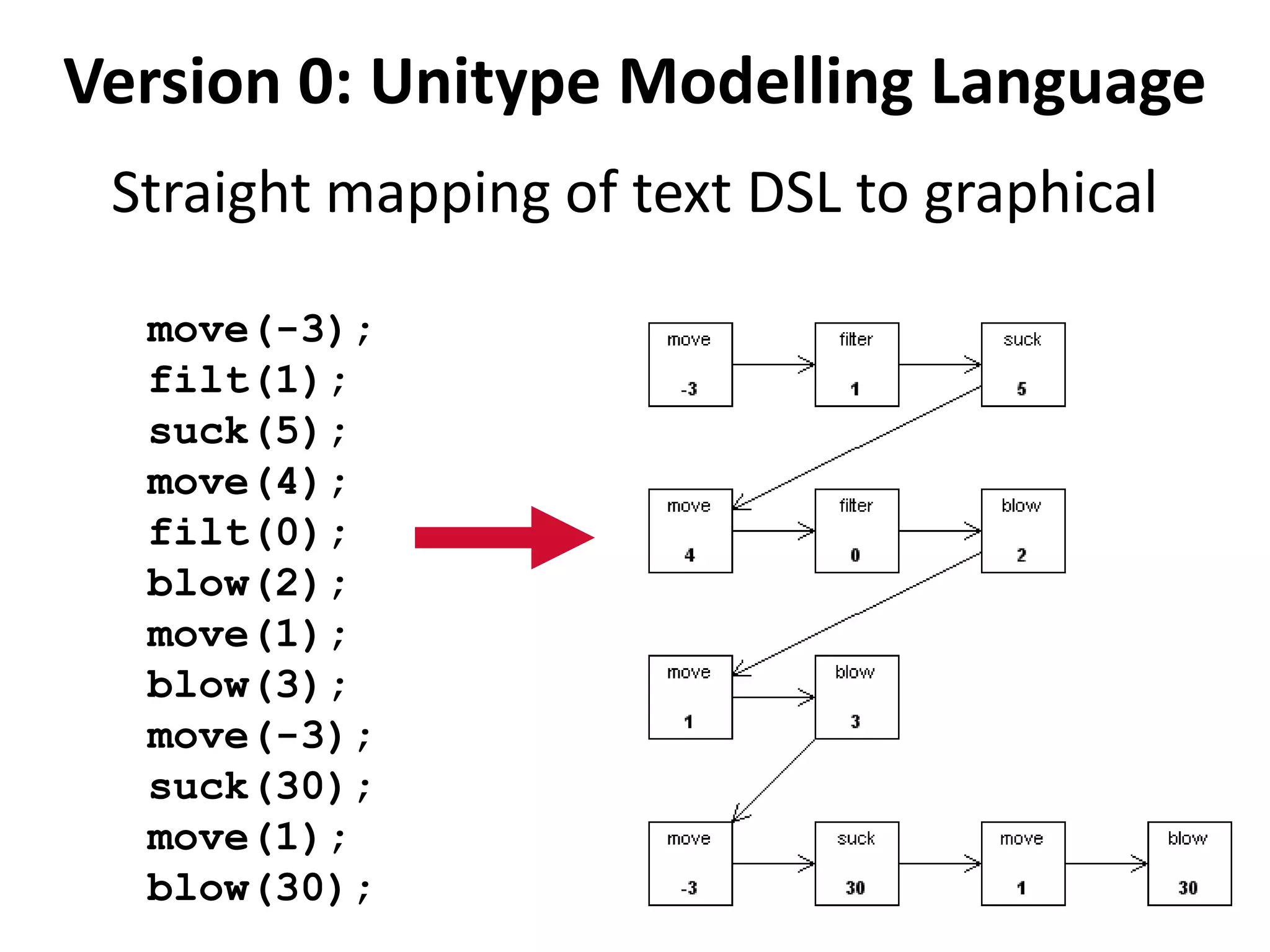

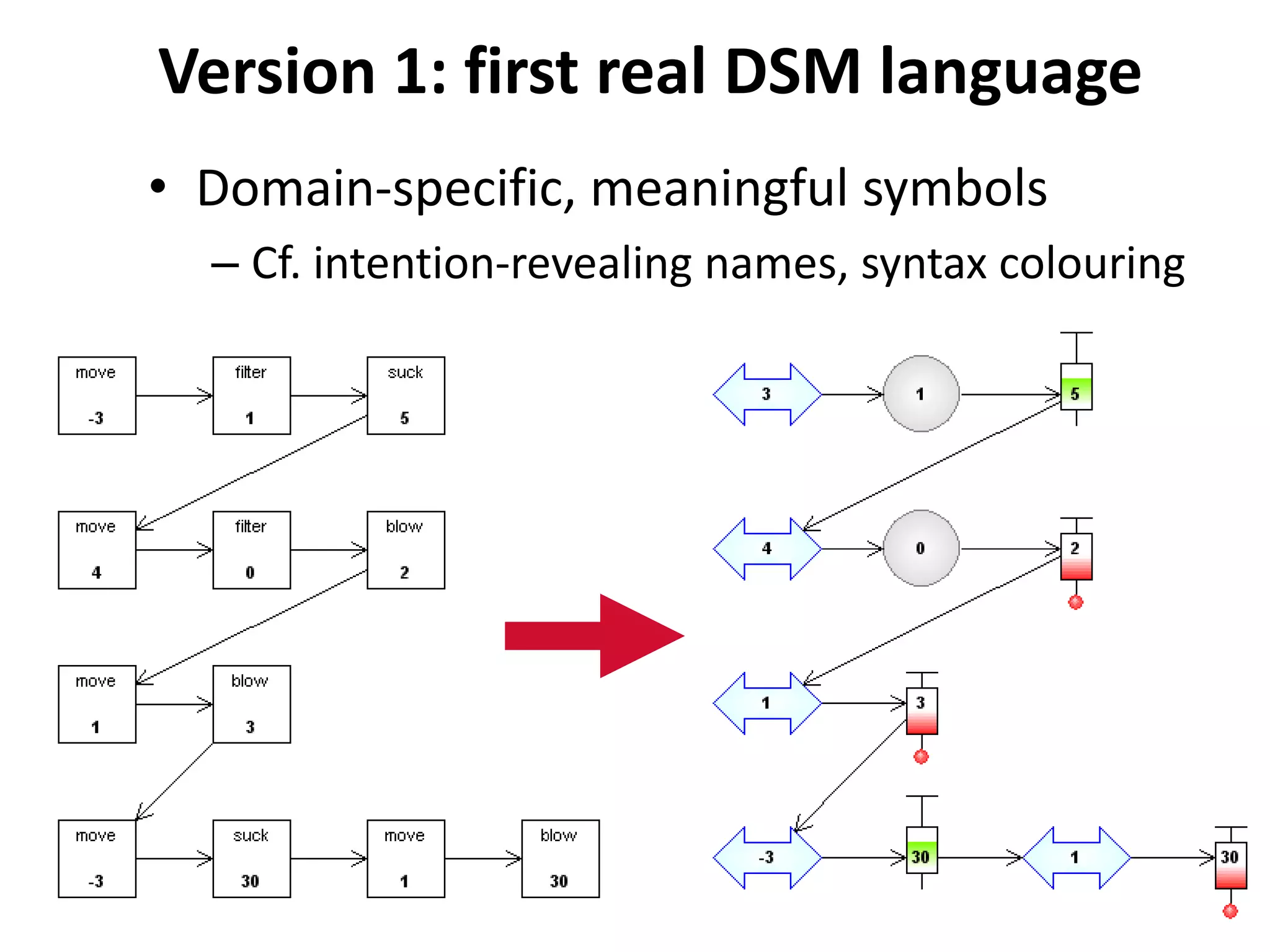

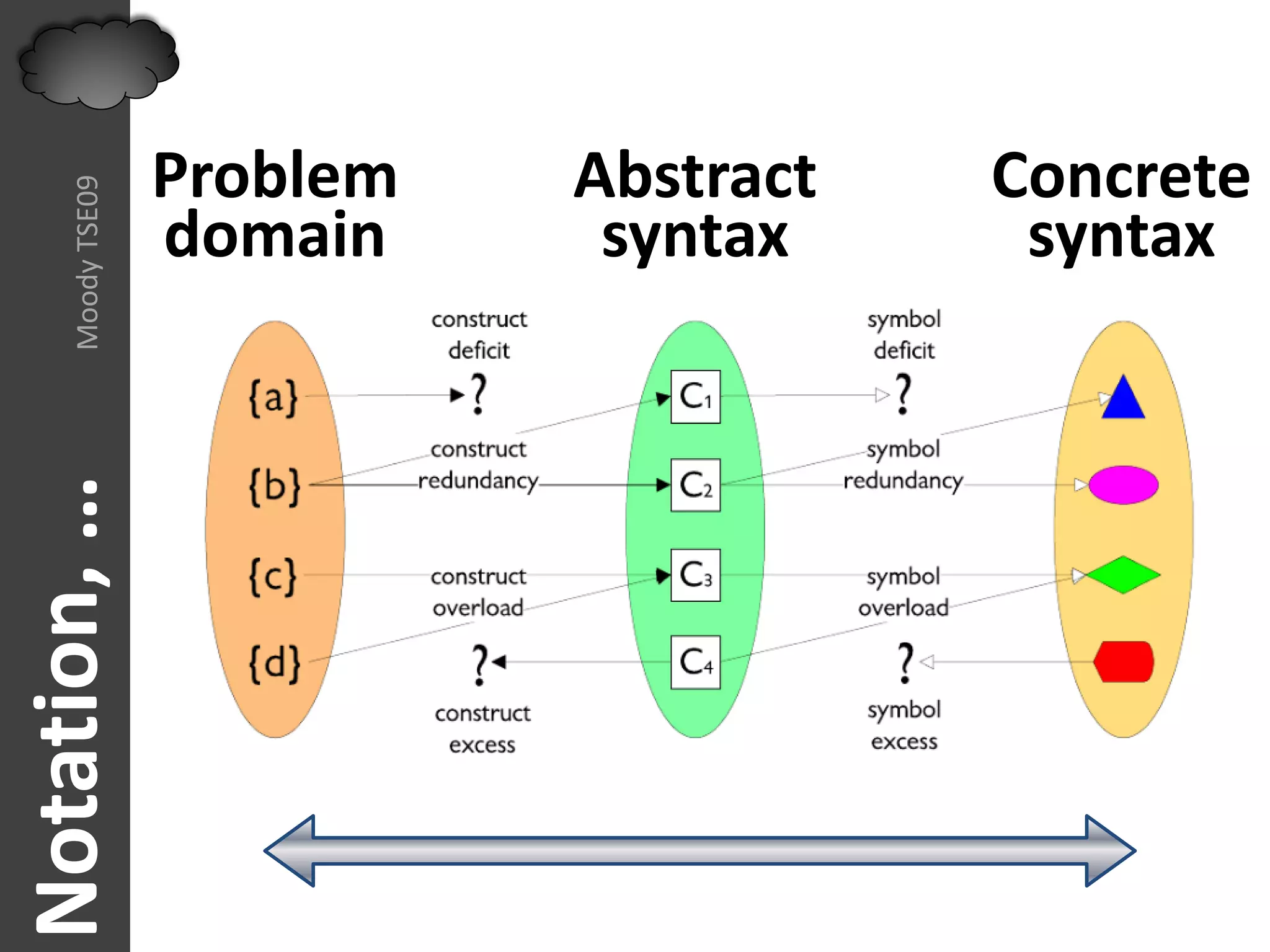

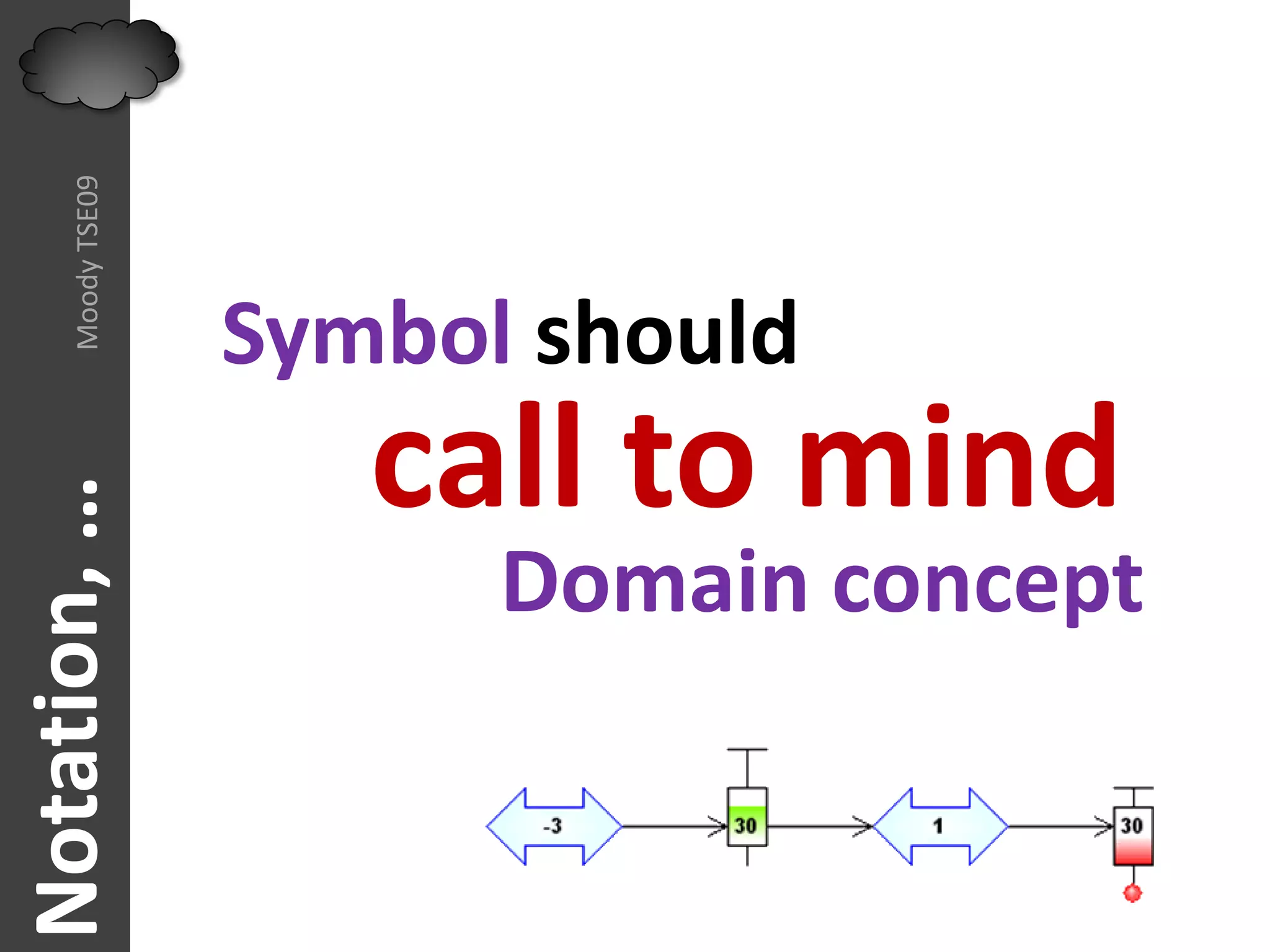

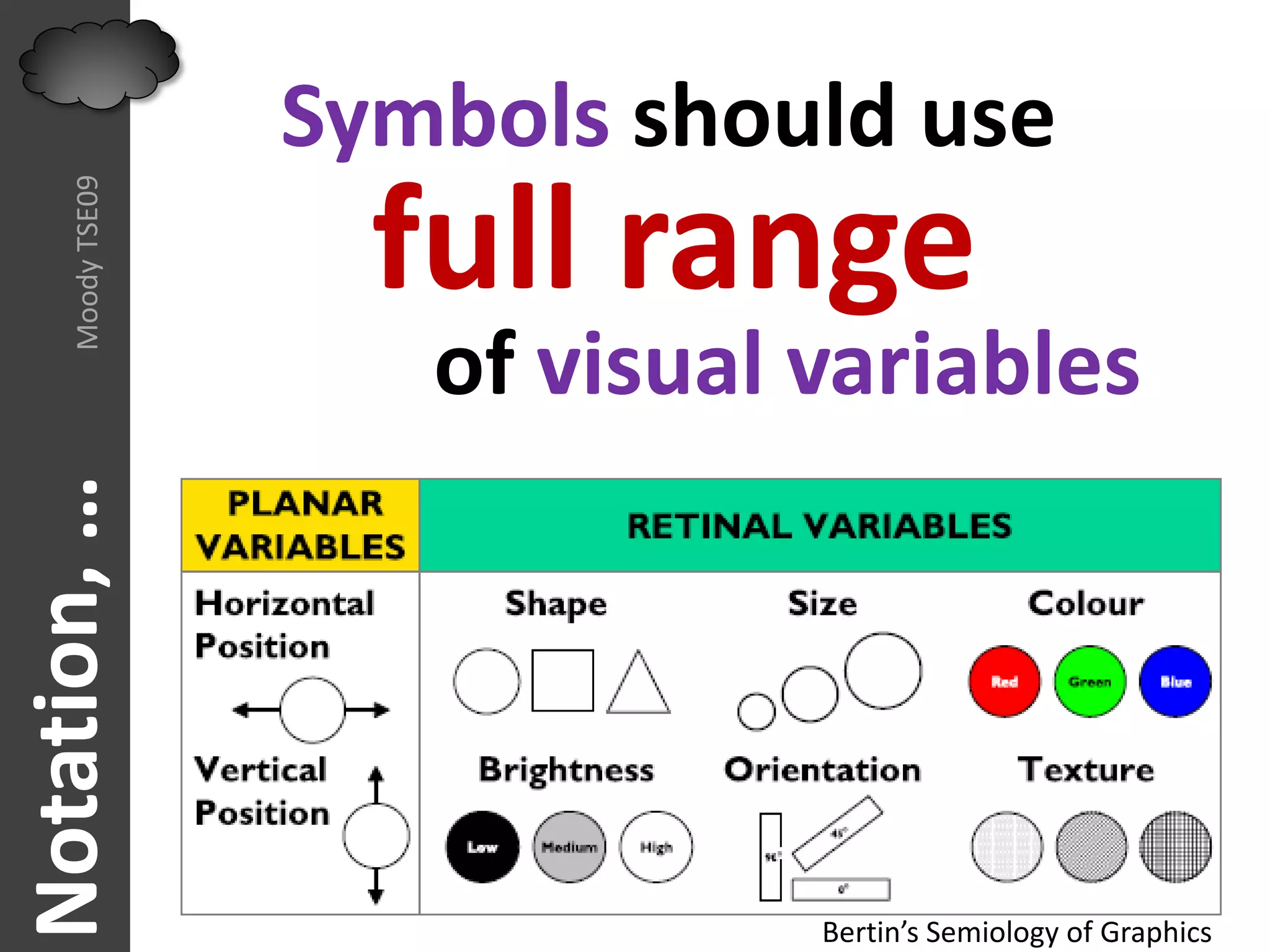

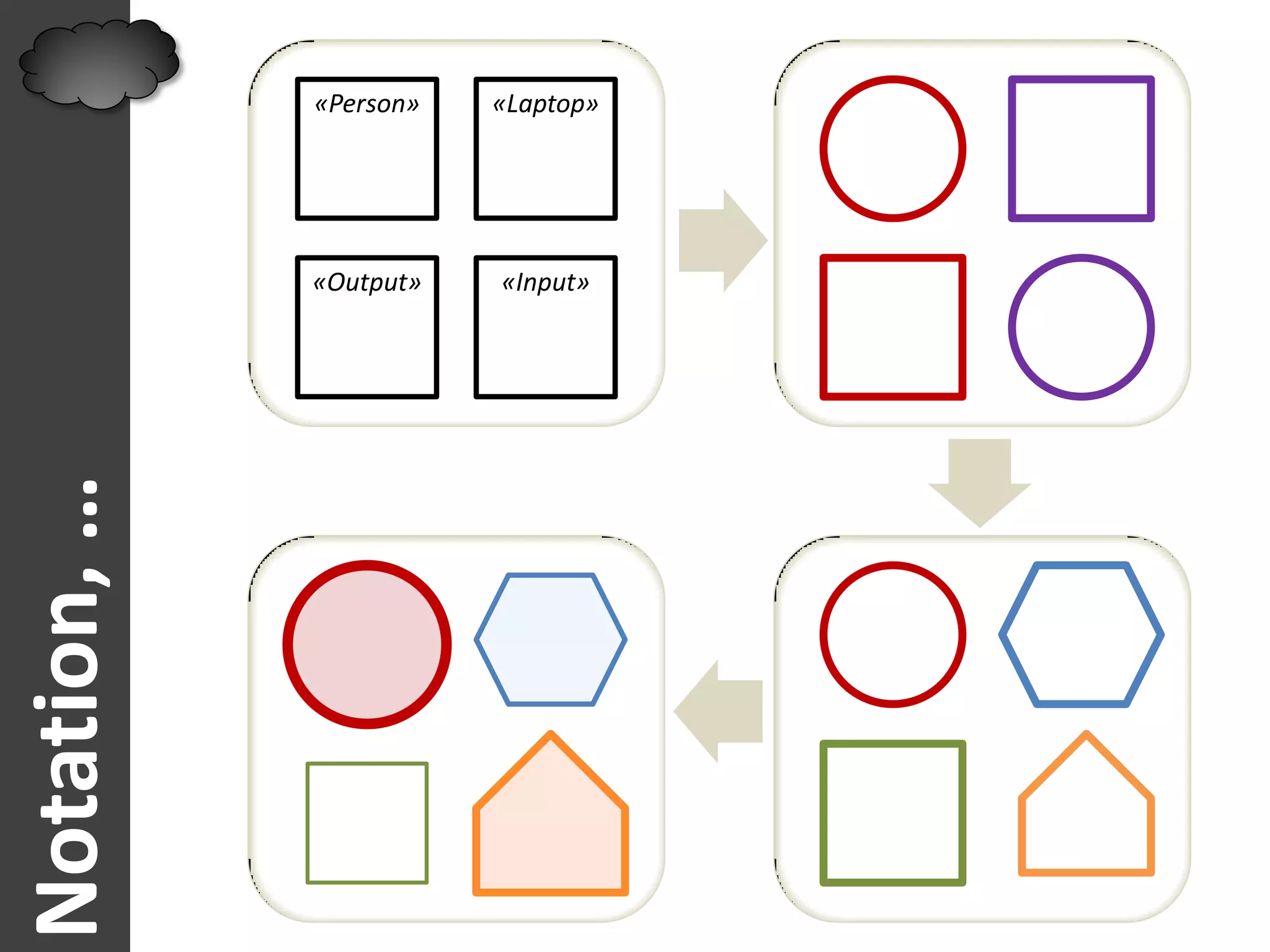

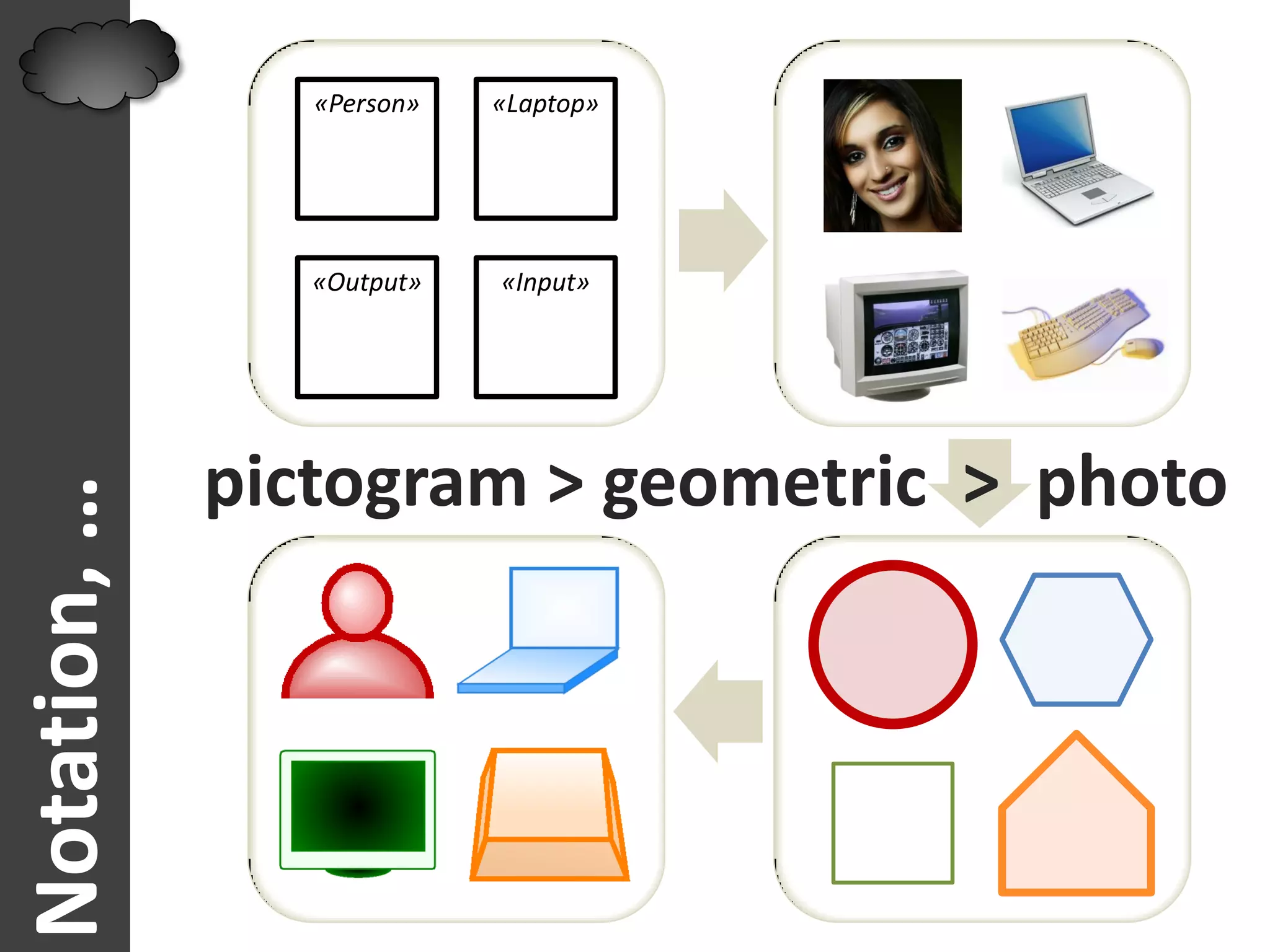

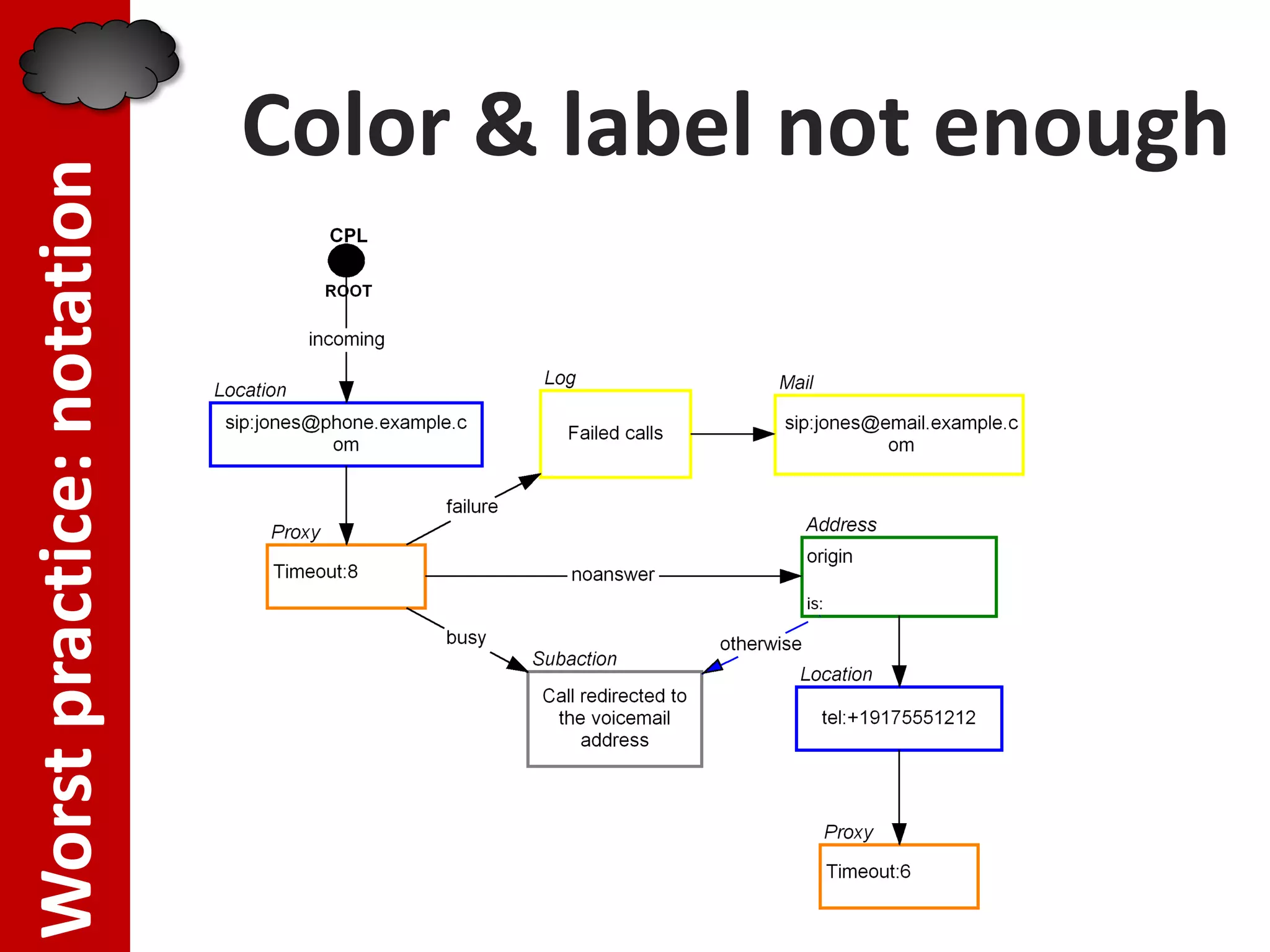

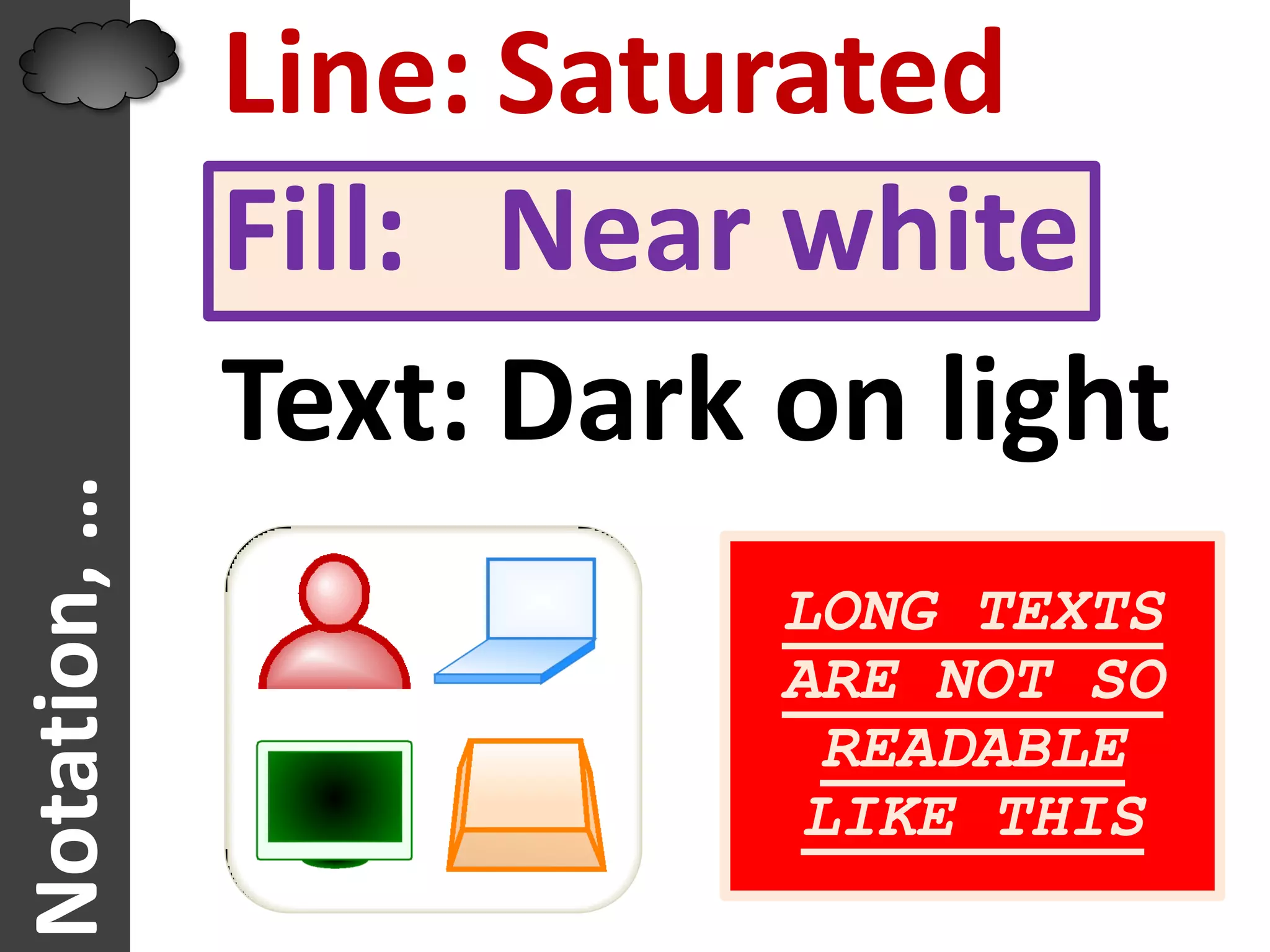

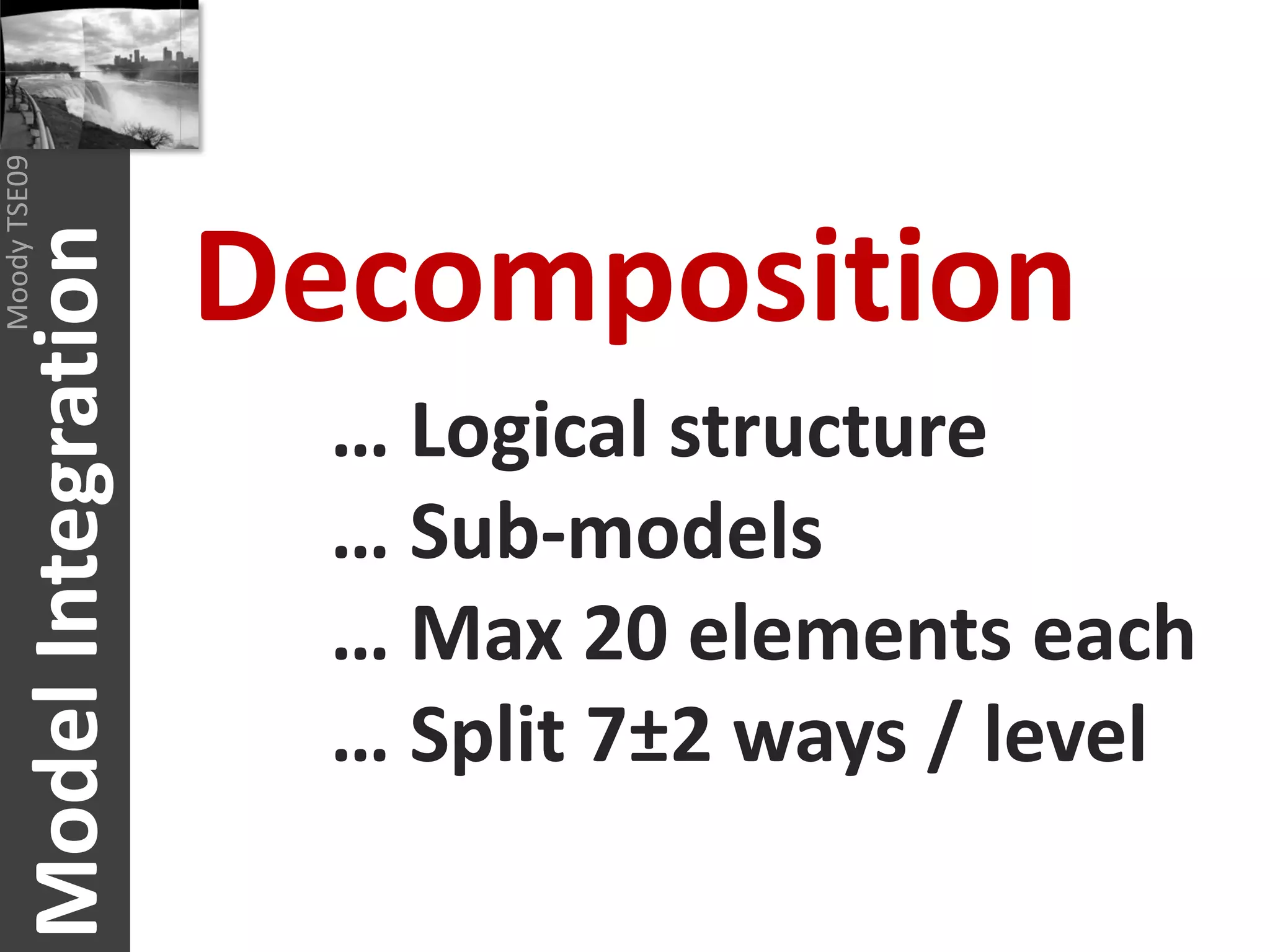

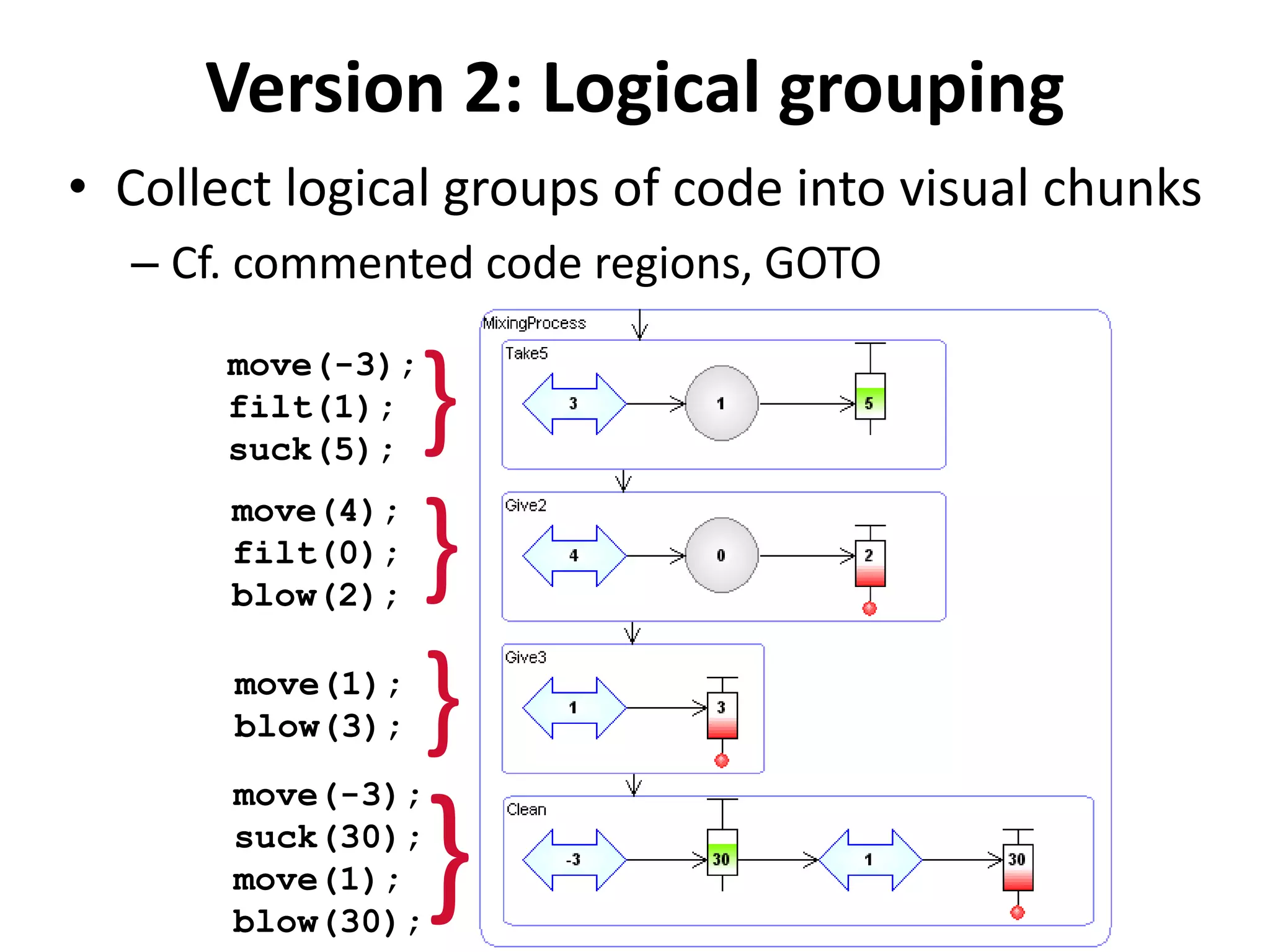

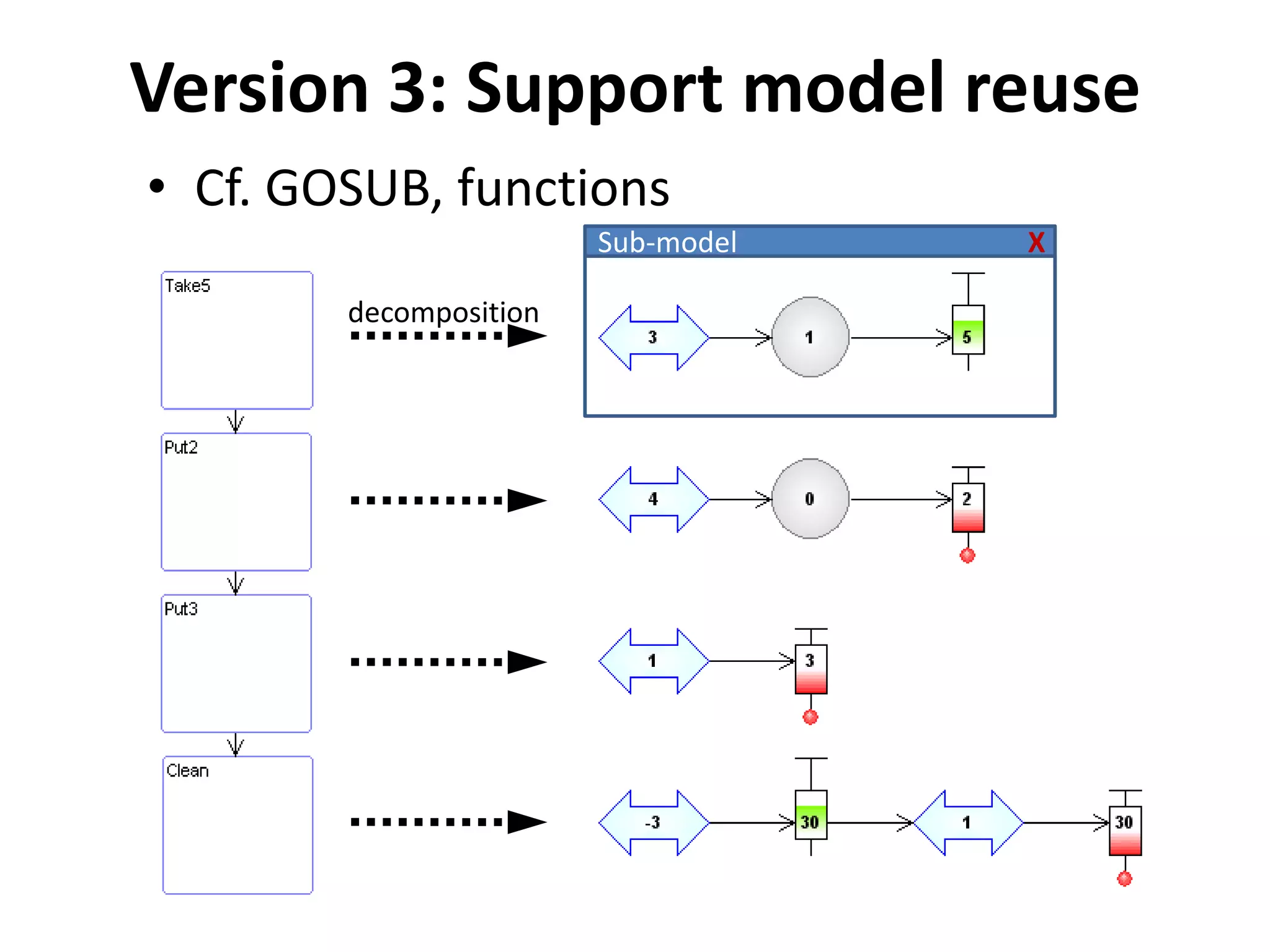

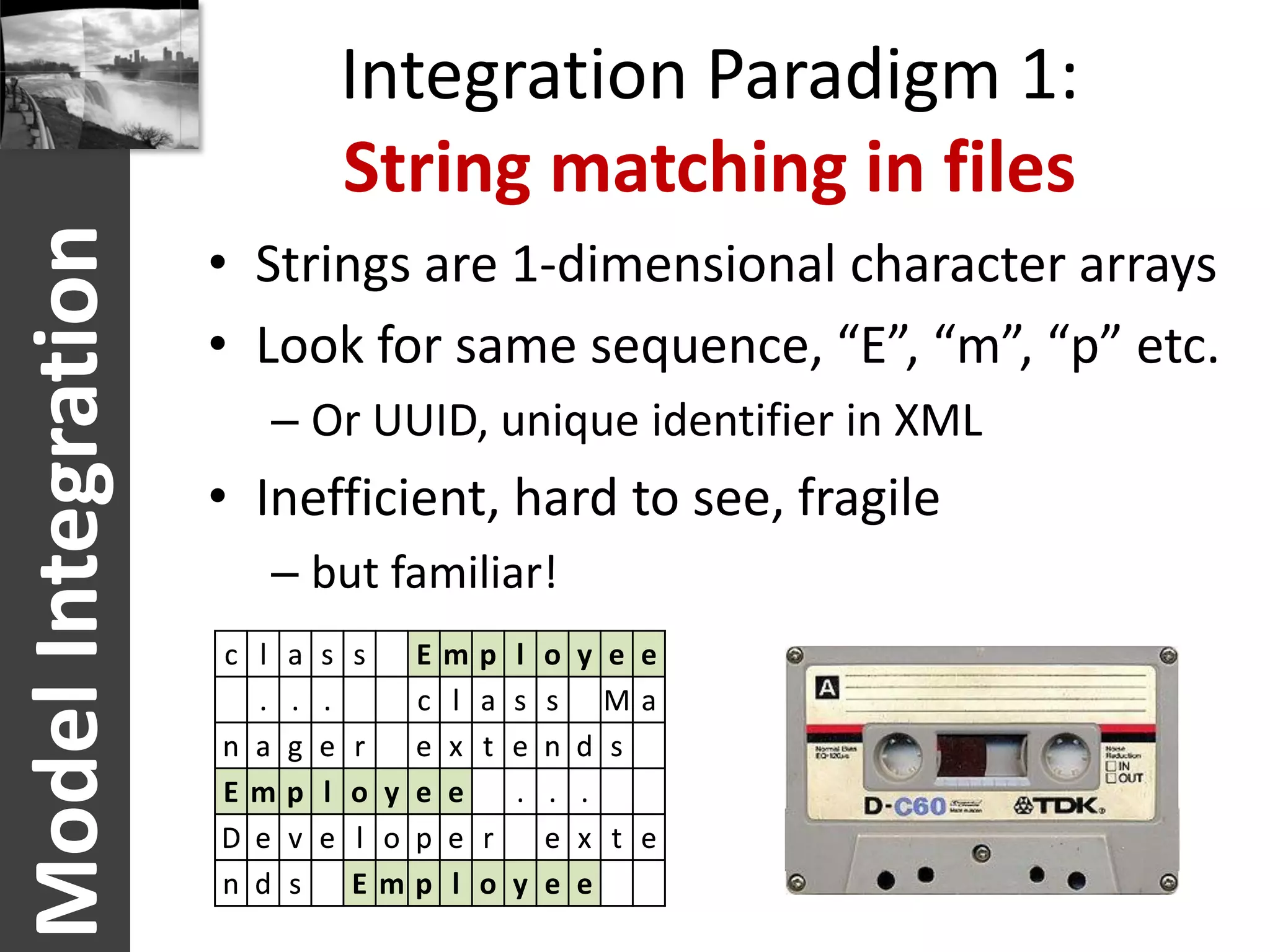

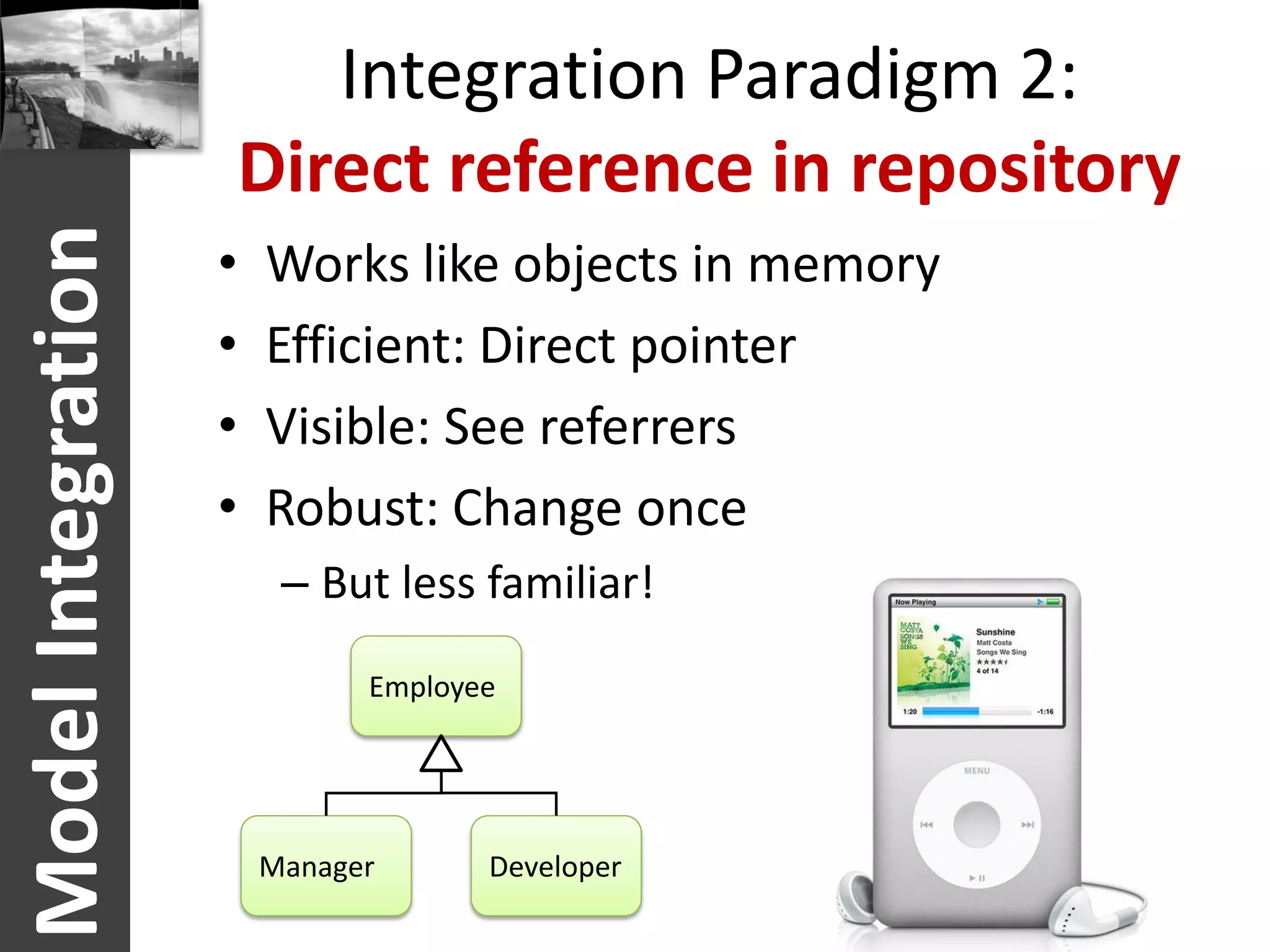

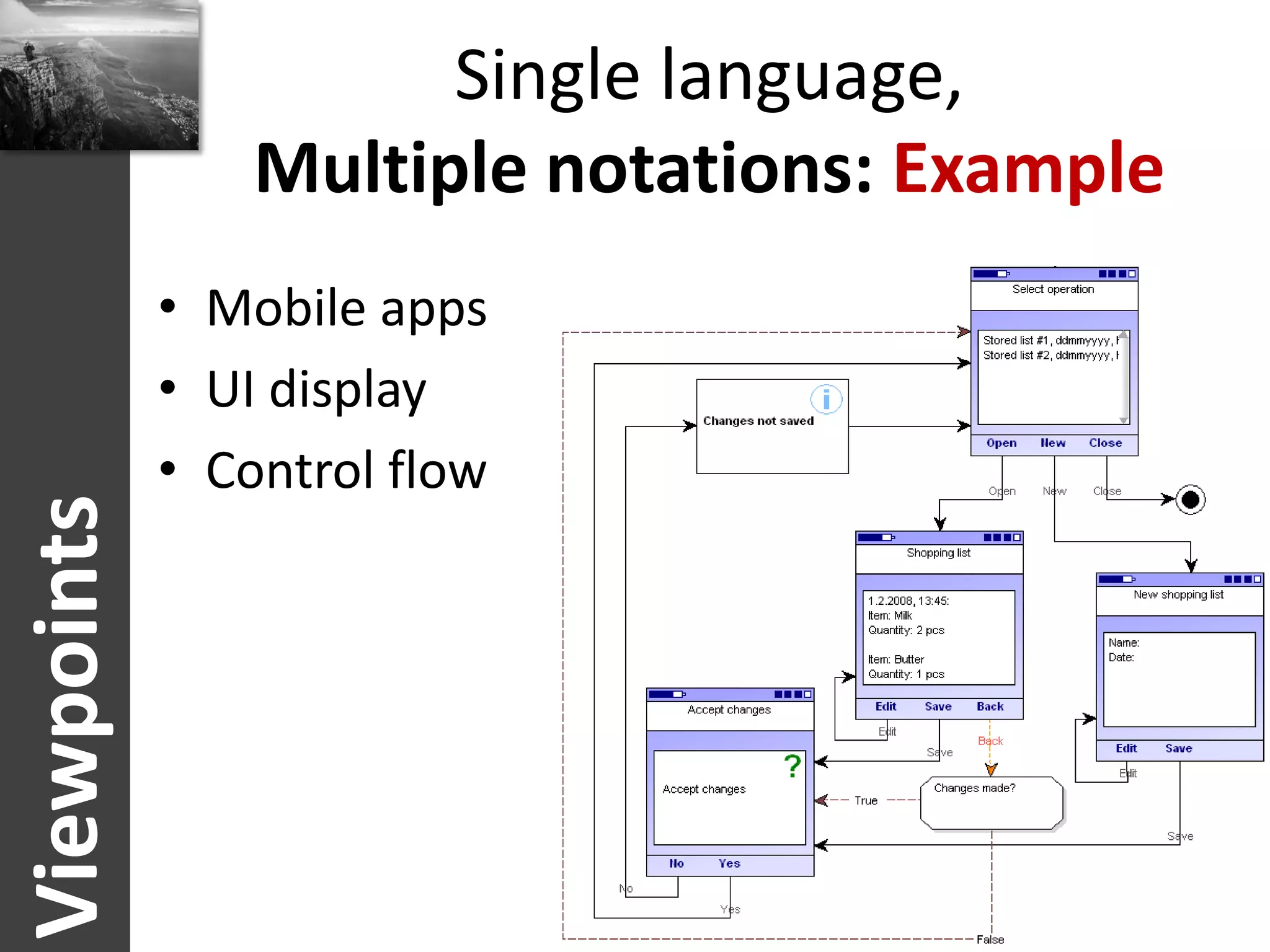

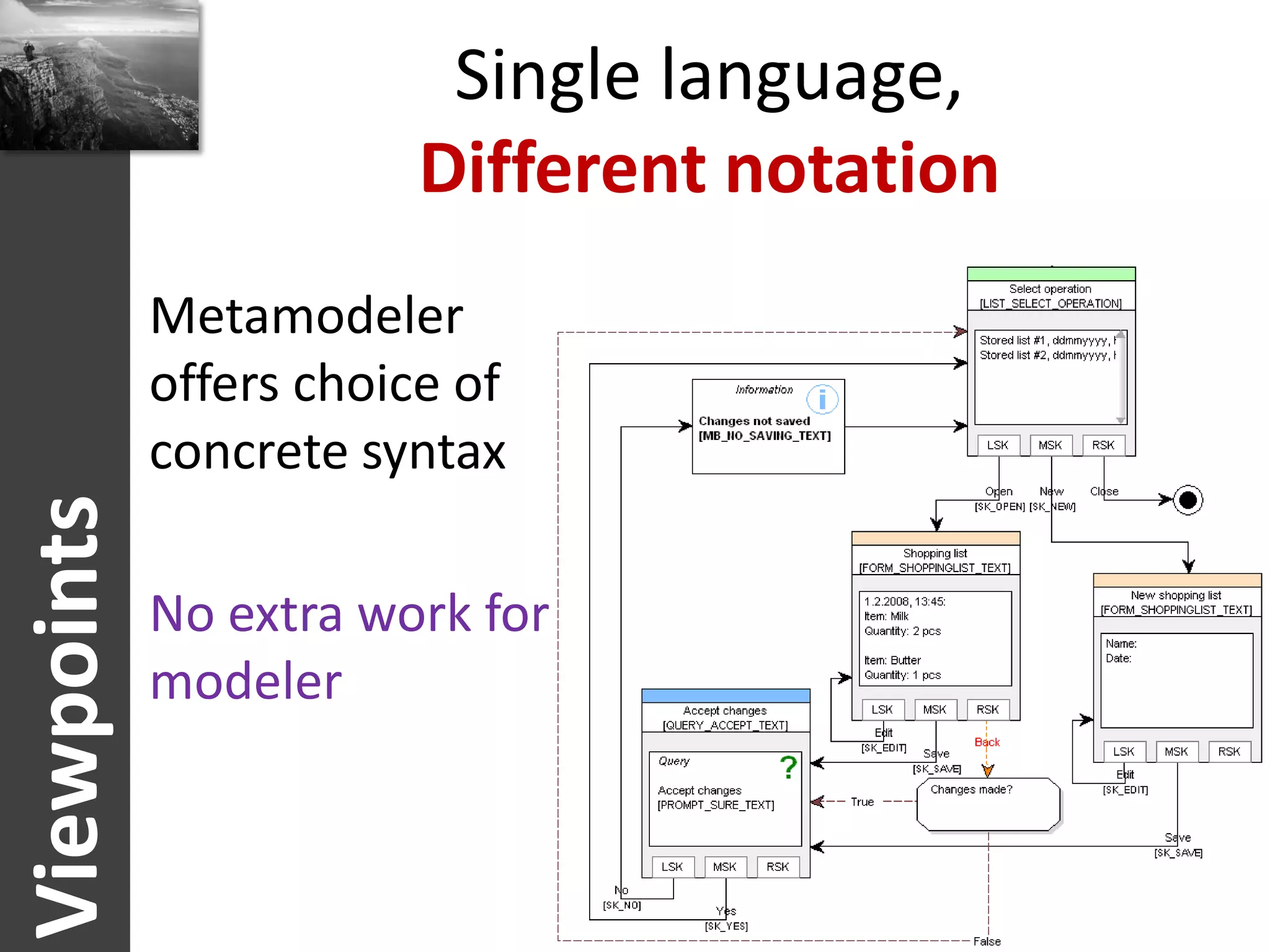

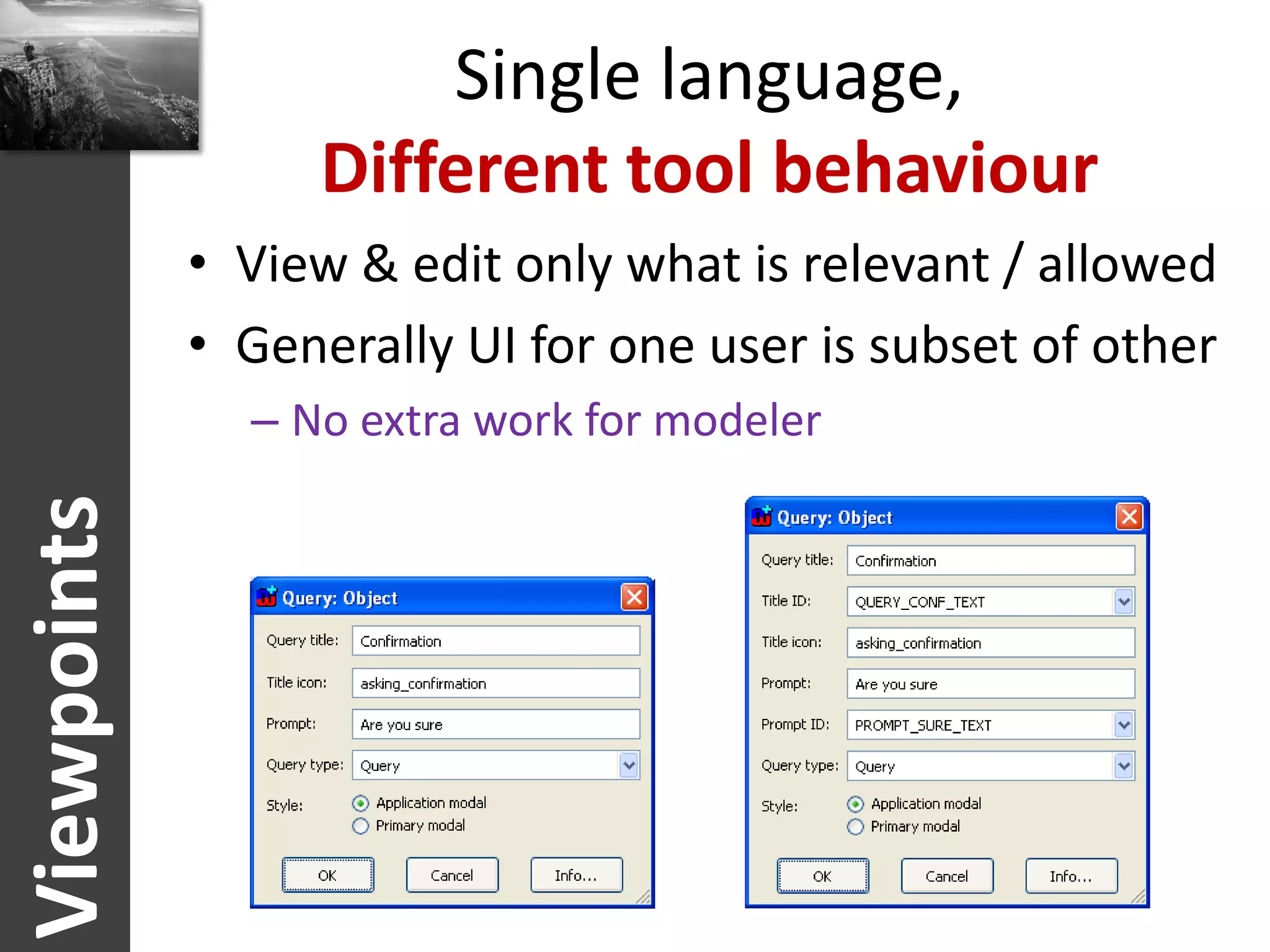

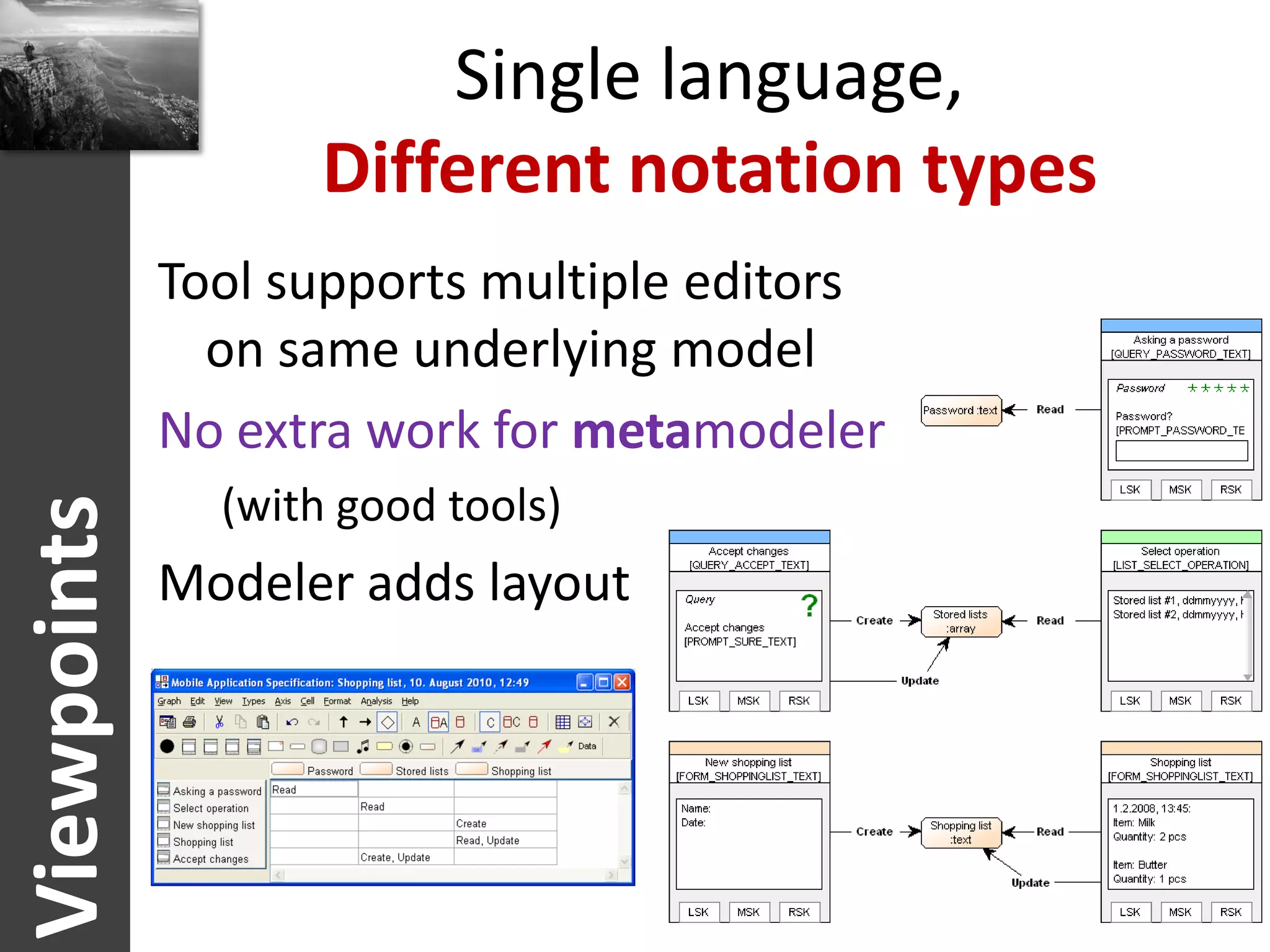

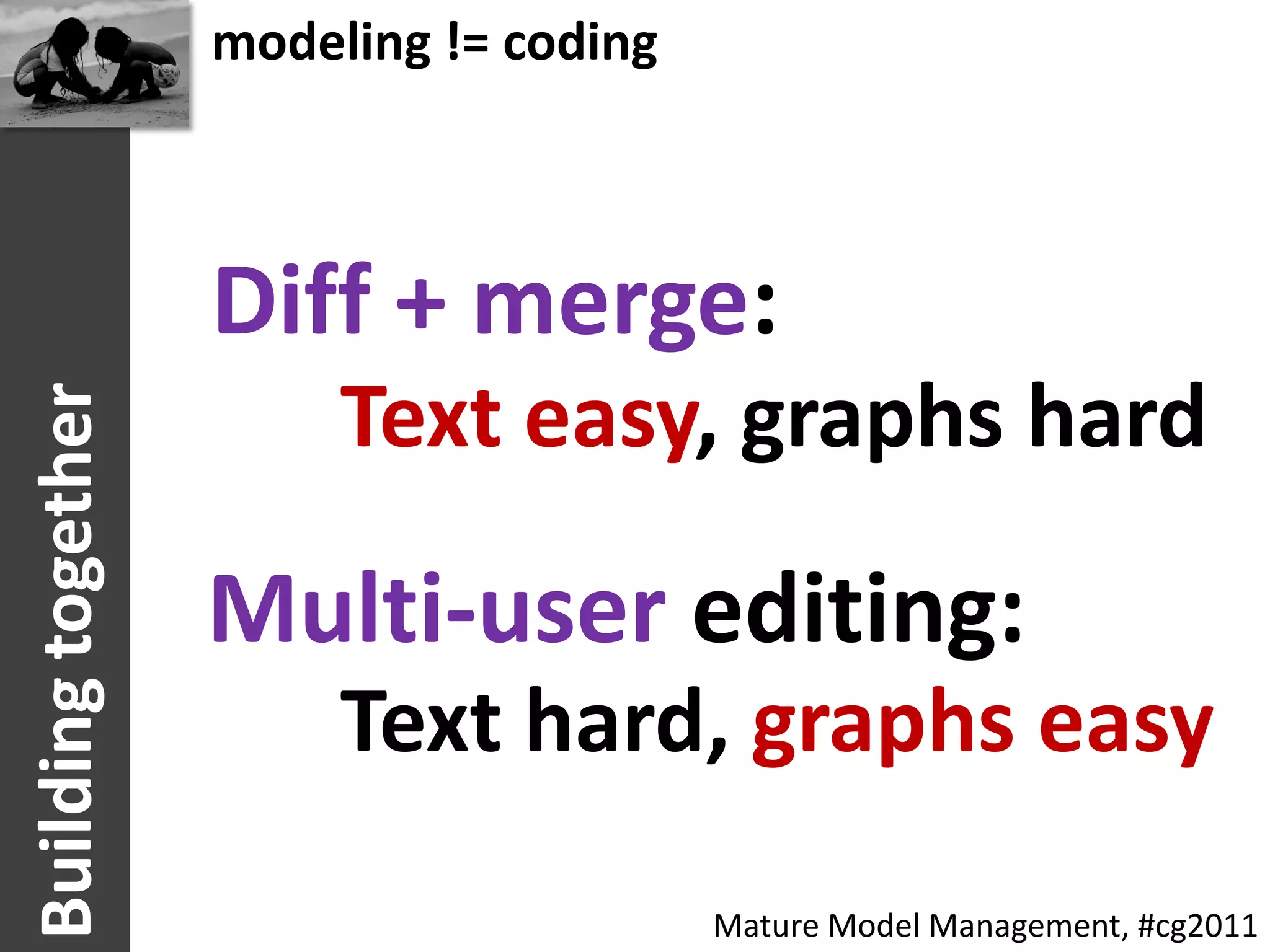

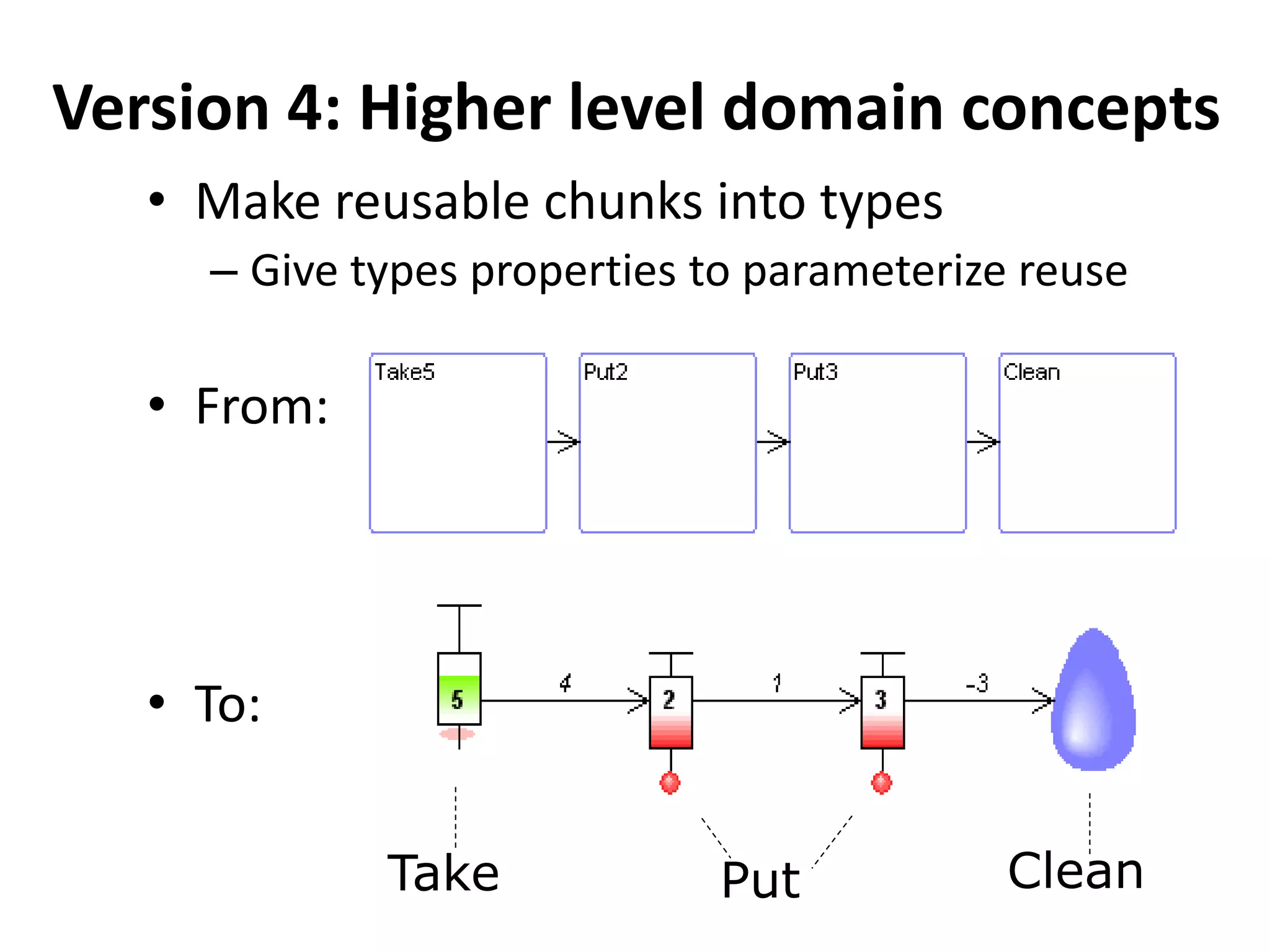

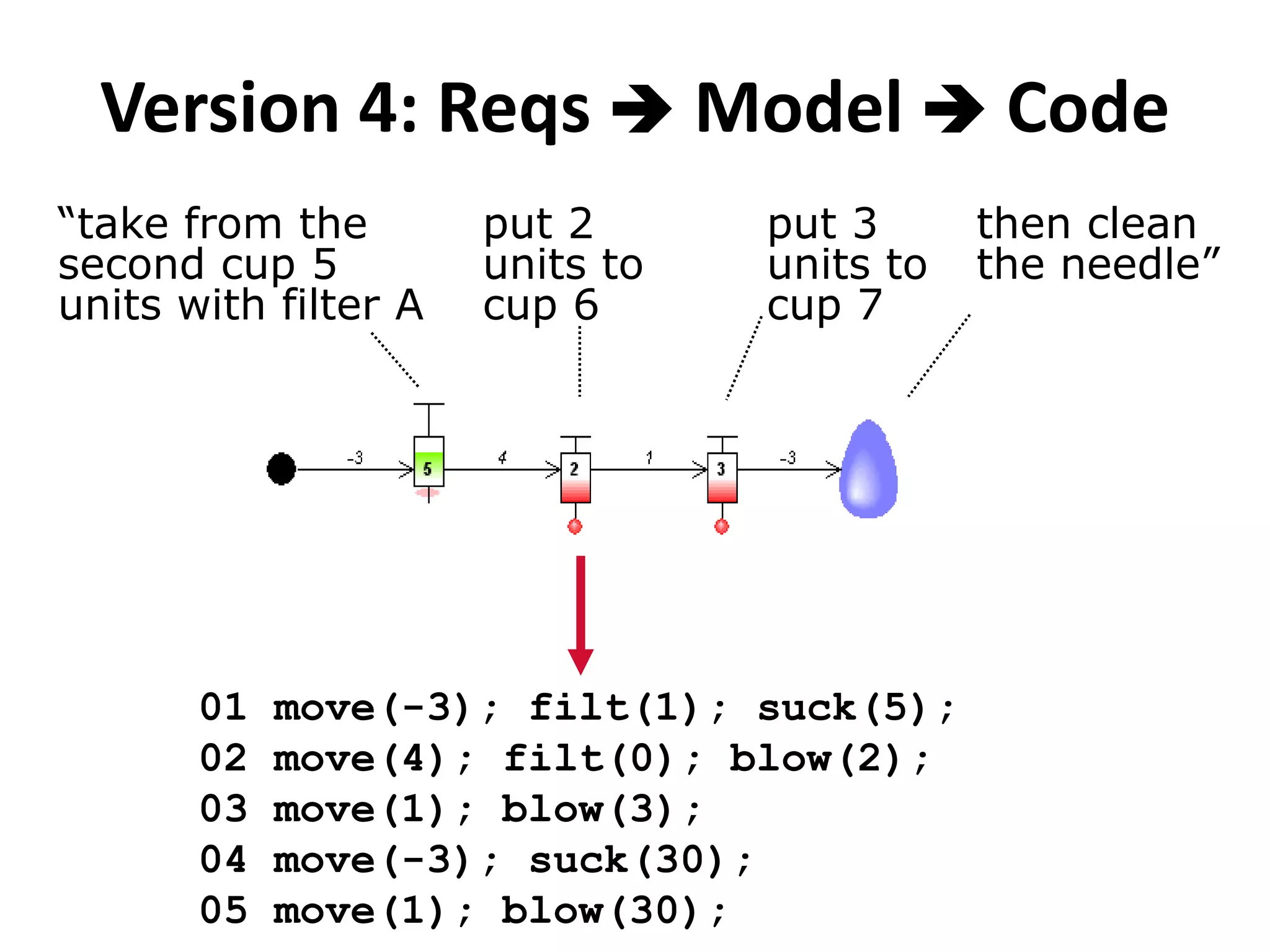

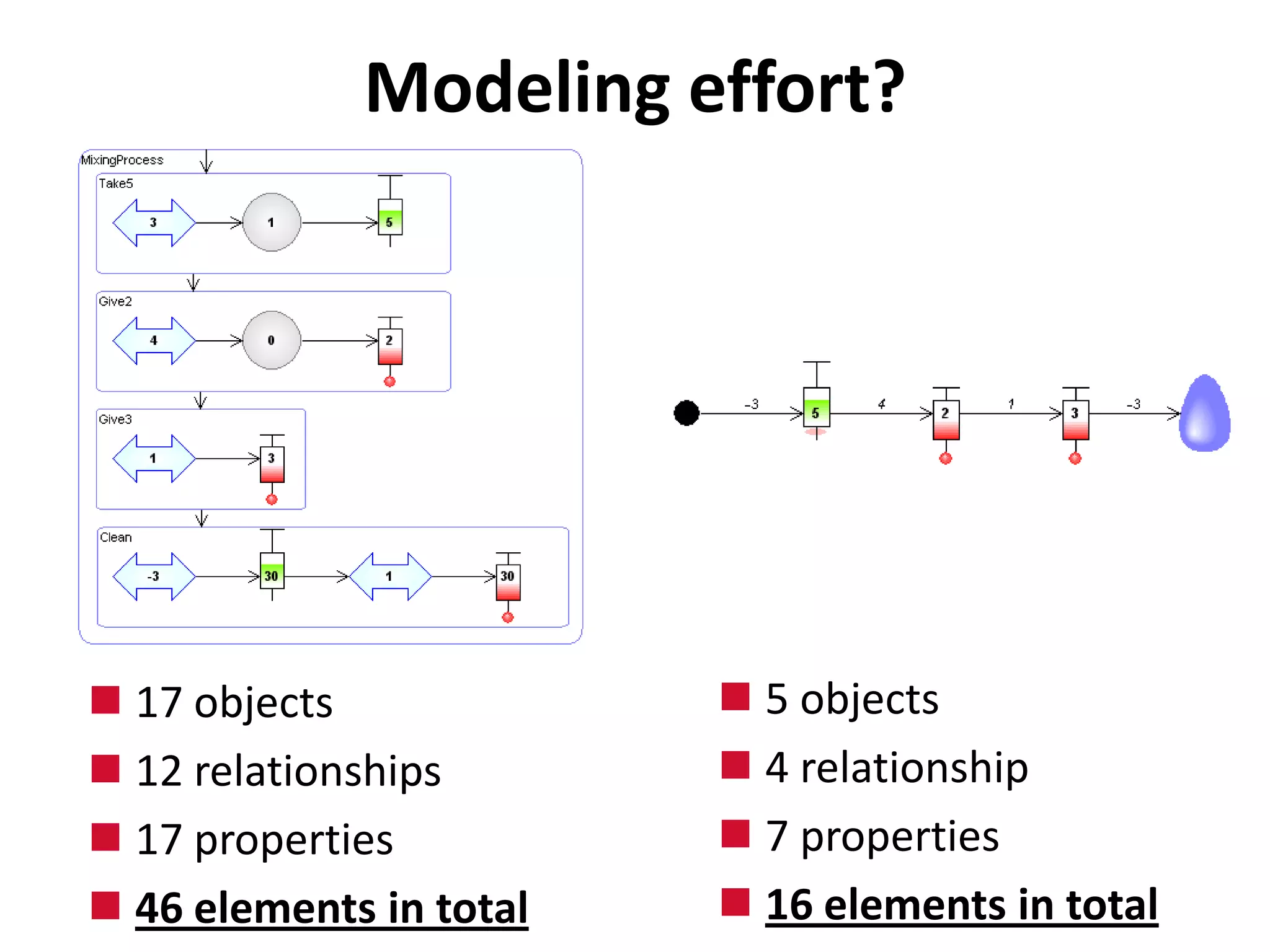

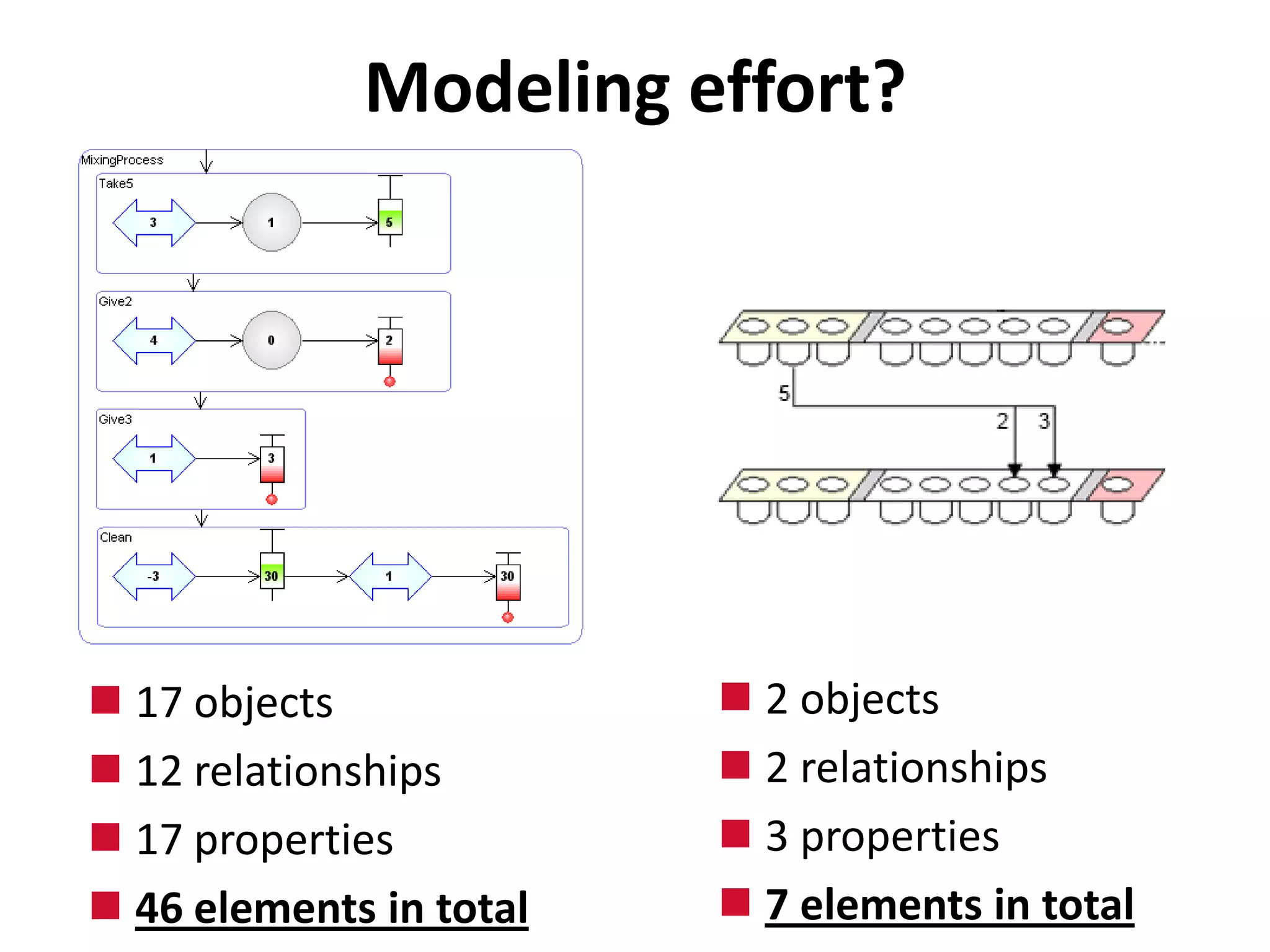

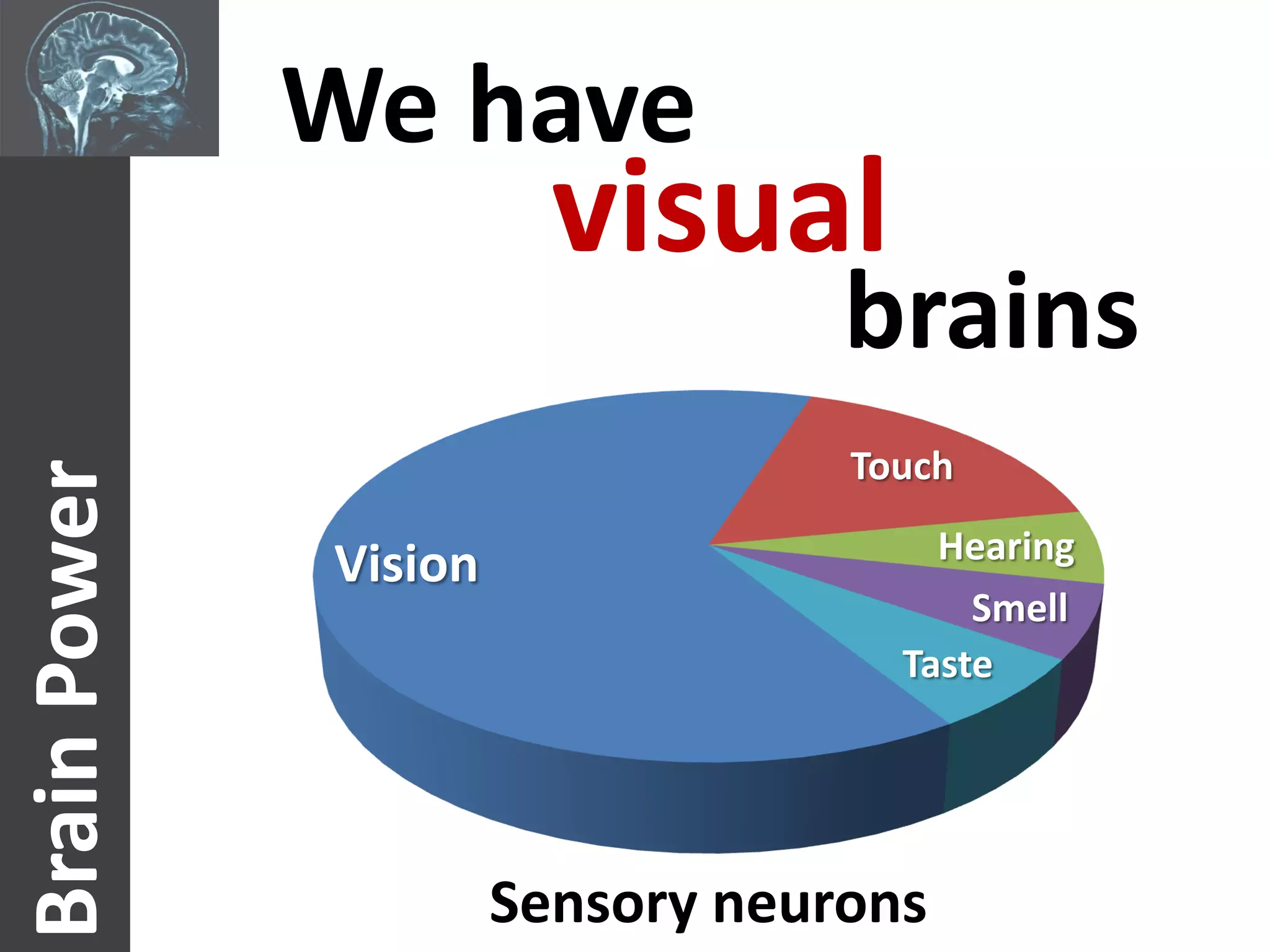

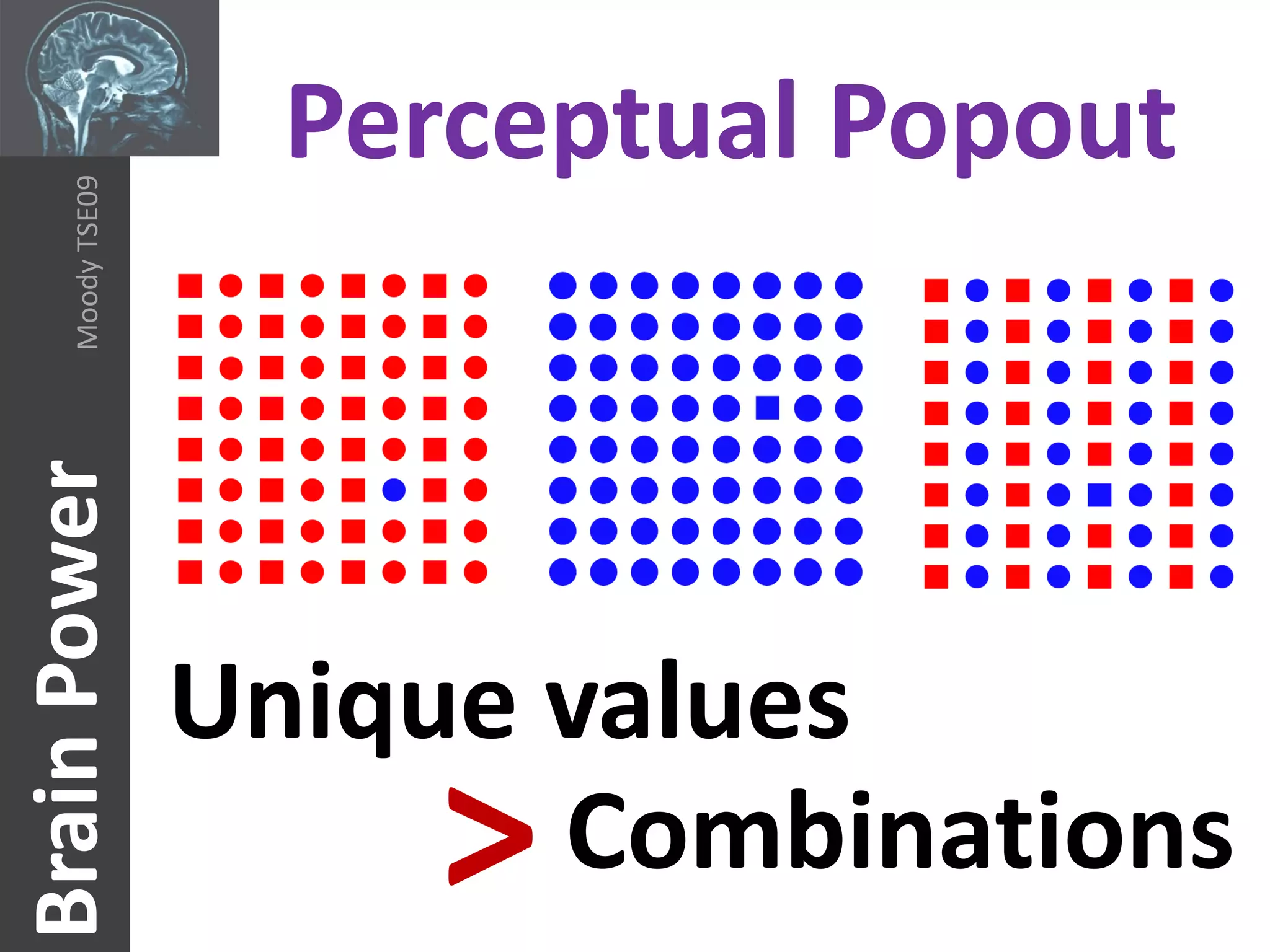

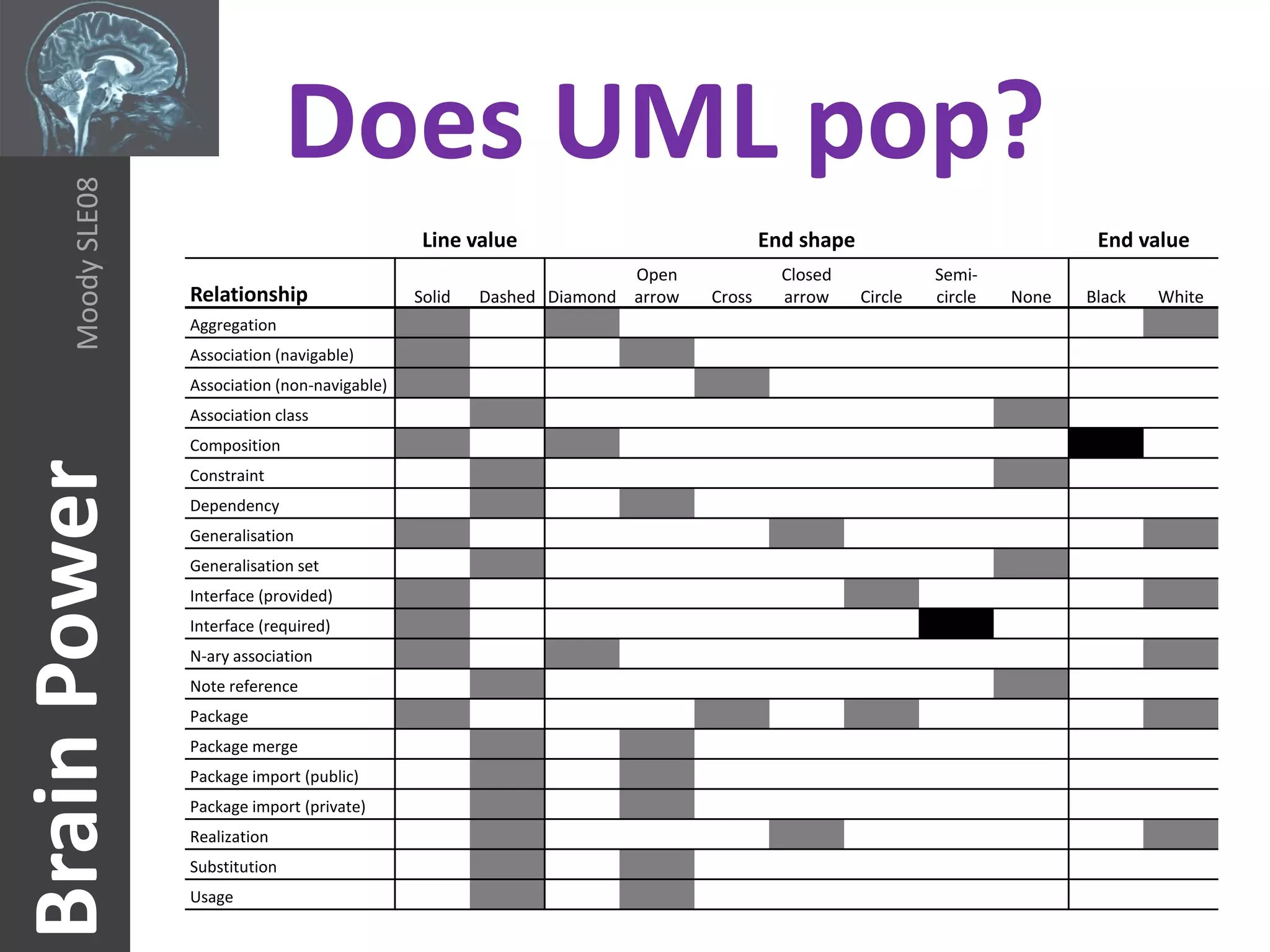

The document discusses how visual notations are constructed in software engineering, emphasizing that a notation's form influences cognitive effectiveness at least as much as its content. It outlines worst practices in domain-specific modeling, such as choosing an inappropriate notation type and neglecting user comprehension. It also highlights the role of visual representation and cognitive processing in modeling tasks.

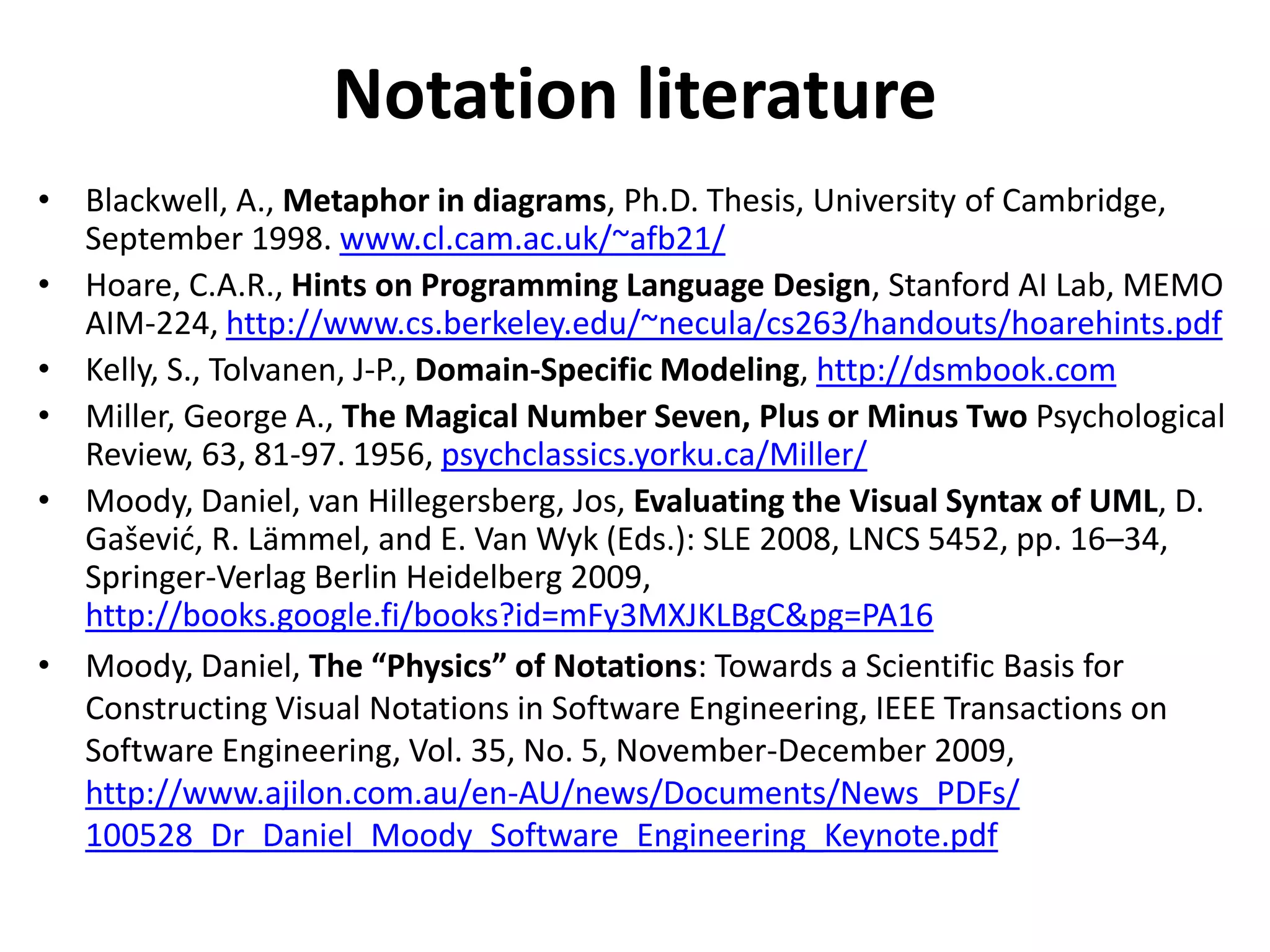

![Daniel Moody, The “Physics” of Notations: Towards a Scientific Basis for Constructing Visual Notations in Software Engineering, IEEE Transactions on Software Engineering, Vol. 35, No. 5, November-December 2009

Best Practices

Worst practices

[1] Alexander, C.W., Notes On The Synthesis Of Form. 1970, Boston, US: Harvard University Press.

[2] Avison, D.E. and G. Fitzgerald, Information Systems Development: Methodologies, Techniques and Tools (3rd edition). 2003, Oxford, United Kingdom: Blackwell Scientific.

.......

[150] Zhang, J., The Nature of External Representations in Problem Solving. Cognitive Science, 1997. 21(2): p. 179-217.

[151] Zhang, J. and D.A. Norman, Representations in Distributed Cognitive Tasks. Cognitive Science, 1994.](https://image.slidesharecdn.com/concretesyntaxmatters-120430093204-phpapp02/75/Concrete-syntax-matters-2-2048.jpg)

![Moody TSE09

Form ≥ Content

Brain Power

“research in diagrammatic reasoning shows that the form of representations has an equal, if not greater, influence on cognitive effectiveness as their content [68, 122, 151].”](https://image.slidesharecdn.com/concretesyntaxmatters-120430093204-phpapp02/75/Concrete-syntax-matters-19-2048.jpg)

![Moody TSE09

Concrete syntax ≥ Abstract syntax

Brain Power

“apparently minor changes in visual appearance can have dramatic impacts on understanding and problem solving performance [19, 68, 103, 122]... especially by novices [53, 56, 57, 79, 93, 106, 107].”](https://image.slidesharecdn.com/concretesyntaxmatters-120430093204-phpapp02/75/Concrete-syntax-matters-20-2048.jpg)