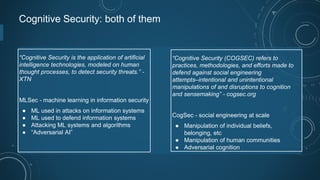

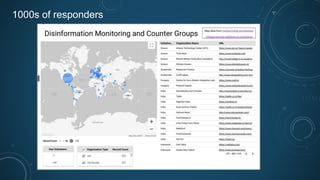

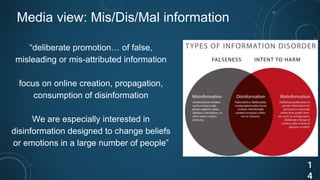

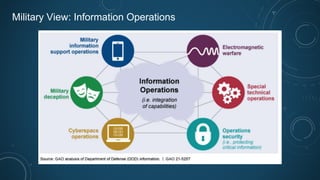

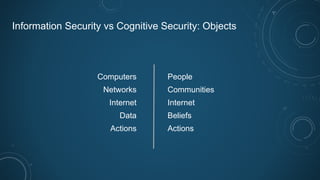

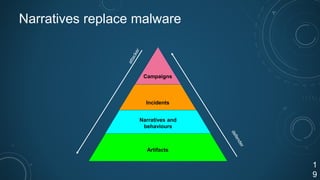

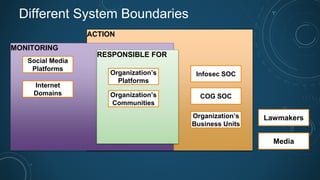

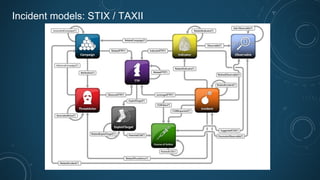

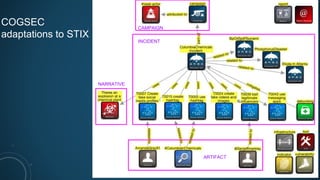

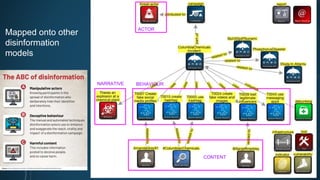

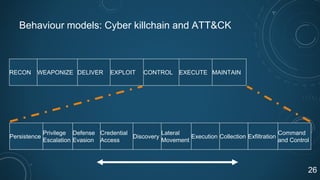

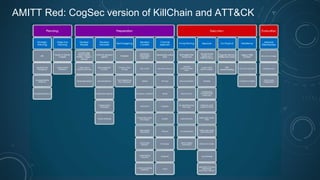

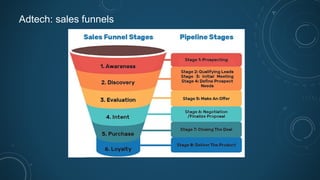

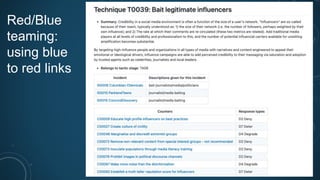

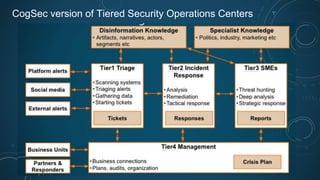

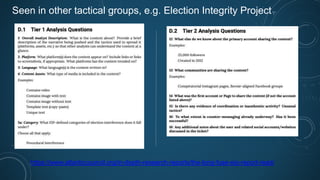

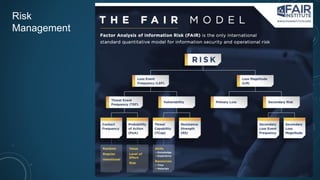

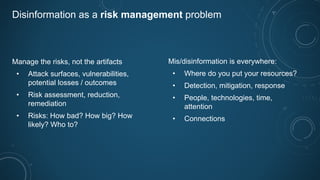

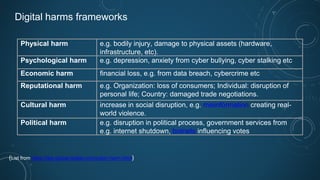

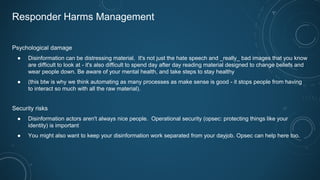

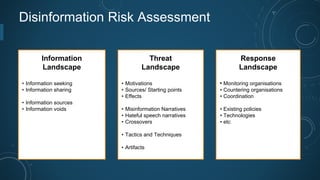

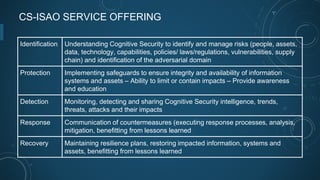

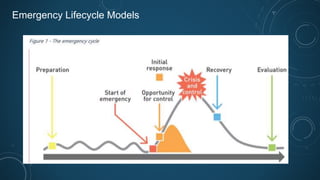

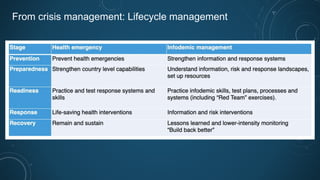

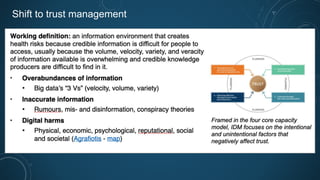

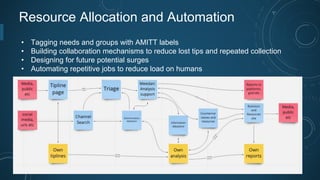

This document discusses cognitive security: defending against intentional and unintentional manipulation of cognition and sensemaking at scale. It covers the components of disinformation campaigns, including actors, channels, influencers, groups, messaging, and tools. It presents frameworks for analyzing disinformation incidents that adapt concepts from information security, such as the cyber kill chain. It discusses response strategies drawn from fields such as information operations, crisis management, and risk management, and it emphasizes the need for a common language and for ongoing monitoring and evaluation.