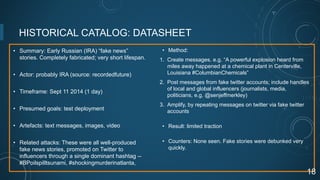

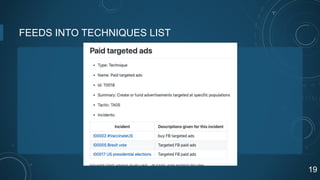

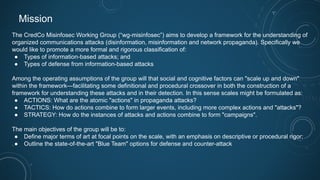

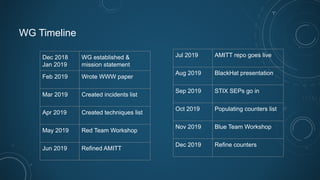

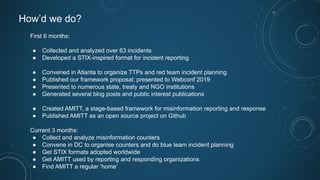

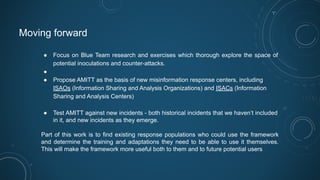

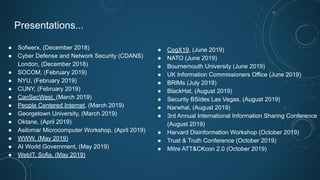

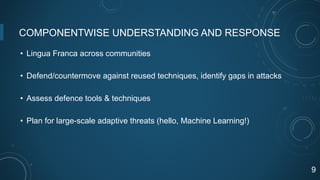

The CredCo Misinfosec Working Group aims to create a comprehensive framework for understanding organized communications attacks, including disinformation. In its first six months, the group analyzed more than 63 incidents, developed incident reporting formats, and presented its framework at multiple conferences. Current goals include refining the misinformation response framework, promoting its adoption, and establishing it as a foundational tool for combating misinformation globally.

![Combining different views of misinformation](https://image.slidesharecdn.com/wg-misinfosecreportouttocredco-220525133035-0e114405/85/WG-misinfosec-report-out-to-CredCo-pdf-10-320.jpg)

COMBINING DIFFERENT VIEWS OF MISINFORMATION

• Information security (Gordon, Grugq, Rogers)

• Information operations / influence operations (Lin)

• A form of conflict (Singer, Gerasimov)

• [A social problem]

• [News source pollution]