This document provides a comprehensive overview of data cleaning and exploration techniques using Python, OpenRefine, and R. It covers methods for ensuring data consistency, formatting, and handling outliers, as well as tools like pandas and seaborn for data analysis and visualization. Practical coding examples are included to demonstrate the implementation of these techniques.

![Regular Expressions: junk

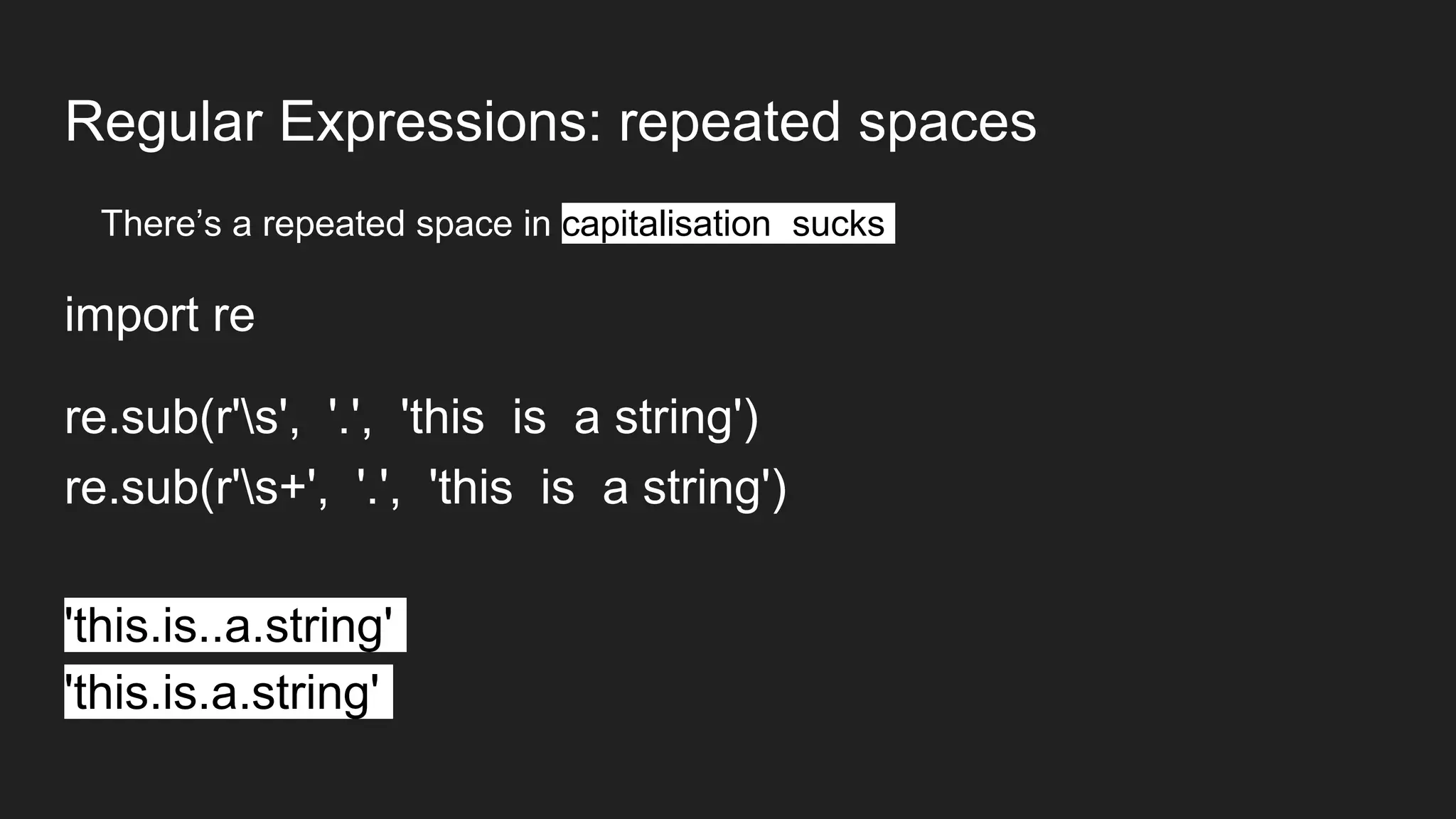

import re

string1 = "This is a! sentence&& with junk!@"

cleanstring1 = re.sub(r'[^\w ]', '', string1)

This is a sentence with junk](https://image.slidesharecdn.com/session05cleaningandexploring-160510160255/75/Session-05-cleaning-and-exploring-8-2048.jpg)
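The slide's substitution can be run as a short script. This is a minimal sketch: `cleanstring2` and the whitespace-collapsing step are additions for illustration and are not part of the original slide.

```python
import re

string1 = "This is a! sentence&& with junk!@"

# Keep word characters (letters, digits, underscore) and spaces; drop everything else.
cleanstring1 = re.sub(r"[^\w ]", "", string1)

# Hypothetical extra step: collapse any runs of spaces left behind and trim the ends.
cleanstring2 = re.sub(r" +", " ", cleanstring1).strip()

print(cleanstring1)
```

Note that `\w` also matches digits and underscores, so those survive the cleaning; a narrower character class such as `[^A-Za-z ]` would be needed to remove them too.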

![Eyeballing rows

How many rows are there in this dataset?

len(df)

What do my data rows look like?

df.head(5)

df.tail()

df[10:20]](https://image.slidesharecdn.com/session05cleaningandexploring-160510160255/75/Session-05-cleaning-and-exploring-22-2048.jpg)
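To try these row checks without the original survey file, a small made-up DataFrame is enough. The data below is hypothetical; only the column names `sourceid` and `ag12a_01` come from the slides.

```python
import pandas as pd

# Hypothetical stand-in for the survey data; the values are invented.
df = pd.DataFrame({
    "sourceid": list(range(100, 125)),
    "ag12a_01": ["YES", "NO", "YES", "NO", "YES"] * 5,
})

n_rows = len(df)      # how many rows are there?
first5 = df.head(5)   # first five rows
last5 = df.tail()     # last five rows (tail defaults to n=5)
middle = df[10:20]    # positional slice: rows 10 through 19

print(n_rows)
print(middle)
```

Slicing with `df[10:20]` works by position and excludes the end point, so it returns ten rows.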

![Eyeballing columns

What’s in these columns?

df['sourceid']

df[['sourceid', 'ag12a_01', 'ag12a_02_2']]

What’s in the columns when these are true?

df[df.ag12a_01 == 'YES']

df[(df.ag12a_01 == 'YES') & (df.ag12a_02_1 == 'NO')]](https://image.slidesharecdn.com/session05cleaningandexploring-160510160255/75/Session-05-cleaning-and-exploring-23-2048.jpg)
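A sketch of the same column selection and boolean filtering on invented data. The values are hypothetical, and bracket access (`df["ag12a_01"]`) is used in place of the slide's attribute access, which behaves the same way for these column names.

```python
import pandas as pd

# Hypothetical data; only the column names are taken from the slides.
df = pd.DataFrame({
    "sourceid": [1, 2, 3, 4],
    "ag12a_01": ["YES", "NO", "YES", "YES"],
    "ag12a_02_1": ["NO", "NO", "YES", "NO"],
})

one_col = df["sourceid"]                  # a single column comes back as a Series
two_cols = df[["sourceid", "ag12a_01"]]   # a list of names gives a DataFrame

# Rows where one condition holds.
yes_rows = df[df["ag12a_01"] == "YES"]

# Rows where both conditions hold; each comparison needs its own parentheses
# because & binds more tightly than ==.
yes_no = df[(df["ag12a_01"] == "YES") & (df["ag12a_02_1"] == "NO")]

print(yes_no)
```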

![Summarising columns

What are my column names and types?

df.columns

df.dtypes

Which labels do I have in this column?

df['ag12a_03'].unique()

df['ag12a_03'].value_counts()](https://image.slidesharecdn.com/session05cleaningandexploring-160510160255/75/Session-05-cleaning-and-exploring-24-2048.jpg)
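The column summaries can be tried on a toy frame. The crop labels below are invented for illustration and are not taken from the original dataset.

```python
import pandas as pd

# Hypothetical data; the labels in ag12a_03 are made up.
df = pd.DataFrame({
    "sourceid": [1, 2, 3, 4, 5],
    "ag12a_03": ["maize", "rice", "maize", "beans", "maize"],
})

names = df.columns                       # column names
types = df.dtypes                        # one dtype per column
labels = df["ag12a_03"].unique()         # distinct labels, in order of first appearance
counts = df["ag12a_03"].value_counts()   # how often each label occurs, most frequent first

print(counts)
```

`value_counts()` skips missing values by default; passing `dropna=False` includes them in the tally, which is often useful when cleaning.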

![Pivot Tables: Combining data from one dataframe

pd.pivot_table(df, index=['sourceid', 'ag12a_03'])](https://image.slidesharecdn.com/session05cleaningandexploring-160510160255/75/Session-05-cleaning-and-exploring-25-2048.jpg)
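A minimal sketch of what the pivot table produces, assuming a numeric column to aggregate. The `area` column and all values are hypothetical, and `values` and `aggfunc` are spelled out here so the behaviour does not depend on defaults.

```python
import pandas as pd

# Hypothetical data; "area" is an invented numeric column to aggregate.
df = pd.DataFrame({
    "sourceid": [1, 1, 1, 2, 2],
    "ag12a_03": ["maize", "maize", "rice", "maize", "rice"],
    "area": [1.0, 3.0, 2.0, 4.0, 6.0],
})

# One row per (sourceid, ag12a_03) pair, holding the mean of "area" for that pair.
table = pd.pivot_table(
    df,
    index=["sourceid", "ag12a_03"],
    values="area",
    aggfunc="mean",
)

print(table)
```

The two rows for source 1 and maize are combined into a single row, so the result has four rows instead of five.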

![Merge: Combining data from multiple frames

longnames = pd.DataFrame({ 'country' : pd.Series(['United States of America', 'Zaire', 'Egypt']),

'longname' : pd.Series([True, True, False])})

merged_data = pd.merge(

left=popstats,

right=longnames,

left_on='Country/territory of residence',

right_on='country')

merged_data[['Year', 'Country/territory of residence', 'longname', 'Total population', 'Origin / Returned from']]](https://image.slidesharecdn.com/session05cleaningandexploring-160510160255/75/Session-05-cleaning-and-exploring-26-2048.jpg)
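Since `popstats` is not defined on the slide, the sketch below uses a tiny invented version of it to show what the default merge does. All values in `popstats` are hypothetical.

```python
import pandas as pd

# Hypothetical stand-in for popstats; the numbers are invented.
popstats = pd.DataFrame({
    "Year": [2013, 2013, 2013],
    "Country/territory of residence": ["Egypt", "Kenya", "United States of America"],
    "Total population": [10, 20, 30],
})

longnames = pd.DataFrame({
    "country": ["United States of America", "Zaire", "Egypt"],
    "longname": [True, True, False],
})

# With no "how" argument, pd.merge performs an inner join:
# only keys present in both frames survive.
merged_data = pd.merge(
    left=popstats,
    right=longnames,
    left_on="Country/territory of residence",
    right_on="country",
)

print(merged_data[["Year", "Country/territory of residence", "longname"]])
```

Kenya (only in the left frame) and Zaire (only in the right frame) both drop out of the result.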

![Left Joins: Keep everything from the left table…

longnames = pd.DataFrame({ 'country' : pd.Series(['United States of America', 'Zaire', 'Egypt']),

'longname' : pd.Series([True, True, False])})

merged_data = pd.merge(

left=popstats,

right=longnames,

how='left',

left_on='Country/territory of residence',

right_on='country')

merged_data[['Year', 'Country/territory of residence', 'longname', 'Total population', 'Origin / Returned from']]](https://image.slidesharecdn.com/session05cleaningandexploring-160510160255/75/Session-05-cleaning-and-exploring-27-2048.jpg)
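A sketch of the left join on invented data. The `popstats` values are hypothetical, and the `indicator=True` argument is an addition for illustration that does not appear on the slide.

```python
import pandas as pd

# Hypothetical stand-in for popstats; the numbers are invented.
popstats = pd.DataFrame({
    "Year": [2013, 2013, 2013],
    "Country/territory of residence": ["Egypt", "Kenya", "United States of America"],
    "Total population": [10, 20, 30],
})

longnames = pd.DataFrame({
    "country": ["United States of America", "Zaire", "Egypt"],
    "longname": [True, True, False],
})

# how="left" keeps every row of popstats; rows with no match get NaN in the
# columns that come from longnames. indicator=True adds a "_merge" column
# recording where each row came from.
merged_data = pd.merge(
    left=popstats,
    right=longnames,
    how="left",
    left_on="Country/territory of residence",
    right_on="country",
    indicator=True,
)

unmatched = merged_data[merged_data["_merge"] == "left_only"]
print(unmatched)
```

Filtering on `_merge == "left_only"` is one way to list the keys that failed to match, which often points to spelling differences worth cleaning.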