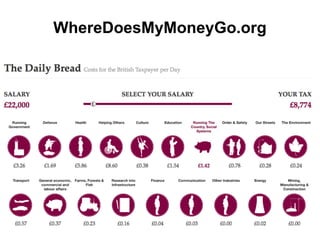

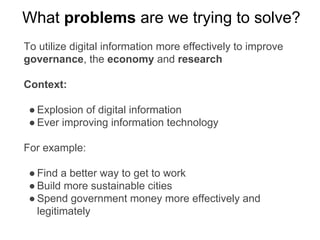

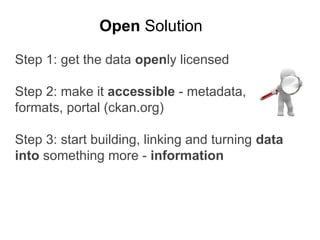

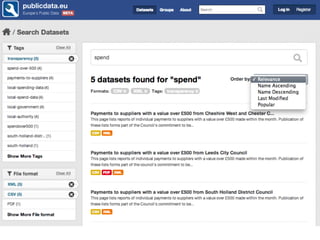

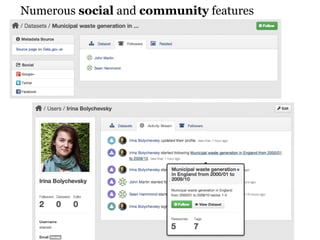

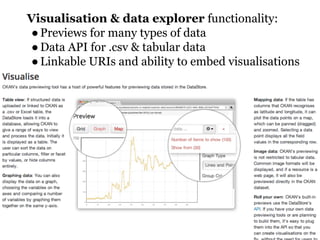

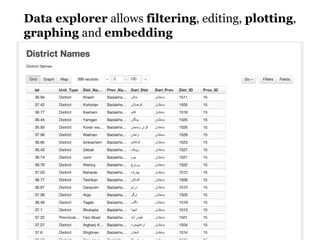

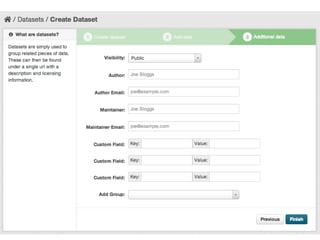

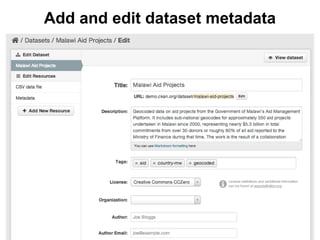

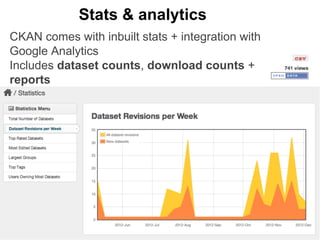

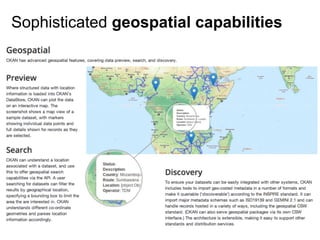

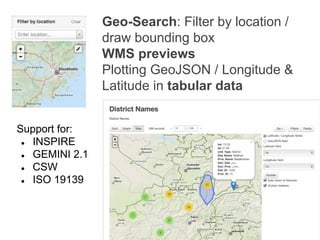

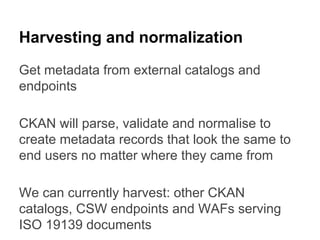

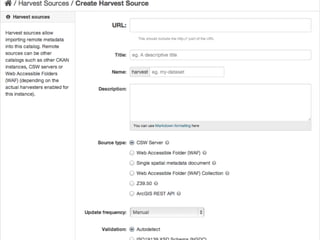

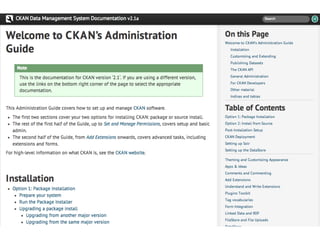

CKAN is open-source software, developed by the Open Knowledge Foundation, for managing and discovering open data. It provides data management tools along with user-friendly features for searching and visualizing datasets, and it supports a variety of data formats as well as multilingual content. The platform aims to improve governance and the use of data by making openly licensed information accessible worldwide.
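CKAN also exposes its search features over HTTP through its Action API. The sketch below is a minimal Python example that assumes a CKAN instance serving API version 3 under `/api/3/action/` and uses the `package_search` action with its `q` (query) and `rows` (result limit) parameters. The helper names (`build_search_url`, `extract_titles`, `search_datasets`) are hypothetical, and `https://demo.ckan.org` appears only as an example base URL.

```python
import json
import urllib.parse
import urllib.request


def build_search_url(base_url: str, query: str, rows: int = 10) -> str:
    """Build a package_search URL, assuming CKAN's Action API v3 path layout."""
    params = urllib.parse.urlencode({"q": query, "rows": rows})
    return f"{base_url.rstrip('/')}/api/3/action/package_search?{params}"


def extract_titles(payload: dict) -> list[str]:
    """Return dataset titles from a package_search JSON response.

    Responses carry a boolean "success" flag. On success, matching datasets
    are listed under result["results"]. Falls back to the dataset "name"
    when no "title" is present.
    """
    if not payload.get("success"):
        raise RuntimeError(f"CKAN API call failed: {payload.get('error')}")
    return [pkg.get("title", pkg.get("name", "")) for pkg in payload["result"]["results"]]


def search_datasets(base_url: str, query: str, rows: int = 10) -> list[str]:
    """Query a CKAN instance and return matching dataset titles (requires network access)."""
    url = build_search_url(base_url, query, rows)
    with urllib.request.urlopen(url, timeout=30) as resp:
        payload = json.load(resp)
    return extract_titles(payload)
```

For example, `search_datasets("https://demo.ckan.org", "population", rows=5)` would request `https://demo.ckan.org/api/3/action/package_search?q=population&rows=5` and return up to five dataset titles. URL construction and response parsing are kept in separate functions so that each can be tested without contacting a live portal.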