Churn Prediction on customer data

Statistical analysis, pre-processing, exploratory analysis, pivot tables, charts, and graphical representation of information to improve business decision making.

Recommended

Eu33884888

IJERA (International Journal of Engineering Research and Applications) is an international online, ... peer-reviewed journal. For more details or to submit your article, please visit www.ijera.com

Cobb Douglas production function in excel

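$$Y_i = a \, X_{1i}^{b_1} X_{2i}^{b_2} X_{3i}^{b_3} e^{u_i}$$

where

Y = dependent variable

X = explanatory (independent) variables

a = constant term (its logarithm, ln a, is the intercept of the log-linear form)

b_1, b_2, b_3 = regression coefficients

e ≈ 2.718 (base of the natural logarithm)

u = error term, assumed to follow N(0, σ²), that is, zero mean and constant variance

This function is nonlinear in its parameters. Taking the natural logarithm of both sides makes it linear in the parameters:

$$\ln Y_i = \ln a + b_1 \ln X_{1i} + b_2 \ln X_{2i} + b_3 \ln X_{3i} + u_i$$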

Steps

1. Open Excel, Give Variable Names and Provide Data

2. Apply Logarithmic Transformation to the Data

3. Go to Data analysis

4. Get Output
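The same four steps can be sketched outside Excel. The following is a minimal illustration in Python with NumPy, not the original author's workflow: the data are synthetic, and the names `true_a`, `true_b`, `a_hat`, and `b_hat` are assumptions introduced only for this example.

```python
import numpy as np

# Step 1: provide data. Hypothetical synthetic data generated from known
# parameters, so the estimates can be compared with the values used here.
rng = np.random.default_rng(0)
n = 200
X = rng.uniform(1.0, 10.0, size=(n, 3))       # X1, X2, X3 (must be positive)
true_a, true_b = 2.0, np.array([0.3, 0.5, 0.2])
u = rng.normal(0.0, 0.05, size=n)             # error term u ~ N(0, sigma^2)
Y = true_a * np.prod(X ** true_b, axis=1) * np.exp(u)

# Step 2: logarithmic transformation of every variable.
ln_Y = np.log(Y)
ln_X = np.log(X)

# Steps 3-4: ordinary least squares on the log-linear form
#   ln Y = ln a + b1 ln X1 + b2 ln X2 + b3 ln X3 + u
design = np.column_stack([np.ones(n), ln_X])  # column of ones = intercept
coef, *_ = np.linalg.lstsq(design, ln_Y, rcond=None)

ln_a_hat, b_hat = coef[0], coef[1:]
a_hat = np.exp(ln_a_hat)                      # back-transform the intercept
print("a =", round(float(a_hat), 3))
print("b =", np.round(b_hat, 3))
```

The regression is run on the transformed variables, so the fitted intercept estimates ln a; exponentiating it recovers an estimate of a.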

Cobb Douglas production function in SPSS

This document provides instructions for estimating a Cobb-Douglas production function using SPSS. It explains that taking the natural logarithm of both sides of the production function makes it linear in parameters. It then outlines the 5 steps to perform this analysis in SPSS: 1) Go to the variable view, 2) View the data, 3) Apply a logarithmic transformation to the variables, 4) Go to the analyze menu, and 5) Obtain the output, which will provide estimates of the intercept, coefficients, and estimated production function in log-linear form.

Linear Regression and Logistic Regression in ML

Linear regression and logistic regression are statistical modeling techniques. Linear regression predicts continuous dependent variables using independent variables, while logistic regression predicts binary dependent variables. Both aim to model relationships between variables by estimating coefficients. Logistic regression models the log odds of the dependent variable rather than the variable directly. Key evaluation metrics for classification models such as logistic regression include accuracy, precision, recall, and F1 score, which are calculated from a confusion matrix.
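As a small illustration of how those four metrics follow from the confusion matrix, here is a sketch in plain Python. The function name `classification_metrics` and the example labels are assumptions made for this example only.

```python
def classification_metrics(y_true, y_pred):
    """Compute accuracy, precision, recall and F1 for binary labels (0/1)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)  # true positives
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)  # true negatives
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)  # false positives
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)  # false negatives

    accuracy = (tp + tn) / len(pairs)
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if (precision + recall) else 0.0)
    return {"tp": tp, "tn": tn, "fp": fp, "fn": fn,
            "accuracy": accuracy, "precision": precision,
            "recall": recall, "f1": f1}


# Hypothetical labels: 1 = positive class, 0 = negative class.
y_true = [1, 0, 1, 1, 0, 0, 1, 0]
y_pred = [1, 0, 0, 1, 0, 1, 1, 0]
metrics = classification_metrics(y_true, y_pred)
print(metrics)
```

With these labels there are 3 true positives, 3 true negatives, 1 false positive, and 1 false negative, so all four metrics come out to 0.75.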

Application of Graphic LASSO in Portfolio Optimization_Yixuan Chen & Mengxi J...

- The document describes using graphical lasso to estimate the precision matrix of stock returns and apply portfolio optimization.

- Graphical lasso estimates the precision matrix instead of the covariance matrix to allow for sparsity. This makes the estimation more efficient for large datasets.

- The study uses 8 different models to simulate stock return data and compares the performance of graphical lasso, sample covariance, and shrinkage estimators on portfolio optimization of in-sample and out-of-sample test data. Graphical lasso performed best on out-of-sample test data, showing it can generate portfolios that generalize well.

Linear Regression vs Logistic Regression | Edureka

YouTube: https://youtu.be/OCwZyYH14uw

** Data Science Certification using R: https://www.edureka.co/data-science **

This Edureka PPT on Linear Regression Vs Logistic Regression covers the basic concepts of linear and logistic models. The following topics are covered in this session:

Types of Machine Learning

Regression Vs Classification

What is Linear Regression?

What is Logistic Regression?

Linear Regression Use Case

Logistic Regression Use Case

Linear Regression Vs Logistic Regression

Blog Series: http://bit.ly/data-science-blogs

Data Science Training Playlist: http://bit.ly/data-science-playlist

Transportation Problem with Pentagonal Intuitionistic Fuzzy Numbers Solved Us...

This paper presents a solution methodology for the transportation problem in an intuitionistic fuzzy environment in which costs are represented by pentagonal intuitionistic fuzzy numbers. The transportation problem is a particular class of linear programming that is associated with day-to-day activities in real life. It helps in solving problems on the distribution and transportation of resources from one place to another. The objective is to satisfy the demand at each destination, subject to the supply constraints, at the minimum possible transportation cost. The problem is solved using a ranking technique called the Accuracy function for pentagonal intuitionistic fuzzy numbers and Russell’s Method.

Polynomial regression model of making cost prediction in mixed cost analysis

This document presents a study comparing different regression models for predicting costs based on production levels. It finds that a cubic polynomial regression model provides a better fit than linear regression or the high-low method. The study uses cost and production data from a company to build linear, quadratic, and cubic regression models. It finds the cubic polynomial regression has the highest R-squared value and lowest p-value, indicating it is the best-fitting model. The study concludes that polynomial regression generally provides a better approach for cost prediction than conventional linear regression or the high-low method.
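The model comparison described above can be sketched as follows. This is an illustration on synthetic data, not the study's data or code: the cost curve, the noise level, and the names `units`, `cost`, and `r2` are all assumptions made for the example.

```python
import numpy as np


def r_squared(y, y_hat):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    ss_res = np.sum((y - y_hat) ** 2)
    ss_tot = np.sum((y - np.mean(y)) ** 2)
    return 1.0 - ss_res / ss_tot


# Hypothetical production levels and total costs with a cubic shape.
rng = np.random.default_rng(42)
units = np.linspace(1, 20, 30)
cost = (500 + 40 * units - 3 * units**2 + 0.15 * units**3
        + rng.normal(0, 10, size=units.size))

# Fit linear, quadratic and cubic polynomials by least squares.
r2 = {}
for degree in (1, 2, 3):
    coeffs = np.polyfit(units, cost, degree)
    r2[degree] = r_squared(cost, np.polyval(coeffs, units))
    print(f"degree {degree}: R^2 = {r2[degree]:.4f}")
```

One caveat when reading such a comparison: because the linear and quadratic models are nested inside the cubic one, in-sample R-squared cannot decrease as the degree rises, so a higher value alone does not show that the cubic model predicts new data better.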

11.polynomial regression model of making cost prediction in mixed cost analysis

This document presents a study comparing different regression models for predicting costs based on production levels. It finds that a cubic polynomial regression model provides a better fit than linear regression or the high-low method. The study uses cost and production data from a company to build linear, quadratic, and cubic regression models. It finds the cubic polynomial regression has the highest R-squared value and lowest p-value, indicating it is best able to model the cost patterns in the data. The document concludes the cubic polynomial regression provides a better approach for cost prediction than traditional linear regression or high-low methods.

Ai_Project_report

This document summarizes a student project on building machine learning models to recognize handwritten digits. The project involved collecting handwritten digit data, preprocessing the data, implementing logistic regression with gradient descent, evaluating performance on test data, and achieving over 90% accuracy on the test set. The models have applications in areas like banking, postal services, and document digitization.

Advanced functions visual Basic .net

This document discusses advanced functions and subroutines in Visual Basic, including:

- The difference between iteration and recursion

- Passing parameters by reference and arrays as parameters

- Optional parameters and overloading functions

- Calling one function from another function

It provides examples of writing recursive functions to calculate sums, factorials, and Fibonacci sequences. It also demonstrates overloading functions and using optional parameters.

Machine Learning Application: Credit Scoring

1) The document discusses using machine learning techniques like logistic regression, random forest, and K-means clustering to develop a credit scoring model based on financial ratios to predict a company's probability of default.

2) Random forest performed the best with an AUC of 0.87 and high precision and recall, while logistic regression had an AUC of 0.75 but issues with type II errors.

3) K-means clustering had a lower precision predicting defaults but an acceptable F1-score and AUC of 0.80.

Linear logisticregression

This document provides an overview of linear and logistic regression models. It discusses that linear regression is used for numeric prediction problems while logistic regression is used for classification problems with categorical outputs. It then covers the key aspects of each model, including defining the hypothesis function, cost function, and using gradient descent to minimize the cost function and fit the model parameters. For linear regression, it discusses calculating the regression line to best fit the data. For logistic regression, it discusses modeling the probability of class membership using a sigmoid function and interpreting the odds ratios from the model coefficients.
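The logistic regression pieces named above (sigmoid hypothesis, gradient descent on the log-loss, odds ratios) can be sketched in a few lines of NumPy. This is a minimal illustration under assumed settings: the learning rate, iteration count, synthetic data, and the names `fit_logistic` and `odds_ratio` are not taken from the summarized document.

```python
import numpy as np


def sigmoid(z):
    """Map a real-valued score to a probability in (0, 1)."""
    return 1.0 / (1.0 + np.exp(-z))


def fit_logistic(X, y, lr=0.1, n_iter=2000):
    """Batch gradient descent on the average log-loss (cross-entropy)."""
    Xb = np.column_stack([np.ones(len(X)), X])   # prepend intercept column
    theta = np.zeros(Xb.shape[1])
    for _ in range(n_iter):
        p = sigmoid(Xb @ theta)                  # predicted probabilities
        grad = Xb.T @ (p - y) / len(y)           # gradient of the log-loss
        theta -= lr * grad
    return theta


# Hypothetical data: one feature, true intercept -0.5 and slope 2.0.
rng = np.random.default_rng(1)
X = rng.normal(size=(300, 1))
y = (rng.random(300) < sigmoid(-0.5 + 2.0 * X[:, 0])).astype(float)

theta = fit_logistic(X, y)
odds_ratio = np.exp(theta[1])   # multiplicative change in odds per unit of x
print("theta =", np.round(theta, 3), "odds ratio =", round(float(odds_ratio), 3))
```

Exponentiating a fitted coefficient gives the odds ratio: the factor by which the odds of the positive class change when that feature increases by one unit.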

Linear regression

This document discusses various types of regression modeling and linear regression. It provides examples of linear regression analysis on fraud data and discusses assessing goodness of fit. It also briefly covers non-linear regression, problem areas like heteroskedasticity and collinearity, and model selection methods. Linear regression is presented geometrically and the assumptions and computations of ordinary least squares regression are explained.

Linear algebra application in linear programming

Linear programming is used to maximize or minimize quantities subject to constraints. It can be applied to problems with any number of variables and constraints, as long as the relationships are linear. Key aspects include defining an objective function to optimize, determining the feasible region where all constraints are satisfied, and finding extreme points where the objective function may be maximized or minimized. An example problem involves determining how to allocate candy mixtures to maximize revenue given constraints on available ingredients. The optimal solution is found at an extreme point within the bounded feasible region.

Bayesian analysis of shape parameter of Lomax distribution using different lo...

The Lomax distribution, also known as the Pareto distribution of the second kind or the Pearson Type VI distribution, has been used in the analysis of income data and business failure data. It may describe the lifetime of a decreasing failure rate component as a heavy-tailed alternative to the exponential distribution. In this paper we consider the estimation of the parameter of the Lomax distribution. The Bayes estimator is obtained using Jeffreys' prior and an extension of Jeffreys' prior under the squared error loss function, Al-Bayyati's loss function, and the precautionary loss function. Maximum likelihood estimation is also discussed. These methods are compared using mean square error in a simulation study with varying sample sizes. The study aims to find a suitable estimator of the parameter of the distribution. Finally, we analyze one data set for illustration.

Ridge regression, lasso and elastic net

A talk given by Yunting Sun at the NYC Open Data meetup. See more information at www.nycopendata.com or join us at www.meetup.com/nyc-open-data

Post-optimal analysis of LPP

The document discusses post-optimal analysis in linear optimization problems. It describes how changes can affect feasibility or optimality, including changes to right-hand sides, adding new constraints, or changing objective coefficients. It also discusses adding a new activity/variable and using the dual simplex method to find the new optimal solution.

The Basic Model of Computation

The document discusses models of computation and their uses. It provides examples of how a mathematical function takes an input and produces an output based on the model. Models describe how computation, memory, and communication are organized. The complexity of an algorithm can be measured given a model of computation, which allows studying performance independently of specific implementations. It also discusses how variables in a model require declaration and reservation of space in memory, with examples of space needed for different variable types.

Recommender Systems

This document provides an overview of recommender systems including background information, implementation details, and a demonstration. It discusses machine learning applications like unsupervised learning, linear regression, and gradient descent algorithms. It covers content-based filtering using known product features and collaborative filtering to identify unknown product features. Examples are given on using linear regression and gradient descent to make predictions for skills data and handle unknown values.

KNN

The document discusses the K-nearest neighbors (KNN) algorithm, a supervised machine learning classification method. KNN classifies new data based on the labels of the k nearest training samples in feature space. It can be used for both classification and regression problems, though it is mainly used for classification. The algorithm works by finding the k closest samples in the training data to the new sample and predicting the label based on a majority vote of the k neighbors' labels.
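The majority-vote procedure described above can be sketched in plain Python. The function name `knn_predict`, the Euclidean distance choice, and the toy points are assumptions made for this illustration.

```python
import math
from collections import Counter


def knn_predict(train_X, train_y, query, k=3):
    """Classify `query` by majority vote among its k nearest training points."""
    # Pair each training point's Euclidean distance to the query with its label.
    distances = sorted(
        (math.dist(x, query), label) for x, label in zip(train_X, train_y)
    )
    top_k_labels = [label for _, label in distances[:k]]
    # The most common label among the k neighbours wins the vote.
    return Counter(top_k_labels).most_common(1)[0][0]


# Hypothetical two-class training data in a 2-D feature space.
train_X = [(1.0, 1.0), (1.5, 2.0), (2.0, 1.5),
           (6.0, 6.0), (6.5, 7.0), (7.0, 6.5)]
train_y = ["A", "A", "A", "B", "B", "B"]

print(knn_predict(train_X, train_y, (1.2, 1.4)))  # near the "A" cluster
print(knn_predict(train_X, train_y, (6.4, 6.2)))  # near the "B" cluster
```

For regression, the same neighbour search applies, but the prediction would typically be the average of the neighbours' target values rather than a vote.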

Regression analysis on SPSS

This document discusses simple and multiple regression analysis. Simple regression considers the relationship between one explanatory variable and one response variable, while multiple regression considers the relationship between one dependent variable and multiple independent variables. The document provides the formulas for simple and multiple linear regression. It also presents an example using SPSS to analyze the relationship between firm size, age, and performance. The SPSS output includes measures of model fit like R, R-squared, adjusted R-squared, ANOVA, regression coefficients, and diagnostics for assumptions. Hypothesis testing is conducted on the regression coefficients.

5 parallel implementation 06299286

This document describes a parallel implementation of the Apriori algorithm for frequent itemset mining using MapReduce. The key steps are: (1) The Apriori algorithm is broken down into independent mapping tasks to count candidate itemset occurrences in parallel; (2) A MapReduce job is used for each iteration where the map function counts occurrences and the reduce function sums the counts; (3) Experimental results on real datasets show the approach achieves good speedup, scaleup, and can efficiently process large datasets in a distributed manner.
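The map and reduce roles in one Apriori iteration can be sketched in a single process as below. This is only an illustration of the counting pattern, not the paper's implementation and not a real MapReduce job: the function names `map_count` and `reduce_sum`, the transactions, and the support threshold are all hypothetical.

```python
from collections import defaultdict
from itertools import combinations


def map_count(transaction, candidates):
    """Map step: emit (candidate, 1) for each candidate itemset in a transaction."""
    items = set(transaction)
    return [(cand, 1) for cand in candidates if set(cand) <= items]


def reduce_sum(pairs):
    """Reduce step: sum the emitted counts per candidate itemset."""
    totals = defaultdict(int)
    for cand, count in pairs:
        totals[cand] += count
    return dict(totals)


# Hypothetical transactions and candidate 2-itemsets.
transactions = [
    ["bread", "milk"],
    ["bread", "butter"],
    ["bread", "milk", "butter"],
    ["milk"],
]
all_items = sorted({item for t in transactions for item in t})
candidates = list(combinations(all_items, 2))

# Each transaction could be mapped independently (in parallel on a cluster).
emitted = [pair for t in transactions for pair in map_count(t, candidates)]
counts = reduce_sum(emitted)

min_support = 2
frequent = {cand for cand, c in counts.items() if c >= min_support}
print(counts)
print(frequent)
```

Because each transaction is mapped without reference to any other, the map calls are the part that a distributed framework can spread across workers; only the summation needs the results brought together.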

Multiple Regression.ppt

This document discusses multiple regression analysis. It begins by introducing multiple regression as an extension of simple linear regression that allows for modeling relationships between a response variable and multiple explanatory variables. It then covers topics such as examining variable distributions, building regression models, estimating model parameters, and assessing overall model fit and significance of individual predictors. An example demonstrates using multiple regression to build a model for predicting cable television subscribers based on advertising rates, station power, number of local families, and number of competing stations.

working with python

Linear regression and logistic regression are two machine learning algorithms that can be implemented in Python. Linear regression is used for predictive analysis to find relationships between variables, while logistic regression is used for classification with binary dependent variables. Support vector machines (SVMs) are another algorithm that finds the optimal hyperplane to separate data points and maximize the margin between the classes. Key terms discussed include cost functions, gradient descent, confusion matrices, and ROC curves. Code examples are provided to demonstrate implementing linear regression, logistic regression, and SVM in Python using scikit-learn.

Chapter14

The document discusses multiple regression models and their use in predicting a dependent variable from several independent variables. It defines a general multiple regression model and describes how regression coefficients are estimated using the least squares method. It also discusses assessing the significance and utility of regression models through measures like the F-test and R-squared value. An example is provided of researchers using multiple regression to predict lung capacity based on variables like height, age, gender and activity level.

Data Analysison Regression

The document discusses linear regression analysis and its applications. It provides examples of using regression to predict house prices based on house characteristics, economic forecasts based on economic indicators, and determining optimal advertising levels based on past sales data. It also explains key concepts in regression including the least squares method, the regression line, R-squared, and the assumptions of the linear regression model.

Shrinkage Methods in Linear Regression

This document discusses shrinkage methods in linear regression, specifically Lasso and Ridge regression. Ridge regression shrinks the coefficients by adding a penalty term, the sum of the squared coefficients, to the ordinary least squares objective function. Lasso regression is similar but uses the sum of the absolute values of the coefficients as the penalty term. This causes Lasso to tend to set more coefficients exactly to zero, making it more suitable for feature selection. Gradient descent can be used to optimize Ridge regression, while coordinate descent is better suited to Lasso because its penalty is not differentiable at zero.
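For reference, the two penalized objectives can be written out as follows. The notation is an assumption introduced here (response vector $y$, design matrix $X$, coefficient vector $\beta$ with $p$ entries, tuning parameter $\lambda \ge 0$) and may differ from the notation in the summarized slides.

```latex
\hat{\beta}^{\text{ridge}} = \arg\min_{\beta} \; \lVert y - X\beta \rVert_2^2 + \lambda \sum_{j=1}^{p} \beta_j^2

\hat{\beta}^{\text{lasso}} = \arg\min_{\beta} \; \lVert y - X\beta \rVert_2^2 + \lambda \sum_{j=1}^{p} \lvert \beta_j \rvert
```

Under this form, the ridge objective is differentiable everywhere and has the closed-form solution $\hat{\beta}^{\text{ridge}} = (X^\top X + \lambda I)^{-1} X^\top y$, whereas the absolute-value penalty in the lasso objective has a kink at $\beta_j = 0$, which is why coordinate-wise methods are commonly used for it.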

Business Analytics Foundation with R tools - Part 2

Beamsync provides analytics training courses in Bangalore. If you are looking for business analytics training in Bangalore, consult Beamsync.

For upcoming training schedules visit: http://beamsync.com/business-analytics-training-bangalore/

Regression

Describing data using regression in machine learning. It also explains the concept of regression to the mean.

More Related Content

What's hot

11.polynomial regression model of making cost prediction in mixed cost analysis

This document presents a study comparing different regression models for predicting costs based on production levels. It finds that a cubic polynomial regression model provides a better fit than linear regression or the high-low method. The study uses cost and production data from a company to build linear, quadratic, and cubic regression models. It finds the cubic polynomial regression has the highest R-squared value and lowest p-value, indicating it is best able to model the cost patterns in the data. The document concludes the cubic polynomial regression provides a better approach for cost prediction than traditional linear regression or high-low methods.

Ai_Project_report

This document summarizes a student project on building machine learning models to recognize handwritten digits. The project involved collecting handwritten digit data, preprocessing the data, implementing logistic regression with gradient descent, evaluating performance on test data, and achieving over 90% accuracy on the test set. The models have applications in areas like banking, postal services, and document digitization.

Advanced functions visual Basic .net

This document discusses advanced functions and subroutines in Visual Basic, including:

- The difference between iteration and recursion

- Passing parameters by reference and arrays as parameters

- Optional parameters and overloading functions

- Calling one function from another function

It provides examples of writing recursive functions to calculate sums, factorials, and Fibonacci sequences. It also demonstrates overloading functions and using optional parameters.

Machine Learning Application: Credit Scoring

1) The document discusses using machine learning techniques like logistic regression, random forest, and K-means clustering to develop a credit scoring model based on financial ratios to predict a company's probability of default.

2) Random forest performed the best with an AUC of 0.87 and high precision and recall, while logistic regression had a AUC of 0.75 but issues with type II errors.

3) K-means clustering had a lower precision predicting defaults but an acceptable F1-score and AUC of 0.80.

Linear logisticregression

This document provides an overview of linear and logistic regression models. It discusses that linear regression is used for numeric prediction problems while logistic regression is used for classification problems with categorical outputs. It then covers the key aspects of each model, including defining the hypothesis function, cost function, and using gradient descent to minimize the cost function and fit the model parameters. For linear regression, it discusses calculating the regression line to best fit the data. For logistic regression, it discusses modeling the probability of class membership using a sigmoid function and interpreting the odds ratios from the model coefficients.

Linear regression

This document discusses various types of regression modeling and linear regression. It provides examples of linear regression analysis on fraud data and discusses assessing goodness of fit. It also briefly covers non-linear regression, problem areas like heteroskedasticity and collinearity, and model selection methods. Linear regression is presented geometrically and the assumptions and computations of ordinary least squares regression are explained.

Linear algebra application in linear programming

Linear programming is used to maximize or minimize quantities subject to constraints. It can be applied to problems with any number of variables and constraints, as long as the relationships are linear. Key aspects include defining an objective function to optimize, determining the feasible region where all constraints are satisfied, and finding extreme points where the objective function may be maximized or minimized. An example problem involves determining how to allocate candy mixtures to maximize revenue given constraints on available ingredients. The optimal solution is found at an extreme point within the bounded feasible region.

Bayesian analysis of shape parameter of Lomax distribution using different lo...

The Lomax distribution also known as Pareto distribution of the second kind or Pearson Type VI distribution has been used in the analysis of income data, and business failure data. It may describe the lifetime of a decreasing failure rate component as a heavy tailed alternative to the exponential distribution. In this paper we consider the estimation of the parameter of Lomax distribution. Baye’s estimator is obtained by using Jeffery’s and extension of Jeffery’s prior by using squared error loss function, Al-Bayyati’s loss function and Precautionary loss function. Maximum likelihood estimation is also discussed. These methods are compared by using mean square error through simulation study with varying sample sizes. The study aims to find out a suitable estimator of the parameter of the distribution. Finally, we analyze one data set for illustration.

Ridge regression, lasso and elastic net

It is given by Yunting Sun at NYC open data meetup, see more information at www.nycopendata.com or join us at www.meetup.com/nyc-open-data

Post-optimal analysis of LPP

The document discusses post-optimal analysis in linear optimization problems. It describes how changes can affect feasibility or optimality, including changes to right-hand sides, adding new constraints, or changing objective coefficients. It also discusses adding a new activity/variable and using the dual simplex method to find the new optimal solution.

The Basic Model of Computation

The document discusses models of computation and their uses. It provides examples of how a mathematical function takes an input and produces an output based on the model. Models describe how computation, memory, and communication are organized. The complexity of an algorithm can be measured given a model of computation, which allows studying performance independently of specific implementations. It also discusses how variables in a model require declaration and reservation of space in memory, with examples of space needed for different variable types.

Recommender Systems

This document provides an overview of recommender systems including background information, implementation details, and a demonstration. It discusses machine learning applications like unsupervised learning, linear regression, and gradient descent algorithms. It covers content-based filtering using known product features and collaborative filtering to identify unknown product features. Examples are given on using linear regression and gradient descent to make predictions for skills data and handle unknown values.

KNN

The document discusses the K-nearest neighbors (KNN) algorithm, a supervised machine learning classification method. KNN classifies new data based on the labels of the k nearest training samples in feature space. It can be used for both classification and regression problems, though it is mainly used for classification. The algorithm works by finding the k closest samples in the training data to the new sample and predicting the label based on a majority vote of the k neighbors' labels.

Regression analysis on SPSS

This document discusses simple and multiple regression analysis. Simple regression considers the relationship between one explanatory variable and one response variable, while multiple regression considers the relationship between one dependent variable and multiple independent variables. The document provides the formulas for simple and multiple linear regression. It also presents an example using SPSS to analyze the relationship between firm size, age, and performance. The SPSS output includes measures of model fit like R, R-squared, adjusted R-squared, ANOVA, regression coefficients, and diagnostics for assumptions. Hypothesis testing is conducted on the regression coefficients.

5 parallel implementation 06299286

This document describes a parallel implementation of the Apriori algorithm for frequent itemset mining using MapReduce. The key steps are: (1) The Apriori algorithm is broken down into independent mapping tasks to count candidate itemset occurrences in parallel; (2) A MapReduce job is used for each iteration where the map function counts occurrences and the reduce function sums the counts; (3) Experimental results on real datasets show the approach achieves good speedup, scaleup, and can efficiently process large datasets in a distributed manner.

What's hot (15)

11.polynomial regression model of making cost prediction in mixed cost analysis

11.polynomial regression model of making cost prediction in mixed cost analysis

Bayesian analysis of shape parameter of Lomax distribution using different lo...

Bayesian analysis of shape parameter of Lomax distribution using different lo...

Churn Prediction on customer data

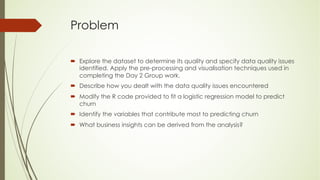

- 1. Problem
  - Explore the dataset to determine its quality and specify the data quality issues identified. Apply the pre-processing and visualisation techniques used in completing the Day 2 group work.
  - Describe how you dealt with the data quality issues encountered.
  - Modify the R code provided to fit a logistic regression model to predict churn.
  - Identify the variables that contribute most to predicting churn.
  - What business insights can be derived from the analysis?

- 2. Preprocessing Data
  - Data cleaning:
    - Dropped four unwanted columns.
    - Converted categorical variables to numerical variables.
    - No NULL records found.
  - Exploratory data analysis:
    - Graphs for the numerical variables show a normal distribution.
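
The cleaning steps above can be sketched in a few lines of standard-library Python. This is only an illustration under stated assumptions: the rows are made up, `customerID` stands in for the four dropped columns (the deck does not name them), and the helper names are hypothetical. The other column names follow the charts later in the deck.

```python
# Hypothetical rows; column names follow the deck's charts, values are made up.
rows = [
    {"customerID": "A1", "gender": "Female", "Partner": "Yes",
     "Contract": "Month-to-month", "Churn": "Yes"},
    {"customerID": "B2", "gender": "Male", "Partner": "No",
     "Contract": "Two year", "Churn": "No"},
    {"customerID": "C3", "gender": "Male", "Partner": "Yes",
     "Contract": "One year", "Churn": "No"},
]

# Assumption: the deck does not say which four columns were dropped.
UNWANTED = {"customerID"}


def count_nulls(rows):
    """Count missing (None or empty-string) values per column."""
    counts = {}
    for row in rows:
        for col, val in row.items():
            counts[col] = counts.get(col, 0) + int(val is None or val == "")
    return counts


def drop_columns(rows, unwanted):
    """Return copies of the rows without the unwanted columns."""
    return [{c: v for c, v in row.items() if c not in unwanted} for row in rows]


def encode_categoricals(rows):
    """Map each distinct value of a column to an integer code (sorted order)."""
    columns = list(rows[0].keys())
    codes = {
        c: {v: i for i, v in enumerate(sorted({r[c] for r in rows}))}
        for c in columns
    }
    encoded = [{c: codes[c][r[c]] for c in columns} for r in rows]
    return encoded, codes


null_counts = count_nulls(rows)
clean = drop_columns(rows, UNWANTED)
encoded, codes = encode_categoricals(clean)
print(null_counts)
print(encoded[0])
```

Integer codes are the simplest reading of "categorical to numerical". For columns with more than two levels, such as `Contract`, integer codes impose an artificial ordering, so one dummy variable per level may be the safer choice before fitting a model.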

- 3. Gender count, churn 0 vs churn 1
  - The gender count comparison for churn 0 and churn 1 shows that the male and female counts were both very high, at around 2,500, for churn 0, compared with male and female counts below 1,000 for churn 1. This is taken to show that gender can be a good predictor variable for churn.
  - [Bar chart: gender count, churn 0 vs churn 1; x-axis: churn (0, 1); y-axis: count; series: Female, Male]

- 4. Gender & Partner Count
  - [Bar charts of counts by churn (0, 1). Panels: Gender (Female, Male); Partner (No, Yes)]

- 5. Dependent & PhoneService Count
  - [Bar charts of counts by churn (0, 1). Panels: Dependent (No, Yes); PhoneService (No, Yes)]

- 6. MultipleLines & InternetService Count
  - [Bar charts of counts by churn (0, 1). Panels: MultipleLines (No, No phone service, Yes); InternetService (DSL, Fiber optic, No)]

- 7. OnlineSecurity & OnlineBackup
  - [Bar charts of counts by churn (0, 1). Panels: OnlineSecurity (No, No internet service, Yes); OnlineBackup (No, No internet service, Yes)]

- 8. Payment Method and PaperlessBilling Count
  - [Bar charts of counts by churn (0, 1). Panels: Payment Method (Bank transfer (automatic), Credit card (automatic), Electronic check, Mailed check); PaperlessBilling (No, Yes)]

- 9. Contract & StreamingMovie Count
  - [Bar charts of counts by churn (0, 1). Panels: Contract (Month-to-month, One year, Two year); StreamingMovie (No, No internet service, Yes)]

- 10. StreamingTV & TechSupport Count
  - [Bar charts of counts by churn (0, 1). Panels: StreamingTV (No, No internet service, Yes); TechSupport (No, No internet service, Yes)]

- 11. Logistic Regression Model
  - A binary LR model works on a categorical (qualitative) dependent variable that can take two values, e.g. Yes/No.
  - Multinomial LR models handle dependent variables with three or more possible categories.
  - LR models estimate the probability that an event occurs by maximizing a log-likelihood function, rather than by the least squares method used in linear regression models.
  - The binary logistic regression model estimates the probability of occurrence of the dependent variable $Y$, which takes a dichotomous form (0/1).
  - $z = \alpha + \beta_1 X_1 + \beta_2 X_2 + \cdots + \beta_n X_n$
  - $z$ is known as the logit; $\alpha, \beta_1, \beta_2, \ldots, \beta_n$ are the estimated parameters for the explanatory variables $X_1, X_2, \ldots, X_n$.

- 12. LR model
  - Binary logistic regression defines the logit $z_i$ as the natural logarithm of the odds: $\ln\left(\frac{p_i}{1 - p_i}\right) = z_i$
  - Equivalently, $p_i = \frac{1}{1 + e^{-z_i}}$, i.e. $p = f(z)$.
  - Substitute categorical variables with dummy variables, then maximize the log-likelihood function, whose term for observation $i$ is $LL_i = y_i \ln(p_i) + (1 - y_i) \ln(1 - p_i)$.
  - Starting from $\alpha = \beta_1 = \beta_2 = \cdots = \beta_n = 0$, calculate $z_i$, $p_i$ and $LL_i$.
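
The procedure on this slide can be sketched as follows. This is an illustrative sketch, not the deck's method: plain gradient ascent is swapped in as the optimizer, the data are a made-up single dummy variable (say, a hypothetical month-to-month contract indicator), and the function names, step size and iteration count are assumptions.

```python
import math


def sigmoid(z):
    """p = 1 / (1 + e^(-z))"""
    return 1.0 / (1.0 + math.exp(-z))


def log_likelihood(params, X, y):
    """Sum of LL_i = y_i*ln(p_i) + (1 - y_i)*ln(1 - p_i)."""
    total = 0.0
    for xi, yi in zip(X, y):
        z = params[0] + sum(b * x for b, x in zip(params[1:], xi))
        p = min(max(sigmoid(z), 1e-12), 1.0 - 1e-12)  # guard against log(0)
        total += yi * math.log(p) + (1 - yi) * math.log(1 - p)
    return total


def fit(X, y, lr=0.5, steps=5000):
    """Maximize the log-likelihood by gradient ascent, starting from zeros."""
    params = [0.0] * (len(X[0]) + 1)  # alpha = beta_1 = ... = beta_n = 0
    for _ in range(steps):
        grad = [0.0] * len(params)
        for xi, yi in zip(X, y):
            z = params[0] + sum(b * x for b, x in zip(params[1:], xi))
            err = yi - sigmoid(z)  # derivative of LL_i with respect to z_i
            grad[0] += err
            for j, x in enumerate(xi):
                grad[j + 1] += err * x
        params = [p + lr * g / len(X) for p, g in zip(params, grad)]
    return params


# Hypothetical data: one dummy variable, churn as the 0/1 outcome.
X = [[1], [1], [1], [1], [0], [0], [0], [0]]
y = [1, 1, 1, 0, 0, 0, 0, 1]

ll_start = log_likelihood([0.0, 0.0], X, y)
params = fit(X, y)
ll_fit = log_likelihood(params, X, y)
print(ll_start, ll_fit, params)
```

With all parameters at zero every $p_i$ is 0.5, so the starting log-likelihood is simply the number of rows times $\ln 0.5$; any sensible fit should end above that value.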

- 13. Logistic Regression
  - Cells M3, M5, M7 and M9 contain the respective parameter estimates. Use Solver to calculate $z_i$, $p_i$ and $LL_i$, maximizing the sum of the $LL_i$ over the data file.
  - Unlike traditional regression models estimated by least squares, there is no $R^2$ (percentage of variance explained by the predictor variables). A more adequate criterion for choosing the best model is the ROC (receiver operating characteristic) curve.
  - A $\chi^2$ test is used to verify the model's significance. Its hypotheses are:
    - $H_0$: $\beta_1 = \beta_2 = \cdots = \beta_n = 0$
    - $H_1$: there is at least one $\beta_i \neq 0$
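
The slide does not spell out the test statistic. A common form of this $\chi^2$ test is the likelihood-ratio test, $\chi^2 = -2\,(LL_0 - LL_{max})$, where $LL_0$ is the log-likelihood of an intercept-only model and the degrees of freedom equal the number of $\beta$ coefficients. The sketch below assumes that form; the outcome vector and the fitted log-likelihood are hypothetical, and the p-value helper only covers one degree of freedom (a single predictor).

```python
import math


def null_log_likelihood(y):
    """Log-likelihood of the intercept-only model, where p is the event rate.

    Assumes y contains both 0s and 1s, so 0 < p < 1.
    """
    p = sum(y) / len(y)
    return sum(yi * math.log(p) + (1 - yi) * math.log(1 - p) for yi in y)


def likelihood_ratio_chi2(ll_null, ll_model):
    """Likelihood-ratio statistic: -2 * (LL_0 - LL_max)."""
    return -2.0 * (ll_null - ll_model)


def chi2_sf_df1(x):
    """P(chi-square with 1 degree of freedom > x), via the normal tail."""
    return math.erfc(math.sqrt(x / 2.0))


# Hypothetical inputs: a 0/1 outcome vector and a fitted model's log-likelihood.
y = [1, 1, 1, 0, 0, 0, 0, 1]
ll_model = -4.4987

ll_null = null_log_likelihood(y)
chi2 = likelihood_ratio_chi2(ll_null, ll_model)
p_value = chi2_sf_df1(chi2)
print(chi2, p_value)
```

A small p-value leads to rejecting $H_0$, i.e. at least one coefficient differs from zero. With more than one predictor the degrees of freedom increase and a general chi-square distribution function is needed instead of the one-degree-of-freedom shortcut used here.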

- 14. Logistic Regression
  - Confusion matrix based on a cutoff of 0.5, giving the number of false positives (FP), true positives (TP), false negatives (FN) and true negatives (TN).
  - OME (overall model efficiency) = (TP + TN) / total observations.
  - Sensitivity = percentage of hits, for a given cutoff, among observations that are in fact events: TP / (TP + FN).
  - Specificity = percentage of hits, for a given cutoff, among observations that are not events: TN / (TN + FP).
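
The definitions above translate directly into code. The following is a minimal sketch with made-up labels and predicted probabilities; the function names are hypothetical.

```python
def confusion_counts(y_true, p_hat, cutoff=0.5):
    """Count TP, FP, FN, TN, predicting an event when p_hat >= cutoff."""
    tp = fp = fn = tn = 0
    for y, p in zip(y_true, p_hat):
        pred = 1 if p >= cutoff else 0
        if pred == 1 and y == 1:
            tp += 1
        elif pred == 1 and y == 0:
            fp += 1
        elif pred == 0 and y == 1:
            fn += 1
        else:
            tn += 1
    return tp, fp, fn, tn


def overall_model_efficiency(tp, fp, fn, tn):
    """OME = (TP + TN) / total observations."""
    return (tp + tn) / (tp + fp + fn + tn)


def sensitivity(tp, fn):
    """Share of actual events classified as events."""
    return tp / (tp + fn)


def specificity(tn, fp):
    """Share of actual non-events classified as non-events."""
    return tn / (tn + fp)


# Hypothetical churn labels and predicted probabilities.
y_true = [1, 1, 1, 0, 0, 0, 0, 1]
p_hat = [0.9, 0.7, 0.4, 0.2, 0.6, 0.1, 0.3, 0.8]

tp, fp, fn, tn = confusion_counts(y_true, p_hat, cutoff=0.5)
ome = overall_model_efficiency(tp, fp, fn, tn)
sens = sensitivity(tp, fn)
spec = specificity(tn, fp)
print(tp, fp, fn, tn, ome, sens, spec)
```

Lowering the cutoff classifies more customers as churners, which typically raises sensitivity and lowers specificity.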

- 16. LR Results
  - Overall accuracy: 0.80
  - Avg_AUC: 0.84
  - Avg_F1: 0.58

- 17. Precision Vs Recall Graph
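
Only the title of this graph survives in the transcript. As a rough illustration of how the points of a precision vs recall curve (and the F1 score reported on the previous slide) can be computed, the sketch below sweeps the classification cutoff over made-up predictions. The data, cutoffs and function name are all hypothetical.

```python
def precision_recall_f1(y_true, p_hat, cutoff):
    """Precision, recall and F1 when predicting an event for p_hat >= cutoff.

    Returns None for a metric whose denominator is zero.
    """
    tp = sum(1 for y, p in zip(y_true, p_hat) if p >= cutoff and y == 1)
    fp = sum(1 for y, p in zip(y_true, p_hat) if p >= cutoff and y == 0)
    fn = sum(1 for y, p in zip(y_true, p_hat) if p < cutoff and y == 1)
    precision = tp / (tp + fp) if (tp + fp) else None
    recall = tp / (tp + fn) if (tp + fn) else None
    if precision is None or recall is None or (precision + recall) == 0:
        f1 = None
    else:
        f1 = 2 * precision * recall / (precision + recall)
    return precision, recall, f1


# Hypothetical churn labels and predicted probabilities.
y_true = [1, 1, 1, 0, 0, 0, 0, 1]
p_hat = [0.9, 0.7, 0.4, 0.2, 0.6, 0.1, 0.3, 0.8]

# One (cutoff, precision, recall, F1) point per cutoff.
curve = [(c, *precision_recall_f1(y_true, p_hat, c)) for c in (0.25, 0.5, 0.75)]
for cutoff, precision, recall, f1 in curve:
    print(cutoff, precision, recall, f1)
```

Raising the cutoff generally trades recall for precision, which is the trade-off such a graph is meant to show.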