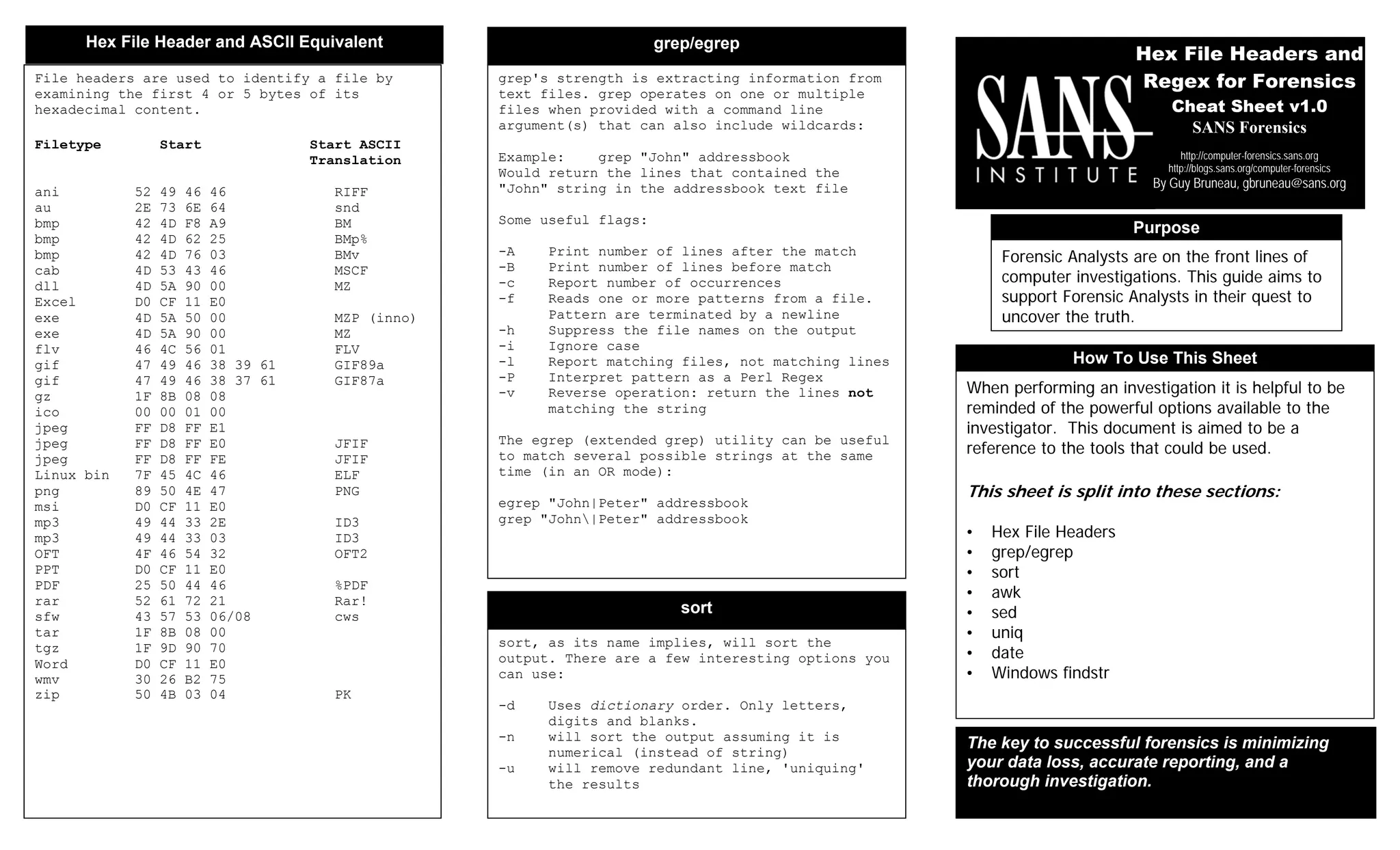

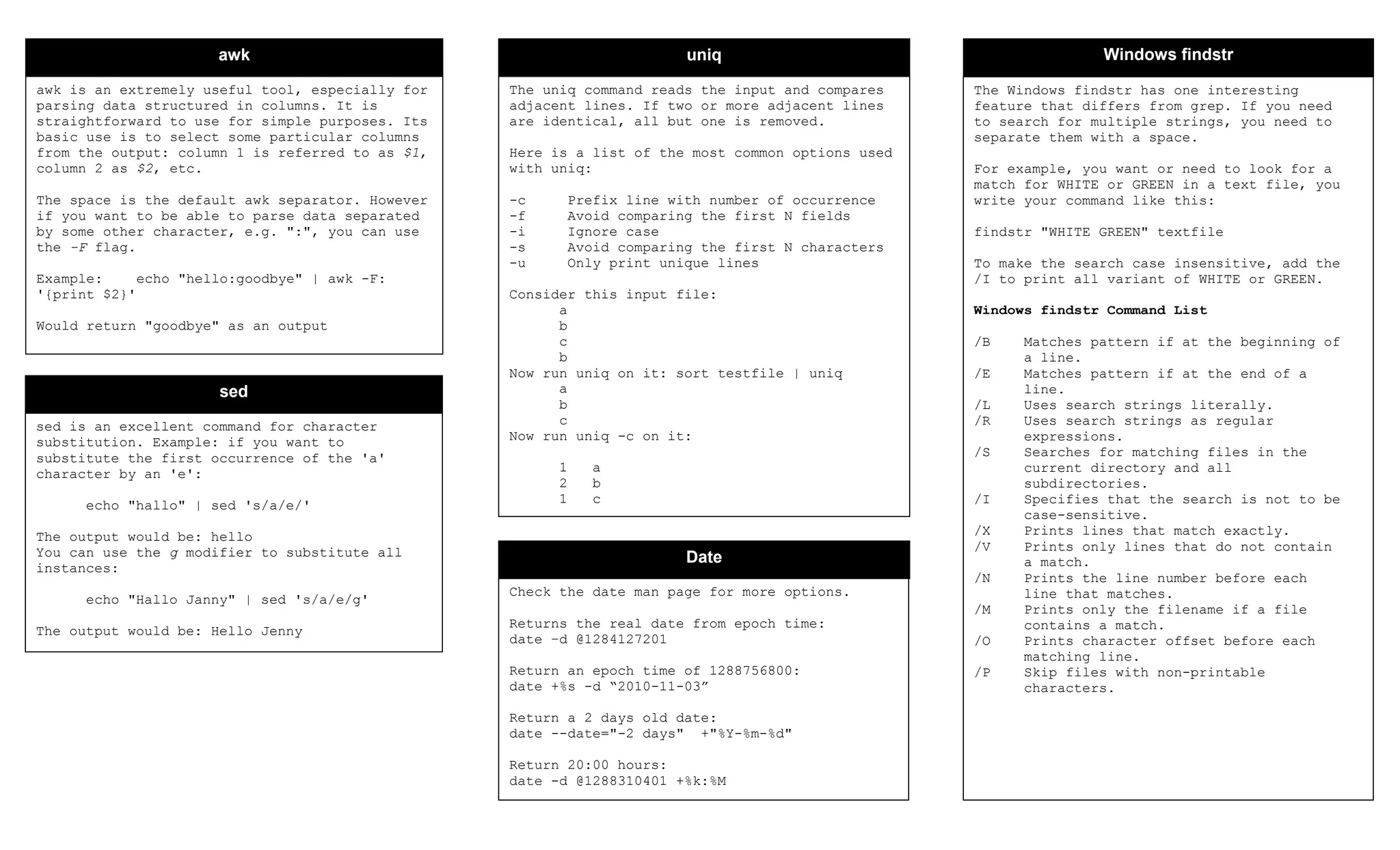

This document serves as a guide for forensic analysts, providing essential commands and tools for computer investigations, primarily focusing on text file analysis with utilities like grep, egrep, and others. It includes detailed information on file headers, sorting, data manipulation using awk and sed, and Windows findstr command functionality. The key takeaway emphasizes minimizing data loss and accurate reporting for successful forensic analyses.