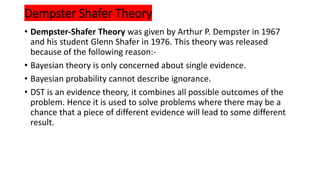

The Certainty Factor Theory uses numeric values between -1 and 1 to represent the likelihood or certainty of statements or hypotheses being true based on evidence. It was developed for artificial intelligence systems to represent uncertain or incomplete information. The Certainty Factor can be calculated based on the Measure of Belief and Measure of Disbelief of hypotheses given evidence, and formulas are provided to combine Certainty Factors from multiple pieces of evidence. However, the theory has limitations, such as difficulty accurately assigning certainty values and the limited numeric range. The Dempster-Shafer Theory was introduced to address some of the limitations of probability theory. It defines a mass function over all subsets of a set of possible conclusions to represent degrees of belief, and uses belief

![• CF is always lies between in the interval 1 and -1

• If CF is -1 then the statement never be true

• +1 then the statement is always true

• Measure of Belief

• It is always denoted as MB[H,E], where H is Hypothesis & E is Evidence

• If MB[H,E]=0 then the H is false for E

• If MB[H,E]=1 then the H is True for E

• It always lies between interval[0,1]](https://image.slidesharecdn.com/certinityfactor-240224042322-993b6c1c/85/Certinity-Factor-and-Dempster-shafer-theory-pptx-2-320.jpg)

![• Measure of Disbelief

• MD[H,E]

• MD[H,E]=0, then H supports Evidence

• MD[H,E]=1, then H Does not supports Evidence

• MB[H,E], MD[H,E]

• Now calculate CF

• If MB[H,E]=1(True) then MD[H,E]=0

• CF[H,E]=1

• If MB[H,E]=0(False) then MD[H,E]=1

• CF[H,E]=0](https://image.slidesharecdn.com/certinityfactor-240224042322-993b6c1c/85/Certinity-Factor-and-Dempster-shafer-theory-pptx-3-320.jpg)

![Formulae for CF

• 1. Multiple Evidences and single Hypothesis

• MB[H,E1 and E2 ]=MB[H,E1]+ MB[H,E2]*[1- MB[H,E1]]

• MD[H,E1 and E2 ]=MD[H,E1]+ MD[H,E2]*[1- MD[H,E1]]

• CF[H,E1 and E2]= MB[H,E1 and E2 ]- MD[H,E1 and E2 ]](https://image.slidesharecdn.com/certinityfactor-240224042322-993b6c1c/85/Certinity-Factor-and-Dempster-shafer-theory-pptx-4-320.jpg)

![EXAMPLE

• MB[H,E1]=0.4

• MD[H,E1]=0 (FOR E1 EVIDANCE]

• MB[H,E21]=0.3

• MD[H,E2]=0.1 (FOR E2 EVIDANCE]](https://image.slidesharecdn.com/certinityfactor-240224042322-993b6c1c/85/Certinity-Factor-and-Dempster-shafer-theory-pptx-5-320.jpg)

![• MB[H,E1 and E2 ]=MB[H,E1]+ MB[H,E2]*[1- MB[H,E1]

• 0.4+0.3*(1-0.4)

• 0.4+0.3*0.6

• 0.58](https://image.slidesharecdn.com/certinityfactor-240224042322-993b6c1c/85/Certinity-Factor-and-Dempster-shafer-theory-pptx-6-320.jpg)

![• MD[H,E1 and E2 ]=MD[H,E1]+ MD[H,E2]*[1- MD[H,E1]

• 0+0.1*(1-0)

• 0.1

• CF=0.58-0.1

• 0.48](https://image.slidesharecdn.com/certinityfactor-240224042322-993b6c1c/85/Certinity-Factor-and-Dempster-shafer-theory-pptx-7-320.jpg)