The document discusses key concepts in master data management, including:

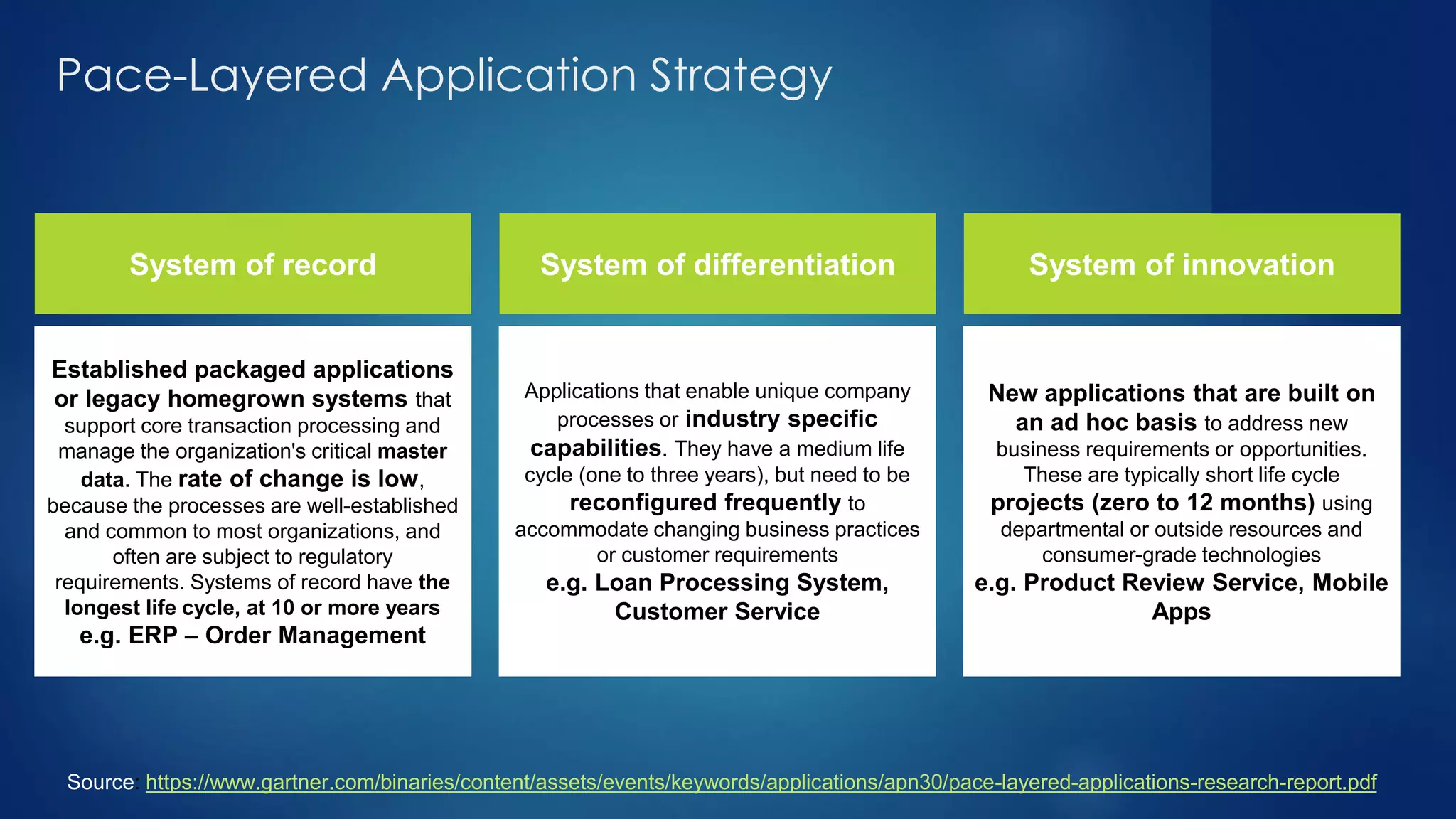

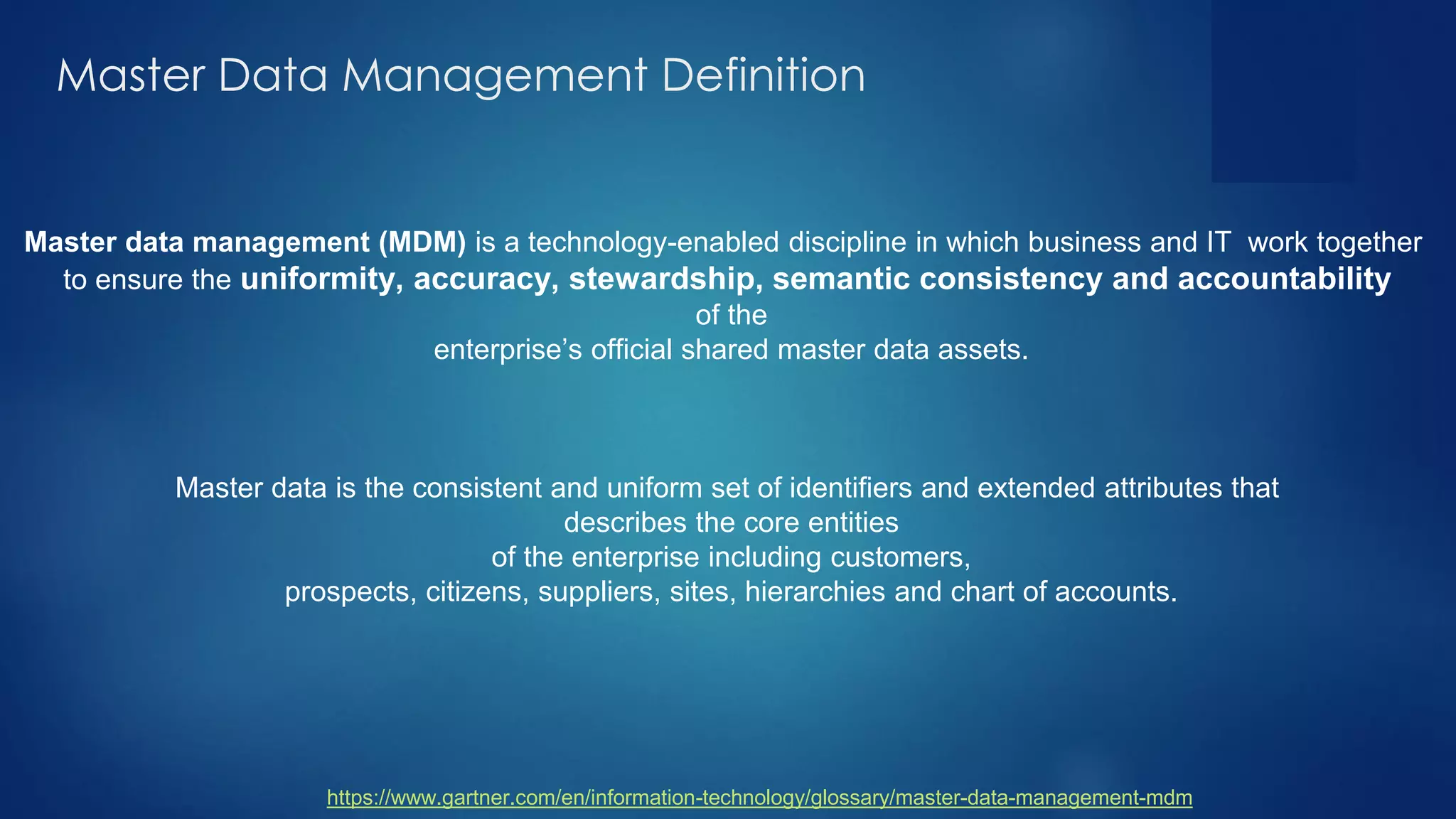

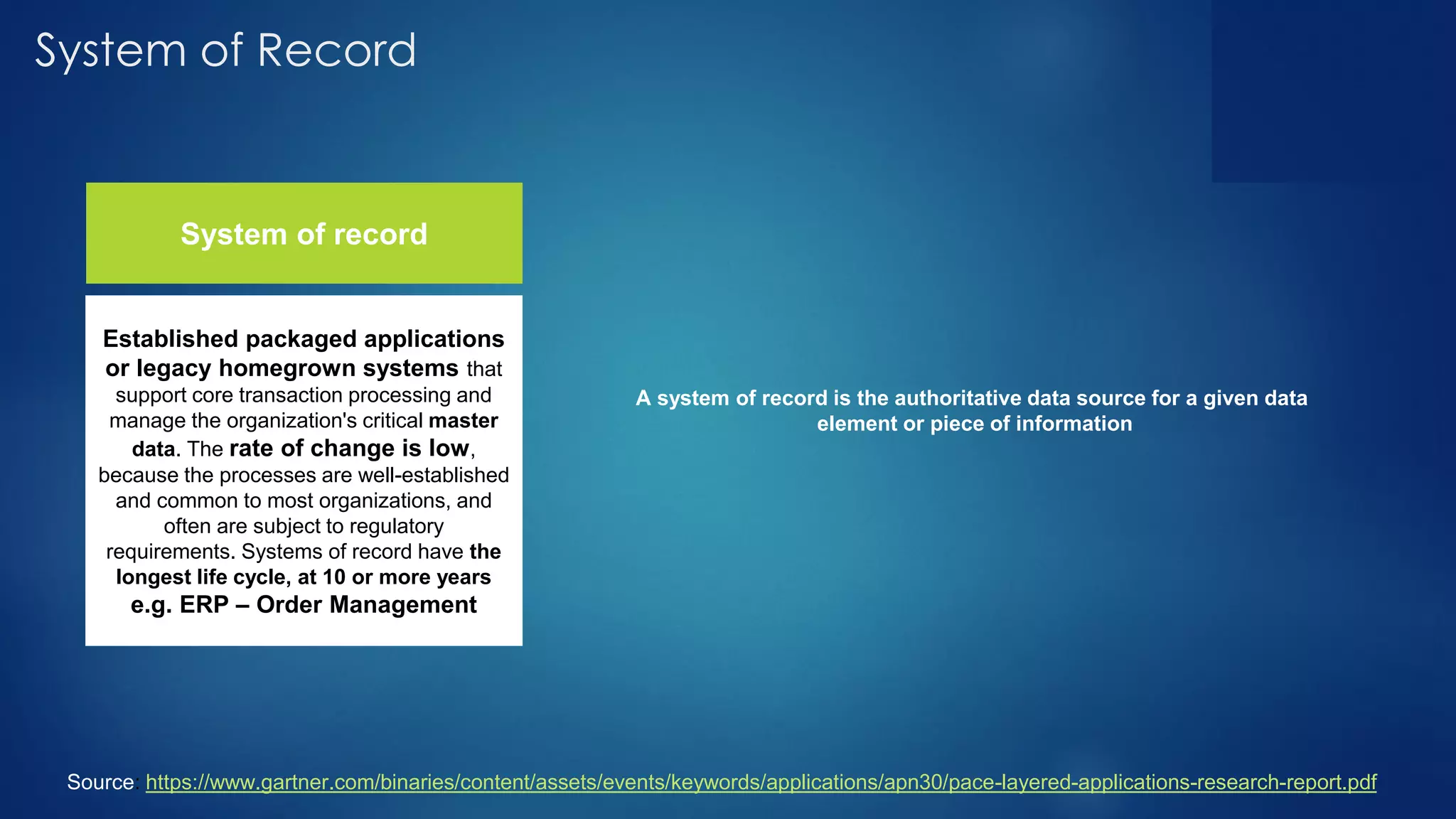

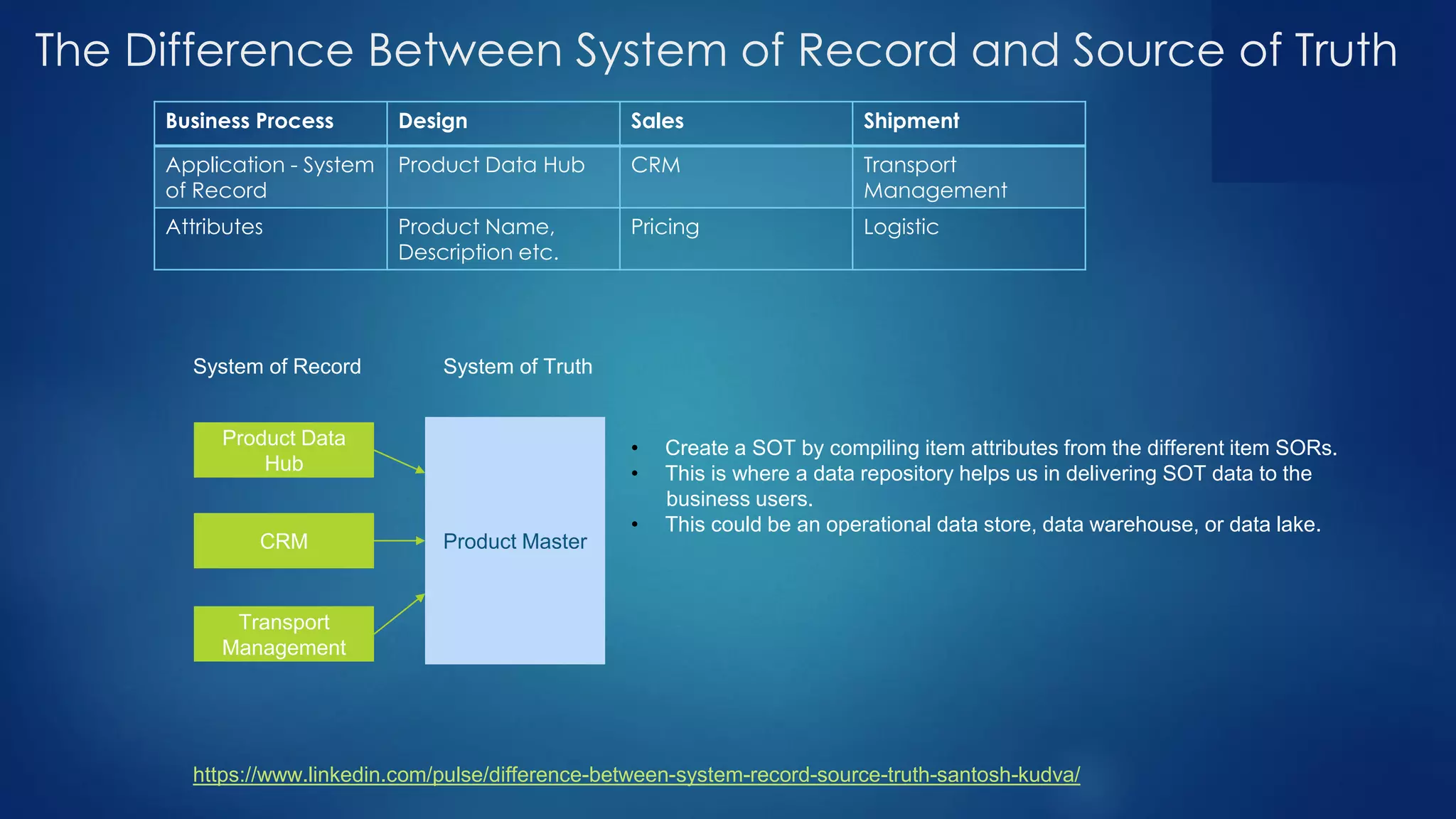

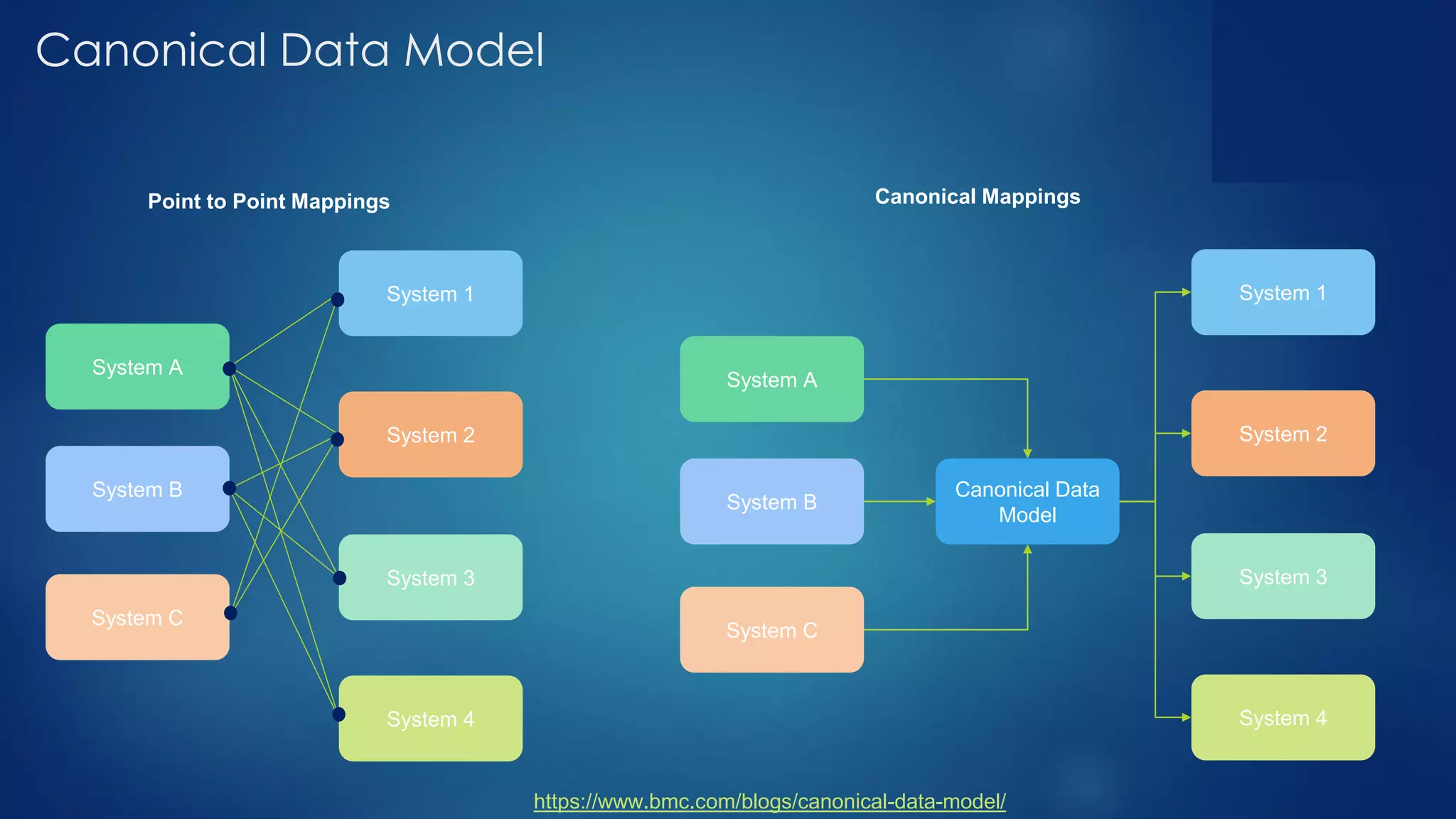

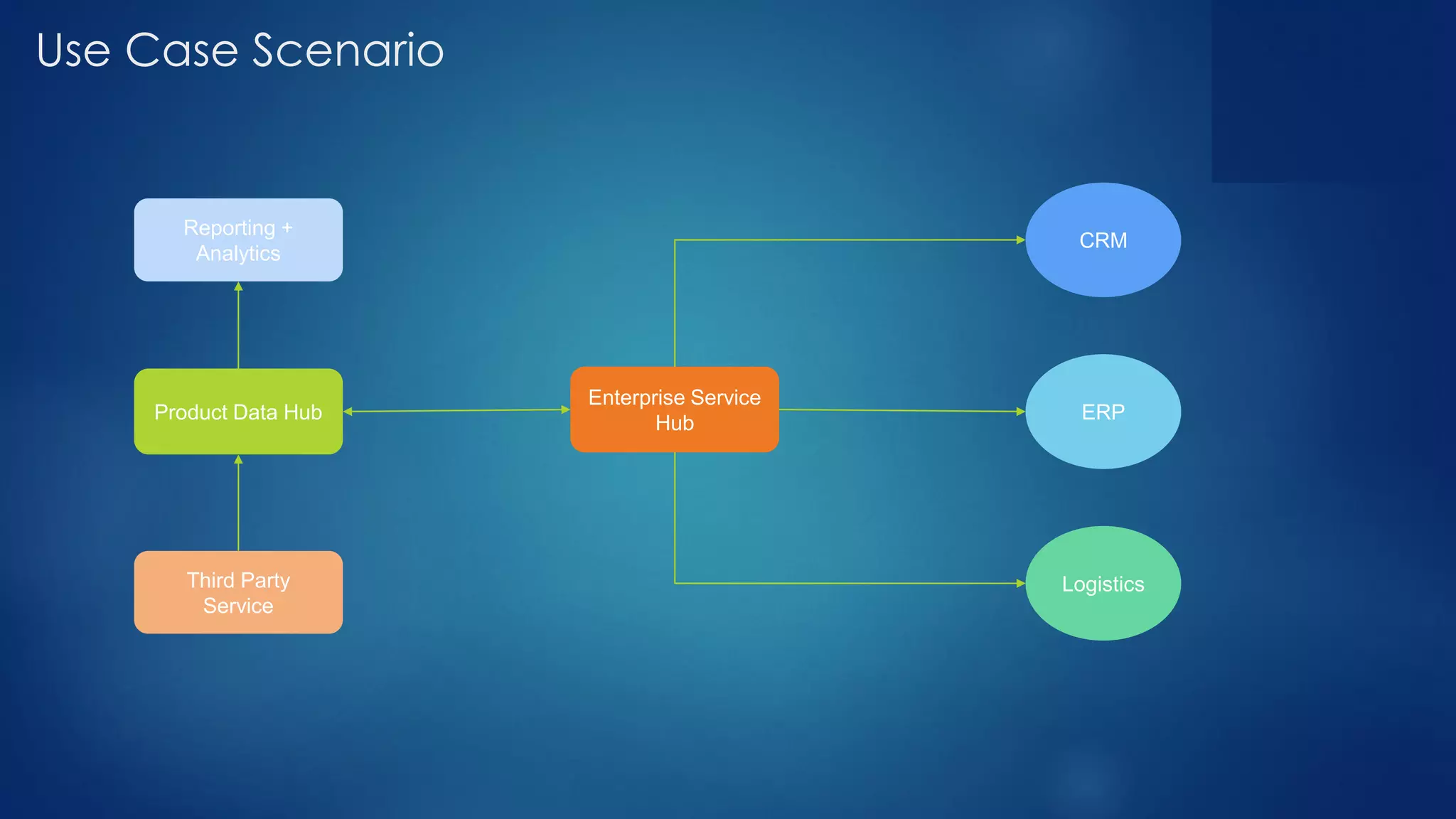

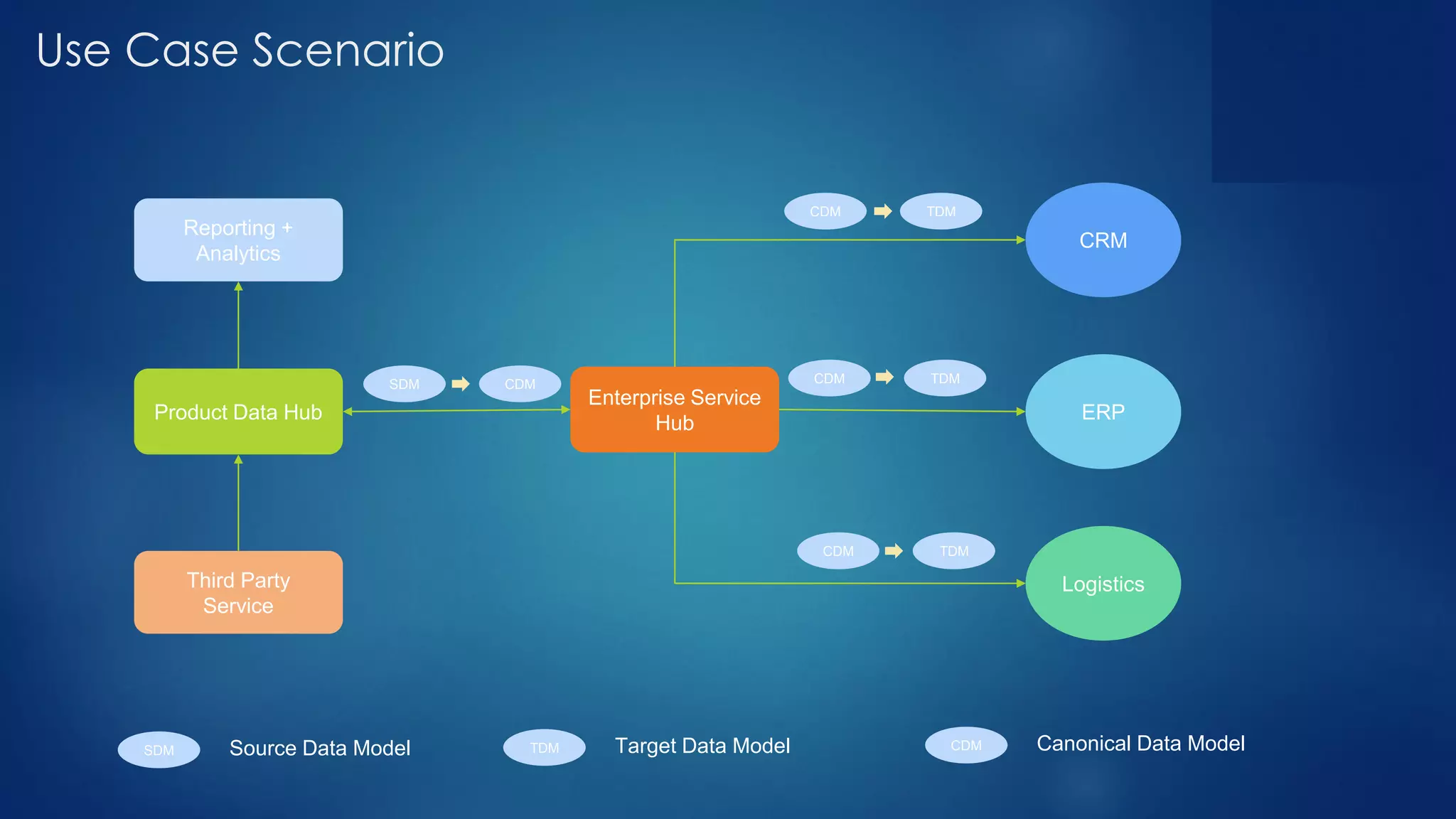

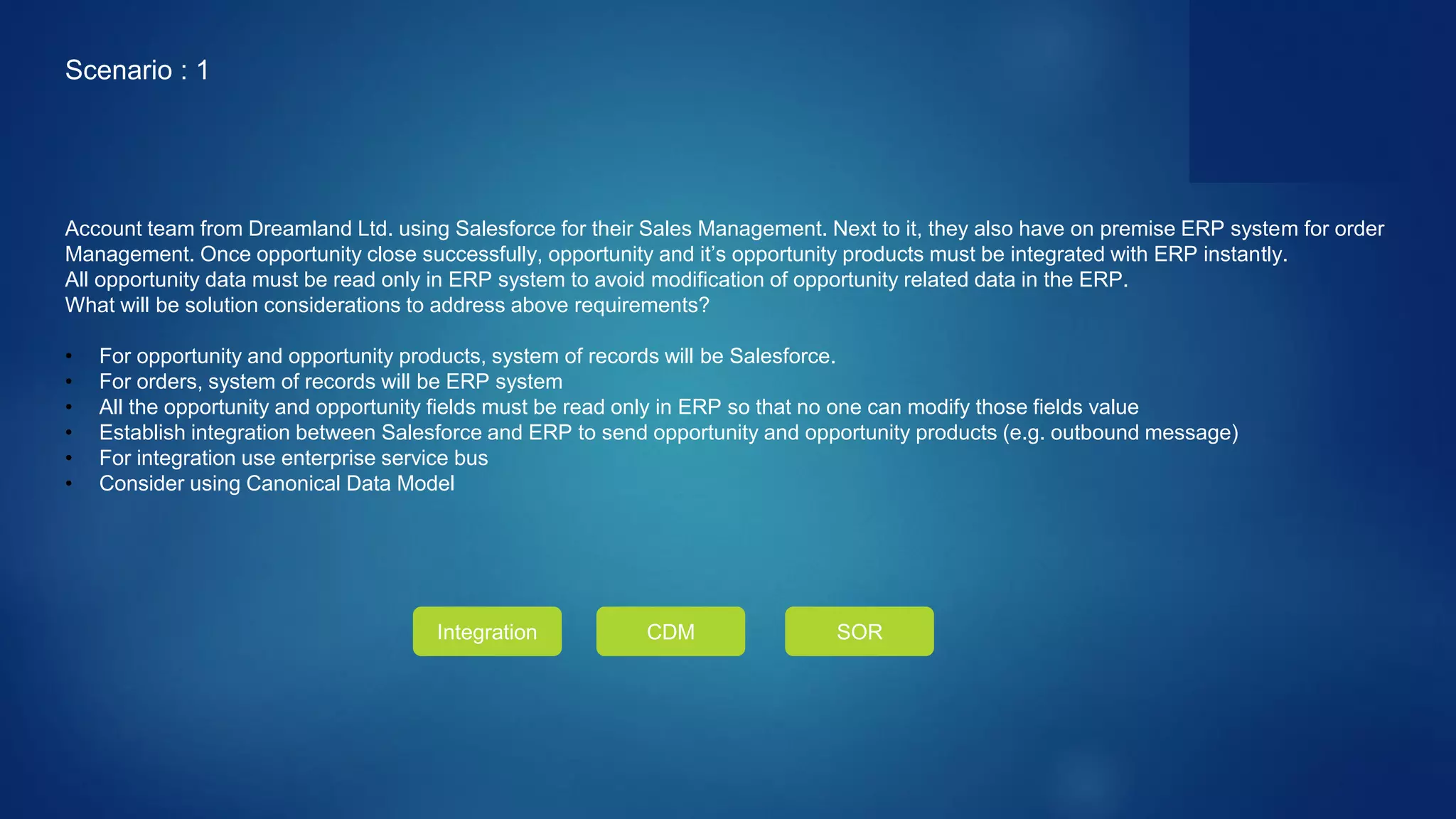

- Defining systems of record, sources of truth, and canonical data models to manage master data across different systems.

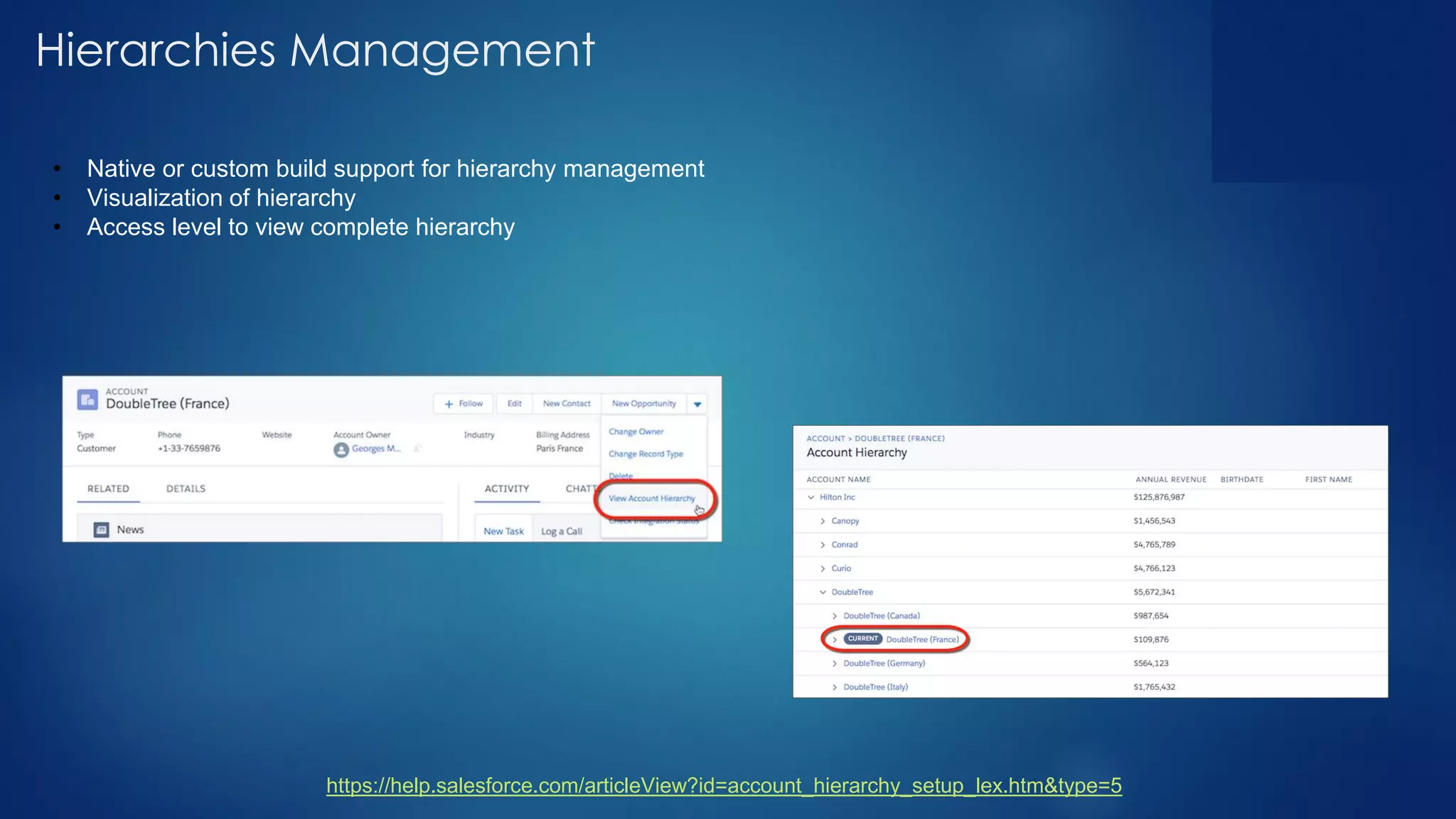

- Mapping data entities and attributes stored in different systems such as CRM, ERP, and data hubs.

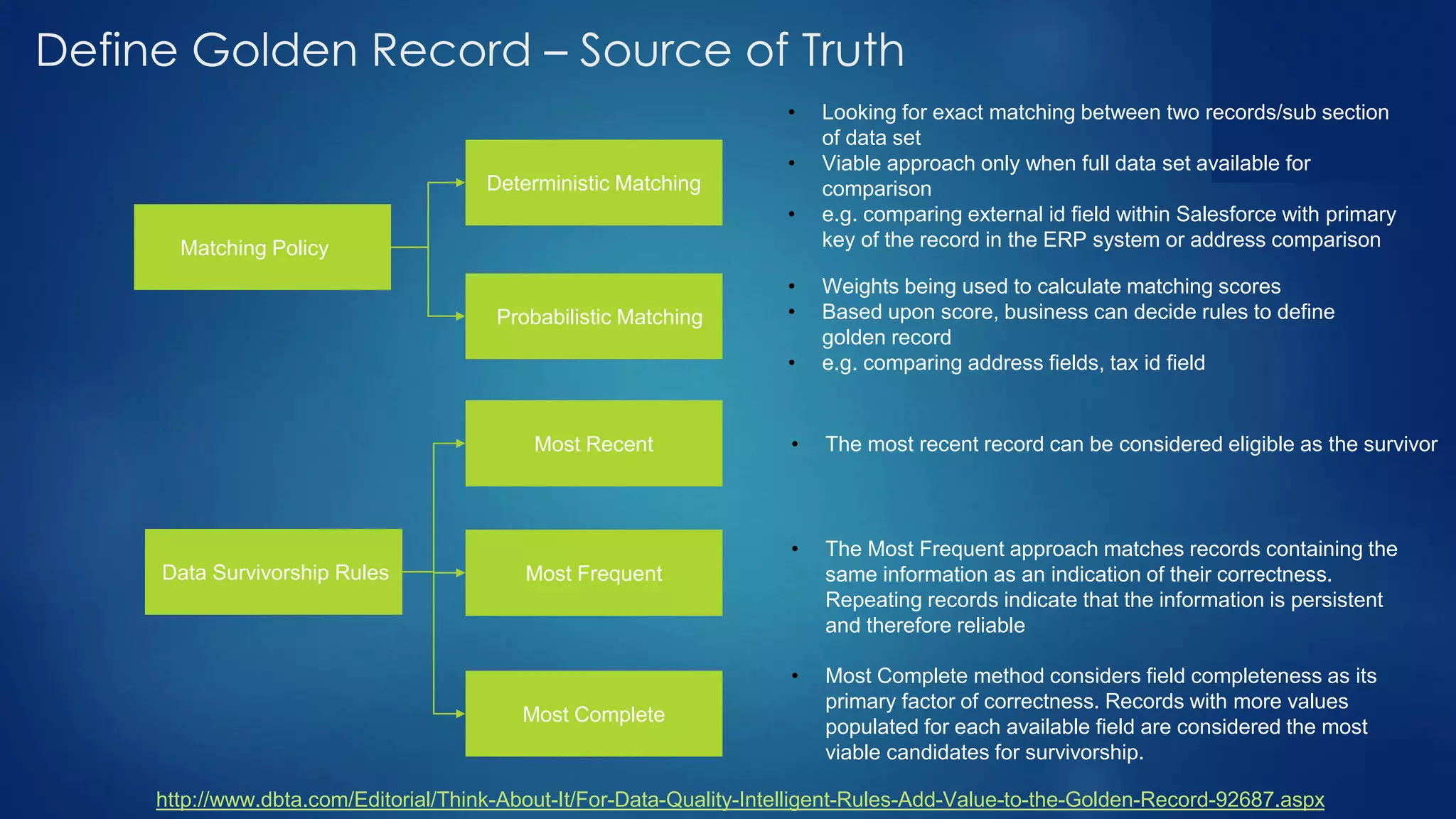

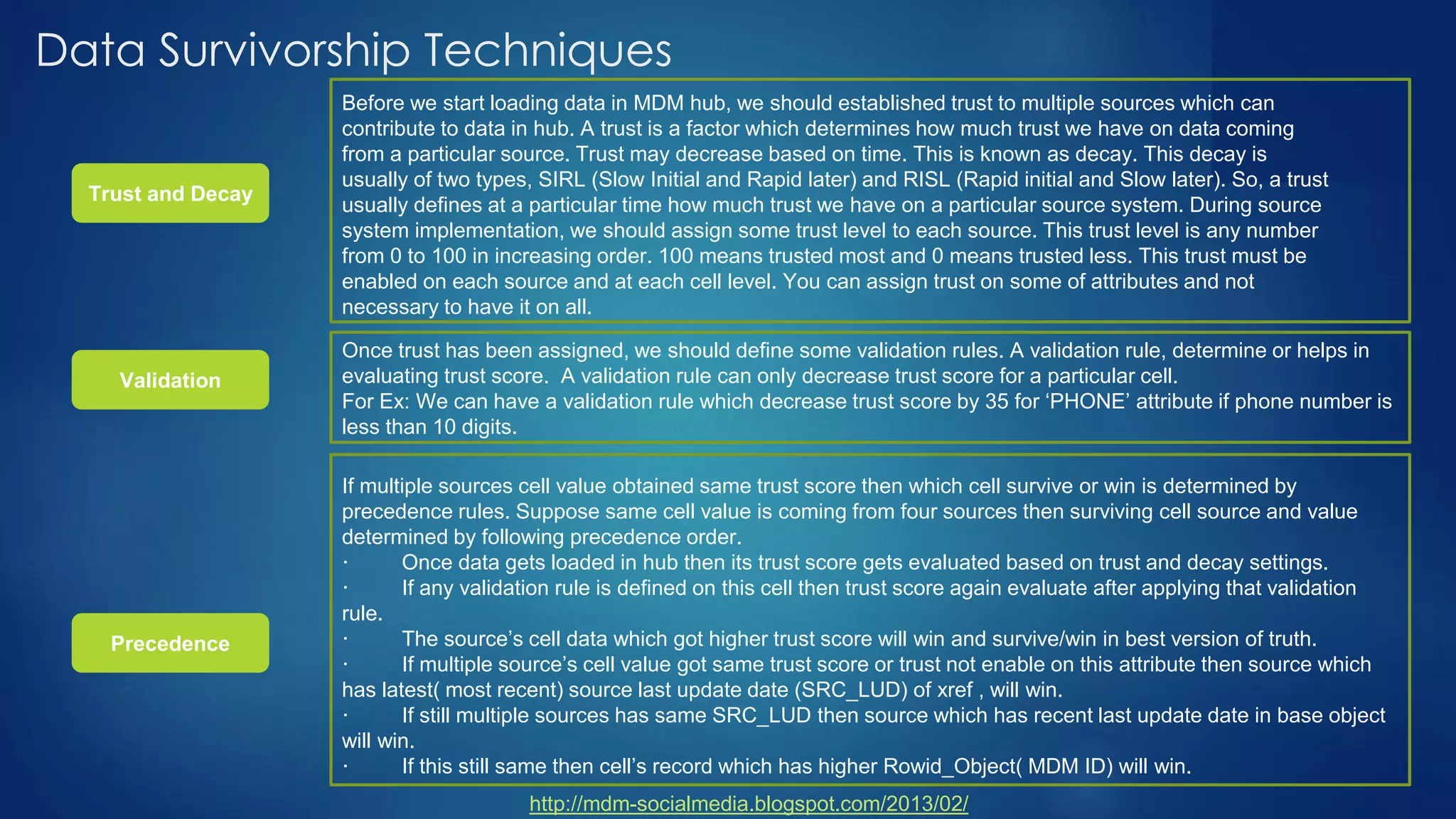

- Techniques for matching, merging, and determining the "single source of truth" for master data when conflicts arise from multiple source systems.
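
To make the ideas in the list above concrete, here is a minimal sketch of one way they could fit together: source attributes are mapped to a canonical model, records are matched on a shared key, and conflicts are resolved by a per-attribute source priority. Everything in it is an assumption made for illustration and does not come from the document: the field names, the `FIELD_MAPS` and `SOURCE_PRIORITY` tables, the choice of a normalized email as the match key, and the "first non-empty value from the highest-priority source wins" merge rule.

```python
# Hypothetical mappings: source attribute name -> canonical attribute name.
FIELD_MAPS = {
    "crm": {"AccountName": "name", "Email": "email", "Phone": "phone"},
    "erp": {"CUST_NAME": "name", "EMAIL_ADDR": "email", "TAX_ID": "tax_id"},
}

# Hypothetical system-of-record ranking for each canonical attribute,
# highest priority first.
SOURCE_PRIORITY = {
    "name": ["erp", "crm"],
    "email": ["crm", "erp"],
    "phone": ["crm"],
    "tax_id": ["erp"],
}


def to_canonical(source, record):
    """Rename a source record's attributes to canonical names, dropping empty values."""
    mapping = FIELD_MAPS[source]
    return {canon: record[src] for src, canon in mapping.items() if record.get(src)}


def match_key(canonical):
    """Assumed matching rule: records with the same normalized email are the same entity."""
    return canonical.get("email", "").strip().lower()


def merge(records_by_source):
    """Build one merged record by taking each attribute from its highest-priority source."""
    golden = {}
    for attr, priority in SOURCE_PRIORITY.items():
        for source in priority:
            value = records_by_source.get(source, {}).get(attr)
            if value:
                golden[attr] = value
                break
    return golden


def build_golden_records(inputs):
    """Group (source, raw_record) pairs by match key and merge each group.

    Records without a match key are skipped, and a later record from the
    same source with the same key overwrites an earlier one.
    """
    groups = {}
    for source, raw in inputs:
        canonical = to_canonical(source, raw)
        key = match_key(canonical)
        if not key:
            continue
        groups.setdefault(key, {})[source] = canonical
    return {key: merge(by_source) for key, by_source in groups.items()}


if __name__ == "__main__":
    inputs = [
        ("crm", {"AccountName": "Acme Inc", "Email": "Ops@Acme.example", "Phone": "555-0100"}),
        ("erp", {"CUST_NAME": "Acme Incorporated", "EMAIL_ADDR": "ops@acme.example", "TAX_ID": "12-3456789"}),
    ]
    for key, record in build_golden_records(inputs).items():
        print(key, record)
```

In this sketch the two sample records disagree on the company name, and the ERP value is kept because `erp` is listed first for `name`. A real deployment would likely need fuzzier matching and richer survivorship rules (recency, completeness, manual review) than an exact key and a fixed priority list.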