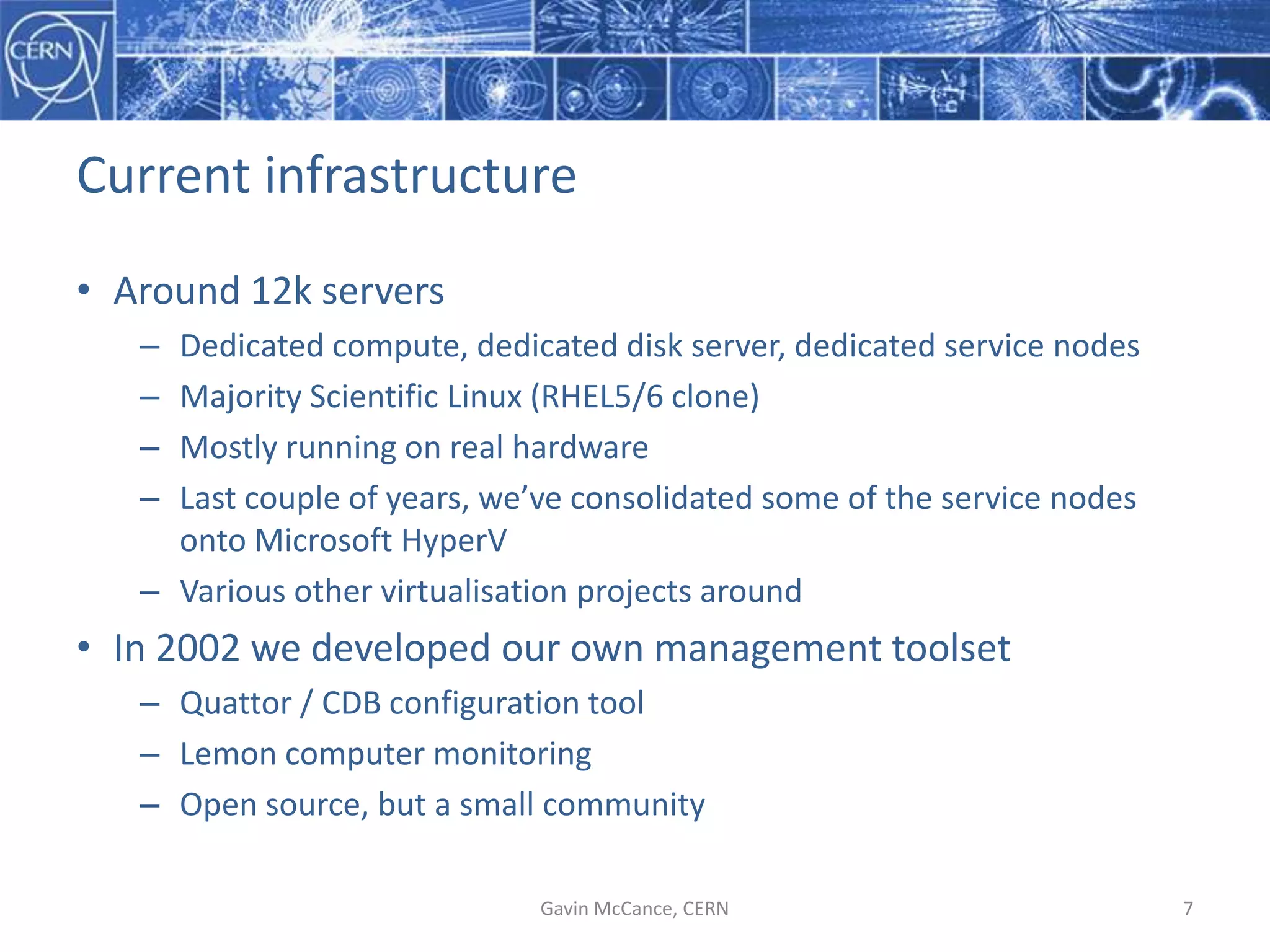

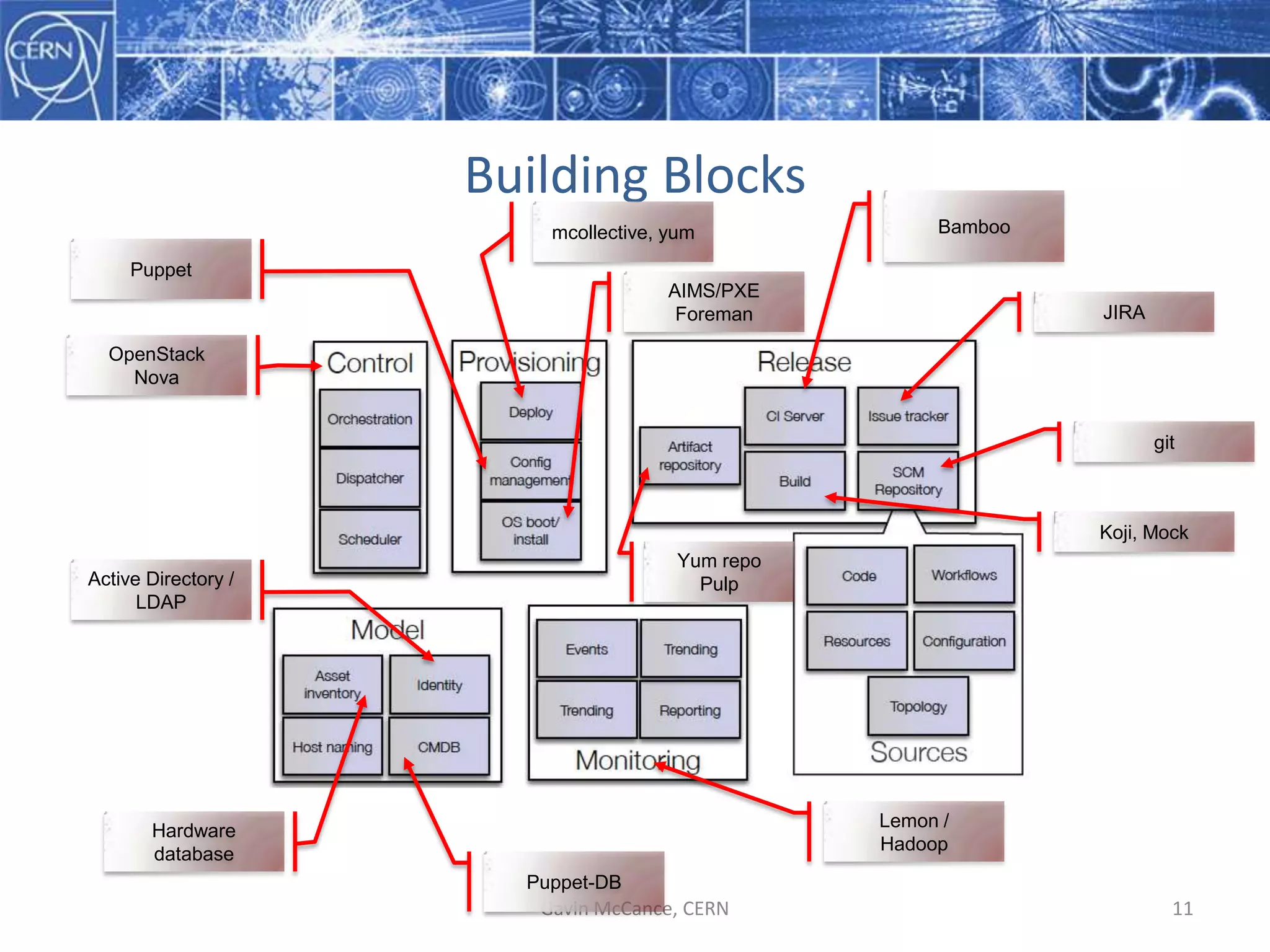

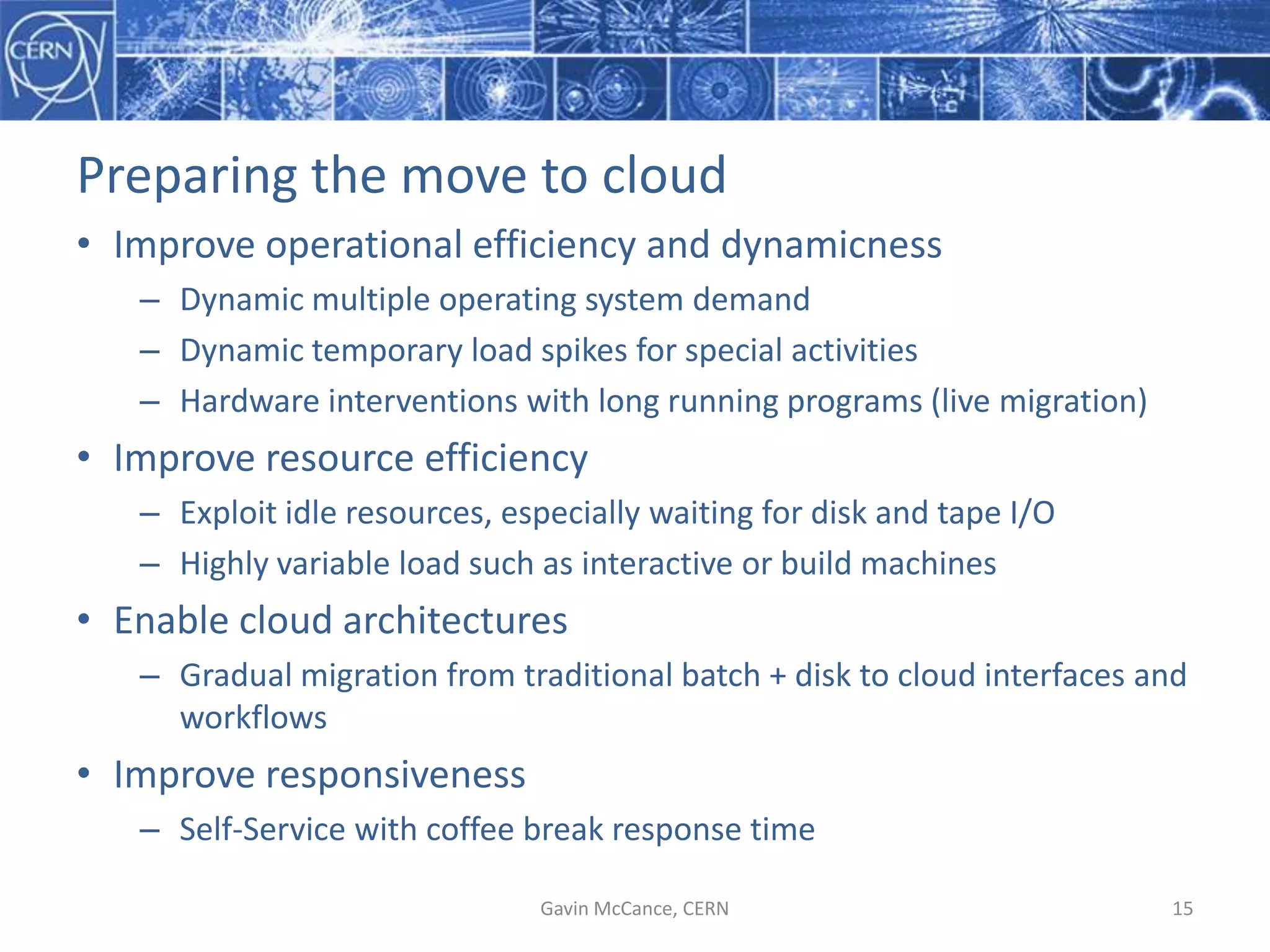

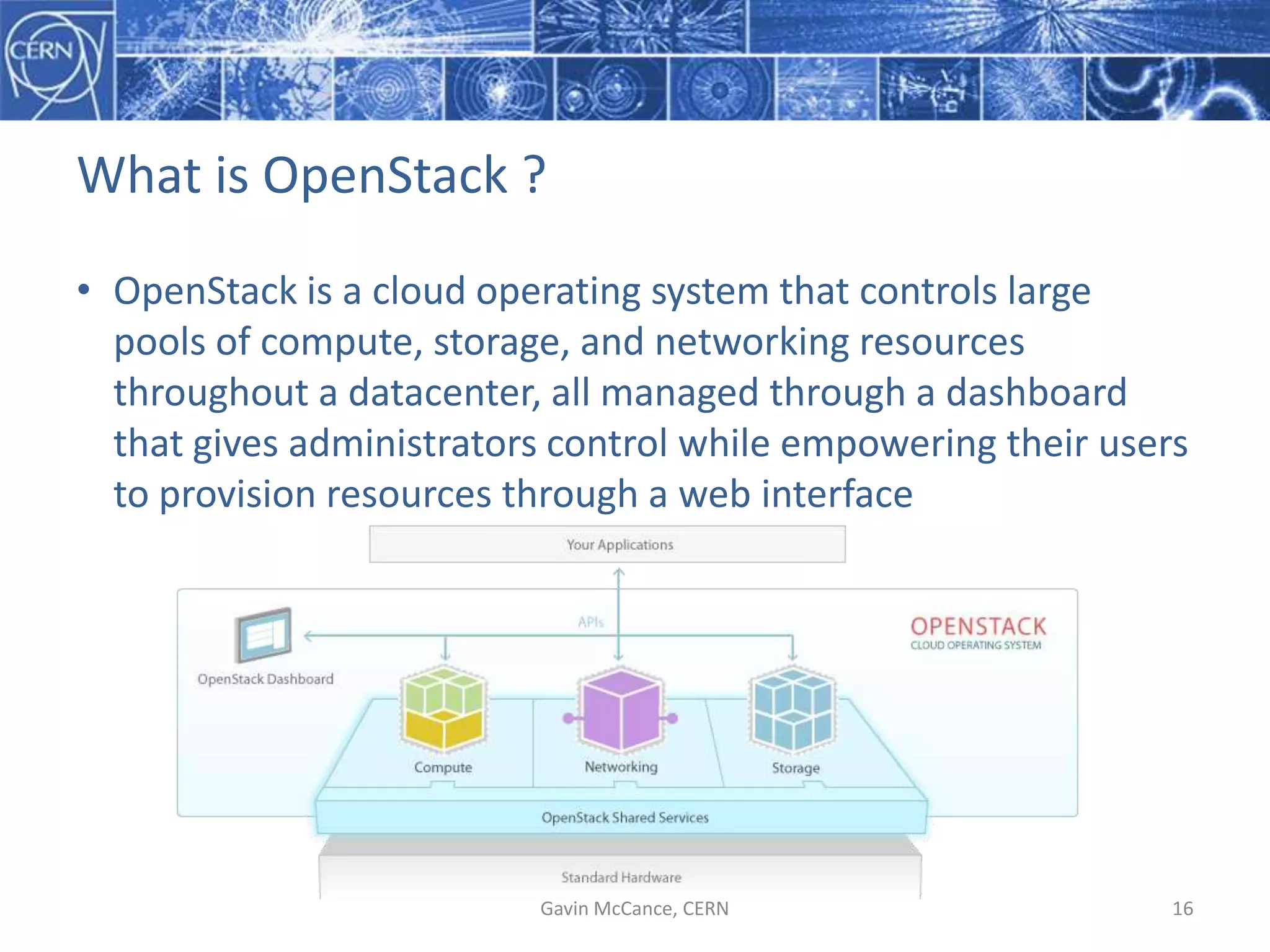

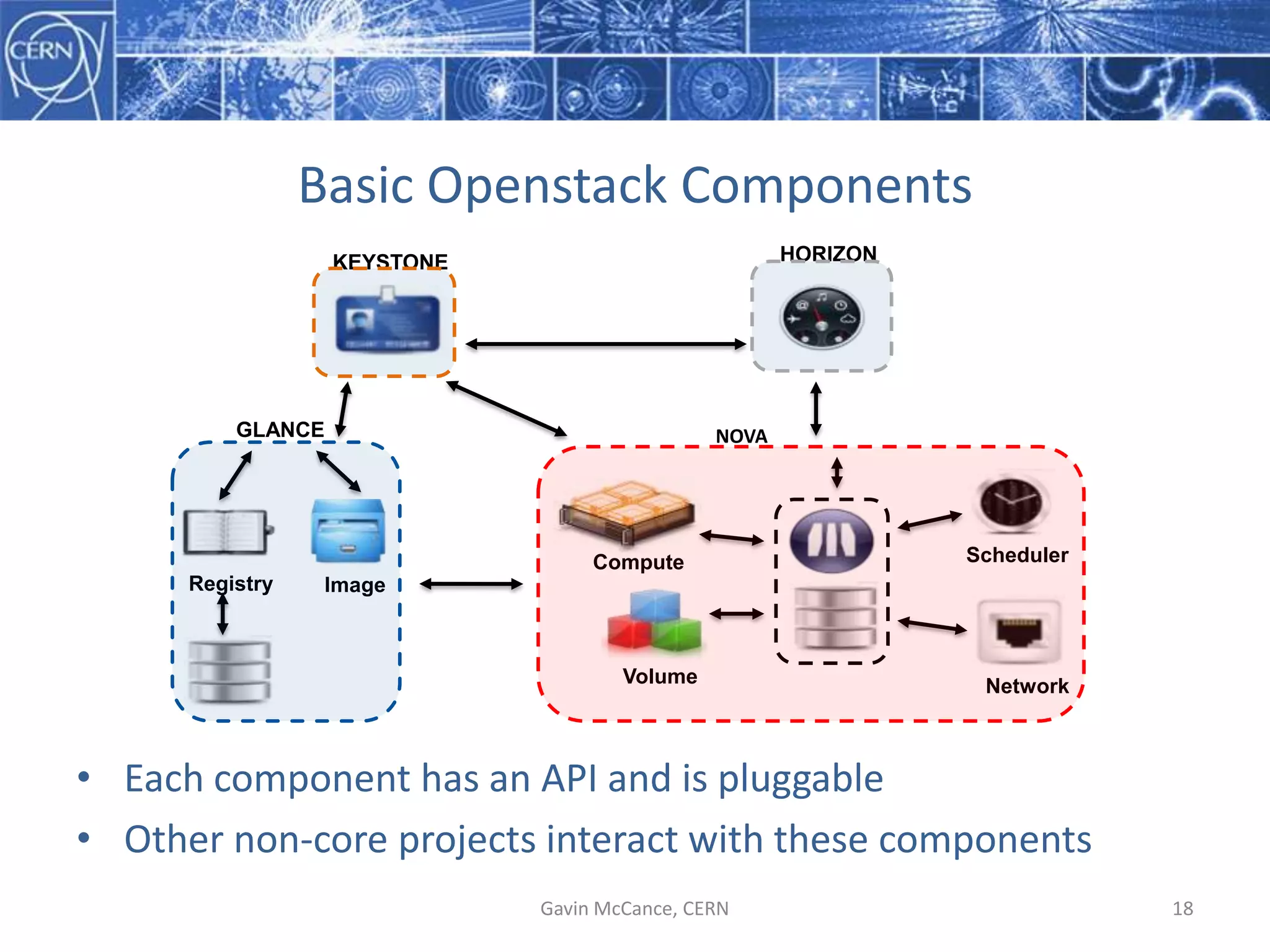

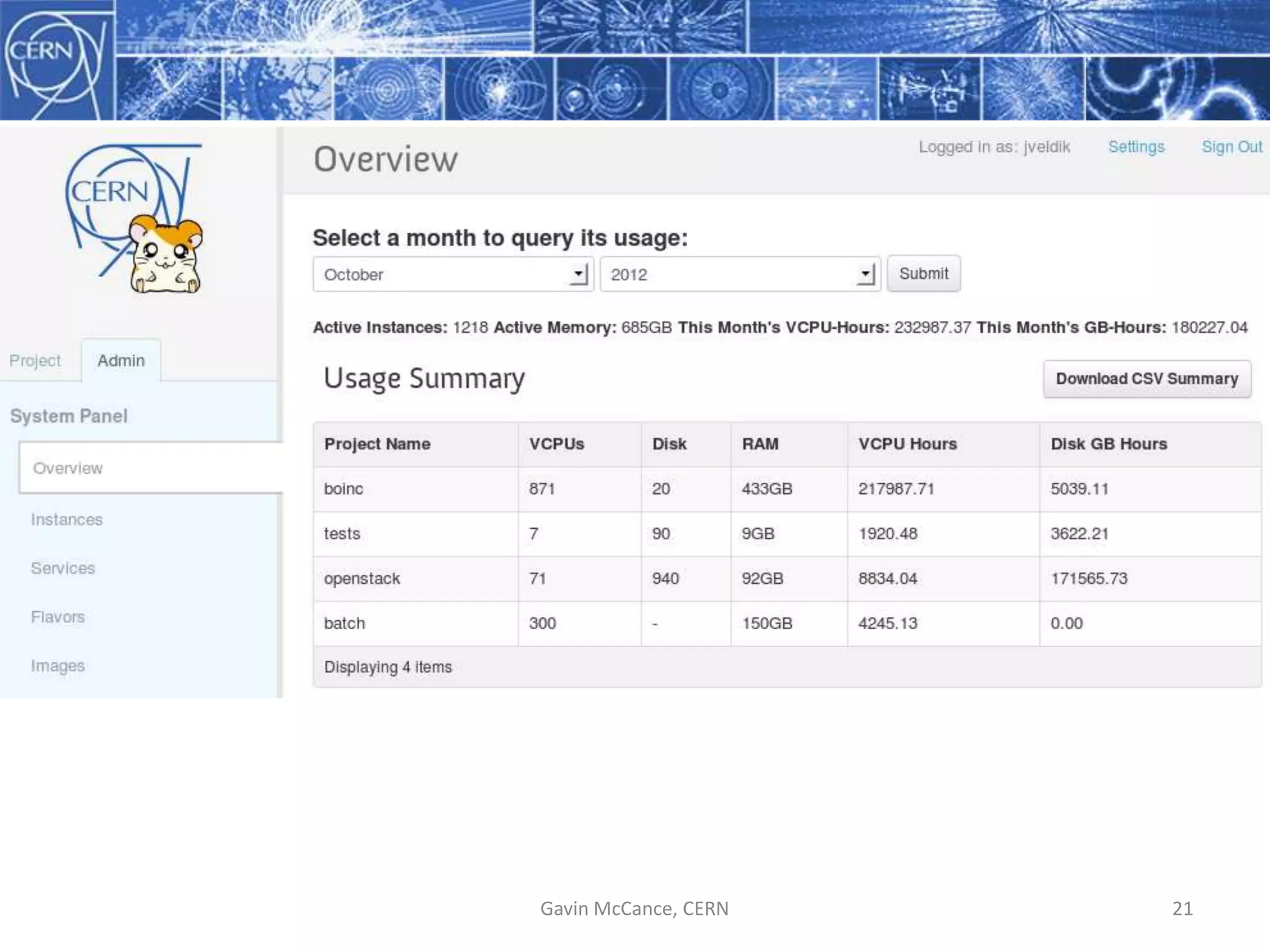

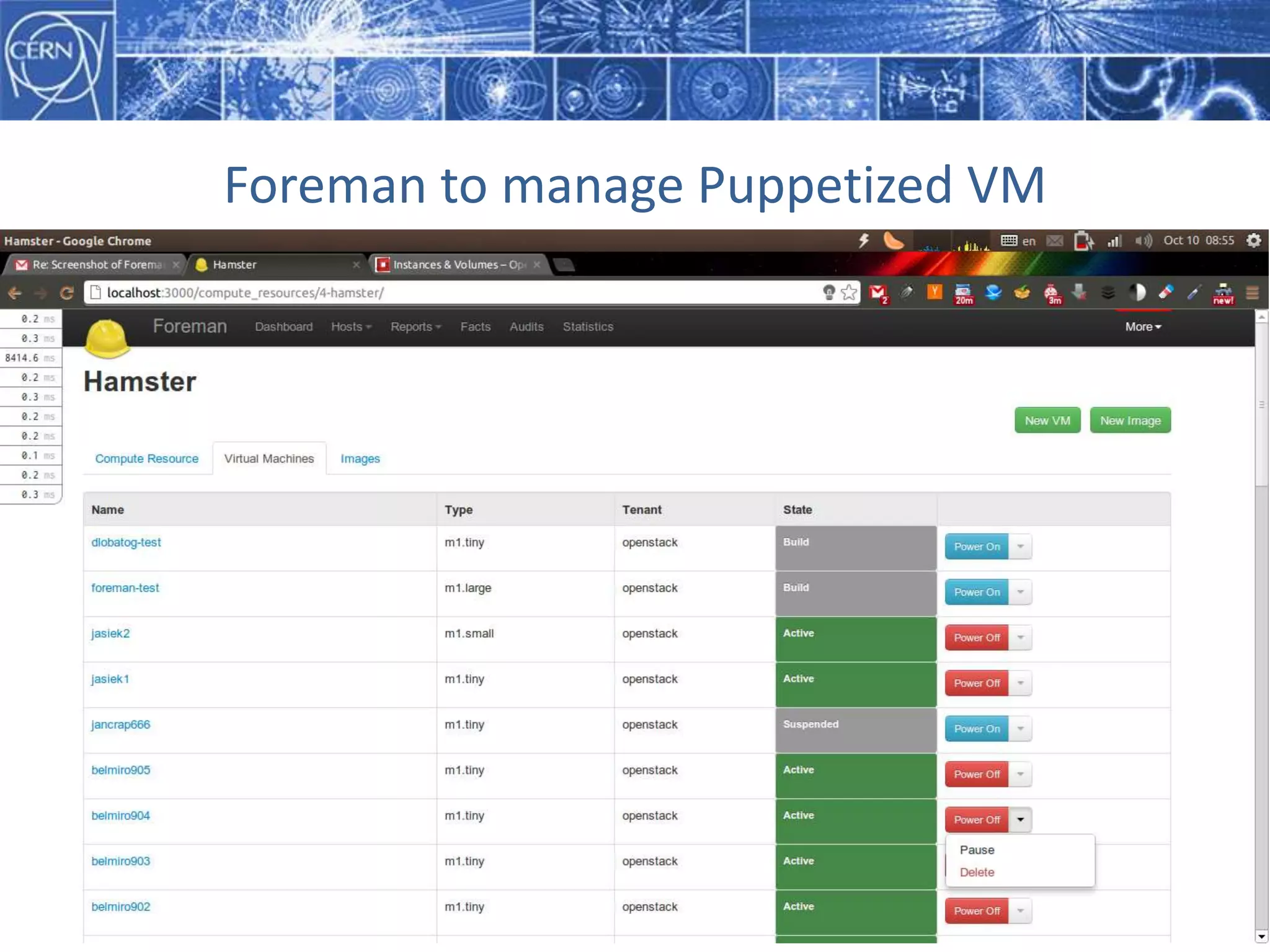

CERN is expanding its computing infrastructure to keep up with growing data volumes and compute demand. It is adopting open-source tools: Puppet for configuration management and OpenStack for cloud computing. CERN plans to put OpenStack into production in 2013 and, by 2015, to use it to manage over 15,000 hypervisors and 100,000 VMs across its data centers, supporting both traditional and cloud-based workflows. The goal is to manage resources more efficiently and to better handle dynamic workloads and temporary spikes in demand.